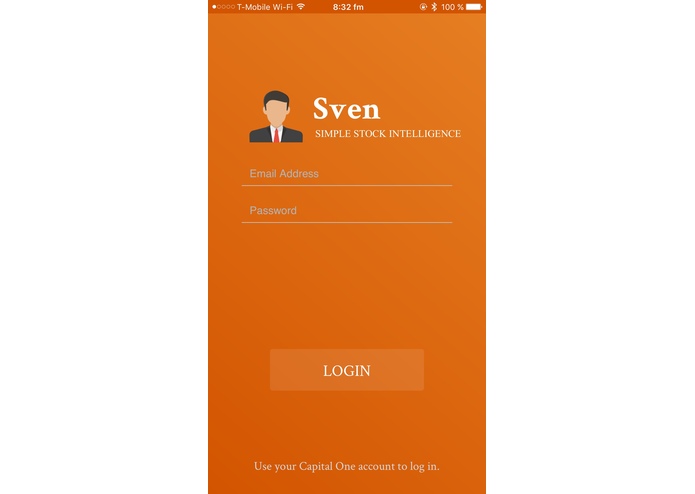

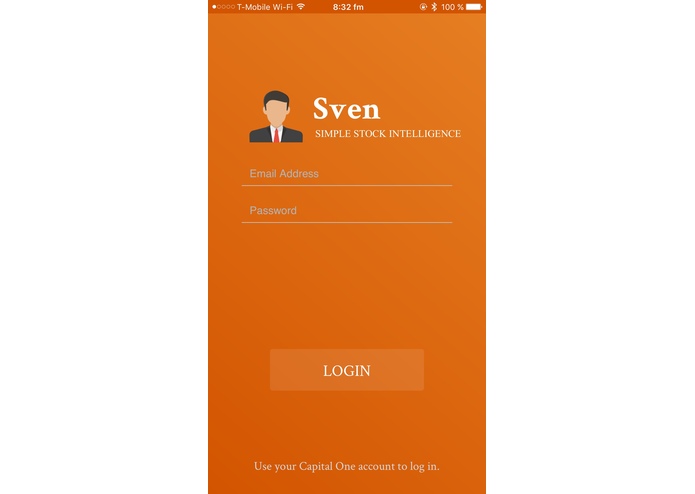

Login page where users connect their Capital One account. Featuring a logo by Zach.

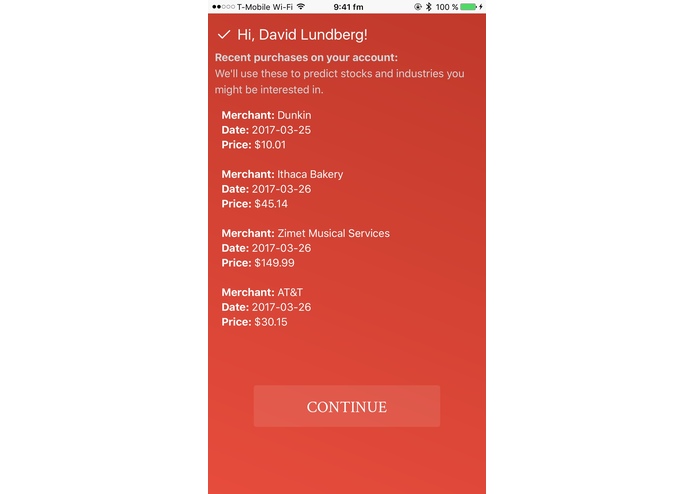

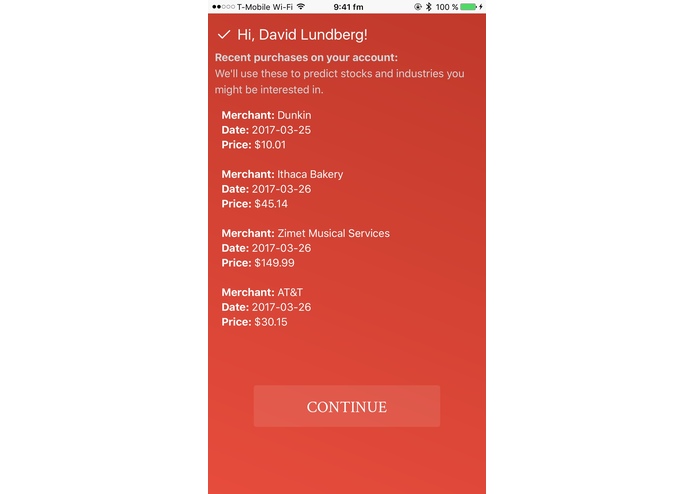

Shows the user's recent purchases, which we use to assemble a portfolio.

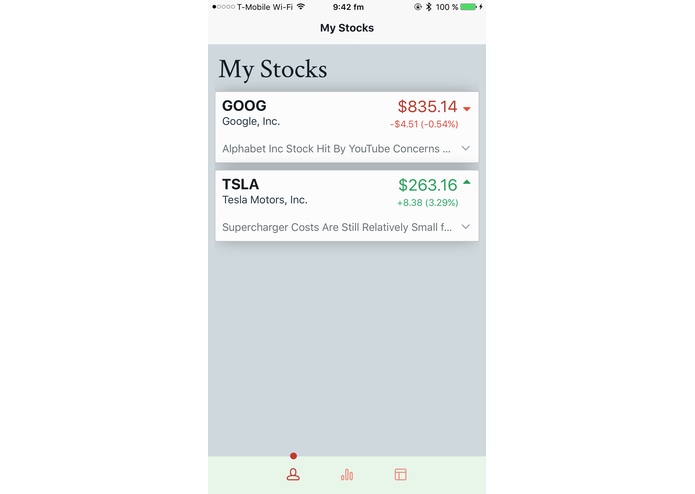

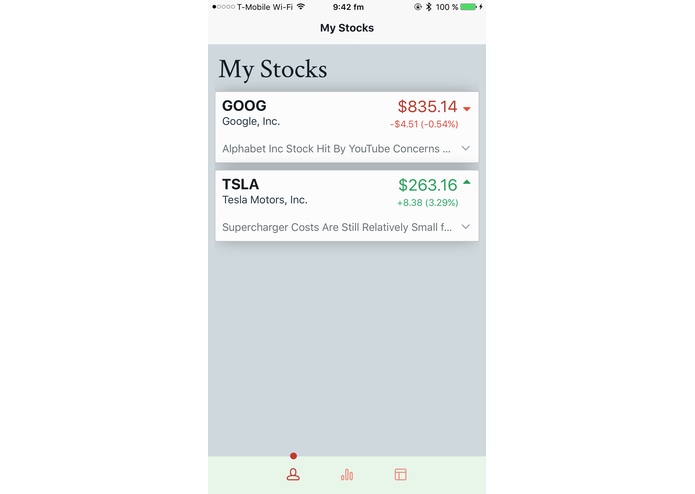

Home page with stock info and news stories

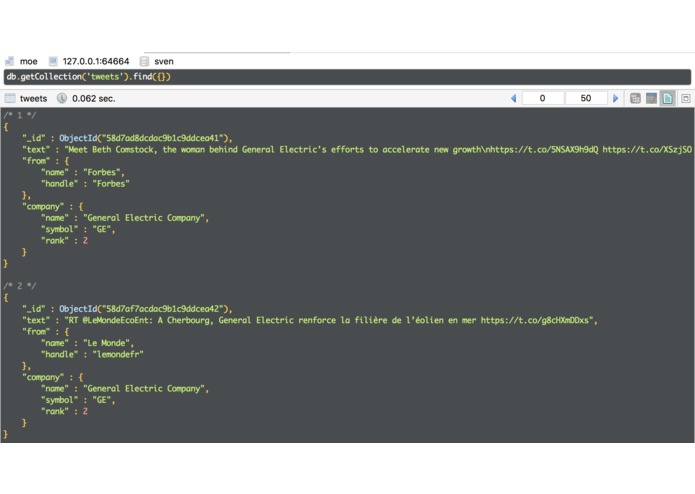

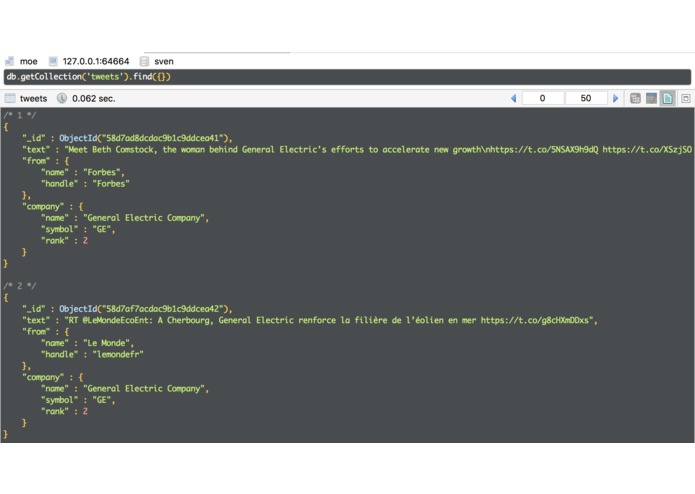

The first tweets our algorithm found! Ordered by descending company popularity, then by account.
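The ordering in this caption amounts to a two-key sort. A minimal sketch, assuming each tweet carries a company popularity score and the posting account's follower count (both hypothetical field names; the caption doesn't say how accounts are ranked):

```python
from dataclasses import dataclass


@dataclass
class Tweet:
    # Hypothetical fields; the real crawler's schema isn't shown.
    text: str
    company: str
    company_popularity: int  # assumed popularity score for the company
    account_followers: int   # assumed follower count of the posting account


def order_tweets(tweets):
    """Most popular company first; ties broken by the most-followed account."""
    return sorted(
        tweets,
        key=lambda t: (t.company_popularity, t.account_followers),
        reverse=True,
    )
```

Sorting on a tuple key lets Python compare company popularity first and only fall back to follower count when two tweets tie on the first key.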

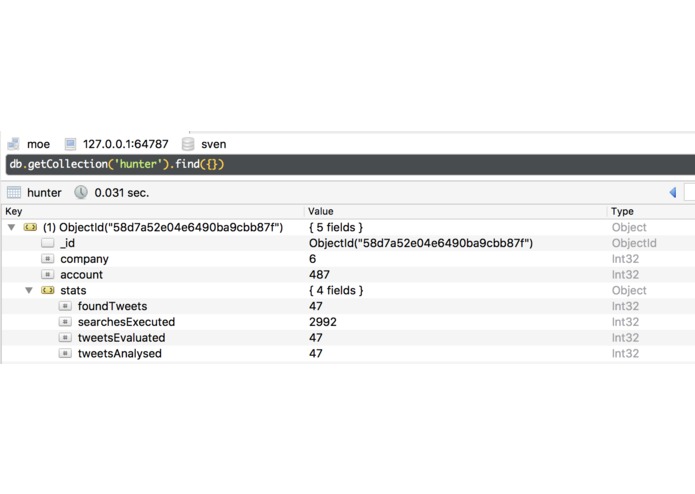

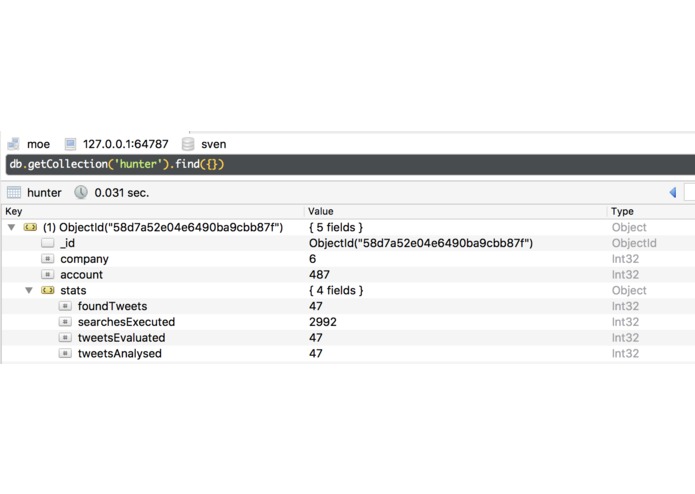

Stats from the tweet crawler

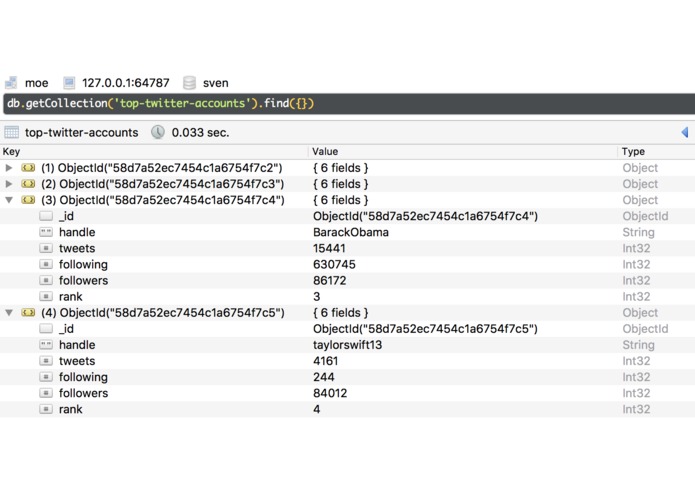

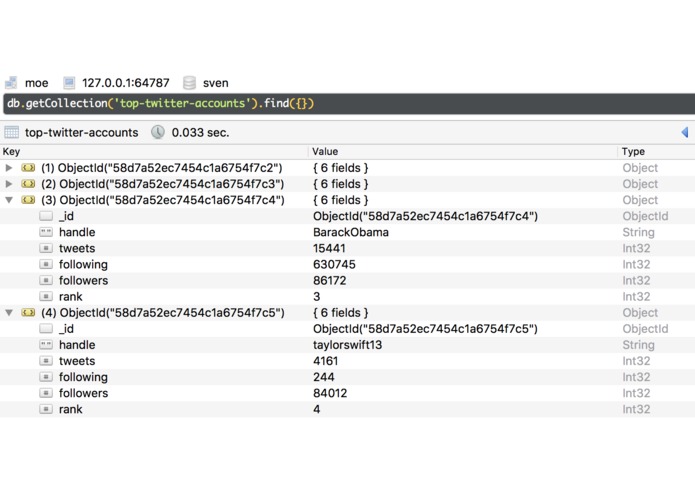

Our scraped list of top Twitter accounts

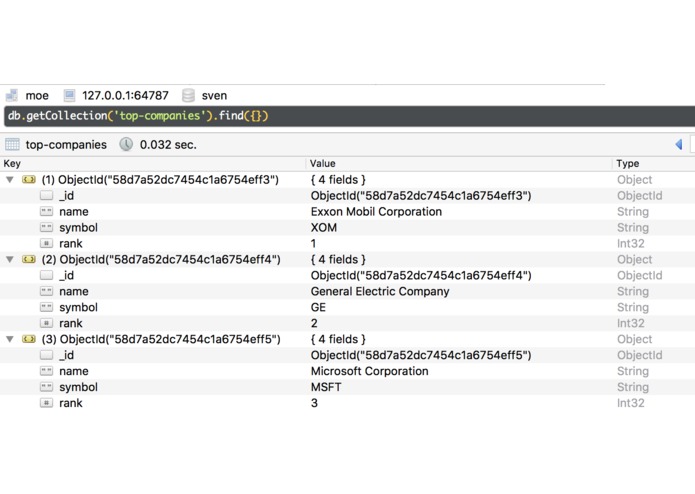

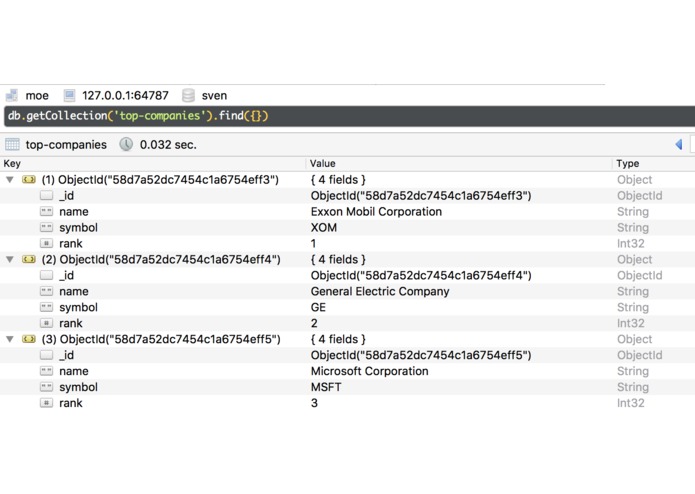

Stock info

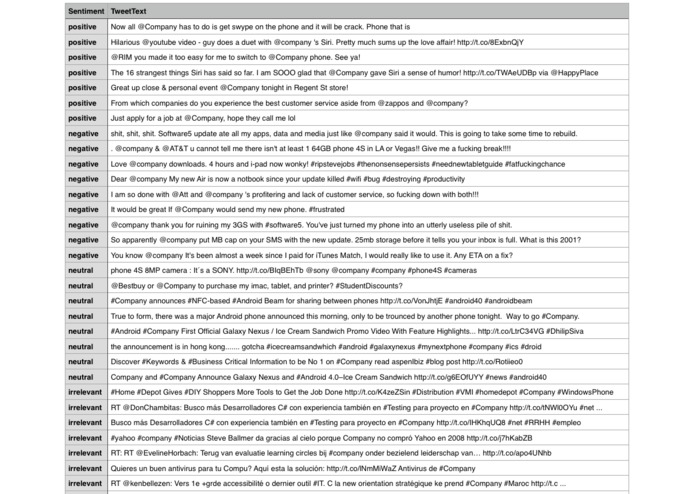

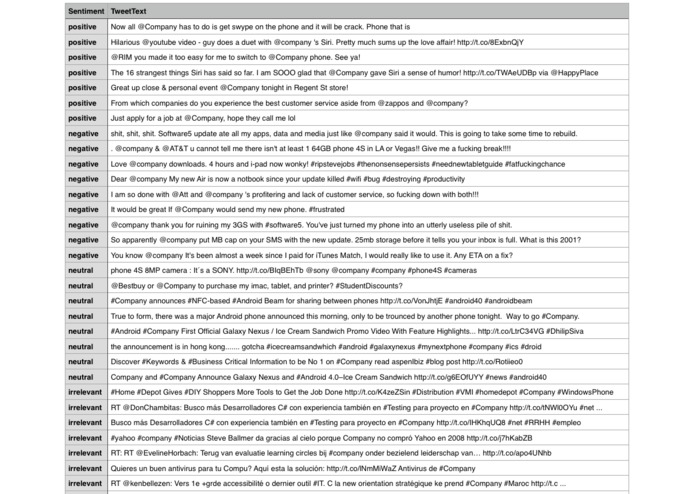

A sample of the Twitter data used to train the convolutional neural network

Inspiration

We were inspired by recent advances in accessible AI tools such as TensorFlow, and by the reactionary nature of today's news. After getting a taste of the stock market in our economics classes, we wanted to learn more about it.

What it does

Sven is your virtual stockbroker friend. Sign in with a Capital One account in our iOS app, and Sven will review your recent purchases. From there, he'll recommend a portfolio. Once you're watching stocks, Sven helps by showing you up-to-date prices and news, and lets you know when breaking news or controversy occurs. We use neural networks and sentiment analysis to read the pulse of top companies on Twitter.
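The write-up doesn't say how purchases are turned into a portfolio. A minimal sketch of one possible approach, assuming a hypothetical merchant-to-ticker table and weighting each stock by how much the user spent at that company:

```python
from collections import Counter

# Hypothetical merchant-to-ticker table; Sven's real mapping is not described.
MERCHANT_TO_TICKER = {
    "Starbucks": "SBUX",
    "Target": "TGT",
    "Apple Store": "AAPL",
}


def recommend_portfolio(purchases, top_n=3):
    """Weight tickers by the user's spend at the matching merchants.

    purchases: iterable of (merchant_name, amount) pairs.
    Returns a dict of ticker -> weight, with weights summing to 1.
    """
    spend = Counter()
    for merchant, amount in purchases:
        ticker = MERCHANT_TO_TICKER.get(merchant)
        if ticker is not None:  # skip merchants with no known listed company
            spend[ticker] += amount
    top = spend.most_common(top_n)  # largest total spend first
    total = sum(amount for _, amount in top)
    if total == 0:
        return {}
    return {ticker: amount / total for ticker, amount in top}
```

For example, $50 at Starbucks and $10 at Target would give SBUX a weight of about 0.83 and TGT about 0.17, while purchases at unmapped merchants are ignored.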

How we built it

We built scrapers to import the list of top companies and top Twitter accounts, storing both in our database. We then worked in parallel on tools to scrape Twitter (real-time and historical data) and on a convolutional neural network that analyses tweets for sentiment (are they positive, negative, or neutral toward the company?). The network consists of a single convolutional layer and a single pooling layer, with dropout to reduce overfitting to the training data. We then integrated the Capital One API to find fields the user might be interested in, and finally built an iOS app that talks to our system.
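The network code isn't included in the write-up. As a dependency-free illustration of the architecture described above (one convolutional layer, one pooling layer, dropout, and a three-way negative/neutral/positive output), here is a plain-Python sketch of the forward pass only. The real model was built and trained in TensorFlow; this sketch uses random, untrained weights, and the sizes (4-dimensional word embeddings, 5 filters of width 3), the choice of max-over-time pooling, and the dense softmax output layer are all assumptions.

```python
import math
import random

LABELS = ("negative", "neutral", "positive")


def conv1d(embeddings, filters, width):
    """Convolutional layer: slide each filter over `width` consecutive word vectors."""
    if len(embeddings) < width:
        raise ValueError("tweet is shorter than the filter width; pad it first")
    feature_maps = []
    for weights, bias in filters:  # weights: flat list of width * embedding_dim floats
        fmap = []
        for start in range(len(embeddings) - width + 1):
            window = [x for vec in embeddings[start:start + width] for x in vec]
            activation = sum(w * x for w, x in zip(weights, window)) + bias
            fmap.append(max(0.0, activation))  # ReLU nonlinearity
        feature_maps.append(fmap)
    return feature_maps


def max_pool(feature_maps):
    """Pooling layer: max over time keeps each filter's strongest response."""
    return [max(fmap) for fmap in feature_maps]


def dropout(values, rate, training, rng):
    """Inverted dropout: in training, zero each unit with probability `rate` and
    scale survivors by 1 / (1 - rate); at inference, pass values through."""
    if not training or rate == 0.0:
        return list(values)
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in values]


def softmax(logits):
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(embeddings, filters, width, out_weights, out_bias,
             rate=0.5, training=False, rng=None):
    """Forward pass: conv -> max-pool -> dropout -> dense layer -> softmax."""
    pooled = max_pool(conv1d(embeddings, filters, width))
    pooled = dropout(pooled, rate, training, rng or random.Random(0))
    logits = [sum(w * p for w, p in zip(row, pooled)) + b
              for row, b in zip(out_weights, out_bias)]
    return dict(zip(LABELS, softmax(logits)))


# Usage with random weights: a 6-word tweet, 4-dim embeddings, 5 filters of width 3.
rng = random.Random(42)
dim, width, n_filters = 4, 3, 5
tweet = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(6)]
filters = [([rng.uniform(-1, 1) for _ in range(width * dim)], 0.0) for _ in range(n_filters)]
out_weights = [[rng.uniform(-1, 1) for _ in range(n_filters)] for _ in LABELS]
out_bias = [0.0] * len(LABELS)
probs = classify(tweet, filters, width, out_weights, out_bias)
```

Dropout only fires when `training=True`; at prediction time the pooled features pass through unchanged, which is why the inverted form rescales surviving units during training instead.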

Challenges we ran into

This was our group's first time using TensorFlow, and for many of us it was our first time using Python. This caused plenty of headaches, but it only makes us more proud of our network. Beyond that, many small tasks took longer than expected. We had to get the server process to talk to the neural network so tweets could be streamed in, which proved more convoluted than we initially thought.
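The write-up doesn't say how the Node.js server and the Python network were connected. One common pattern, sketched here purely as an assumption, is to spawn the network as a long-running Python worker and exchange newline-delimited JSON over stdin/stdout. `score_tweet` is a hypothetical keyword stand-in for the trained model, and the `id`/`text` field names are assumed.

```python
import json
import sys


def score_tweet(text):
    """Stand-in for the trained network: a toy keyword rule (hypothetical)."""
    lowered = text.lower()
    if any(word in lowered for word in ("great", "strong", "record")):
        return "positive"
    if any(word in lowered for word in ("scandal", "lawsuit", "recall")):
        return "negative"
    return "neutral"


def serve(lines, classify=score_tweet):
    """Turn newline-delimited JSON tweets into newline-delimited JSON results."""
    for line in lines:
        line = line.strip()
        if not line:
            continue  # ignore blank keep-alive lines
        try:
            tweet = json.loads(line)
            result = {"id": tweet.get("id"), "sentiment": classify(tweet["text"])}
        except (ValueError, KeyError, TypeError, AttributeError) as err:
            # Report bad input instead of crashing the long-running worker.
            result = {"error": str(err)}
        yield json.dumps(result)


def run_stdio():
    """Entry point when spawned by the server: read stdin, write stdout."""
    for result in serve(sys.stdin):
        sys.stdout.write(result + "\n")
        sys.stdout.flush()  # flush per line so results stream instead of buffering
```

Keeping the model in one persistent process avoids reloading the network for every tweet; on the Node side, the server would write one JSON object per line to the child's stdin and read results line by line.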

The Capital One API was confusing at first, but the Capital One mentors helped us make quick work of the implementation. We had to create the test purchases, merchants, and accounts ourselves. Company names were initially throwing the neural network off, so we replaced them all with a generic placeholder, which removed that bias. Once the network was functional, we faced the daunting task of writing APIs and designing interfaces while thoroughly exhausted.
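Making company names generic can be done with a single case-insensitive regular expression. A minimal sketch, assuming a hypothetical `<COMPANY>` placeholder token and a short illustrative name list (in practice the names would come from the scraped top-company list):

```python
import re

# Hypothetical names; in Sven these would come from the scraped top-company list.
COMPANY_NAMES = ["General Electric", "Apple", "Capital One"]


def build_masker(names, placeholder="<COMPANY>"):
    """Return a function that replaces every known company name with `placeholder`."""
    if not names:
        return lambda text: text  # nothing to mask
    # Try longer names first so a multi-word name isn't cut short by a shorter one.
    ordered = sorted(names, key=len, reverse=True)
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(name) for name in ordered) + r")\b",
        re.IGNORECASE,
    )
    # A function replacement stops re.sub from interpreting backslashes in the placeholder.
    return lambda text: pattern.sub(lambda _match: placeholder, text)


mask = build_masker(COMPANY_NAMES)
```

The word boundaries (`\b`) keep substrings such as "apple" inside "Pineapples" from being masked, and compiling the pattern once avoids rebuilding it for every tweet.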

Accomplishments that we're proud of

We were able to write a lot of code by splitting up tasks, which was essential for a hack with this many moving parts. The first tweet the Twitter crawler recognized, at about 5:50 AM, was from Forbes praising General Electric for its new leadership. That, combined with the network accurately classifying tweets from the training data, was very motivating for the last stretch.

What we learned

Lots about sentiment analysis in machine learning, and how to efficiently scrape sites, documents, and APIs.

What's next for Sven

We'd like to bring in news sources beyond tweets, and develop a reliability/priority system for accounts so Sven knows more about his sources.
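One way such a reliability system could feed into Sven's reading of a company, sketched under assumptions: each account gets a reliability weight (how those weights would be learned is left open), unknown accounts get a small default weight, and sentiment labels map to -1, 0, and +1 before taking a weighted average. All names and values here are hypothetical.

```python
# Hypothetical mapping from the network's labels to numeric scores.
SENTIMENT_VALUE = {"negative": -1.0, "neutral": 0.0, "positive": 1.0}


def company_pulse(classified_tweets, reliability, default_weight=0.1):
    """Reliability-weighted average sentiment in [-1, 1] for one company.

    classified_tweets: iterable of (account, label) pairs.
    reliability: dict mapping account -> weight (assumed to lie in [0, 1]).
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for account, label in classified_tweets:
        weight = reliability.get(account, default_weight)  # unknown accounts count less
        weighted_sum += weight * SENTIMENT_VALUE[label]
        total_weight += weight
    return weighted_sum / total_weight if total_weight > 0 else 0.0
```

With Forbes weighted 0.9 and an unknown account at the 0.1 default, one positive Forbes tweet and one negative tweet from the unknown account average to 0.8, so the trusted source dominates.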

Built With

- apache

- bootstrap

- capital-one

- cheerio

- express.js

- mongodb

- node.js

- tensorflow
