-

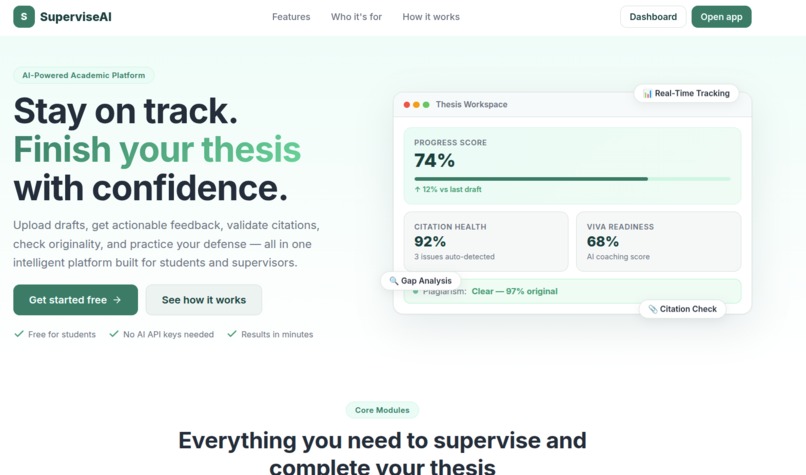

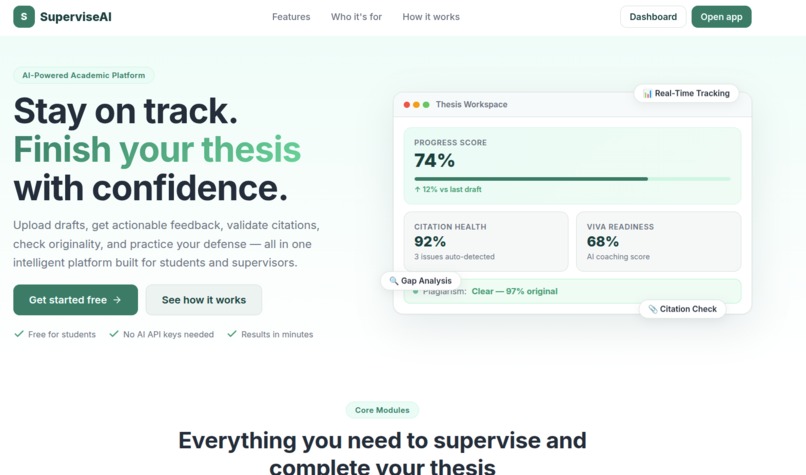

Landing Page

-

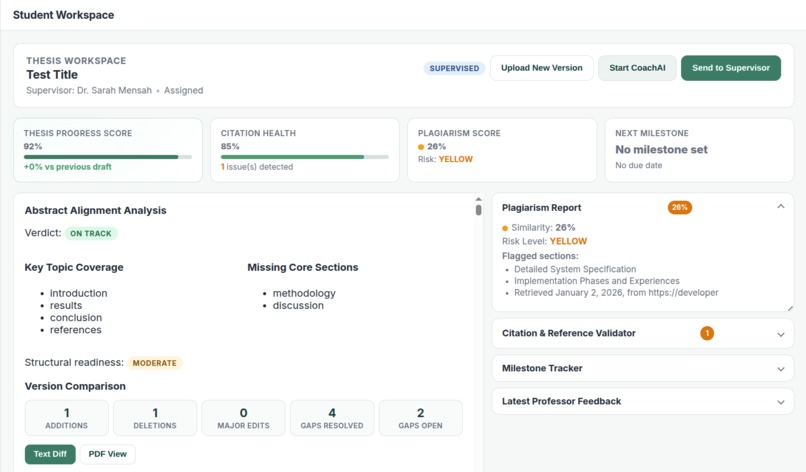

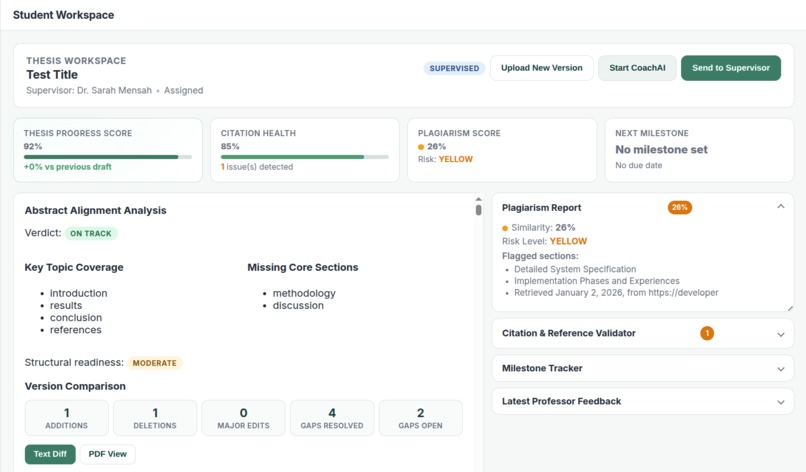

Student workspace where the student can view live stats for any submitted thesis

-

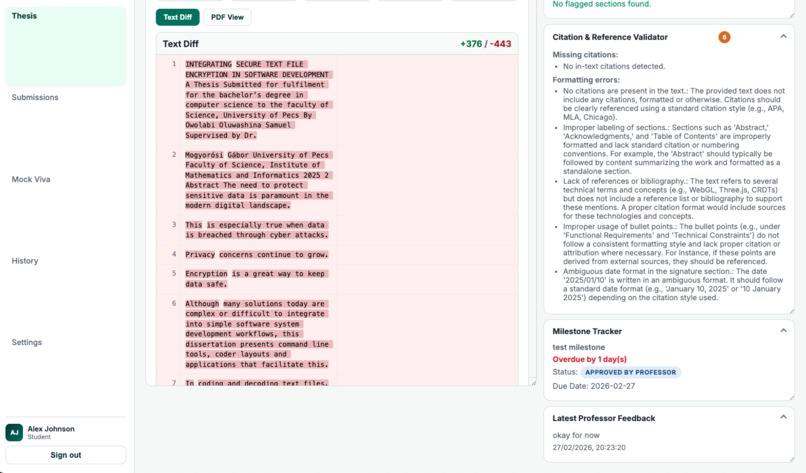

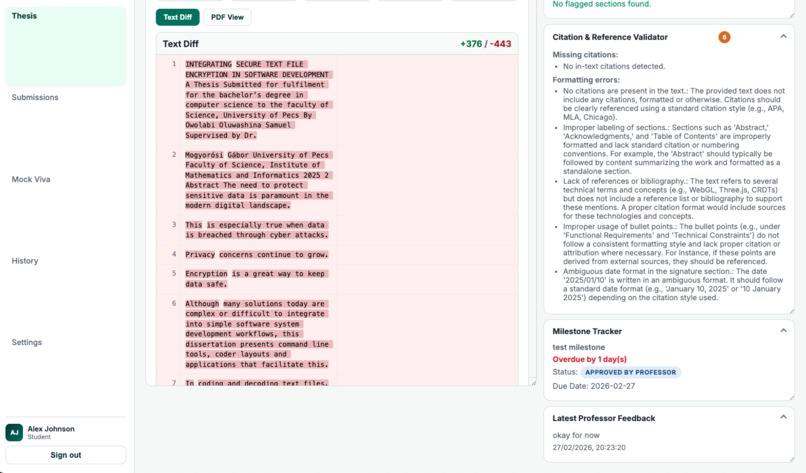

More student analytics from the student dashboard

-

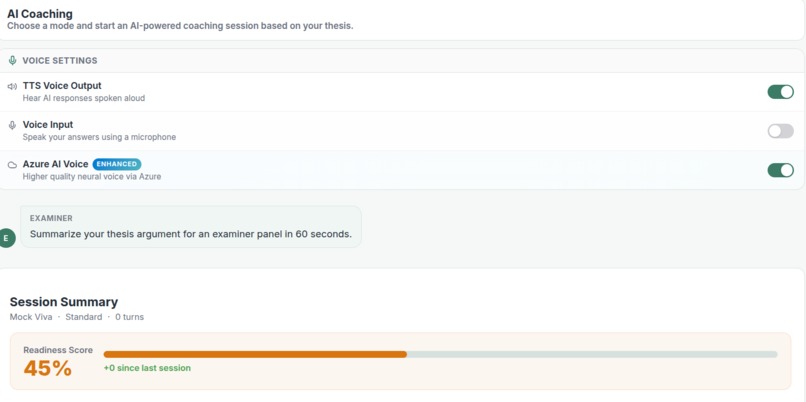

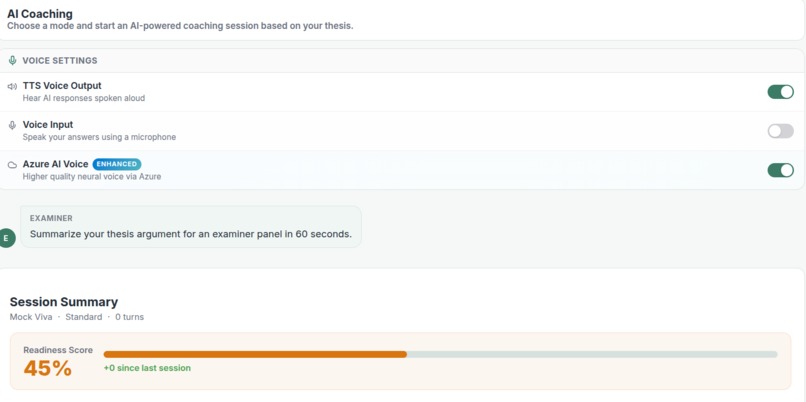

AI Coaching dashboard that structures debates based on student submission

-

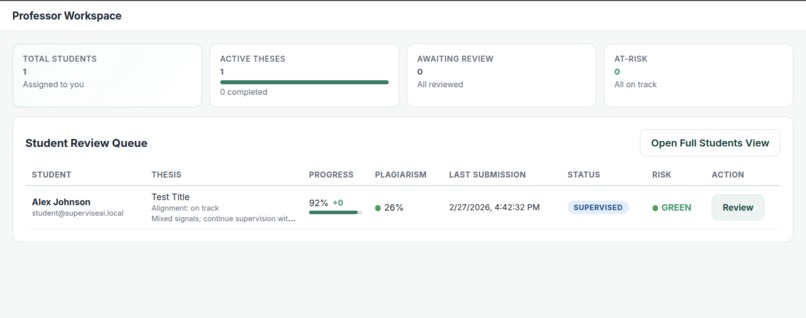

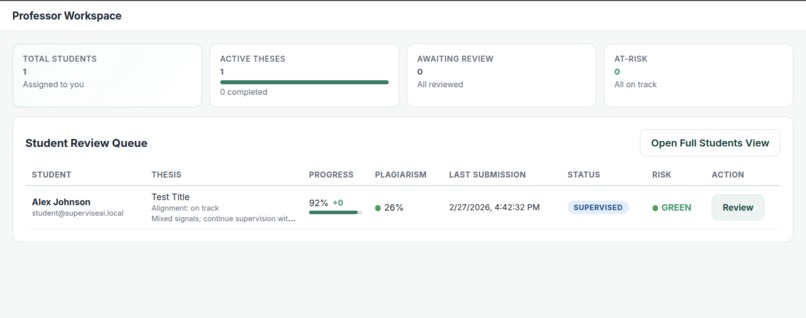

Professor dashboard overview

-

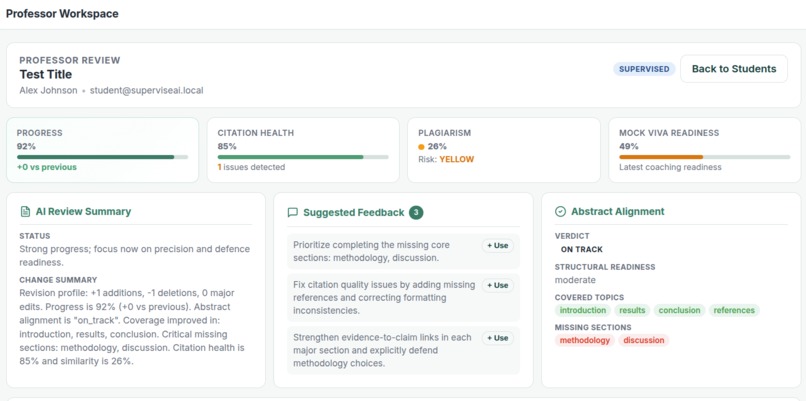

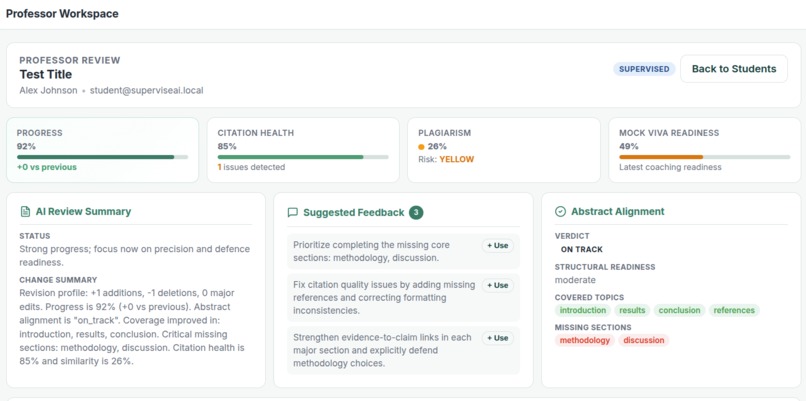

Professor view: analysis of theses submitted by the students assigned to the professor

-

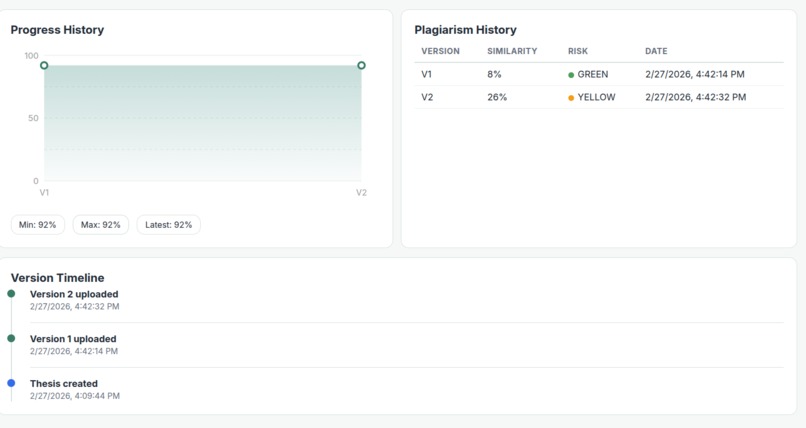

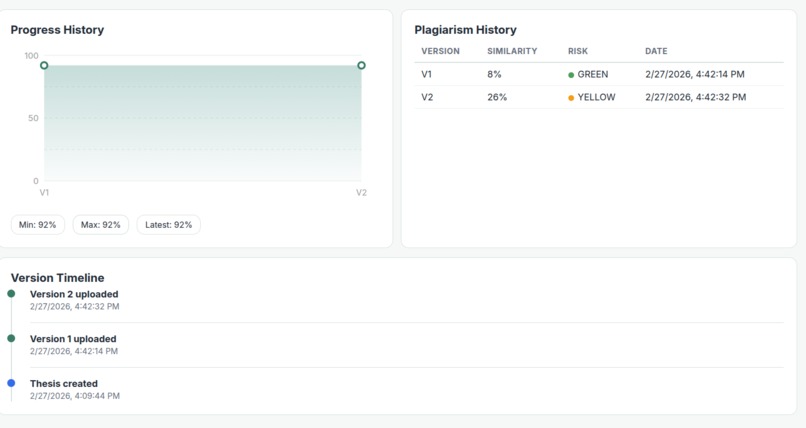

More student details

-

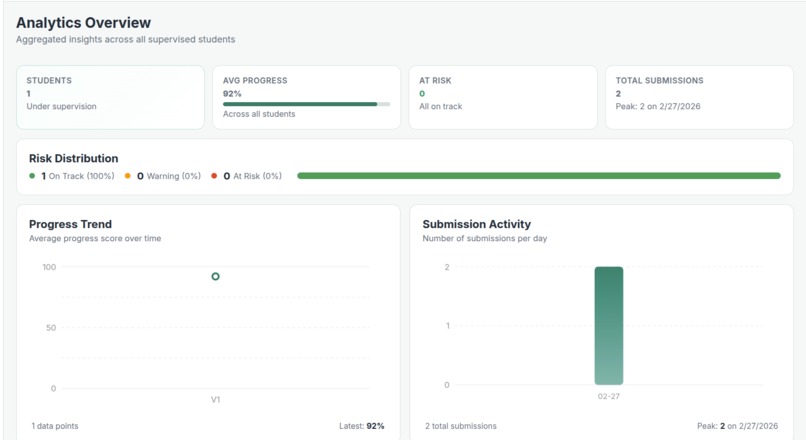

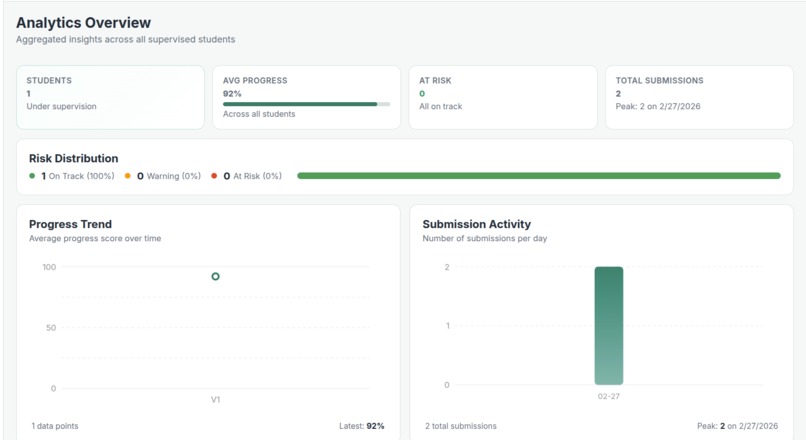

Live analytics for the professor

Inspiration

Thesis supervision and defense preparation remain among the most stressful and inconsistent parts of higher education. Students often prepare in isolation, unsure whether their arguments are strong enough, while supervisors lack scalable tools to simulate realistic defense conditions.

We were inspired by a simple question:

What if students could train for their thesis defense the same way pilots train in flight simulators?

Instead of passively rereading their thesis, students could engage in adaptive, AI-powered mock defenses that challenge their reasoning, test their confidence, and provide structured feedback before the real exam.

SuperviseAI was born from the idea of transforming thesis preparation from static review into interactive simulation.

What it does

SuperviseAI is an AI-powered Thesis Defense Simulator and Intelligent Supervision Platform designed to transform how students prepare for high-stakes academic evaluations.

At its core is an AI Mock Viva Engine that:

- Analyzes a student’s thesis draft

- Extracts key claims and arguments

- Generates context-aware examiner questions

- Conducts an interactive defense session (voice or text-based)

- Adapts question difficulty based on response quality and confidence

- Produces a structured readiness score and improvement plan
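To make the adaptive step concrete, here is a minimal sketch of how question difficulty could move up or down after each answer. It is illustrative only: the `Difficulty` scale, the `AnswerSignal` shape, the 0.7/0.3 blend, and the thresholds are all assumptions and are not taken from the actual engine.

```typescript
// Hypothetical sketch: names, weights, and thresholds are assumptions.
type Difficulty = 1 | 2 | 3 | 4 | 5;

interface AnswerSignal {
  quality: number;    // 0..1, e.g. a rubric score for the answer content
  confidence: number; // 0..1, e.g. derived from sentiment/confidence analysis
}

function nextDifficulty(current: Difficulty, signal: AnswerSignal): Difficulty {
  // Blend the two signals, weighting answer quality more than delivery.
  const combined = 0.7 * signal.quality + 0.3 * signal.confidence;

  // Strong answers raise the difficulty, weak answers lower it,
  // and anything in between keeps the current level.
  let step = 0;
  if (combined >= 0.75) step = 1;
  else if (combined < 0.4) step = -1;

  // Clamp to the 1..5 scale.
  return Math.min(5, Math.max(1, current + step)) as Difficulty;
}
```

Moving one level at a time keeps a single unusually good or bad answer from swinging the session too far in either direction.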

In addition, SuperviseAI provides:

- Draft evolution tracking and structured progress analysis

- AI-powered argument and methodology critique

- Claim extraction and rebuttal training (Argument Defender mode)

- Supervisor analytics to monitor development over time

- Milestone tracking for structured thesis progression

Unlike generic AI chatbots, SuperviseAI is context-aware — it evaluates the student’s actual research content and simulates realistic academic pressure in a safe, adaptive environment.

How we built it

We built SuperviseAI using a modular architecture designed for real-time interaction and scalable AI evaluation.

- Azure OpenAI for thesis analysis, claim extraction, question generation, and structured feedback.

- Azure Speech-to-Text and Text-to-Speech to enable live, voice-based mock defense simulations.

- Azure Cognitive Services for sentiment and confidence analysis during viva sessions.

- A structured backend for draft comparison, milestone tracking, and supervision analytics.

- A dynamic scoring engine that evaluates argument strength, clarity, methodological consistency, and defense readiness.

The system combines document analysis, adaptive questioning logic, and feedback generation into a cohesive AI coaching loop.
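As a rough illustration of the scoring idea, the four dimensions named above could be folded into one readiness score with a weighted average. The `RubricScores` shape, the 0..100 scale, and the weights below are hypothetical and chosen only for the example; they do not describe the real scoring engine.

```typescript
// Hypothetical sketch: field names, scale, and weights are assumptions.
interface RubricScores {
  argumentStrength: number;          // each dimension scored 0..100
  clarity: number;
  methodologicalConsistency: number;
  defenseReadiness: number;
}

// Weights sum to 1 so the result stays on the same 0..100 scale.
const WEIGHTS: RubricScores = {
  argumentStrength: 0.3,
  clarity: 0.2,
  methodologicalConsistency: 0.3,
  defenseReadiness: 0.2,
};

function readinessScore(scores: RubricScores): number {
  const keys = Object.keys(WEIGHTS) as (keyof RubricScores)[];
  let total = 0;
  for (const key of keys) {
    const value = scores[key];
    // Reject out-of-range or non-numeric inputs instead of silently scoring them.
    if (Number.isNaN(value) || value < 0 || value > 100) {
      throw new RangeError(`${key} must be between 0 and 100`);
    }
    total += WEIGHTS[key] * value;
  }
  return Math.round(total);
}
```

Keeping the weights in one visible table is one way to make a score easier to explain to students and supervisors.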

Challenges we ran into

One of the biggest challenges was ensuring that the AI did not behave like a generic chatbot. We needed structured, reliable outputs rather than verbose, unpredictable responses.

We also faced challenges in:

- Designing prompts that consistently return structured evaluation data

- Maintaining conversational context across multiple defense rounds

- Handling latency in real-time speech interactions

- Creating scoring metrics that feel academically meaningful, not arbitrary
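One common way to tame the structured-output problem is to ask the model for JSON and then validate the reply before using it. The sketch below assumes a hypothetical `Evaluation` shape (`score`, `strengths`, `weaknesses`, `followUpQuestion`); the field names are invented for illustration and are not the project's actual schema.

```typescript
// Hypothetical sketch: the Evaluation shape is an assumption.
interface Evaluation {
  score: number;            // 0..100
  strengths: string[];
  weaknesses: string[];
  followUpQuestion: string;
}

function isStringArray(value: unknown): value is string[] {
  return Array.isArray(value) && value.every((item) => typeof item === "string");
}

// Returns a validated Evaluation, or null if the model reply is not usable.
function parseEvaluation(raw: string): Evaluation | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // the reply was free text rather than JSON
  }
  if (typeof data !== "object" || data === null) return null;

  const { score, strengths, weaknesses, followUpQuestion } = data as Record<string, unknown>;
  if (typeof score !== "number" || score < 0 || score > 100) return null;
  if (!isStringArray(strengths) || !isStringArray(weaknesses)) return null;
  if (typeof followUpQuestion !== "string" || followUpQuestion.trim() === "") return null;

  return { score, strengths, weaknesses, followUpQuestion };
}
```

A `null` result can then trigger a retry or a fallback question, so a malformed reply never reaches the scoring step.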

Balancing realism with hackathon-time constraints required careful prioritization and architectural simplification.

Accomplishments that we're proud of

- Building a fully functional AI Mock Viva simulator within hackathon constraints

- Successfully integrating document-aware question generation instead of generic prompts

- Designing an adaptive questioning system that responds to student confidence

- Creating a supervision dashboard that connects AI coaching with measurable academic progress

- Delivering a working prototype that moves beyond tutoring into true simulation

We’re especially proud that SuperviseAI does not just “give answers” — it challenges them.

What we learned

Through this project, we learned:

- Effective AI in education requires structure, not just intelligence

- Prompt engineering is as much about constraints as creativity

- Simulation creates deeper learning engagement than passive feedback

- Voice interaction dramatically increases immersion and perceived realism

- Educational AI must balance rigor, clarity, and psychological safety

We also saw how AI can act as a training partner rather than a replacement for educators.

What's next for SuperviseAI

Next, we plan to:

- Expand the Mock Viva engine with multiple examiner personas (e.g., strict methodologist, theory-focused reviewer)

- Improve scoring transparency with detailed evaluation rubrics

- Add longitudinal readiness tracking across multiple defense simulations

- Introduce peer-simulation modes for debate-style training

- Pilot the system in real university environments for validation and feedback

Our long-term vision is to make AI-powered academic simulation a standard part of higher education — helping students enter high-stakes evaluations confident, prepared, and resilient.

Built With

- codex

- docker

- node.js

- openai

- postgresql

- react
