Inspiration

People with certain physical disabilities often find themselves at an immediate disadvantage in gaming and in using educational software. Some amazing people and organizations in the gaming and accessibility worlds have set out to make that statement less true: people like Bryce Johnson, who helped create the Xbox Adaptive Controller (XAC), and everyone at the SpecialEffect and AbleGamers charities. They use their time and money to build custom controllers tailored to each user's unique situation.

Here's an example of those setups:

You can see the custom buttons on the pad and the headrest as well as the custom joysticks. These types of customized controllers using the XAC let the user make the controller work for them. These are absolutely amazing developments in the accessible gaming world, but we can do more.

Fast-paced or otherwise challenging games and software still leave an uneven playing field for people with disabilities. For example, I can tap a key or click my mouse drastically faster than the person in the example above can reach for the joystick or hit a button on a pad. My required range of motion is 2 mm; his is over 12 inches.

I built SuaveKeys to level the playing field, now made even better with the power of Azure and Cognitive Services.

Lastly, I'd like to take the opportunity to thank my little brother, Bryan, for always being an inspiration to make software more accessible for all. Having had to learn remotely while homebound for the last few years, he's found ways to interact with both the tools he uses for learning and other students in ways that work for him. His inspiration drives me to help others find better ways to navigate the digital world as well.

Suave Keys in Education

Suave Keys was built to help people use any software, not just games, with more ease. As more students learn remotely, they have to use more and more software for every activity, from Zoom to Google Classroom to Microsoft Office. While many of these tools have their own accessibility features to make navigation and control a bit easier, disabled students are still at a disadvantage. By using other means like voice and expression, students can customize how they interact with the technology they now use every day, in the ways that work best for them: from shortcutting common tasks with voice-driven macros to controlling their tools without reaching for a keyboard or mouse. By spending less time fighting to make their tools work, students can spend more time and energy on their subjects and keep pace with everyone else. Remote learning has left students computer-bound, but it doesn't need to keep them keyboard-bound.

What it does

SuaveKeys lets you play games and use software with your voice and expressions alongside the usual keyboard and mouse input. It works as a distributed system, so users can connect with whatever devices they have. For example, if a user only has an Alexa speaker and their computer, they can play using Alexa; they can now also use their Android phone or iPhone through the SuaveKeys mobile app.

Here's what it looks like:

The process is essentially:

- User signs into their smart speaker and client app

- User speaks to the smart speaker or app, OR makes expressions to a webcam

- NLU and expression detection happen with LUIS and Cognitive Services

- The request goes to Voicify to add context and routing

- Voicify sends the updated request to the SuaveKeys API

- The SuaveKeys API sends the processed input to the connected client apps over websockets

- The Client app checks the input phrase against a selected keyboard profile

- The profile matches the phrase to a key or a macro

- The client app then sends the request over a serial data writer to an Arduino Leonardo

- The Arduino then sends USB keyboard commands back to the host computer

- The computer executes the action in game
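The profile lookup in the client steps above could be sketched roughly as follows. This is a minimal illustration, assuming a profile is a list of entries that map one or more spoken phrases to a key sequence (a single key being a macro of length one); `KeyboardProfile`, `ProfileEntry`, and `Resolve` are hypothetical names, not the actual SuaveKeys types.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical profile entry: spoken phrases mapped to the keys to press.
// A single-key command is simply a macro with one key.
public sealed record ProfileEntry(string[] Phrases, string[] Keys);

public sealed class KeyboardProfile
{
    // Case-insensitive so "Jump" and "jump" resolve the same way.
    private readonly Dictionary<string, string[]> _lookup =
        new Dictionary<string, string[]>(StringComparer.OrdinalIgnoreCase);

    public KeyboardProfile(IEnumerable<ProfileEntry> entries)
    {
        foreach (var entry in entries)
            foreach (var phrase in entry.Phrases)
                _lookup[phrase.Trim()] = entry.Keys; // later entries win on duplicate phrases
    }

    // Returns the keys for a recognized phrase, or an empty array when the
    // phrase is not bound in this profile.
    public string[] Resolve(string phrase) =>
        _lookup.TryGetValue(phrase.Trim(), out var keys) ? keys : Array.Empty<string>();
}
```

On a match, the client would forward the returned keys toward the Arduino; an empty result means the active profile simply ignores that phrase.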

The app also allows the user to customize their profiles from their phone as well as their desktop client. So if you want to quickly create a new command or macro, you can register it right within the app.

Here's a quick GIF of it in action in Fall Guys, where I use my voice, facial expressions, and hand gestures to control the character.

In the bottom left you can see my phone screen, where I say "attack". That triggers the matching intent in LUIS, which is sent on to Voicify, then to the SuaveKeys API, then to my desktop, then to the Arduino, and finally fires the gun in the game for a headshot.

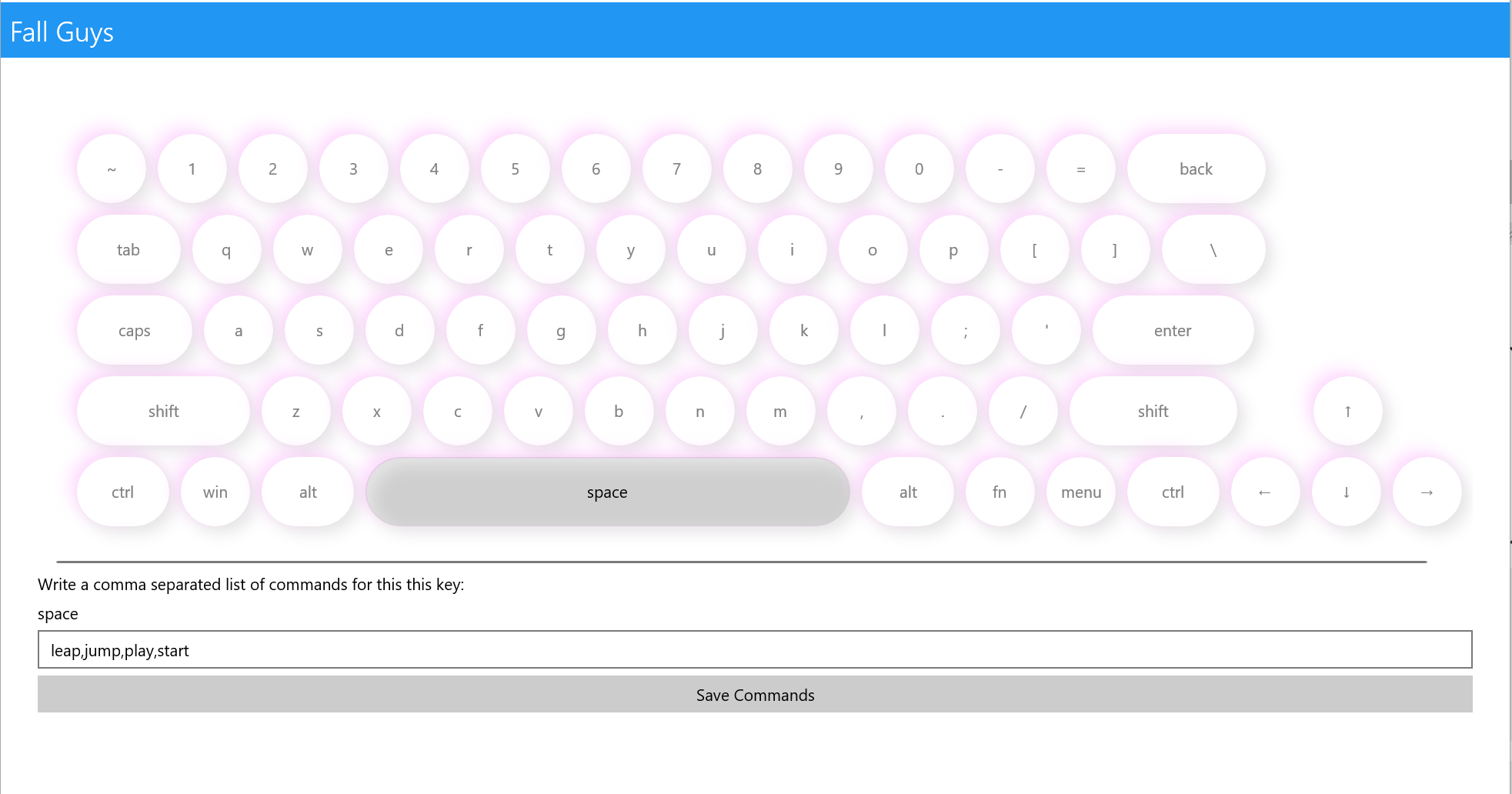

Here's an example of a Fall Guys profile of commands - select a key, give a list of commands, and when you speak them, it works!

You can also add macros to a profile:

Supported Input Platforms

Suave Keys is meant to be usable and useful for anyone on any device, so it supports voice and expression input from:

- Alexa Skill: Voice

- Google Action: Voice

- Bixby Capsule: Voice

- Android: Voice and Expression

- iOS: Voice and Expression

- Windows (UWP): Voice and Expression

- Twitch chat: Distributed chat

- Snapchat: Expression

You can also use any combination of these at the same time! Want to use the microphone on your desktop but video from your Android phone? Great! No mic on your PC but a webcam? Use Alexa for voice and your camera for expression.

How I built it

The SuaveKeys mobile and desktop apps are built using C#, .NET, and Xamarin with the help of LUIS, Voicify, Azure App Service, Azure Postgres, Azure Cognitive Services, SignalR, and a whole lot of abstraction and dependency injection.

Infrastructure and Azure Resources

This project uses many different Azure resources and services to run at scale:

- Azure App Service: Hosts the .NET Core Web API and SignalR project

- Azure PostgreSQL: Data storage for user info and keyboard preferences

- LUIS: NLU processing before detected inputs are sent to the SuaveKeys API

- Azure Cognitive Services Face API: Detects expressions in the live video feed so they can be mapped to key inputs

Software Implementation

While the SuaveKeys API and Authentication layers already existed, we were able to build the client apps to act as both ends of the equation.

Each page in the app is built using XAML, C#, and MVVM. To handle differences in platforms such as:

- Speech to text providers

- Camera previews and frame ingestion

- UI differences

- Changes in business logic

I built a dependency abstraction: we define an interface in the shared code, implement it separately in each platform project, and then inject that implementation back into the shared code.
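A minimal sketch of that pattern, assuming a hand-rolled container. The interface shape is inferred from how the view model uses `ISpeechToTextService`; `SimpleContainer` and `FakeSpeechToTextService` are hypothetical stand-ins, since the real app resolves services through `App.Current.Container`, whose implementation isn't shown.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Shared code: the contract the view models depend on.
public class SpeechRecognizedEventArgs : EventArgs
{
    public string Speech { get; set; }
}

public interface ISpeechToTextService
{
    event EventHandler<SpeechRecognizedEventArgs> OnSpeechRecognized;
    Task InitializeAsync();
    Task StartAsync();
}

// Hypothetical minimal container: each platform project registers its own
// implementation at startup, and shared code resolves it by interface.
public sealed class SimpleContainer
{
    private readonly Dictionary<Type, Func<object>> _factories = new Dictionary<Type, Func<object>>();

    public void Register<TService>(Func<TService> factory) where TService : class =>
        _factories[typeof(TService)] = () => factory();

    public TService Resolve<TService>() where TService : class =>
        _factories.TryGetValue(typeof(TService), out var factory)
            ? (TService)factory()
            : throw new InvalidOperationException($"No registration for {typeof(TService).Name}");
}

// Hypothetical stand-in for a platform implementation such as the Android one.
public sealed class FakeSpeechToTextService : ISpeechToTextService
{
    public event EventHandler<SpeechRecognizedEventArgs> OnSpeechRecognized;

    public Task InitializeAsync() => Task.CompletedTask;

    public Task StartAsync()
    {
        // Pretend the platform recognizer heard "jump".
        OnSpeechRecognized?.Invoke(this, new SpeechRecognizedEventArgs { Speech = "jump" });
        return Task.CompletedTask;
    }
}
```

Shared code only ever sees `ISpeechToTextService`, so swapping the fake for a real platform service is a one-line change in the registration.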

For example, here is the ViewModel that handles the speech-to-text flow, which lets us talk to the app and have it act on what we say:

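```csharp
using System.Windows.Input;
using Xamarin.Forms;

public class MicrophonePageViewModel : BaseViewModel
{
    private readonly ISpeechToTextService _speechToTextService;
    private readonly IKeyboardService _keyboardService;

    public ICommand StartCommand { get; set; }
    public ICommand StopCommand { get; set; }
    public bool IsListening { get; set; }

    public MicrophonePageViewModel()
    {
        // Resolve the platform-specific implementations registered at startup.
        _speechToTextService = App.Current.Container.Resolve<ISpeechToTextService>();
        _keyboardService = App.Current.Container.Resolve<IKeyboardService>();
        _speechToTextService.OnSpeechRecognized += SpeechToTextService_OnSpeechRecognized;

        StartCommand = new Command(async () =>
        {
            // Call the service directly: `await service?.Method()` would throw
            // on a null Task anyway, so the null-conditional only hid errors.
            await _speechToTextService.InitializeAsync();
            // Set before starting so the recognition handler re-arms the recognizer.
            IsListening = true;
            await _speechToTextService.StartAsync();
        });

        StopCommand = new Command(() =>
        {
            // Stop re-arming the recognizer after the current phrase.
            IsListening = false;
        });
    }

    private async void SpeechToTextService_OnSpeechRecognized(object sender, Models.SpeechRecognizedEventArgs e)
    {
        // Forward the recognized phrase to the keyboard service.
        _keyboardService?.Press(e.Speech);

        // Keep listening until the user taps "stop".
        if (IsListening)
            await _speechToTextService.StartAsync();
    }
}
```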

This means we need to implement and inject our IKeyboardService and ISpeechToTextService. To let Android use its built-in speech recognizer activity and pass the result to LUIS and then Voicify, we implement it like this:

```csharp
using System;
using System.Threading.Tasks;
using Android.Speech;

public class AndroidSpeechToTextService : ISpeechToTextService
{
    private readonly MainActivity _context;
    private readonly ILanguageService _languageService;
    private readonly ICustomAssistantApi _customAssistantApi;
    private readonly IAuthService _authService;
    private string sessionId;

    public event EventHandler<SpeechRecognizedEventArgs> OnSpeechRecognized;

    public AndroidSpeechToTextService(MainActivity context,
        ILanguageService languageService,
        ICustomAssistantApi customAssistantApi,
        IAuthService authService)
    {
        _context = context;
        _languageService = languageService;
        _customAssistantApi = customAssistantApi;
        _authService = authService;

        // MainActivity raises this with the text returned by the recognizer activity.
        _context.OnSpeechRecognized += Context_OnSpeechRecognized;
    }

    private async void Context_OnSpeechRecognized(object sender, SpeechRecognizedEventArgs e)
    {
        try
        {
            // No ConfigureAwait(false): subscribers restart the recognizer
            // activity, which has to happen on the UI thread.
            var languageResult = await _languageService.ProcessLanguage(e.Speech);

            // Only forward to Voicify when LUIS produced an intent.
            if (languageResult?.Data != null)
            {
                var tokenResult = await _authService.GetCurrentAccessToken();
                var voicifyResponse = await _customAssistantApi.HandleRequestAsync(VoicifyKeys.ApplicationId, VoicifyKeys.ApplicationSecret, new CustomAssistantRequestBody(
                    requestId: Guid.NewGuid().ToString(),
                    context: new CustomAssistantRequestContext(sessionId,
                        noTracking: false,
                        requestType: "IntentRequest",
                        requestName: languageResult.Data.Name,
                        slots: languageResult.Data.Slots,
                        originalInput: e.Speech,
                        channel: "Android App",
                        requiresLanguageUnderstanding: false, // LUIS already handled NLU
                        locale: "en-us"),
                    new CustomAssistantDevice(Guid.NewGuid().ToString(), "Android Device"),
                    new CustomAssistantUser(sessionId, "Android User")
                ));
            }
        }
        catch (Exception ex)
        {
            // An exception escaping an async void handler would crash the app.
            Console.WriteLine(ex);
        }

        // Always notify subscribers so the view model can keep listening.
        OnSpeechRecognized?.Invoke(this, e);
    }

    public Task InitializeAsync()
    {
        // New Voicify session per listening run; nothing else to initialize.
        sessionId = Guid.NewGuid().ToString();
        return Task.CompletedTask;
    }

    public Task StartAsync()
    {
        // Launch Android's built-in speech recognizer; the result is delivered
        // to MainActivity (request code VOICE_RESULT), which raises OnSpeechRecognized.
        var voiceIntent = new Android.Content.Intent(RecognizerIntent.ActionRecognizeSpeech);
        voiceIntent.PutExtra(RecognizerIntent.ExtraLanguageModel, RecognizerIntent.LanguageModelFreeForm);
        // Silence and length values are hints; some recognizers ignore them.
        voiceIntent.PutExtra(RecognizerIntent.ExtraSpeechInputCompleteSilenceLengthMillis, 1500);
        voiceIntent.PutExtra(RecognizerIntent.ExtraSpeechInputPossiblyCompleteSilenceLengthMillis, 1500);
        voiceIntent.PutExtra(RecognizerIntent.ExtraSpeechInputMinimumLengthMillis, 15000);
        voiceIntent.PutExtra(RecognizerIntent.ExtraMaxResults, 1);
        voiceIntent.PutExtra(RecognizerIntent.ExtraLanguage, Java.Util.Locale.Default);
        _context.StartActivityForResult(voiceIntent, MainActivity.VOICE_RESULT);
        return Task.CompletedTask;
    }
}
```

The gist: kick off speech recognition, send the recognized text to our ILanguageService (where the LUIS call is implemented), then pass the processed result on to Voicify through ICustomAssistantApi.

Here's the gist of the LuisLanguageUnderstandingService that is then injected into our Android service:

```csharp
using System;
using System.Linq;
using System.Net.Http;
using System.Threading.Tasks;
using Newtonsoft.Json;
using Newtonsoft.Json.Linq;

public class LuisLanguageUnderstandingService : ILanguageService
{
    private readonly HttpClient _client;

    public LuisLanguageUnderstandingService(HttpClient client)
    {
        _client = client;
    }

    public async Task<Result<Intent>> ProcessLanguage(string input)
    {
        try
        {
            // LUIS v3 prediction endpoint. The user's text must be URL-encoded,
            // otherwise phrases containing '&', '#', or '?' break the query string.
            var url = "https://suavekeys.cognitiveservices.azure.com/luis/prediction/v3.0/apps/fda4acbe-3c37-410d-a630-c66ec1722b12/slots/production/predict"
                + $"?subscription-key={LuisKeys.PredictionKey}&verbose=true&show-all-intents=true&log=true&query={Uri.EscapeDataString(input)}";

            var result = await _client.GetAsync(url);
            if (!result.IsSuccessStatusCode)
                return new InvalidResult<Intent>("Unable to handle request/response from LUIS");

            var json = await result.Content.ReadAsStringAsync();
            var luisResponse = JsonConvert.DeserializeObject<LuisPredictionResponse>(json);

            // Map to the simplified intent model. "$instance" is verbose
            // metadata (offsets, scores), not an entity.
            var model = new Intent
            {
                Name = luisResponse.Prediction.TopIntent,
                Slots = luisResponse.Prediction.Entities?
                    .Where(kvp => kvp.Key != "$instance")
                    .Select(kvp => new Slot
                    {
                        Name = kvp.Key,
                        SlotType = kvp.Key,
                        Value = kvp.Value.FirstOrDefault()?.Value<string>()
                    })
                    .ToArray()
            };

            return new SuccessResult<Intent>(model);
        }
        catch (Exception ex)
        {
            Console.WriteLine(ex);
            return new UnexpectedResult<Intent>();
        }
    }
}
```

Here, we send the request to our LUIS app, then map the output to a simplified model that we can send to the Voicify app.
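To make the mapping step concrete without the app's `LuisPredictionResponse` class, here is a sketch that reads a LUIS v3 prediction body with System.Text.Json and applies the same rules as the code above: use `prediction.topIntent` as the intent name, skip the verbose `$instance` metadata, and keep the first value of each entity when it is a string. The `Intent` and `Slot` records and `LuisResponseMapper` are simplified, hypothetical versions of the app's types.

```csharp
using System.Collections.Generic;
using System.Text.Json;

// Hypothetical simplified models (the app's own Intent/Slot classes aren't shown).
public sealed record Slot(string Name, string SlotType, string Value);
public sealed record Intent(string Name, Slot[] Slots);

public static class LuisResponseMapper
{
    // Maps a LUIS v3 prediction response body to a simplified intent.
    public static Intent Map(string json)
    {
        using var doc = JsonDocument.Parse(json);
        var prediction = doc.RootElement.GetProperty("prediction");
        var name = prediction.GetProperty("topIntent").GetString();

        var slots = new List<Slot>();
        if (prediction.TryGetProperty("entities", out var entities))
        {
            foreach (var entity in entities.EnumerateObject())
            {
                // "$instance" holds verbose metadata, not entity values.
                if (entity.Name == "$instance") continue;

                // Keep the first value only if it is a plain string.
                string value = null;
                if (entity.Value.ValueKind == JsonValueKind.Array && entity.Value.GetArrayLength() > 0)
                {
                    var first = entity.Value[0];
                    if (first.ValueKind == JsonValueKind.String) value = first.GetString();
                }
                slots.Add(new Slot(entity.Name, entity.Name, value));
            }
        }
        return new Intent(name, slots.ToArray());
    }
}
```

Entity types such as list entities return nested arrays rather than strings; this sketch leaves their value as null, and a fuller mapper would need to handle those shapes explicitly.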

So all-in-all the flow of data/logic is:

- User signs in

- User goes to microphone page

- User taps "start"

- User speaks

- Android STT service listens and processes text

- Android STT service sends the output text to LUIS for alignment

- Android STT service sends the aligned natural language to Voicify

- Voicify processes the aligned NL against the built app

- Voicify sends request to SuaveKeys API webhook

- SuaveKeys API sends websocket request to any connected client (UWP app)

- UWP app takes request and sends it to Arduino via serial connection

- Arduino sends USB data for keyboard input

- Action happens in the game or other software
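The serial hop between the UWP client and the Arduino isn't shown in the project, so the following is only a sketch under an assumed wire format of one newline-terminated `PRESS <key>` line per key. Writing to a plain `Stream` keeps it testable; on desktop .NET the `BaseStream` of a `System.IO.Ports.SerialPort` could be passed in, whereas a UWP app would go through `Windows.Devices.SerialCommunication` instead. `ArduinoKeyWriter` and the message format are hypothetical, and the Leonardo firmware would have to parse the same format.

```csharp
using System;
using System.IO;
using System.Text;
using System.Threading.Tasks;

// Hypothetical writer for an assumed line-based protocol: "PRESS <key>\n".
public sealed class ArduinoKeyWriter
{
    private readonly Stream _serial;

    public ArduinoKeyWriter(Stream serial) =>
        _serial = serial ?? throw new ArgumentNullException(nameof(serial));

    // Sends each key of a command or macro as its own line, in order.
    public async Task SendKeysAsync(params string[] keys)
    {
        foreach (var key in keys)
        {
            // A newline inside a key name would corrupt the line framing.
            if (string.IsNullOrWhiteSpace(key) || key.Contains('\n'))
                throw new ArgumentException($"Invalid key name: '{key}'", nameof(keys));

            var frame = Encoding.ASCII.GetBytes($"PRESS {key}\n");
            await _serial.WriteAsync(frame, 0, frame.Length);
        }
        await _serial.FlushAsync();
    }
}
```

Line-based text framing is easy to debug from a serial monitor; a compact binary format would save bytes but is harder to inspect by hand.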

Expression Management with Webcams and Face API

On top of using your voice with the mic on the device of your choice, you can also use the webcam on either your desktop or mobile device to map expressions to keyboard commands such as:

- Smiling

- Head position (pitch, yaw, roll)

- Emotion

This is implemented with another platform-specific abstraction, where each platform implements a custom control that:

- Gets the user's camera permissions

- Projects a preview of the camera feed to the screen

- Grabs the current preview frame every 500ms (configurable)

- Encodes the preview frame to JPEG

- Sends the JPEG frame to the Azure Face API to detect the face elements

- Determines the expressions and translates them to Suave Keys commands

- Sends the command to the Suave Keys API to eventually send to the final keyboard execution on the client device

Here's an example of doing so with a custom CameraFragment in the Android application:

```csharp
using System;
using System.IO;
using System.Threading.Tasks;
using Android.Graphics;
using Android.Hardware.Camera2;
using Android.Hardware.Camera2.Params;
using Android.OS;
using Android.Runtime;
using Android.Views;
using Java.Lang;
using Java.Util.Concurrent;
using Xamarin.Forms;
using Xamarin.Forms.Platform.Android;

class CameraFragment : Fragment, TextureView.ISurfaceTextureListener
{
    // ... private fields removed for readability

    public CameraExpressionDetectionView Element { get; set; }

    public CameraFragment()
    {
    }

    public CameraFragment(IntPtr javaReference, JniHandleOwnership transfer) : base(javaReference, transfer)
    {
    }

    public override Android.Views.View OnCreateView(LayoutInflater inflater, ViewGroup container, Bundle savedInstanceState) =>
        inflater.Inflate(Resource.Layout.CameraFragment, null);

    public override void OnViewCreated(Android.Views.View view, Bundle savedInstanceState) =>
        texture = view.FindViewById<AutoFitTextureView>(Resource.Id.cameratexture);

    public async Task RetrieveCameraDevice(bool force = false)
    {
        await RequestCameraPermissions();
        if (!cameraPermissionsGranted)
            return;

        if (!captureSessionOpenCloseLock.TryAcquire(2500, TimeUnit.Milliseconds))
            throw new RuntimeException("Timeout waiting to lock camera opening.");

        IsBusy = true;
        cameraId = GetCameraId();

        if (string.IsNullOrEmpty(cameraId))
        {
            IsBusy = false;
            captureSessionOpenCloseLock.Release();
            Console.WriteLine("No camera found");
        }
        else
        {
            try
            {
                CameraCharacteristics characteristics = Manager.GetCameraCharacteristics(cameraId);
                StreamConfigurationMap map = (StreamConfigurationMap)characteristics.Get(CameraCharacteristics.ScalerStreamConfigurationMap);

                // Pick a preview size that fits the view, capped by the largest JPEG output size.
                previewSize = ChooseOptimalSize(map.GetOutputSizes(Class.FromType(typeof(SurfaceTexture))),
                    texture.Width, texture.Height, GetMaxSize(map.GetOutputSizes((int)ImageFormatType.Jpeg)));
                sensorOrientation = (int)characteristics.Get(CameraCharacteristics.SensorOrientation);
                cameraType = (LensFacing)(int)characteristics.Get(CameraCharacteristics.LensFacing);

                if (Resources.Configuration.Orientation == Android.Content.Res.Orientation.Landscape)
                    texture.SetAspectRatio(previewSize.Width, previewSize.Height);
                else
                    texture.SetAspectRatio(previewSize.Height, previewSize.Width);

                // Bridge the callback-based OpenCamera API to an awaitable Task.
                initTaskSource = new TaskCompletionSource<CameraDevice>();
                Manager.OpenCamera(cameraId, new CameraStateListener
                {
                    OnOpenedAction = device => initTaskSource?.TrySetResult(device),
                    OnDisconnectedAction = device =>
                    {
                        initTaskSource?.TrySetResult(null);
                        CloseDevice(device);
                    },
                    OnErrorAction = (device, error) =>
                    {
                        // Complete with null: this device is closed below and must not be used.
                        initTaskSource?.TrySetResult(null);
                        Console.WriteLine($"Camera device error: {error}");
                        CloseDevice(device);
                    },
                    OnClosedAction = device =>
                    {
                        initTaskSource?.TrySetResult(null);
                        CloseDevice(device);
                    }
                }, backgroundHandler);

                captureSessionOpenCloseLock.Release();
                device = await initTaskSource.Task;
                initTaskSource = null;

                if (device != null)
                    await PrepareSession();
            }
            catch (Java.Lang.Exception ex)
            {
                // Interpolate the exception; Console.WriteLine(string, object) ignored it.
                Console.WriteLine($"Failed to open camera: {ex}");
                Available = false;
            }
            finally
            {
                IsBusy = false;
            }
        }
    }

    public void UpdateRepeatingRequest()
    {
        if (session == null || sessionBuilder == null)
            return;

        IsBusy = true;
        try
        {
            if (repeatingIsRunning)
                session.StopRepeating();

            // Let the camera's automatic exposure/focus/white-balance routines run.
            sessionBuilder.Set(CaptureRequest.ControlMode, (int)ControlMode.Auto);
            sessionBuilder.Set(CaptureRequest.ControlAeMode, (int)ControlAEMode.On);
            session.SetRepeatingRequest(sessionBuilder.Build(), listener: null, backgroundHandler);
            repeatingIsRunning = true;
        }
        catch (Java.Lang.Exception error)
        {
            Console.WriteLine($"Update preview exception: {error}");
        }
        finally
        {
            IsBusy = false;
        }
    }

    // ... permission methods removed for readability

    Bitmap captureBitmap;
    bool _readyToProcessFrame = false;

    async void TextureView.ISurfaceTextureListener.OnSurfaceTextureAvailable(SurfaceTexture surface, int width, int height)
    {
        View?.SetBackgroundColor(Element.BackgroundColor.ToAndroid());
        cameraTemplate = CameraTemplate.Preview;

        // Reusable bitmap sized to the preview surface.
        captureBitmap = Bitmap.CreateBitmap(width, height, Bitmap.Config.Argb8888);

        // Every 500 ms, allow the next updated frame to be processed.
        Device.StartTimer(TimeSpan.FromMilliseconds(500), () =>
        {
            _readyToProcessFrame = true;
            return true; // true keeps the timer repeating; false stops it
        });

        await RetrieveCameraDevice();
    }

    bool TextureView.ISurfaceTextureListener.OnSurfaceTextureDestroyed(SurfaceTexture surface)
    {
        CloseDevice();
        return true;
    }

    void TextureView.ISurfaceTextureListener.OnSurfaceTextureSizeChanged(SurfaceTexture surface, int width, int height) =>
        ConfigureTransform(width, height);

    void TextureView.ISurfaceTextureListener.OnSurfaceTextureUpdated(SurfaceTexture surface)
    {
        if (captureBitmap == null || !_readyToProcessFrame)
            return;

        _readyToProcessFrame = false;
        using (var stream = new MemoryStream())
        {
            // Copy the current preview frame, encode it as JPEG, and hand it off for detection.
            var bitmap = texture.GetBitmap(captureBitmap);
            bitmap.Compress(Bitmap.CompressFormat.Jpeg, 100, stream);
            Element?.ProcessFrameStream(stream.ToArray());
        }
    }
}
```
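The fragment hands each JPEG frame to `Element.ProcessFrameStream`, whose body isn't shown. As a rough sketch of the request such a method might send, the block below builds a Face API v1.0 `detect` call with the frame as a binary body. The endpoint and key are placeholders, `FaceDetectRequest` is a hypothetical helper, and the attribute list (`headPose`, `smile`, `emotion`) is an assumption chosen to match the expressions listed earlier. Microsoft has since retired some facial attributes, including `smile` and `emotion`, so check what your Face resource still returns.

```csharp
using System;
using System.Net.Http;
using System.Net.Http.Headers;

public static class FaceDetectRequest
{
    // Builds a Face API v1.0 "detect" request for one JPEG frame.
    // The endpoint is expected to end with "/", e.g. "https://<resource>.cognitiveservices.azure.com/".
    public static HttpRequestMessage Build(Uri endpoint, string subscriptionKey, byte[] jpegFrame)
    {
        var uri = new Uri(endpoint, "face/v1.0/detect?returnFaceId=false&returnFaceAttributes=headPose,smile,emotion");
        var request = new HttpRequestMessage(HttpMethod.Post, uri)
        {
            // Raw image bytes are sent as application/octet-stream.
            Content = new ByteArrayContent(jpegFrame)
        };
        request.Content.Headers.ContentType = new MediaTypeHeaderValue("application/octet-stream");
        request.Headers.Add("Ocp-Apim-Subscription-Key", subscriptionKey);
        return request;
    }
}
```

A caller would send it with a shared `HttpClient` (`await client.SendAsync(request)`) and parse the returned JSON array of detected faces.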

So if you smile at your camera, the next frame captured on the 500 ms timer will detect the smile and send "smile" as a command to the API, the same way that saying the word "smile" to your voice input device would.
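The translation from Face API attributes to commands could look roughly like the sketch below. The thresholds, the command names other than "smile", and the yaw sign convention are all assumptions for illustration; `FaceAttributes` is a hypothetical DTO holding values already parsed from the detect response (smile confidence from 0 to 1, head yaw in degrees, per-emotion confidences).

```csharp
using System.Collections.Generic;
using System.Linq;

// Hypothetical DTO with the few attributes this sketch uses.
public sealed class FaceAttributes
{
    public double Smile { get; set; }  // confidence, 0..1
    public double Yaw { get; set; }    // degrees
    public Dictionary<string, double> Emotion { get; set; } = new Dictionary<string, double>();
}

public static class ExpressionMapper
{
    // Illustrative thresholds; in practice these would be tuned per user.
    public const double SmileThreshold = 0.6;
    public const double YawThresholdDegrees = 20;
    public const double EmotionThreshold = 0.7;

    public static IReadOnlyList<string> ToCommands(FaceAttributes face)
    {
        var commands = new List<string>();

        if (face.Smile >= SmileThreshold) commands.Add("smile");

        // Assumes positive yaw means the head is turned right; verify on real frames.
        if (face.Yaw >= YawThresholdDegrees) commands.Add("look right");
        else if (face.Yaw <= -YawThresholdDegrees) commands.Add("look left");

        // Strongest emotion, ignoring "neutral" and "happiness" (covered by smile).
        var dominant = face.Emotion
            .Where(e => e.Key != "neutral" && e.Key != "happiness")
            .OrderByDescending(e => e.Value)
            .FirstOrDefault();
        if (dominant.Key != null && dominant.Value >= EmotionThreshold) commands.Add(dominant.Key);

        return commands;
    }
}
```

Each returned string would then be sent to the SuaveKeys API like a spoken phrase, so the user's keyboard profile decides which key it presses.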

Going forward, a custom face model could go well beyond what Cognitive Services offers out of the box, for example:

- Detecting blinking

- Detecting eyebrow raising

- Detecting expression change speed

Extendability

Suave Keys is built to reach any user, anywhere, on any device. Letting other developers build tools that provide new kinds of input where Suave Keys doesn't have one yet is an important step toward that. To build an extension, developers authenticate the user with the OAuth authorization code grant flow using a certified client, then send input on the user's behalf to any of their output devices.
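For reference, the two requests of a standard OAuth 2.0 authorization code grant (RFC 6749, section 4.1) can be sketched as below. The endpoints, client ID, and scope are placeholders rather than the real SuaveKeys values, and `OAuthCodeGrant` is a hypothetical helper.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Net.Http;

public static class OAuthCodeGrant
{
    // Step 1: send the user's browser here to sign in and approve the extension.
    // Note: this replaces any query already present on authorizeEndpoint.
    public static Uri BuildAuthorizeUri(Uri authorizeEndpoint, string clientId, Uri redirectUri, string scope, string state)
    {
        var query = new Dictionary<string, string>
        {
            ["response_type"] = "code",
            ["client_id"] = clientId,
            ["redirect_uri"] = redirectUri.ToString(),
            ["scope"] = scope,
            ["state"] = state, // random per request; compare on callback to prevent CSRF
        };
        var encoded = string.Join("&", query.Select(kv => $"{Uri.EscapeDataString(kv.Key)}={Uri.EscapeDataString(kv.Value)}"));
        return new UriBuilder(authorizeEndpoint) { Query = encoded }.Uri;
    }

    // Step 2: exchange the returned code for tokens. This runs server side,
    // where the client secret can be kept private.
    public static FormUrlEncodedContent BuildTokenRequest(string code, Uri redirectUri, string clientId, string clientSecret) =>
        new FormUrlEncodedContent(new Dictionary<string, string>
        {
            ["grant_type"] = "authorization_code",
            ["code"] = code,
            ["redirect_uri"] = redirectUri.ToString(),
            ["client_id"] = clientId,
            ["client_secret"] = clientSecret,
        });
}
```

After the user approves, the authorization server redirects back with `code` and `state`; the extension checks `state`, posts the token form to the token endpoint, and uses the returned access token when sending input on the user's behalf.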

Here are some examples of extensions that allow for other third party platforms to provide input to Suave Keys:

- Using Snapchat custom lenses to handle expression inputs with social abilities: https://github.com/SuavePirate/SuaveKeys.SnapReader

- Using Twitch chat to control someone else's games: https://github.com/SuavePirate/SuaveKeys.TwitchBot

Challenges I ran into

With regard to performance, I'm exploring a few things, including:

- Balancing the process timing while speaking

- Running partial speech results against LUIS to check whether they're valid ahead of time

- Face API costs at scale: if we can send requests from the live video feed to Cognitive Services more reliably and cost-effectively, or process the feed offline, we can shorten the time from expression to action
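For a rough sense of scale, the default 500 ms capture interval means each active camera makes the following number of Face API detect calls per hour (assuming every tick produces a request; pricing is not considered here):

$$
\frac{3600\ \text{s/hour}}{0.5\ \text{s per frame}} = 7200\ \text{detect calls per hour per active camera}
$$

Doubling the interval to 1 s halves that to 3600, at the cost of a slower response from expression to action.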

Accomplishments that I'm proud of

The biggest accomplishment was seeing it in use! I was able to play games like Call of Duty, Sekiro, and Fall Guys using my voice, and even write some code in Visual Studio with my voice (pretty meta)! It feels like a real step toward letting people with disabilities play competitive, fast-paced, and difficult games, and use software that isn't inherently accessible, with as much ease as able-bodied people, truly leveling the playing field.

What I learned

I learned a lot about camera frame processing in both UWP and Android as well as how to use the Face API. I have had previous experience with other Azure services and even many of the other Cognitive services, but the Face API was entirely new for me and works amazingly for the goal of this project.

What's next for Suave Keys

This new work on Project Suave Keys has surpassed my expectations for what the tech can do, and I'm excited to announce the next phase with the introduction of my new organization, Enabled Play!

More details about Enabled Play and its technology will follow soon. The goal of the organization is to work with community and technology leaders to bring more accessible hardware and software to the masses by creating an extendable and usable platform that enables everyone to work and play together.

As part of this, Project Suave Keys will be graduating to a more complete product: The Enabled Keyboard and Enabled Controller which will continue development on:

- Supporting more platforms and input control

- Extending developer support for extensions and first-party support in games and tools

- Performance of requests at scale

- UX design that works for everyone while also not looking like the ugly mess it is now

- Mass production of custom hardware

Be sure to follow me on Twitter and Twitch as we roll out more announcements and develop live!

https://twitter.com/suave_pirate https://twitch.com/suave_pirate
