Inspiration

A person in the U.S. has a stroke every 40 seconds. While stroke rehabilitation is proven to help recovery, long-term hospital sessions are too impractical and expensive for most; existing medical devices are costly, too. We wanted to create a cheap, home-based alternative that enables rehabilitation on your own time without sacrificing clinical-grade monitoring: Physio.

What it does

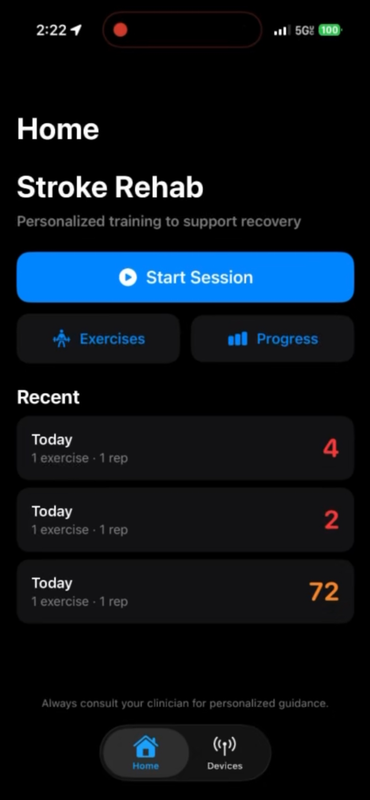

Dynamic exercise planning

Physio lets patients select specific rehabilitation movements, ranging from isolated Range of Motion (ROM) exercises to complex Activities of Daily Living (ADL). Any combination and sequence of existing movements is supported.

Real-time movement scoring

Using our custom dual-sensor wearable and an on-device neural network, the app continuously evaluates the kinematic quality of the patient's movement. It compares their trajectory against clinical stroke baselines, outputting a highly responsive 0–100 movement quality score that immediately flags spasticity or compensatory movements.

Complete privacy

Physio uses a local-only approach to keep latency low and sensitive patient info secure. No data is sent to the web or put at risk of a security breach, ever.

Auditory encouragement and feedback

Rehab is hard. We integrated ElevenLabs TTS in our iOS app to provide encouraging, real-time voice guidance to the patient based on their workout selection and current performance score, gamifying the path to recovery.

How we built it

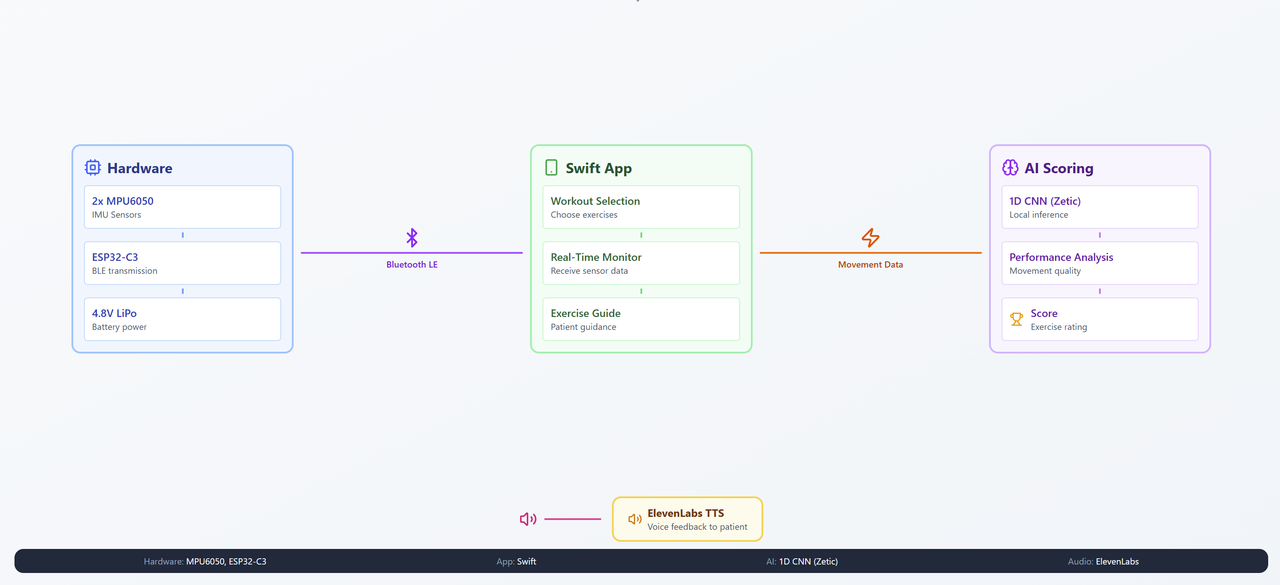

Hardware

Our embedded foundation acts as a high-throughput telemetry system. We used an ESP32-C3 microcontroller powered by a 4.8V LiPo battery, wired to two MPU6050 sensors placed on the bicep and wrist. To prevent I2C address collisions on the shared bus, we custom-routed the AD0 pin to map the sensors to 0x68 and 0x69. We also added an integrated I2C OLED screen for real-time device status and hardware debugging.
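As a small illustration of the addressing scheme, the MPU6050 exposes 7-bit I2C address 0x68 when its AD0 pin is low and 0x69 when AD0 is high. The sketch below only models that mapping; which physical sensor gets which address is an assumption, and the `SENSORS` labels are hypothetical.

```python
MPU6050_BASE_ADDRESS = 0x68  # 7-bit I2C address when AD0 is pulled low


def mpu6050_address(ad0_high: bool) -> int:
    """Return the 7-bit I2C address selected by the AD0 pin level."""
    return MPU6050_BASE_ADDRESS | int(ad0_high)


# Hypothetical assignment: the source does not say which sensor uses which address.
SENSORS = {
    "bicep": mpu6050_address(ad0_high=False),
    "wrist": mpu6050_address(ad0_high=True),
}

for name, address in SENSORS.items():
    print(f"{name}: 0x{address:02X}")
```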

Communication

A neural network can only score movement in real time if it receives a steady, low-latency data stream. We built a custom Bluetooth Low Energy (BLE) pipeline that processes data from the MPU6050 sensors and streams it to the phone. We expanded the MTU size to send a tightly packed 76-byte payload at 80 Hz, containing timestamps, pitch/roll/yaw (PRY) angles, and raw accel/gyro channels for both sensors.
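One layout that adds up to exactly 76 bytes is a single 32-bit timestamp followed by 18 32-bit floats (pitch, roll, yaw, three accel axes, and three gyro axes for each of the two sensors). That layout is an assumption made for illustration, not the confirmed firmware format, and `pack_sample` and `unpack_sample` are hypothetical helper names. The sketch also shows why the default BLE MTU is not enough: a notification carries a 3-byte ATT header, so a 76-byte payload needs an MTU of at least 79, compared with the default of 23.

```python
import struct

# Assumed layout: little-endian uint32 timestamp (ms) + 18 float32 values.
PACKET_FORMAT = "<I18f"
PACKET_SIZE = struct.calcsize(PACKET_FORMAT)  # 4 + 18 * 4 bytes

ATT_NOTIFICATION_HEADER = 3                   # opcode (1) + attribute handle (2)
MIN_ATT_MTU = PACKET_SIZE + ATT_NOTIFICATION_HEADER
SAMPLE_RATE_HZ = 80
BYTES_PER_SECOND = PACKET_SIZE * SAMPLE_RATE_HZ


def pack_sample(timestamp_ms, bicep, wrist):
    """Pack one sample; each sensor supplies 9 floats (PRY, accel xyz, gyro xyz)."""
    if len(bicep) != 9 or len(wrist) != 9:
        raise ValueError("each sensor must provide exactly 9 values")
    return struct.pack(PACKET_FORMAT, timestamp_ms, *bicep, *wrist)


def unpack_sample(payload):
    """Inverse of pack_sample: returns (timestamp_ms, bicep, wrist)."""
    fields = struct.unpack(PACKET_FORMAT, payload)
    return fields[0], list(fields[1:10]), list(fields[10:19])


print(PACKET_SIZE, MIN_ATT_MTU, BYTES_PER_SECOND)
```

Under these assumptions the stream needs roughly 6 KB/s of application throughput.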

1D CNN Model

Our AI evaluates movement by comparing patient data against the clinical JU-IMU dataset. Because time-series sensor streams are tricky, we built a custom PyTorch 1D CNN using a "shrink time, grow features" architecture. Data is pooled from diverse movements for abstract feature extraction.

We take 12 input channels, linearly interpolated to 128 timesteps, and pass them through three stride-2 convolutional blocks (kernel sizes 7 → 5 → 3). We swapped GroupNorm for BatchNorm and utilized a fixed AvgPool1d specifically to optimize the model for mobile compilation.
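The "shrink time" half of the design can be checked with plain arithmetic. The sketch below resamples one channel to 128 timesteps and traces the sequence length through three stride-2 convolutions. The padding (`kernel // 2`) and the endpoint-aligned interpolation are assumptions, since the writeup does not state them, and the function names are hypothetical. It is written in dependency-free Python rather than PyTorch so it can be run anywhere.

```python
def resample_linear(samples, target_len=128):
    """Linearly interpolate a 1-D sequence onto target_len evenly spaced points.

    Assumes the first and last output points coincide with the first and
    last input samples.
    """
    n = len(samples)
    if n < 2 or target_len < 2:
        raise ValueError("need at least two input samples and two output points")
    out = []
    for i in range(target_len):
        pos = i * (n - 1) / (target_len - 1)  # fractional index into samples
        lo = int(pos)
        hi = min(lo + 1, n - 1)
        frac = pos - lo
        out.append(samples[lo] * (1 - frac) + samples[hi] * frac)
    return out


def conv1d_out_len(length, kernel, stride=2, padding=None):
    """Output length of a 1-D convolution (dilation 1)."""
    if padding is None:
        padding = kernel // 2  # assumed padding, not stated in the writeup
    return (length + 2 * padding - kernel) // stride + 1


def trace_lengths(length=128, kernels=(7, 5, 3)):
    """Sequence length after each stride-2 block."""
    lengths = [length]
    for kernel in kernels:
        lengths.append(conv1d_out_len(lengths[-1], kernel))
    return lengths


print(trace_lengths())  # [128, 64, 32, 16]
```

With these assumptions each block halves the time axis, so a fixed-size average pool over the final 16 steps would always see the same input shape.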

iOS Swift App

The Swift app is the orchestration layer. It uses CoreBluetooth to buffer the 80 Hz stream into sliding windows. Crucially, we used Zetic MLange to compile our trained PyTorch model into a deterministic .pt2 graph that runs entirely on the iPhone's Neural Processing Unit (NPU). This ensures zero cloud-compute delay and total patient privacy.
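The windowing logic is language-independent, so here is a minimal sketch of it in Python rather than Swift. The window length (160 samples, about 2 s at 80 Hz) and hop (40 samples, about 0.5 s) are illustrative assumptions, and `SlidingWindow` is a hypothetical name, not a type from the app.

```python
from collections import deque


class SlidingWindow:
    """Collects streamed samples and emits overlapping fixed-size windows."""

    def __init__(self, window_size=160, hop=40):
        self.window_size = window_size
        self.hop = hop
        self._buffer = deque(maxlen=window_size)  # oldest samples drop off automatically
        self._since_last_emit = 0

    def push(self, sample):
        """Add one sample; return a full window when one is due, else None."""
        self._buffer.append(sample)
        self._since_last_emit += 1
        window_full = len(self._buffer) == self.window_size
        if window_full and self._since_last_emit >= self.hop:
            self._since_last_emit = 0
            return list(self._buffer)
        return None


# Usage: feed 3 seconds of fake samples and count the windows produced.
windower = SlidingWindow()
windows = []
for sample_index in range(240):
    window = windower.push(sample_index)
    if window is not None:
        windows.append(window)
print(len(windows))  # 3
```

Each emitted window would then be resampled and handed to the model for scoring.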

Challenges we ran into

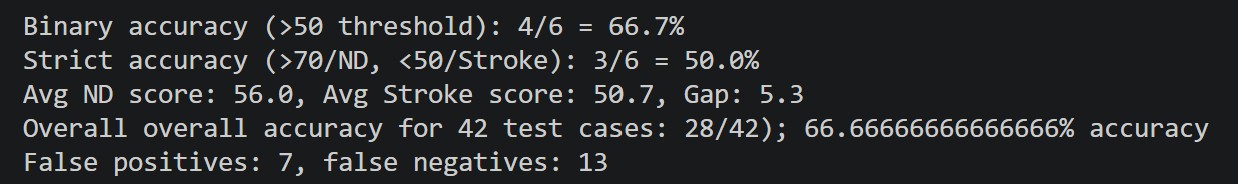

Model overfitting

Initially, our model fell into the "majority class trap," achieving 66% accuracy simply by guessing the patient was healthy every time. We had to heavily restructure our data preparation and architecture to force the model to learn the universal kinematic markers of a stroke rather than memorizing the training data for each movement.
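To make the trap concrete, the sketch below computes the accuracy of a classifier that always predicts the most common label, alongside balanced accuracy (the mean of per-class recall), which exposes it. The 66/34 split is an illustrative assumption chosen to match the 66% figure, not the actual dataset composition, and balanced accuracy is one possible diagnostic rather than the metric we necessarily used.

```python
from collections import Counter


def majority_baseline_accuracy(labels):
    """Accuracy obtained by always predicting the most common label."""
    counts = Counter(labels)
    return counts.most_common(1)[0][1] / len(labels)


def balanced_accuracy(y_true, y_pred):
    """Mean per-class recall; 0.5 for a constant predictor on two classes."""
    recalls = []
    for cls in sorted(set(y_true)):
        indices = [i for i, label in enumerate(y_true) if label == cls]
        correct = sum(1 for i in indices if y_pred[i] == cls)
        recalls.append(correct / len(indices))
    return sum(recalls) / len(recalls)


# Illustrative split only: 66 healthy recordings, 34 stroke recordings.
y_true = ["healthy"] * 66 + ["stroke"] * 34
always_healthy = ["healthy"] * len(y_true)

print(majority_baseline_accuracy(y_true))         # 0.66
print(balanced_accuracy(y_true, always_healthy))  # 0.5
```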

Data preparation

We realized that standard per-sample Z-score normalization was destroying our data: rescaling every recording to zero mean and unit variance erased the "weakness" of a stroke movement. We engineered a Global Normalization strategy, $$(x - \mu) / \sigma$$ with $$\mu$$ and $$\sigma$$ computed once over all training time-steps, which successfully preserved the cross-patient magnitude differences. We also had to implement "Side-Aware Selection" to dynamically swap sensor channels depending on whether a patient suffered left or right hemiparesis.
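The difference between the two normalization schemes shows up on a toy example. The sketch below uses a single channel and two made-up recordings, where the "weak" one is the "strong" one scaled down by 4; the data and function names are hypothetical. Per-sample Z-scoring makes the two recordings indistinguishable, while statistics fitted once on the pooled training data keep the amplitude gap.

```python
from statistics import fmean, pstdev


def per_sample_zscore(sample):
    """Normalize one recording with its own mean and standard deviation."""
    mu, sigma = fmean(sample), pstdev(sample)
    return [(x - mu) / sigma for x in sample]


def fit_global_stats(train_samples):
    """Compute one mean and standard deviation over all training time-steps."""
    flat = [x for sample in train_samples for x in sample]
    return fmean(flat), pstdev(flat)


def global_zscore(sample, mu, sigma):
    """Normalize a recording with the shared training statistics."""
    return [(x - mu) / sigma for x in sample]


# Toy single-channel recordings (illustrative values only).
strong = [0.0, 4.0, 8.0, 4.0, 0.0]
weak = [0.0, 1.0, 2.0, 1.0, 0.0]  # same shape, one quarter of the amplitude

local_strong = per_sample_zscore(strong)
local_weak = per_sample_zscore(weak)

mu, sigma = fit_global_stats([strong, weak])
global_strong = global_zscore(strong, mu, sigma)
global_weak = global_zscore(weak, mu, sigma)

print(max(local_strong) - max(local_weak))    # ~0: amplitude information is gone
print(max(global_strong) - max(global_weak))  # > 0: amplitude gap survives
```

At inference time the same `mu` and `sigma` from training would be reused, so a new patient's recording is scaled on the same footing as the training data.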

Wiring and sensor integration

Sometimes it's the hardware, not you. We rapidly iterated on our prototypes using breadboards when a sensor died, wires came loose, and existing sleeves didn't meet our needs. Quick Target runs were lifesavers.

Accomplishments that we're proud of

90.5% model accuracy

Breaking past the overfitting barrier to achieve 90.5% classification accuracy on clinical stroke data using a heavily downsized, edge-optimized 1D CNN. Global Z-score normalization, data augmentation, and hyperparameter tuning proved crucial in promoting deeper learning.

Sub-millisecond model latency

Successfully bridging a custom 80 Hz hardware pipeline to an iOS app, and executing real-time PyTorch inference locally via Zetic MLange without dropping frames or needing Wi-Fi. As soon as the phone receives the data, the model produces a score.

Building a complete, closed-loop medical device

Going from bare microcontrollers, jumper wires, and raw CSV files to a fully functioning, gamified rehabilitation iOS app in under 36 hours.

What we learned

Data + architecture are harder than the model

We learned that the depth of a neural network doesn't matter if your data scaling is wrong. Figuring out how to properly align, interpolate (to 128 timesteps), and normalize spatial human movement data took significantly more engineering effort than writing the CNN itself.

Hardware-aware programming

Low-pass noise filters, sensor faults and replacements, loose connections: we learned the hard way that building a stable embedded hack takes a lot of testing and troubleshooting, not just cranking out lines of code.

What's next for Physio

Patient arm visualization

Visualizing patients' arms in real time during training would help with correction and hand-eye coordination. Clinicians could review these animations (saved locally, of course) to help with rehab scheduling.

In-app rehab planner/calendar

Saved scores for each movement could be used to inform long-term training and planning.

Passive movement classification with smaller hardware

Reducing the size and weight of the battery setup while enclosing wires and adding a soldered PCB would enable long-term wear. This could be combined with automatic movement classification running in the background to enable passive movement scoring during everyday life.

Additional + custom movements

The unified CNN model could be extended to all the movements in the JU-IMU dataset and beyond. Since it is designed to recognize impaired kinematics across various actions, users could eventually add custom, personalized exercises.
