Use Stage Fight to prepare for presentations and public speaking

Do you suffer from stage fright?

Do you want to improve your public speaking skills?

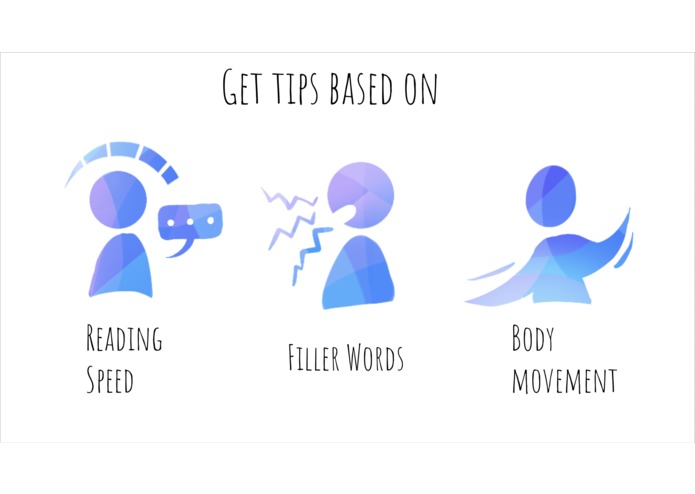

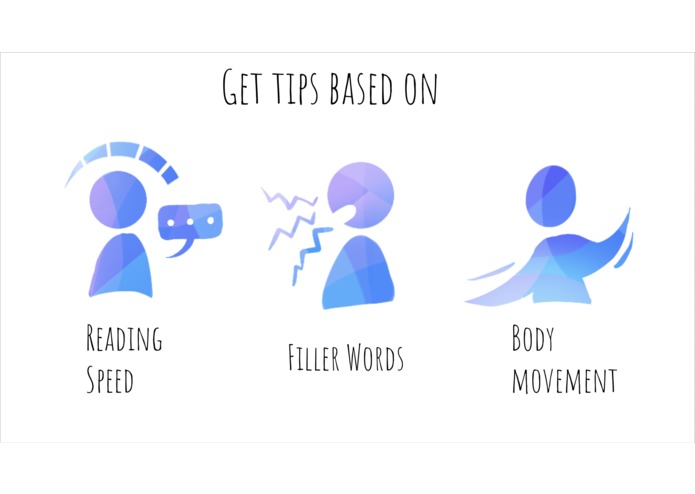

We use computer vision, machine learning, and speech processing to analyze your practice sessions. Moving too much? Speaking too fast?

We provide objective feedback on your presentation!

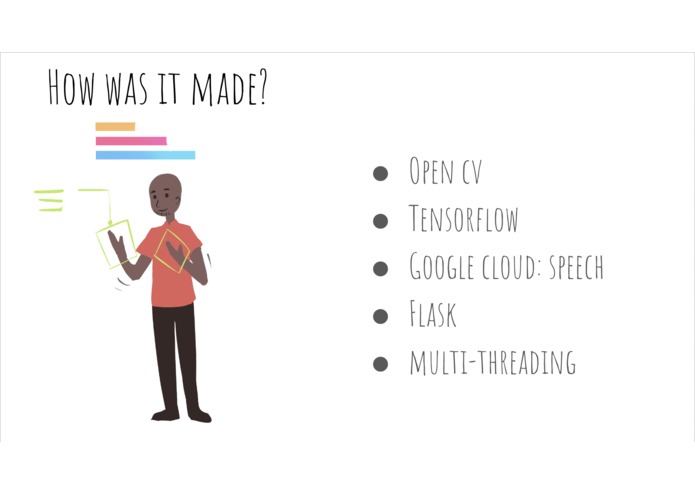

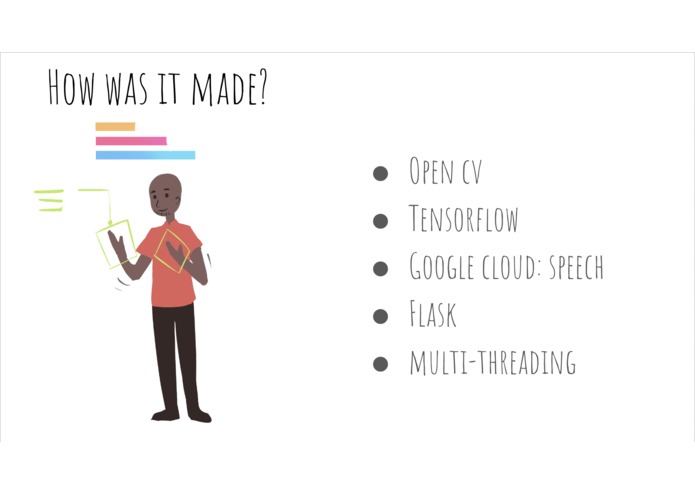

What tools did we use to build it?

Use the feedback to optimize and improve your public speaking abilities!

Use our tool to improve the way you communicate!

An end-of-session summary tells you what you did well and what could use improvement!

Inspiration

If you're lucky enough to enjoy public speaking, we're jealous of you. None of us likes public speaking, and we realized that there aren't many ways to get real-time feedback on how to improve without boring friends or family into listening to us.

We wanted to build a tool that would help us practice public speaking - whether that means giving a speech or doing an interview.

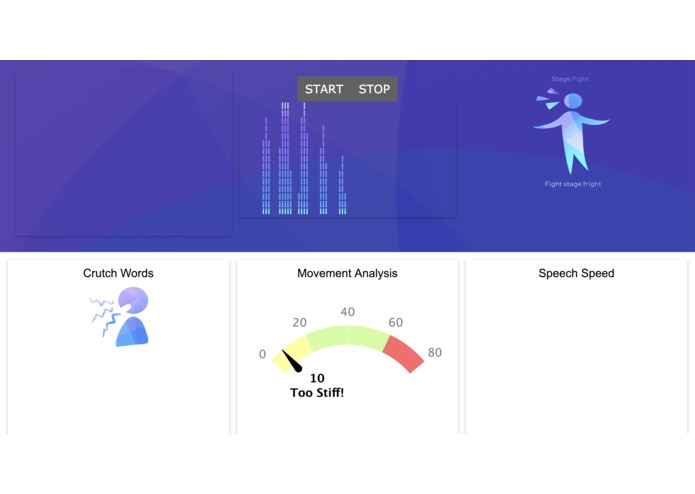

What it does

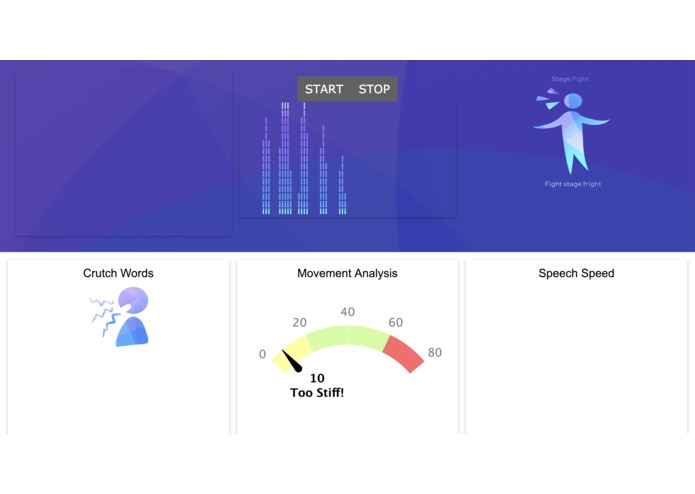

Stage Fight analyzes your voice, body movement, and word choices using several machine learning models to provide real-time, constructive feedback on your speaking. The tool can tell you whether you were too stiff, used too many crutch words (umm... like...), or spoke too fast.
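To make the crutch-word and pacing feedback concrete, here is a minimal sketch of how a transcript could be scored. It is not our actual implementation: the `CRUTCH_WORDS` list, the `speech_metrics` function, and its return keys are all hypothetical names chosen for illustration, and it assumes you already have a plain-text transcript and the recording length in seconds.

```python
import re

# Hypothetical crutch-word list, for illustration only.
CRUTCH_WORDS = {"um", "umm", "uh", "like", "basically", "actually"}


def speech_metrics(transcript: str, duration_seconds: float) -> dict:
    """Return word count, words per minute, and per-word crutch tallies."""
    # Lowercase and keep runs of letters/apostrophes as words.
    words = re.findall(r"[a-z']+", transcript.lower())

    crutch_counts = {}
    for word in words:
        if word in CRUTCH_WORDS:
            crutch_counts[word] = crutch_counts.get(word, 0) + 1

    # Guard against a zero or negative duration.
    minutes = duration_seconds / 60 if duration_seconds > 0 else 0
    words_per_minute = len(words) / minutes if minutes else 0.0

    return {
        "word_count": len(words),
        "words_per_minute": words_per_minute,
        "crutch_counts": crutch_counts,
    }


if __name__ == "__main__":
    print(speech_metrics("Um, I like, um, really like public speaking", 6.0))
```

Note that a plain word match cannot tell "like" the filler from "like" the verb, so a count like this is best read as a rough signal rather than a verdict.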

How we built it

Our platform is built on the machine learning models behind Google's Speech-to-Text API, plus OpenCV and trained models to track hand movement. Our simple backend server is built on Flask, while the frontend is built with no more than a little jQuery and JavaScript.
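As a rough illustration of how tracked hand positions could turn into "too stiff" or "moving too much" feedback, here is a small sketch. It assumes a tracker has already produced one (x, y) hand position per frame, normalized to the 0-1 range; the function names and the thresholds are hypothetical and would need tuning against real recordings.

```python
import math


def movement_score(centroids):
    """Mean distance the tracked point moves between consecutive frames."""
    if len(centroids) < 2:
        return 0.0
    total = sum(math.dist(a, b) for a, b in zip(centroids, centroids[1:]))
    return total / (len(centroids) - 1)


def classify_movement(score, stiff_below=0.01, restless_above=0.08):
    """Map a score to a label. Both thresholds are illustrative guesses."""
    if score < stiff_below:
        return "too stiff"
    if score > restless_above:
        return "moving too much"
    return "ok"


if __name__ == "__main__":
    positions = [(0.50, 0.50), (0.52, 0.51), (0.55, 0.49), (0.53, 0.50)]
    score = movement_score(positions)
    print(score, classify_movement(score))
```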

Challenges we ran into

Streaming live audio while recording from the webcam and using a pool of workers to detect hand movements, all while running the Flask server in the main thread, gets a little wild - and macOS doesn't allow recording from most of this hardware outside of the main thread. We hit lots of problems where WebSockets and threads would go missing, working on one run and failing on the next. A lot of development had to be done pair-programming style on our one Ubuntu machine. Good times!

Accomplishments that we're proud of

We overcame every challenge we ran into. Some notable wins include stringing all the components together, using efficient file reads and writes instead of trying to fix WebSockets, and cool graphs.
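For anyone curious what passing data through files instead of WebSockets can look like, here is one possible pattern, sketched under assumptions rather than copied from our code. A worker writes its latest results to a temporary file and then renames it into place, so a reader never sees a half-written file. The function names and the JSON layout are hypothetical.

```python
import json
import os
import tempfile


def write_snapshot(path, data):
    """Write data as JSON to path by writing a temp file and renaming it."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file lives in the same directory so the rename stays atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as tmp:
            json.dump(data, tmp)
        os.replace(tmp_path, path)
    except BaseException:
        # Clean up the temp file if anything went wrong before the rename.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def read_snapshot(path, default=None):
    """Return the latest snapshot, or default if none is readable yet."""
    try:
        with open(path) as f:
            return json.load(f)
    except (FileNotFoundError, json.JSONDecodeError):
        return default


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        target = os.path.join(folder, "feedback.json")
        write_snapshot(target, {"words_per_minute": 142})
        print(read_snapshot(target))
```

The server can then poll `read_snapshot` on each request, which trades a little latency for far fewer moving parts than a live socket connection.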

What we learned

A lot of technology, a lot about collaboration, and the Villager Puff matchup (we took lots of Smash breaks).
