
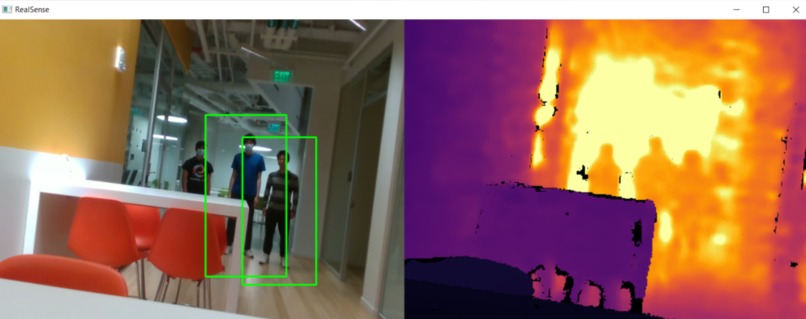

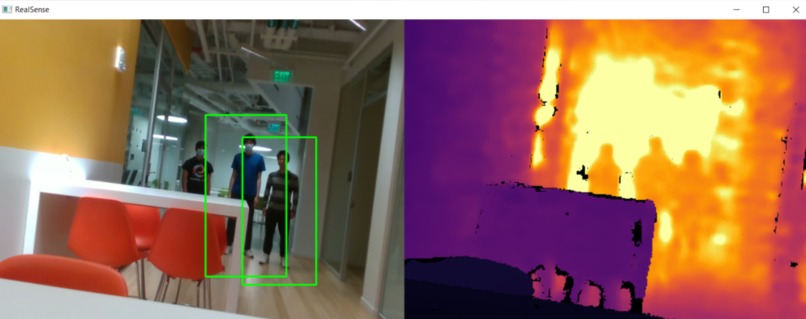

The interface for our camera feed. The left side shows RGB data and bounding boxes for detected humans, while the right side shows depth data


Our photoresistor setup for ambient light detection, which ended up not being used


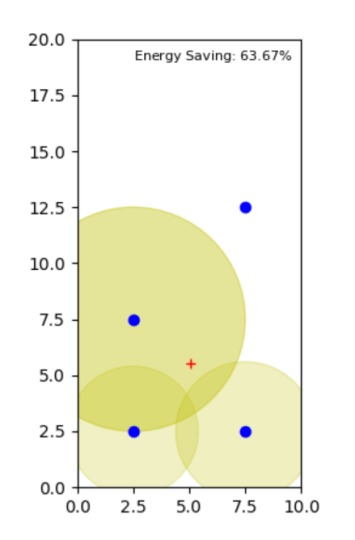

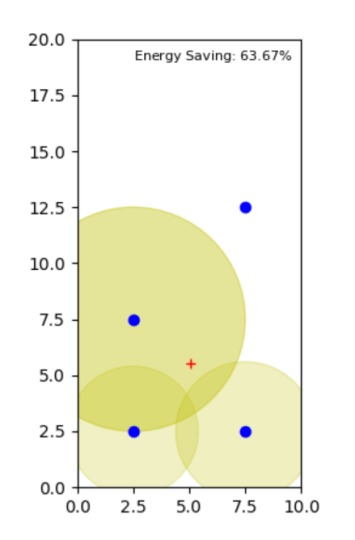

The lighting dashboard interface, showing light positions (blue), human positions (red), and energy savings

Inspiration

We came into the hackathon knowing we wanted to focus on sustainability. While looking at problems, we found that lighting is a major energy consumer, accounting for about 15% of global energy use (DOE, 2015). Our idea for this specific project came from the dark areas in the Pokémon video games, where the player can only see a limited area around them. While we didn't want as harsh a limit on visibility, we did want to dim the lights in unoccupied areas to save energy. We especially wanted to apply this to large buildings, such as Harvard's SEC, where all of the lights are often left on even when very few people are in the building. In the end, our solution dynamically tracks people and adjusts the lights to "spotlight" occupied areas, while also accounting for ambient light.

Methodology

Our program takes the video feed from an Intel RealSense D435 camera, which provides both RGB and depth data. With OpenCV, we use Histogram of Oriented Gradients (HOG) feature extraction combined with a Support Vector Machine (SVM) classifier to detect people in each frame, then combine those detections with the camera's depth data to locate each person relative to the camera. Using user-provided knowledge of the room’s layout, we then determine each person's position within the room and use a custom algorithm to set the power level of each light source. We can visualize the result in our custom simulator, which we built to show how the light from multiple sources overlaps.
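The pipeline above can be sketched as follows. This is a minimal illustration under stated assumptions, not our actual code: the bounding boxes are assumed to be `(x, y, w, h)` rectangles like those returned by OpenCV's HOG people detector (`cv2.HOGDescriptor` with `cv2.HOGDescriptor_getDefaultPeopleDetector()` and `detectMultiScale`), the depth frame is assumed to be aligned to the RGB frame and given in metres, and the camera is assumed to be mounted level (no tilt). `Intrinsics`, `CameraPose`, `person_room_position`, and `light_levels` are hypothetical names, the intrinsic values in the usage example are illustrative rather than a real D435 calibration, and `light_levels` is a stand-in "spotlight" rule, not the custom algorithm described above. The RealSense SDK's `rs2_deproject_pixel_to_point` performs the deprojection step using the camera's actual calibration.

```python
import math
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole intrinsics in pixels (illustrative, not a real D435 calibration)."""
    fx: float   # focal length along x
    fy: float   # focal length along y
    ppx: float  # principal point x
    ppy: float  # principal point y


@dataclass
class CameraPose:
    """Hypothetical user-supplied camera placement on the room's floor plan."""
    x: float    # metres
    y: float    # metres
    yaw: float  # facing direction in radians, counter-clockwise from the room's +x axis


def deproject(intr, u, v, depth_m):
    """Map pixel (u, v) at depth_m metres to camera coords (x right, y down, z forward)."""
    return ((u - intr.ppx) / intr.fx * depth_m,
            (v - intr.ppy) / intr.fy * depth_m,
            depth_m)


def person_room_position(box, depth_frame, intr, pose):
    """Place a detected person, box = (x, y, w, h) in pixels, on the floor plan."""
    bx, by, bw, bh = box
    u, v = int(bx + bw / 2), int(by + bh / 2)  # centre pixel of the bounding box
    depth = depth_frame[v][u]  # metres; assumes depth is aligned to the RGB frame
    if depth <= 0:
        return None  # a depth of 0 means the sensor had no reading at that pixel
    cam_x, _, cam_z = deproject(intr, u, v, depth)  # vertical offset is ignored
    # Project the camera's forward (z) and right (x) axes onto the floor plan.
    c, s = math.cos(pose.yaw), math.sin(pose.yaw)
    return (pose.x + cam_z * c + cam_x * s,
            pose.y + cam_z * s - cam_x * c)


def light_levels(lights, people, full_radius=2.0, fade_radius=5.0, floor=0.1):
    """Hypothetical spotlight rule: full power near anyone, fading linearly to `floor`."""
    levels = []
    for lx, ly in lights:
        level = floor  # lights far from everyone stay dimmed rather than fully off
        for px, py in people:
            d = math.hypot(lx - px, ly - py)
            if d <= full_radius:
                level = 1.0
            elif d < fade_radius:
                t = (fade_radius - d) / (fade_radius - full_radius)
                level = max(level, floor + (1.0 - floor) * t)
        levels.append(level)
    return levels


if __name__ == "__main__":
    intr = Intrinsics(fx=615.0, fy=615.0, ppx=320.0, ppy=240.0)
    pose = CameraPose(x=0.0, y=0.0, yaw=0.0)
    depth = [[3.0] * 640 for _ in range(480)]  # fake frame: everything is 3 m away
    person = person_room_position((300, 100, 40, 280), depth, intr, pose)
    print(person)  # (3.0, 0.0)
    print(light_levels([(3.0, 0.0), (10.0, 0.0)], [person]))  # [1.0, 0.1]
```

Sampling depth at a single centre pixel keeps the sketch short; a real system would likely take the median over a small window inside the box, since depth frames often contain zero-valued holes.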

Ambient Light Sensing

We also sense ambient light, so that energy isn't wasted on artificial lighting when a room is already brightly lit. Originally, we set up a photoresistor with a SparkFun RedBoard, but after running into driver issues on Windows, we pivoted to using feedback from a second camera to detect brightness. To do this, we use a three-step process: we first convert the camera's input to grayscale, then apply a box filter to blur the image, and finally sample random points within the image and average their intensities to estimate brightness. The random sampling boosts our performance significantly, since it lets us run this algorithm far faster than if we read every single pixel's intensity.
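The three-step brightness estimate above can be sketched in pure Python. This is an illustrative version under assumptions, not our actual code: the frame is assumed to be a list of rows of `(R, G, B)` tuples (OpenCV itself returns BGR NumPy arrays), the grayscale weights are the BT.601 coefficients OpenCV uses for its RGB/BGR-to-gray conversion, and the names `to_gray`, `box_mean`, and `estimate_brightness` plus the default sample count and kernel size are hypothetical. Instead of blurring the whole frame and then sampling it, the sketch computes the box-filtered value only at each sampled pixel, which gives the same numbers at a fraction of the cost. Windows are clipped at the image border, which differs from `cv2.blur`'s default border handling.

```python
import random


def to_gray(pixel):
    """Convert one (R, G, B) pixel to grayscale using BT.601 luma weights."""
    r, g, b = pixel
    return 0.299 * r + 0.587 * g + 0.114 * b


def box_mean(image, row, col, k):
    """Box filter at one pixel: mean gray value of the k x k window, clipped at borders."""
    h, w = len(image), len(image[0])
    half = k // 2
    total, count = 0.0, 0
    for r in range(max(0, row - half), min(h, row + half + 1)):
        for c in range(max(0, col - half), min(w, col + half + 1)):
            total += to_gray(image[r][c])
            count += 1
    return total / count


def estimate_brightness(image, samples=200, k=5, seed=None):
    """Average the box-filtered intensity at randomly chosen pixels (0-255 scale)."""
    rng = random.Random(seed)  # a seed makes the estimate reproducible
    h, w = len(image), len(image[0])
    total = 0.0
    for _ in range(samples):
        row, col = rng.randrange(h), rng.randrange(w)
        total += box_mean(image, row, col, k)
    return total / samples


if __name__ == "__main__":
    gray_frame = [[(100, 100, 100)] * 640 for _ in range(480)]
    print(round(estimate_brightness(gray_frame, seed=0), 1))  # 100.0
```

With the defaults, each estimate reads 200 × 25 = 5,000 pixels regardless of resolution, compared with 307,200 pixels for a full pass over a 640×480 frame (an assumed resolution), at the cost of some sampling noise in the result.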

Highlights & Takeaways

One of our group members focused on using the video input to determine people’s location within the room, and the other worked on the algorithm for determining how the lights should be powered, as well as creating a simulator for the output. Given that neither of us had worked on a large-scale software project in a while, and one of us had practically never touched Python before the start of the hackathon, we had our work cut out for us.

Our proudest moment was by far when we got our video code working: we finally saw the bounding box appear around the person in front of the camera, and position data started streaming across our terminal. However, between calibrating devices, debugging hardware issues, and a few dozen driver installations, we learned the hard way that working with external devices can be quite a challenge.

Future Steps

We've brainstormed a few ways to improve this project going forward. More optimized lighting algorithms could improve energy efficiency, and multiple cameras could be used to detect people's orientation and predict where they will move next. A discrete ambient light sensor module could also be developed for mounting anywhere the user desires. We could also develop a bulb socket adapter to retrofit existing lighting systems instead of rebuilding them from the ground up.
