2D body sim, PID control

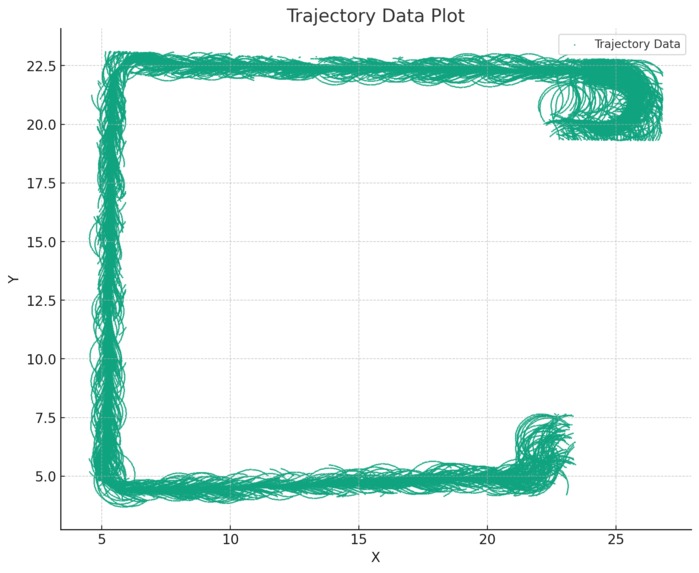

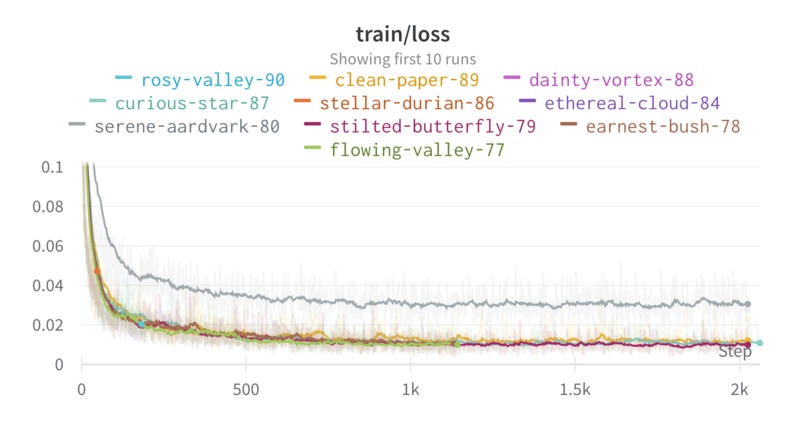

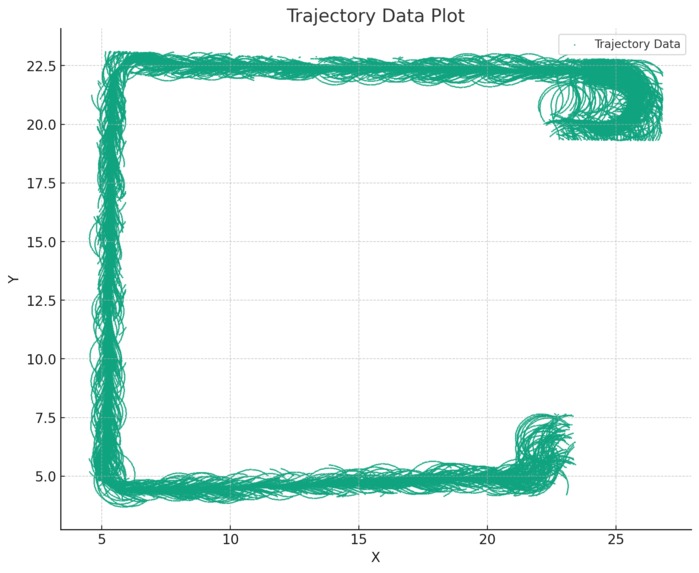

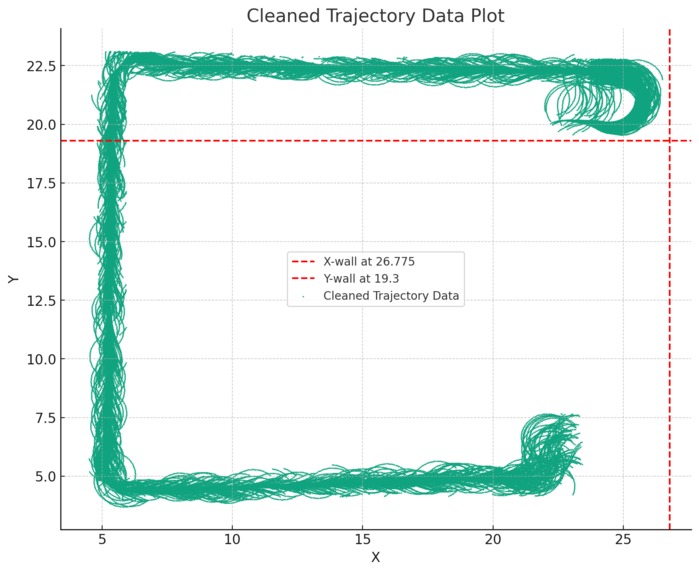

trajectory sampling plot

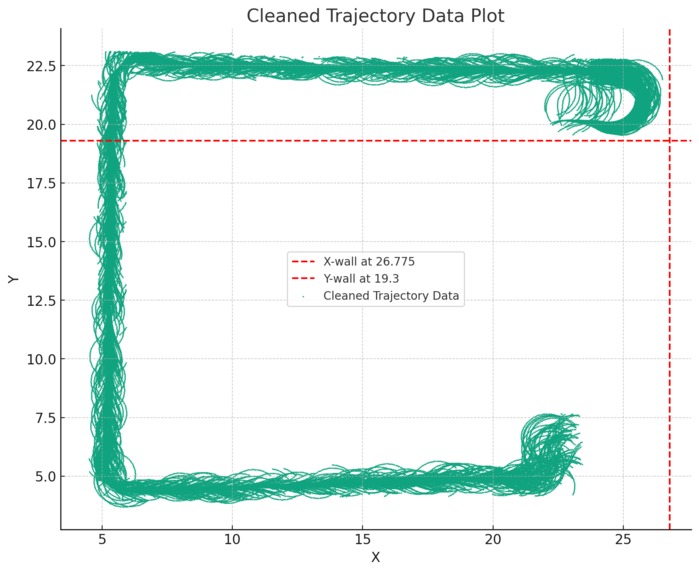

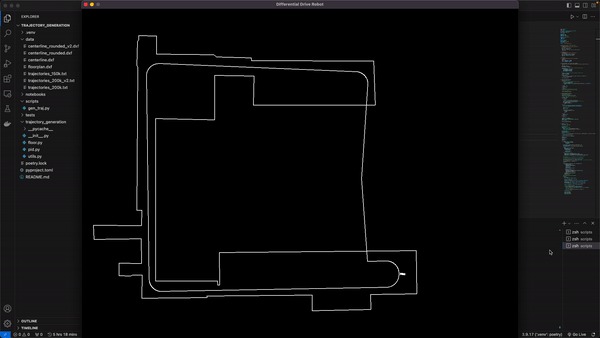

cleaning trajectory to remove noisy paths

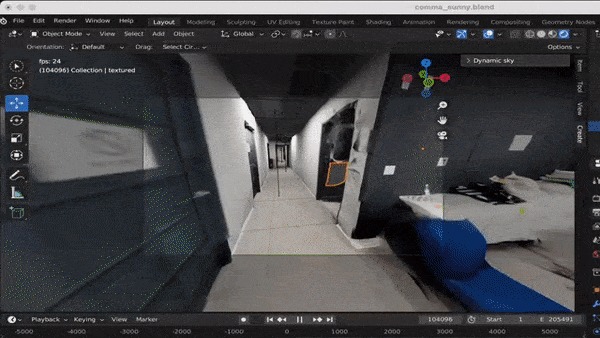

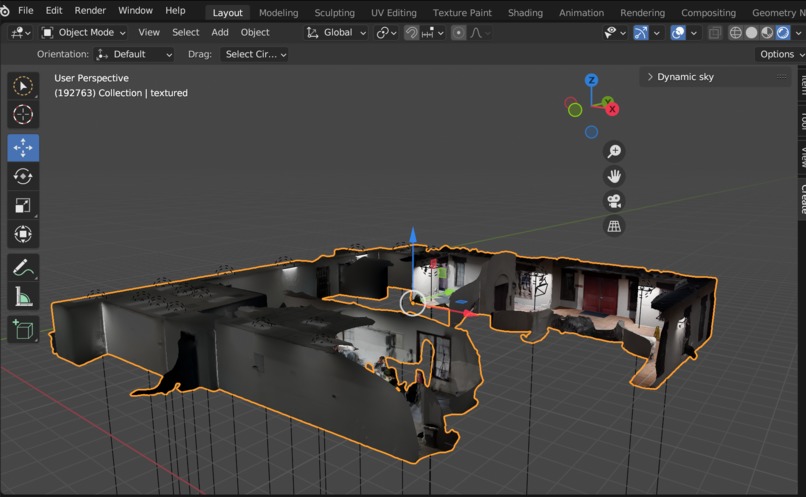

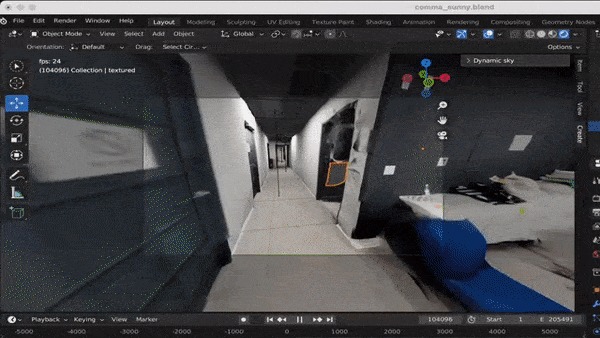

trajectories previewed in real-time using blender

the cropping zone inside the warped image (to match the comma camera settings)

overlapping between real image from the comma three and rendered image at the same position

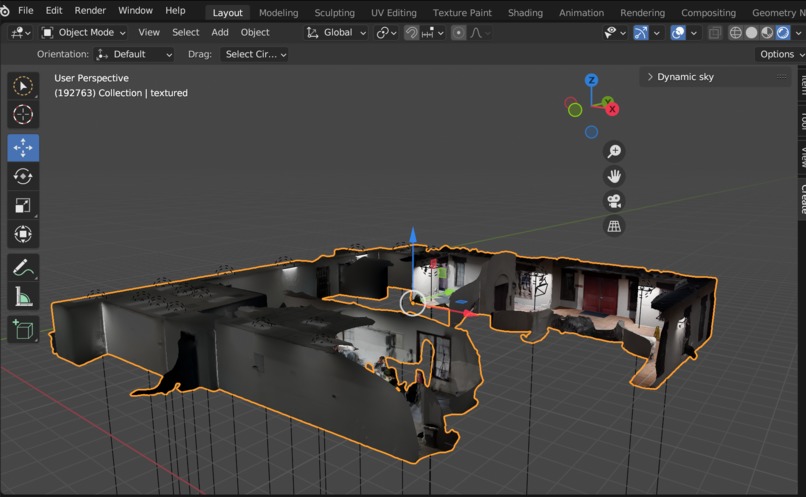

office 3D scanned imported to blender

Introduction

This project was built for the COMMA_HACK_4 hackathon at the comma office in San Diego. The goal is to create indoor navigation algorithms that let a human-size robot move around the office.

Inspiration

We were inspired by techniques used by comma and by a project Armand and Rian did earlier in the year: an autonomous tiny car race. For that project we explored reinforcement learning on a simulated agent using a model of our car. It was really fun, and we wanted to go deeper with these techniques.

What we did

We scanned the whole office using the iPhone 12 Pro LiDAR. We then drew a 2D vector floor plan, in which we created a path to follow.

We then tuned a PID controller to steer an agent back onto the path from wherever it spawns in the office (this simplifies a lot). We sample and join the resulting trajectories, then use this desired trajectory back to the line as the ground truth for the model.
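To make the PID step concrete, here is a minimal sketch of the idea, not the code we ran at the hackathon. It assumes the path is the straight line `y = 0`, a simple unicycle model moving at constant speed, and made-up gains. The names `PID` and `rollout` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    """Textbook PID on a scalar error (gains here are illustrative)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def step(self, error: float, dt: float) -> float:
        self.integral += error * dt
        # No derivative term on the first call, since there is no previous error yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def rollout(spawn_y, spawn_theta, speed=1.0, dt=0.05, steps=400):
    """Simulate one agent spawned off the line y = 0 and steered back by the PID.

    Returns a list of (x, y, theta, omega) samples, where omega is the
    commanded turn rate (the "action") at that sample.
    """
    pid = PID(kp=2.0, ki=0.0, kd=2.0)  # assumed gains, not the tuned ones
    x, y, theta = 0.0, spawn_y, spawn_theta
    trajectory = []
    for _ in range(steps):
        # Cross-track error is simply y because the reference path is y = 0.
        omega = -pid.step(y, dt)
        trajectory.append((x, y, theta, omega))
        # Unicycle kinematics, explicit Euler integration.
        x += speed * math.cos(theta) * dt
        y += speed * math.sin(theta) * dt
        theta += omega * dt
    return trajectory


traj = rollout(spawn_y=1.0, spawn_theta=0.0)
```

Calling `rollout` from many spawn points gives a set of trajectories that all converge onto the line. These are the kind of samples that can be joined and used as ground truth.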

Then we used the PID trajectories to create keyframes for a camera in a Blender project of the scanned office. This gives us pairs of generated images and actions, which we use to train an EfficientNet that will run on-device. The EfficientNet takes the raw image and predicts the action and trajectory for the next timesteps.
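As a rough illustration of the keyframing step, the sketch below turns trajectory samples into per-frame camera poses in plain Python. It assumes samples of the form `(x, y, theta, omega)`, a fixed camera height, one keyframe per sample, and Blender's default convention that a camera with no rotation looks straight down. The function name and dictionary layout are hypothetical.

```python
import math


def trajectory_to_keyframes(trajectory, camera_height=1.2, first_frame=1):
    """Convert (x, y, theta, omega) samples into camera keyframe records.

    Each record holds the frame number, the camera location, an XYZ Euler
    rotation, and the action to pair with the image rendered at that frame.
    """
    keyframes = []
    for i, (x, y, theta, omega) in enumerate(trajectory):
        keyframes.append({
            "frame": first_frame + i,
            "location": (x, y, camera_height),
            # Tilt 90 degrees about X so the camera looks horizontally along +Y,
            # then yaw about Z so it looks along the heading theta (from +X).
            "rotation_euler": (math.pi / 2, 0.0, theta - math.pi / 2),
            "action": omega,
        })
    return keyframes


# Inside Blender, each record could then be applied to a camera object, e.g.:
#   cam.location = kf["location"]
#   cam.rotation_euler = kf["rotation_euler"]
#   cam.keyframe_insert(data_path="location", frame=kf["frame"])
#   cam.keyframe_insert(data_path="rotation_euler", frame=kf["frame"])
keyframes = trajectory_to_keyframes([(0.0, 1.0, 0.0, -2.0), (0.05, 1.0, -0.1, -2.0)])
```

Storing the action next to the frame number makes it easy to match each rendered image with its label when building the training set.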
