Inspiration

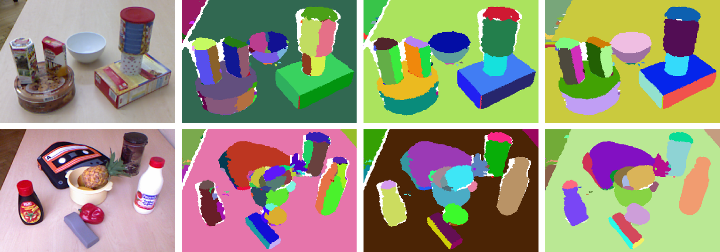

AI-aware spatial cognition requires a deep understanding of discrete spaces. Calculating a person's position in a 3D world and segmenting the objects around them are critical for AI to understand the world beyond its sensors.

We aim to show how a base ontology and schema of common household items can be controlled through natural-language voice commands and state-of-the-art sensors. Users can then label items and define additional metadata that can be recalled from a graph DB later. Temporal-spatial cognition via dynamic memory graphs is a key piece of AI, and we believe it will revolutionize our lives in numerous ways.

What it does

Imagine a user walking into a room, looking at or pointing to a lamp, and asking MICA to perform an action on that IoT-enabled device. MICA then reports the device's new status to the user.

**"Mica, turn off that light"**, said while pointing at a particular lamp. MICA resolves "that light" by casting a ray along the user's pointing direction and checking which segmented object the ray collides with.
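The pointing step can be sketched as a ray/box intersection test. This is a minimal sketch. It assumes each segmented object is approximated by an axis-aligned bounding box, and that hand position and pointing direction already come from tracking. `SegmentedObject`, `ray_hits_box`, and `pick_object` are hypothetical names, not Magic Leap SDK APIs. The slab method used here is a standard ray/box test and not necessarily the project's own approach.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class SegmentedObject:
    """Hypothetical: a segmented object approximated by an axis-aligned bounding box."""
    label: str
    box_min: Vec3
    box_max: Vec3


def ray_hits_box(origin: Vec3, direction: Vec3, box_min: Vec3, box_max: Vec3) -> Optional[float]:
    """Slab test: distance t >= 0 along the ray to the box, or None on a miss."""
    t_near, t_far = 0.0, float("inf")
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if abs(d) < 1e-12:
            # Ray is parallel to this pair of planes: it must already lie between them.
            if o < lo or o > hi:
                return None
            continue
        t1, t2 = (lo - o) / d, (hi - o) / d
        if t1 > t2:
            t1, t2 = t2, t1
        t_near, t_far = max(t_near, t1), min(t_far, t2)
        if t_near > t_far:
            return None
    return t_near


def pick_object(origin: Vec3, direction: Vec3,
                objects: Sequence[SegmentedObject]) -> Optional[SegmentedObject]:
    """Return the closest segmented object hit by the pointing ray, if any."""
    best, best_t = None, float("inf")
    for obj in objects:
        t = ray_hits_box(origin, direction, obj.box_min, obj.box_max)
        if t is not None and t < best_t:
            best, best_t = obj, t
    return best


scene = [
    SegmentedObject("lamp", (2.0, 0.0, -0.5), (2.5, 1.5, 0.5)),
    SegmentedObject("tv", (4.0, 0.5, 1.0), (4.2, 1.5, 2.5)),
]
# User's hand at 1.2 m height, pointing along +x.
hit = pick_object((0.0, 1.2, 0.0), (1.0, 0.0, 0.0), scene)
print(hit.label)  # lamp
```

Boxes keep the test cheap enough to run every frame. A tighter check against the object's mesh could follow once a box is hit.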

or

**"Mica, show me a Candlestick chart of stock ticker GOOG"** projects a floating technical chart in front of the user. A follow-up such as **"Mica, update this chart with stock ticker Bitcoin for the last 3 weeks"** then updates that chart in place.
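To draw a candlestick chart, raw price samples must first be grouped into open/high/low/close bars. This is a minimal sketch. It assumes price ticks arrive as `(timestamp, price)` pairs from some market-data source, which the project does not specify. `Candle` and `to_candles` are hypothetical names. The request "for the last 3 weeks" maps to `lookback=timedelta(weeks=3)`.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Tuple


@dataclass
class Candle:
    """One candlestick bar for a single time bucket."""
    start: datetime
    open: float
    high: float
    low: float
    close: float


def to_candles(ticks: List[Tuple[datetime, float]], now: datetime,
               lookback: timedelta, bucket: timedelta) -> List[Candle]:
    """Aggregate (timestamp, price) ticks within [now - lookback, now] into OHLC candles."""
    window_start = now - lookback
    buckets: Dict[int, Candle] = {}
    for ts, price in sorted(ticks):
        if not (window_start <= ts <= now):
            continue  # outside the requested window
        idx = int((ts - window_start) / bucket)  # index of the bucket this tick falls in
        candle = buckets.get(idx)
        if candle is None:
            buckets[idx] = Candle(window_start + idx * bucket, price, price, price, price)
        else:
            candle.high = max(candle.high, price)
            candle.low = min(candle.low, price)
            candle.close = price  # ticks are sorted, so the latest tick is the close
    return [buckets[i] for i in sorted(buckets)]


now = datetime(2024, 1, 22)
ticks = [
    (now - timedelta(days=30), 50.0),  # older than 3 weeks, ignored
    (now - timedelta(days=20), 100.0),
    (now - timedelta(days=20) + timedelta(hours=1), 105.0),
    (now - timedelta(days=20) + timedelta(hours=2), 98.0),
    (now - timedelta(days=10), 110.0),
]
candles = to_candles(ticks, now, lookback=timedelta(weeks=3), bucket=timedelta(days=1))
print(len(candles))  # 2
```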

How I will build it

I hope to integrate the advanced sensor suite and spatial computation of the Magic Leap One, so that occlusion and lighting are calculated correctly while the chart or device status is displayed.
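Occlusion comes down to a per-pixel depth comparison between the rendered chart and the real scene. This is a minimal sketch. It assumes a depth map of the real environment, from the headset's meshing or a depth camera, already registered to the same viewpoint and resolution as the virtual render. `occlusion_mask` and the 2 cm `bias` are illustrative assumptions. A real renderer would do this on the GPU through the depth buffer, not in Python.

```python
from typing import List, Optional

DepthMap = List[List[Optional[float]]]  # metres from the viewer; None = no reading


def occlusion_mask(virtual_depth: DepthMap, sensed_depth: DepthMap,
                   bias: float = 0.02) -> List[List[bool]]:
    """True where a virtual pixel should be hidden behind real geometry."""
    mask = []
    for v_row, s_row in zip(virtual_depth, sensed_depth):
        row = []
        for v, s in zip(v_row, s_row):
            # Hide the virtual pixel only when both depths exist and the real
            # surface is closer, by more than a small bias to absorb sensor noise.
            row.append(v is not None and s is not None and s + bias < v)
        mask.append(row)
    return mask


virtual = [[1.0, 1.0], [None, 2.0]]
sensed = [[0.5, 1.5], [0.3, 1.99]]
print(occlusion_mask(virtual, sensed))  # [[True, False], [False, False]]
```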

I will map voice commands to specific triggers and display a COMMANDS menu in the user's field of view.
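Mapping utterances to triggers can be sketched with a small pattern table. This is a minimal sketch. It assumes speech has already been transcribed to text. The regex trigger table, the intent names, and `parse_utterance` are all hypothetical. A production system would more likely use a trained intent/entity model than hand-written patterns. The same table also supplies the entries for the COMMANDS menu.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional

WAKE_WORD = "mica"

# Hypothetical trigger table: regex over the command text -> intent name.
# Named groups become the intent's entities.
TRIGGERS: Dict[str, str] = {
    r"turn (?P<state>on|off) (?:that|the|this) (?P<device>\w+)": "set_power",
    r"show me an? (?P<chart>\w+) chart of stock ticker (?P<ticker>\w+)": "show_chart",
    r"update this chart with stock ticker (?P<ticker>\w+)"
    r"(?: for the last (?P<count>\d+) (?P<unit>days|weeks))?": "update_chart",
}


@dataclass
class Intent:
    name: str
    entities: Dict[str, str] = field(default_factory=dict)


def parse_utterance(text: str) -> Optional[Intent]:
    """Map a transcribed utterance to an intent plus entities, or None if unrecognized."""
    text = text.strip().rstrip(".!?")
    wake = re.match(rf"{WAKE_WORD}\b[\s,]*", text, flags=re.IGNORECASE)
    if wake is None:
        return None  # not addressed to MICA
    command = text[wake.end():]
    for pattern, intent in TRIGGERS.items():
        match = re.fullmatch(pattern, command, flags=re.IGNORECASE)
        if match:
            entities = {k: v for k, v in match.groupdict().items() if v is not None}
            return Intent(intent, entities)
    return None


def commands_menu() -> List[str]:
    """Intent names to list in the COMMANDS menu."""
    return sorted(set(TRIGGERS.values()))


print(parse_utterance("Mica, turn off that light"))
# → Intent(name='set_power', entities={'state': 'off', 'device': 'light'})
```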

1] Train a model to segment objects in an environment, using the dataset at http://buildingparser.stanford.edu/dataset.html ("A Large Scale Database for 3D Object Recognition").

2] Link the segmented objects to a metadata ontology/schema that maps them to IoT functions.

3] Identify, save, and configure the segmented IoT devices in a spatial graph node hierarchy.

4] Interact with each device through auto-detected COMMANDS inherited from the device's IoT metadata.
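Steps 2 to 4 can be sketched as a small pipeline. This is a minimal sketch. It assumes the segmentation model from step 1 already returns a class label for each object. The ontology tables, `Node`, `register_device`, and `available_commands` are hypothetical stand-ins for the project's schema and graph DB. They only illustrate how a device can inherit commands from its class instead of being configured one by one.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical ontology: segmented object class -> IoT capabilities.
ONTOLOGY: Dict[str, List[str]] = {
    "lamp": ["power"],
    "tv": ["power", "volume"],
    "thermostat": ["temperature"],
}

# Hypothetical capability -> voice COMMANDS it exposes.
CAPABILITY_COMMANDS: Dict[str, List[str]] = {
    "power": ["turn on", "turn off"],
    "volume": ["volume up", "volume down"],
    "temperature": ["set temperature"],
}


@dataclass
class Node:
    """A node in the spatial graph hierarchy (e.g. building -> room -> device)."""
    name: str
    kind: str
    children: List["Node"] = field(default_factory=list)
    metadata: Dict[str, str] = field(default_factory=dict)  # user-defined labels

    def add(self, child: "Node") -> "Node":
        self.children.append(child)
        return child


def register_device(room: Node, object_class: str, name: str) -> Optional[Node]:
    """Steps 2-3: link a segmented object to the ontology and save it under its room."""
    if object_class not in ONTOLOGY:
        return None  # segmented, but not a known IoT device class
    return room.add(Node(name, object_class))


def available_commands(device: Node) -> List[str]:
    """Step 4: COMMANDS inherited from the device class's capabilities."""
    return [cmd for cap in ONTOLOGY.get(device.kind, []) for cmd in CAPABILITY_COMMANDS[cap]]


home = Node("home", "building")
living_room = home.add(Node("living room", "room"))
lamp = register_device(living_room, "lamp", "floor lamp")
lamp.metadata["label"] = "reading lamp"  # user-defined label, recallable later
print(available_commands(lamp))  # ['turn on', 'turn off']
```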

Challenges I expect to run into

My custom VR/MR/AR rigs have been limited by their sensors. Neither the stereoscopic camera used for depth-field projection nor the RGB-D depth camera has been fast enough to compute the spatial model in time for real-time segmentation.

Accomplishments that I'm proud of

Voice models and intent/entity triggers will map to various IoT systems, and the voice-controlled charts will integrate with my custom visual AI web service.

What's next for SpacialCognito

Incorporate real-time graphical displays and build a mnemonic memory for spatially segmented devices, mapping each device's metadata and functions to known IoT ontologies and APIs. Integrate voice recognition and use the Magic Leap SDK for fast device discovery and segmentation.
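One way to read "mnemonic memory" is as a time-indexed log of where each device was and what state it was in. MICA can then answer "as of" questions later. This is a minimal in-memory sketch. It assumes each observation carries a timestamp, room, position, and state. `Observation` and `DeviceMemory` are hypothetical names, and in practice a graph DB would take the place of the dictionaries.

```python
import bisect
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Observation:
    time: float                            # seconds since an arbitrary epoch
    room: str
    position: Tuple[float, float, float]   # metres, room coordinates
    state: str


class DeviceMemory:
    """Per-device observations kept sorted by time, queryable 'as of' a moment."""

    def __init__(self) -> None:
        self._times: Dict[str, List[float]] = {}
        self._obs: Dict[str, List[Observation]] = {}

    def record(self, device: str, obs: Observation) -> None:
        times = self._times.setdefault(device, [])
        observations = self._obs.setdefault(device, [])
        i = bisect.bisect_right(times, obs.time)  # keeps both lists sorted, even if late
        times.insert(i, obs.time)
        observations.insert(i, obs)

    def recall(self, device: str, at: float) -> Optional[Observation]:
        """Most recent observation of `device` at or before time `at`."""
        times = self._times.get(device, [])
        i = bisect.bisect_right(times, at)
        return self._obs[device][i - 1] if i else None


mem = DeviceMemory()
mem.record("floor lamp", Observation(10.0, "living room", (2.2, 0.0, 0.0), "on"))
mem.record("floor lamp", Observation(20.0, "living room", (2.2, 0.0, 0.0), "off"))
print(mem.recall("floor lamp", at=15.0).state)  # on
```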

Built With

- a.i.cognition

- ai

- artificial-intelligence

- c++

- machine-learning

- node.js

- python

- ros

- slam

- unity
