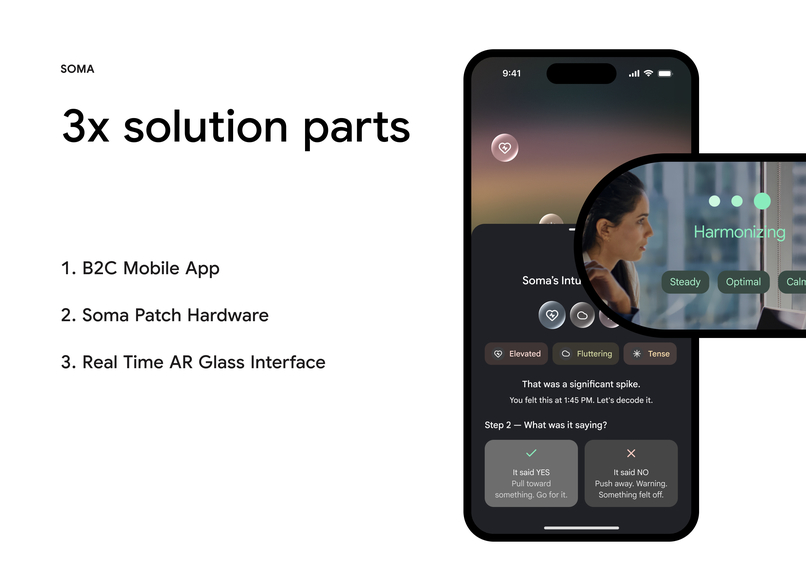

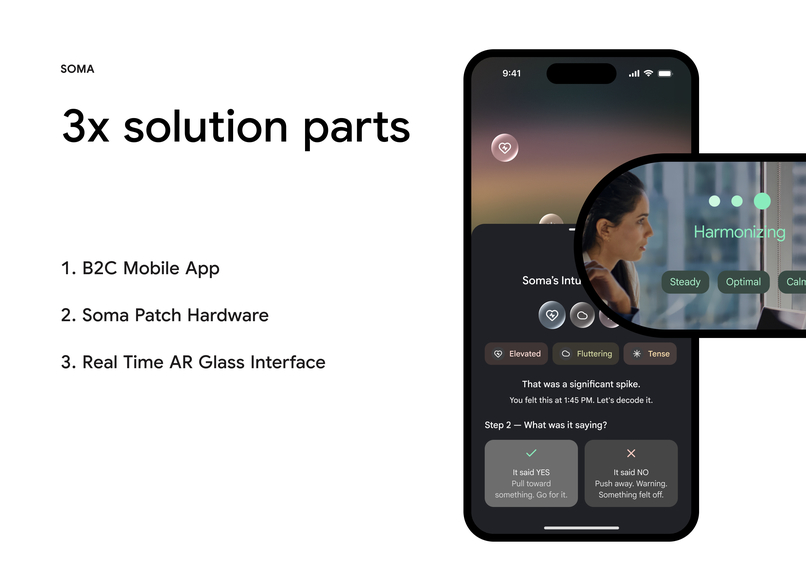

Three-part solution: (1) a B2C mobile app, (2) the Soma patch hardware, and (3) a real-time AR glasses interface.


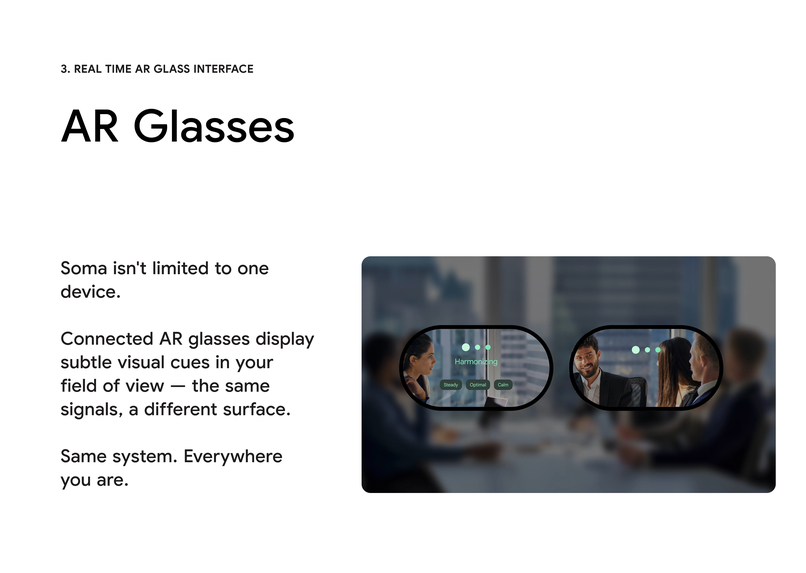

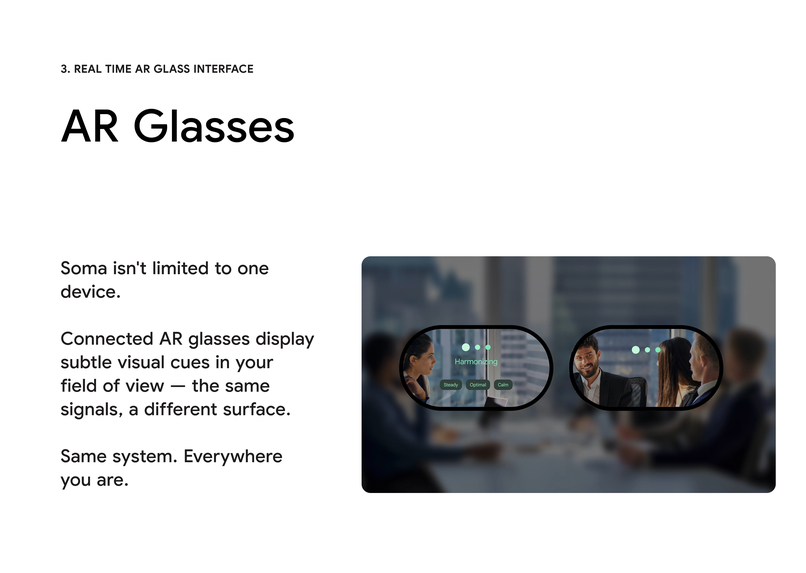

Soma isn't limited to one device. Same system. Everywhere you are.


When Soma detects a significant shift (e.g., a stress response or a gut reaction), it delivers subtle haptic feedback directly on your body.


A gut feeling is a real, measurable physiological event.

Inspiration

The enteric nervous system (ENS) contains over 100 million neurons, and the gut produces roughly 95% of the body's serotonin, yet no consumer interface exists to surface those signals. Soma started with one question: what if your gut feeling had a UI?

What it does

Soma is a speculative wellness tool that detects, visualizes, and helps users interpret physiological gut signals in real time. A wearable patch monitors heart rate variability (HRV), skin conductance, and ENS activity. When it detects a significant shift, the app visualizes it as resonance or dissonance and delivers subtle haptic feedback, so the signal reaches you through your body before you ever look at your phone. After each event, a quick Intuition Check lets you log whether the signal was genuine intuition or anxiety, training a personalized model over time.
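Soma itself was designed in Figma rather than implemented, so the following is only an illustrative sketch of how the detect-and-log loop might be prototyped. It rests on several assumptions that are not part of the original design. A "significant shift" is treated as a reading more than `threshold` standard deviations away from a rolling baseline. A sharp drop (for example in HRV, which tends to fall under acute stress) is mapped to dissonance, and a sharp rise to resonance. The "personalized model" is reduced to a smoothed per-event tally of Intuition Check answers. `ShiftDetector`, `IntuitionLog`, the window size, and the threshold are all hypothetical names and values.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Optional


class ShiftDetector:
    """Hypothetical detector: flags a reading that deviates from the rolling
    baseline by more than `threshold` population standard deviations."""

    def __init__(self, window: int = 30, threshold: float = 2.5):
        self.threshold = threshold
        # deque(maxlen=...) drops the oldest reading automatically.
        self.history = deque(maxlen=window)

    def update(self, value: float) -> Optional[str]:
        """Feed one reading; return 'dissonance', 'resonance', or None."""
        event = None
        # Only judge a reading once the baseline window is full.
        if len(self.history) == self.history.maxlen:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                z = (value - mu) / sigma
                if abs(z) > self.threshold:
                    # Assumed mapping: sharp drop -> dissonance, sharp rise -> resonance.
                    event = "dissonance" if z < 0 else "resonance"
        self.history.append(value)
        return event


class IntuitionLog:
    """Hypothetical stand-in for the personalized model: for each event type,
    a smoothed estimate of how often the user labels it 'intuition'."""

    LABELS = ("intuition", "anxiety")

    def __init__(self):
        self.counts = {}

    def record(self, event: str, label: str) -> None:
        """Store one Intuition Check answer for an event type."""
        if label not in self.LABELS:
            raise ValueError(f"label must be one of {self.LABELS}")
        per_event = self.counts.setdefault(event, {l: 0 for l in self.LABELS})
        per_event[label] += 1

    def intuition_rate(self, event: str) -> float:
        """Laplace-smoothed share of 'intuition' answers (0.5 with no data)."""
        per_event = self.counts.get(event, {l: 0 for l in self.LABELS})
        total = sum(per_event.values())
        return (per_event["intuition"] + 1) / (total + 2)
```

For example, a `ShiftDetector(window=5, threshold=2.0)` fed the readings `50, 52, 51, 49, 50, 30` returns `None` while it fills its baseline and then `"dissonance"` for the sudden drop to 30. If the user then answers two Intuition Checks for that event type as "intuition" and one as "anxiety", `intuition_rate("dissonance")` returns 0.6. A rolling z-score was chosen only because it is simple and adapts to each wearer's own baseline. A real system would need per-signal calibration and far more careful modeling.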

How we built it

Soma was designed entirely in Figma: screens, interactions, and a Figma Make prototype. The visual system uses generative fluid forms to represent internal states, a liquid glass component language, and a dark, meditative aesthetic designed to minimize cognitive load.

Challenges we ran into

The hardest design problem was making something invisible feel legible without medicalizing it. Gut signals aren't data points — they're felt experiences. Finding the right visual language (fluid, ambient, non-clinical) took significant iteration. The Intuition Check flow also required careful thinking: the distinction between anxiety and intuition is subtle, and the UI had to honor that nuance without oversimplifying it.

Accomplishments that we're proud of

Building a three-step behavioral loop (detect, reflect, log) that actually closes: each logged Intuition Check feeds back into the user's personalized model. The Intuition Check interaction model feels like a conversation, not a form. We're also proud of how the design system holds together: every surface, from the orb to the bottom sheets, feels like it belongs to the same world.

What we learned

That speculative design is most powerful when it's grounded in real biology. Framing Soma around the ENS and interoception gave every design decision a foundation: it wasn't just aesthetic; it was backed by neuroscience. We also learned that the hardest UX problems aren't visual. They're conceptual: how do you help someone trust themselves?

What's next for Soma - The interface for your gut feeling, backed by biology

Longitudinal pattern recognition — showing users how their gut signals correlate with real outcomes over weeks and months. AR glasses integration for ambient signal awareness. And eventually, a shared signal layer: understanding how physiological resonance works between people in the same room. The interface for your gut feeling. Backed by biology.

Built With

- figma

- figmamake
