-

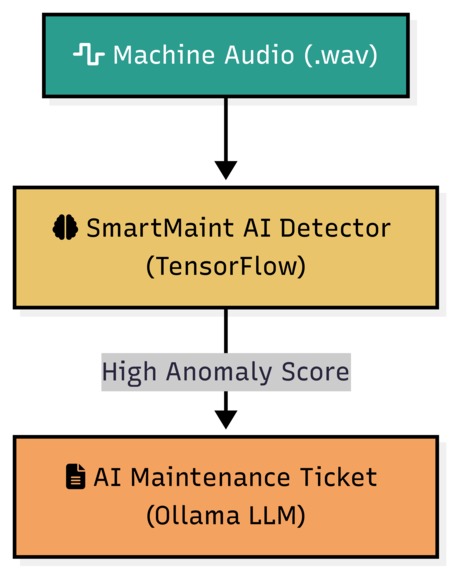

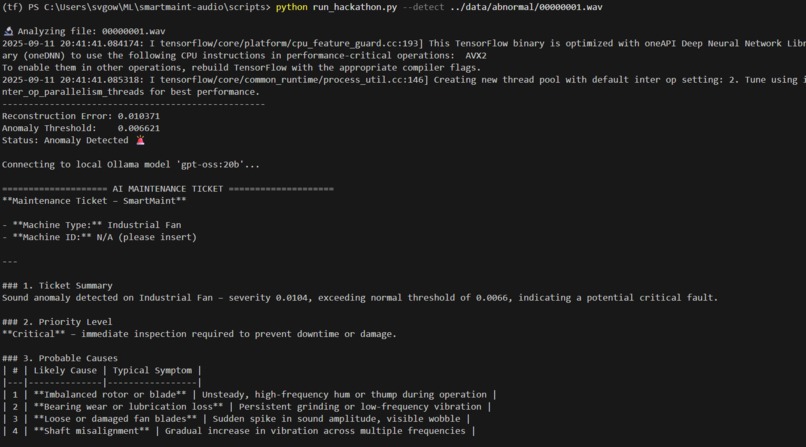

Project Workflow: Our hybrid AI system uses a TensorFlow detector and an Ollama LLM analyst (gpt-oss:20b) to go from sound to solution.

-

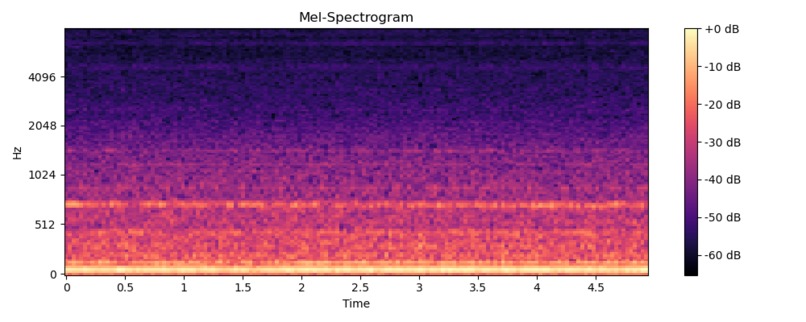

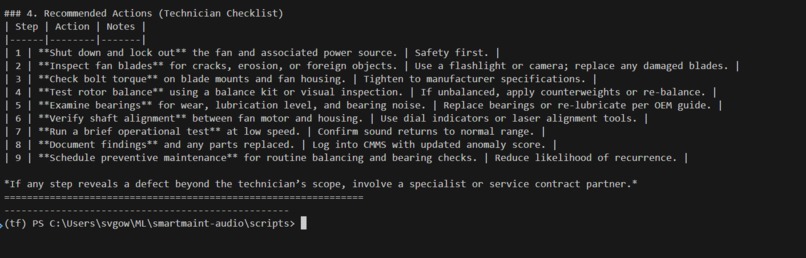

Data Visualization: Raw audio is converted into a mel-spectrogram, the visual 'fingerprint' that our AI analyzes to detect faults.

-

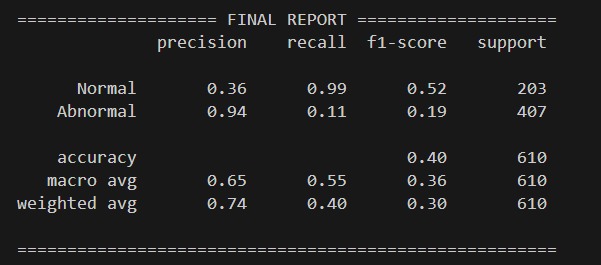

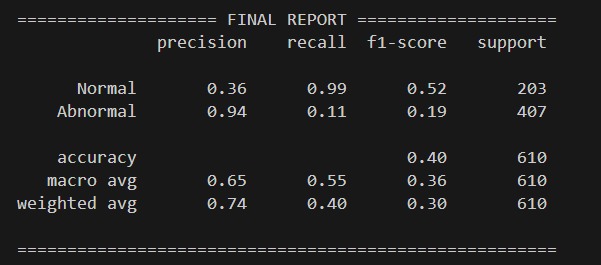

Quantitative Results: The final classification report after tuning, showing the model's balanced detection performance.

-

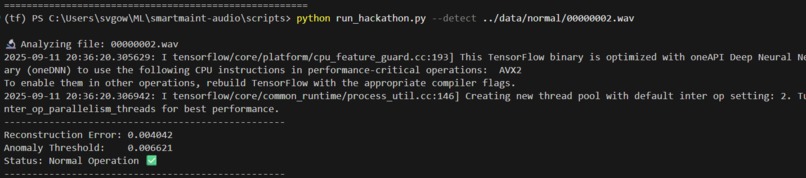

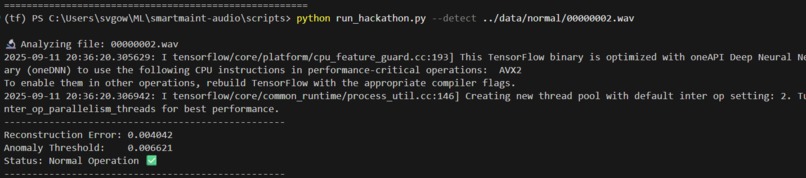

Live Test: A healthy machine sound is correctly identified as 'Normal Operation', showing that the model does not raise a false alarm on this sample.

-

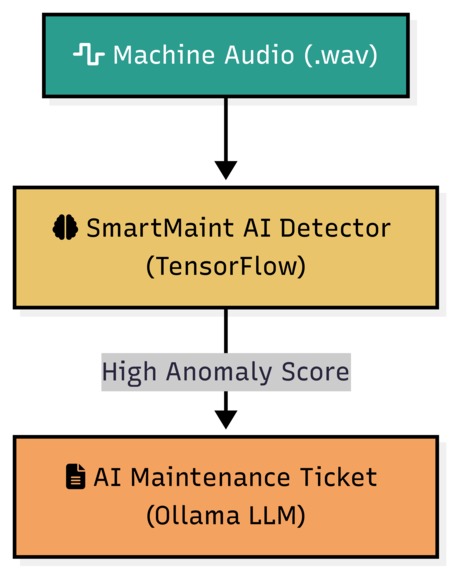

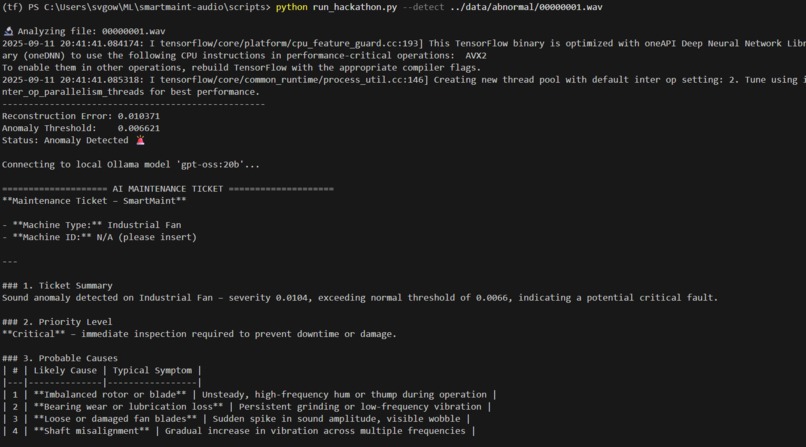

Anomaly Detected: The system flags a faulty sound file because its reconstruction error exceeds the threshold, which triggers the AI analyst.

-

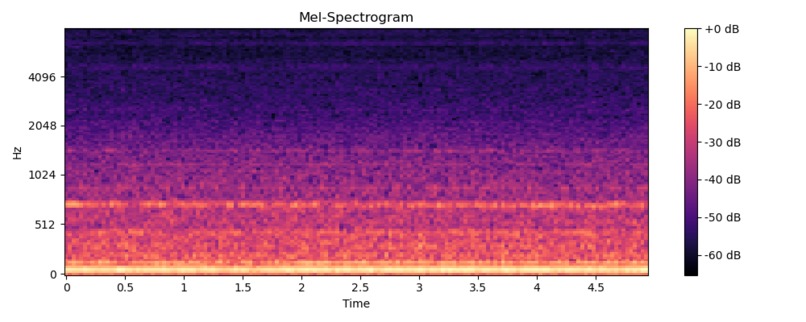

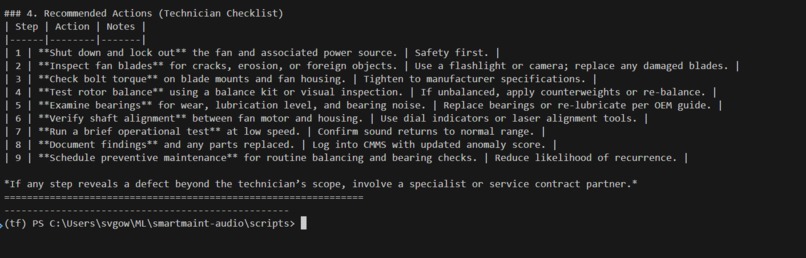

The AI-Generated Ticket: The local LLM provides an actionable maintenance report with probable causes and a checklist for technicians.

Inspiration

In any factory, the constant hum of machinery is the sound of productivity. But hidden within that noise can be the subtle, early whispers of failure: a faint grind in a bearing, a slight imbalance in a fan. An unexpected breakdown isn't just an inconvenience; it's a catastrophic loss of time and money. Inspired by the challenge of capturing these audio fingerprints of failure in the MIMII industrial sound dataset, we set out to build an AI that could listen for them and flag trouble before a machine breaks down.

What It Does

SmartMaint is a hybrid AI system that acts as a vigilant, 24/7 mechanic for industrial equipment. It continuously listens to a machine's sound and transforms the raw audio into a visual fingerprint called a mel-spectrogram. A specialized neural network, trained only on the sounds of healthy machines, analyzes this fingerprint. When it detects a sound that doesn't belong (an anomaly), it flags the potential fault and passes it to a Large Language Model, which generates a detailed, human-readable maintenance ticket. This ticket explains the issue, lists probable causes, and provides a clear checklist for technicians.
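To make the ticket step concrete, here is a minimal sketch of how a request for the LLM could be assembled. It only builds a JSON payload in the shape Ollama's `/api/generate` endpoint accepts (`model`, `prompt`, `stream`) and does not send it. The function name, the prompt wording, the machine label `fan_id_00`, and the numeric values are illustrative assumptions, not the project's actual code; the model name comes from the workflow caption.

```python
import json


def build_ticket_request(machine_id, reconstruction_error, threshold,
                         model="gpt-oss:20b"):
    """Build (but do not send) a payload for Ollama's /api/generate endpoint.

    The prompt layout is a hypothetical example that mirrors the three parts
    of the ticket described in the text: issue, probable causes, checklist.
    """
    prompt = (
        "You are an industrial maintenance analyst.\n"
        f"Machine: {machine_id}\n"
        f"Reconstruction error: {reconstruction_error:.4f} "
        f"(threshold: {threshold:.4f})\n"
        "Write a maintenance ticket with three sections:\n"
        "1. Issue summary\n"
        "2. Probable causes\n"
        "3. Technician checklist\n"
    )
    # stream=False asks Ollama for one complete JSON response
    # instead of a stream of partial chunks.
    return {"model": model, "prompt": prompt, "stream": False}


# Illustrative values only; these are not measured results.
payload = build_ticket_request("fan_id_00", 0.0312, 0.0200)
body = json.dumps(payload)
```

With a default local Ollama install, `body` would typically be sent as an HTTP POST to `http://localhost:11434/api/generate`; that address is an assumption about the deployment.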

How We Built It

Our journey began with the 10 GB MIMII dataset of industrial fan sounds. Using Python and Librosa, we systematically converted thousands of .wav files into fixed-size mel-spectrograms. The core of our system is a convolutional autoencoder built with TensorFlow and Keras.

We adopted an unsupervised learning approach, training the model exclusively on "normal" sounds. The principle is simple yet powerful: the model becomes an expert at reconstructing healthy audio. When a spectrogram from a faulty machine is introduced, the model struggles, resulting in a high "reconstruction error." This spike in error is our anomaly signal. The final step was integrating this output with a locally run Ollama LLM to translate the raw data into an expert analysis.
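The reconstruction-error idea can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the project's implementation: the error is taken to be the per-clip mean squared error, the threshold rule (mean plus `k` standard deviations of errors on normal clips) is a common heuristic chosen here for the example, and the "reconstructions" are synthetic noise standing in for autoencoder output.

```python
import numpy as np


def reconstruction_errors(originals, reconstructions):
    """Mean squared error per clip, averaged over every non-batch axis."""
    originals = np.asarray(originals, dtype=np.float64)
    reconstructions = np.asarray(reconstructions, dtype=np.float64)
    axes = tuple(range(1, originals.ndim))
    return np.mean((originals - reconstructions) ** 2, axis=axes)


def fit_threshold(normal_errors, k=3.0):
    """Hypothetical rule: mean + k * std of errors measured on normal clips."""
    normal_errors = np.asarray(normal_errors, dtype=np.float64)
    return float(normal_errors.mean() + k * normal_errors.std())


def is_anomaly(error, threshold):
    """A clip is flagged when its error is strictly above the threshold."""
    return bool(error > threshold)


# Synthetic stand-ins: 8 "spectrograms" of shape (64, 64, 1).
rng = np.random.default_rng(0)
clean = rng.random((8, 64, 64, 1))
good_recon = clean + rng.normal(0.0, 0.01, clean.shape)  # close reconstruction
bad_recon = clean + rng.normal(0.0, 0.2, clean.shape)    # poor reconstruction

threshold = fit_threshold(reconstruction_errors(clean, good_recon))
healthy_error = reconstruction_errors(clean[:1], good_recon[:1])[0]
faulty_error = reconstruction_errors(clean[:1], bad_recon[:1])[0]
```

Here `is_anomaly(faulty_error, threshold)` is true and `is_anomaly(healthy_error, threshold)` is false. In a real pipeline the threshold would be tuned on held-out data rather than on the clips being scored.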

Challenges We Ran Into

Building SmartMaint was a true engineering challenge. We battled persistent ValueError dimension mismatches in our spectrogram pipeline, which we ultimately solved by enforcing a fixed, compatible input size for our model. Wrangling the 10 GB dataset on a GTX 1650 GPU taught us invaluable lessons in efficient data handling. And just when our model was trained, we hit a wall of Python dependency conflicts involving h5py and numpy. Debugging these issues felt like a hackathon within a hackathon, but overcoming them was a huge victory.
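One way to enforce a fixed input size is to crop or pad every spectrogram along the time axis before it reaches the model. The sketch below assumes spectrograms shaped `(n_mels, frames)`; the helper name `fix_frames` and the target of 64 frames are hypothetical choices for illustration, not the values used in the project.

```python
import numpy as np


def fix_frames(spec, target_frames=64, pad_value=None):
    """Crop or right-pad a (n_mels, frames) spectrogram to target_frames columns.

    Longer inputs keep their first target_frames columns. Shorter inputs are
    padded on the right with pad_value, which defaults to the input's minimum
    (for a dB-scaled spectrogram, its quietest value).
    """
    spec = np.asarray(spec)
    n_frames = spec.shape[1]
    if n_frames >= target_frames:
        return spec[:, :target_frames]
    if pad_value is None:
        pad_value = spec.min()
    pad = target_frames - n_frames
    return np.pad(spec, ((0, 0), (0, pad)),
                  mode="constant", constant_values=pad_value)


short_clip = np.zeros((64, 50))   # fewer frames than the target
long_clip = np.zeros((64, 313))   # more frames than the target
batch = np.stack([fix_frames(short_clip), fix_frames(long_clip)])
```

After this step every clip has the same shape, so the clips stack into a single batch array. Choosing dimensions that divide evenly by the autoencoder's total downsampling factor also tends to keep the decoder output the same shape as the input, which is a frequent source of this kind of ValueError.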

Accomplishments That We're Proud Of

We are incredibly proud to have built not just a working model but an end-to-end system that distinguishes between normal and abnormal machine sounds. We trained a CNN autoencoder on real-world industrial audio and showed that a hybrid system, combining a specialized detector with a powerful LLM, is a promising and scalable way to help prevent costly machine downtime.

What We Learned

This hackathon was an intense, hands-on masterclass. We didn't just learn the theory of converting audio to spectrograms; we lived it. We saw firsthand the power of unsupervised learning for real-world anomaly detection, where "fault" data is scarce. Most importantly, we learned that a successful AI project isn't just about the model architecture. It's about rigorous data preprocessing, robust environment management, and the persistence to debug every last issue.

What's Next for SmartMaint

The proof-of-concept is a success, but this is just the beginning for SmartMaint. Our roadmap includes:

- Expanding the Library: Training models on other machine categories in the MIMII dataset, like valves, pumps, and sliders.

- Edge Deployment: Optimizing the model to run in real-time on low-power edge devices for on-site monitoring.

- Building a UI: Creating a web-based dashboard for visualizing sound data, monitoring machine health, and managing alerts.

- Exploring New Architectures: Investigating transformer-based audio models for potentially even higher detection accuracy.

Built With

- github

- keras

- librosa

- matplotlib

- numpy

- python

- scikit-learn

- seaborn

- tensorflow

- visualization
