
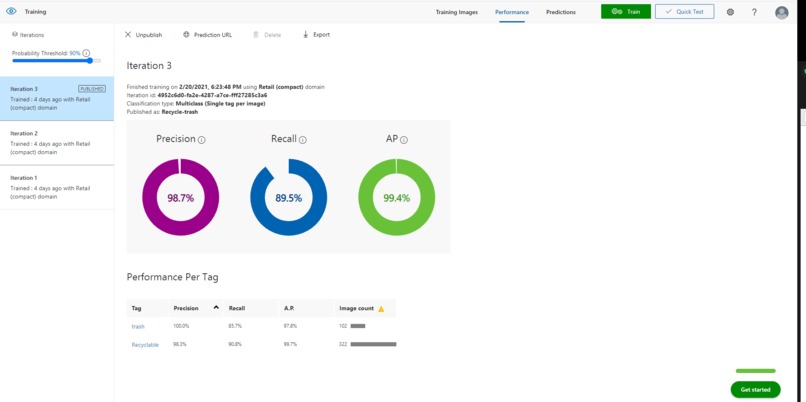

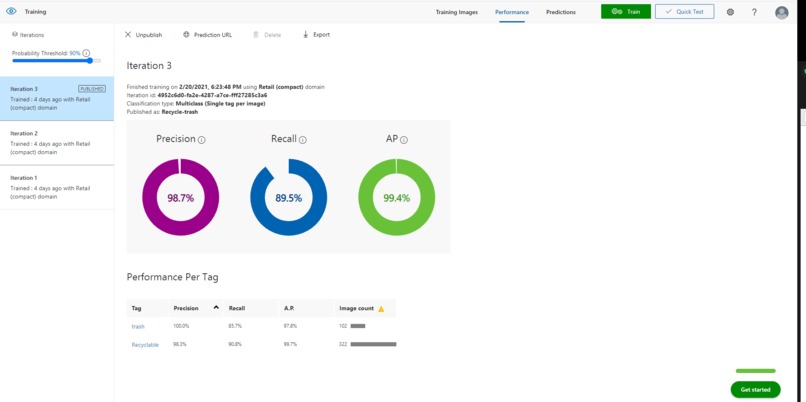

Our completed prediction model gave consistent predictions for recyclable objects.


Using Postman to test the key/value pairs of Custom Vision API requests.


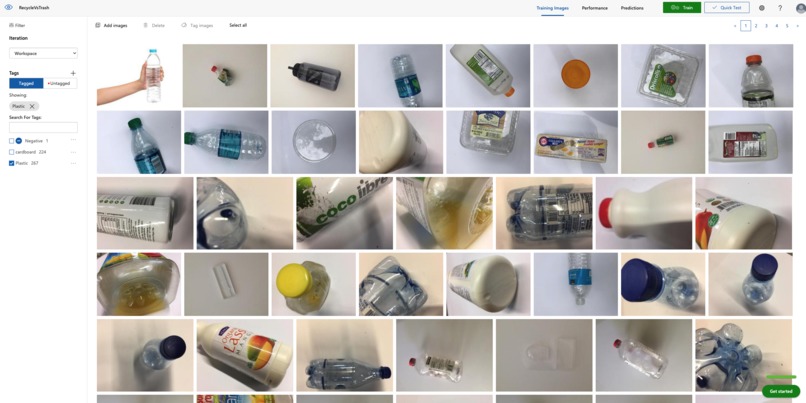

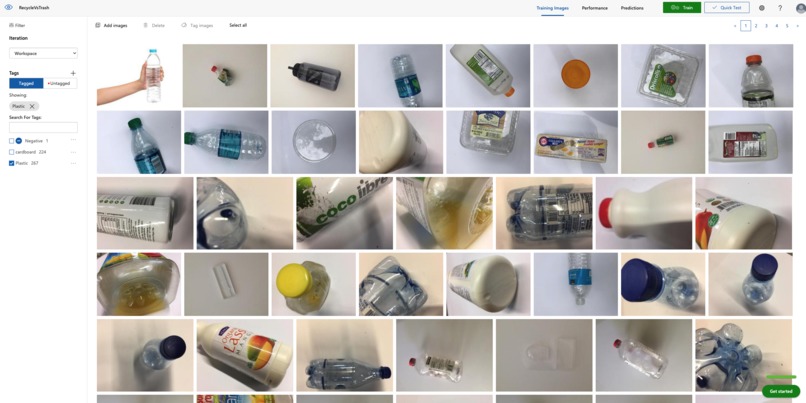

We trained our prediction model by uploading a large dataset of object photos and tagging them. Here, we uploaded photos tagged "plastic".


Screenshot of the camera interface that users would use.

Inspiration

Trash cans are often piled high with recyclable objects. We wanted to create an application that identifies whether an object is recyclable or not.

What it does

Our mobile application snaps a photo of an object and is designed to use Azure's Custom Vision service to give immediate feedback on whether that object is recyclable.
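The feedback step amounts to reading the classifier's output and turning it into a yes/no answer. Below is a minimal TypeScript sketch, assuming the classification response carries a list of predictions with `tagName` and `probability` fields and that the tags are the ones used for training (plastic, cardboard, glass, neither). The function name `verdictFromPredictions` and the 0.5 confidence threshold are hypothetical choices, not part of the project.

```typescript
// One entry of the predictions list returned by a Custom Vision
// image-classification call (only the fields used here).
interface Prediction {
  tagName: string;
  probability: number; // 0..1
}

interface Verdict {
  recyclable: boolean;
  tagName: string;
  probability: number;
}

// Tags treated as recyclable; "neither" (trash) is intentionally absent.
const RECYCLABLE_TAGS = new Set(["plastic", "cardboard", "glass"]);

// Picks the highest-probability tag and reports "recyclable" only when that
// tag is a recyclable material AND the model is confident enough.
function verdictFromPredictions(
  predictions: Prediction[],
  threshold = 0.5, // hypothetical cut-off; tune per trained iteration
): Verdict {
  if (predictions.length === 0) {
    return { recyclable: false, tagName: "neither", probability: 0 };
  }
  const top = predictions.reduce((best, p) =>
    p.probability > best.probability ? p : best,
  );
  return {
    recyclable: RECYCLABLE_TAGS.has(top.tagName) && top.probability >= threshold,
    tagName: top.tagName,
    probability: top.probability,
  };
}

const example = verdictFromPredictions([
  { tagName: "neither", probability: 0.08 },
  { tagName: "plastic", probability: 0.87 },
  { tagName: "glass", probability: 0.05 },
]);
console.log(example.recyclable, example.tagName); // true plastic
```

Keeping the threshold explicit means a low-confidence "plastic" guess falls back to "not recyclable", which is arguably the safer default for a recycling bin.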

How we built it

We used React Native with Expo to develop our application's interface and set out to connect it to Microsoft Azure's Custom Vision API, so that each captured image would be fed into the API's prediction model. Through the Azure portal, we trained this prediction model by giving Custom Vision a large dataset of photos, which we tagged as plastic, cardboard, glass, or neither (the last identifying a trash item).
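The write-up does not say how the training photos were organized before tagging. Assuming the dataset arrived as one folder per material (the folder names below, including "trash" mapping to "neither", are hypothetical), the tagging step can be sketched as grouping file paths by their parent folder, so each group can then be uploaded under its Custom Vision tag. `groupByTag` is an illustrative helper, not the project's code.

```typescript
// The four tags described above; "neither" marks trash.
type Tag = "plastic" | "cardboard" | "glass" | "neither";

// Hypothetical folder-name -> tag mapping. A Map avoids accidental hits on
// inherited object keys such as "constructor".
const FOLDER_TO_TAG = new Map<string, Tag>([
  ["plastic", "plastic"],
  ["cardboard", "cardboard"],
  ["glass", "glass"],
  ["trash", "neither"],
]);

// Groups image paths by tag using each file's parent folder name.
// Files in unrecognized folders are collected separately for manual review.
function groupByTag(paths: string[]): { tagged: Map<Tag, string[]>; unknown: string[] } {
  const tagged = new Map<Tag, string[]>();
  const unknown: string[] = [];
  for (const path of paths) {
    const parts = path.split(/[\\/]/); // handle both / and \ separators
    const folder = parts.length >= 2 ? parts[parts.length - 2].toLowerCase() : "";
    const tag = FOLDER_TO_TAG.get(folder);
    if (tag === undefined) {
      unknown.push(path);
      continue;
    }
    const list = tagged.get(tag) ?? [];
    list.push(path);
    tagged.set(tag, list);
  }
  return { tagged, unknown };
}

const { tagged, unknown } = groupByTag([
  "dataset/plastic/bottle1.jpg",
  "dataset/trash/wrapper.jpg",
  "dataset\\Glass\\jar.jpg",
  "dataset/misc/photo.jpg",
]);
console.log(tagged.get("neither"), unknown);
```

Routing unrecognized files to a separate list, rather than silently tagging them "neither", keeps mislabeled photos out of the training set.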

Challenges we ran into

Our largest challenge was connecting our running interface to the Azure API. We believe the cause was a dependency error when using Custom Vision with Expo and React Native. We first tried calling Azure's libraries from our application's code to send our image files to the API. However, we could not resolve an error caused by these installed Node.js packages being incompatible with React Native. Although we were not able to solve this issue and link the API to our interface, we plan to use the axios library to send POST requests to the Microsoft API directly.
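Sending the image over plain HTTP should sidestep bundling Azure's Node.js packages into the React Native app. The sketch below only builds the request, assuming the Custom Vision v3.0 prediction route for classifying an uploaded image; the endpoint, project ID, published iteration name and prediction key are placeholders to be copied from the Azure portal, and `buildPredictionRequest` is a hypothetical helper. axios appears only in a comment so the block runs without it installed.

```typescript
// Request description whose fields (method, url, headers, data) match
// what axios accepts as a request config object.
interface PredictionRequest {
  method: "POST";
  url: string;
  headers: Record<string, string>;
  data: Uint8Array;
}

// Builds a POST to the Custom Vision v3.0 "classify image" prediction route.
// All identifiers passed in are placeholders, not real project values.
function buildPredictionRequest(
  endpoint: string,
  projectId: string,
  publishedName: string,
  predictionKey: string,
  image: Uint8Array,
): PredictionRequest {
  const base = endpoint.replace(/\/+$/, ""); // drop any trailing slash
  return {
    method: "POST",
    url:
      `${base}/customvision/v3.0/Prediction/${encodeURIComponent(projectId)}` +
      `/classify/iterations/${encodeURIComponent(publishedName)}/image`,
    headers: {
      "Prediction-Key": predictionKey,
      "Content-Type": "application/octet-stream", // raw image bytes in the body
    },
    data: image,
  };
}

const req = buildPredictionRequest(
  "https://example-resource.cognitiveservices.azure.com/",
  "00000000-0000-0000-0000-000000000000",
  "Iteration1",
  "<prediction-key>",
  new Uint8Array([0xff, 0xd8, 0xff]), // JPEG header bytes as a stand-in image
);
console.log(req.url);
// With axios installed, the call would look roughly like:
//   const res = await axios(req);
//   res.data.predictions -> [{ tagName, probability, ... }, ...]
```

The same key/value pairs (the `Prediction-Key` header and content type) are what a Postman request to this route would need, which makes Postman a convenient way to check them before wiring up the app.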

Another challenge was training the Custom Vision prediction model. At first, we did not know where to find an image dataset large enough to train it. Once we found one, we had to work out the best way to tag and categorize the different sets of photos. Reaching acceptable accuracy took multiple training iterations. Although the result is not a "perfect" model, it is accurate enough for our purposes.
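Comparing training iterations is easier with per-tag numbers. The Custom Vision portal reports precision and recall for each iteration; the sketch below computes the same kind of metrics on a hand-checked test set, assuming you hold back some tagged photos and record the model's top tag for each. The names `LabeledResult` and `perTagMetrics` and the sample results are hypothetical.

```typescript
type Tag = "plastic" | "cardboard" | "glass" | "neither";

interface LabeledResult {
  actual: Tag;    // tag a person assigned to the photo
  predicted: Tag; // top tag returned by the model
}

interface TagMetrics {
  precision: number;
  recall: number;
}

// Per-tag precision = TP / (TP + FP) and recall = TP / (TP + FN),
// reported as 0 when the denominator is 0.
function perTagMetrics(results: LabeledResult[], tags: Tag[]): Map<Tag, TagMetrics> {
  const out = new Map<Tag, TagMetrics>();
  for (const tag of tags) {
    let tp = 0, fp = 0, fn = 0;
    for (const r of results) {
      if (r.predicted === tag && r.actual === tag) tp++;
      else if (r.predicted === tag) fp++; // predicted this tag, but wrong
      else if (r.actual === tag) fn++;    // missed a photo of this tag
    }
    out.set(tag, {
      precision: tp + fp === 0 ? 0 : tp / (tp + fp),
      recall: tp + fn === 0 ? 0 : tp / (tp + fn),
    });
  }
  return out;
}

const results: LabeledResult[] = [
  { actual: "plastic", predicted: "plastic" },
  { actual: "plastic", predicted: "glass" },
  { actual: "glass", predicted: "glass" },
  { actual: "neither", predicted: "plastic" },
  { actual: "cardboard", predicted: "cardboard" },
];
const metrics = perTagMetrics(results, ["plastic", "cardboard", "glass", "neither"]);
console.log(metrics.get("plastic")); // precision 0.5, recall 0.5
```

In this sample, a trash photo predicted as plastic lowers both plastic's precision and neither's recall, which is exactly the kind of error that would put non-recyclables in the recycling bin.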

Accomplishments that we're proud of

We are proud of diving into new tools we had no previous experience with. Through this process, we built a functioning camera interface with Expo. This interface can take an image, and in our console we could see the internal file path of that image. We also created a functioning prediction model on Custom Vision.

What we learned

We learned a great deal from this process; we had never attempted a project this extensive. Through first-hand experience, we learned how mobile application interfaces communicate with APIs. We also dove deep into a set of new skills, including TypeScript, React Native, Expo, and Microsoft Azure.

What's next for Smart Can

We want to finish linking our interface to the API. We think building the camera interface as a web-based app instead of an iOS app may help solve the dependency issues we had with React Native. We also envision adding the fully working interface to physical trash cans, so that as people walk up, the trash can tells them whether their waste is recyclable.

Built With

- azure

- custom-vision

- expo.io

- postman

- react-native

- typescript
