The SkinScan.io homepage, featuring a clinical-grade aesthetic and a clear call-to-action for users to begin their dermatological assessment.

The core Skin Lesion Analysis dashboard where users can securely upload clinical images for processing.

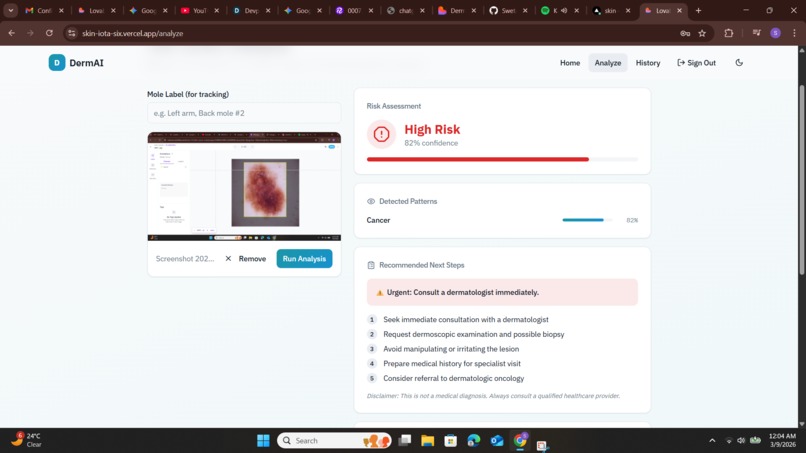

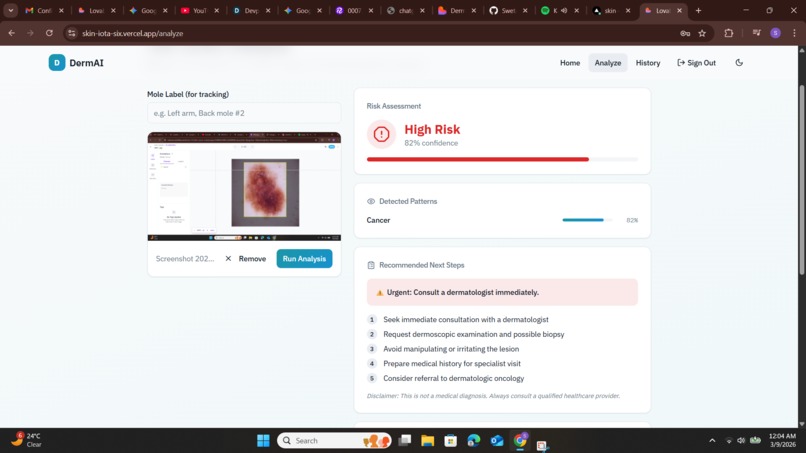

Caption: A real-time analysis result showing a High Risk assessment with an 82% confidence score.

Inspiration

The primary inspiration for SkinScan.io came from the critical need for accessible dermatological screening, where early detection is often the deciding factor in patient outcomes. Seeing the BACSA Hacks challenge at the University of Toronto motivated me to build a bridge between advanced computer vision and real-world biological problems. I wanted to create a tool that moves beyond simple image classification by providing a structured, data-driven reasoning process for every assessment. My goal was to empower users with a "clinical-grade" experience that simplifies complex medical data into actionable insights.

What it does

SkinScan.io is a specialized diagnostic support platform that transforms smartphone imagery into a clinical-grade risk assessment. Using a custom-trained Convolutional Neural Network (CNN), the app analyzes dermatoscopic or clinical images for patterns associated with malignancies like melanoma. Beyond simple detection, it provides a Unified Risk Index ($URI$), maintains a longitudinal history for mole tracking over time, and generates automated clinical recommendations for next steps.
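
To illustrate the longitudinal tracking, here is a minimal TypeScript sketch of a per-mole history record and a helper that reports how the risk index changed between the earliest and latest scan of one mole. The `MoleScan` shape, its field names, and `uriChange` are hypothetical; they are assumptions for illustration, not the project's actual schema.

```typescript
// Hypothetical record for one scan of one mole (illustrative field names).
interface MoleScan {
  moleId: string;      // stable identifier chosen for the tracked mole
  capturedAt: string;  // ISO 8601 timestamp of the photo
  uri: number;         // Unified Risk Index stored for this scan, 0..1
}

// Returns the URI difference between the latest and earliest scan of a mole,
// or null when fewer than two scans exist for it.
function uriChange(scans: MoleScan[], moleId: string): number | null {
  const ordered = scans
    .filter((scan) => scan.moleId === moleId) // filter() copies, so the input is not mutated
    .sort((a, b) => Date.parse(a.capturedAt) - Date.parse(b.capturedAt));

  const first = ordered[0];
  const last = ordered[ordered.length - 1];
  if (ordered.length < 2 || first === undefined || last === undefined) {
    return null;
  }
  return last.uri - first.uri;
}
```

A positive value means the stored index rose over time for that mole. Whether that warrants a recommendation is a separate decision that this sketch does not model.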

How we built it

I architected SkinScan.io as a full-stack solution, using React and Tailwind CSS for a professional, clinical-grade interface. The core vision engine uses a specialized CNN served through the Roboflow Inference API to detect and classify skin lesion patterns in real time. I implemented a mathematical logic gate that weights prediction probability against detection confidence, so the reasoning behind each result stays transparent. For data persistence, I used Supabase as a secure backend that lets users track their "Mole History" and monitor biological changes over time.
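
The weighting of prediction probability against detection confidence could be sketched as follows. This is one possible reading, not the formula used in the project: the product rule, the threshold values, the "Low Risk" label, and every identifier here are assumptions made for illustration.

```typescript
// Hypothetical input; these field names are not the Roboflow response schema.
interface LesionPrediction {
  classProbability: number;    // probability assigned to the malignant class, 0..1
  detectionConfidence: number; // confidence that a lesion was detected at all, 0..1
}

type RiskLabel = "High Risk" | "Low Risk" | "Inconclusive";

interface RiskAssessment {
  uri: number; // Unified Risk Index, 0..1
  label: RiskLabel;
}

// Illustrative thresholds only; real values would need clinical validation.
const MIN_DETECTION_CONFIDENCE = 0.5;
const HIGH_RISK_URI = 0.6;

function clamp01(x: number): number {
  return Math.min(1, Math.max(0, x));
}

function assessRisk(prediction: LesionPrediction): RiskAssessment {
  const { classProbability, detectionConfidence } = prediction;

  // Malformed scores are treated as inconclusive rather than guessed at.
  if (!isFinite(classProbability) || !isFinite(detectionConfidence)) {
    return { uri: 0, label: "Inconclusive" };
  }

  const probability = clamp01(classProbability);
  const confidence = clamp01(detectionConfidence);

  // Assumed weighting: the class probability is discounted by detection confidence.
  const uri = probability * confidence;

  // The gate: a weak detection never produces a risk label.
  if (confidence < MIN_DETECTION_CONFIDENCE) {
    return { uri, label: "Inconclusive" };
  }
  return { uri, label: uri >= HIGH_RISK_URI ? "High Risk" : "Low Risk" };
}
```

Checking detection confidence before the risk threshold means a low-quality scan is reported as inconclusive even when the classifier itself is confident. That is the safer failure mode for a screening tool.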

Challenges we ran into

One of the toughest challenges was managing the latency of a complex pipeline that required simultaneous calls to both vision and reasoning APIs. I had to implement an asynchronous state management system to ensure the UI remained snappy while the "Reasoning Report" was being generated in the background. Another hurdle was standardizing "Mole Evolution" data, as comparing images taken at different angles and lighting requires a precise metadata schema. Finally, defining the "Inconclusive" threshold was a major design challenge, as I had to balance the model's sensitivity with the risk of false positives.
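
One way to keep the interface responsive while two API calls are in flight is to track each call in its own state slot, so the vision result can render before the report arrives. The sketch below is a minimal illustration under that assumption. `Slot`, `VisionResult`, `analysisReducer`, `runAnalysis`, and the event names are hypothetical, and the two callbacks stand in for the real vision and reasoning requests.

```typescript
// Each request is tracked independently so one can resolve before the other.
type Slot<T> =
  | { status: "idle" }
  | { status: "pending" }
  | { status: "ready"; value: T }
  | { status: "failed"; message: string };

interface VisionResult {
  label: string;
  confidence: number;
}

interface AnalysisState {
  vision: Slot<VisionResult>;
  report: Slot<string>;
}

const initialState: AnalysisState = {
  vision: { status: "idle" },
  report: { status: "idle" },
};

type AnalysisEvent =
  | { type: "STARTED" }
  | { type: "VISION_RESOLVED"; value: VisionResult }
  | { type: "VISION_FAILED"; message: string }
  | { type: "REPORT_RESOLVED"; value: string }
  | { type: "REPORT_FAILED"; message: string };

// Pure reducer: usable with React's useReducer or any other store.
function analysisReducer(state: AnalysisState, event: AnalysisEvent): AnalysisState {
  switch (event.type) {
    case "STARTED":
      return { vision: { status: "pending" }, report: { status: "pending" } };
    case "VISION_RESOLVED":
      return { ...state, vision: { status: "ready", value: event.value } };
    case "VISION_FAILED":
      return { ...state, vision: { status: "failed", message: event.message } };
    case "REPORT_RESOLVED":
      return { ...state, report: { status: "ready", value: event.value } };
    case "REPORT_FAILED":
      return { ...state, report: { status: "failed", message: event.message } };
  }
}

// Starts both requests at once; each dispatches as soon as it settles.
async function runAnalysis(
  detect: () => Promise<VisionResult>,
  explain: () => Promise<string>,
  dispatch: (event: AnalysisEvent) => void
): Promise<void> {
  dispatch({ type: "STARTED" });
  const vision = detect().then(
    (value) => dispatch({ type: "VISION_RESOLVED", value }),
    (err: unknown) => dispatch({ type: "VISION_FAILED", message: String(err) })
  );
  const report = explain().then(
    (value) => dispatch({ type: "REPORT_RESOLVED", value }),
    (err: unknown) => dispatch({ type: "REPORT_FAILED", message: String(err) })
  );
  await Promise.all([vision, report]);
}
```

Because each slot fails independently, a timeout in the reasoning call leaves the vision result on screen instead of blanking the whole view.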

Accomplishments that we're proud of

I am most proud of successfully engineering a functional, full-stack medical screening application within the strict 1-day timeframe of the hackathon. I managed to move beyond simple "black-box" AI by implementing a Unified Risk Index that effectively models uncertainty and provides a justification for its findings. Seeing the system correctly identify high-risk patterns and generate structured, actionable clinical recommendations, such as suggesting an urgent dermatologist visit, demonstrated the real-world impact potential of this tool.

What we learned

Throughout this 1-day sprint, I gained a deep understanding of how to architect "responsible AI" for sensitive health-tech applications. I learned the technical necessity of modeling uncertainty, ensuring that the system prioritizes patient safety by identifying low-confidence scans rather than providing a high-variance guess. Orchestrating a multi-modal pipeline taught me how to synchronize specialized CNN vision models with LLM-based reasoning agents. Additionally, I refined my ability to justify engineering assumptions, such as specific probability thresholds, to a panel that values both technical rigor and biological accuracy.

What's next for SkinScan.io

To move SkinScan.io toward a production-ready medical device, the next step is expanding the dataset to include a wider range of skin tones and rarer dermatological conditions. I plan to implement on-device inference using TensorFlow Lite to enhance user privacy by ensuring sensitive images never leave the device. Furthermore, I aim to integrate a direct telehealth portal, allowing users with "High Risk" assessments to instantly share their longitudinal "Mole History" with board-certified dermatologists for a formal diagnosis.

Built With

- 2.0

- ai

- api

- backend

- bun

- cnn

- css

- database

- eslint

- fastapi

- flash

- frontend

- gemini

- github

- inference

- infrastructure

- learning

- lucide

- machine

- postcss

- postgresql

- python

- react

- roboflow

- shadcn/ui

- supabase

- tailwind

- tools

- typescript

- ui

- vercel

- vite
