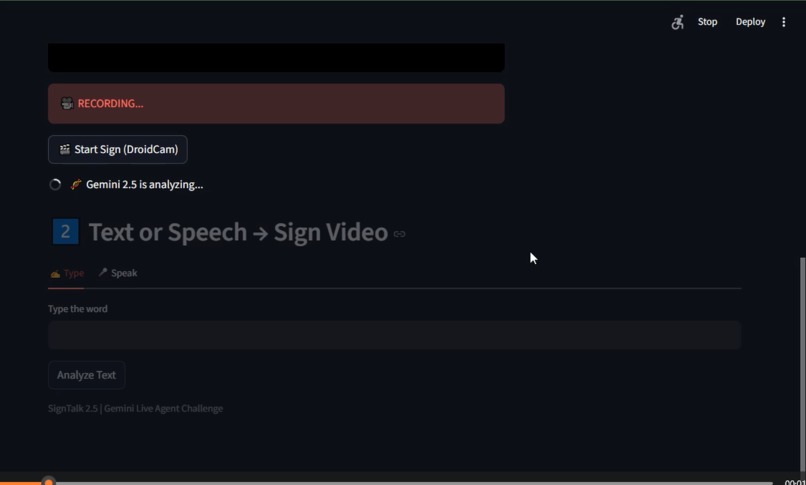

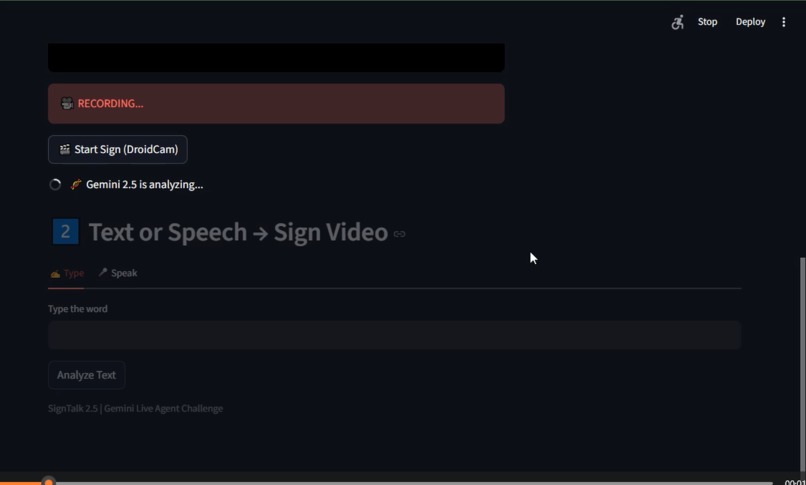

Sign-to-Speech: Gemini 2.5 Flash analyzes live ASL gestures from the camera and translates them into text and audio, enabling two-way communication.

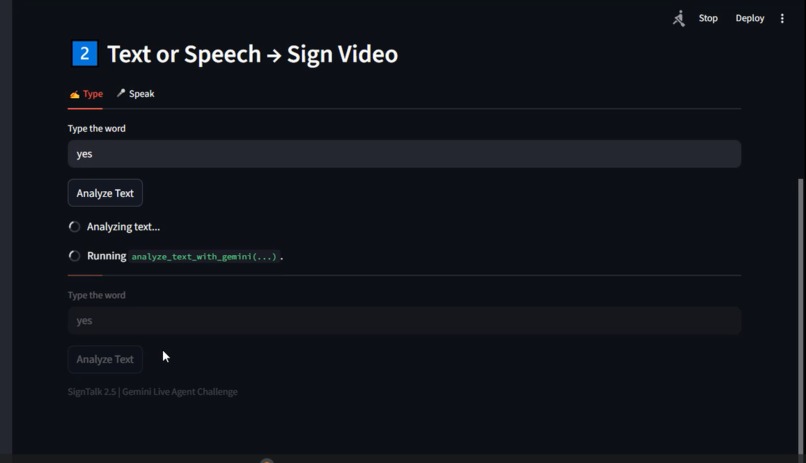

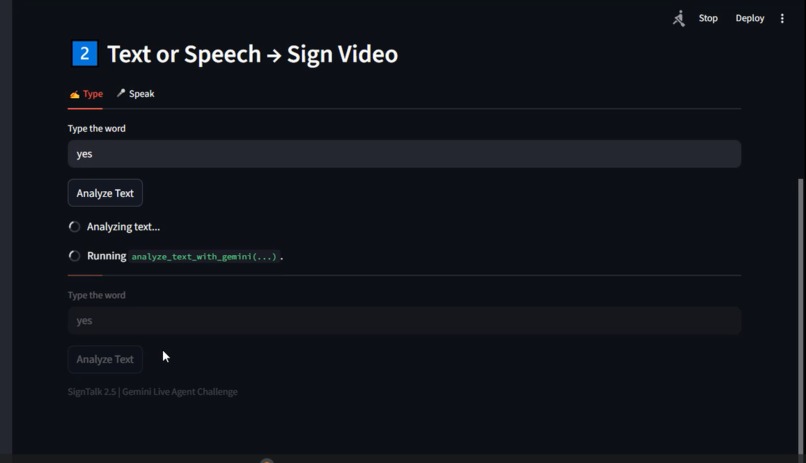

Text-to-Sign: Users type English words, and Gemini maps them to the matching ASL video clips, making sign language accessible to everyone.

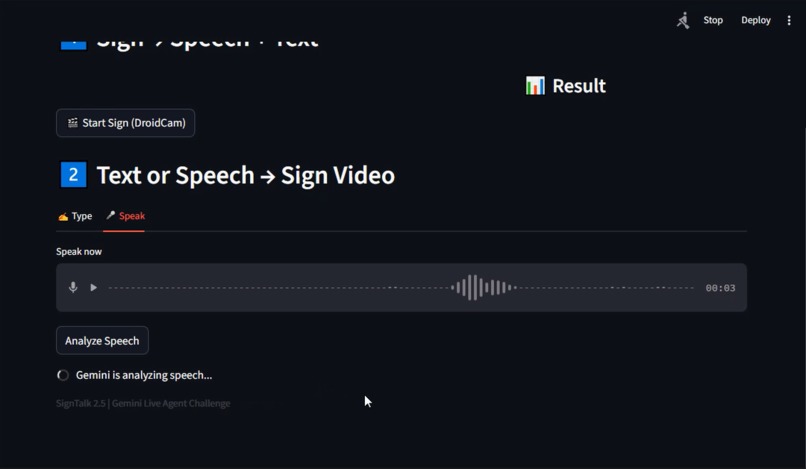

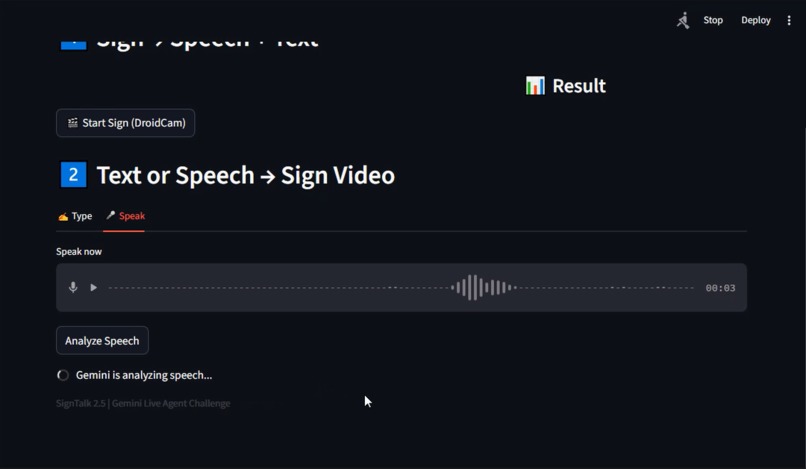

Speech-to-Sign: Live voice input is processed by Gemini 2.5 Flash and converted into clear ASL videos in real time.

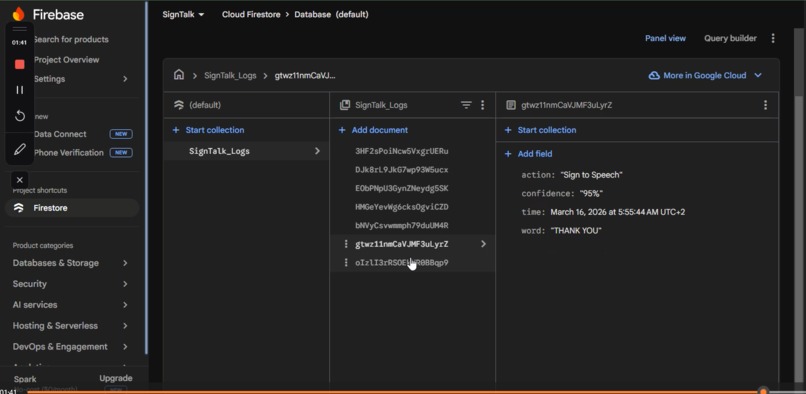

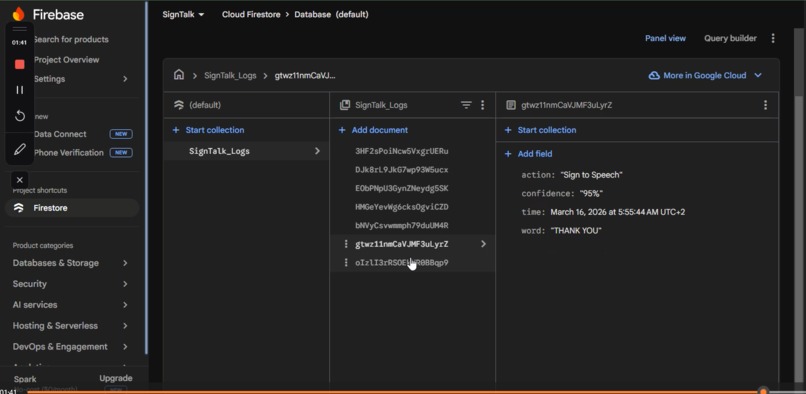

Scalable backend on Google Cloud: Every translation is logged in Firestore, integrating the app with Google Cloud services.

Inspiration

Communication between deaf and hearing people can be difficult because many people do not understand sign language. I wanted to build a simple AI-powered tool that helps bridge this communication gap. The goal of SignTalk is to make interaction easier by translating between sign language and spoken language in real time.

What it does

SignTalk 2.5 is a Live AI Agent that translates in two directions:

- Sign → Speech & Text: Captures live hand gestures via camera and converts them into spoken audio and text using Gemini 2.5 Flash.
- Speech/Text → Sign: Users type or speak a word, and the system displays the matching ASL video.

This creates seamless, accessible communication for both deaf and hearing users.
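A minimal sketch of the last step in the Speech/Text → Sign direction, assuming a fixed vocabulary of pre-recorded clips. The write-up does not say how the clips are stored, so `SIGN_CLIPS`, the `signs/` paths, and the helper names are hypothetical. Where the original lets Gemini do the mapping, this version swaps in a plain dictionary lookup after text normalization; a spoken input would reach the same function once it has been transcribed.

```python
import re
from pathlib import Path
from typing import Dict, Optional

# Hypothetical clip library: one pre-recorded ASL video per supported word or phrase.
SIGN_CLIPS: Dict[str, Path] = {
    "hello": Path("signs/hello.mp4"),
    "thank you": Path("signs/thank_you.mp4"),
    "help": Path("signs/help.mp4"),
}


def normalize(text: str) -> str:
    """Lowercase, replace anything but letters and whitespace, and collapse spaces."""
    text = re.sub(r"[^a-z\s]", " ", text.lower())
    return " ".join(text.split())


def find_sign_clip(text: str) -> Optional[Path]:
    """Return the clip for a typed or transcribed phrase, or None if it is unsupported."""
    return SIGN_CLIPS.get(normalize(text))
```

In a Streamlit page, a non-`None` result could be passed to `st.video(str(clip))`, and a `None` result is a natural place to tell the user that the word is not supported yet.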

How we built it

The project was developed using:

- Google Gemini 2.5 Flash (via the Google GenAI SDK) for multimodal analysis of video, speech, and text.
- Google Cloud Firestore for logging every translation (fulfilling the Google Cloud requirement).
- Streamlit for the interactive UI.
- OpenCV + DroidCam for real-time camera input.
- gTTS for speech output.

The system records short video clips, sends them to Gemini for analysis, and returns the results to the UI.
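The record, analyze, and respond loop described above might look roughly like the following sketch, which uses the `google-genai`, `google-cloud-firestore`, and `gTTS` packages. It assumes clips are saved as MP4 and are small enough to send inline with the request. The vocabulary, prompt wording, `translations` collection name, and document fields are illustrative assumptions, not SignTalk's actual code. Third-party imports sit inside the functions so the pure `normalize_label` helper can be used and tested without credentials.

```python
import re
from typing import Collection, Optional

# Hypothetical vocabulary; SignTalk's real list of supported signs is not published.
VOCABULARY = ("hello", "thank you", "yes", "no", "help")

# Illustrative prompt that asks the model to answer only from the known vocabulary.
PROMPT = (
    "This short video shows one American Sign Language (ASL) sign. "
    "Reply with exactly one of these English words or phrases, or UNKNOWN: "
    + ", ".join(VOCABULARY)
)


def normalize_label(reply: str, vocabulary: Collection[str] = VOCABULARY) -> Optional[str]:
    """Map the model's free-text reply onto the known vocabulary (None if no match)."""
    cleaned = " ".join(re.sub(r"[^a-z\s]", " ", reply.lower()).split())
    return cleaned if cleaned in vocabulary else None


def classify_clip(video_bytes: bytes) -> Optional[str]:
    """Send an MP4 clip inline to Gemini 2.5 Flash and return the recognized sign."""
    from google import genai  # pip install google-genai
    from google.genai import types

    client = genai.Client()  # reads GEMINI_API_KEY or GOOGLE_API_KEY from the environment
    response = client.models.generate_content(
        model="gemini-2.5-flash",
        contents=[
            types.Part.from_bytes(data=video_bytes, mime_type="video/mp4"),
            PROMPT,
        ],
    )
    return normalize_label(response.text or "")  # text can be None if no answer is returned


def log_translation(source: str, result: Optional[str]) -> None:
    """Store one translation in Firestore (collection and field names are hypothetical)."""
    from google.cloud import firestore  # pip install google-cloud-firestore

    db = firestore.Client()  # uses Application Default Credentials
    db.collection("translations").add({
        "source": source,
        "result": result,
        "created_at": firestore.SERVER_TIMESTAMP,
    })


def speak(text: str, path: str = "speech.mp3") -> str:
    """Synthesize English speech with gTTS and return the saved MP3 path."""
    from gtts import gTTS  # pip install gTTS

    gTTS(text=text, lang="en").save(path)
    return path
```

A Streamlit handler could call `classify_clip` on the recorded clip, display the label, pass it to `speak` and play the file with `st.audio`, and finally call `log_translation`. Creating the Gemini and Firestore clients once (for example with `st.cache_resource`) would avoid rebuilding them on every request; the sketch creates them per call only to stay short.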

Challenges we ran into

The main challenge was achieving accurate real-time sign recognition from short video clips with limited data. Processing live speech input and mapping it precisely to the correct sign video was also technically demanding. Balancing speed, accuracy, and the quota limits of the free Gemini tier required careful optimization.
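One way to stay within a per-minute request quota is a client-side sliding-window limiter that declines to send a request when the window is already full. This is a generic sketch, not the project's documented optimization. Free-tier limits vary by model and change over time, so `max_calls` and `period` are placeholders to be set from the current quota page, and the `RateLimiter` class is hypothetical. The clock is injectable so the behavior can be checked without waiting.

```python
import time
from collections import deque
from typing import Callable, Deque


class RateLimiter:
    """Allow at most `max_calls` calls in any rolling window of `period` seconds."""

    def __init__(self, max_calls: int, period: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_calls = max_calls
        self.period = period
        self.clock = clock
        self.calls: Deque[float] = deque()  # timestamps of accepted calls, oldest first

    def try_acquire(self) -> bool:
        """Record a call and return True if it fits in the window; otherwise return False."""
        now = self.clock()
        # Drop timestamps that have slid out of the rolling window.
        while self.calls and now - self.calls[0] >= self.period:
            self.calls.popleft()
        if len(self.calls) < self.max_calls:
            self.calls.append(now)
            return True
        return False  # rejected calls are not recorded
```

For example, `limiter = RateLimiter(max_calls=10, period=60.0)` (a placeholder limit) could guard each Gemini call, showing a "please wait" message when `try_acquire()` returns `False` instead of letting the API reject the request.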

What we learned

This project taught me how to integrate multimodal AI (video + speech + text) in real time, how to use Google Cloud services effectively, and the importance of clean architecture and caching to manage API costs. It also deepened my understanding of accessibility technology.
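The caching mentioned above can be as simple as memoizing results by a hash of the input, so repeated inputs never reach the API twice. This sketch, built around a hypothetical `TranslationCache` class, keys on a SHA-256 digest of the raw bytes. It pays off for exact repeats, such as the same typed word or the same label being synthesized to speech again; live camera clips will rarely be byte-identical. Streamlit's built-in `st.cache_data` decorator is an alternative that hashes function arguments automatically.

```python
import hashlib
from typing import Callable, Dict, Optional


class TranslationCache:
    """Memoize a translate function, keyed by the SHA-256 digest of its input bytes.

    The cache is unbounded, which is acceptable for a single demo session.
    """

    def __init__(self, translate: Callable[[bytes], Optional[str]]):
        self.translate = translate
        self.results: Dict[str, Optional[str]] = {}
        self.misses = 0  # how many times the wrapped (API-calling) function actually ran

    def get(self, data: bytes) -> Optional[str]:
        key = hashlib.sha256(data).hexdigest()
        if key not in self.results:
            self.misses += 1
            self.results[key] = self.translate(data)
        return self.results[key]
```

Text inputs can share the same cache by encoding them first, for example `cache.get("hello".encode())`.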

What's next for SignTalk

Future plans include:

- Expanding to full sentences and more signs
- Adding MediaPipe for higher gesture accuracy
- Mobile app version
- Full offline support using Gemini Nano

The long-term goal is to create a powerful accessibility tool that helps millions of deaf and hard-of-hearing people communicate more easily with the world.

Built With

- computer-vision

- firebase-firestore

- google-cloud

- google-gemini-ai-(gemini-2.5-flash)

- gtts-(google-text-to-speech)

- opencv

- python

- speech

- streamlit