-

-

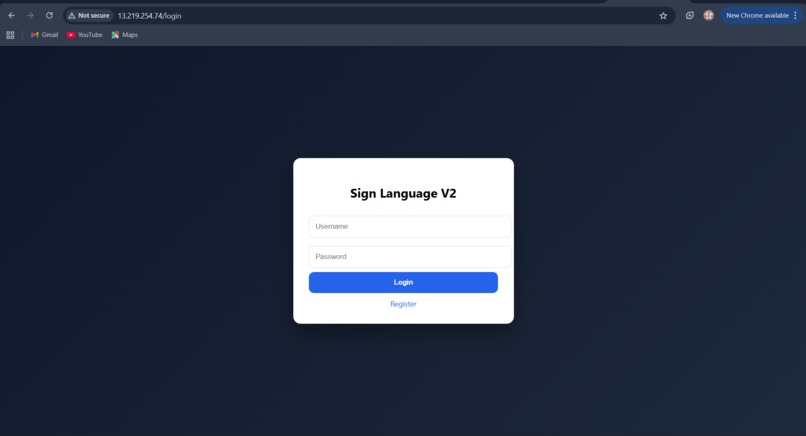

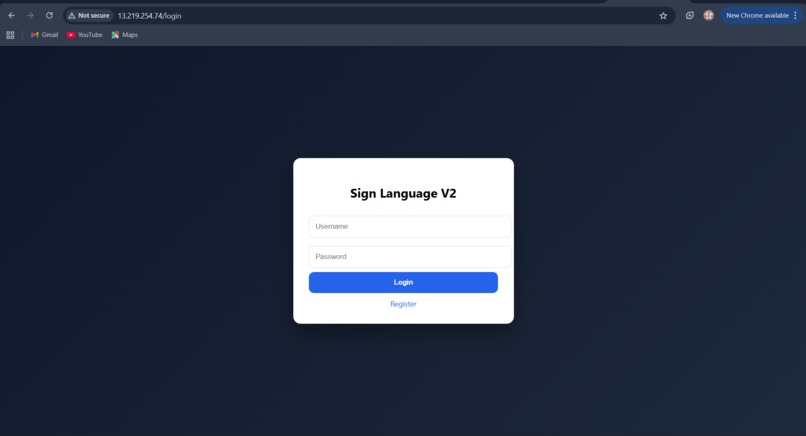

Secure user login interface with session-based authentication for personalized communication history.

-

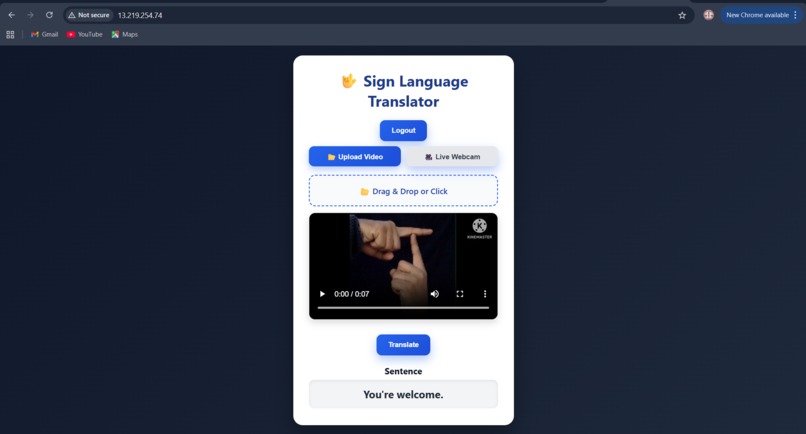

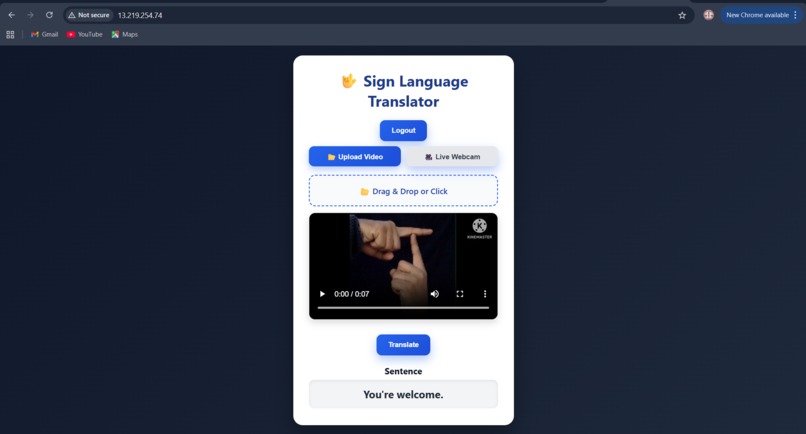

AI-generated natural sentence output with contextual refinement and speech synthesis integration.

-

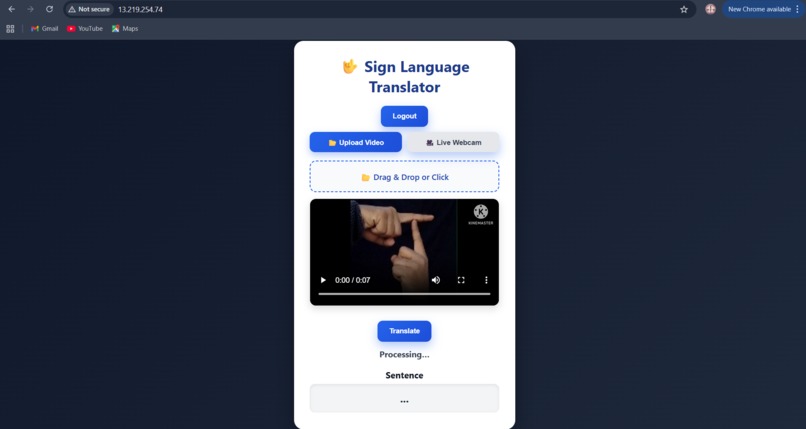

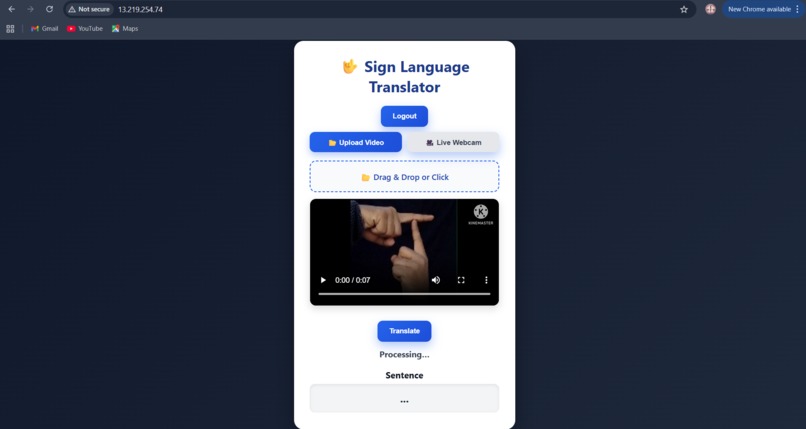

System processing uploaded sign language video using computer vision and deep learning models.

-

User dashboard with video upload and live webcam options for real-time sign-to-speech translation.

Inspiration

Many individuals who cannot speak rely on gestures or sign language to communicate, which can create barriers in everyday interactions such as education, healthcare, and public services. We built SignBridge AI to reduce this communication gap and enable more natural real-time expression through speech.

What it does

SignBridge AI converts sign language gestures into natural spoken sentences in real time. It accepts video, images, or live webcam input, detects hand landmarks, predicts gesture meaning, refines sentences using AI, and generates human-like speech.

This allows individuals who cannot speak to communicate more easily with others.

How we built it

We built the system using MediaPipe for hand landmark extraction and an ANN model trained on normalized landmark features for gesture classification.

Predicted words are refined into meaningful sentences using Groq LLM with contextual memory. Finally, ElevenLabs converts the generated sentence into natural speech.

The application is deployed as a Flask web app, containerized with Docker, and integrated with AWS CI/CD for scalable deployment.

Challenges we ran into

Key challenges included handling variations in hand orientation, lighting conditions, and partial hand visibility. Ensuring stable real-time prediction from video streams and maintaining sentence consistency using LLM context were also complex. Deploying ML inference efficiently inside Docker with cloud integration required careful optimization.

Accomplishments that we're proud of

We built a full end-to-end multimodal AI system combining computer vision, NLP, and speech synthesis. The system works across images, videos, and live webcam input while generating natural speech output. We also implemented contextual sentence refinement and production-ready cloud deployment.

What we learned

We learned how to design robust landmark-based gesture models, integrate LLMs safely into ML pipelines, and build real-time AI applications. This project strengthened our skills in MLOps, Docker deployment, and building scalable multimodal AI systems.

What's next for SignBridge AI — Sign Language to Speech Assistant

Next, we plan to add sentence-level temporal modeling for improved continuous signing, real-time streaming APIs, mobile deployment, and multilingual speech output. We also aim to optimize the model for edge devices and expand gesture vocabulary for broader real-world use.

Built With

- actions

- aws-ec2

- aws-ecr

- ci/cd

- css

- docker

- elevenlabs-api-(text-to-speech)

- flask

- github

- groq-api-(llm)

- html

- javascript

- keras

- mediapipe

- numpy

- opencv

- python

- scikit-learn

- tensorflow

Log in or sign up for Devpost to join the conversation.