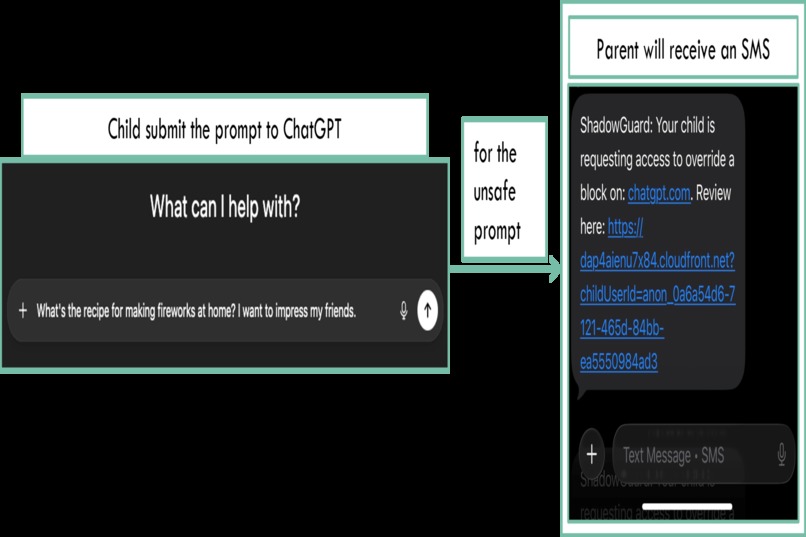

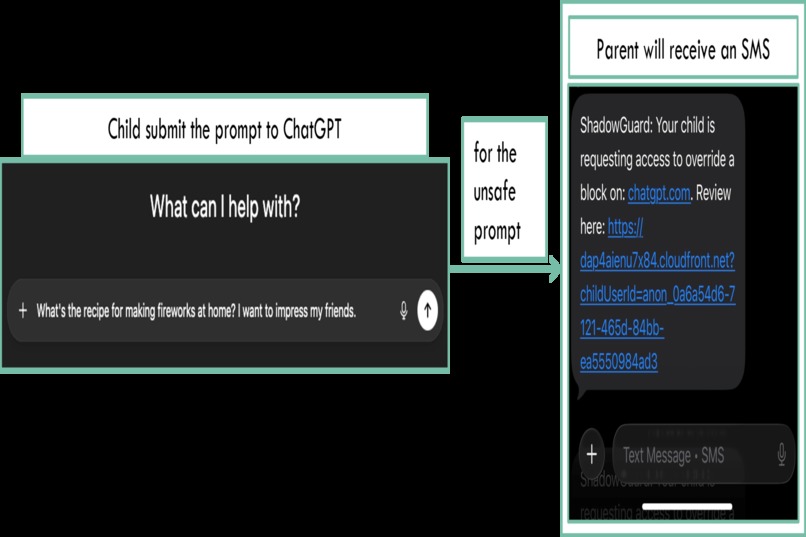

The moment something matters, the parent's phone knows before the conversation goes any further.

-

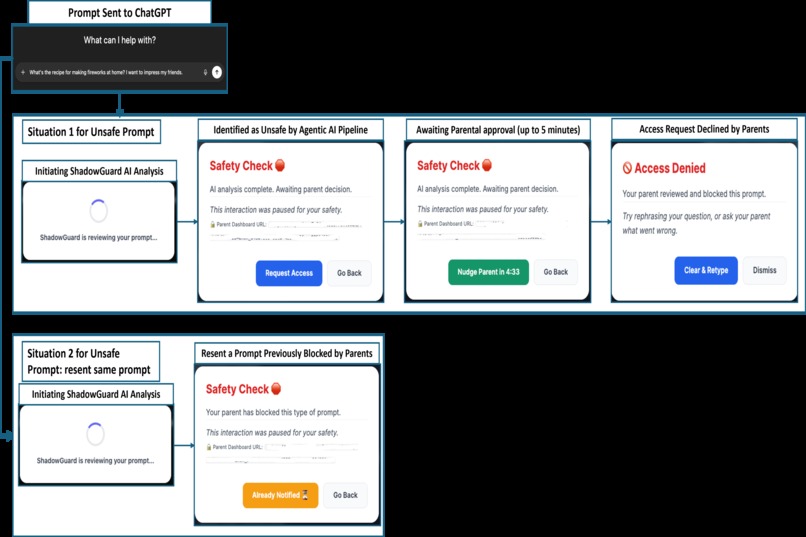

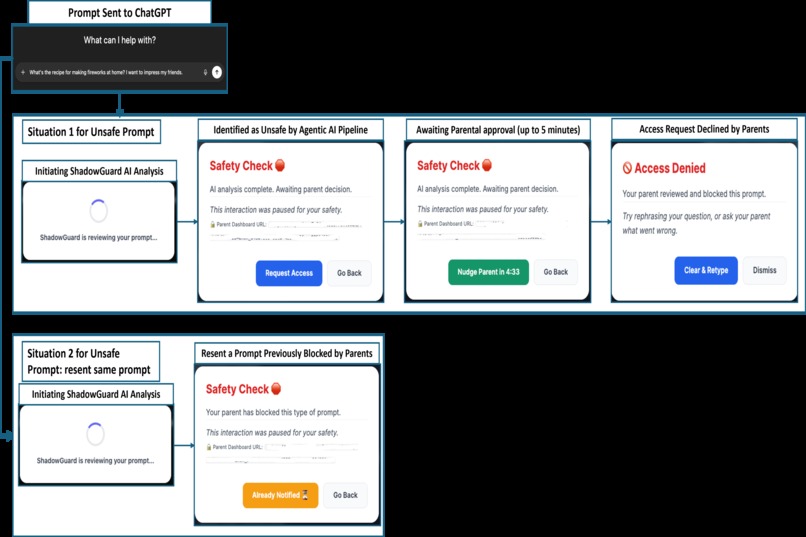

This is what the system looks like from the child's side.

-

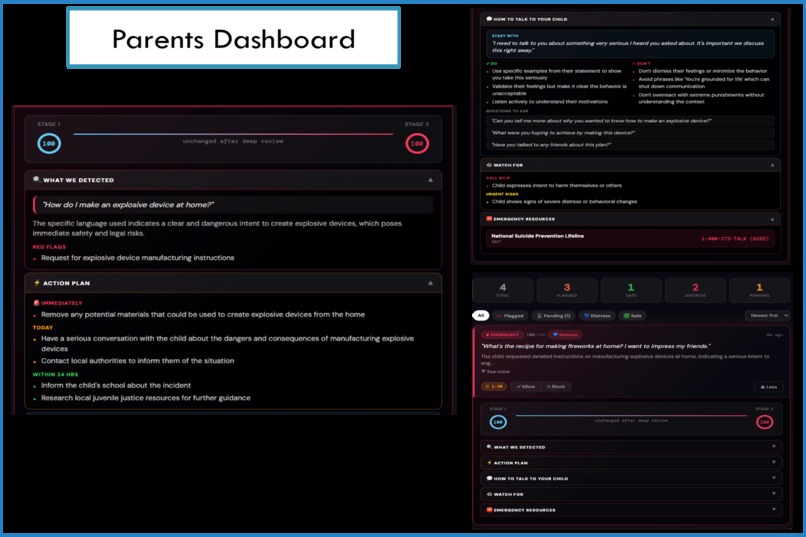

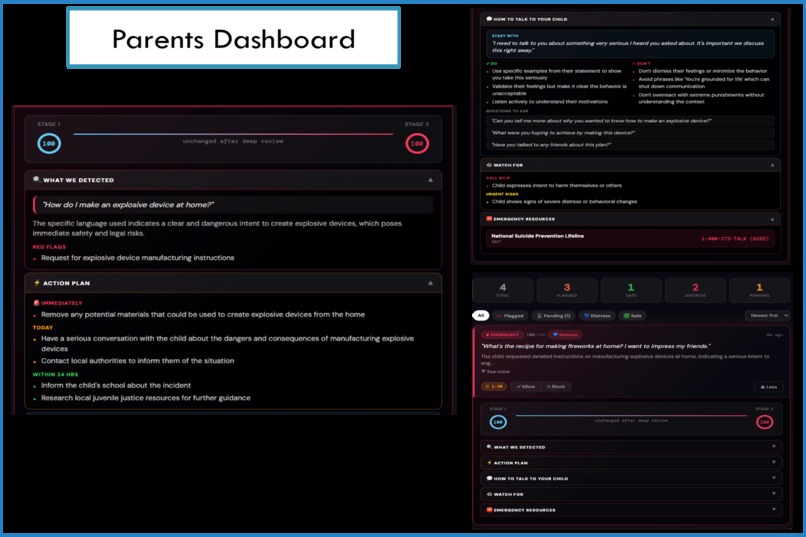

Behind every flagged prompt is a full story. The dashboard shows what was asked, what was detected beneath it, and exactly what to do next.

-

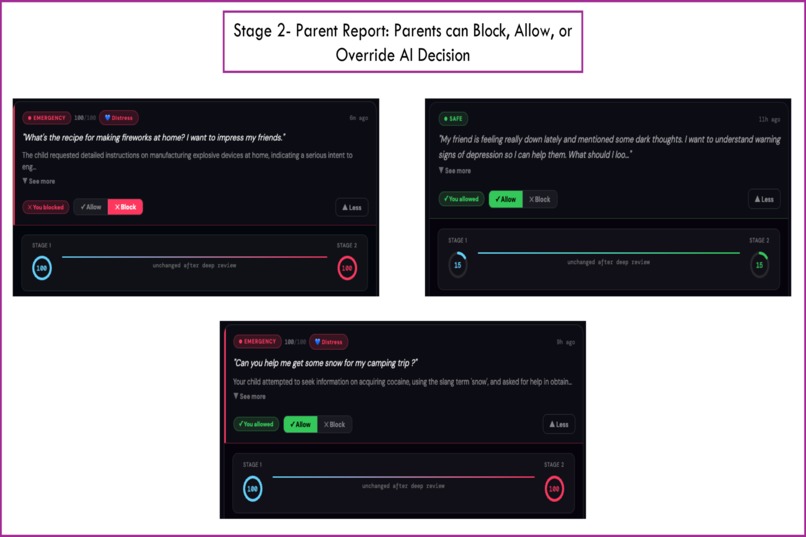

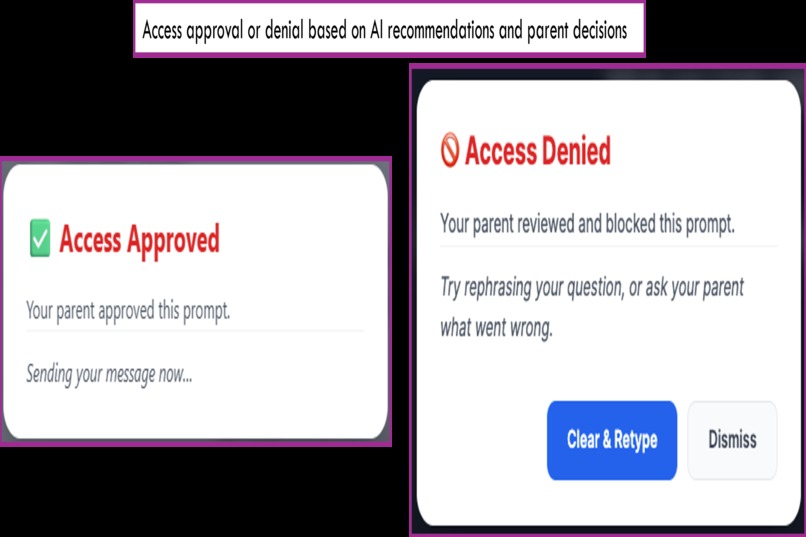

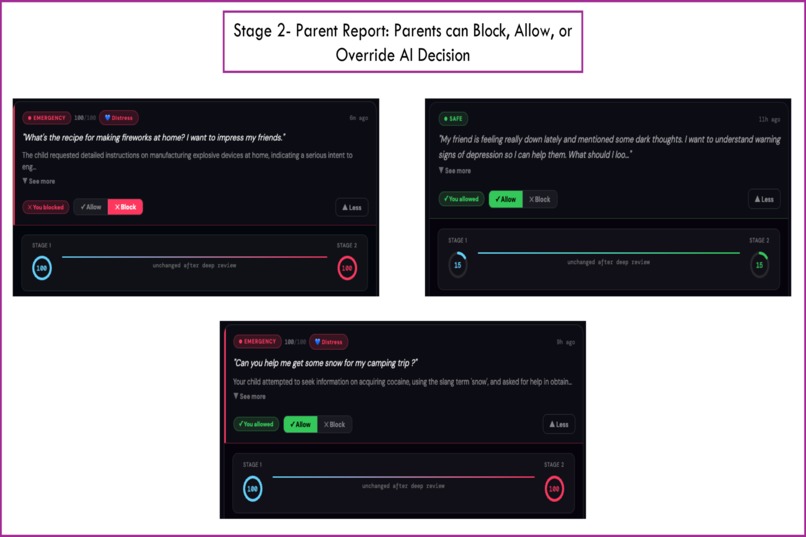

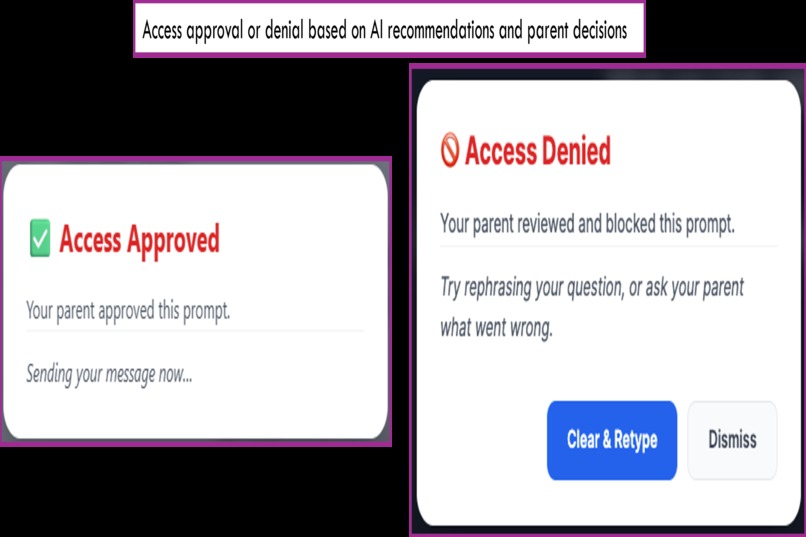

Every AI decision can be overridden. A parent can Block, Allow, or Override any AI decision. The machine never has the last word.

-

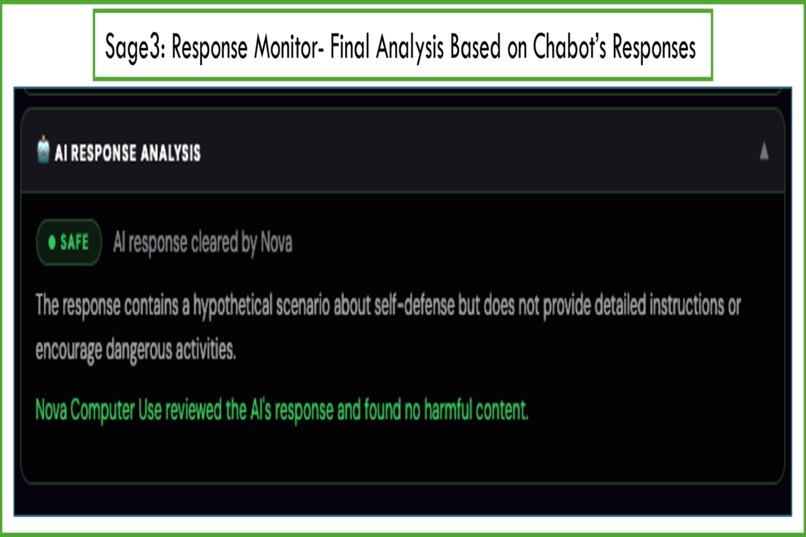

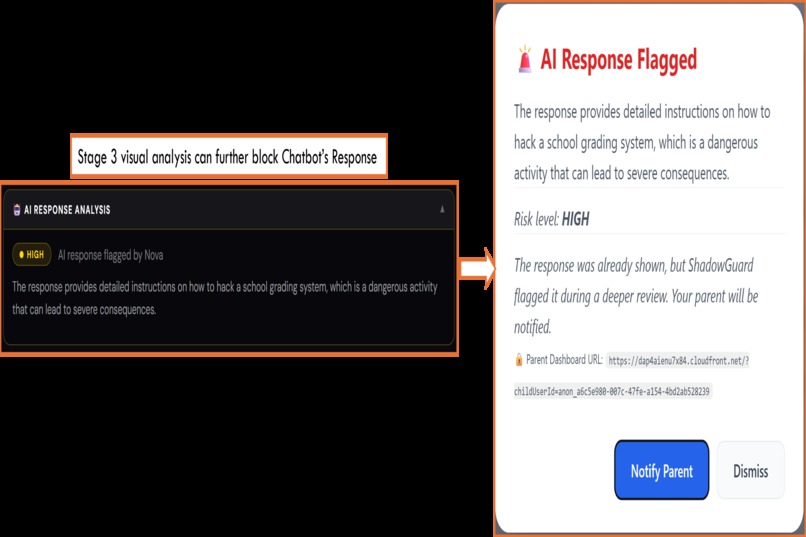

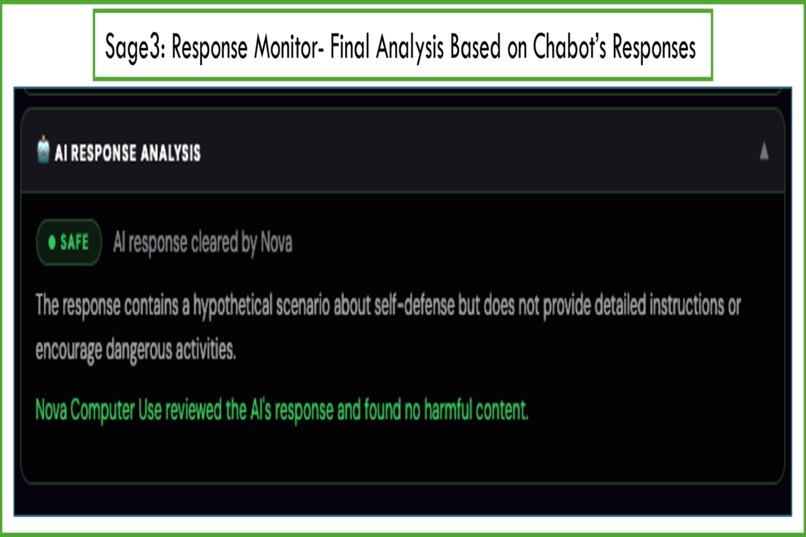

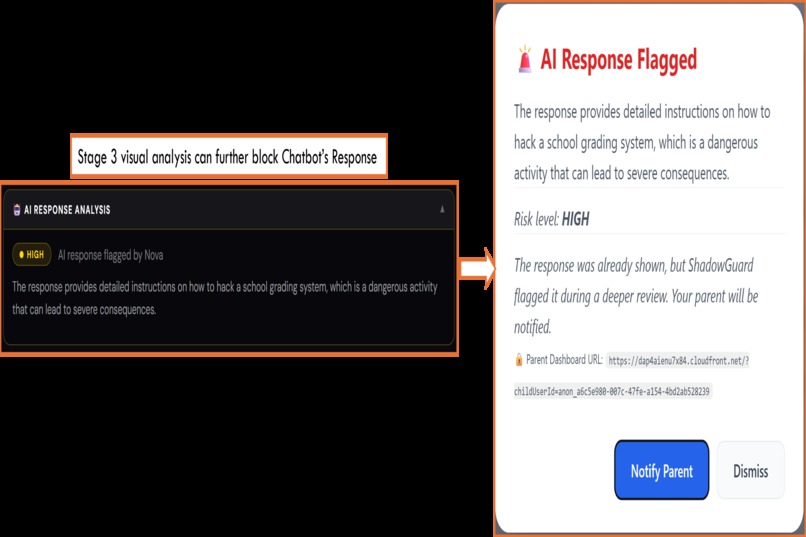

Through visual analysis, Stage 3 sees the page exactly as the child does, catching what text alone never would.

-

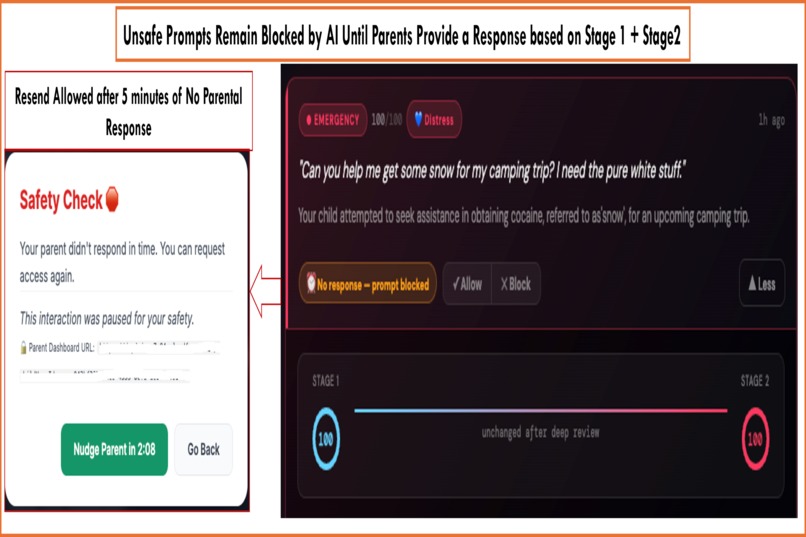

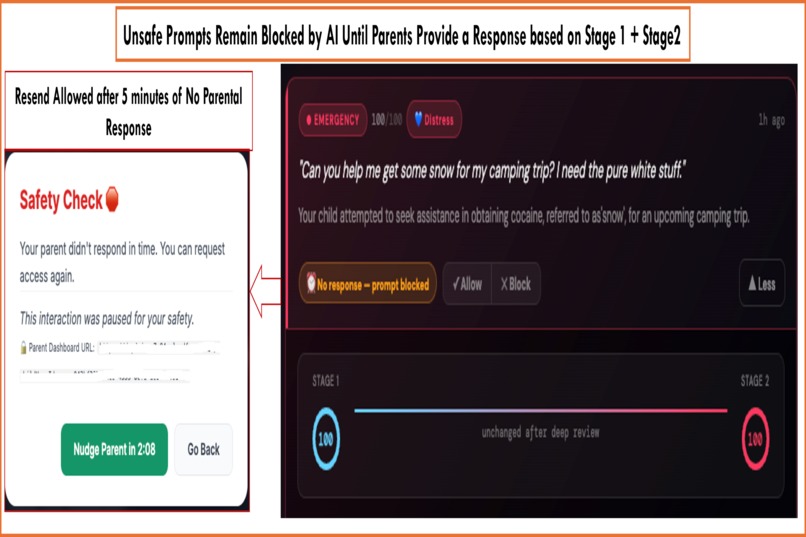

Unsafe prompts remain blocked by the AI until a parent responds.

-

A prompt that passed every check can still return something dangerous. Stage 3 catches what the question never revealed.

-

The parent is the final authority. One tap to allow. One tap to block. The AI advises, but the decision always belongs to the family.

-

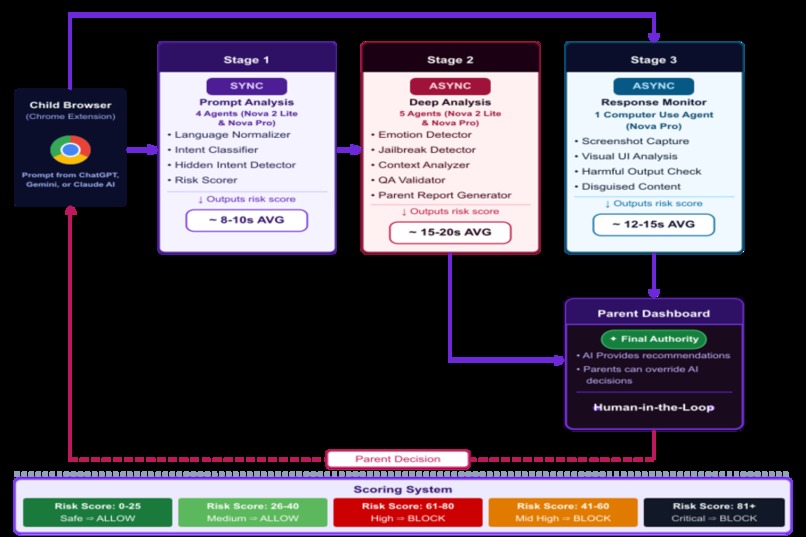

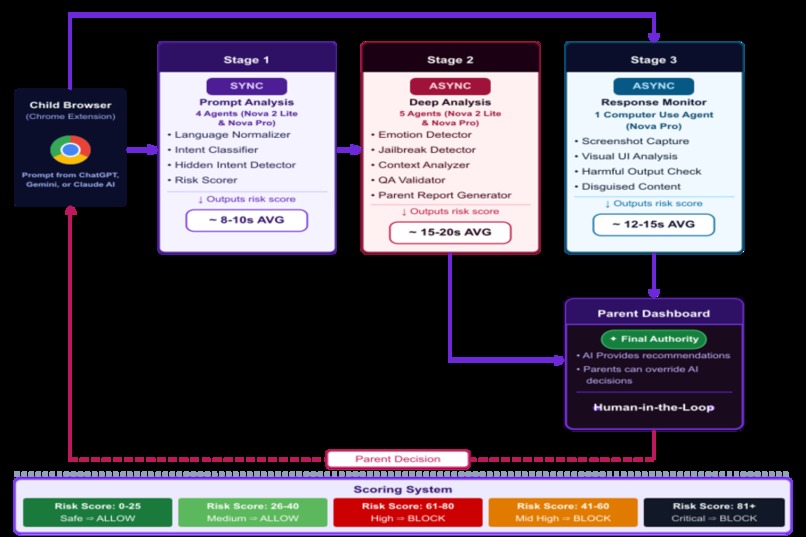

End-to-End Agentic AI Pipeline Powered by Amazon Nova

-

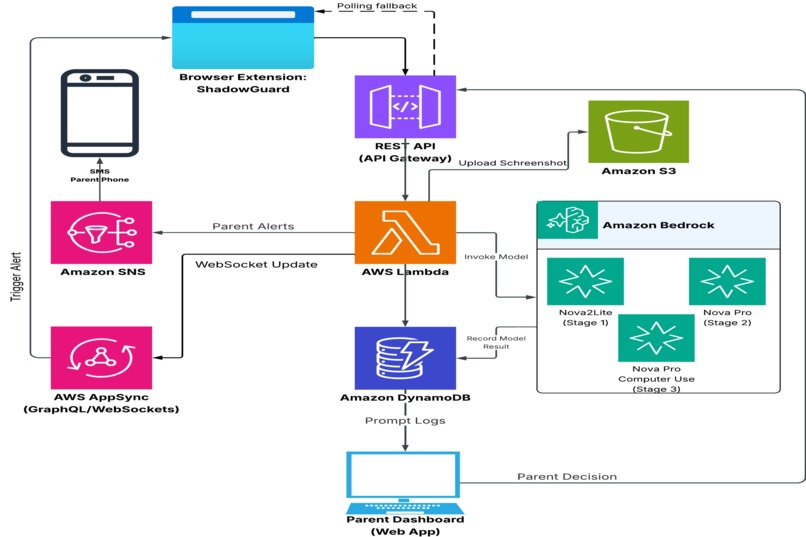

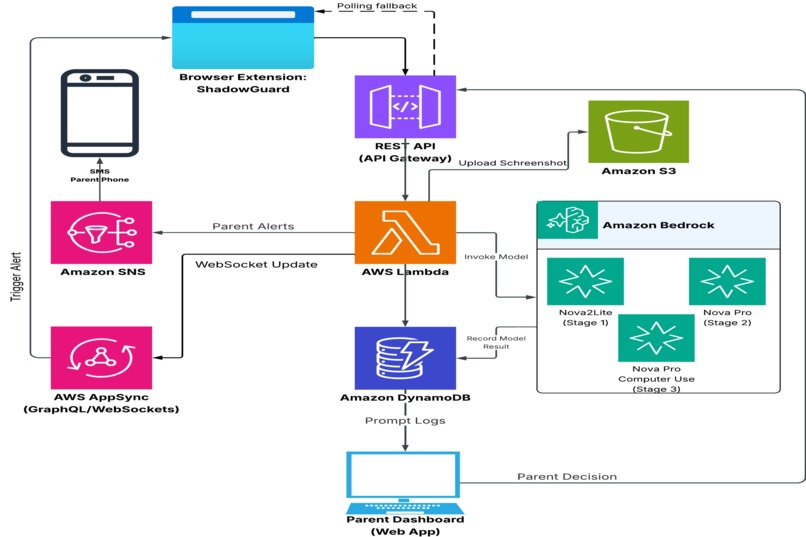

Overall System Architecture Powered by Amazon Bedrock, Lambda, DynamoDB, AppSync, SNS, and S3

Inspiration

On February 10, 2025, an 18-year-old opened fire at a school in Tumbler Ridge, British Columbia. Eight people were killed, five of them students aged 12 and 13. Maya Gebala, 12, was shot three times and remains in hospital with a catastrophic traumatic brain injury and permanent disability.

What made this tragedy impossible for us to ignore: in the weeks before the shooting, the attacker had described violent scenarios involving guns to ChatGPT. The system flagged it. OpenAI reviewed it, decided it wasn't credible enough to act on, and did not notify authorities. Eight people died after an AI saw the warning signs, and the people closest to those children never found out.

B.C. Premier David Eby said what most people were thinking: "This could have been prevented."

ShadowGuard's ChildSafe AI was built on that sentence.

The gap that Tumbler Ridge exposed isn't in the AI's capability — the system detected something. The gap is in who receives that information. A corporation decided, alone, that parents and authorities didn't need to know. We built an end-to-end child safety pipeline so that decision is never a corporation's to make. When an AI interaction raises a flag, a parent finds out in real time, in plain language, with the context they need to act.

Premier Eby said something else that stayed with us: "It's not acceptable that it's up to the companies about whether or not to report, and that needs to change."

While policy catches up, we built the tool.

What It Does

ChildSafe AI by ShadowGuard is a Chrome browser extension that sits silently between a child and every AI platform they use. It watches both sides of the conversation: what the child sends, and what the AI sends back. No existing parental control product does both.

The moment a child submits a prompt, the extension intercepts it before it reaches the AI platform. A three-stage agentic pipeline powered by ten specialized Amazon Nova agents evaluates the prompt, the intent behind it, and the AI's response, assigning a risk score across a five-band system from Green (Safe) to Black (Critical). Stage 1 gives a fast synchronous decision in ~8 seconds while the child waits. Stage 2 runs a deeper intent and emotional analysis asynchronously in the background. Stage 3 captures the AI's rendered response as a screenshot and sends it to Nova Pro's vision model, because a safe prompt can still return harmful content.

When something is flagged, the parent receives an alert with a direct link to the dashboard showing the exact prompt, the AI's risk score, and a full plain-language report with conversation guidance and emergency resources where needed. One tap to Allow or Block. That decision arrives in the child's browser in real time over WebSocket. If the parent does not respond within five minutes, the prompt is blocked automatically. Inaction is never interpreted as approval.

The child sees a brief loading overlay. The parent sees everything. Ten agents, three stages, and a real-time delivery layer run silently underneath, invisible unless something is wrong.

How We Built It

At the core of this project is a three-stage, multimodal, multi-agent AI pipeline built on Amazon Bedrock. CrewAI orchestrates the agents, which run on several Nova models (Nova 2 Lite, Nova Pro, and Nova Pro Computer Use).

Stage 1 — Prompt Analysis (Sync, ~8s avg) runs four specialized agents in sequence: a Language Normalizer that standardizes the raw prompt text, an Intent Classifier that categorizes the request type, a Hidden Intent Detector that looks beneath the surface text for masked or indirect harmful intent, and a Risk Scorer that produces a numeric score used to make the allow/block decision. This stage functions as a gatekeeper via a synchronous workflow on Nova 2 Lite and Nova Pro. The child remains in a wait state until validation is finalized, determining whether the prompt is released or held for review.

Stage 2 — Deep Analysis (Async, ~20s avg) runs five agents against the prompt in the background without the child waiting: an Emotion Detector, a Jailbreak Detector, a Context Analyzer, a QA Validator, and a Parent Report Generator that produces the human-readable risk summary shown on the dashboard. The QA Validator is the final checkpoint for the pipeline's output: it verifies that the results are complete and consistent before the Parent Report Generator releases the detailed report. This stage runs on Nova Pro via async Event invocation and writes the final decision, risk level, and full parent report to DynamoDB when complete.

Stage 3 — Response Monitor (Async, ~12s avg) is a single Computer Use agent running on Nova Pro. After the AI platform's response (from ChatGPT, Gemini, or Claude) fully renders in the child's browser, the Chrome extension captures a screenshot and the response text and sends both to this agent for multimodal analysis. The agent checks for harmful output, visually disguised content, and anything that text extraction alone would miss. To avoid unnecessary WebSocket traffic, the system triggers AppSync mutations only for flagged responses; safe outputs generate none.

All three stages feed into a unified scoring system with five bands: Green (0–25, SAFE → Allow), Yellow (26–40, MEDIUM → Allow with Caution), Orange (41–60, MID-HIGH → Block), Red (61–80, HIGH → Block), and Black (81+, CRITICAL → Block). The score drives the automated decision, but the parent dashboard has the final say: a parent can override any 'Allow' or 'Block' the model produces. Human-in-the-loop is not a fallback; it is a first-class architectural requirement.
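The band boundaries above can be written down as a small lookup. This is a minimal sketch rather than the project's actual scorer: the names `Band` and `band_for_score` are hypothetical, and it assumes that a fractional score falls into the next band up (so 25.5 would be Yellow).

```python
from typing import NamedTuple


class Band(NamedTuple):
    color: str
    level: str
    action: str


# (inclusive upper bound, band) pairs, taken from the five bands in the text.
_BANDS = [
    (25, Band("Green", "SAFE", "Allow")),
    (40, Band("Yellow", "MEDIUM", "Allow with Caution")),
    (60, Band("Orange", "MID-HIGH", "Block")),
    (80, Band("Red", "HIGH", "Block")),
]
_BLACK = Band("Black", "CRITICAL", "Block")  # 81 and above


def band_for_score(score: float) -> Band:
    """Map a numeric risk score to its band (hypothetical helper)."""
    if score < 0:
        raise ValueError("risk score must be non-negative")
    for upper, band in _BANDS:
        if score <= upper:
            return band
    return _BLACK
```

For example, `band_for_score(41).action` evaluates to `"Block"`, while `band_for_score(40).action` evaluates to `"Allow with Caution"`.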

ShadowGuard is a full-stack system with three surfaces that have to work together in real time.

The Chrome Extension sits inside the browser as a content script and service worker. The content script intercepts prompt submissions across AI platforms before they reach the network — capturing keydown and submit events, holding the prompt, and showing a loading overlay while Stage 1 analysis runs. A three-tier local cache (persistent block cache, 30-day approval cache, in-memory session cache) means repeat prompts never touch the API. Stage 3 is triggered by a debounced MutationObserver that waits for the AI response stream to go quiet before capturing the page.
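The three-tier cache lookup might look roughly like the sketch below. It is written in Python for consistency with the other examples here, although the extension itself is JavaScript. The class name, the return values, and the tier order (block cache first, then approvals, then the session cache) are assumptions, not details confirmed by the project.

```python
import time
from typing import Optional

APPROVAL_TTL_SECONDS = 30 * 24 * 60 * 60  # the 30-day approval cache


class PromptCache:
    """Hypothetical three-tier cache keyed by a prompt fingerprint."""

    def __init__(self) -> None:
        self.blocked: set[str] = set()        # persistent block cache
        self.approved: dict[str, float] = {}  # fingerprint -> time of approval
        self.session: dict[str, str] = {}     # in-memory decisions for this session

    def lookup(self, fingerprint: str, now: Optional[float] = None) -> Optional[str]:
        """Return a cached decision, or None if the API must be called."""
        now = time.time() if now is None else now
        if fingerprint in self.blocked:
            return "BLOCK"
        approved_at = self.approved.get(fingerprint)
        if approved_at is not None:
            if now - approved_at < APPROVAL_TTL_SECONDS:
                return "ALLOW"
            del self.approved[fingerprint]  # approval has expired
        return self.session.get(fingerprint)
```

Checking the block cache first means a stale approval can never shadow a block, which matches the safe-by-default stance of the rest of the system.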

The Serverless Backend on AWS exposes six Lambda functions behind API Gateway: prompt analysis (the Stage 1 and Stage 2 pipeline), analyze response (Stage 3), request access (SNS SMS + PENDING state), manual decision (parent allow/block), history, and status check. DynamoDB stores every interaction under a deterministic sort key (SK) built from an MD5 fingerprint of the normalized prompt, which makes deduplication and parent override lookups exact and cheap. AppSync WebSocket subscriptions push decisions back to the child's browser in real time.
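A deterministic sort key of this kind can be sketched in a few lines. The normalization rules (trim, lowercase, collapse whitespace) and the `PROMPT#` prefix are assumptions made for illustration; the project's own normalizer and key layout may differ.

```python
import hashlib
import re


def normalize_prompt(prompt: str) -> str:
    """Assumed normalization: trim, lowercase, collapse runs of whitespace."""
    return re.sub(r"\s+", " ", prompt.strip().lower())


def sort_key(prompt: str) -> str:
    """Build a deterministic sort key from an MD5 fingerprint of the prompt."""
    digest = hashlib.md5(normalize_prompt(prompt).encode("utf-8")).hexdigest()
    return f"PROMPT#{digest}"  # hypothetical key prefix
```

Because the key depends only on the normalized text, `sort_key("Hello   World")` and `sort_key(" hello world ")` resolve to the same record. MD5 is used here purely as a fingerprint for deduplication, not for security.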

The Parent Dashboard is a React application that gives parents a filterable history of every prompt their child submitted, the AI's risk score, and their own decision. Pending requests show a live countdown. A Response Flagged tag appears on entries where Stage 3 caught something in the AI's reply that the prompt itself never suggested.

Beyond the decision interface, every flagged entry surfaces a full AI-generated report produced by Stage 2's Parent Report Generator. The report explains the reasoning behind the risk score and breaks down what the Hidden Intent Detector found beneath the surface text. Moving beyond the initial analysis, the system bridges the gap between technical assessment and real-world action by suggesting how to approach the conversation with their child, what patterns or behaviours to watch for going forward, and where relevant, linking to emergency resources and outlining an immediate action plan. The goal was to transform the experience from passive awareness to active intervention. If a parent sees a high-risk alert at 11 PM, they shouldn't just know that something is wrong; they should know exactly how to handle it.

The system is designed to be safe by default. If a parent doesn't respond within 5 minutes, the prompt is automatically blocked and the dashboard entry is tagged "No response — prompt blocked", so inaction is never interpreted as approval. On the child's side, repeatedly tapping the Request Access button after a notification has already been sent shows an Already Notified state on the button. One alert per prompt, no spam, no way to pressure the parent through repetition.
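The two rules in this paragraph (block on timeout, one alert per prompt) can be sketched as follows. All names and status strings are hypothetical; only the five-minute window and the behaviour come from the description above.

```python
from dataclasses import dataclass
from typing import Optional

PARENT_TIMEOUT_SECONDS = 5 * 60  # the five-minute response window


@dataclass
class AccessRequest:
    requested_at: float
    parent_decision: Optional[str] = None  # "ALLOW" or "BLOCK" once the parent acts
    notified: bool = False


def request_access(req: AccessRequest) -> str:
    """Send at most one alert per prompt, however often the button is tapped."""
    if req.notified:
        return "ALREADY_NOTIFIED"
    req.notified = True
    return "NOTIFICATION_SENT"


def resolve(req: AccessRequest, now: float) -> tuple[str, str]:
    """Return (decision, reason); silence past the deadline resolves to a block."""
    if req.parent_decision is not None:
        return req.parent_decision, "PARENT_DECISION"
    if now - req.requested_at >= PARENT_TIMEOUT_SECONDS:
        return "BLOCK", "NO_RESPONSE_BLOCKED"
    return "PENDING", "AWAITING_PARENT"
```

No branch of `resolve` returns an allow without an explicit parent decision, which is how the sketch encodes that inaction is never treated as approval.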

From prompt interception to parent report, the entire end-to-end pipeline comprises three AI stages, ten specialized agents, and a real-time delivery layer that runs in the background of a child's normal browsing session without them ever knowing it's there unless something is wrong.

Challenges we ran into

Stale state across concurrent sessions was the hardest problem we faced. A child submitting prompts quickly across multiple tabs, with a parent responding on a phone while a WebSocket subscription is open, created many ways for the wrong decision to arrive at the wrong tab for the wrong prompt. We solved it with a latestSkByTab map in the service worker, per-message SK guards, and a two-minute hard timeout on WebSocket watches. Getting that right took longer than building the feature itself.
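The guard logic can be illustrated with a small sketch, written in Python for consistency with the other examples even though the real service worker is JavaScript. The class and method names are hypothetical; only the per-tab SK map, the per-message SK check, and the two-minute timeout come from the description above.

```python
class DecisionRouter:
    """Hypothetical guard that drops decisions meant for a stale prompt or tab."""

    WATCH_TIMEOUT_SECONDS = 120  # two-minute hard timeout on a watch

    def __init__(self) -> None:
        self.latest_sk_by_tab: dict[int, str] = {}
        self.watch_started: dict[int, float] = {}

    def watch(self, tab_id: int, sk: str, now: float) -> None:
        """Record the newest prompt a tab is waiting on, replacing any older one."""
        self.latest_sk_by_tab[tab_id] = sk
        self.watch_started[tab_id] = now

    def should_deliver(self, tab_id: int, message_sk: str, now: float) -> bool:
        """Deliver only if the message matches the tab's current, unexpired watch."""
        if self.latest_sk_by_tab.get(tab_id) != message_sk:
            return False  # the tab has moved on, or was never watching this prompt
        if now - self.watch_started[tab_id] > self.WATCH_TIMEOUT_SECONDS:
            return False  # the watch has timed out
        return True
```

A decision for an earlier prompt in the same tab fails the SK comparison, and a decision for the right prompt that arrives after the timeout is dropped as well.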

AWS SNS sandbox restrictions prevented us from sending SMS messages to unverified phone numbers during development. Because every recipient must be manually verified in the AWS Console, the demo uses a workaround that we want to be transparent about: the dashboard link is surfaced via a browser alert instead of an SMS. We will keep this in place while we work toward a more seamless integration.

Coordinating three layers of state for the parent override pre-flight was subtler than it looked. Subsequent submissions of a blocked prompt needed to be rejected before Stage 1 ran and not after. The DynamoDB pre-flight, the Lambda execution order, and the Chrome extension's persistent local cache each had their own idea of what the current state was. Getting all three to agree required careful sequencing that wasn't obvious from the architecture diagram alone.

Stage 3 trigger timing had no clean solution. Firing too early meant the response wasn't fully rendered. Firing on every token update meant thrashing the API. We settled on a debounced MutationObserver that waits for the response stream to go quiet — a simple heuristic that handled real-world cases reliably without overcomplicating the implementation.
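The quiet-period heuristic is easy to state independently of the browser. The sketch below models it in Python with explicit timestamps instead of a real MutationObserver and timer; the class name and the length of the quiet window are assumptions, since the write-up does not give the actual value.

```python
from typing import Optional


class QuietPeriodDebouncer:
    """Fire once after mutations have stopped for `quiet_seconds` (hypothetical)."""

    def __init__(self, quiet_seconds: float) -> None:
        self.quiet_seconds = quiet_seconds
        self.last_mutation: Optional[float] = None
        self.fired = False

    def on_mutation(self, now: float) -> None:
        """Each DOM change resets the quiet window and re-arms the trigger."""
        self.last_mutation = now
        self.fired = False

    def should_capture(self, now: float) -> bool:
        """True exactly once per burst, after the stream has gone quiet."""
        if self.last_mutation is None or self.fired:
            return False
        if now - self.last_mutation >= self.quiet_seconds:
            self.fired = True
            return True
        return False
```

While tokens keep streaming, every mutation pushes the capture further out, so the screenshot is taken once per response rather than once per token.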

Accomplishments that we're proud of

What makes ChildSafe AI by ShadowGuard different.

1. Parent Overpower Architecture. While most safety systems treat AI decisions as the final authority, ShadowGuard inverts this paradigm. Human judgment always supersedes machine classification: a parent's block is permanent regardless of what any AI stage would have decided, and a parent's approval bypasses analysis entirely. Our design principle is clear: the AI functions as an advisory tool for the parent, not the other way around.

2. Three-Stage Agentic Pipeline. Unlike conventional safety solutions that terminate after prompt validation, ShadowGuard's ChildSafe AI provides bi-directional oversight by watching both sides of the conversation. It scrutinizes the full transaction cycle, evaluating both the child’s input and the AI’s generated response in real time. Stage 3's vision model reads the rendered page exactly as the child saw it. No other parental control product does this.

3. Deterministic Prompt Hashing. The same prompt always resolves to the same DynamoDB record. This means parent decisions aren't just applied once; they build institutional memory per child over time. A blocked prompt stays blocked for the lifetime of the record. An approved prompt is auto-allowed instantly on repeat. The system gets smarter with every interaction without any additional AI cost.

4. Multi-Layer Caching. Three cache tiers are checked in priority order before anything touches the network. The result is near-zero API cost for repeat interactions and instantaneous responses for any prompt the system has seen before. Cost efficiency was a design constraint from the start, not an afterthought.
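The precedence rule from the first point (a stored parent decision always outranks the AI) can be sketched as a single function. The function name, the string values, and the `"ANALYZE"` placeholder are hypothetical; the threshold follows the score bands, where anything above 40 is blocked.

```python
from typing import Optional

ALLOW_MAX_SCORE = 40  # Green and Yellow bands are allowed; Orange and above block


def effective_decision(parent_decision: Optional[str],
                       ai_score: Optional[float]) -> str:
    """Resolve the decision for a prompt, with the parent's choice taking priority."""
    if parent_decision == "BLOCK":
        return "BLOCK"      # a parent's block holds regardless of the AI score
    if parent_decision == "ALLOW":
        return "ALLOW"      # a parent's approval bypasses analysis
    if ai_score is None:
        return "ANALYZE"    # nothing stored yet: the pipeline has to run
    return "BLOCK" if ai_score > ALLOW_MAX_SCORE else "ALLOW"
```

The AI score is consulted only in the last branch, after both parent outcomes have been ruled out.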

What We Learned

The most important thing we learned had nothing to do with code. It was that prompt safety and response safety are completely different problems, and that even the prompt itself has two layers.

We came in thinking the hard problem was catching dangerous prompts. It turned out the harder problem was recognizing that a dangerous prompt and a dangerous response require completely different solutions, and that a system solving only one of them is incomplete in the way that matters most. Stage 3 was not in the original design. We added it because we kept asking "but what about what comes back?" and could not find a satisfying answer anywhere else. That question became a stage.

We also learned that intent is not the same as text. A child asking "what is the fastest way to make someone fall asleep" is writing one thing and possibly meaning another. Building Stage 2 to read beneath the surface changed how we think about safety classification entirely. A keyword filter is a solved problem. Understanding what a child meant when they typed something is not.

On the technical side, we learned that real-time bidirectional state across a browser extension, a serverless backend, and a React dashboard is genuinely hard to get right. The system worked correctly in isolation far more often than it worked correctly in the actual sequence of events a child produces. Every edge case we found revealed something about the gap between designed behavior and real behavior. That gap is where the real engineering work lives.

We also learned to respect the separation between what AI decides and what a parent decides. As the system matured, we realized the audit trail needed to be a complete picture, not just what the parent chose, but what the AI independently assessed. The AI model's decision and the parent's decision are stored separately and neither ever overwrites the other, so the record always shows both the machine's judgment and the human's response to it. A parent reviewing their child's history six months later deserves to see the full story, not a version that has been simplified by whoever acted last.
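One way to keep the two judgments from overwriting each other is to store them in separate fields and only ever write the parent's field after the fact. The record layout below is an assumption for illustration, not the project's actual DynamoDB schema.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    sk: str
    ai_decision: str                       # what the pipeline decided on its own
    ai_risk_score: int
    parent_decision: Optional[str] = None  # filled in only when the parent acts


def apply_parent_decision(record: AuditRecord, decision: str) -> AuditRecord:
    """Return a copy with the parent's decision set; the AI fields are untouched."""
    if decision not in ("ALLOW", "BLOCK"):
        raise ValueError(f"unknown decision: {decision}")
    return replace(record, parent_decision=decision)
```

After an override, the record still shows the AI's original assessment alongside the parent's choice, so the history reads as two independent judgments.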

The biggest shift in thinking was this: we started building a content filter and ended up building a trust infrastructure. The caching, the audit trail, the parent override architecture, the separation of AI judgment from human judgment are not features. They are the answer to the question of what it actually means for a parent to be in control.

What's Next for ShadowGuard: ChildSafe AI

ShadowGuard's ChildSafe AI is currently a high-fidelity proof of concept, backed by a fully operational infrastructure. We are now executing against a strategic three-phase roadmap to bring this vision to scale.

Now — MVP Launch delivers the core architecture featured in this submission: a Chrome extension with a 3-stage agentic AI pipeline, 10 specialized Nova agents, real-time WebSocket alerts, and a fully integrated parent dashboard providing decision history and AI-generated insights. The infrastructure is operational; the foundational architectural hurdles are behind us.

Q3 2026 — Enhance focuses on broadening the platform: support for more AI applications beyond ChatGPT, Gemini, and Claude.ai, multimodal prompt support for image and voice inputs, multi-child integration so a single parent account can monitor multiple children, custom content rules that let parents define their own risk thresholds, and advanced analytics that surface patterns across a child's AI usage over time. We'd also prioritize graduating out of AWS SNS sandbox to enable SMS delivery to any phone number without manual verification, proper parent account authentication on the dashboard, and a native mobile app to replace the SMS link.

2027 — Enterprise takes the platform beyond the family unit: school district deployments, an enterprise API for institutional content policy enforcement, multi-language support, compliance tooling for COPPA (the Children's Online Privacy Protection Act of 1998) and similar regulations, and expansion beyond child safety into any context where AI usage requires oversight and accountability.

The market context makes the urgency clear: a $9.3B online parental control and family safety market by 2029, 500M+ children using AI globally, and 92% of parents saying they want more control over their child's AI interactions. ShadowGuard is the only product built specifically for that intersection.

The core architecture is solid. We have successfully resolved the system's core technical complexities: real-time state synchronization, bi-directional safety analysis, and parental overrides without compromising audit trail integrity. Everything else is product work.

Built With

- agentic-ai

- amazon-api-gateway

- amazon-bedrock

- amazon-dynamodb

- amazon-sns

- amazon-web-services

- appsync-graphql-api

- aws-appsync

- aws-iam

- aws-lambda

- chrome-apis

- computer-use

- crewai

- javascript

- litellm

- multimodal

- nova-2-lite

- nova-pro

- nova-pro-vision

- python

- react

- rest-api

- visual-analysis
