Building a Multi-Tenant Serverless Agentic OS and Control Plane on Vercel

The video shows a brief demo of interacting with the OS via Telegram, connecting third-party API authentications, and performing API operations via prompts.

Inspiration

I've always been inspired by operating systems and control planes: systems that don't just respond, but continuously observe, decide, and act. Traditional AI agents feel ephemeral: they respond to a prompt and disappear. I wanted something closer to a daemon process, like a PID running on a VM, but built entirely serverlessly, with native web-browser support for arbitrary UI and assistive browsing operations, plus automations for services and platforms that lack APIs.

In other words: replace “always-on servers” with “always-on state.”

Why "Agentic OS" (and not just an agent)?

Most agent demos are effectively chatbots with tools. They can call APIs, but they rarely have the properties we expect from an operating system:

- Scheduling: something has to wake up, check timers, and run background jobs.

- Isolation: one user’s workflow shouldn’t corrupt another’s.

- Determinism: multi-step automation must be repeatable and debuggable.

- Stateful evolution: the system must improve its internal model of “what’s going on” over time.

The question that drove this project:

Can we recreate a long-running, stateful agent using only stateless infrastructure and KV stores?

Traditional LLM interaction:

$$ Response = f(Prompt) $$

Operating systems evolve over time:

$$ S_{t+1} = f(S_t, E_t) $$

Where:

- $S_t$ = current workflow state

- $E_t$ = inbound event (message, cron tick, webhook)

- $f$ = deterministic transition function

Our architectural goal:

$$ \text{Persistent Agent} \approx \text{Stateless Compute} + \text{Durable State} $$
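A minimal TypeScript sketch of $S_{t+1} = f(S_t, E_t)$ under this goal, assuming hypothetical `AgentEvent` and `AgentState` types (not the project's actual types). The transition is a pure function, and each stateless invocation only loads $S_t$, applies $f$, and persists $S_{t+1}$; a `Map` stands in for the durable KV store.

```typescript
// Hypothetical event types corresponding to E_t: message, cron tick, webhook.
type AgentEvent =
  | { kind: "message"; text: string }
  | { kind: "cronTick"; at: number }
  | { kind: "webhook"; source: string; payload: unknown };

// Hypothetical workflow state S_t.
interface AgentState {
  history: string[];
  lastTick: number | null;
  pendingWebhooks: number;
}

const initialState: AgentState = { history: [], lastTick: null, pendingWebhooks: 0 };

// f: a pure, deterministic transition. No I/O, no clocks, no randomness,
// so replaying the same events from the same state yields the same result.
function transition(state: AgentState, event: AgentEvent): AgentState {
  switch (event.kind) {
    case "message":
      return { ...state, history: [...state.history, event.text] };
    case "cronTick":
      return { ...state, lastTick: event.at };
    case "webhook":
      return { ...state, pendingWebhooks: state.pendingWebhooks + 1 };
  }
}

// Stand-in for durable storage (Redis/KV in the real system).
const durable = new Map<string, string>();

// Stateless compute: load S_t, apply f, persist S_{t+1}, return it.
function handle(key: string, event: AgentEvent): AgentState {
  const raw = durable.get(key);
  const current: AgentState = raw ? JSON.parse(raw) : initialState;
  const next = transition(current, event);
  durable.set(key, JSON.stringify(next));
  return next;
}
```

Because `transition` has no side effects, replaying the event log with `events.reduce(transition, initialState)` reproduces the stored state, which is the property durable workflows rely on.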

What I Built

I built a serverless Agentic Operating System deployed on Vercel that:

- Is multi-tenant (multiple users supported, with built-in isolation via namespaces)

- Uses Vercel Durable Workflows (WDK) for resumable, replayable execution

- Uses Vercel Cron to simulate persistent background processes

- Runs on Next.js Edge Functions for globally distributed compute

- Connects to apps via Composio OAuth toolkits (900+ integrations available)

- Executes remote infrastructure actions and runs external programs and code via SSH/bash

- Persists context, memories and chat/session state in a distributed Redis KV store

- Communicates with users through a Telegram bot, SMS or WhatsApp

- Uses OpenAI API via Vercel AI SDK for inference (gpt-5.2)

- Native multimodal support

- Integrated Web Search Engine and Native Web-Browsing Support

- Streams AI responses progressively using OpenAI Chat Streaming

The system behaves like a long-running control plane, but without servers. It also gets the security benefits of multi-tenancy via namespace isolation, where each tenant is identified by a Telegram ID, SMS, or WhatsApp identity plus a secret. Every set of user credentials (including search/browsing, SSH, the 900+ Composio API integrations, and code sandboxes) is isolated per tenant, even though all OAuth connections and chat histories share the same databases and underlying edge infrastructure. The control plane is connected to real edge infrastructure and virtual machines via SSH-over-HTTPS.
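The per-tenant isolation can be sketched as a key-prefixing wrapper, assuming a tenant is identified by a channel (`telegram`, `sms`, `whatsapp`) plus a channel-specific user ID. `tenantNamespace` and `TenantStore` are hypothetical names, and the secret-based authentication step is out of scope here. The idea is that tenant code never handles raw keys, so one tenant cannot address another's credentials or history even though all tenants share one store.

```typescript
type Channel = "telegram" | "sms" | "whatsapp";

// Hypothetical namespace format: one prefix per tenant identity.
function tenantNamespace(channel: Channel, userId: string): string {
  // Reject the separator so a crafted ID cannot escape its namespace.
  if (userId.includes(":")) throw new Error("invalid user id");
  return `tenant:${channel}:${userId}`;
}

// Shared backing store used by all tenants (a Map stands in for Redis).
const shared = new Map<string, string>();

// Every read and write is forced through the tenant's prefix.
class TenantStore {
  private readonly ns: string;

  constructor(ns: string) {
    this.ns = ns;
  }

  private key(k: string): string {
    return `${this.ns}:${k}`;
  }

  get(k: string): string | undefined {
    return shared.get(this.key(k));
  }

  set(k: string, v: string): void {
    shared.set(this.key(k), v);
  }
}
```

Two tenants writing the same logical key (for example `oauth:github`) land on different physical keys, so a lookup by one tenant never returns the other's token.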


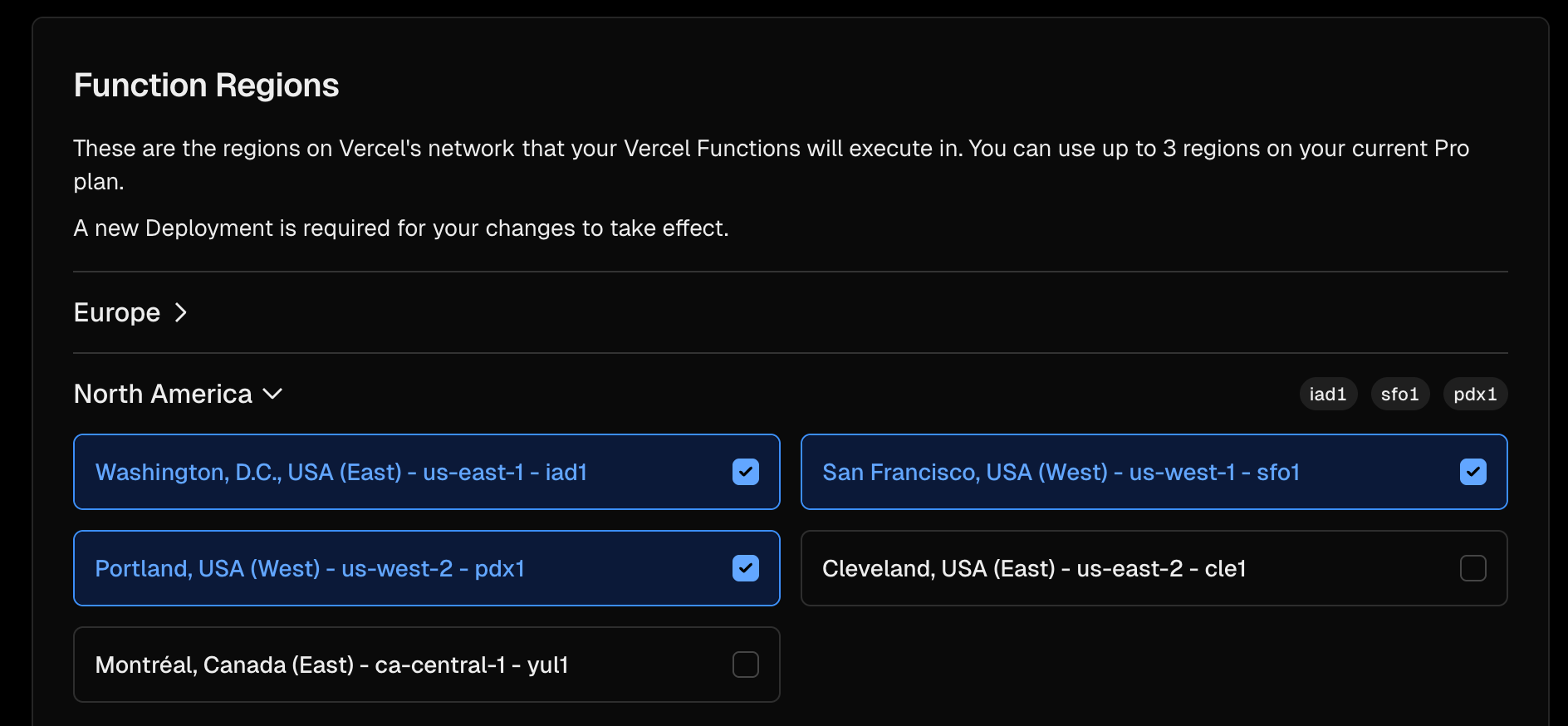

Vercel Edge Regions Configuration (Screenshot)

Below is the multi-region Edge deployment configuration in Vercel:

We created a series of Serverless Functions deployed at the edge under our /api directory.

State was managed by Vercel through Vercel's WDK, which natively uses Vercel's managed workflow runtime (KV stores, pub/sub, queues, Kafka-style streams, etc.).

Let the set of regions be:

$$ R = \{r_1, r_2, r_3\} $$

Requests are routed to the closest region, and KV state is read from the replica in that region:

$$ r^* = \arg\min_{r \in R} d(user, r) $$

where $d(user, r)$ is the network latency between the user and region $r$.

Compute is globally distributed.

State remains logically centralized and durable.
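The $\arg\min$ routing rule can be sketched as below, assuming a table of measured latencies per region. The region IDs are Vercel region codes used only as examples and the latency numbers are made up; in practice Vercel performs this routing itself, so the code only illustrates the formula.

```typescript
// d(user, r): observed round-trip latency in ms from one user to each region.
type LatencyTable = Record<string, number>;

// r* = argmin_{r in R} d(user, r)
function closestRegion(regions: string[], latency: LatencyTable): string {
  if (regions.length === 0) throw new Error("no regions configured");
  let best = regions[0];
  for (const r of regions) {
    // Regions without a measurement are treated as unreachable (Infinity).
    const d = latency[r] ?? Infinity;
    if (d < (latency[best] ?? Infinity)) best = r;
  }
  return best;
}
```

With latencies `{ iad1: 95, fra1: 18, hnd1: 240 }`, `closestRegion(["iad1", "fra1", "hnd1"], ...)` returns `"fra1"`.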

Durable Workflows as the "Kernel" and runtime environment

Each user interaction becomes a state machine transition, not just a function call:

$$ S_{t+1} = f(S_t, E_t) $$

Where:

- $S_t$ = current workflow state

- $E_t$ = inbound event

- $f$ = deterministic transition function

Deterministic orchestration:

$$ Workflow(S_t, E_t) \rightarrow S_{t+1} $$

Even though the LLM itself is stochastic:

$$ Output_{LLM} \sim \mathcal{P}(\text{Response} \mid \text{Prompt}) $$
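How a workflow stays deterministic around a stochastic model can be sketched as a step journal: the first time a step runs, its result is recorded; on replay, the recorded result is returned instead of calling the model again. This is a simplified illustration of the general durable-execution technique, not the WDK's actual API; `Journal`, `runStep`, and `replyWorkflow` are hypothetical.

```typescript
// Journal of completed step results keyed by step ID. In a real durable
// runtime this lives in durable storage, not in memory.
type Journal = Map<string, unknown>;

async function runStep<T>(journal: Journal, stepId: string, fn: () => Promise<T>): Promise<T> {
  if (journal.has(stepId)) {
    // Replay: return the recorded output; the side effect does not run again.
    return journal.get(stepId) as T;
  }
  const result = await fn();
  journal.set(stepId, result);
  return result;
}

// Control flow depends only on journaled outputs, so replaying against the
// same journal takes the same path even if the model is non-deterministic.
async function replyWorkflow(
  journal: Journal,
  prompt: string,
  llm: (p: string) => Promise<string>,
): Promise<string> {
  const draft = await runStep(journal, "draft", () => llm(prompt));
  return runStep(journal, "finalize", async () => draft.trim());
}
```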

Durable Workflows provide:

- Replayability

- Idempotency

- Durable execution

- Step-level retries

- Failure isolation

- Effectively unlimited runtime length (a single traditional serverless invocation is capped at a fixed maximum duration, for example 800 seconds)

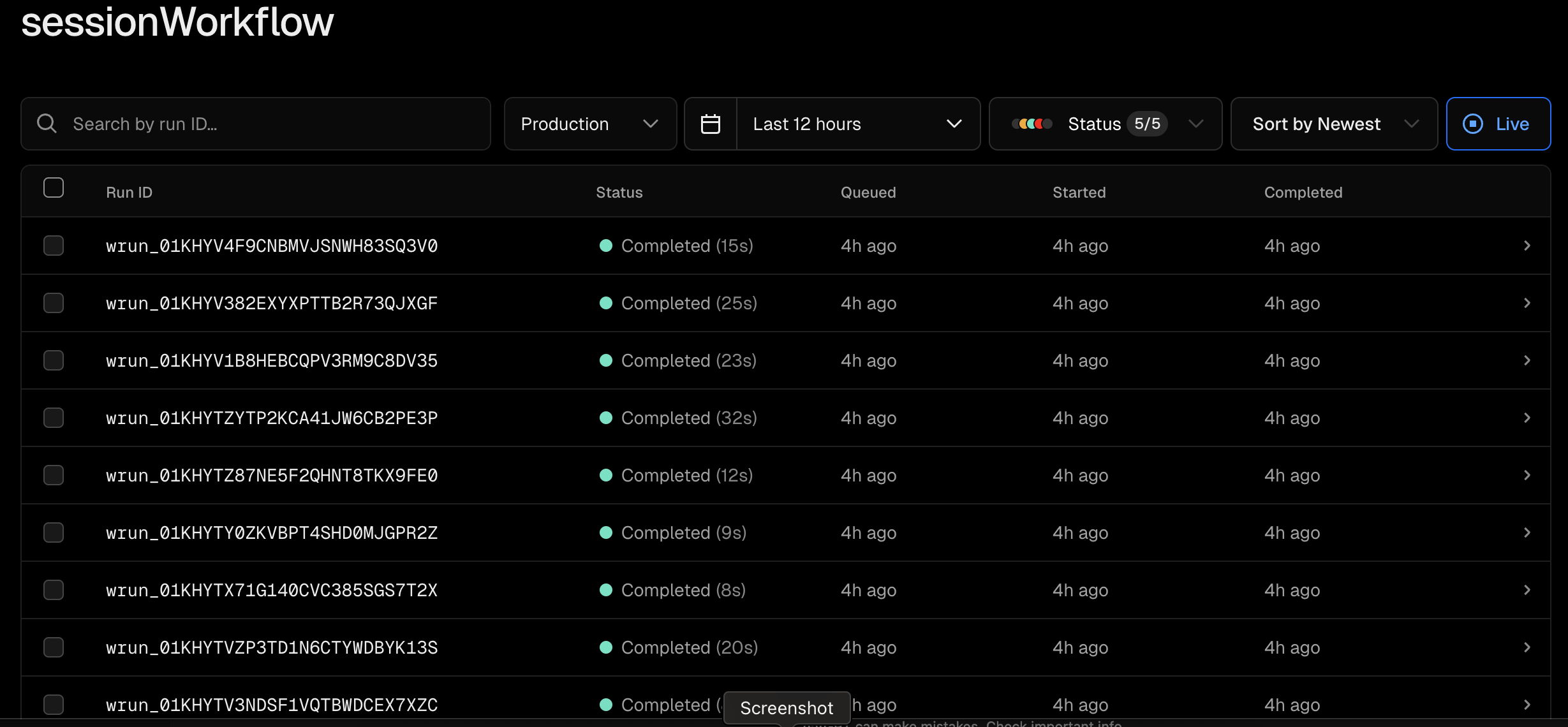

Vercel Workflow Runs (Screenshot)

Below is a view of sessionWorkflow executions in Vercel Durable Workflows:

Each workflow run:

$$ Run = \{Step_1, Step_2, \ldots, Step_n\} $$

Each step transitions state:

$$ Step_i : S_i \rightarrow S_{i+1} $$

With retry guarantees:

$$ Failure(Step_i) \Rightarrow Retry(Step_i, S_i) $$

That is, a failed step is re-run from the same input state $S_i$.

This effectively acts as a serverless kernel scheduler.
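Step-level retries can be sketched as a wrapper that re-runs a failed step from the same input state $S_i$ with exponential backoff. The attempt limit and delays are hypothetical defaults, not WDK settings; retrying like this is only safe when each step is idempotent.

```typescript
const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Retry(Step_i, S_i): re-run the step from the same input state until it
// succeeds or the attempt budget is exhausted, then rethrow the last error.
async function withRetry<S>(
  step: (input: S) => Promise<S>,
  input: S,
  maxAttempts = 3,
  baseDelayMs = 100,
): Promise<S> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await step(input);
    } catch (err) {
      lastError = err;
      if (attempt < maxAttempts) {
        // Exponential backoff: baseDelayMs, 2x, 4x, ...
        await sleep(baseDelayMs * 2 ** (attempt - 1));
      }
    }
  }
  throw lastError;
}
```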

Simulating a Long-Running Process with Cron

In a traditional VM, an agent would run as a daemon with a PID. In serverless, there is no PID.

We simulate persistence using Vercel Cron:

*/1 * * * * → wake up agent

Each tick:

- Loads durable state

- Evaluates pending tasks

- Advances the state machine

- Schedules next actions

Mathematically:

$$ Agent(t + \Delta t) = Evaluate(State_t) $$

And:

$$ \forall t, \; \exists \Delta t \in (0, 60\,\text{s}] : \text{a cron tick fires at } t + \Delta t $$

Meaning the agent never remains dormant longer than one interval.
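One cron tick can be sketched as a function that selects due tasks, runs them, and reschedules recurring ones. The `Task` shape, the per-tick cap, and the in-memory task list are hypothetical. The cap and the strictly-future rescheduling are one simple guard against the runaway cron loops listed under Challenges: a tick can never do unbounded work or re-trigger a task within the same tick.

```typescript
interface Task {
  id: string;
  dueAt: number; // epoch ms when the task should next run
  everyMs?: number; // set only for recurring tasks
}

// Hypothetical per-tick budget so one tick cannot run unbounded work.
const MAX_TASKS_PER_TICK = 10;

// One cron tick: Agent(t + Δt) = Evaluate(State_t). Returns the new task list.
function tick(tasks: Task[], now: number, run: (task: Task) => void): Task[] {
  const due = tasks
    .filter((t) => t.dueAt <= now)
    .sort((a, b) => a.dueAt - b.dueAt)
    .slice(0, MAX_TASKS_PER_TICK);
  const dueIds = new Set(due.map((t) => t.id));
  // Tasks not run this tick (not yet due, or over budget) carry over unchanged.
  const next: Task[] = tasks.filter((t) => !dueIds.has(t.id));

  for (const task of due) {
    run(task);
    if (task.everyMs !== undefined) {
      // Reschedule strictly in the future so it cannot fire again this tick.
      next.push({ ...task, dueAt: now + task.everyMs });
    }
  }
  return next;
}
```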

Telegram as the User Interface

We use the Telegram Bot API as our primary interaction layer.

When a message arrives:

- Webhook normalizes inbound event

- Workflow transitions state

- We immediately call messages.setTyping (for bots, the Bot API equivalent is sendChatAction):

https://core.telegram.org/method/messages.setTyping

This typing indicator signals active processing.
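In the Bot API the indicator is set with `sendChatAction` and `action: "typing"`. Telegram shows the status for 5 seconds or less (it is cleared when the bot's next message arrives), so long-running steps need to refresh it. A minimal sketch, assuming an injected `fetch`-compatible function and a hypothetical 4-second refresh interval; `startTyping` is an illustrative name.

```typescript
// Minimal fetch shape needed here; pass globalThis.fetch in production.
type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<unknown>;

// Starts a typing indicator and keeps it alive until stop() is called.
function startTyping(
  botToken: string,
  chatId: number,
  fetchImpl: FetchLike,
  refreshMs = 4000, // hypothetical: refresh before the ~5 s expiry
): () => void {
  const send = () =>
    fetchImpl(`https://api.telegram.org/bot${botToken}/sendChatAction`, {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ chat_id: chatId, action: "typing" }),
    }).catch(() => {
      // A missed typing update is cosmetic; never fail the workflow over it.
    });

  void send();
  const timer = setInterval(() => void send(), refreshMs);
  return () => clearInterval(timer);
}
```

The workflow calls `startTyping(...)` when the webhook arrives and invokes the returned function once the first streamed edit is sent.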

Streaming OpenAI Responses

Instead of waiting for a full completion, we stream responses using OpenAI's chat streaming API.

Flow:

- Send a placeholder message: "…"

- Begin streaming tokens

- Progressively edit the original Telegram message

Example:

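```typescript
// `stream` (async iterable of tokens) and `editTelegramMessage` are assumed to exist
let buffer = ""
for await (const token of stream) {
  buffer += token // accumulate the response so far
  await editTelegramMessage(buffer) // overwrite the placeholder with it
}
```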

Incremental response accumulation, where $\Vert$ denotes string concatenation:

$$ Response_k = token_1 \Vert token_2 \Vert \cdots \Vert token_k $$

Latency improvement:

$$ T_{\text{first token}} \ll T_{\text{complete}} $$
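Editing the message on every token quickly runs into Telegram's edit rate limits (one of the challenges listed below). A common remedy is to throttle edits and always flush the final text. A minimal sketch, assuming the token stream is an `AsyncIterable<string>` and `edit` wraps the Bot API's `editMessageText`; the 1-second interval and the name `streamToMessage` are hypothetical.

```typescript
// Streams tokens into one Telegram message, editing at most once per
// minIntervalMs and always sending the complete text at the end.
async function streamToMessage(
  stream: AsyncIterable<string>,
  edit: (text: string) => Promise<void>,
  minIntervalMs = 1000,
  now: () => number = Date.now,
): Promise<string> {
  let buffer = "";
  let lastSent = "";
  let lastEditAt = -Infinity;

  for await (const token of stream) {
    buffer += token; // Response_k grows by one token
    if (now() - lastEditAt >= minIntervalMs) {
      await edit(buffer);
      lastSent = buffer;
      lastEditAt = now();
    }
  }
  // Final flush, skipped when the last edit already had everything
  // (Telegram rejects edits that leave the text unchanged).
  if (buffer !== lastSent) await edit(buffer);
  return buffer;
}
```

With a clock that never advances, tokens `"Hel"`, `"lo"`, `", "`, `"world"` produce exactly two edits: `"Hel"` and `"Hello, world"`.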

Stateless Compute, Durable Memory

Vercel Edge Compute is stateless:

$$ Memory \neq RAM $$

Instead:

$$ Memory = Durable(State) $$

Durable state lives in workflows and KV storage.

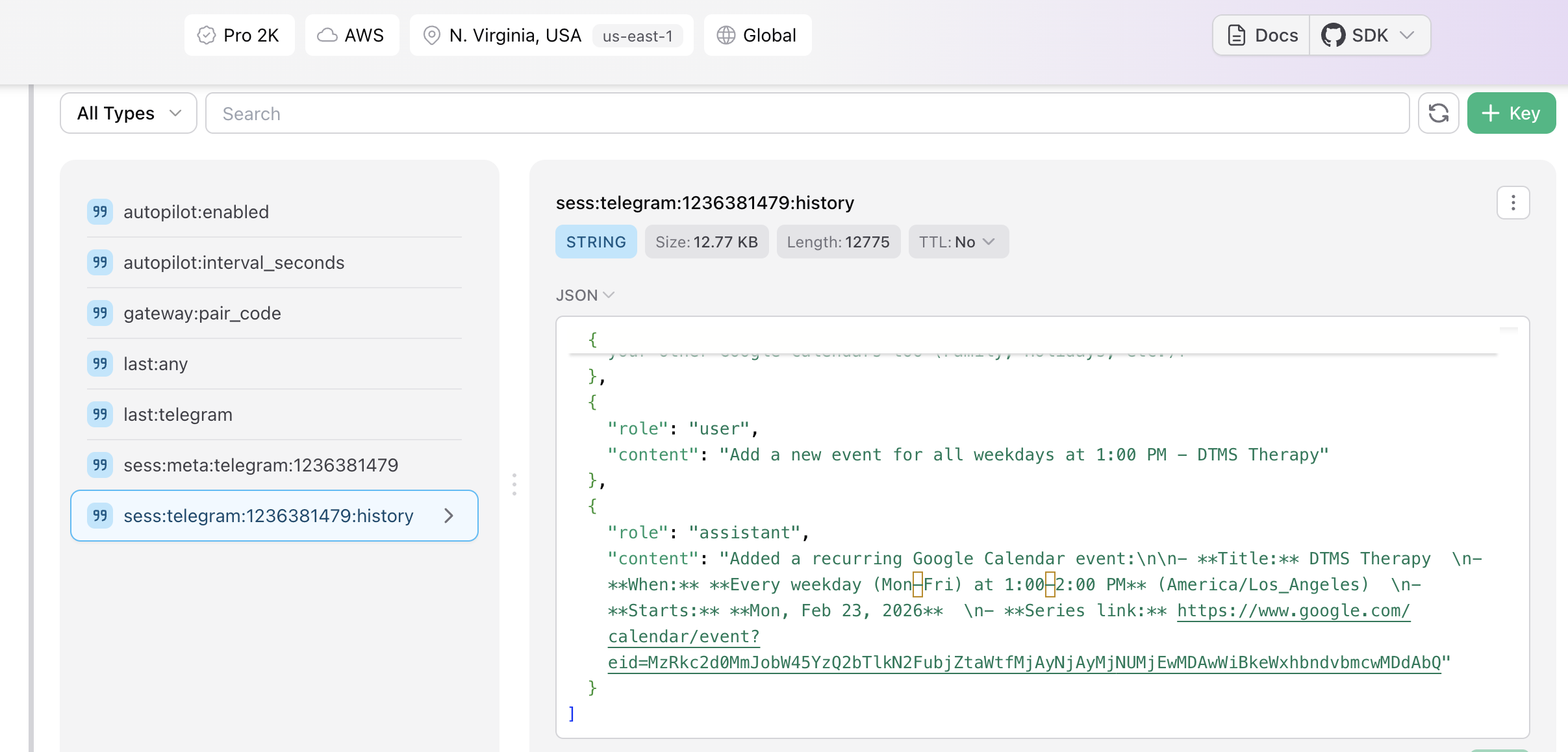

KV Store Chat State (Screenshot SC5)

Below is the KV store session state structure:

Session keys include:

- sess:history:telegram:<id>

- sess:meta:telegram:<id>

- autopilot:enabled

Conversation modeled as:

$$ History_u = \{m_1, m_2, \ldots, m_n\} $$

Bounded by:

$$ |History_u| \le N_{max} $$

This keeps memory growth bounded and sessions replayable.
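The bound $|History_u| \le N_{max}$ maps naturally onto Redis lists: append with `RPUSH`, then `LTRIM key -N_max -1` so only the newest $N_{max}$ messages survive. The sketch models those two commands over an in-memory map so it runs standalone; the key format follows the session keys above, and `N_MAX = 50` is a hypothetical value.

```typescript
// Hypothetical cap on stored messages per user (N_max).
const N_MAX = 50;

// In-memory stand-in for Redis lists.
const lists = new Map<string, string[]>();

// Models RPUSH: append and return the new length.
function rpush(key: string, value: string): number {
  const list = lists.get(key) ?? [];
  list.push(value);
  lists.set(key, list);
  return list.length;
}

// Models LTRIM key -n -1: keep only the last n items.
function keepLast(key: string, n: number): void {
  const list = lists.get(key) ?? [];
  lists.set(key, list.slice(-n));
}

const historyKey = (telegramId: string) => `sess:history:telegram:${telegramId}`;

// Append one message and enforce |History_u| <= N_MAX.
function appendMessage(telegramId: string, message: { role: string; content: string }): void {
  const key = historyKey(telegramId);
  rpush(key, JSON.stringify(message));
  keepLast(key, N_MAX);
}
```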

Composio + SSH: Acting on the World

Through Composio, the agent connects to Slack, GitHub, Google Workspace, Notion, and more.

These integrations act like system calls:

$$ Syscall(Intent) \rightarrow ExternalAction $$

Through SSH/bash, it can:

- Deploy services

- Restart infrastructure

- Inspect logs

- Execute scripts

Execution cycle:

$$ Intent \rightarrow Plan \rightarrow Action \rightarrow Verification $$

Security model:

$$ Access = OAuth(Token) \land Scope(Authorization) $$
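The syscall analogy and the access rule can be sketched as a dispatch table guarded by a per-tenant check: a tool call proceeds only if the tenant has a connected account for the toolkit and its granted scopes cover what the tool needs. The tool name, scope string, and `Connection` shape are hypothetical and are not Composio's API.

```typescript
interface Connection {
  accessToken: string;
  scopes: Set<string>;
}

interface Tool {
  toolkit: string; // e.g. "github"
  requiredScopes: string[];
  run: (token: string, args: Record<string, unknown>) => Promise<string>;
}

// Hypothetical syscall table: intent name -> tool.
const syscalls: Record<string, Tool> = {
  "github.list_repos": {
    toolkit: "github",
    requiredScopes: ["repo:read"],
    run: async () => "listed repositories",
  },
};

// Syscall(Intent) -> ExternalAction, gated by OAuth(Token) AND Scope(Authorization).
async function syscall(
  connections: Map<string, Connection>, // this tenant's connections only
  intent: string,
  args: Record<string, unknown> = {},
): Promise<string> {
  const tool = syscalls[intent];
  if (!tool) throw new Error(`unknown syscall: ${intent}`);
  const conn = connections.get(tool.toolkit);
  if (!conn) throw new Error(`no OAuth connection for ${tool.toolkit}`);
  const missing = tool.requiredScopes.filter((s) => !conn.scopes.has(s));
  if (missing.length > 0) throw new Error(`missing scopes: ${missing.join(", ")}`);
  return tool.run(conn.accessToken, args);
}
```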

What We Learned

- Durable state matters more than raw compute.

- UX (typing indicators + streaming) dramatically improves perceived intelligence.

- Serverless can emulate daemons using cron + workflows.

- Deterministic orchestration prevents chaos in multi-step systems.

- Global edge compute + durable state is a powerful architectural pattern.

Challenges We Faced

- Simulating continuity without real processes

- Handling streaming + Telegram edit rate limits

- Keeping workflows deterministic around probabilistic LLM outputs

- Preventing runaway cron loops

- Managing OAuth refresh lifecycles

- Controlling unbounded chat history growth

The Bigger Vision

This project proves that you don't need servers to build an operating system.

- Durable Workflows

- Cron Scheduling

- Streaming AI Inference

- Persistent KV State

- OAuth Integrations

With durable workflows, cron scheduling, streaming AI, and external tool orchestration, I built a programmable AI control plane that behaves like a daemon — entirely serverless and edge-native.

The future of AI systems will not be monoliths.

They will be state machines on the edge.

Built With

- ai-sdk

- amazon-web-services

- bash

- cloud

- composio

- cron

- durableworkflows

- edge

- edgecompute

- javascript

- nextjs

- node.js

- oauth

- openai

- os

- redis

- restapi

- serverless

- sms

- ssh

- streams

- telegram

- typescript

- upstash

- vercel

- webhooks
