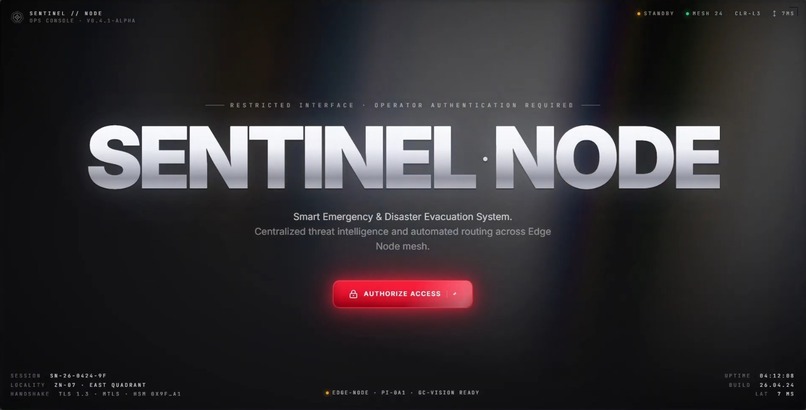

The main login portal where authorized operators access the Sentinel Node emergency evacuation system.

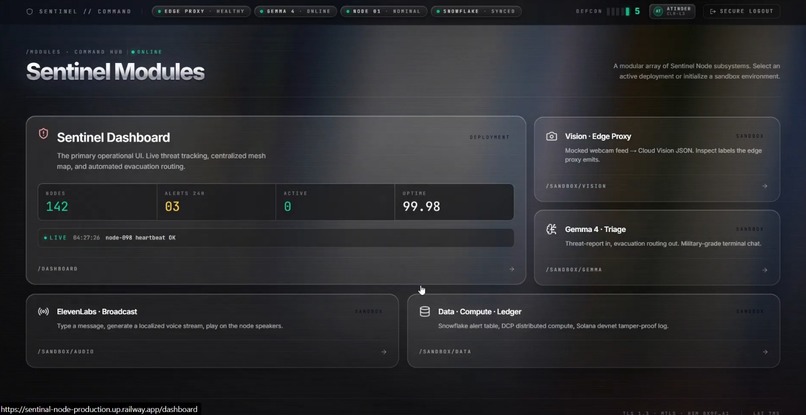

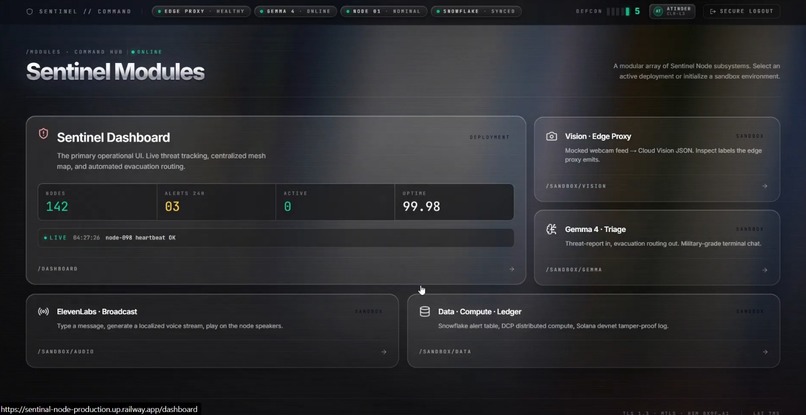

The Command Hub interface displaying live system status and providing access to the primary dashboard, AI sandboxes, and data ledgers.

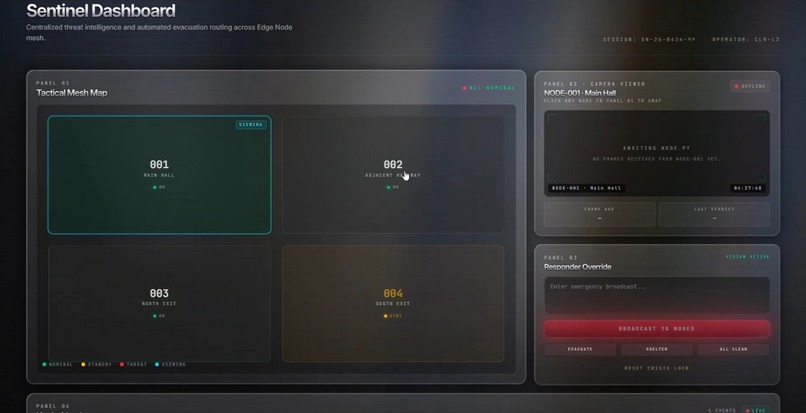

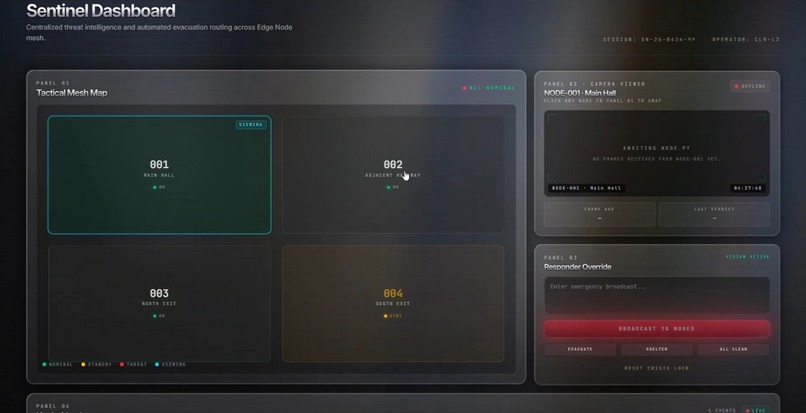

The primary Sentinel Dashboard displaying the live tactical mesh map, camera feeds, and manual broadcast controls for first responders.

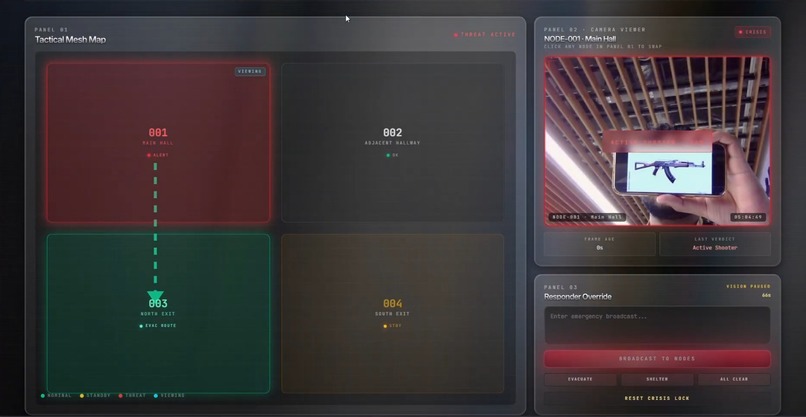

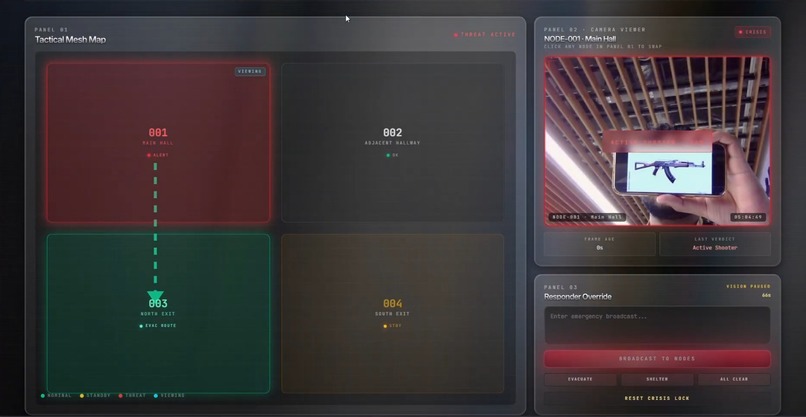

The dashboard in Crisis Mode identifying an active threat via the live feed and automatically mapping a safe evacuation route.

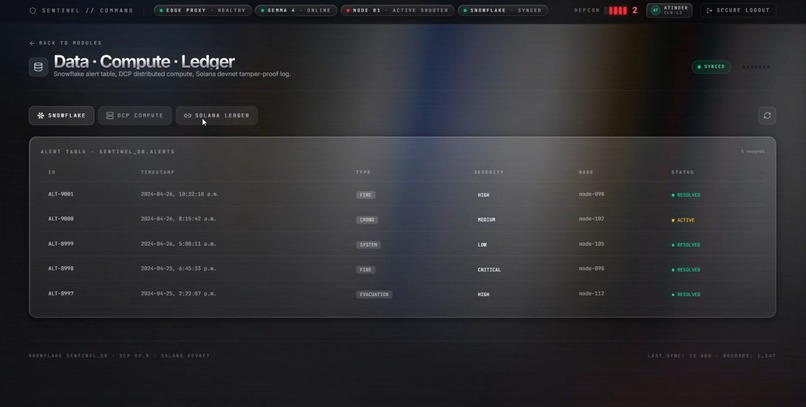

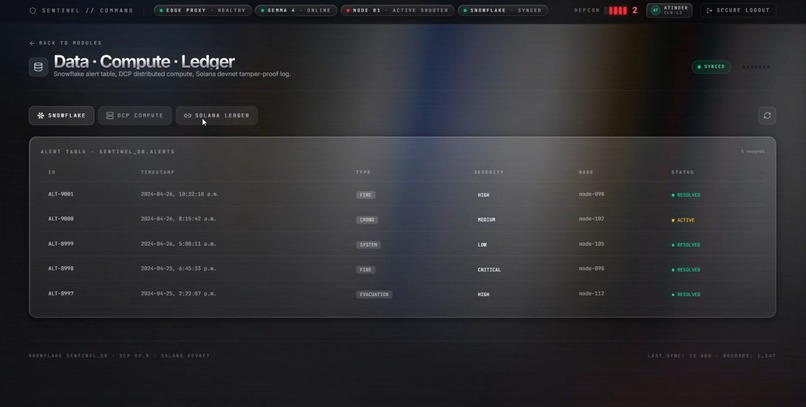

A real-time incident log providing a tamper-proof audit trail of all threat alerts, AI instructions, and operator actions.

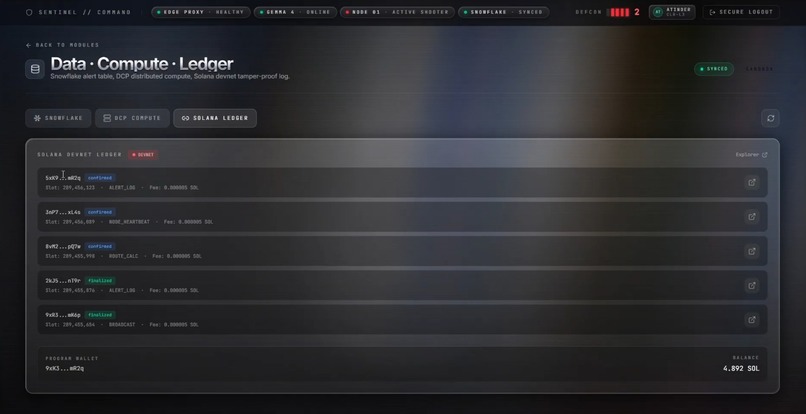

The Data Ledger module integrating Snowflake alert tables, DCP distributed compute, and a Solana tamper-proof log for auditing.

The Distributive Compute Protocol (DCP) dashboard, tracking real-time task offloading across edge node workers to maintain low latency.

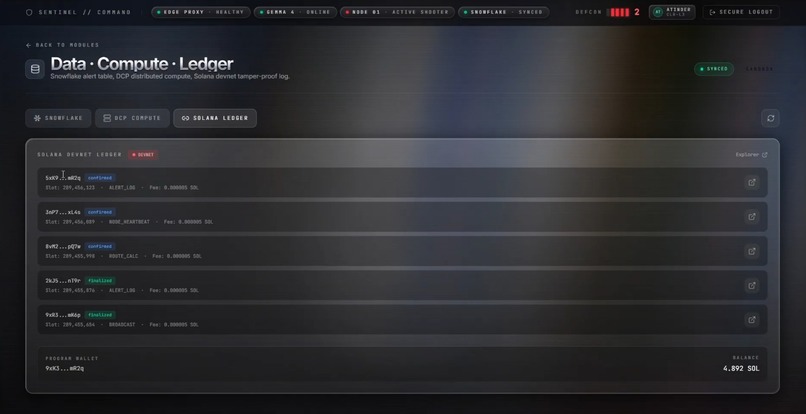

Live Solana blockchain integration, providing a decentralized, tamper-proof ledger for all emergency alerts and routing calculations.

🚨 Inspiration

Standard security cameras just passively record tragedies after the fact 📹. Meanwhile, traditional fire alarms blare panic-inducing sirens, often routing people directly into danger 🚪⚠️. We realized building security needed a paradigm shift from passive observation to active triage. Enter Sentinel Node 💡.

🧠 What it does

Sentinel Node turns standard CCTV cameras into an intelligent, decentralized mesh network. It actively detects threats, logs them immutably, and guides civilians to safety in real time 🏃‍♂️🛟.

Detects & Flags: Identifies hazards and instantly alerts First Responders via a tactical dashboard 🚓.

Triages & Guides: Calculates safe evacuation routes away from the hazard and broadcasts localized audio instructions exclusively to safe zones 🎙️.
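As a rough illustration of the routing step, here is a minimal sketch that treats the building as a graph of zones and runs a breadth-first search to the nearest exit while refusing to enter any hazardous zone. The zone names, the `ZoneGraph` type, and `findSafeRoute` are hypothetical and chosen for the example; the write-up does not say which routing algorithm the project actually uses.

```typescript
// Hypothetical floor-plan graph: zone names and edges are illustrative only.
type ZoneGraph = Record<string, string[]>;

/**
 * Breadth-first search from `start` to the nearest exit, never entering a
 * hazardous zone. Returns the zone sequence, or null if no safe path exists.
 */
function findSafeRoute(
  graph: ZoneGraph,
  start: string,
  exits: Set<string>,
  hazards: Set<string>,
): string[] | null {
  // Assumption: people already inside the hazard zone need a separate response.
  if (hazards.has(start)) return null;

  const previous = new Map<string, string | null>([[start, null]]);
  const queue: string[] = [start];

  while (queue.length > 0) {
    const zone = queue.shift() as string;
    if (exits.has(zone)) {
      // Walk the predecessor chain back to the start to rebuild the path.
      const path: string[] = [];
      for (let z: string | null = zone; z !== null; z = previous.get(z) ?? null) {
        path.unshift(z);
      }
      return path;
    }
    for (const next of graph[zone] ?? []) {
      if (!hazards.has(next) && !previous.has(next)) {
        previous.set(next, zone);
        queue.push(next);
      }
    }
  }
  return null;
}

const floor: ZoneGraph = {
  lobby: ["hallA", "hallB"],
  hallA: ["lobby", "exitNorth"],
  hallB: ["lobby", "stairs"],
  stairs: ["hallB", "exitSouth"],
  exitNorth: ["hallA"],
  exitSouth: ["stairs"],
};

// With a fire flagged in hallA, the route avoids the closer north exit.
const route = findSafeRoute(
  floor,
  "lobby",
  new Set(["exitNorth", "exitSouth"]),
  new Set(["hallA"]),
);
console.log(route); // ["lobby", "hallB", "stairs", "exitSouth"]
```

Because the search never expands into a hazard zone, every zone on the returned path is also a safe target for the audio broadcast.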

🛠️ How we built it

To prove the architecture end-to-end, we built a physical mesh network simulation using two laptops 💻💻.

Frontend: Custom Next.js & Tailwind dark-mode command center 🎨.

Backend: DigitalOcean droplet for high-availability orchestration ☁️⚡.

Detection: Node 1 captures frames and POSTs them to Google Cloud Vision API 🔍🔥.

Triage & Audio: Threat data is passed to Gemma 4, which generates concise routing instructions; ElevenLabs then synthesizes them into hyper-realistic audio 🔊.

Compute: Offloaded heavy processing across our edge nodes' browsers using Distributive Compute Protocol (DCP) ⚙️.

Audit Trail: Every AI decision is permanently logged in Snowflake 🧊📊.
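The detection step above can be sketched as a single REST call. The example below builds a request body for the Cloud Vision `images:annotate` endpoint and scans the returned labels for a threat. Using label detection, the `THREAT_LABELS` set, the `MIN_SCORE` cutoff, and all function names are assumptions made for illustration; the write-up does not specify which Vision feature or thresholds the project relies on. It also assumes a runtime with a global `fetch` (a browser or Node 18+).

```typescript
// Request body shape for a label-detection call to `images:annotate`.
interface AnnotateRequestBody {
  requests: Array<{
    image: { content: string }; // base64-encoded frame bytes
    features: Array<{ type: "LABEL_DETECTION"; maxResults: number }>;
  }>;
}

interface LabelAnnotation {
  description: string;
  score: number;
}

interface AnnotateResponseBody {
  responses: Array<{ labelAnnotations?: LabelAnnotation[] }>;
}

// Assumption: the labels treated as threats and the cutoff are illustrative.
const THREAT_LABELS = new Set(["fire", "flame", "smoke"]);
const MIN_SCORE = 0.7;

function buildLabelRequest(frameBase64: string, maxResults = 10): AnnotateRequestBody {
  return {
    requests: [
      {
        image: { content: frameBase64 },
        features: [{ type: "LABEL_DETECTION", maxResults }],
      },
    ],
  };
}

// Returns the highest-scoring threat label, or null if none clears the cutoff.
function findThreat(response: AnnotateResponseBody): LabelAnnotation | null {
  const labels = response.responses[0]?.labelAnnotations ?? [];
  const hits = labels
    .filter((l) => THREAT_LABELS.has(l.description.toLowerCase()) && l.score >= MIN_SCORE)
    .sort((a, b) => b.score - a.score);
  return hits[0] ?? null;
}

// POSTs one captured frame and reports whether a threat label was found.
async function detectThreat(frameBase64: string, apiKey: string): Promise<LabelAnnotation | null> {
  const url = `https://vision.googleapis.com/v1/images:annotate?key=${encodeURIComponent(apiKey)}`;
  const res = await fetch(url, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildLabelRequest(frameBase64)),
  });
  if (!res.ok) throw new Error(`Vision API request failed: ${res.status}`);
  return findThreat((await res.json()) as AnnotateResponseBody);
}
```

Keeping `buildLabelRequest` and `findThreat` as pure functions means the threat logic can be checked without network access or an API key.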

⚠️ Challenges we ran into

Our original design used a Raspberry Pi 4 as the edge node 🤖, but aggressive client isolation on the hackathon Wi-Fi blocked our SSH sessions and data transfers 🌐. Instead of letting that kill the project, we pivoted quickly 🔄. We abstracted the hardware into a two-laptop mesh, which let us prove the API orchestration without firewall blockers 🚀.

🏆 Accomplishments that we're proud of

Chaining vision, LLM, audio, and database services usually adds significant latency ⏳. By centralizing logic on DigitalOcean and decoupling the API routes, we achieved an end-to-end response time of only a few seconds ⚡. The moment Laptop 1 sees a fire, Laptop 2 is verbally telling people where to go 🗣️➡️.
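One way to picture the decoupling is a handler whose stages are injected as plain async functions, with the audit write kept off the critical path so it never delays the audio. This is a sketch under assumptions, not the project's code: `Threat`, `Stages`, `AuditEntry`, and `handleThreat` are hypothetical names, and the stubs stand in for the real Gemma, ElevenLabs, and Snowflake calls.

```typescript
interface Threat {
  label: string;
  zone: string;
}

interface AuditEntry {
  threat: Threat;
  instruction: string;
  elapsedMs: number;
}

// Each stage is injected, so a real API client and a test stub are interchangeable.
interface Stages {
  triage: (threat: Threat) => Promise<string>; // e.g. an LLM call
  synthesize: (instruction: string) => Promise<Uint8Array>; // e.g. a text-to-speech call
  log: (entry: AuditEntry) => Promise<void>; // e.g. an insert into an alerts table
}

async function handleThreat(threat: Threat, stages: Stages) {
  const startedAt = Date.now();
  const instruction = await stages.triage(threat);
  const audio = await stages.synthesize(instruction);
  const elapsedMs = Date.now() - startedAt;

  // Fire-and-forget: a slow or failing audit write must not hold up the broadcast.
  stages
    .log({ threat, instruction, elapsedMs })
    .catch((err) => console.error("audit log failed", err));

  return { instruction, audio, elapsedMs };
}

// Stub stages for local experimentation; none of these call a real service.
const stubStages: Stages = {
  triage: async (t) => `Avoid ${t.zone}. Use the nearest marked exit.`,
  synthesize: async (text) => new TextEncoder().encode(text), // stand-in for audio bytes
  log: async (entry) => {
    console.log("audit", entry.threat.label, entry.elapsedMs);
  },
};

handleThreat({ label: "fire", zone: "hallA" }, stubStages).then((result) => {
  console.log(result.instruction, `${result.elapsedMs} ms`);
});
```

Measuring `elapsedMs` inside the handler gives a per-incident latency figure that can be stored alongside the audit entry.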

📚 What we learned

We learned a massive lesson in hackathon survival: abstract early and pivot fast. We also gained deep experience orchestrating multiple AI models into a cohesive, event-driven pipeline rather than just building a basic API wrapper 🤝⚙️.

🚀 What's next

Next, we plan to deploy the edge-node software onto actual IoT devices (such as Raspberry Pis) on a dedicated intranet 🌐. We also plan to add WebSockets for bi-directional communication 🔄 and real-time object tracking that dynamically updates evacuation routes when a threat is moving 🎯.

Built With

- auth0

- dcp

- digitalocean

- distributive-compute-protocol

- elevenlabs

- gemma-4

- google-cloud-vision

- next.js

- react

- snowflake

- tailwind-css

- typescript

- zustand
