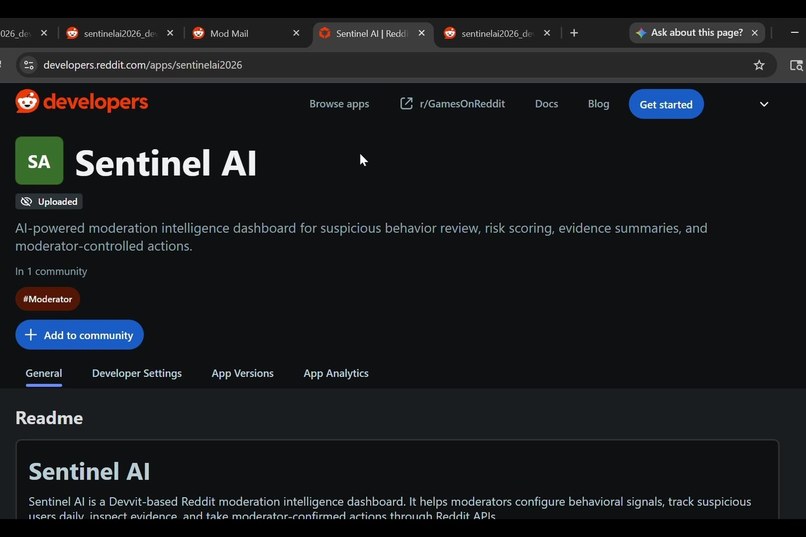

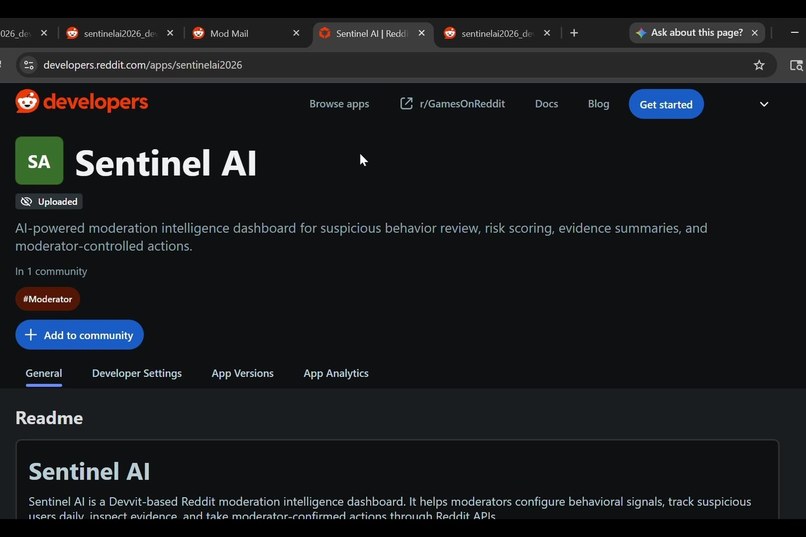

Devvit App Directory listing for Sentinel AI, the installable Reddit moderation intelligence dashboard.

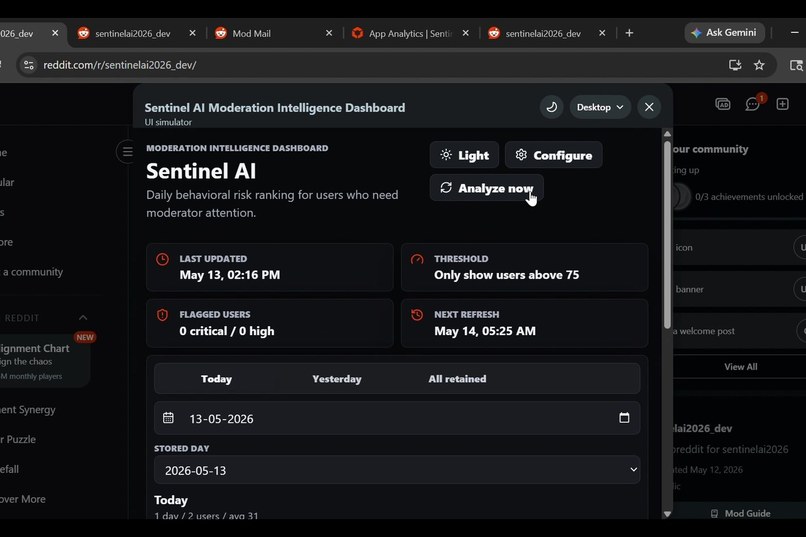

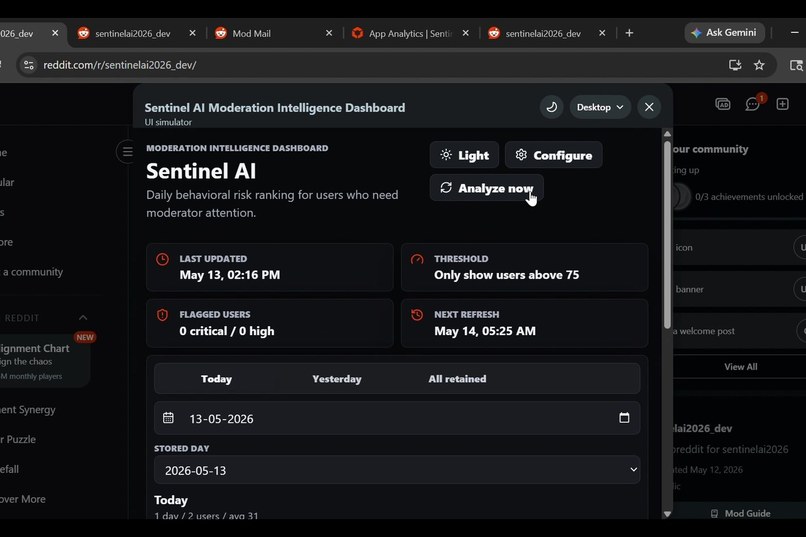

Dashboard with dark mode, Analyze Now, threshold controls, retained dates, and risk summaries.

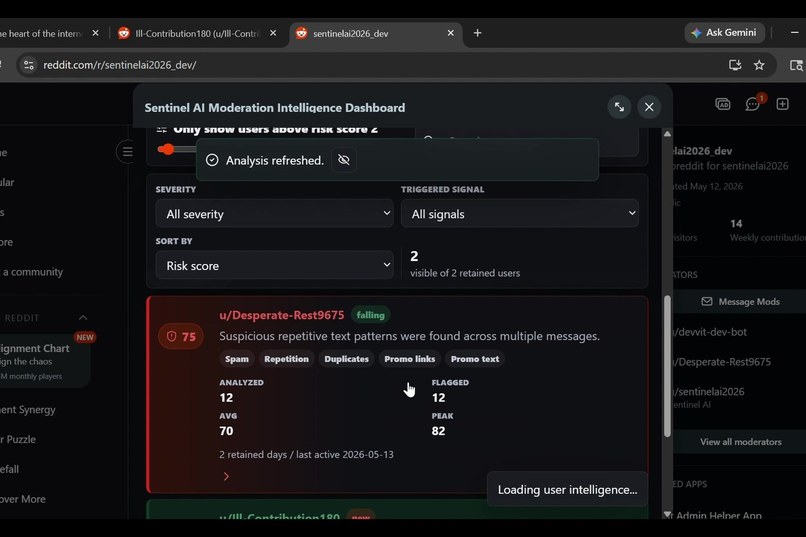

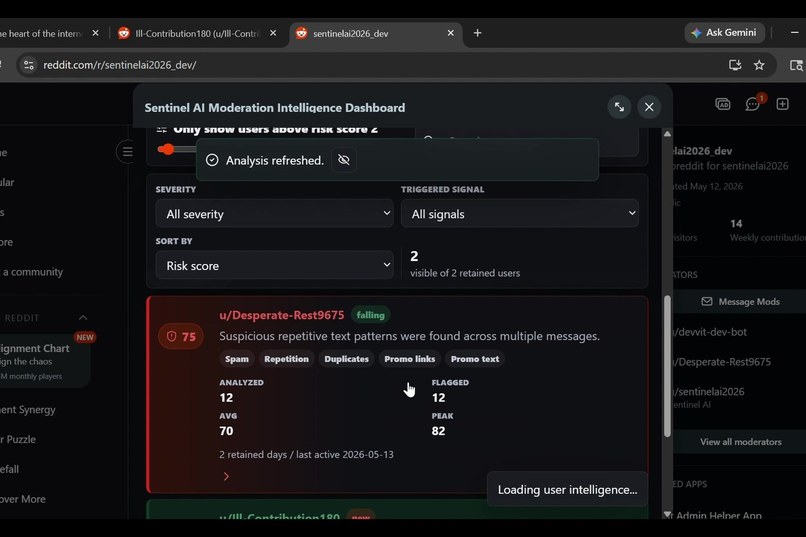

User intelligence view with AI summary, evidence, risk history, and moderator actions.

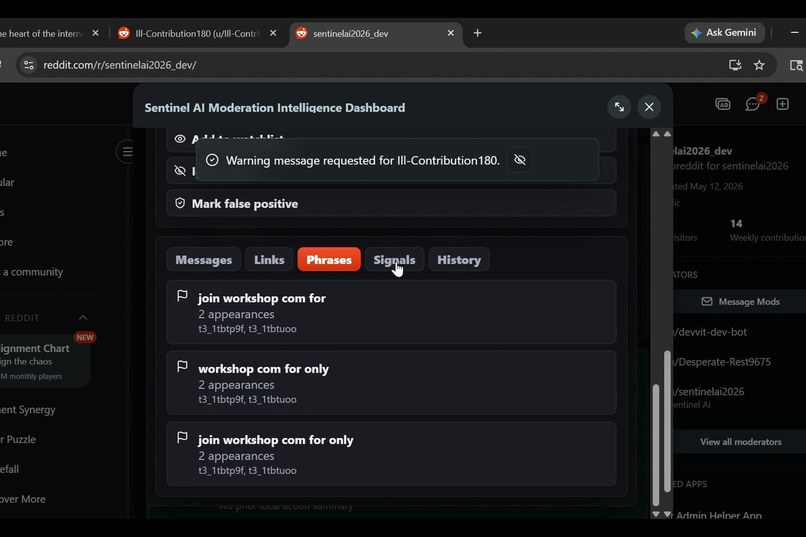

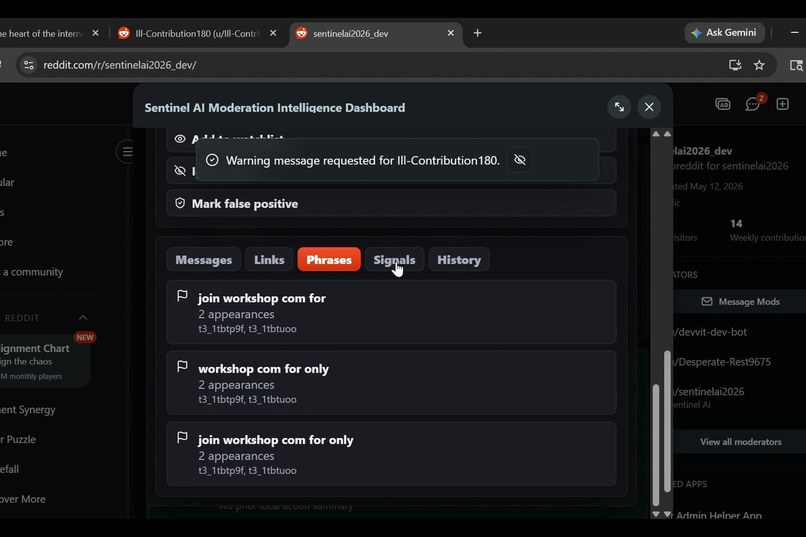

Evidence review highlights suspicious comments, repeated phrases, and available mod decisions.

Sentinel AI: moderation intelligence for the work moderators actually do

Moderation does not usually fail because moderators lack judgment. It fails because the evidence is scattered.

A suspicious user might post a promotional link in one thread, repeat the same phrase in another, leave a burst of low-quality comments an hour later, and only look clearly risky after a moderator manually connects all of those dots. On fast-moving subreddits, that investigation work becomes repetitive and slow, and the pattern itself is easy to miss.

Sentinel AI is built for that exact gap: a Reddit-native moderation intelligence dashboard that turns scattered subreddit activity into a ranked, explainable review queue.

It is not a chatbot. It is not an autonomous moderation bot. It does not make final enforcement decisions. Sentinel AI analyzes behavior, explains why a user needs attention, and gives moderators a faster path from evidence to action while keeping humans in control.

What Sentinel AI does

When a moderator installs the app, Sentinel opens with a first-run configuration screen where the mod team chooses which behavioral signals matter for their community. The detector registry is expandable and includes signals such as spam, repetition, duplicate messaging, suspicious links, self-promotion, vulgarity, toxicity, harassment, posting velocity, repeated sentence structures, engagement farming, repeated domain promotion, and suspicious subreddit behavior.

Moderators can also add Custom Lookout Instructions for community-specific risks, for example:

- Detect Telegram promotions

- Flag crypto shilling

- Watch for OF bait

- Detect homework-answer spam

- Watch for political brigading patterns

Those instructions influence the analysis and explanations without turning the app into an uncontrolled AI moderator.

The dashboard then ranks users by daily behavioral risk score and defaults to showing only users above the selected threshold. Moderators can adjust the threshold slider, filter by severity, filter by triggered signal, search by username, change sort order, and inspect retained date views.
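
The queue behavior described above can be sketched in a few lines of TypeScript. This is a minimal illustration, not Sentinel AI's actual code: the `UserRisk` and `QueueFilter` shapes, the `buildReviewQueue` name, and the choice to treat a score equal to the threshold as included are all assumptions.

```typescript
// Hypothetical shape of one user's daily result; field names are assumptions.
type Severity = "low" | "medium" | "high";

interface UserRisk {
  username: string;
  score: number; // daily behavioral risk score, 0 to 100
  severity: Severity;
  signals: string[]; // names of the signals that triggered
}

// Hypothetical filter state mirroring the dashboard controls.
interface QueueFilter {
  threshold: number; // value of the threshold slider
  severity?: Severity;
  signal?: string;
  search?: string; // case-insensitive username substring
  sort?: "score_desc" | "score_asc";
}

function buildReviewQueue(users: UserRisk[], filter: QueueFilter): UserRisk[] {
  const needle = filter.search ? filter.search.trim().toLowerCase() : "";
  const matches = users.filter(
    (u) =>
      u.score >= filter.threshold &&
      (filter.severity === undefined || u.severity === filter.severity) &&
      (filter.signal === undefined || u.signals.indexOf(filter.signal) !== -1) &&
      (needle === "" || u.username.toLowerCase().indexOf(needle) !== -1)
  );
  // filter() returned a fresh array, so sorting in place does not touch the input.
  const direction = filter.sort === "score_asc" ? 1 : -1;
  return matches.sort((a, b) => direction * (a.score - b.score));
}
```

With no optional filters set, the function returns every user at or above the threshold, highest score first, which matches the default view described above.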

Clicking a flagged user opens a detailed intelligence view with:

- An AI-assisted explanation of why the user was surfaced

- Highlighted messages and comments analyzed that day

- Triggered signals and evidence labels

- Repeated phrases and links

- Daily risk trend and average score context

- Moderator actions such as remove flagged content, warn, mute, ban, add to watchlist, ignore flag, and mark false positive

The language is intentionally careful. Sentinel AI never asserts that content is AI-generated. Instead, it uses review-safe phrasing such as "potential synthetic or repetitive engagement patterns," "suspicious repetitive language structures," and "spam-like activity."

Technical architecture

Sentinel AI is built as a Devvit app with a modular architecture designed for production growth:

- Devvit custom post UI for the installable moderator dashboard

- Reddit API integration for subreddit activity and moderation actions

- Redis-backed storage for configuration, retained daily analysis, watchlists, and action state

- Scheduled daily analysis for finalized daily risk snapshots

- Analyze Now for on-demand current-day refreshes that do not double-count activity already aggregated

- Detector registry for expandable behavior signals

- Weighted risk scoring from 0 to 100 across multiple evidence categories

- AI provider abstraction so hosted Gemini/OpenAI-style providers can be swapped or extended later

- Date-aware retained history so Today, Yesterday, and All retained views stay consistent until retention cleanup
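
One way to picture the detector registry from the list above is as a map from signal name to a small function that inspects one user's activity for the day. The sketch below is hypothetical: `ActivityItem`, `DetectorHit`, `DetectorRegistry`, and the `duplicate-messaging` detector are illustrative names and do not come from the Sentinel AI codebase or the Devvit API.

```typescript
// Hypothetical types; the real app's data model is not described in the source.
interface ActivityItem {
  author: string;
  body: string;
}

interface DetectorHit {
  signal: string;
  evidence: string;
}

type Detector = (items: ActivityItem[]) => DetectorHit[];

class DetectorRegistry {
  private detectors: Record<string, Detector> = {};

  // Adding a new behavior signal only requires registering another function.
  register(signal: string, detector: Detector): void {
    this.detectors[signal] = detector;
  }

  // Run only the signals the mod team enabled during configuration.
  run(enabledSignals: string[], items: ActivityItem[]): DetectorHit[] {
    const hits: DetectorHit[] = [];
    for (const signal of enabledSignals) {
      const detector = this.detectors[signal];
      if (detector) hits.push(...detector(items));
    }
    return hits;
  }
}

// Example detector: the same normalized text posted more than once.
const duplicateMessaging: Detector = (items) => {
  const counts: Record<string, number> = {};
  for (const item of items) {
    const key = item.body.trim().toLowerCase().replace(/\s+/g, " ");
    counts[key] = (counts[key] ?? 0) + 1;
  }
  return Object.keys(counts)
    .filter((text) => counts[text] > 1)
    .map((text) => ({
      signal: "duplicate-messaging",
      evidence: `"${text}" posted ${counts[text]} times`,
    }));
};
```

Keeping each detector as an independent function is one plausible way to keep the registry expandable: disabled signals are simply never run, and a new signal does not require changes to existing ones.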

The scoring system is deliberately evidence-weighted instead of alarmist. A single repeated phrase should not automatically become a 99/100 emergency. Scores are built from category evidence, repetition frequency, link behavior, velocity, severity, and custom lookout matches, then surfaced with explanation so moderators can decide what matters.
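
A weighted score of this kind could look roughly like the following. The six inputs are the ones named above, but the weights, the 0-to-1 normalization of each input, and the `riskScore` function are assumptions made for illustration. The source does not publish the actual formula.

```typescript
// Each input is assumed to be pre-normalized to the range 0..1.
interface DailyEvidence {
  categoryEvidence: number;
  repetitionFrequency: number;
  linkBehavior: number;
  velocity: number;
  severity: number;
  customLookoutMatches: number;
}

// Hypothetical weights chosen to sum to 100 so the result lands on a 0-100 scale.
const WEIGHTS: DailyEvidence = {
  categoryEvidence: 25,
  repetitionFrequency: 20,
  linkBehavior: 20,
  velocity: 15,
  severity: 10,
  customLookoutMatches: 10,
};

function clamp01(x: number): number {
  return Math.min(1, Math.max(0, x));
}

function riskScore(evidence: DailyEvidence): number {
  const keys = Object.keys(WEIGHTS) as (keyof DailyEvidence)[];
  let total = 0;
  for (const key of keys) {
    total += WEIGHTS[key] * clamp01(evidence[key]);
  }
  return Math.round(total);
}
```

Under these assumed weights, a user whose only evidence is mild repetition (`repetitionFrequency` of 0.3, everything else 0) scores 6 out of 100. That reflects the stated design goal: no single weak signal can push a score to the top of the scale on its own.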

Why this belongs on Devvit

A lot of Reddit moderation infrastructure has historically lived outside Reddit as custom scripts, fragile bots, hosted dashboards, and third-party services. Sentinel AI is designed in the opposite direction: native, installable, subreddit-scoped moderation infrastructure that can live inside Reddit's Devvit ecosystem.

That matters because moderators should not need to operate servers or trust a disconnected external tool just to understand what is happening in their community. Sentinel AI brings the intelligence layer closer to Reddit itself.

Links

- Devvit app listing: https://developers.reddit.com/apps/sentinelai2026

- Test subreddit: https://www.reddit.com/r/sentinelai2026_dev/

- Live dashboard post: https://www.reddit.com/r/sentinelai2026_dev/comments/1taqkeq/sentinel_ai_moderation_intelligence_dashboard/

Why it can matter to real communities

Sentinel AI is useful anywhere moderators need to identify behavior patterns rather than just single bad posts: spam waves, link farming, shilling, brigading, harassment bursts, repetitive templated replies, suspicious promotion, and recurring low-quality activity.

The goal is simple: reduce the investigation burden, make evidence easier to review, and help moderators act faster and more consistently without replacing moderator judgment.

Built With

- devvit

- google-gemini-api

- moderation

- react

- redis

- scheduled-jobs

- typescript

- vite
