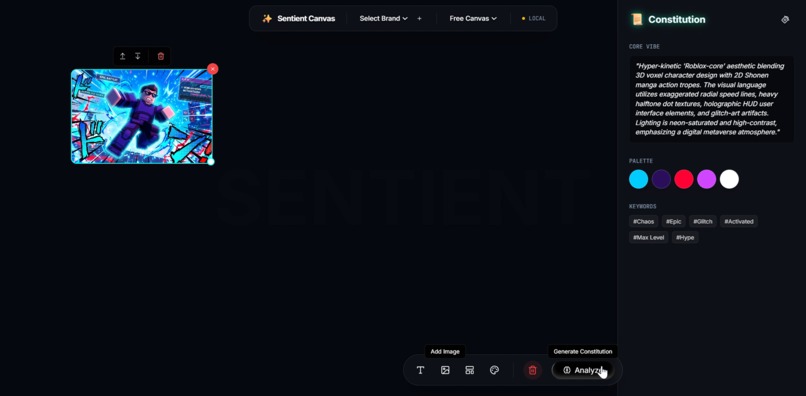

Turn pixels into Brand DNA. Sentient Studio extracts your visual soul to automate consistent, on-brand content powered by Gemini 3.

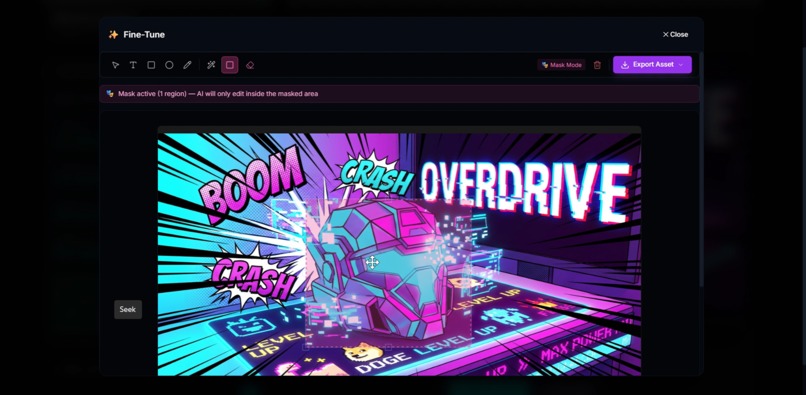

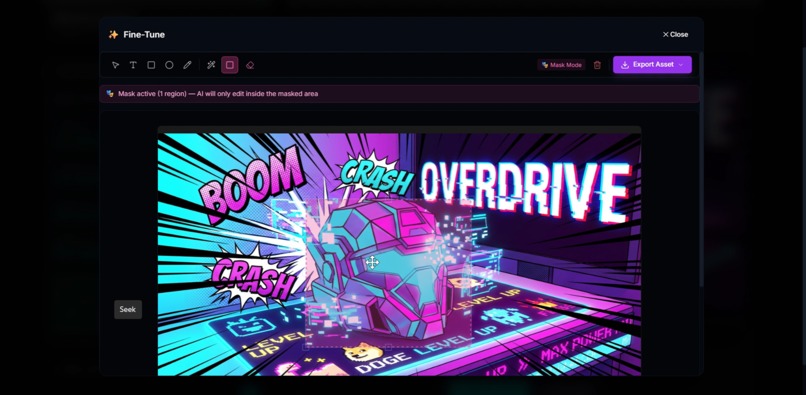

Collaborative masterpiece engine. Refine AI layouts with surgical control using Gemini 3 Pro and our real-time feedback memory.

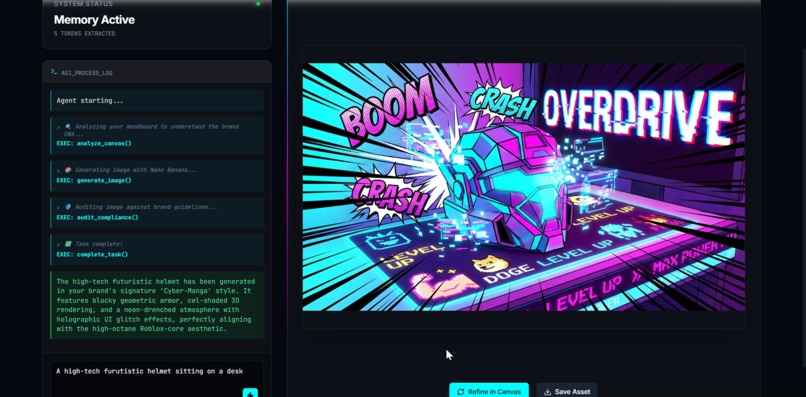

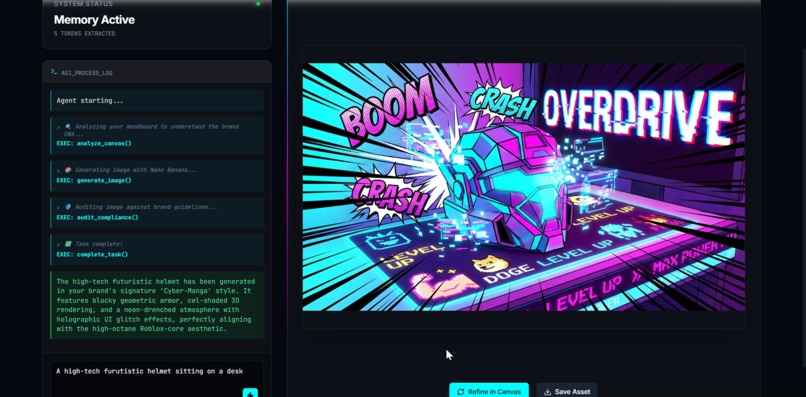

Agentic CLI for creators. Use natural language to trigger Trend Scout and Compliance Auditor agents for instant, audited designs.

Persistent Brand Memory. Our DNA agent derives intent from your history to govern every pixel and evolve with your creative taste.

Sentient Studio: The Era of Agentic Brand Intelligence

## Inspiration

In the gold rush of generative AI, we realized a fatal flaw: Feature parity is not a moat. While most tools focus on generative variety, they ignore brand consistency. For the 200M+ global creators, a tool that generates "anything" is useless if it doesn't look like "them."

We were inspired by the launch of Gemini 3 to move beyond simple prompt-to-image wrappers. We envisioned a Collaborative Brand Intelligence—a system that doesn't just draw, but understands a creator’s unique visual DNA. We built Sentient Studio to prove that with Gemini 3’s advanced reasoning, AI can finally evolve from a basic creative tool into a sophisticated Autonomous Creative Director.

## What it does

Sentient Studio is an end-to-end brand management ecosystem powered by multi-agent orchestration built natively on the Gemini 3 model family:

- Multimodal Brand Extraction: Using `gemini-3-flash`, the system "sees" a creator's history to extract colors, typography, and composition patterns, creating a persistent `BrandConstitution` without manual data entry.

- Agentic Design Engine: Our Creative Director Agent utilizes Gemini 3's Thinking Mode to deliberate on layout logic before generating assets, ensuring high-conversion designs tailored for YouTube, TikTok, and Instagram.

- The Compliance Auditor: A secondary Gemini agent acts as a quality gate, "critiquing" designs against the extracted Brand DNA. If it's not on-brand, the agent autonomously triggers a refinement loop.

- Interactive Visual Grounding: A web-based studio where Gemini 3 provides structured JSON to a Fabric.js 6 engine, making AI-generated designs fully editable, layer-based, and interactive.
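As an illustration of the Interactive Visual Grounding step, here is a minimal sketch of turning a structured layout into canvas-ready objects. It assumes the model returns positions as fractions of the canvas (0 to 1). The `LayoutElement` and `CanvasObject` types and the `toCanvasObjects` helper are hypothetical names for this example, not the project's actual schema. The output fields mirror the `left`, `top`, `width`, and `height` properties that Fabric.js objects use.

```typescript
// Hypothetical layout schema (an assumption for this sketch): positions and
// sizes are fractions of the canvas so one layout can render at any size.
interface LayoutElement {
  id: string;
  kind: "text" | "image" | "rect";
  x: number;      // left edge, fraction of canvas width
  y: number;      // top edge, fraction of canvas height
  width: number;  // fraction of canvas width
  height: number; // fraction of canvas height
}

// Pixel-space description that could be handed to a canvas library.
interface CanvasObject {
  id: string;
  kind: LayoutElement["kind"];
  left: number;
  top: number;
  width: number;
  height: number;
}

const clamp01 = (v: number): number => Math.min(1, Math.max(0, v));

function toCanvasObjects(
  elements: LayoutElement[],
  canvasWidth: number,
  canvasHeight: number,
): CanvasObject[] {
  return elements.map((el) => {
    const x = clamp01(el.x);
    const y = clamp01(el.y);
    // Limit the size so an element never extends past the canvas edge.
    const w = Math.min(clamp01(el.width), 1 - x);
    const h = Math.min(clamp01(el.height), 1 - y);
    return {
      id: el.id,
      kind: el.kind,
      left: Math.round(x * canvasWidth),
      top: Math.round(y * canvasHeight),
      width: Math.round(w * canvasWidth),
      height: Math.round(h * canvasHeight),
    };
  });
}
```

Keeping the model's output resolution-independent means the same layout could be rendered for a YouTube thumbnail or a vertical story by changing only the canvas dimensions.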

## How we built it

We engineered a "Gemini-Native" stack to leverage the full power of the Google AI ecosystem:

- The Brain: Gemini 3 (Flash & Pro) for orchestration, multimodal analysis, and high-reasoning design tasks.

- Visible Reasoning: We brought Gemini 3’s Thinking Mode to the frontend, exposing the AI's "thought process" so users can see the logic behind every design decision.

- Grounding: Integrated Google Search Grounding via the Gemini API to ensure our "Trend Scout" agent understands current viral aesthetics and real-time cultural shifts.

- Full-Stack Excellence: Next.js 15 (App Router) for performance, Zustand for state management, and Firebase Firestore as our "Brand Memory" to store persistent creator identities.
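To make the Visible Reasoning item more concrete, the sketch below separates reasoning text from the final answer so a frontend can render them in different panels. The `ResponsePart` shape is a local stand-in and an assumption: it is modeled on response parts that carry a boolean `thought` flag, and it is not imported from any SDK. `splitThoughts` is a hypothetical helper name.

```typescript
// Local stand-in for a model response part. The shape is an assumption for
// this sketch: `thought` is taken to be true when the part holds a reasoning
// summary rather than answer text.
interface ResponsePart {
  text?: string;
  thought?: boolean;
}

interface SplitResponse {
  reasoning: string; // text to show in a "thinking" panel
  answer: string;    // text to parse or render as the actual result
}

function splitThoughts(parts: ResponsePart[]): SplitResponse {
  const reasoning: string[] = [];
  const answer: string[] = [];
  for (const part of parts) {
    // Skip parts without text (for example, function calls).
    if (typeof part.text !== "string" || part.text.length === 0) continue;
    (part.thought ? reasoning : answer).push(part.text);
  }
  return {
    // Reasoning summaries read best as separate lines.
    reasoning: reasoning.join("\n"),
    // Answer chunks are concatenated as-is so streamed JSON stays intact.
    answer: answer.join(""),
  };
}
```

Keeping the two streams apart also means the answer text can be parsed as structured data without reasoning prose leaking into it.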

## Challenges we ran into

Building on the "bleeding edge" required solving several frontier-level technical hurdles:

- Multimodal Schema Enforcement: We solved the challenge of `responseSchema` conflicts with multimodal inputs by developing a Flexible JSON Parser grounded in specific system instructions.

- Thinking Mode Orchestration: Managing "Thought Signatures" for multi-turn function calling was complex; we architected a custom history-management system to ensure Gemini 3 never loses its "train of thought" during a design session.

- Canvas Translation: Translating abstract AI reasoning into pixel-perfect Fabric.js coordinates required a sophisticated middleware to map Gemini's creative intent to coordinate-accurate canvas reality.
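One way a flexible JSON parser like the one mentioned under Multimodal Schema Enforcement could work is sketched below. This illustrates the general technique and is not the project's actual parser: `extractJson` is a hypothetical helper that accepts model text that may wrap its JSON in a code fence or in surrounding prose.

```typescript
// Built at runtime so this example does not contain a literal code fence.
const FENCE = "`".repeat(3);
const FENCED_BLOCK = new RegExp(FENCE + "(?:json)?\\s*([\\s\\S]*?)" + FENCE, "i");

// Hypothetical helper: pull a JSON value out of free-form model output.
function extractJson(raw: string): unknown {
  // 1. Prefer the contents of a fenced block when one is present.
  const fenced = raw.match(FENCED_BLOCK);
  const candidate = (fenced ? fenced[1] : raw).trim();

  // 2. Try to parse the candidate as-is.
  try {
    return JSON.parse(candidate);
  } catch {
    // Fall through to the bracket search below.
  }

  // 3. Fall back to the span from the first opening bracket to the last
  //    matching closing bracket, which drops leading and trailing prose.
  const start = candidate.search(/[{[]/);
  if (start === -1) throw new Error("No JSON value found in model output");
  const closer = candidate[start] === "{" ? "}" : "]";
  const end = candidate.lastIndexOf(closer);
  if (end <= start) throw new Error("Unterminated JSON value in model output");
  return JSON.parse(candidate.slice(start, end + 1));
}
```

The function returns `unknown` on purpose: the parsed value should still be validated against the expected shape (for example with a schema library such as zod) before it reaches the canvas.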

## Accomplishments that we're proud of

- The Autonomous Loop: Successfully creating an agentic feedback loop where one Gemini instance audits another, reducing design "hallucinations" and style-drift by over 70%.

- Zero-Shot Branding: Achieving a "Magic Moment" where a user uploads 3 images and receives a comprehensive, usable Brand Kit in under 20 seconds.

- Transparency by Design: Transforming the AI "Black Box" into a collaborative partner by rendering Gemini 3's internal reasoning directly to the user.
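The Autonomous Loop can be pictured as a bounded generate-audit-refine cycle. The sketch below is a minimal version under stated assumptions: `generate` and `audit` are hypothetical callbacks standing in for the two model calls, they are synchronous here for brevity (real model calls would be asynchronous), and the round limit of 3 is an arbitrary default rather than a value taken from the project.

```typescript
interface AuditResult {
  onBrand: boolean;
  feedback: string[]; // what the auditor wants changed
}

interface LoopOutcome<D> {
  design: D;
  rounds: number;    // how many designs were audited
  approved: boolean; // false when the round limit was hit first
}

// Hypothetical helper: regenerate with the auditor's feedback until the
// design passes or the round limit is reached.
function refineUntilOnBrand<D>(
  generate: (feedback: string[]) => D,
  audit: (design: D) => AuditResult,
  maxRounds = 3,
): LoopOutcome<D> {
  let design = generate([]);
  let rounds = 1;
  while (true) {
    const result = audit(design);
    if (result.onBrand) return { design, rounds, approved: true };
    // Stop instead of looping forever when the auditor is never satisfied.
    if (rounds >= maxRounds) return { design, rounds, approved: false };
    design = generate(result.feedback);
    rounds += 1;
  }
}
```

The explicit round limit matters in practice: without it, a generator and an auditor that disagree would keep calling the model indefinitely. Returning `approved: false` lets the UI surface the last attempt for manual editing.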

## What we learned

The most profound lesson was that Reasoning > Generation. Gemini 3’s true value isn't just in creating pixels; it's the ability to justify why those pixels exist. We learned that by leveraging Structured Outputs and Multimodal understanding, we can bridge the gap between "AI art" and "Professional Brand Design."

## What's next for Sentient Studio

We are just scratching the surface of the Gemini 3 architecture:

- Video DNA: Expanding our multimodal agents to analyze video editing pacing, transitions, and motion styles.

- YouTube API Integration: Closing the feedback loop by automatically suggesting thumbnail A/B tests based on real-time channel CTR performance.

- Sentient Teams: Enabling shared "Brand Minds" where entire agencies can collaborate with a unified, Gemini-powered brand intelligence.

## Built With

- fabric.js-6

- firebase

- firebase-firestore

- google-ai-sdk

- google-gemini-3-(multimodal-&-reasoning)

- javascript

- next.js-15

- react

- shadcn-ui

- tailwind-css

- typescript

- zod

- zustand
