Inspiration

Conversational agents in healthcare typically require a much higher level of rationalization, adaptation, and personalization than agents in other domains. Dialogue must be carefully and logically guided by the user's responses so that the agent responds appropriately and provides the right information or advice, based both on the questions the user asks and on his or her answers to the agent's questions. The healthcare information, functions, and tools delivered by the agent must be tailored to the user's beliefs, needs, and goals, which must be inferred from the user's interactions in the current conversation as well as from previous conversations, which can be stored in and retrieved from a database. Conversational agents used to deliver psychotherapy or cognitive-based therapy must ensure their responses are guided by both the patient's responses and the therapeutic goals of the program.

Reproduced from Bendig, E., Erb, B., Schulze-Thuesing, L., & Baumeister, H. (2019). The Next Generation: Chatbots in Clinical Psychology and Psychotherapy to Foster Mental Health – A Scoping Review. Verhaltenstherapie, 1–13. https://doi.org/10.1159/000501812. https://www.karger.com/Article/FullText/501812

These requirements mean rule-based dialogue systems will always have an important role in healthcare conversational agents, alongside more contemporary ML-derived dialogue systems, which probabilistically learn dialogue steps and actions from language models and training data. Rule-based conversational agents must rely on linguistic analysis of the user's responses via NLP and NLU at each step and respond using rules, logic, patterns, and decision trees. The more information and knowledge that can be extracted from the user's input, the better the dialogue system will be able to respond appropriately and fulfill its therapeutic function.

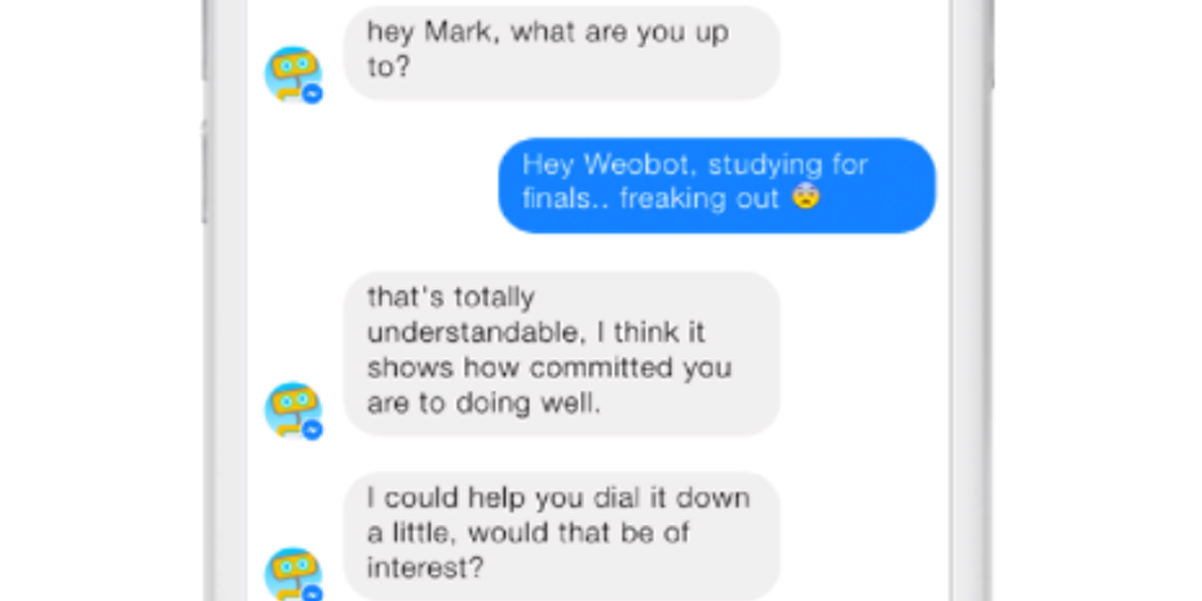

Contemporary mental-therapy apps like Woebot are typically designed to handle only short messages and sentences, and perform only surface-level linguistic analysis of the messages users enter, in the classic instant-messenger paradigm.

Another approach to online mental health therapy and treatment of mental health conditions like PTSD that is receiving a lot of attention is journal therapy:

Journal therapy is a writing therapy focusing on the writer's internal experiences, thoughts and feelings. This kind of therapy uses reflective writing enabling the writer to gain mental and emotional clarity, validate experiences and come to a deeper understanding of him/herself. Journal therapy can also be used to express difficult material or access previously inaccessible materials.

From "How to Journal Therapy Appointments – Journal Template" by Lindsay Braman - https://lindsaybraman.com/how-to-journal-therapy/

A mental health chatbot that can do deep linguistic analysis on a user's journal entries, summarize and extract information and knowledge about the user's state of mind, and make guided recommendations towards therapeutic goals can be a very useful complement to existing psychotherapy bots.

What it does

Selma is a multimodal conversational user interface (CUI) designed to provide accessible and inclusive access to healthcare self-management tools: medication trackers, mood and symptom trackers, therapeutic journals, time, activity, and exercise trackers, personal planners, reliable knowledge bases on health conditions and diseases, and similar tools. These tools are used in the management of chronic physical and mental diseases and disorders like PTSD, and of conditions like ADHD or chronic pain, where self-management skills for life activities are critical.

Selma follows in the tradition of 'therapy bots' like ELIZA, but is updated with powerful ML-trained NLU models for interacting with users in real time using both typed text and speech. Existing self-management apps, such as journaling, activity-tracking, and symptom-tracking apps, all use GUIs or touch UIs and assume users are sighted and dexterous. The reliance on a visual medium and a complex interface for entering and reviewing daily self-management data can be a significant barrier to adoption of these apps by people with disabilities and chronic conditions, who form a large segment of a self-management app's user base.

Selma eschews complex GUI forms and visual widgets like scales and calendars and instead uses a simple line-oriented conversational user interface that uses automatic speech recognition and natural language understanding models for transcribing and extracting symptom descriptions, journal entries, and other user input that traditionally requires navigating and completing data entry forms.

For this hackathon we added support for journal therapy by analyzing long-form narrative journal entries using the expert.ai Natural Language API. Working from a set of writing prompts, the user enters a long-form journal entry, which is analyzed using the expert.ai NL API for knowledge triples, lemmas, entities, and emotional and behavioral traits. This information is used to guide the therapeutic process and to provide relevant feedback and tools, and it is persisted in a database where it can be reviewed by the user or their therapist.

How we built it

Overview

Selma is written in F#, running on .NET Core and using PostgreSQL as the storage back-end. The Selma front-end is a browser-based CUI that applies natural language understanding to both text and speech, and combines voice, text, and graphic output using HTML5 features like the WebSpeech API. Together these provide an inclusive interface to self-management data for one or more self-management programs the user enrolls in. The front-end is designed to be accessible to all mobile and desktop users and does not require any software beyond an HTML5-compatible browser.

Client

The CUI, server logic and core of Selma are written in F# and make heavy use of functional language features like first-class functions, algebraic types, pattern-matching, immutability by default, and avoiding nulls using Option types. This yields code that is concise and easy to understand and eliminates many common code errors, which is an important feature for developing health-care management software. CUI rules are implemented in a declarative way using F# pattern matching, which greatly reduces the complexity of the branching logic required for a rule-based chatbot.
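As a rough illustration of the declarative style described above, the sketch below models one dialogue step as a single pattern match. The `Intent`, `Reply`, and `respond` names are hypothetical and are not taken from the Selma code base; the intents stand in for whatever the NLU layer might produce.

```fsharp
// Hypothetical types for illustration only; not taken from the Selma source.
type Intent =
    | Greet
    | LogMood of score: int option        // None when the user gave no score
    | AddJournalEntry of text: string
    | Unknown of utterance: string

type Reply =
    | Say of string                       // a statement to the user
    | Ask of string                       // a follow-up question

// One dialogue step. Each rule is a pattern; rules are tried top to bottom.
// This function only chooses the reply; it does not persist anything.
let respond (intent: Intent) : Reply =
    match intent with
    | Greet -> Say "Hello, how can I help you today?"
    | LogMood None -> Ask "On a scale of 1 to 10, how would you rate your mood?"
    | LogMood (Some s) when s < 1 || s > 10 -> Ask "Please give a number from 1 to 10."
    | LogMood (Some s) when s <= 3 -> Ask "That sounds hard. Would you like to write about it?"
    | LogMood (Some s) -> Say (sprintf "Thanks, I noted a mood of %d." s)
    | AddJournalEntry text when System.String.IsNullOrWhiteSpace text ->
        Ask "What would you like to write about?"
    | AddJournalEntry _ -> Say "Thank you for sharing that entry."
    | Unknown _ -> Ask "Sorry, I did not understand that. Could you rephrase?"
```

Because the missing-score case is an explicit `LogMood None` pattern rather than a null check, the compiler can warn when a rule for some input shape is missing, which is one way the Option types mentioned above help avoid common errors.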

Server

The Selma server is designed around a set of micro-services running on the OpenShift Container Platform, which talk to the client and store data in the storage back-end.

Since the data is highly relational and commonly requires calculating statistics across aggregates, a traditional SQL server is used for data storage.

Tech Stack

| Name | Description |
|---|---|
| WebSharper | F#-to-JavaScript compiler and application framework for building web applications in pure F#. |
| HTML5 WebAudio/WebSpeech | Browser API for capturing speech and performing speech-to-text without requiring a mobile or desktop app. |
| .NET Core | Open-source, cross-platform application development SDK and runtime. |
| PostgreSQL | Advanced, mature open-source relational and multi-model database. |
| OpenShift Container Platform | Kubernetes-based PaaS fabric which automates many aspects of deploying and managing web applications. |

Knowledge Extraction

We created a .NET API based on automatically-generated bindings for the expert.ai NL REST API. Using F# we then created types like the following to model relations and Subject-Verb-Object triples:

    type Relation<'t1, 't2> = Relation of 't1 * string * 't2 with
        member x.T1 = let (Relation(t1, _, _)) = x in t1
        member x.Name = let (Relation(_, n, _)) = x in n
        member x.T2 = let (Relation(_, _, t2)) = x in t2

    type Triple = Triple of SubjectVerbRelation * VerbObjectRelation option with
        member x.Subject = let (Triple(s, _)) = x in s.T1
        member x.Verb = let (Triple(s, _)) = x in s.T2
        member x.Object = let (Triple(_, o)) = x in if o.IsSome then Some(o.Value.T2) else None

    and SubjectVerbRelation = Relation<Subject, Verb>

    and VerbObjectRelation = Relation<Verb, Object>

    and Subject =
        | Subject of string
        | Relation of Relation<string, string>

    and Verb = Verb of string with override x.ToString() = let (Verb t) = x in t

    and Object =
        | Object of string
        | Relation of Relation<string, string>
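
To show how triples like these might be consumed downstream, here is a minimal, self-contained sketch. It deliberately uses a flattened record (`SimpleTriple`) instead of the types above, and the `feelings` helper and the sample data are hypothetical; the sample is hand-written and is not output from the expert.ai API.

```fsharp
// Simplified stand-in for the Triple type above; hypothetical, for illustration.
type SimpleTriple =
    { Subject: string
      Verb: string
      Object: string option }   // None when the clause has no object

// Collect what the writer says they feel:
// first-person subject, verb lemma "feel", and an object that is present.
let feelings (triples: SimpleTriple list) : string list =
    triples
    |> List.choose (fun t ->
        match t with
        | { Subject = "I"; Verb = "feel"; Object = Some o } -> Some o
        | _ -> None)

// Hand-written sample data, not real API output.
let sample =
    [ { Subject = "I"; Verb = "feel"; Object = Some "anxious" }
      { Subject = "I"; Verb = "sleep"; Object = None }
      { Subject = "doctor"; Verb = "prescribe"; Object = Some "medication" } ]

let result = feelings sample   // ["anxious"]
```

A list like `result` could then be handed to the dialogue rules to pick a follow-up prompt or be stored alongside the journal entry for later review.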

Built With

- .net

- expertai

- f#

- html5

- postgresql

- websharper
