Inspiration

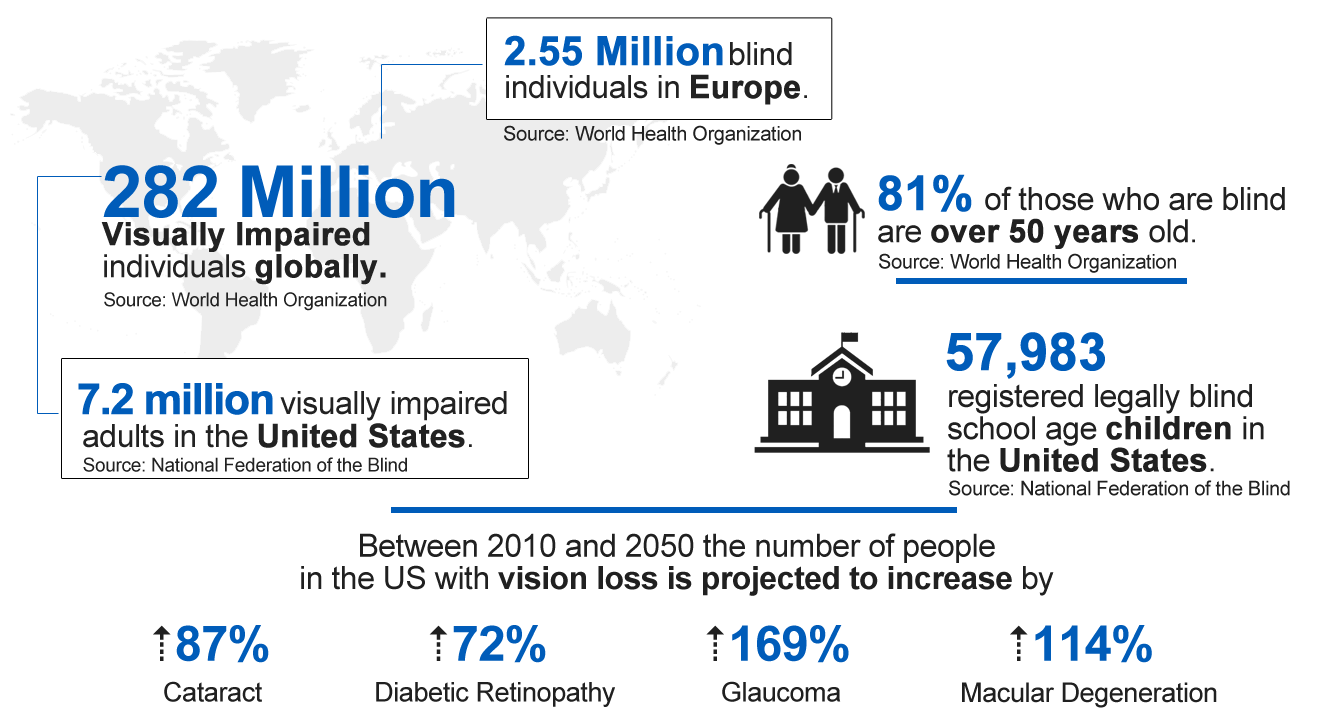

Over 1 billion people have moderate to severe vision impairment. Two out of every 100 Canadians are partially sighted or blind, and that number is expected to double in the next 25 years. Visual impairment prevents many people from completing everyday tasks and affects their work, mobility and self-esteem. Although preventative and reparative treatments exist, the difficult day-to-day struggles that come with sight loss are often overlooked. Our team wanted to focus on solving a smaller problem that many people might not even be aware of.

Vispero (2020). Empowering Independence

Sunny is a fashion-conscious high school student. She was recently diagnosed with Leber's Hereditary Optic Neuropathy, a disease that causes loss of central vision. With her sudden loss of vision, ordinary tasks as simple as choosing a fashionable outfit from the closet or going shopping have become challenges that require the help of others.

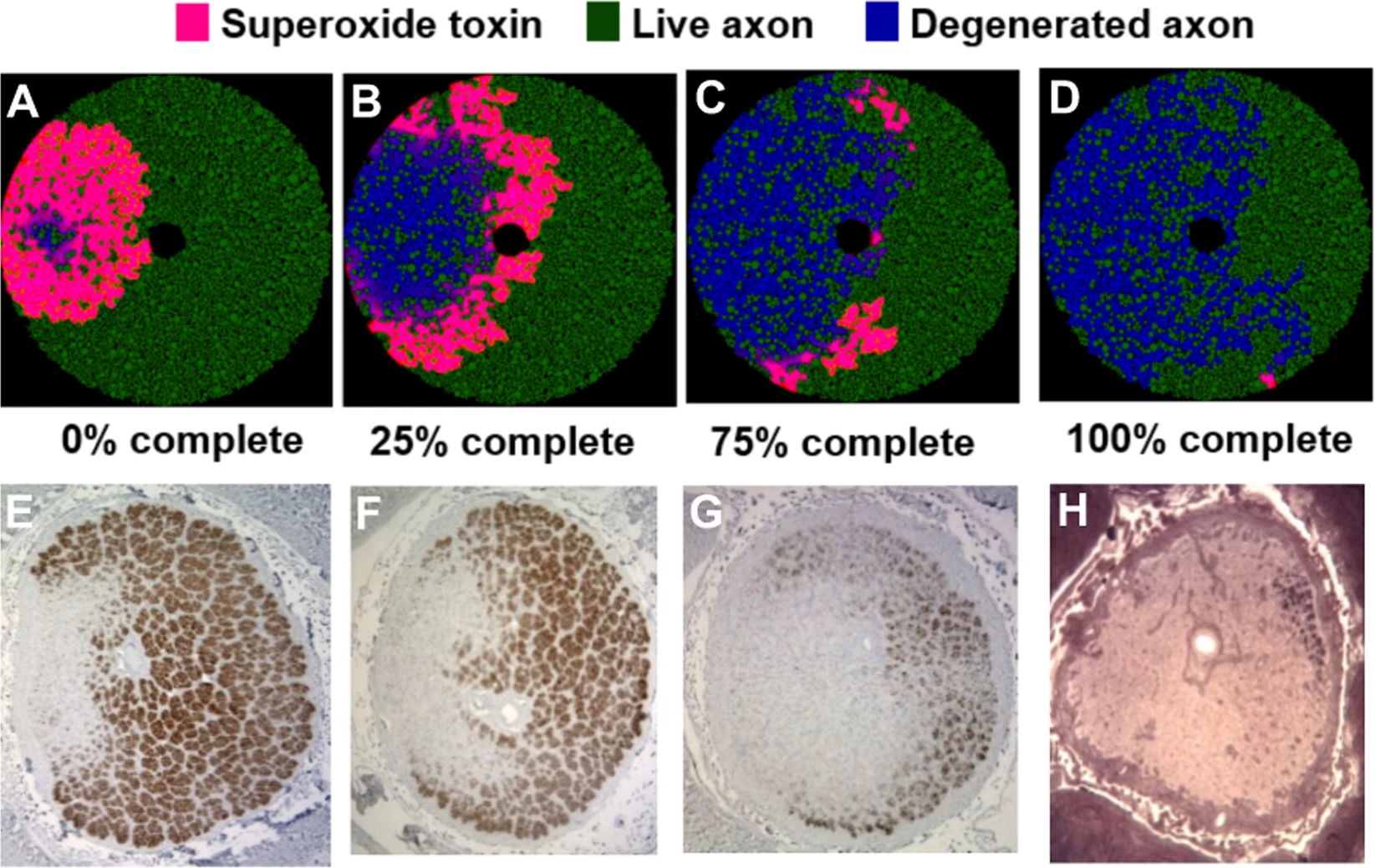

Propagation and Selectivity of Axonal Loss in Leber Hereditary Optic Neuropathy Nature

The question we posed while creating our project was: how can we help the visually impaired navigate an industry built around sight, feel reassured about the choices they make, and be confident in their independence?

What it does

Introducing Seemless.

For visually impaired people who want to shop for and style clothing independently, Seemless is a highly accessible mobile app that uses machine learning and OCR to recognize items and provide fashion recommendations.

Seemless acts as a "helper" by reading out what type of clothing the user has and recommending what to style it with. The entire app was designed following WCAG standards, and every component has accessibility enabled. This means that when a user touches a component on the screen, the screen reader announces what the component is, how the user should interact with it, and what it does. Every component within Seemless is audible and designed for people with vision impairment.
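In React Native, the three things a screen reader announces map roughly onto props a component can carry: `accessibilityLabel` (what it is), `accessibilityRole` (how to interact with it), and `accessibilityHint` (what it does). The prop names below are real React Native props; the `a11y` helper and the example strings are hypothetical illustrations, not code from Seemless.

```typescript
// Hypothetical helper that bundles React Native accessibility props so that
// every component is announced consistently by VoiceOver / TalkBack.
type A11yRole = "button" | "image" | "text" | "header";

interface A11yProps {
  accessible: boolean;
  accessibilityRole: A11yRole; // how the user should interact with it
  accessibilityLabel: string;  // what the component is
  accessibilityHint: string;   // what happens when it is activated
}

function a11y(role: A11yRole, label: string, hint: string): A11yProps {
  if (label.trim() === "") {
    // An empty label would leave the component silent to the screen reader.
    throw new Error("Every component needs a non-empty accessibilityLabel");
  }
  return {
    accessible: true,
    accessibilityRole: role,
    accessibilityLabel: label,
    accessibilityHint: hint,
  };
}

// Usage in a .tsx file: <Pressable {...scanButtonProps} onPress={startScan} />
const scanButtonProps = a11y(
  "button",
  "Scan clothing",
  "Double tap to open the camera and identify an item of clothing.",
);
```

Failing loudly on an empty label is one way to enforce the "every component is audible" rule during development rather than discovering silent components in testing.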

All the user needs to do is point their camera at an article of clothing they already own or want to buy. The app then looks through the database of clothing the user has previously entered into the system, along with current trends in the fashion industry, to suggest what the user should consider wearing or matching the item with.
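The matching step can be illustrated with a deliberately simple rule-based sketch: given the scanned item, keep closet items from compatible categories and rank them by a color heuristic. The categories, pairing rules, and "neutrals match anything" scoring are all assumptions for illustration; Seemless's actual recommendation logic, which also weighs fashion trends, is not described in detail here.

```typescript
// Hypothetical rule-based outfit matcher (not the Seemless engine).
type Category = "top" | "bottom" | "dress" | "outerwear" | "shoes";

interface Garment {
  name: string;
  category: Category;
  color: string;
}

// Assumed pairing rules: which categories can be worn with which.
const PAIRS: Record<Category, Category[]> = {
  top: ["bottom", "outerwear", "shoes"],
  bottom: ["top", "outerwear", "shoes"],
  dress: ["outerwear", "shoes"],
  outerwear: ["top", "bottom", "dress", "shoes"],
  shoes: ["top", "bottom", "dress", "outerwear"],
};

// Assumed heuristic: neutral colors pair with anything and score higher.
const NEUTRALS = new Set(["black", "white", "grey", "beige", "navy"]);

function colorScore(a: string, b: string): number {
  return NEUTRALS.has(a) || NEUTRALS.has(b) ? 2 : 1;
}

// Rank compatible closet items for the scanned garment, best first.
function recommend(scanned: Garment, closet: Garment[], limit = 3): Garment[] {
  const allowed = PAIRS[scanned.category];
  return closet
    .filter((item) => allowed.includes(item.category))
    .map((item) => ({ item, score: colorScore(scanned.color, item.color) }))
    .sort((x, y) => y.score - x.score || x.item.name.localeCompare(y.item.name))
    .slice(0, limit)
    .map(({ item }) => item);
}

const closet: Garment[] = [
  { name: "black jeans", category: "bottom", color: "black" },
  { name: "red skirt", category: "bottom", color: "red" },
  { name: "green sweater", category: "top", color: "green" },
];
const picks = recommend({ name: "yellow blouse", category: "top", color: "yellow" }, closet);
// picks: black jeans, then red skirt (the sweater is another top, so it is skipped)
```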

How we built it

Seemless is built with React Native for the frontend, CockroachDB for the database, Express and Node.js for the backend, Google Cloud's Vertex AI and Azure Machine Learning for training the identification model, and Google Cloud Functions for the APIs that process the model's predictions and connect them to the frontend. We trained our model on the iMaterialist Challenge (Fashion) at FGVC5 dataset from Kaggle. We paid extra attention to accessibility by enabling the accessibility hint, tag, and label props on our components so the screen reader can assist with navigation. We also designed the app from scratch in Figma, following WCAG standards and using accessible color palettes and component shapes.
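A function sitting between the model and the frontend might, for example, drop low-confidence labels and turn the rest into a sentence the screen reader can speak. The `Prediction` shape and the 0.6 threshold below are assumptions; the real Vertex AI and Azure ML response formats differ, and this is only a sketch of the idea.

```typescript
// Hypothetical shape of one label returned by the clothing classifier.
interface Prediction {
  label: string;      // e.g. "dress", "floral"
  confidence: number; // between 0 and 1
}

// Keep only confident labels, most confident first, so the app never
// announces low-confidence guesses to the user.
function confidentLabels(preds: Prediction[], threshold = 0.6): string[] {
  return preds
    .filter((p) => p.confidence >= threshold)
    .sort((a, b) => b.confidence - a.confidence)
    .map((p) => p.label);
}

// Build the sentence the frontend hands to the screen reader.
function describePrediction(preds: Prediction[], threshold = 0.6): string {
  const labels = confidentLabels(preds, threshold);
  if (labels.length === 0) {
    return "Item not recognized. Try moving the camera closer.";
  }
  return `This looks like: ${labels.join(", ")}.`;
}

const sample: Prediction[] = [
  { label: "dress", confidence: 0.92 },
  { label: "floral", confidence: 0.71 },
  { label: "denim", confidence: 0.12 },
];
console.log(describePrediction(sample)); // prints "This looks like: dress, floral."
```

Returning a spoken fallback instead of an empty result keeps the user informed when the model is unsure.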

Challenges we ran into

One challenge we ran into was finding the right dataset for training our model, since many were password protected or in formats that were difficult to work with. We also spent a lot of time searching for documentation on Google's Vertex AI and debugging. The biggest challenge of all was designing and testing the product so that it would be accessible to people with vision impairment.

Although these setbacks pushed our timeline back by a few hours, our team collaborated to resolve them.

Accomplishments that we're proud of and what we learned

We are excited to demo a fully functioning app developed by four people who share the same drive. This was one of our teammate's first hackathons, and she joined us as a designer, working on the accessible UX/UI, one of the most important parts of the app. We had so much fun brainstorming the idea and learning about all the different accessibility features we could add to our project. Our team consists of a science major, a business and computer science major, and two engineering majors, which allowed us to approach our ideas from a more diverse perspective. We're proud of the key skills we learned from one another!

What's next for Seemless

Seemless strives to provide an efficient, high-quality experience for everyone, and we believe in reducing the stigma around disabilities such as vision impairment.

In the future, we would like to streamline logging in by using fingerprint identification instead of typing, so users spend less time logging in to the application. We also want to create a spatial guidance system that tells users where to point their camera so the images being analyzed are of higher quality.
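One possible shape for the planned spatial guidance system: compare the center of the detected garment's bounding box with the center of the frame and turn the offset into spoken directions. This is a hypothetical sketch of a feature that does not exist yet; the normalized box format (0 to 1, with y growing downward), the tolerance, and the minimum-area threshold are all assumptions.

```typescript
// Hypothetical bounding box of the detected garment, normalized to [0, 1]
// with the origin at the top-left of the camera frame.
interface Box {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Turn the garment's position in the frame into a spoken instruction.
function guidance(box: Box, tolerance = 0.1, minArea = 0.2): string {
  const cx = box.x + box.width / 2;
  const cy = box.y + box.height / 2;
  const steps: string[] = [];

  // Horizontal offset: point the camera toward the side the item is on.
  if (cx < 0.5 - tolerance) steps.push("move the camera left");
  else if (cx > 0.5 + tolerance) steps.push("move the camera right");

  // Vertical offset: y grows downward, so a small cy means the item is high.
  if (cy < 0.5 - tolerance) steps.push("tilt the camera up");
  else if (cy > 0.5 + tolerance) steps.push("tilt the camera down");

  // A small box means the item fills little of the frame.
  if (box.width * box.height < minArea) steps.push("move closer");

  return steps.length === 0
    ? "Hold still, the item is centered."
    : `Please ${steps.join(" and ")}.`;
}

console.log(guidance({ x: 0.05, y: 0.4, width: 0.3, height: 0.3 }));
// prints "Please move the camera left and move closer."
```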

Business Viability

To produce Seemless, the team trained a model to identify articles of clothing in extensive detail. We also created an API that could be sold to businesses to integrate into their e-commerce websites, making those sites more accessible. The alt text currently generated on web pages is very generic and often conveys little to no meaning to someone using a screen reader to understand what an item is. The Seemless API will help generate better alt text, a critical but commonly overlooked part of helping the visually impaired navigate the internet. We would sell it as a SaaS product. The recommendation engine could also prove profitable for larger retail stores, which would want to know what is already in a potential customer's closet and how it might affect their future purchasing patterns.
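To make the alt-text idea concrete, here is a sketch that composes a description from attributes a clothing model might predict, instead of a generic "product image". The attribute names and word order are assumptions chosen for readability; they are not the Seemless API's actual output format.

```typescript
// Hypothetical attributes predicted for one product image.
interface ItemAttributes {
  category: string; // e.g. "dress"
  color?: string;
  pattern?: string;
  material?: string;
  sleeve?: string;
  neckline?: string;
}

// Type guard that drops missing or empty attributes.
const present = (s: string | undefined): s is string => s !== undefined && s !== "";

function altText(a: ItemAttributes): string {
  // Adjectives read naturally before the noun: "red floral cotton dress".
  const adjectives = [a.color, a.pattern, a.material].filter(present);
  // Construction details follow as a "with ..." clause.
  const details = [a.sleeve, a.neckline].filter(present);

  let text = [...adjectives, a.category].join(" ");
  if (details.length > 0) text += ` with ${details.join(" and ")}`;
  return text.charAt(0).toUpperCase() + text.slice(1) + ".";
}

console.log(
  altText({ category: "dress", color: "red", pattern: "floral", sleeve: "long sleeves", neckline: "a V-neck" }),
);
// prints "Red floral dress with long sleeves and a V-neck."
```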
