
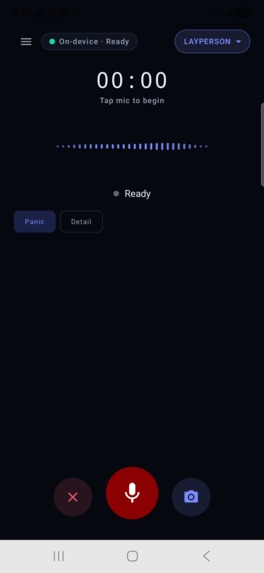

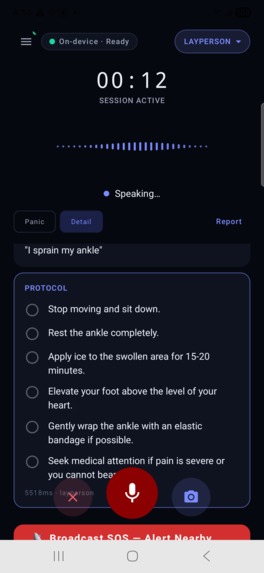

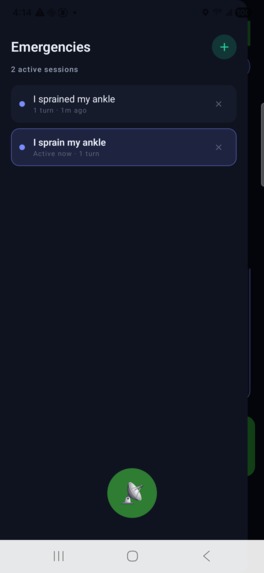

This is the start screen the user sees.


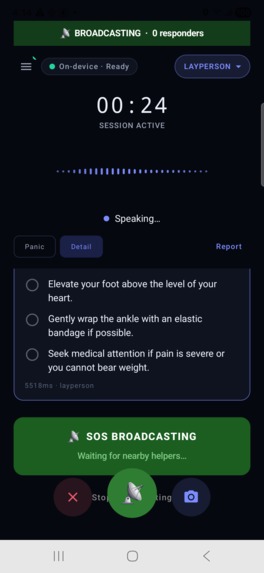

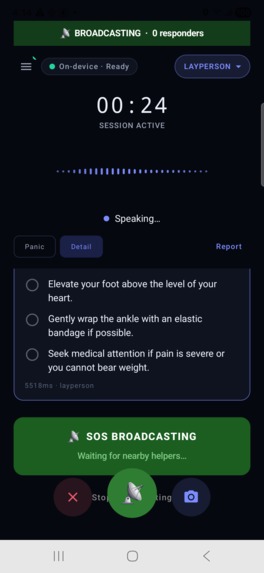

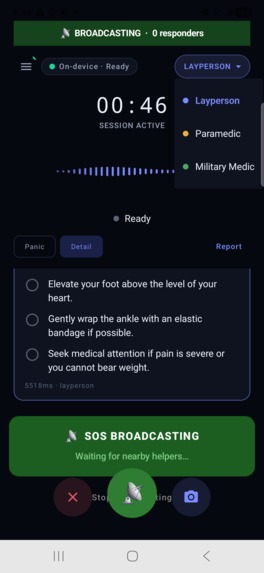

This is the SOS broadcast screen, an emergency feature that alerts nearby phones running the same app and guides them to the scene.


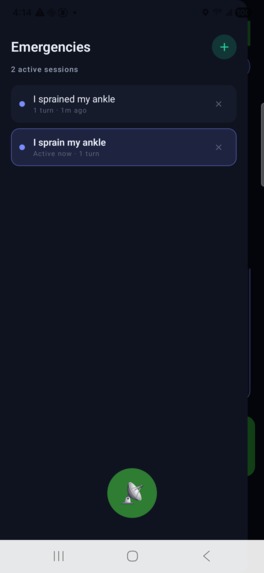

This screen lists all past emergencies, and the user can reopen any of them.


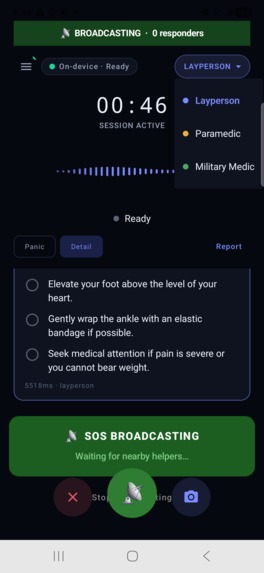

There are different handoff modes: if a paramedic arrives, we can hand the session off to them, and the app then talks to them in language suited to their role.


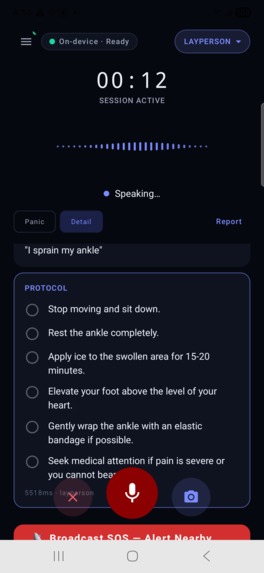

This is an example scenario: I used the prompt "I sprained my ankle", and the app gave a detailed description of what to do.


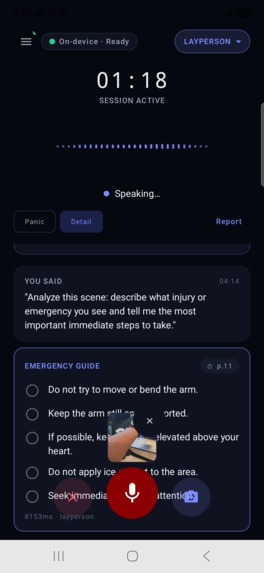

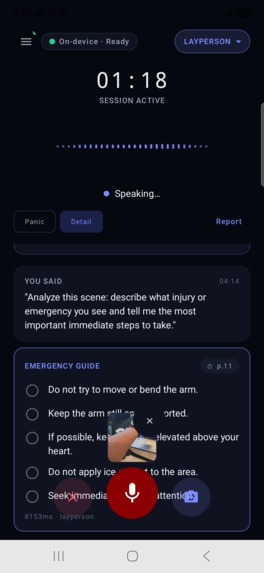

We can also take a photo, which the AI reads to understand the situation.

Inspiration

Every 4 minutes, someone in the US dies from a cardiac arrest that bystanders could have treated. The hardest part isn't willingness — it's that people freeze. They know something is wrong but have no idea what to do, and by the time they've searched the internet or waited for a 911 operator, the window has closed.

We asked ourselves: what if the right medical knowledge was always in your pocket, worked anywhere on Earth with zero signal, and could be accessed just by speaking?

That question became SecondLife.

What it does

SecondLife is a fully offline, voice-activated emergency medical assistant running Google's Gemma 4 E4B model entirely on-device via LiteRT. You speak the emergency — "someone is choking", "he's not breathing" — and within a second the app reads back exactly what to do, step by step, hands-free. Your hands stay on the patient.

- Sub-100ms response for the 9 most common emergencies via a deterministic keyword router; Gemma only runs for unknown or complex follow-ups
- Role-aware guidance: Layperson, Paramedic, and Military Medic modes change language, clinical depth, and protocol entirely
- Offline peer-to-peer SOS mesh using Google Nearby Connections over Bluetooth and WiFi Direct: one phone broadcasts an SOS, every nearby SecondLife device is alerted and navigated to the scene with a live compass
- Native CPR metronome at 100 BPM, pressure timers, and seizure timers that trigger EMS call thresholds automatically
- Camera scene analysis: capture the injury and Gemma's vision encoder incorporates what it sees into its guidance
- Tamper-evident audit log: every query and response chained with SHA-256 hashes for medical accountability
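To illustrate the tamper-evident audit log, here is a minimal sketch of a SHA-256 hash chain. It is written in Python for brevity and is not the app's code: the entry layout, the all-zero genesis hash, and the function names are assumptions made for this example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, query: str, response: str) -> str:
    """Hash one log entry together with the hash of the entry before it."""
    payload = json.dumps(
        {"prev": prev_hash, "query": query, "response": response},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, query: str, response: str) -> None:
    """Append a query/response pair, chaining it to the current tail."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "prev": prev_hash,
        "query": query,
        "response": response,
        "hash": entry_hash(prev_hash, query, response),
    })


def verify_chain(log: list) -> bool:
    """Recompute every hash. An edited or reordered entry, or one removed
    from the middle, no longer matches its neighbours and fails the check."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["query"], entry["response"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash covers the previous one, changing an old entry invalidates every entry after it. A plain chain like this cannot detect truncation of the newest entries unless the latest hash is also stored somewhere else.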

How we built it

On-device AI pipeline: A RAG pipeline uses FAISS vector search over clinical protocol PDFs, with BM25 retrieval as the fallback on Android. Gemma 4 E4B runs via LiteRT-LM on an explicit 8-thread CPU backend, because the GPU/NPU backends caused OOM crashes when loading the full 3.4 GB model into GPU-addressable RAM. The CPU backend uses memory-mapped I/O, so only touched pages enter physical RAM.
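As a rough illustration of the BM25 fallback, the sketch below scores protocol chunks against a query with the standard Okapi BM25 formula. It is Python written for this write-up, not the app's code: the whitespace tokenizer, the defaults k1 = 1.5 and b = 0.75, and the sample chunks are all assumptions, and it expects a non-empty list of non-empty chunks.

```python
import math
from collections import Counter


def tokenize(text: str) -> list:
    """Naive lowercase whitespace tokenizer (an assumption for this sketch)."""
    return text.lower().split()


def bm25_scores(query: str, docs: list, k1: float = 1.5, b: float = 0.75) -> list:
    """Return one Okapi BM25 score per document for the query."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: in how many chunks each term appears.
    doc_freq = Counter(term for t in tokenized for term in set(t))

    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in term_freq:
                continue
            n_t = doc_freq[term]
            idf = math.log(1 + (n_docs - n_t + 0.5) / (n_t + 0.5))
            tf = term_freq[term]
            # Length normalisation: long chunks are penalised relative to the average.
            norm = tf + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf * (k1 + 1) / norm
        scores.append(score)
    return scores


chunks = [
    "apply firm pressure to the bleeding wound",
    "tilt the head back and give rescue breaths",
    "cool the burn under running water",
]
scores = bm25_scores("bleeding wound", chunks)
best_chunk = chunks[scores.index(max(scores))]
```

BM25 needs only term counts, so it runs without an embedding model or a vector index, which is what makes it a plausible fallback where FAISS is unavailable.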

Android app: Jetpack Compose UI, CameraX for 768×768 scene capture, Android SpeechRecognizer for voice input, and TextToSpeech to read responses aloud hands-free. A persistent Conversation object retains multi-turn context across follow-up questions. A MeshService foreground service keeps Nearby Connections alive with the screen off.

Sensor layer: PCM 16-bit 16kHz audio capture, accelerometer shake-to-activate, and a CompassNavigator fusing GPS and rotation sensors to point responders toward the injured person in real time — no internet, no cell signal, satellites only.
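The compass logic can be sketched with two standard formulas: haversine distance and initial great-circle bearing between the responder's and the patient's GPS fixes. This is an illustrative Python sketch, not the CompassNavigator itself; the function names and the idea of subtracting the device heading to rotate an on-screen arrow are assumptions.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres via the haversine formula."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Initial bearing from point 1 to point 2, in degrees clockwise from true north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    y = math.sin(dlmb) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    return (math.degrees(math.atan2(y, x)) + 360) % 360


def arrow_rotation_deg(bearing: float, device_heading: float) -> float:
    """Angle to rotate the arrow relative to the top of the phone."""
    return (bearing - device_heading) % 360
```

Here `device_heading` stands for the azimuth reported by the rotation sensor. A real implementation would also need to handle stale GPS fixes and the difference between magnetic and true north.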

Challenges we ran into

Latency. Our first prototype took ~60 seconds to respond — completely unacceptable in an emergency. The breakthrough was realising Gemma doesn't need to run at all for the 9 most common emergencies. A deterministic keyword classifier now handles CPR, choking, and bleeding in under 1ms, dropping first-response time from 60 seconds to under 100ms.
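The routing idea can be sketched as a plain keyword lookup that only falls through to the model on a miss. The sketch below is Python for illustration; the keyword table, protocol ids, and function name are hypothetical, and it covers three emergencies rather than the app's nine.

```python
from typing import Optional

# Hypothetical keyword table, checked in insertion order.
PROTOCOL_KEYWORDS = {
    "cpr": ("not breathing", "no pulse", "cardiac arrest", "unresponsive"),
    "choking": ("choking", "can't breathe", "something stuck"),
    "bleeding": ("bleeding", "blood", "deep cut"),
}


def route(utterance: str) -> Optional[str]:
    """Return a protocol id on a keyword hit, or None to fall back to the model."""
    text = utterance.lower()
    for protocol, keywords in PROTOCOL_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return protocol
    return None


protocol = route("he's not breathing")
if protocol is None:
    pass  # only here would the slower on-device model be invoked
```

A substring scan like this is deterministic and costs microseconds, which is why the common cases never have to wait for inference. The trade-off is that the keyword table has to be curated carefully, since a false match would read out the wrong protocol.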

LiteRT API instability. The API changed mid-build — sendMessageAsync() was replaced with synchronous sendMessage(), maxNumTokens below 4096 triggered a DYNAMIC_UPDATE_SLICE native crash, and passing systemInstruction in ConversationConfig caused "Failed to invoke the compiled model" on every call. Each took hours to isolate with no documentation.

Nearby Connections hides RSSI. We designed a Bluetooth proximity meter assuming we could read raw signal strength, but the Nearby Connections API does not expose RSSI. We pivoted to live GPS coordinate exchange over Bluetooth payloads, which is more accurate and still fully offline.
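One way to exchange coordinates as a small byte payload is a fixed binary layout. The sketch below is Python for illustration only: the 24-byte format (two float64 values plus an int64 timestamp) and the function names are assumptions, not the wire format the app uses.

```python
import struct

# Assumed wire format: little-endian latitude and longitude (float64, degrees)
# followed by a Unix timestamp in milliseconds (int64). 24 bytes in total.
LOCATION_FORMAT = "<ddq"


def encode_location(lat: float, lon: float, timestamp_ms: int) -> bytes:
    """Pack one GPS fix into a fixed-size byte payload."""
    return struct.pack(LOCATION_FORMAT, lat, lon, timestamp_ms)


def decode_location(payload: bytes) -> tuple:
    """Unpack a payload into (lat, lon, timestamp_ms), rejecting wrong sizes."""
    if len(payload) != struct.calcsize(LOCATION_FORMAT):
        raise ValueError("unexpected payload size")
    return struct.unpack(LOCATION_FORMAT, payload)
```

Including a timestamp lets the receiver discard stale fixes, which matters when both parties are moving.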

Merge conflicts under pressure. Three team members working in parallel produced three-way conflicts where the AI pipeline, sensor integration, and UI changes all touched the same inference loop.

Accomplishments that we're proud of

- Getting Gemma 4 E4B running entirely on a phone with no server, no cloud, no internet, and doing it fast enough to be useful in a real emergency
- The peer-to-peer SOS mesh: watching two phones with no internet connection automatically alert each other and navigate a responder to the scene felt genuinely meaningful
- 60 seconds → under 100ms response time through pure architectural thinking, not hardware
- Building a system that works for a panicking bystander and a combat medic with a single tap between them
- A SHA-256 chained audit log that could hold up in a medical review, something most consumer health apps don't think about at all

What we learned

- On-device AI is ready for real-world applications: Gemma 4 E4B on a mid-range Android phone is genuinely capable, not a toy demo
- Workflow beats model: the biggest latency wins came from rethinking when to call the model, not from tuning it
- Offline-first forces better design: every feature had to work with zero infrastructure, which eliminated shortcuts and made the architecture cleaner
- LiteRT-LM's native layer is sensitive in ways the documentation doesn't warn you about: defensive coding and extensive logcat reading are essential
- Google Nearby Connections is powerful but opaque: understanding its limitations early would have saved significant time

What's next for SecondLife

- Wake word activation: "Hey SecondLife" triggers the mic without touching the phone, using an on-device wake word engine like Porcupine
- Real-time GPS distance: live coordinate exchange over Bluetooth so the compass shows exact metres to the injured person as both parties move
- Fix Nearby Connections RSSI: switch to raw BLE scanning to get real signal strength for indoor proximity when GPS is unavailable
- Expanded protocol library: stroke, drowning, hypothermia, diabetic emergency, spinal injury and more
- Wearable trigger: a Wear OS companion that lets you activate SecondLife from your watch when your hands are full
- EMS handoff QR code: generate a scannable summary of the entire session for paramedics arriving on scene

We distribute the app through a GitHub Release, which is where our APK can be downloaded.
