Feel the desert heat as sand and dust swirl around you while a storm approaches in your video

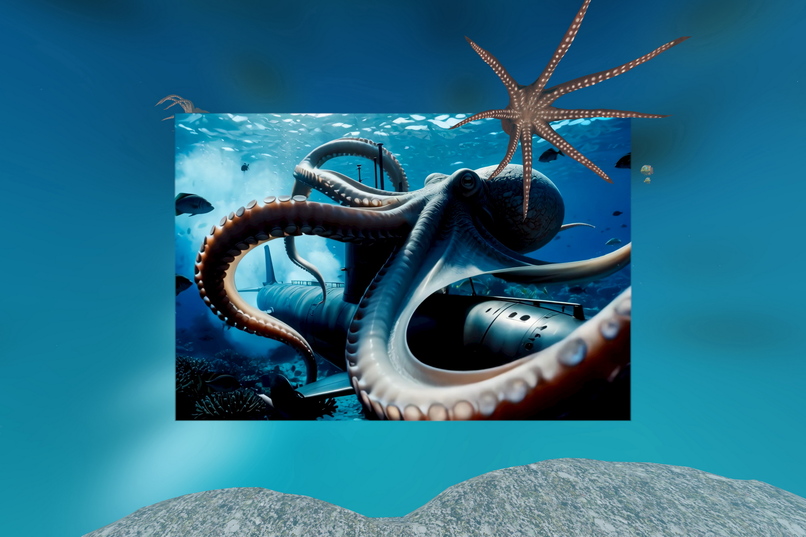

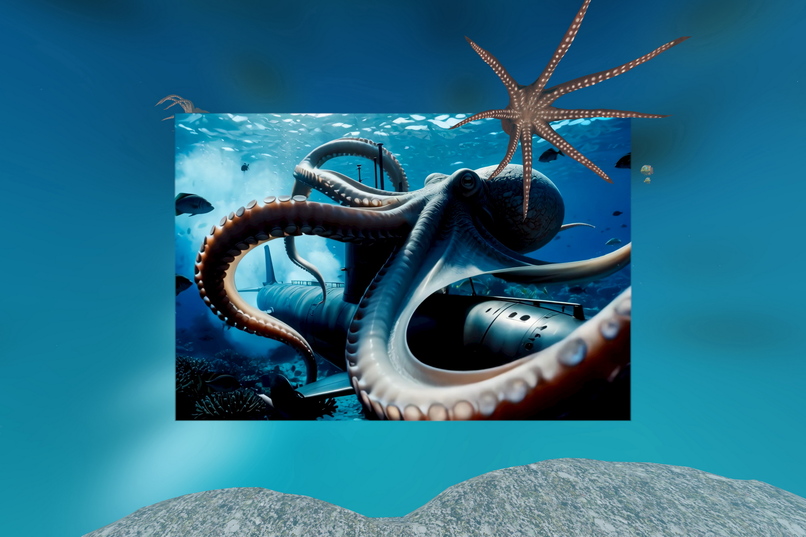

Underwater scenes in your movies surround you with animated sea life in every direction

While watching a rainy scene, feel like you're standing inside the rain!

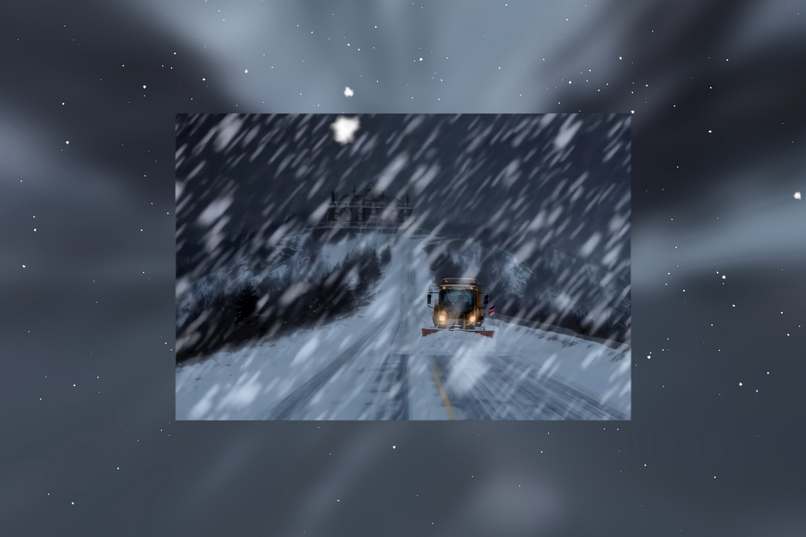

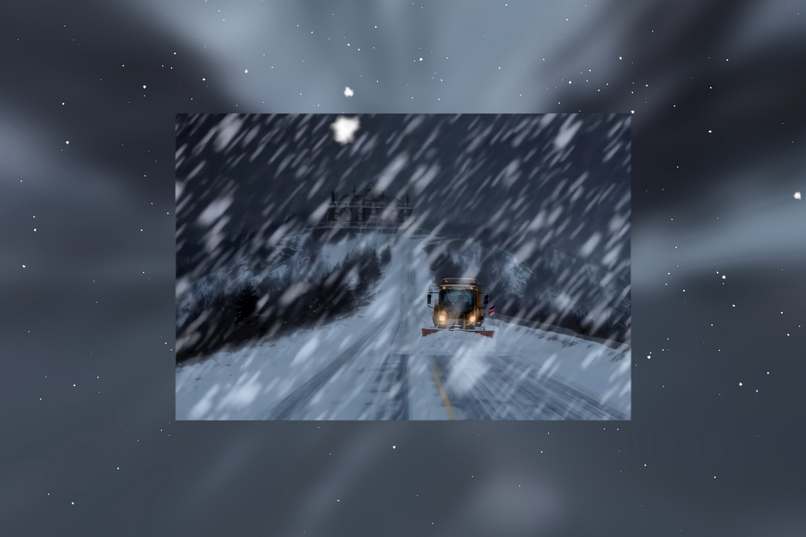

Snow falls all around you with 3D particles, extending your movie far beyond the cinema screen

The Birds

Falling autumn leaves bring the scene to life

Meadow ambience with floating dust and pollen adds immersion to your nature videos

Inspiration

Virtual Reality already delivers a high level of immersion, but traditional video playback is still mostly flat. With Scriptable Immersive Movie SFX / Environments we want to go beyond “watching a screen in VR” and let ordinary videos drive real-time 3D effects and 360° environments around the viewer.

A key moment for us was watching a movie scene with heavy rain: on a flat screen you just see the rain, but in VR, once we added our 3D particle rain all around the user, it suddenly felt like standing inside the rain rather than just observing it. Transitions like that, from “cinema screen” to “being there”, are what inspired this project.

What it does

Each video can be accompanied by a small sidecar .immerVR file or an extended .srt subtitle file, following our immerVR MovieHint specification v0.1.

These plain-text files contain timed movieHint cues that describe:

- which environment or particle effect to use (e.g., Rain, Snow, Leaves, Underwater, Halloween, Xmas, StPatricksDay, HotAirBalloons, Birds, Easter, Egypt, Platform),

- optional 360° background media wrapped around the viewer,

- intensity and fade-in/fade-out parameters.

Example: when the movie cuts to a rainy street scene, a movieHint with env:Rain and a suitable intensity kicks in exactly at that timestamp. In immerGallery, we then render 3D rain particles in full 360° around the user. The viewer no longer feels like they are watching a rectangle in front of them; they feel inside the same rain that the characters are standing in.
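The intensity and fade parameters can be read as a simple envelope over a cue's time range. The Python sketch below is one possible interpretation, not the immerGallery implementation: the function name `hint_intensity`, the parameter names `peak`, `fade_in` and `fade_out`, and the linear ramp are all assumptions made for illustration.

```python
def hint_intensity(t, start, end, peak=1.0, fade_in=0.0, fade_out=0.0):
    """Effect intensity at playback time t (seconds) for a single hint.

    Hypothetical helper: assumes a linear ramp up over `fade_in` seconds
    after `start` and a linear ramp down over `fade_out` seconds before `end`.
    """
    if t < start or t > end:
        return 0.0  # the hint is not active at this time
    gain = 1.0
    if fade_in > 0:
        gain = min(gain, (t - start) / fade_in)
    if fade_out > 0:
        gain = min(gain, (end - t) / fade_out)
    # Clamp to [0, 1] before scaling so overlapping fades never overshoot.
    return peak * max(0.0, min(1.0, gain))
```

With a cue from 10 s to 20 s, a peak of 0.8 and 2-second fades, this envelope is at half strength one second in, at full strength mid-cue, and back to zero outside the cue.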

Video players that are not aware of the format simply ignore the extra tags. Our VR media player app immerGallery and other compatible media players can parse them and render synced 3D particle systems and 360° spheres at runtime. This keeps the approach backward-compatible and makes it an open spec that other apps can implement with their own visual style.
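To illustrate how a compatible player might pick hints out of an SRT-style file, here is a minimal Python sketch. The cue layout used here (a `movieHint` keyword followed by `key:value` tokens on a cue line) and the `intensity` key are placeholders chosen for the example; only `env:Rain` comes from the description above, and the actual syntax is defined by the MovieHint specification. The function names `parse_time` and `parse_cues` are likewise hypothetical.

```python
import re

# Standard SRT timestamps look like 00:14:03,000
TIME_RE = re.compile(r"(\d+):(\d{2}):(\d{2})[,.](\d{3})")
# Assumed token shape: key:value with no spaces inside the value
TOKEN_RE = re.compile(r"(\w+):(\S+)")

def parse_time(stamp):
    """Convert an SRT timestamp to seconds."""
    match = TIME_RE.fullmatch(stamp.strip())
    if match is None:
        raise ValueError(f"bad timestamp: {stamp!r}")
    h, m, s, ms = map(int, match.groups())
    return h * 3600 + m * 60 + s + ms / 1000

def parse_cues(text):
    """Return one dict per hint line; ordinary subtitle text is skipped."""
    cues = []
    for block in re.split(r"\n\s*\n", text.strip()):
        lines = [ln.strip() for ln in block.splitlines() if ln.strip()]
        timing = next((ln for ln in lines if "-->" in ln), None)
        if timing is None:
            continue  # not a cue block
        start, end = (parse_time(part) for part in timing.split("-->"))
        for ln in lines:
            if ln.startswith("movieHint"):  # hypothetical keyword
                cues.append({"start": start, "end": end,
                             **dict(TOKEN_RE.findall(ln))})
    return cues

SAMPLE = """1
00:14:03,000 --> 00:14:45,500
movieHint env:Rain intensity:0.8

2
00:14:50,000 --> 00:14:52,000
It never rains here.
"""
```

Calling `parse_cues(SAMPLE)` yields a single hint for the first cue and ignores the plain subtitle in the second, which mirrors the idea that hints and regular subtitle text can live side by side in the same file.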

How we built it

During the competition we implemented a major update in immerGallery: a human-readable scripting layer defined by the MovieHint spec.

Key work items:

- Designing a minimal text syntax that survives real-world editors, copy/paste, and version control.

- Implementing an efficient parser and runtime that resolve overlapping hints on two channels (environment + background) at any playback time.

- Integrating the runtime into our existing rendering pipeline on Meta Quest, reusing and extending particle effects (rain, snow, leaves, sand dust, etc.) and 360° skyboxes.
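Resolving overlapping hints on the two channels can be sketched as follows. This is an illustrative Python example under stated assumptions, not our runtime code: the `Hint` record, the `resolve` function, the channel names as strings, the example file name `forest360.jpg`, and the tie-breaking rule (the hint that starts latest wins, with later file order breaking ties) are all hypothetical.

```python
from dataclasses import dataclass

CHANNELS = ("environment", "background")

@dataclass(frozen=True)
class Hint:
    channel: str   # "environment" or "background"
    start: float   # seconds
    end: float     # seconds, exclusive
    value: str     # e.g. an environment name or a 360° media path

def resolve(hints, t):
    """Return the winning hint per channel at playback time t (or None)."""
    active = {ch: None for ch in CHANNELS}
    for hint in hints:  # iterate in file order
        if hint.channel not in active:
            continue  # unknown channel: skip instead of failing
        if not (hint.start <= t < hint.end):
            continue  # not active at time t
        current = active[hint.channel]
        # Assumed rule: the most recently started hint overrides earlier ones.
        if current is None or hint.start >= current.start:
            active[hint.channel] = hint
    return active

hints = [
    Hint("environment", 0, 60, "Snow"),
    Hint("environment", 20, 30, "Rain"),
    Hint("background", 10, 50, "forest360.jpg"),
]
```

Here `resolve(hints, 25)` picks Rain for the environment channel and the forest sphere for the background, while `resolve(hints, 40)` falls back to Snow once the shorter Rain hint has ended. The linear scan is enough for a sketch; a production runtime would more likely keep hints sorted by start time and advance a cursor during playback.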

Because hints are plain text, creators can edit them with any editor, keep them in Git alongside their media, and share them as tiny files.

Challenges we ran into

- Balancing human readability with strict, unambiguous parsing rules.

- Keeping CPU/GPU cost low enough for smooth video playback on standalone headsets while enabling immersive effects and environments.

- Designing extensible enums and environment names that can evolve without breaking existing content or third-party implementations.
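One way to let environment names evolve without breaking older players is to treat unknown names as "no effect" rather than as errors. The Python sketch below shows that idea only; the function name `resolve_environment`, the case-insensitive matching, and the choice to return `None` for unknown names are assumptions, not rules taken from the spec.

```python
# Environment names listed in this write-up.
KNOWN_ENVIRONMENTS = frozenset({
    "Rain", "Snow", "Leaves", "Underwater", "Halloween", "Xmas",
    "StPatricksDay", "HotAirBalloons", "Birds", "Easter", "Egypt", "Platform",
})

def resolve_environment(name, supported=KNOWN_ENVIRONMENTS):
    """Return the canonical environment name, or None if unsupported.

    Returning None lets a player keep showing the video without an effect
    when a file uses a name introduced by a newer spec version.
    """
    by_lower = {known.lower(): known for known in supported}
    return by_lower.get(name.strip().lower())
```

A player built this way would map `"rain"` to `Rain` and quietly skip a name it has never seen, so newer content still plays on older implementations.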

Accomplishments that we're proud of

- Turning a previously image-only feature into a generalized, time-based system for movies.

- Achieving a format that is both creator-friendly and robust enough for production use.

- Keeping the whole concept open and implementation-agnostic so other VR media players can adopt it.

What we learned

We learned first-hand how much synchronized immersive effects change the experience: users report that scenes feel significantly more engaging when the environment around them reacts to what the movie shows (rain, snow, leaves, underwater scenes, etc.).

On the technical and design side, we:

- Gained experience with small, domain-specific, scriptable formats and how to keep them both strict and approachable.

- Learned how to structure and iterate on a formal specification that other developers can implement independently.

What's next

Next steps include:

- A visual authoring tool to “paint” hints on a timeline instead of editing text only.

- More environment presets and mood profiles (e.g., “tropical beach sunset”, “thunderstorm in the city”, “deep forest at dusk”, “rooftop fireworks night”).

- Deeper integration with Meta Quest features, such as hands-first controls to toggle immersive tracks and adjust intensity while watching.
