Landing page

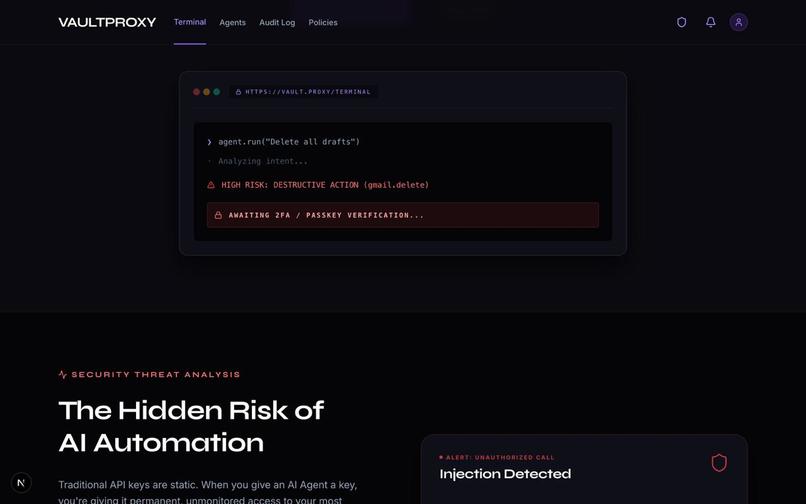

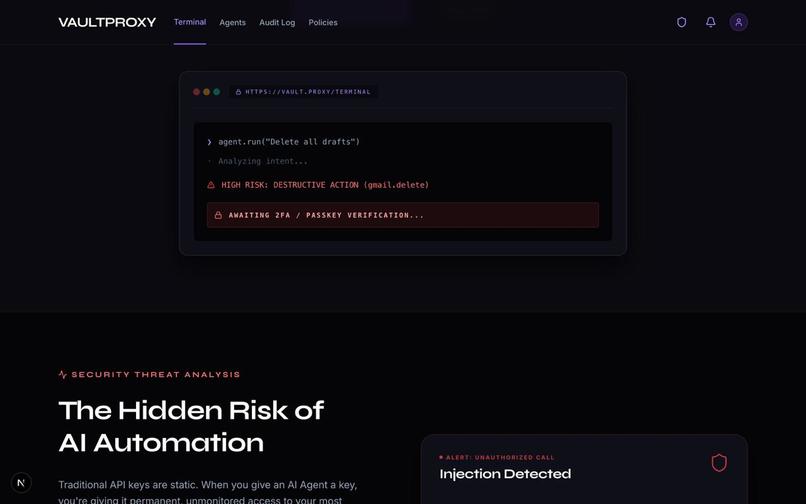

Real-time agent execution pipeline — high-risk actions are instantly detected and paused for MFA verification before any damage can occur.

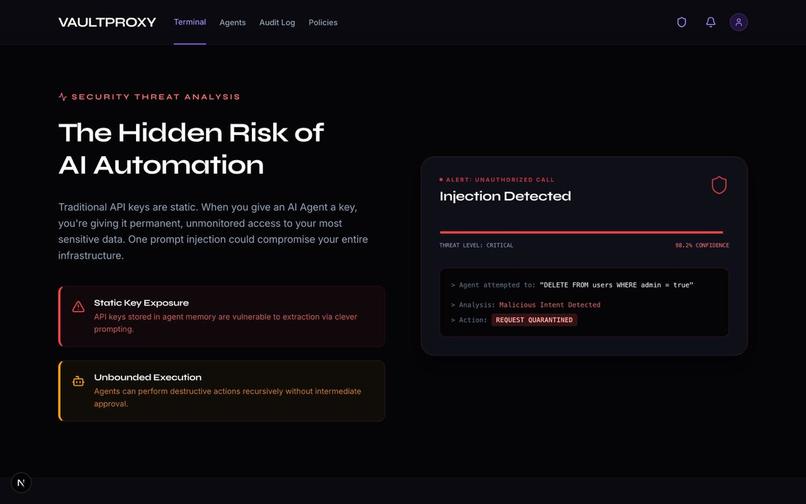

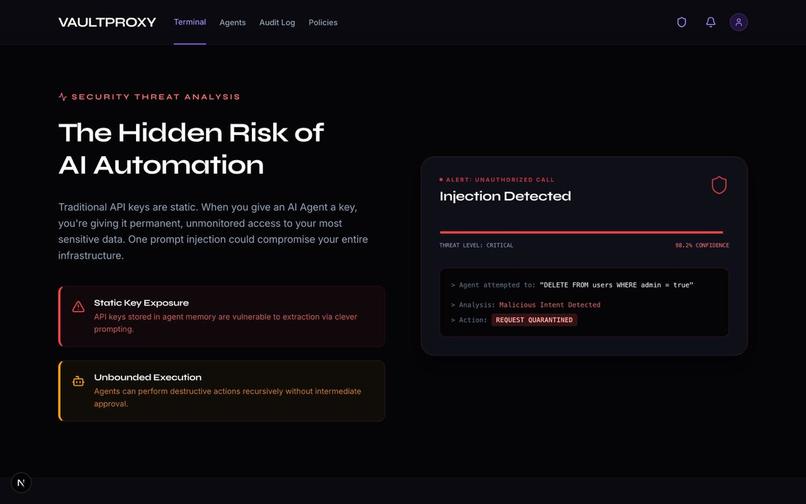

Detecting hidden risks in AI automation — preventing token exposure, uncontrolled execution, and prompt injection attacks in real time.

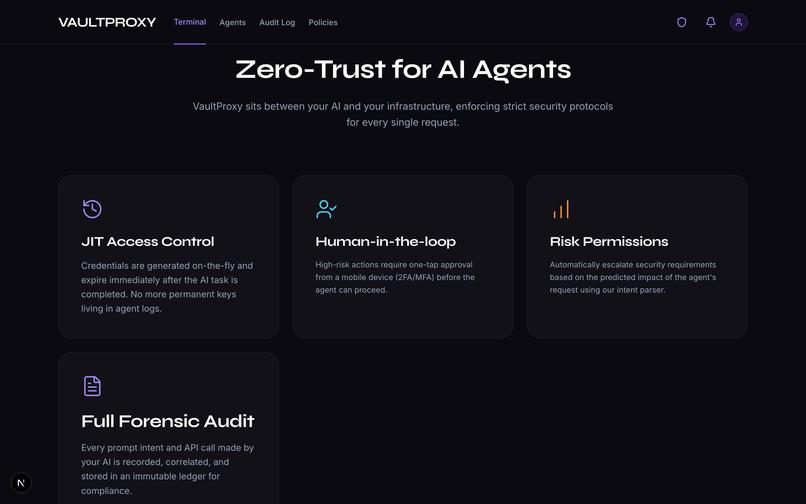

VaultProxy Dashboard: A secure control center designed to manage AI agent permissions and track every action with real-time audit logs.

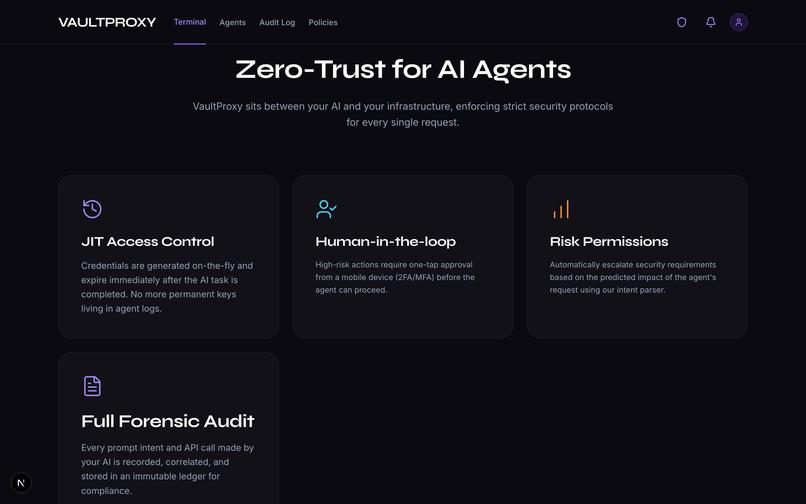

Core Zero-Trust architecture — Just-in-Time access, human-in-the-loop approvals, risk-based permissions, and full forensic audit logging.

Inspiration

We are entering an era where AI agents autonomously execute tasks on our behalf—from modifying active databases to dispatching hundreds of emails. While this unlocks incredible efficiency, it introduces a terrifying security gap. How do we stop an autonomous AI from executing a catastrophic mistake or falling victim to prompt injection?

In traditional finance, moving money requires friction: entering a Unified Payments Interface (UPI) PIN or passing a biometric scan. We realized that AI agents need exactly the same "human-in-the-loop" safeguard. VaultProxy was inspired by the need for a zero-trust firewall for AI, bringing financial-grade security gates to autonomous execution.

What it does

VaultProxy acts as an intermediary control center between an AI agent and its execution environment. When a user gives an AI a task, VaultProxy monitors what the agent intends to do. If it detects a sensitive or potentially destructive command (like sending emails or altering records), it intercepts the action and locks it into a secure "Vault."

The AI cannot proceed until an authorized human explicitly reviews the intercepted action and passes a high-fidelity, UPI-style security checkpoint to approve it.
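The interception step described above can be sketched in a few lines. This is a minimal TypeScript illustration assuming a simple per-tool risk table; the tool names, weights, threshold, and the in-memory `vault` map are all hypothetical and are not VaultProxy's actual code.

```typescript
// Hypothetical shape of a tool call proposed by the agent.
interface ToolCall {
  tool: string;
  params: Record<string, unknown>;
}

type GateDecision =
  | { kind: "execute"; call: ToolCall }
  | { kind: "vaulted"; id: string; call: ToolCall; score: number };

// Assumed per-tool risk weights (0 = harmless, 1 = destructive).
const RISK_WEIGHTS: Record<string, number> = {
  read_inbox: 0.1,
  send_email: 0.7,
  update_record: 0.8,
  delete_record: 1.0,
};

// Assumed score at or above which an action needs human approval.
const RISK_THRESHOLD = 0.5;

// In-memory stand-in for the Vault of pending actions (hypothetical).
const vault = new Map<string, ToolCall>();
let nextId = 1;

function riskScore(call: ToolCall): number {
  // Unknown tools are treated as maximally risky (fail closed).
  const base = RISK_WEIGHTS[call.tool] ?? 1.0;
  // Bulk actions (more than 10 items) raise the score, capped at 1.
  const raw = call.params["count"];
  const count = typeof raw === "number" ? raw : 1;
  return Math.min(1, base + (count > 10 ? 0.2 : 0));
}

// Lets low-risk calls through and parks everything else in the Vault.
function interceptToolCall(call: ToolCall): GateDecision {
  const score = riskScore(call);
  if (score < RISK_THRESHOLD) {
    return { kind: "execute", call };
  }
  const id = `action-${nextId++}`;
  vault.set(id, call);
  return { kind: "vaulted", id, call, score };
}
```

Failing closed on unknown tools is the important design choice here: a newly added tool stays behind the human checkpoint until someone deliberately assigns it a low weight.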

How we built it

We engineered VaultProxy using Next.js and TypeScript, with Lucide-React icons for a polished, responsive UI. The core architecture relies on three pillars:

- The Intent Analysis Engine: Before any tool is triggered, our parser evaluates the AI’s output to extract target parameters. It computationally weighs the action against predefined risk thresholds. If the action is flagged as sensitive, execution halts immediately.
- The Verification Gate: Halted actions are sent to the Vault. To release them, we built a simulated biometric scan coupled with a captive local authentication flow for approval.
- The Lockout Protocol: To neutralize brute-force attacks, we integrated a strict 3-strike lockout policy. The probability of an adversary guessing the correct secure parameters within $n=3$ attempts is modeled as:

$$ P(\text{Breach}) = 1 - \prod_{i=1}^{3} \left(1 - P(\text{Success}_i)\right) $$

Because our biometric and captive authentication flow keeps $P(\text{Success}_i)$ effectively at zero for an unauthorized user on every attempt, $P(\text{Breach})$ stays negligible, since it can never exceed $\sum_{i=1}^{3} P(\text{Success}_i)$. Three failed attempts result in an immediate 24-hour timeout, which kills the AI agent's session context.
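To make the lockout math and the 3-strike rule concrete, here is a small TypeScript sketch. `breachProbability` evaluates the $P(\text{Breach})$ formula for independent attempts; `LockoutState` and `recordAttempt` are hypothetical names illustrating one way to track failures and a 24-hour lock, not the project's actual implementation.

```typescript
// P(Breach) = 1 - prod_i (1 - P(Success_i)), assuming independent attempts.
function breachProbability(perAttempt: number[]): number {
  return 1 - perAttempt.reduce((acc, p) => acc * (1 - p), 1);
}

const MAX_ATTEMPTS = 3;
const LOCKOUT_MS = 24 * 60 * 60 * 1000; // 24 hours

// Hypothetical persisted state for one pending approval.
interface LockoutState {
  failures: number;
  lockedUntil: number | null; // epoch ms; null when not locked
}

type AttemptResult = "approved" | "rejected" | "locked";

// Records one verification attempt at time `now` (epoch ms).
// On "locked", the caller is expected to end the agent's session context.
function recordAttempt(state: LockoutState, ok: boolean, now: number): AttemptResult {
  if (state.lockedUntil !== null && now < state.lockedUntil) return "locked";
  if (state.lockedUntil !== null) {
    // The lockout window has expired: start over with a clean slate.
    state.lockedUntil = null;
    state.failures = 0;
  }
  if (ok) {
    state.failures = 0;
    return "approved";
  }
  state.failures += 1;
  if (state.failures >= MAX_ATTEMPTS) {
    state.lockedUntil = now + LOCKOUT_MS;
    return "locked";
  }
  return "rejected";
}
```

For example, even a generous 1% guess rate per attempt gives $1 - 0.99^3 \approx 0.0297$, and the lock then stops further guessing for a full day. Storing `lockedUntil` as an absolute timestamp (rather than a countdown in component state) is what lets the lock survive re-renders and page reloads.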

Challenges we ran into

Building a security layer for unpredictable AI models was chaotic:

- The Auth0 Catastrophe: Mid-development, our third-party Auth0 integration completely broke down. Because we couldn't rely on it for our core security loop, we had to rapidly adjust and build our own locally hosted, captive authentication system from scratch.
- Taming AI Hallucinations: When extracting dynamic parameters (like how many emails to send), the AI would sometimes hallucinate default values. We had to drastically tighten our parser rules and regular expressions to enforce strict adherence to the exact input.
- Complex Lockout State Management: Preserving the exact state of a blocked AI action across different React UI components over a 24-hour lockout required meticulous state management to ensure we didn't lose the original context.

Accomplishments that we're proud of

We are incredibly proud of successfully pivoting under pressure. When our external authentication pipeline broke, building a captive local fallback in a matter of hours ensured the prototype remained fully functional. We are also proud of the UI/UX; taking the time to refine the layout and swap basic placeholders for clean Lucide icons transformed the project from a rough script into a premium product.
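For the hallucinated-default problem listed under Challenges, one way to enforce strict adherence to the exact input is to parse the count from the user's own prompt and refuse to proceed when the agent's value disagrees or the user never stated one. The regular expression and function names below are hypothetical, a sketch of the idea rather than VaultProxy's parser.

```typescript
// Hypothetical pattern: matches phrases like "send 5 emails" or "12 promotional emails".
const COUNT_PATTERN = /\b(\d{1,6})\s+(?:[a-z-]+\s+)?emails?\b/i;

// Returns the count the user explicitly asked for, or null if none is stated.
function extractRequestedCount(prompt: string): number | null {
  const match = COUNT_PATTERN.exec(prompt);
  return match ? Number(match[1]) : null;
}

type ParamCheck = { ok: true; count: number } | { ok: false; reason: string };

// Rejects any agent-proposed count that is not literally backed by the prompt.
function validateEmailCount(prompt: string, proposedCount: unknown): ParamCheck {
  const requested = extractRequestedCount(prompt);
  if (requested === null) {
    // Never fall back to a default the model may have invented.
    return { ok: false, reason: "prompt does not state a count; refusing to assume a default" };
  }
  if (proposedCount !== requested) {
    return { ok: false, reason: `agent proposed ${String(proposedCount)} but user asked for ${requested}` };
  }
  return { ok: true, count: requested };
}
```

The key choice is treating the user's text, not the model's output, as the source of truth for any number that controls blast radius.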

What we learned

- Friction is a Feature: Developers are usually taught to make user experiences invisible. VaultProxy taught us that in security, friction is your best friend. Forcing the user to pause, read, and scan biometrics is the bedrock of safety.
- Own Your Critical Path: The Auth0 failure taught us that if a feature is fundamental to your app's main purpose (in this case, the security gate), you should limit reliance on external dependencies that you can't control or debug.

What's next for VaultProxy

Bonus Blog Post

Whenever you grant an AI agent the ability to execute real-world tasks—like reading user data or dispatching emails on your behalf—you are faced with a terrifying dilemma. You have to give the machine your keys.

When building VaultProxy, the core architecture required our "Intent Parser" to intercept sensitive AI tool interactions (like sending a promotional campaign via Gmail) and effectively freeze the AI's execution context until a human passed a strict security authorization gate. However, this introduced an immediate, massive technical hurdle: How do we securely manage the third-party access tokens between the time the AI initiates the action and the time the human approves it?

If a halted action sits in our review "Vault" for hours, a standard OAuth access token will expire. The traditional approach would be to store raw Google refresh tokens directly in our local database so the agent could refresh them itself upon human approval. But in a zero-trust environment designed specifically to prevent unauthorized database access and AI exploitation, storing highly sensitive third-party refresh tokens in our own database was an unacceptable vulnerability.

This is exactly where Auth0's Token Vault became the architectural backbone of our project.

Instead of managing cryptographic secrets ourselves, we offloaded the entire third-party credential layer to Token Vault. By enabling offline_access through Token Vault on our Google connection, we ensured that the AI agent never touches the raw refresh tokens. When a user finally clears the VaultProxy biometric security gate, our backend requests a fresh, short-lived access token directly from Token Vault at the moment of execution.
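The just-in-time pattern described above can be sketched as follows. `TokenProvider` is a hypothetical interface standing in for the Token Vault exchange (this sketch deliberately does not reproduce Auth0's actual API), and `PendingAction` and `executeApproved` are illustrative names; the point is only that the access token is fetched after approval and never stored on the vaulted action.

```typescript
// Hypothetical abstraction over the credential store (e.g. a Token Vault exchange).
interface TokenProvider {
  getAccessToken(userId: string, connection: string): Promise<string>;
}

// A vaulted action holds no credentials, only what is needed to request one later.
interface PendingAction {
  id: string;
  userId: string;
  connection: string; // assumed connection name, e.g. "google-oauth2"
  run: (accessToken: string) => Promise<void>;
}

// Fetches a short-lived token only once the human has approved,
// passes it straight to the action, and keeps no copy of it.
async function executeApproved(
  action: PendingAction,
  approved: boolean,
  tokens: TokenProvider,
): Promise<"executed" | "skipped"> {
  if (!approved) return "skipped";
  const accessToken = await tokens.getAccessToken(action.userId, action.connection);
  await action.run(accessToken);
  return "executed";
}
```

Because the token is requested only after approval, an action can wait in the Vault for hours without anything expiring, and a rejected action never causes a token to be issued at all.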

Implementing Token Vault kept raw third-party refresh tokens out of our own storage entirely, removing that leakage risk from our side of the system. It transformed our hackathon concept from a neat "prompt interceptor" into an AI execution firewall that never holds long-lived third-party credentials, an achievement we are incredibly proud of.

Built With

- 3.3

- api

- auth0

- better-sqlite3

- css

- framer

- groq

- llama

- lucide

- next.js

- qrcode

- react

- sdk

- speakeasy

- tailwind

- token

- typescript

- vault
