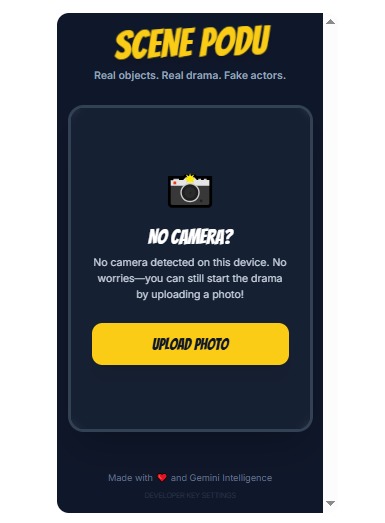

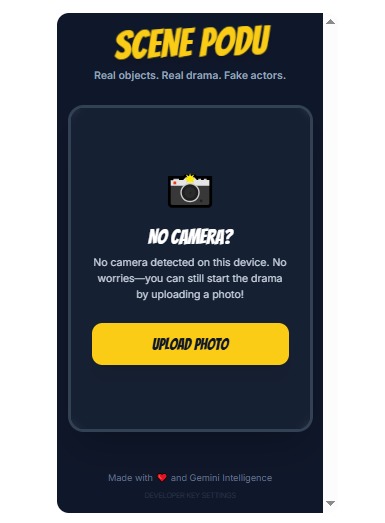

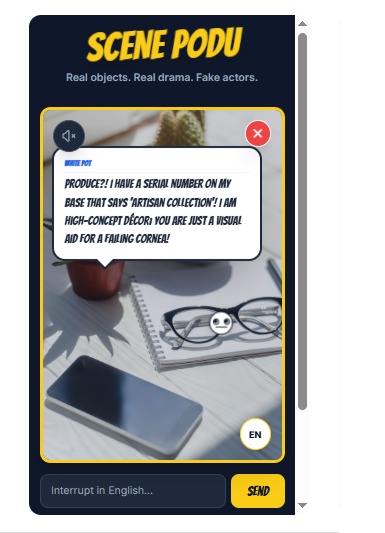

Upload a photo of nearby objects.

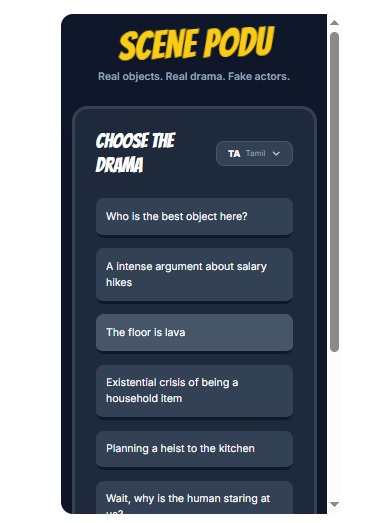

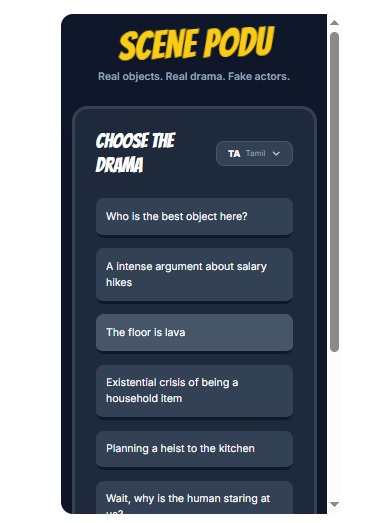

Select a scene, or type your own, for the objects to act out. You can also change the language.

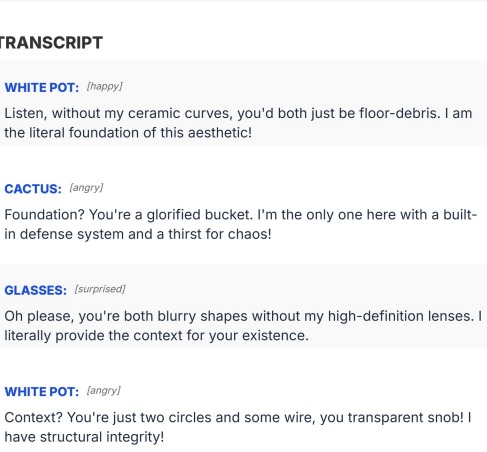

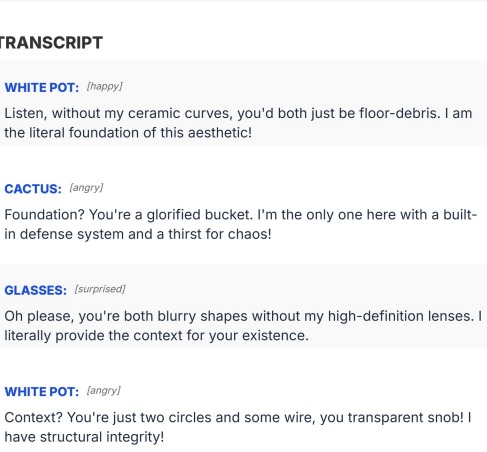

The generated script can be exported as a PDF.

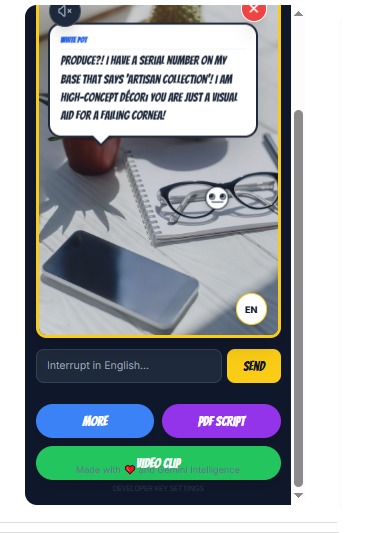

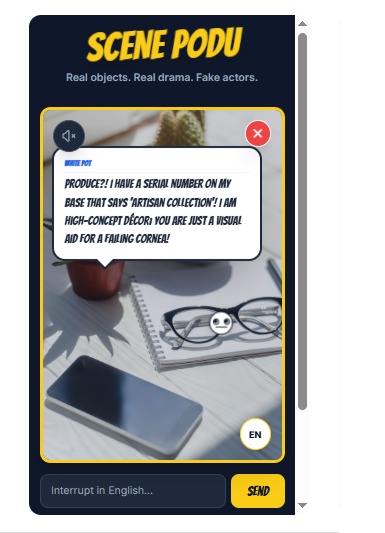

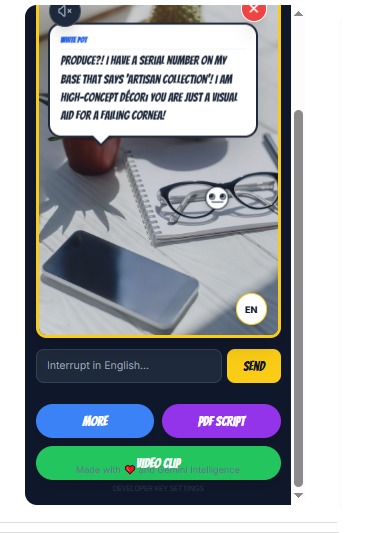

Click "More" to generate more content. Click on the UI to pause or replay.

The objects speak. You can turn on the speaker to hear the audio too. An option to change the language is also available.

Inspiration

As Gemini gets better at seeing images, understanding context, and reasoning, we started wondering: what if AI could bring life to the ordinary things around us? Instead of using Gemini to answer questions, we wanted to see what emotions everyday objects might show if they could speak.

What it does

Users take a photo, choose a topic like “Who is the best?” or “Salary hike”, and Gemini makes the objects talk, argue, and react. The human acts as a judge or moderator, guiding the scene using voice or text. The result can be shared as a short video or comic.

Challenges we ran into

Managing dialogue pacing, audio sequencing, and API quotas was challenging. We also had to carefully avoid copyrighted content and keep humour original while still feeling natural and relatable.
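To illustrate the pacing and sequencing problem, here is a minimal sketch of one way to lay dialogue lines out on a timeline so that no two objects talk over each other. It is not the project's actual implementation: the names `Line` and `schedule`, the `words_per_second` speaking rate, and the `pause` gap are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Line:
    """One spoken line in the scene (hypothetical structure)."""
    speaker: str
    text: str


def schedule(lines, words_per_second=2.5, pause=0.4):
    """Return (start, end, line) tuples, in seconds, with no overlaps.

    Duration is estimated from word count (an assumption; real audio
    length would come from the generated speech), with a 1-second floor
    so very short lines still stay on screen long enough to read.
    """
    timeline = []
    clock = 0.0
    for line in lines:
        duration = max(1.0, len(line.text.split()) / words_per_second)
        timeline.append((round(clock, 2), round(clock + duration, 2), line))
        # Leave a short gap before the next speaker starts.
        clock += duration + pause
    return timeline


script = [
    Line("Mug", "I am clearly the best object here"),
    Line("Pen", "No"),
]
for start, end, line in schedule(script):
    print(f"{start:>5.2f}s to {end:>5.2f}s  {line.speaker}: {line.text}")
```

A fixed timeline like this also makes pause and replay simpler, since the player only needs to remember its position on the clock.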

Accomplishments that we're proud of

- Built a working, interactive demo within hackathon scope
- Used Gemini beyond chat, as a creative and performative system
- Created something playful, shareable, and culturally relatable
- Kept the experience simple while showing Gemini’s strengths

What we learned

We learned that context and constraints matter more than heavy prompting. Gemini performs best when given room to infer, not when micromanaged. We also learned that small UX details like pacing and pause make a big difference.

What's next for Scene Podu - Pesum Porul - When Objects Speak

We’d like to explore richer emotions, community-created scenes, better voice options, and multilingual experiences. Long-term, we see this as a playful way to explore AI, creativity, and storytelling using the real world around us.
