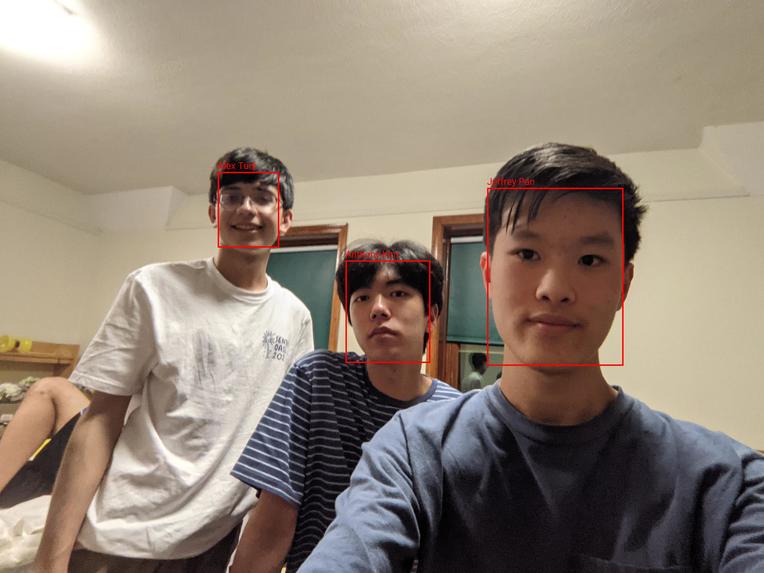

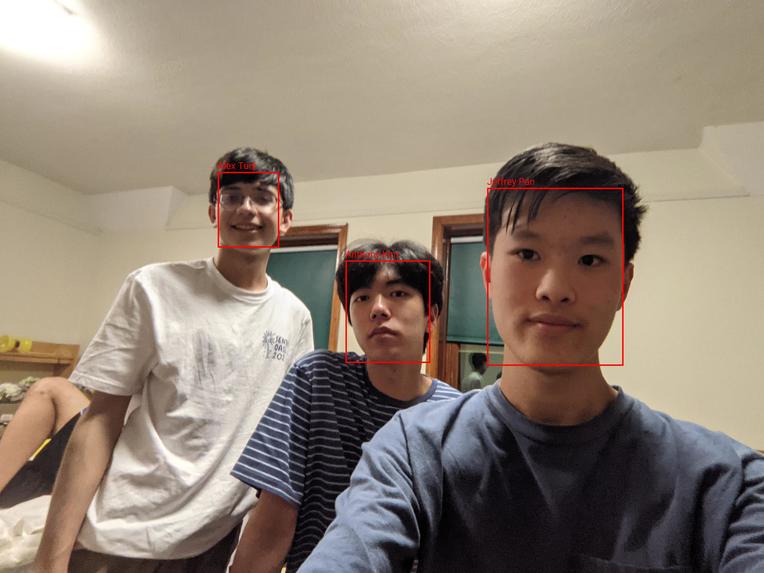

In this image, a facial recognition model easily identifies each of us.

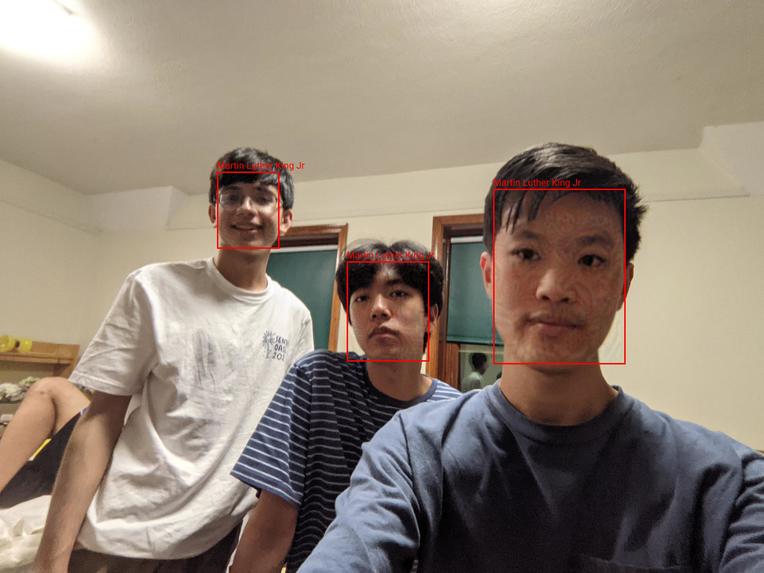

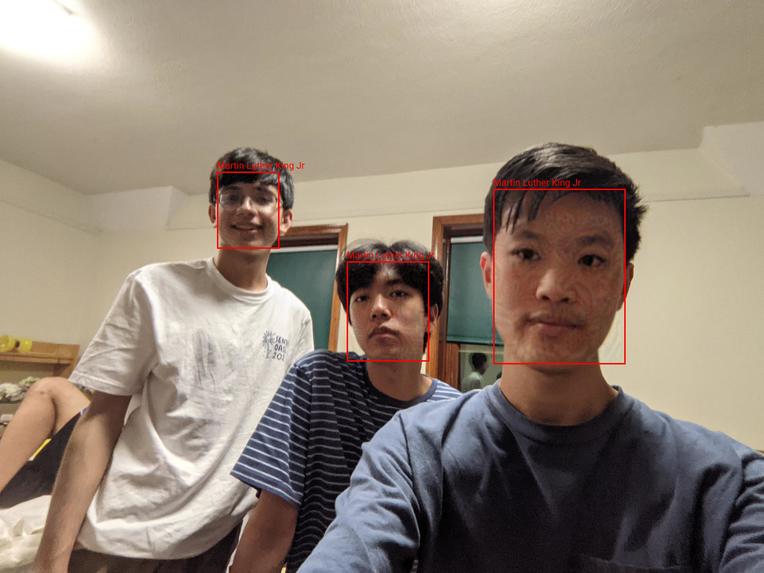

To prevent this identification, we add adversarial noise to the pixels around each face.

We can even cause the facial recognition model to misidentify all of us as MLK.

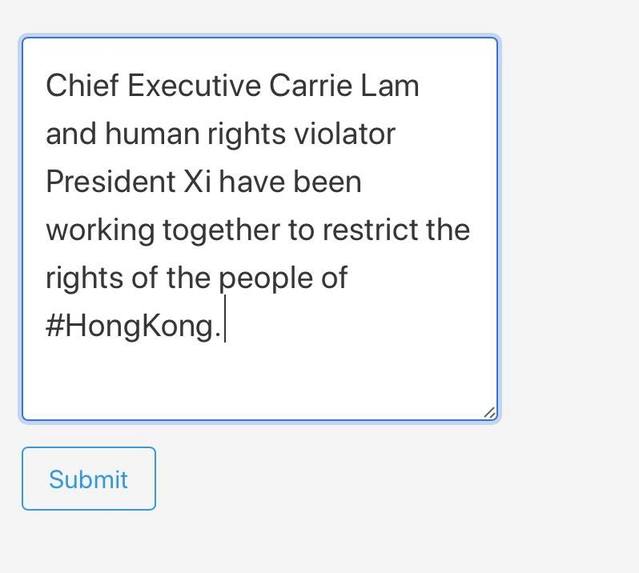

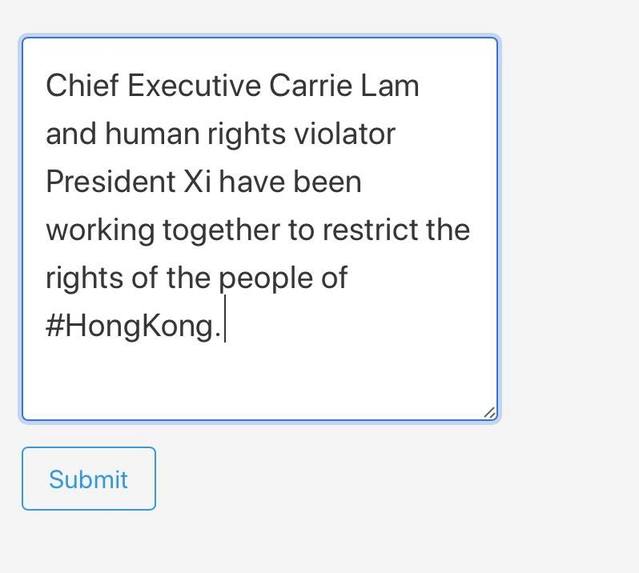

Here, we have an example of a caption criticizing the government.

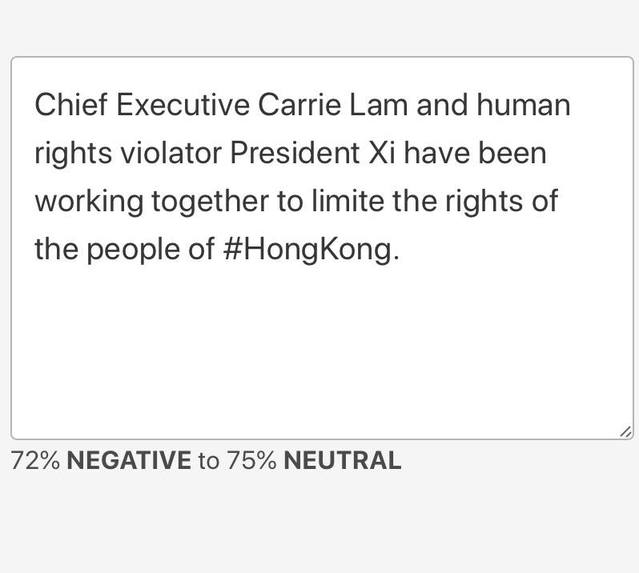

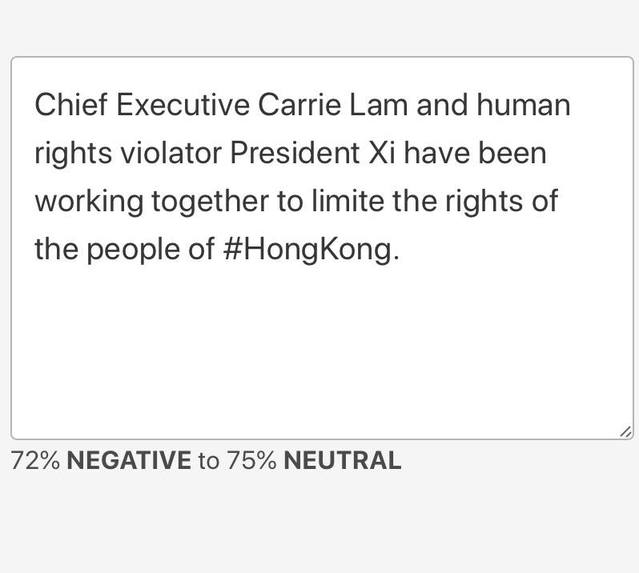

By changing the caption's text only slightly, we can hide its negative sentiment.
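Saru's caption attack is built on TextFooler. The sketch below illustrates the general shape of such a word-substitution attack under loudly stated assumptions: the sentiment model is a hypothetical toy lexicon scorer, and the synonym table is hand-written (TextFooler itself draws candidates from counter-fitted word embeddings and filters them with part-of-speech and sentence-similarity checks, all omitted here). It ranks words by how much deleting each one lowers the probability of negative sentiment, then greedily swaps in synonyms until the predicted label flips. The names `LEXICON`, `SYNONYMS` and `textfooler_sketch` are illustrative, not Saru's code.

```python
import math

# Hypothetical toy sentiment model: a tiny word-score lexicon stands in for
# whatever sentiment classifier a censor might actually run.
LEXICON = {"corrupt": -2.0, "failing": -1.5, "lies": -1.5, "great": 2.0, "honest": 1.5}

# Hypothetical synonym table. TextFooler proper takes candidates from
# counter-fitted word embeddings and filters them with part-of-speech and
# sentence-similarity checks; this sketch skips those filters.
SYNONYMS = {
    "corrupt": ["crooked", "dishonest"],
    "failing": ["faltering", "struggling"],
    "lies": ["fibs", "falsehoods"],
}


def negative_prob(words):
    """P(negative) under the toy model: sigmoid of the negated lexicon score."""
    score = sum(LEXICON.get(w.lower(), 0.0) for w in words)
    return 1.0 / (1.0 + math.exp(score))


def is_negative(words):
    return negative_prob(words) > 0.5


def swap(words, i, new_word):
    """Return a copy of `words` with position i replaced by `new_word`."""
    return words[:i] + [new_word] + words[i + 1:]


def textfooler_sketch(text):
    words = text.split()
    base = negative_prob(words)

    # Step 1: word importance = drop in P(negative) when the word is deleted.
    def importance(i):
        return base - negative_prob(words[:i] + words[i + 1:])

    order = sorted(range(len(words)), key=importance, reverse=True)

    # Step 2: from most to least important word, substitute the synonym that
    # lowers P(negative) the most; stop as soon as the label flips.
    for i in order:
        candidates = SYNONYMS.get(words[i].lower(), [])
        if not candidates:
            continue
        best = min(candidates, key=lambda c: negative_prob(swap(words, i, c)))
        if negative_prob(swap(words, i, best)) < negative_prob(words):
            words = swap(words, i, best)
        if not is_negative(words):
            break
    return " ".join(words)
```

With this toy model, `textfooler_sketch("corrupt leaders keep failing our great nation")` changes a single word and returns `"crooked leaders keep failing our great nation"`, which the toy model no longer labels negative because "crooked" lies outside its vocabulary. The greedy, importance-ordered search is what keeps the number of edited words small.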

Inspiration

Machine learning has allowed computers to understand the world through vision and language like never before. However, the proliferation of these algorithms has also led to their increasing abuse.

In the United States, at least one in four law enforcement agencies is able to use facial recognition technology with little oversight. Increasingly, governments have used facial recognition algorithms to crack down on protests, identifying and retaliating against protestors.

Additionally, advances in powerful language models make it easier for oppressive regimes around the world to detect messages spread by political activists and to retaliate against the people who post them.

We've seen how social media has played a crucial role in bridging gaps and uniting people across the world against oppression. So we made Saru to help protect protestors' social media posts from government retaliation and censorship.

What it does

With Saru, you can easily upload an image and a caption to our website. Using state-of-the-art adversarial attacks, we slightly modify the pixels in your image and the wording of your caption to evade facial recognition and sentiment analysis algorithms, allowing you to express your opinion without fear.

How we built it

Saru uses two grey-box adversarial attacks: one to evade facial recognition and one to evade sentiment analysis. For images, we transfer a projected gradient descent (PGD) attack tuned on a FaceNet model, perturbing the input image so that its faces no longer match a database of identities. For captions, we use the recent TextFooler algorithm to flip the predicted sentiment, helping posts avoid government detection.
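The image side can be illustrated with a minimal untargeted L-infinity PGD sketch. Assumptions, stated loudly: FaceNet is replaced by a hypothetical random linear embedding followed by L2 normalisation, so the gradient can be written by hand in NumPy; `eps`, `alpha` and `steps` are illustrative values, not Saru's settings; and the optional `mask` mirrors restricting noise to the pixels around each face. The attack descends on the cosine similarity between the perturbed image's embedding and an enrolled identity's embedding (equivalently, it increases the L2 distance between unit-norm embeddings, which FaceNet-style verification thresholds) and, after every step, projects back onto the eps-ball around the original image and the valid [0, 1] pixel range. A real pipeline would obtain the gradient by autodiff through the face model instead.

```python
import numpy as np

# Hypothetical stand-in for FaceNet: a fixed random linear map followed by L2
# normalisation. A real attack would backpropagate through the network.
rng = np.random.default_rng(0)
N_PIXELS, EMB_DIM = 28 * 28, 16
W = rng.normal(size=(EMB_DIM, N_PIXELS))


def embed(x):
    """Unit-norm embedding of a flattened image x with pixels in [0, 1]."""
    z = W @ x
    return z / np.linalg.norm(z)


def similarity_and_grad(x, target):
    """Cosine similarity to `target` (a unit vector) and its gradient w.r.t. x."""
    z = W @ x
    norm = np.linalg.norm(z)
    cos = z @ target / norm
    # d/dz of (z . t) / ||z||  =  t / ||z||  -  cos * z / ||z||^2
    dcos_dz = target / norm - cos * z / norm**2
    return cos, W.T @ dcos_dz


def pgd_untargeted(x, enrolled, eps=0.1, alpha=0.01, steps=40, mask=None, seed=0):
    """L-infinity PGD that pushes embed(x) away from an enrolled identity.

    `mask` (same shape as x, entries 0 or 1) limits the noise to selected
    pixels, e.g. a region around a detected face.
    """
    if mask is None:
        mask = np.ones_like(x)
    local_rng = np.random.default_rng(seed)
    # Random start inside the eps-ball: if `enrolled` was computed from x
    # itself, the clean image sits at a similarity maximum with ~zero gradient.
    delta = local_rng.uniform(-eps, eps, size=x.shape) * mask
    x_adv = np.clip(x + delta, 0.0, 1.0)
    for _ in range(steps):
        _, grad = similarity_and_grad(x_adv, enrolled)
        # Descend on similarity: step against the sign of the gradient.
        x_adv = x_adv - alpha * np.sign(grad) * mask
        # Project onto the eps-ball around x, then onto the valid pixel range.
        x_adv = np.clip(x_adv, x - eps, x + eps)
        x_adv = np.clip(x_adv, 0.0, 1.0)
    return x_adv


# Usage: a toy "face" enrolled in the database, then attacked.
x = rng.uniform(0.0, 1.0, size=N_PIXELS)
enrolled = embed(x)
x_adv = pgd_untargeted(x, enrolled)
print(float(embed(x) @ enrolled), float(embed(x_adv) @ enrolled))
```

Stepping by the sign of the gradient and clipping to the eps-box is what makes this PGD under an L-infinity constraint: no pixel moves by more than `eps`, which keeps the noise hard to see while still driving the embedding away from the enrolled identity.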

What we learned

We're proud of building such a technically challenging project. Integrating two recent machine learning techniques into our backend, and then tying everything together into one cohesive website, was difficult but rewarding.

We've learned a lot about how frighteningly good today's machine learning models are, and about how adversarial attacks can subvert them.
