AI Family Monitor analyzing a video frame and classifying it as Green (Safe Content) using Gemini multimodal AI.


The system detects potentially sensitive content and marks the frame as Yellow, warning parents about possible concerns.


Gemini analyzing a violent confrontation scene and classifying it as Red (Inappropriate Content) in the SafeStream AI monitoring system.


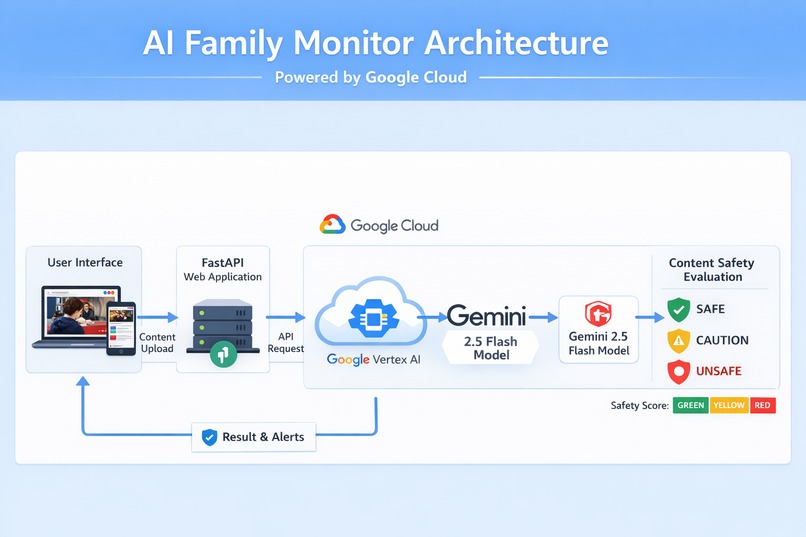

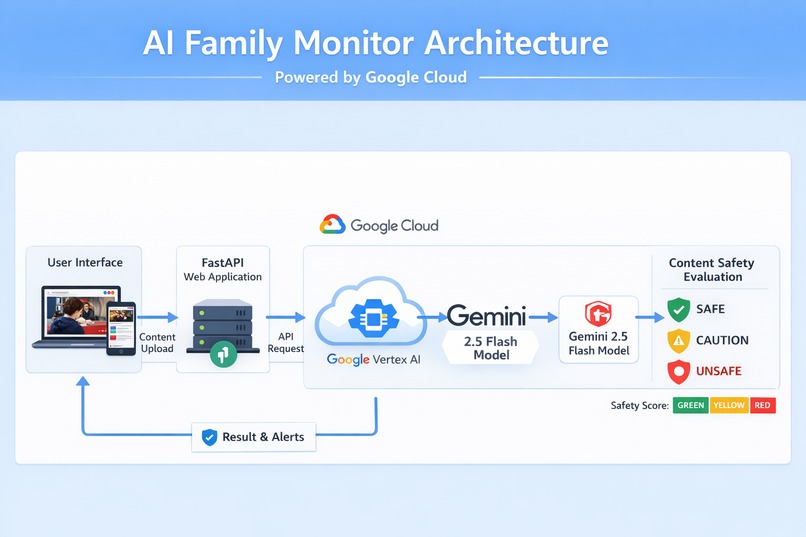

Architecture Diagram

Inspiration

Today, children consume large amounts of digital media through streaming platforms, smart TVs, tablets, and social media. While parental control tools exist, most rely on simple keyword filtering or website blocking. These approaches cannot understand the visual content actually displayed on the screen.

As a result, children may still be exposed to violent scenes, inappropriate imagery, or disturbing media content. This inspired us to explore how multimodal AI agents powered by Google Gemini could analyze visual content and help families monitor media safety in real time.

SafeStream AI was created to demonstrate how AI can move beyond text-based systems and actively analyze visual environments to help create safer digital experiences for families.

What it does

SafeStream AI is a multimodal AI-powered content safety system that analyzes video frames and classifies them according to family safety guidelines.

The system evaluates visual content and categorizes it into three levels:

- 🟢 Green – Safe content

- 🟡 Yellow – Potentially sensitive content

- 🔴 Red – Unsafe or inappropriate content

Users upload frames extracted from a video stream, and the AI analyzes each frame using the Gemini multimodal model. The system then generates an overall safety score based on the most severe classification detected.

This simple scoring mechanism allows parents to quickly understand whether the content being watched is appropriate.
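The "most severe classification wins" rule fits in a few lines of Python. This is an illustrative sketch, not the project's code: `Safety` and `overall_safety` are hypothetical names, and each frame is assumed to have already been labeled green, yellow, or red.

```python
from enum import IntEnum


class Safety(IntEnum):
    """Safety levels ordered by severity, so max() returns the worst one."""
    GREEN = 0   # safe content
    YELLOW = 1  # potentially sensitive content
    RED = 2     # unsafe or inappropriate content


def overall_safety(frame_labels):
    """Return the most severe level among per-frame labels such as "green" or "RED"."""
    levels = [Safety[label.strip().upper()] for label in frame_labels]
    if not levels:
        raise ValueError("at least one frame classification is required")
    return max(levels)


print(overall_safety(["green", "yellow", "green"]).name)  # YELLOW
print(overall_safety(["green", "red", "yellow"]).name)    # RED
```

Ordering the levels as integers reduces the aggregation to a single `max()`, and one Red frame is enough to mark the whole clip Red.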

How we built it

The project combines multimodal AI with cloud-based infrastructure to process and analyze visual content.

The application architecture includes:

User Interface

- A simple web interface that allows users to upload image frames from video content.

Backend API

- Built using FastAPI in Python to process image uploads and communicate with AI services.
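A minimal sketch of such an upload endpoint, assuming FastAPI with `python-multipart` installed (FastAPI needs it to parse file uploads). The `/analyze` route, the size limit, the accepted image types, and the `classify_frame` callable are hypothetical. FastAPI is imported inside the `create_app` factory so the validation helper can be used on its own.

```python
ALLOWED_TYPES = {"image/jpeg", "image/png", "image/webp"}
MAX_BYTES = 5 * 1024 * 1024  # hypothetical per-frame size limit


def upload_error(content_type, data):
    """Return (status_code, message) if an upload should be rejected, else None."""
    if content_type not in ALLOWED_TYPES:
        return 415, f"unsupported image type: {content_type}"
    if not data:
        return 400, "empty upload"
    if len(data) > MAX_BYTES:
        return 413, "frame exceeds size limit"
    return None


def create_app(classify_frame):
    """Build a FastAPI app whose /analyze route passes each uploaded frame to classify_frame."""
    from fastapi import FastAPI, File, HTTPException, UploadFile

    app = FastAPI()

    @app.post("/analyze")
    async def analyze(files: list[UploadFile] = File(...)):
        labels = []
        for upload in files:
            data = await upload.read()
            error = upload_error(upload.content_type, data)
            if error is not None:
                status, message = error
                raise HTTPException(status_code=status, detail=message)
            # classify_frame is a hypothetical callable wrapping the Gemini request.
            labels.append(classify_frame(data, upload.content_type))
        return {"frames": labels}

    return app
```

Rejecting bad uploads before calling the model avoids paying for Gemini requests that could never succeed.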

AI Processing

- Images are sent to Gemini 2.5 Flash via Vertex AI using the Google GenAI SDK.
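A hedged sketch of that request with the `google-genai` package's `client.models.generate_content`. The prompt wording, `PROMPT`, and `analyze_frame` are illustrative assumptions rather than the project's code. The SDK accepts plain dicts in place of `types.Content` and `types.GenerateContentConfig`, and because the client is passed in, a stub can stand in for Vertex AI when testing.

```python
MODEL = "gemini-2.5-flash"

PROMPT = (
    "You are a family content-safety reviewer. Classify this video frame as "
    "GREEN (safe), YELLOW (potentially sensitive) or RED (unsafe). Reply only with "
    'JSON: {"level": "GREEN|YELLOW|RED", "reason": "<one sentence>"}'
)


def analyze_frame(client, image_bytes, mime_type="image/jpeg"):
    """Send one frame plus the classification prompt to Gemini and return the raw reply text."""
    response = client.models.generate_content(
        model=MODEL,
        contents=[
            {
                "role": "user",
                "parts": [
                    # Image bytes travel as inline data tagged with their MIME type.
                    {"inline_data": {"mime_type": mime_type, "data": image_bytes}},
                    {"text": PROMPT},
                ],
            }
        ],
        # Ask the model for a JSON reply instead of free-form prose.
        config={"response_mime_type": "application/json"},
    )
    return response.text


# Example usage with the real SDK (project ID and region are placeholders):
# from google import genai
# client = genai.Client(vertexai=True, project="your-project-id", location="us-central1")
# with open("frame.jpg", "rb") as f:
#     print(analyze_frame(client, f.read()))
```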

Content Safety Classification

- Gemini analyzes the visual scene and determines whether it is safe, sensitive, or unsafe.

Deployment

- The system is deployed on Google Cloud Run, enabling scalable and serverless hosting.
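Cloud Run tells the container which port to listen on through the `PORT` environment variable, which defaults to 8080. A hedged sketch of an entry point that honors it, assuming the FastAPI app is importable as `main:app` and served by uvicorn; both are assumptions about the project layout.

```python
import os

DEFAULT_PORT = 8080  # Cloud Run's default value for PORT


def listen_port(env=None):
    """Return the port from the PORT environment variable, falling back to 8080."""
    env = os.environ if env is None else env
    return int(env.get("PORT", DEFAULT_PORT))


def main():
    """Start the web server; intended to be called from the container's entry point."""
    import uvicorn  # ASGI server commonly used to run FastAPI apps

    # Listen on all interfaces: a server bound only to 127.0.0.1 would not
    # receive the requests Cloud Run forwards into the container.
    uvicorn.run("main:app", host="0.0.0.0", port=listen_port())
```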

This architecture allows the application to analyze each uploaded frame for visual content safety on demand using multimodal AI.

Challenges we ran into

One of the biggest challenges was integrating multimodal inputs with the Gemini API. Image data must be formatted correctly in each request: raw bytes cannot be passed to the model directly and must first be wrapped in a content part that declares the image's MIME type.
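One way to do that wrapping, sketched under assumptions: detect the MIME type from the file's leading "magic bytes" rather than trusting the upload's filename, then build an inline-data part. The helper names and the set of accepted formats are hypothetical. With the SDK's typed API the equivalent part is `types.Part.from_bytes(data=..., mime_type=...)`.

```python
# Leading "magic bytes" for the image formats this sketch accepts.
SIGNATURES = [
    (b"\xff\xd8\xff", "image/jpeg"),
    (b"\x89PNG\r\n\x1a\n", "image/png"),
]


def sniff_image_mime(data):
    """Infer an image MIME type from its first bytes, or raise ValueError."""
    for magic, mime in SIGNATURES:
        if data.startswith(magic):
            return mime
    # WebP files are RIFF containers with "WEBP" at byte offset 8.
    if data[:4] == b"RIFF" and data[8:12] == b"WEBP":
        return "image/webp"
    raise ValueError("unrecognized image format")


def to_image_part(data):
    """Wrap raw bytes as a Gemini inline-data part instead of sending them bare."""
    return {"inline_data": {"mime_type": sniff_image_mime(data), "data": data}}
```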

Another challenge was ensuring consistent AI responses. Generative models sometimes return natural language explanations instead of structured output. To solve this, we designed prompts that enforce strict JSON responses for reliable classification.
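Even with a JSON-only prompt, it helps to validate the reply before trusting it. A minimal sketch, assuming the reply has the shape `{"level": ..., "reason": ...}`; the function name and the choice to fall back to Yellow (so parents are warned rather than silently told the content is safe) are hypothetical design decisions.

```python
import json

LEVELS = {"GREEN", "YELLOW", "RED"}
FENCE = "`" * 3


def parse_classification(text):
    """Turn the model's reply into (level, reason); malformed replies fall back to YELLOW."""
    cleaned = text.strip()
    # Some replies wrap the JSON in a Markdown code fence labeled "json"; drop it.
    if cleaned.startswith(FENCE):
        cleaned = cleaned.strip("`").strip()
        if cleaned.lower().startswith("json"):
            cleaned = cleaned[4:]
    try:
        data = json.loads(cleaned)
        level = str(data["level"]).strip().upper()
    except (ValueError, KeyError, TypeError):
        return "YELLOW", "unparseable model output"
    if level not in LEVELS:
        return "YELLOW", f"unexpected level: {level}"
    return level, str(data.get("reason", ""))
```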

We also had to configure Google Cloud authentication and Application Default Credentials (ADC) so the containerized application could securely access Vertex AI services.
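With ADC, the code never handles a key file: on Cloud Run the client uses the service account attached to the service, and on a developer machine it uses credentials created by `gcloud auth application-default login`. The sketch below assumes the project ID is supplied in `GOOGLE_CLOUD_PROJECT` (set at deploy time) and defaults the region to `us-central1`; both, and the helper names, are assumptions.

```python
import os


def vertex_settings(env=None):
    """Collect the project and region for the Vertex AI client.

    Credentials are not read here; Application Default Credentials supply them.
    """
    env = os.environ if env is None else env
    project = env.get("GOOGLE_CLOUD_PROJECT")
    if not project:
        raise RuntimeError("set GOOGLE_CLOUD_PROJECT to the Google Cloud project ID")
    return {"project": project, "location": env.get("GOOGLE_CLOUD_LOCATION", "us-central1")}


def make_client(env=None):
    """Create a google-genai client that routes requests through Vertex AI using ADC."""
    from google import genai  # imported lazily so vertex_settings() works without the SDK

    return genai.Client(vertexai=True, **vertex_settings(env))
```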

Finally, designing a clear and intuitive user interface that communicates safety results simply was an important usability challenge.

Accomplishments that we're proud of

We successfully built and deployed a working multimodal AI safety monitoring system powered by Google Gemini.

Key accomplishments include:

- Building a full AI-powered workflow from image upload to AI safety classification

- Integrating Gemini multimodal models through Vertex AI

- Deploying the application on Google Cloud Run

- Creating a simple and intuitive Green / Yellow / Red safety scoring system

- Demonstrating how AI agents can analyze real-world visual media environments

This project shows how multimodal AI can move beyond chat interfaces and become a practical safety tool for families.

What we learned

During the development of SafeStream AI, we learned how to:

- Build multimodal AI applications using Gemini

- Integrate Vertex AI with Python-based web applications

- Deploy scalable AI services using Google Cloud Run

- Design prompts that produce structured and reliable AI outputs

- Build AI-powered workflows that combine computer vision, cloud infrastructure, and web interfaces

Most importantly, we explored how AI agents can interpret visual content and assist users in real-world safety scenarios.

What's next for SafeStream AI: Smart Home Content Safety Agent

This prototype focuses on analyzing uploaded image frames, but the system can be extended significantly.

Future improvements could include:

- Real-time video stream monitoring

- Audio analysis using speech-to-text models

- Smart home integrations such as:

  - automated alerts

  - smart light notifications

  - parental mobile notifications

- Automatic frame extraction from CCTV or streaming devices

- Personalized safety profiles for different age groups

In the future, SafeStream AI could become a real-time AI guardian for digital media environments, helping families create safer home entertainment experiences.
