-

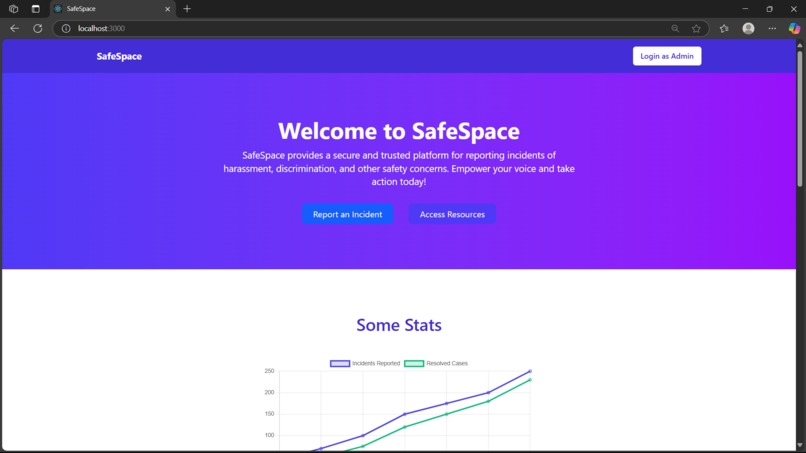

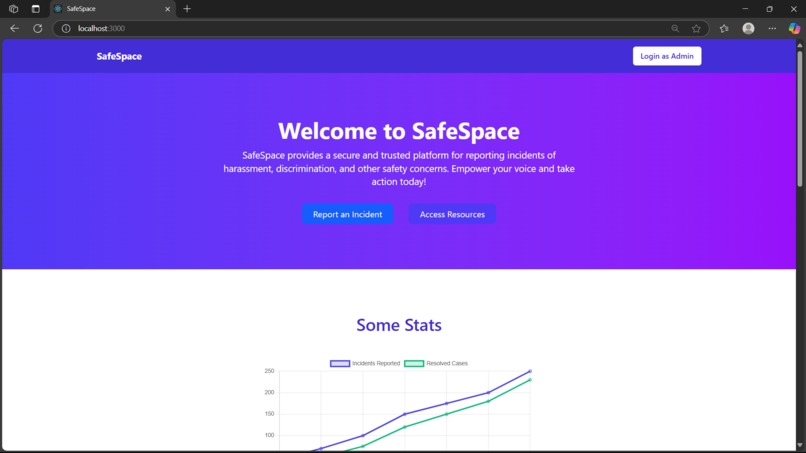

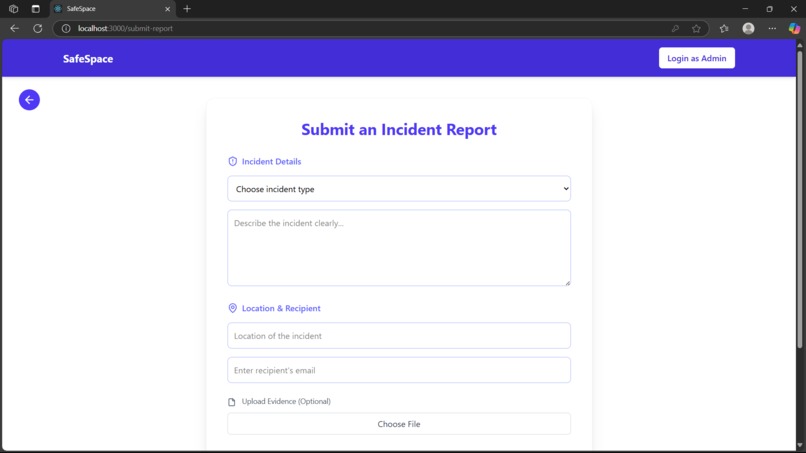

Home page

-

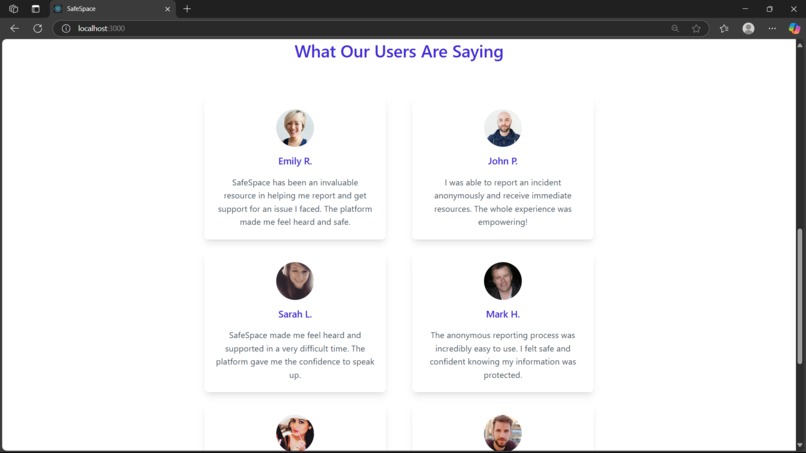

User reviews

-

Footer

-

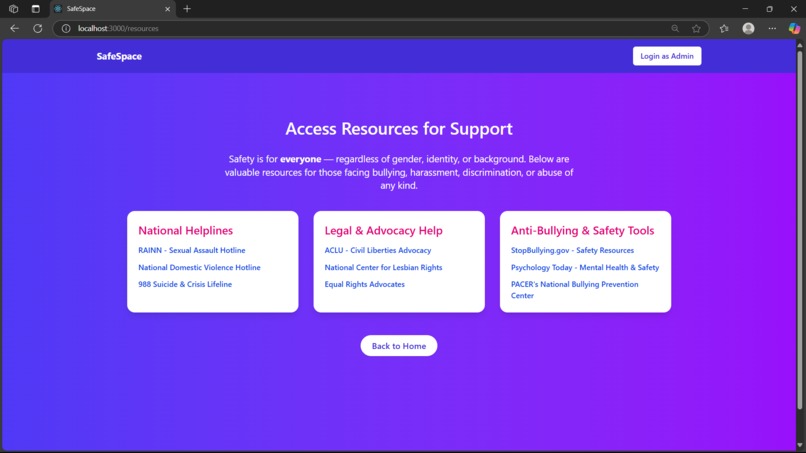

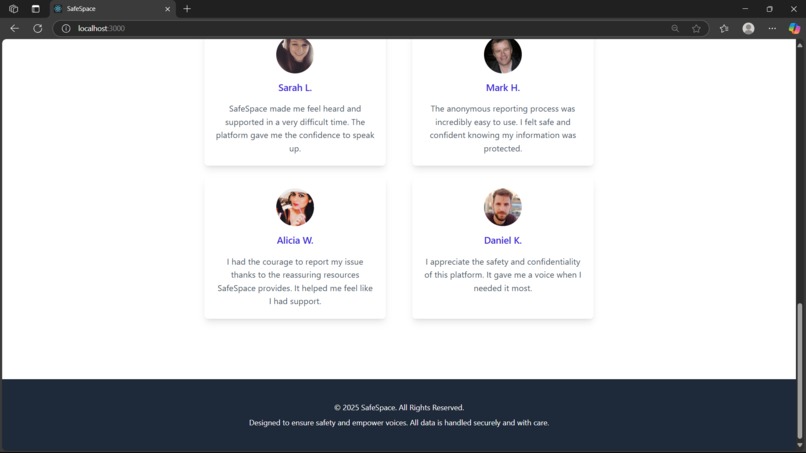

Additional resources for help

-

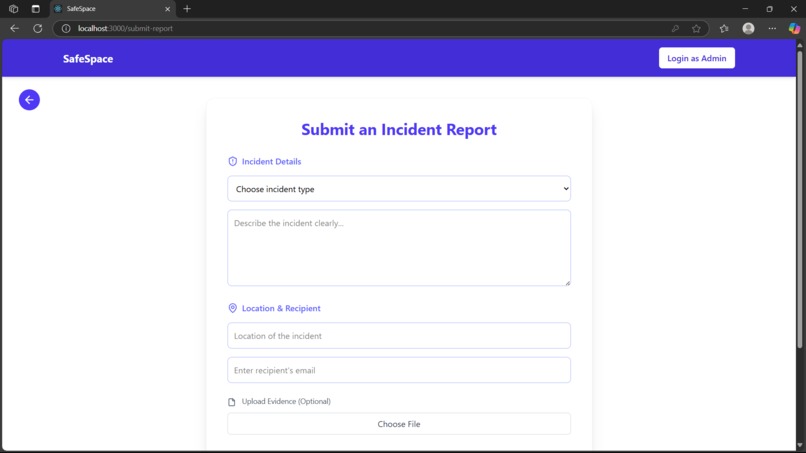

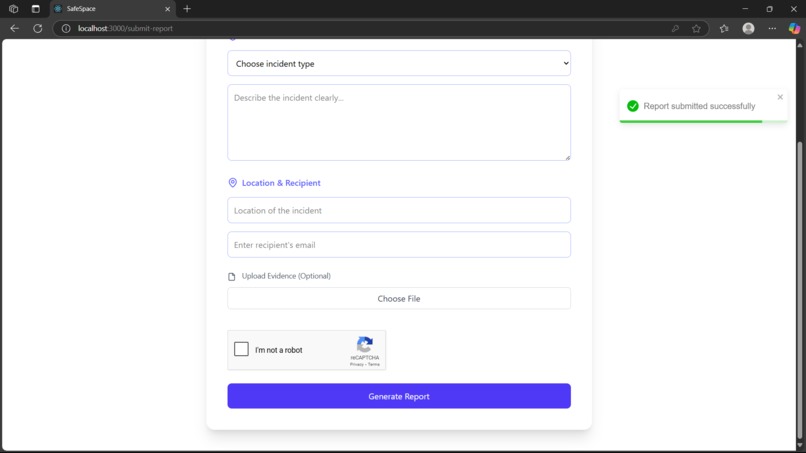

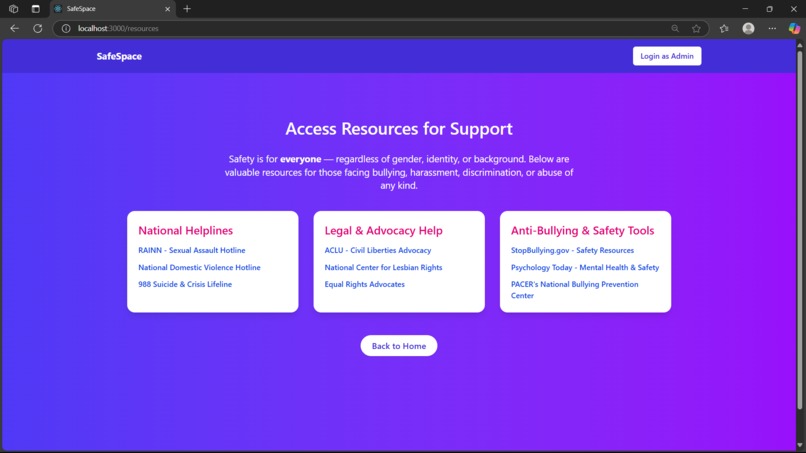

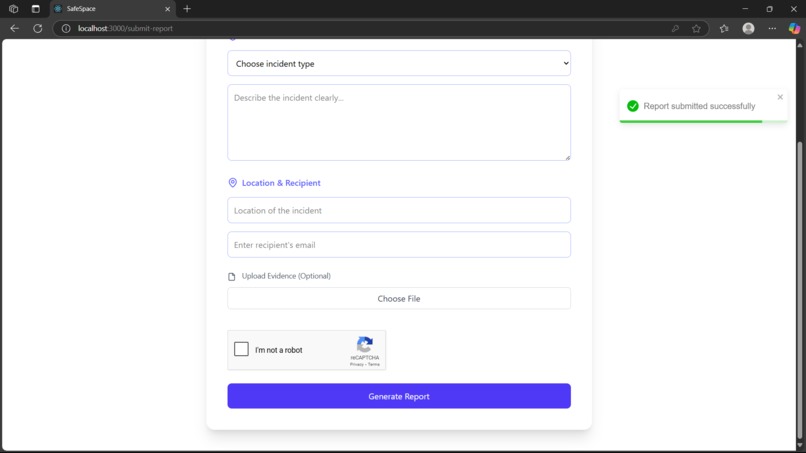

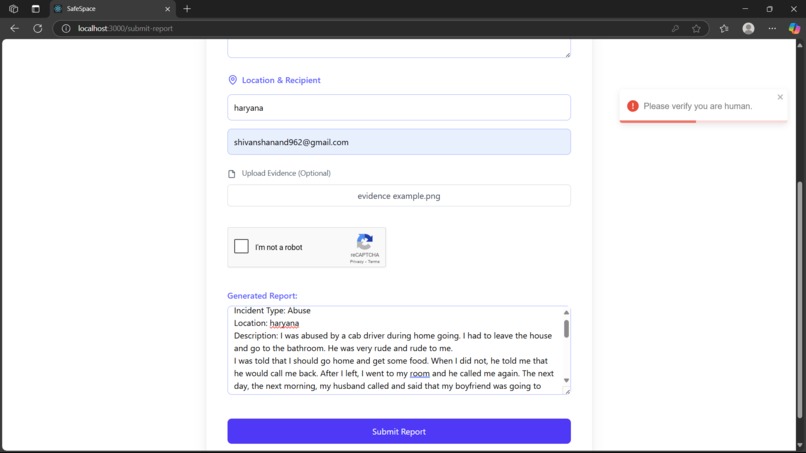

Report form

-

The report is successfully delivered to the email address the user entered in the recipient field

-

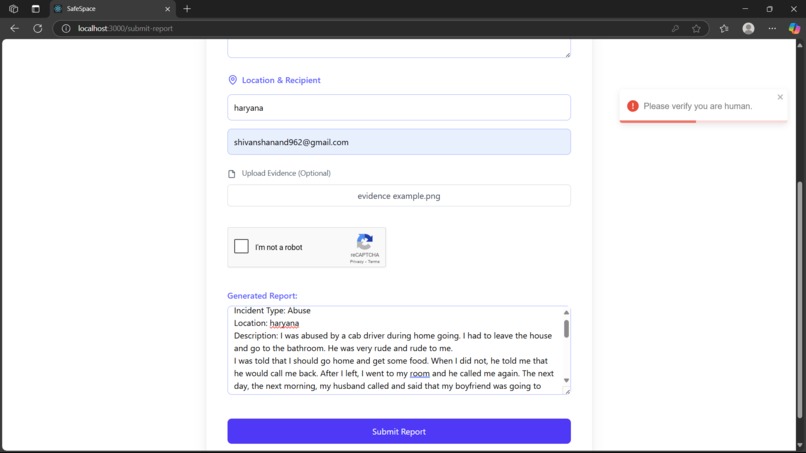

AI-generated report, which is editable; the user must confirm as a human before submitting

-

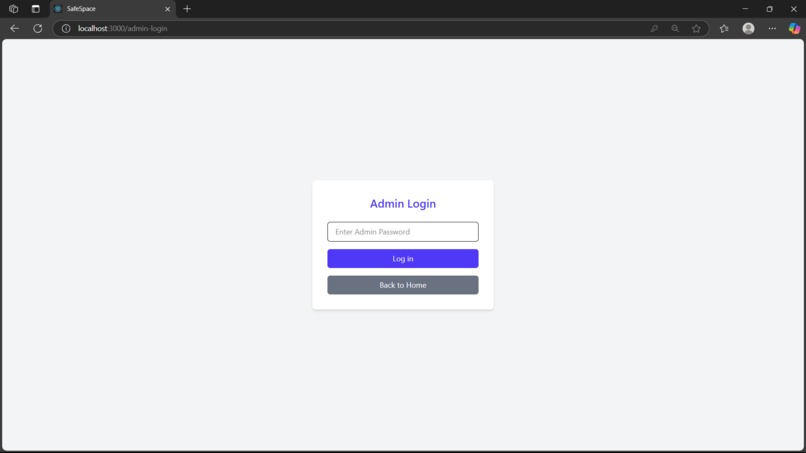

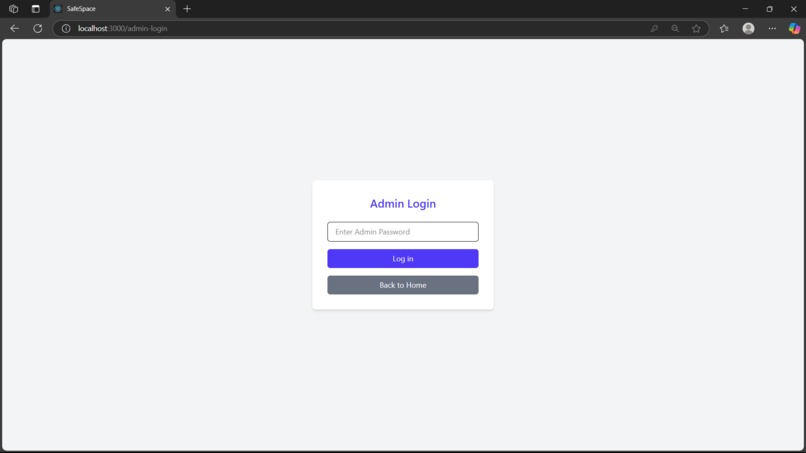

Admin login, which only I can use because the password is not disclosed

-

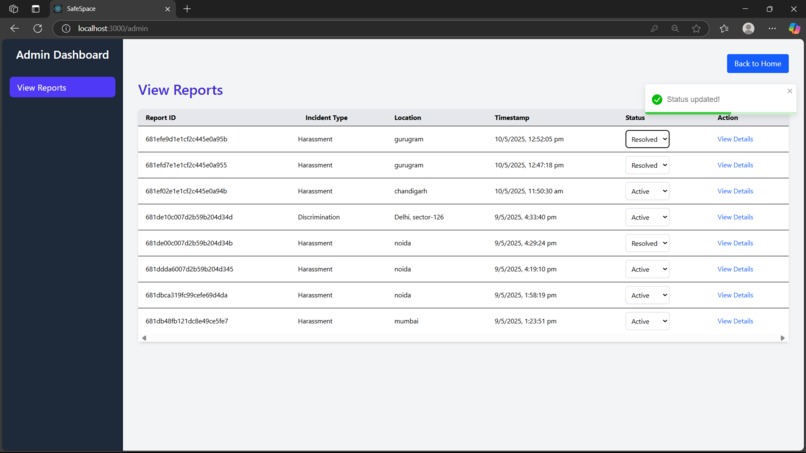

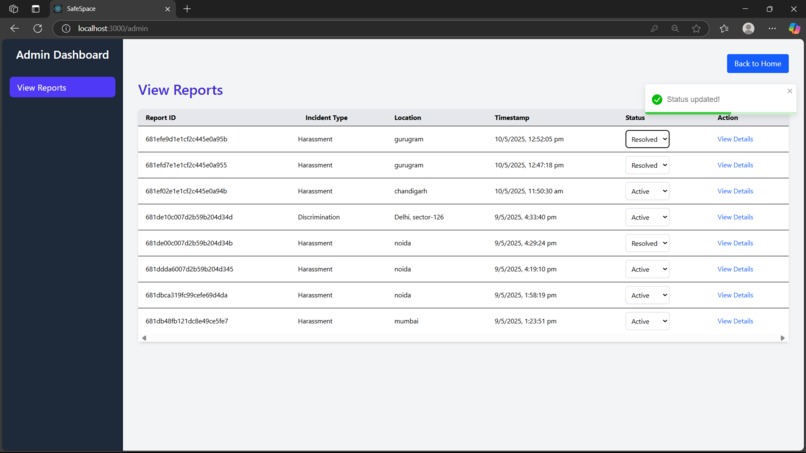

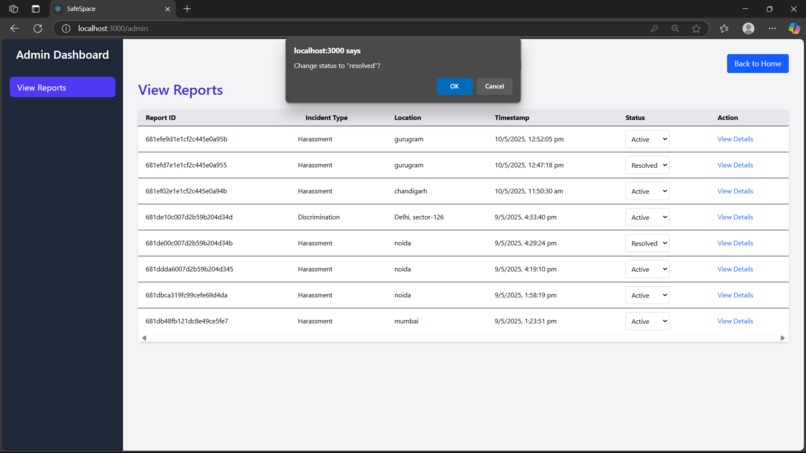

Admin dashboard for marking cases as resolved or active

-

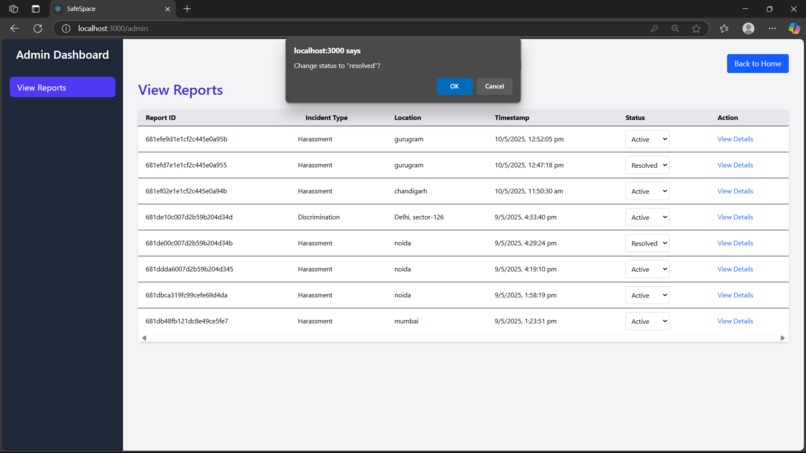

Prompt asking the admin to confirm before a particular case's status is updated

-

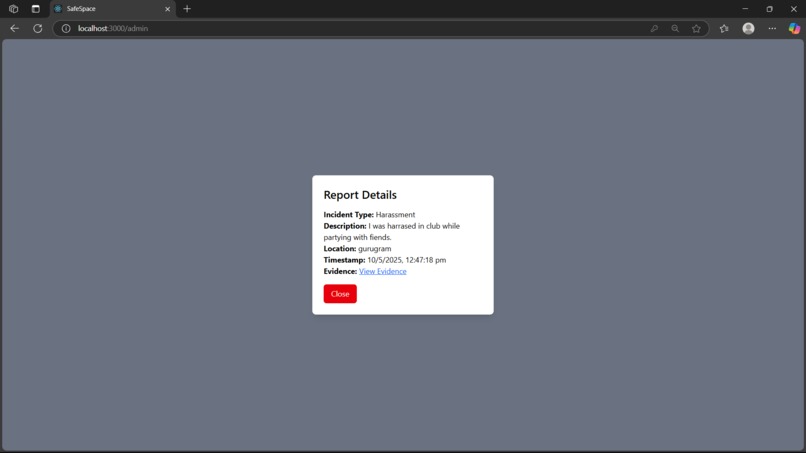

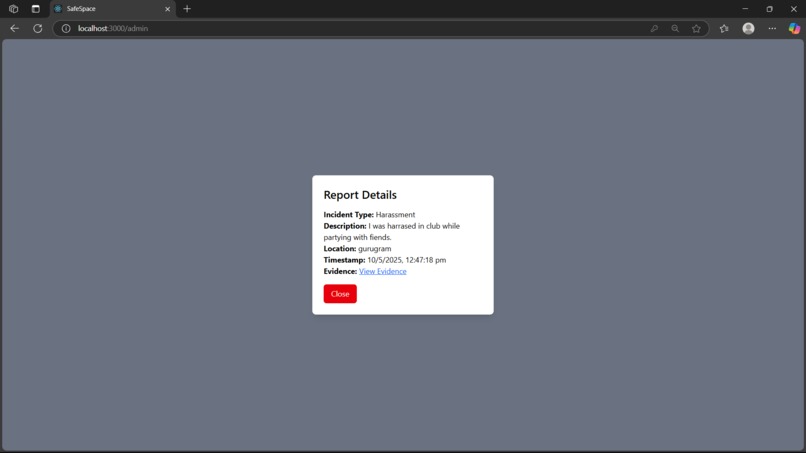

Detailed view of a particular incident

Inspiration

The idea for SafeSpace emerged from the urgent need to create safer, more inclusive environments where individuals can report discrimination, harassment, or abuse—without fear of retaliation or judgment. Whether in schools, workplaces, or public spaces, many victims hesitate to report incidents due to complex procedures, language barriers, or lack of anonymity. We wanted to build a platform that removes these barriers and empowers people to speak up safely and confidently.

What it does

SafeSpace is a web-based, AI-powered platform that lets users anonymously report incidents of discrimination, harassment, or abuse. The platform provides:

- A user-friendly report form enhanced with AI-generated text assistance

- Theme customization (light/dark mode) for accessibility

- An admin dashboard to view all submitted reports in a clean, organized manner

How we built it

We built the project using the MERN stack (MongoDB, Express.js, React.js, and Node.js). Here's a breakdown:

- Frontend: Built with React.js and styled using Tailwind CSS, including pages for report submission, admin dashboard, and settings

- Backend: Powered by Node.js and Express.js with REST APIs to handle report submissions, retrieval, and storage

- Database: MongoDB stores reports with relevant metadata such as incident type, location, description, and evidence URLs

- AI Integration: A lightweight tokenizer/model setup was integrated to assist users in generating or refining report text

- Cloudinary: Used for secure file evidence upload, with restrictions for file type and size
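
The report metadata and upload restrictions described in the list above can be sketched roughly as follows. This is a minimal, framework-free illustration, not the project's actual code: the names `IncidentReport`, `EvidenceFile`, `validateEvidence`, and `buildReport` are hypothetical, and the allowed file types, the 5 MiB limit, and the choice of required fields are assumptions, since the write-up does not state the real values.

```typescript
// Hypothetical record shape; field names are assumptions based on the
// metadata listed above, not the project's actual Mongoose schema.
interface IncidentReport {
  incidentType: string;
  location: string;
  description: string;
  evidenceUrls: string[];
  createdAt: Date;
}

// Metadata of a file the user wants to attach as evidence.
interface EvidenceFile {
  name: string;
  mimeType: string;
  sizeBytes: number;
}

// Assumed limits; the write-up does not state the real ones.
const ALLOWED_MIME_TYPES: string[] = ["image/jpeg", "image/png", "application/pdf"];
const MAX_FILE_BYTES = 5 * 1024 * 1024; // 5 MiB

// Returns a list of problems; an empty list means the file may be uploaded.
function validateEvidence(file: EvidenceFile): string[] {
  const problems: string[] = [];
  if (ALLOWED_MIME_TYPES.indexOf(file.mimeType) === -1) {
    problems.push("unsupported file type: " + file.mimeType);
  }
  if (file.sizeBytes <= 0) {
    problems.push("file is empty");
  } else if (file.sizeBytes > MAX_FILE_BYTES) {
    problems.push("file exceeds " + MAX_FILE_BYTES + " bytes");
  }
  return problems;
}

// Builds a report record, rejecting input whose text fields are blank.
// Treating all three text fields as required is an assumption.
function buildReport(
  incidentType: string,
  location: string,
  description: string,
  evidenceUrls: string[],
  now: Date = new Date()
): IncidentReport {
  const fields: { [key: string]: string } = { incidentType, location, description };
  for (const key of Object.keys(fields)) {
    if (fields[key].trim() === "") {
      throw new Error("missing required field: " + key);
    }
  }
  return {
    incidentType: incidentType.trim(),
    location: location.trim(),
    description: description.trim(),
    evidenceUrls: evidenceUrls.slice(), // copy so later caller edits do not leak in
    createdAt: now,
  };
}
```

A check like `validateEvidence` is typically run in the browser for quick feedback and repeated on the server before the file is handed to the upload service, since client-side checks can be bypassed.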

Challenges we ran into

- State Management Across Components: Sharing global settings like language preference between settings and other pages was tricky until we implemented React Context

- Responsive UI Design: Balancing clean aesthetics with responsiveness across different devices required several layout iterations

- Secure File Uploads: Managing safe and efficient uploads through Cloudinary with proper validation took more time than expected

- Limited Time: We ran out of time to build and polish features such as report status tracking and user feedback mechanisms, so they are not part of this version

Accomplishments that we're proud of

- Successfully created a working prototype within a short timeframe with a customizable and responsive user experience

- Designed an AI-augmented report writing assistant that adds real-world value by helping users articulate sensitive experiences

What we learned

- Building SafeSpace taught us how crucial it is to think from the perspective of vulnerable users

- We learned how to securely handle data, file uploads, and admin controls using a robust backend

- This project helped us understand React Context, conditional rendering, and component scalability better than ever

What's next for SafeSpace: AI-Powered Incident Reporting for Justice

In future updates, we aim to:

- Reduce AI report generation time by optimising the model

- Implement a report tracking system with statuses like "Under Review", "Resolved", etc.

- Allow admin feedback or responses to users

- Add email or in-app notifications for status updates

- Integrate analytics dashboards to help organizations identify patterns and take proactive action

- Launch a mobile-friendly version

- Expand AI capabilities to better assist users with tone, clarity, and context in reports

- Incorporate evidence authenticity verification, using AI and metadata analysis to flag possibly altered images, videos, or documents—ensuring the integrity of submitted proof
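
The report tracking item in the list above could be modelled as a small set of statuses with explicit allowed transitions. The sketch below is only one possible design: "Under Review" and "Resolved" come from the list, while the "Submitted" status, the reopening rule, and the names `ReportStatus`, `canTransition`, and `applyTransition` are assumptions made for illustration.

```typescript
// "Under Review" and "Resolved" are named in the roadmap above;
// "Submitted" is an assumed initial status.
type ReportStatus = "Submitted" | "Under Review" | "Resolved";

// Assumed transition rules: a report is reviewed before it is resolved,
// and a resolved report may be reopened for review.
const ALLOWED_TRANSITIONS: Record<ReportStatus, ReportStatus[]> = {
  "Submitted": ["Under Review"],
  "Under Review": ["Resolved"],
  "Resolved": ["Under Review"],
};

// True when moving a report from `from` to `to` is permitted.
function canTransition(from: ReportStatus, to: ReportStatus): boolean {
  return ALLOWED_TRANSITIONS[from].indexOf(to) !== -1;
}

// One entry in a report's status history.
interface StatusChange {
  from: ReportStatus;
  to: ReportStatus;
  at: Date;
}

// Validates the move and returns a history entry; throws on a disallowed move.
function applyTransition(
  current: ReportStatus,
  next: ReportStatus,
  now: Date = new Date()
): StatusChange {
  if (!canTransition(current, next)) {
    throw new Error('cannot move a report from "' + current + '" to "' + next + '"');
  }
  return { from: current, to: next, at: now };
}
```

Keeping each `StatusChange` as a history entry, rather than overwriting a single field, would also give the planned notifications and analytics something to read from.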

We believe SafeSpace has the potential to become a trusted digital companion for victims and witnesses who seek justice, dignity, and change.

Built With

- cloudinary

- express.js

- googlecaptcha

- mongoose

- node.js

- nodemailer

- postman

- python

- react.js

- tailwindcss
