AR Live Screen 1: Energy holding steady. The room checks in. Energy consistent. All good.

AR Live Screen 2: Energy dropping. Energy down 11%. The room already responded.

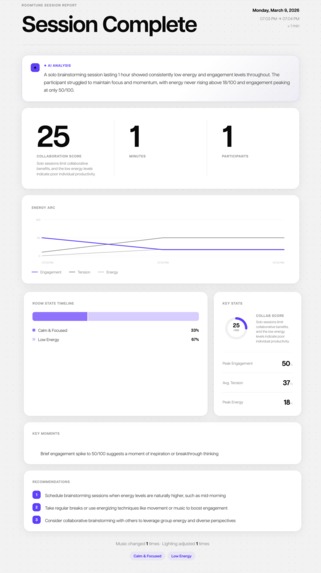

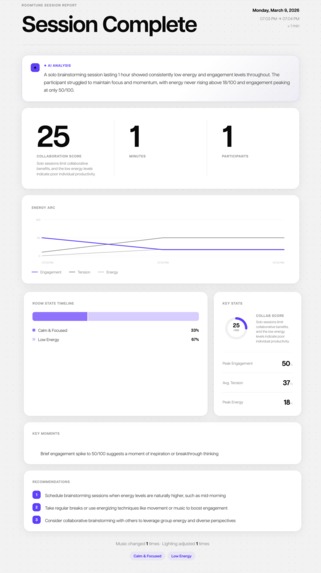

Session Report: Every session leaves a record. Only yours to see.

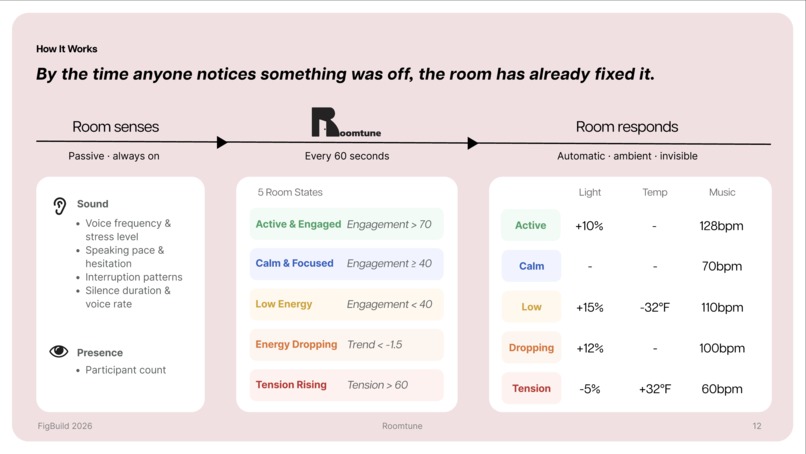

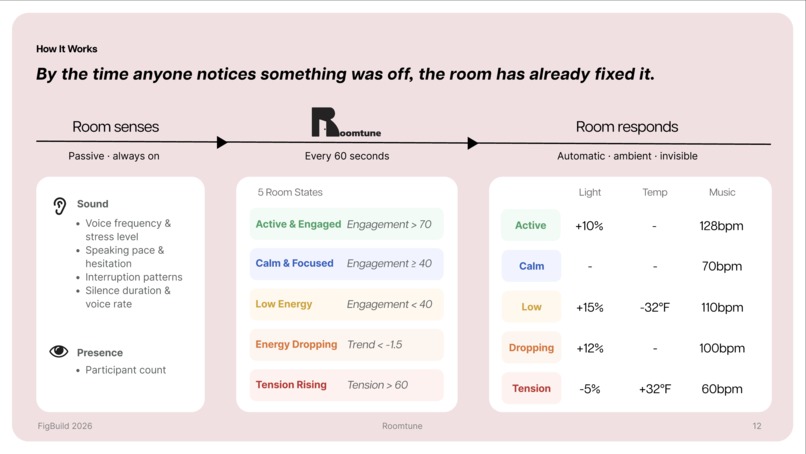

How It Works: From sensing to response. Every 60 seconds.

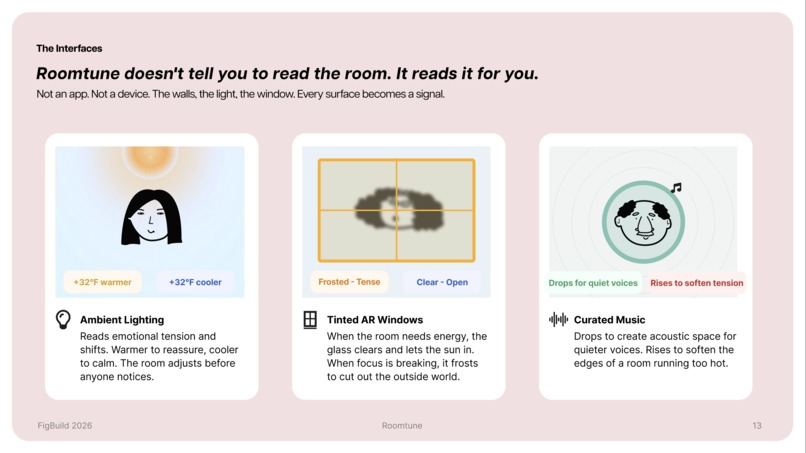

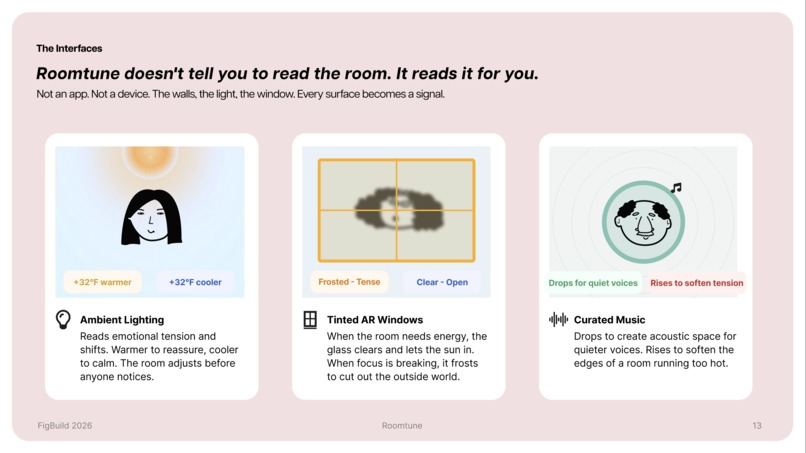

The Interfaces: Three surfaces. One system. No app needed.

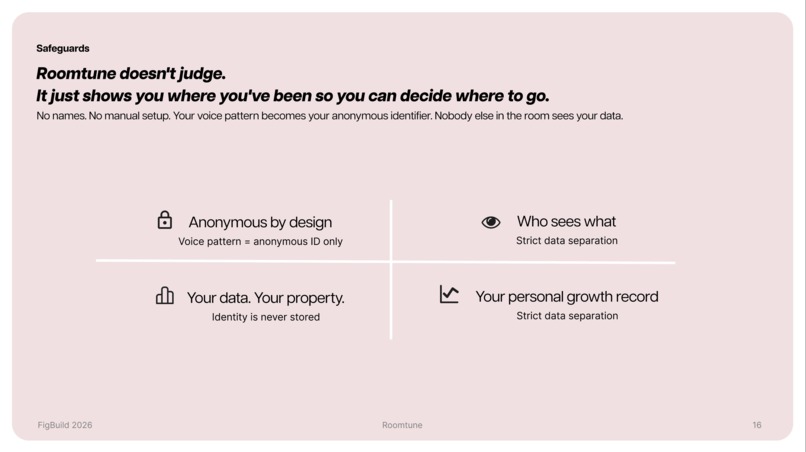

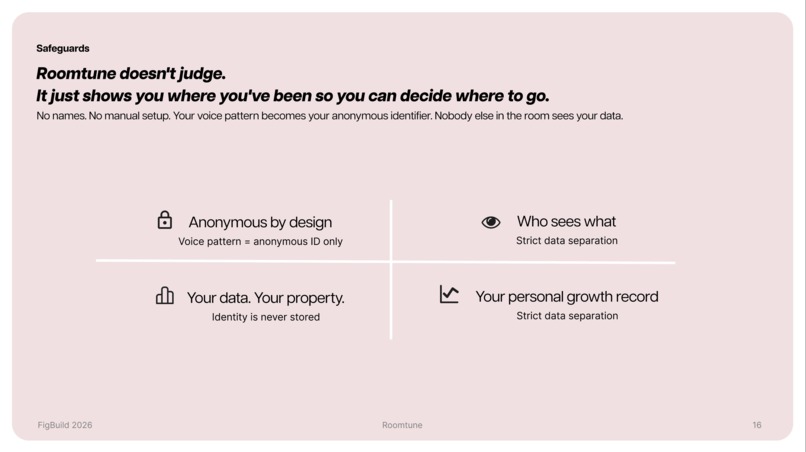

Safeguards: Your data. Your voice. Nobody else's to see.

Inspiration

Brainstorming as a team isn't always easy. There are moments where suddenly there's an awkward silence. You have something to say, but something holds you back. Maybe it's the language. Maybe it's not knowing how you'll be received.

And it goes both ways. Sometimes you're the one holding back. Sometimes you're the one thinking, "why isn't she saying anything? Does she not care?" That misread. That gap between what someone feels and what the room sees. That's where the problem lives.

When we looked at the data, we found we weren't alone. Right now, a record-high 1.58 million international students are studying in the U.S. 36% experience anxiety. 35% experience depression. And only 7.67% seek support. They're not disengaged. They're untranslated.

What it does

Roomtune is an ambient sensing system that reads the emotional and social dynamics of a room and adjusts the environment in response. No apps. No wearables. No setup. You just walk in.

The room listens for voice stress signals, speaking pace, interruption patterns, silence duration, and participation imbalance. Every five seconds, it calculates Energy, Engagement, and Tension scores, then classifies the room into one of five states: Active & Engaged, Calm & Focused, Low Energy, Energy Dropping, or Tension Rising.
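The scoring-and-classification step can be pictured roughly as follows. This is a minimal TypeScript sketch, not Roomtune's actual code: the feature names, the assumption that most features arrive pre-normalized to 0..1, every weight, and every threshold are hypothetical. Only the three score names and the five state names come from the description above.

```typescript
// Hypothetical per-window audio features. Most are assumed pre-normalized to 0..1;
// interruptions is a raw count and silenceSeconds a raw duration.
interface AudioFeatures {
  voiceActivity: number;     // fraction of the window containing speech
  speakingRate: number;      // normalized speaking pace
  interruptions: number;     // overlapping-speech events in the window
  silenceSeconds: number;    // longest silence in the window, in seconds
  participationGini: number; // 0 = even talk time, 1 = one person talks
  voiceStress: number;       // normalized voice-stress estimate
}

interface Scores {
  energy: number;
  engagement: number;
  tension: number;
}

type RoomState =
  | "Active & Engaged"
  | "Calm & Focused"
  | "Low Energy"
  | "Energy Dropping"
  | "Tension Rising";

const clamp01 = (x: number): number => Math.min(1, Math.max(0, x));

// Placeholder weights: each score is a weighted blend clamped to 0..1.
function computeScores(f: AudioFeatures): Scores {
  const silencePenalty = Math.min(f.silenceSeconds, 5) / 5; // 0..1 over a 5 s window
  return {
    energy: clamp01(0.5 * f.voiceActivity + 0.4 * f.speakingRate + 0.1 * (1 - silencePenalty)),
    engagement: clamp01(f.voiceActivity * (1 - f.participationGini)),
    tension: clamp01(0.5 * f.voiceStress + 0.5 * (Math.min(f.interruptions, 5) / 5)),
  };
}

// Checks run in priority order; previousEnergy (from the last window) detects a drop.
function classify(s: Scores, previousEnergy: number | null): RoomState {
  if (s.tension > 0.6) return "Tension Rising";
  if (previousEnergy !== null && previousEnergy - s.energy > 0.1) return "Energy Dropping";
  if (s.energy < 0.3) return "Low Energy";
  if (s.engagement > 0.5) return "Active & Engaged";
  return "Calm & Focused";
}
```

Calling `computeScores` once per five-second window and passing the previous window's `energy` into `classify` mirrors the cadence described above. Ordering the checks by priority means tension wins when several conditions hold at once; that ordering is a design assumption of this sketch.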

The room then responds automatically:

- Lighting shifts warmer to reassure, cooler to calm

- Temperature drops to sharpen focus, rises to ease tension

- AR windows frost when focus is needed, clear to let sunlight in when energy is low

- Music crossfades to match the room's needs, from 60 bpm to 128 bpm
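One way to encode these responses is a lookup table keyed by room state, plus an equal-power curve for the music crossfade. This TypeScript sketch rests on assumptions: the list above gives only the direction of each change and the 60 to 128 bpm range, so the specific Kelvin values, temperature offsets, window settings per state, and tempos are hypothetical.

```typescript
type RoomState =
  | "Active & Engaged"
  | "Calm & Focused"
  | "Low Energy"
  | "Energy Dropping"
  | "Tension Rising";

interface EnvironmentResponse {
  lightingKelvin: number;    // lower = warmer light, higher = cooler light
  temperatureDeltaC: number; // offset from the room's baseline setpoint, in degrees C
  windows: "frosted" | "clear";
  musicBpm: number;          // kept within the 60-128 bpm range
}

// Hypothetical values that follow the directions in the list above:
// cooler light and warmer air for tension, frosted glass and cooler air for focus,
// clear glass and faster music when energy is low.
const RESPONSES: Record<RoomState, EnvironmentResponse> = {
  "Active & Engaged": { lightingKelvin: 4000, temperatureDeltaC: 0, windows: "clear", musicBpm: 110 },
  "Calm & Focused": { lightingKelvin: 4500, temperatureDeltaC: -1, windows: "frosted", musicBpm: 80 },
  "Low Energy": { lightingKelvin: 3000, temperatureDeltaC: -1, windows: "clear", musicBpm: 128 },
  "Energy Dropping": { lightingKelvin: 3200, temperatureDeltaC: -0.5, windows: "clear", musicBpm: 118 },
  "Tension Rising": { lightingKelvin: 5000, temperatureDeltaC: 1, windows: "clear", musicBpm: 60 },
};

// Equal-power crossfade: at progress t in [0, 1] the outgoing track's gain is
// cos(t * PI / 2) and the incoming track's is sin(t * PI / 2), so their squares sum to 1.
function crossfadeGains(t: number): { outgoing: number; incoming: number } {
  const angle = Math.min(1, Math.max(0, t)) * (Math.PI / 2);
  return { outgoing: Math.cos(angle), incoming: Math.sin(angle) };
}
```

In the browser, these gains would be scheduled on two Web Audio `GainNode`s (for example with `gain.setValueAtTime`) over a few seconds. An equal-power curve avoids the loudness dip that a linear fade between two unrelated tracks produces at the midpoint.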

At the end of each session or semester, every participant receives a personal report covering participation patterns, tension peaks, and growth over time. The facilitator sees only room-level data, never individual data. Silence is never flagged as wrong.
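The split between personal and facilitator views could be enforced at the data layer by building the facilitator's summary only from aggregates. This is a TypeScript sketch with a hypothetical per-participant record. The normalized-entropy balance metric is our illustrative choice, not something the project specifies.

```typescript
// Hypothetical per-participant record; only ever shown to that participant.
interface ParticipantSession {
  participantId: string;
  talkSeconds: number;
  tensionPeaks: number;
}

// Facilitator view: room-level numbers only, with no participant identifiers.
interface RoomSummary {
  participantCount: number;
  totalTalkSeconds: number;
  totalTensionPeaks: number;
  participationBalance: number; // 1 = perfectly even talk time, 0 = one voice only
}

// Normalized Shannon entropy of talk-time shares.
function participationBalance(talk: number[]): number {
  const total = talk.reduce((a, b) => a + b, 0);
  if (talk.length < 2 || total === 0) return 1; // a silent room is not scored as imbalanced
  let entropy = 0;
  for (const t of talk) {
    if (t > 0) {
      const p = t / total;
      entropy -= p * Math.log(p);
    }
  }
  return entropy / Math.log(talk.length);
}

function roomSummary(sessions: ParticipantSession[]): RoomSummary {
  return {
    participantCount: sessions.length,
    totalTalkSeconds: sessions.reduce((a, s) => a + s.talkSeconds, 0),
    totalTensionPeaks: sessions.reduce((a, s) => a + s.tensionPeaks, 0),
    participationBalance: participationBalance(sessions.map((s) => s.talkSeconds)),
  };
}

// Personal view: a participant can look up only their own record.
function personalReport(sessions: ParticipantSession[], requesterId: string): ParticipantSession | undefined {
  return sessions.find((s) => s.participantId === requesterId);
}
```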

How we built it

Roomtune was built entirely inside the Figma ecosystem, with cloud infrastructure layered on top.

Figma Make: the live room dashboard, built with React and TypeScript. Real-time audio analysis runs on the Web Audio API, detecting voice activity, speaking frequency, silence duration, and participation balance. Face detection runs on MediaPipe Tasks Vision for participant counting.
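The voice-activity and silence-duration part of that audio analysis can be approximated with a simple energy threshold over time-domain frames. This is a minimal TypeScript sketch: the 0.02 RMS threshold is an assumption, and a production detector would likely add smoothing or spectral features. The functions below have no browser dependency; in the app, frames would come from the Web Audio API's `AnalyserNode.getFloatTimeDomainData`.

```typescript
// Root-mean-square level of one frame of samples in [-1, 1].
function rms(samples: ArrayLike<number>): number {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (let i = 0; i < samples.length; i++) sumSquares += samples[i] * samples[i];
  return Math.sqrt(sumSquares / samples.length);
}

// Tracks whether someone is speaking and how long the current silence has lasted.
class SilenceTracker {
  private silentMs = 0;
  private readonly threshold: number;

  constructor(threshold = 0.02) {
    this.threshold = threshold; // hypothetical RMS level separating speech from room noise
  }

  update(samples: ArrayLike<number>, frameMs: number): { speaking: boolean; silenceMs: number } {
    const speaking = rms(samples) >= this.threshold;
    // Any speech resets the silence counter; otherwise the silence keeps growing.
    this.silentMs = speaking ? 0 : this.silentMs + frameMs;
    return { speaking, silenceMs: this.silentMs };
  }
}
```

In the browser, one could connect a microphone `MediaStream` to an `AnalyserNode`, allocate `new Float32Array(analyser.fftSize)`, and on each animation frame call `analyser.getFloatTimeDomainData(buffer)` followed by `tracker.update(buffer, elapsedMs)`.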

Supabase: Edge Functions serve as a secure proxy between the app and the Anthropic API, keeping API keys off the client. Supabase Storage hosts the music assets streamed for each room state.
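A proxy of this kind can be quite small. The TypeScript sketch below assumes the Deno-style `Request`/`Response` handler shape that Supabase Edge Functions use. The secret name `ANTHROPIC_API_KEY`, the permissive CORS origin, and the injectable `fetchImpl` parameter (added so the handler can be tested) are our assumptions, not details from the project. The upstream URL and the `x-api-key` and `anthropic-version` headers follow Anthropic's documented Messages API.

```typescript
// Upstream endpoint for Anthropic's Messages API.
const ANTHROPIC_URL = "https://api.anthropic.com/v1/messages";

// Permissive CORS for illustration; a deployment would restrict the allowed origin.
const CORS_HEADERS: Record<string, string> = {
  "Access-Control-Allow-Origin": "*",
  "Access-Control-Allow-Headers": "authorization, x-client-info, apikey, content-type",
};

type UpstreamInit = { method: string; headers: Record<string, string>; body: string };
type FetchLike = (url: string, init: UpstreamInit) => Promise<Response>;

async function proxyToAnthropic(
  req: Request,
  apiKey: string,
  fetchImpl: FetchLike = fetch,
): Promise<Response> {
  // Answer the browser's CORS preflight without calling the upstream API.
  if (req.method === "OPTIONS") return new Response("ok", { headers: CORS_HEADERS });
  if (req.method !== "POST") return new Response("Method not allowed", { status: 405, headers: CORS_HEADERS });
  if (!apiKey) return new Response("Missing server secret", { status: 500, headers: CORS_HEADERS });

  // Forward the client's JSON body; the API key is attached here, server-side only.
  const upstream = await fetchImpl(ANTHROPIC_URL, {
    method: "POST",
    headers: {
      "content-type": "application/json",
      "x-api-key": apiKey,
      "anthropic-version": "2023-06-01",
    },
    body: await req.text(),
  });

  // Relay the upstream status and body back to the browser.
  return new Response(await upstream.text(), {
    status: upstream.status,
    headers: { ...CORS_HEADERS, "content-type": "application/json" },
  });
}

// In a deployed Supabase Edge Function this would be wired up roughly as:
// Deno.serve((req) => proxyToAnthropic(req, Deno.env.get("ANTHROPIC_API_KEY") ?? ""));
```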

Anthropic Claude API: two separate calls. (1) Vision analysis every 15 seconds, reading facial expressions and nonverbal cues from the camera feed to improve room-state accuracy. (2) Post-session report generation, analyzing the full session log and returning a structured AI report with a collaboration score, key moments, and recommendations.
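For the vision call, a request body along these lines would carry one camera frame to Claude through the proxy, and the report call's reply could be parsed as JSON. This TypeScript sketch follows the Messages API's documented base64 image content block and its `content` array of text blocks in responses. The prompt wording, the `model` argument (left to the caller rather than naming a specific model), the report field names, and all helper names are hypothetical.

```typescript
interface MessagesRequest {
  model: string;
  max_tokens: number;
  messages: Array<{ role: "user"; content: unknown[] }>;
}

const VISION_INTERVAL_MS = 15_000; // the 15-second cadence described above

function shouldRunVision(nowMs: number, lastRunMs: number | null): boolean {
  return lastRunMs === null || nowMs - lastRunMs >= VISION_INTERVAL_MS;
}

// canvas.toDataURL("image/jpeg") yields "data:image/jpeg;base64,<data>"; the API wants only <data>.
function stripDataUrlPrefix(dataUrl: string): string {
  const comma = dataUrl.indexOf(",");
  return comma === -1 ? dataUrl : dataUrl.slice(comma + 1);
}

function buildVisionRequest(model: string, jpegBase64: string): MessagesRequest {
  return {
    model,
    max_tokens: 300,
    messages: [{
      role: "user",
      content: [
        { type: "image", source: { type: "base64", media_type: "image/jpeg", data: jpegBase64 } },
        // Hypothetical prompt: asks for room-level cues only.
        { type: "text", text: "Summarize the group's nonverbal cues as room-level signals. Do not identify individuals." },
      ],
    }],
  };
}

// Hypothetical shape requested from the post-session report call.
interface SessionReport {
  collaborationScore: number;
  keyMoments: string[];
  recommendations: string[];
}

// Takes the first text block of a Messages API response and parses it as report JSON.
function parseReport(response: { content: Array<{ type: string; text?: string }> }): SessionReport {
  const block = response.content.find((b) => b.type === "text" && typeof b.text === "string");
  if (!block || block.text === undefined) throw new Error("No text block in response");
  const parsed = JSON.parse(block.text) as SessionReport;
  if (typeof parsed.collaborationScore !== "number") throw new Error("Report is missing collaborationScore");
  return parsed;
}
```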

Figma Slides: the full pitch deck, embedded with the live Make prototype for in-presentation demos.

Figma Design: the design system and component library, including tokens, type styles, color system, illustrations, and all visual assets used across Make and Slides.

Challenges we ran into

Technical Challenges

MediaPipe's WASM files are blocked by Figma Make's Content Security Policy (CSP), which required a full npm-based workaround with a custom Vite config. Keeping API keys secure in a browser app meant routing all Claude calls through Supabase Edge Functions. Audio alone misreads silence as tension, so we layered in Vision-based facial analysis and state debouncing to stabilize room classification.
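State debouncing can be as simple as requiring several consecutive matching readings before the room switches state, so a single noisy window cannot flip the lights or music. This is a generic TypeScript sketch; the default of three readings (about 15 seconds at a five-second cadence) is an assumption, not the project's setting.

```typescript
// Holds the current state until a different reading repeats `required` times in a row.
class StateDebouncer<S> {
  private current: S;
  private candidate: S | null = null;
  private streak = 0;
  private readonly required: number;

  constructor(initial: S, required = 3) {
    this.current = initial;
    this.required = required;
  }

  update(reading: S): S {
    if (reading === this.current) {
      // A reading that agrees with the current state cancels any pending switch.
      this.candidate = null;
      this.streak = 0;
      return this.current;
    }
    if (reading === this.candidate) {
      this.streak += 1;
    } else {
      this.candidate = reading;
      this.streak = 1;
    }
    if (this.streak >= this.required) {
      this.current = reading;
      this.candidate = null;
      this.streak = 0;
    }
    return this.current;
  }
}
```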

The Human Challenge

We are three people who grew up in different languages and cultures. At some point we had to stop and actually be honest with each other. Jihyeon and I knew what it felt like to hold back. Justin knew what it felt like to watch someone go quiet and not know why. Wanting to ask, but afraid of saying the wrong thing. That hesitation on both sides? That's the gap Roomtune is trying to close. We weren't just building for a user. We were building for ourselves.

Accomplishments that we're proud of

A fully functional ambient AI system running entirely in the browser: real-time audio analysis, Claude Vision facial-expression analysis, automatic environment response, and AI session reports, all inside Figma Make. No backend server beyond Supabase Edge Functions. No setup.

What we're most proud of is the conversation we had to have to get here. Three people, two cultures, one shared problem. We figured out the need we all had in common. That felt like the real prototype.

What we learned

Browser sensing is more constrained than expected. Blending audio and visual signals produces meaningfully better results than either alone. Ambient UI has to work when no one is looking at it.
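One way to blend the two signals is a confidence-weighted average in which the slower vision estimate fades out as it ages. This is a TypeScript sketch; the confidence values, the 30-second staleness window, and the linear decay are illustrative assumptions, not Roomtune's method.

```typescript
// An audio-derived score (for example, tension) with the detector's confidence in 0..1.
interface AudioEstimate { value: number; confidence: number; }

// A vision-derived score; ageMs is the time since the frame was analyzed.
interface VisionEstimate { value: number; confidence: number; ageMs: number; }

function fuseScores(audio: AudioEstimate, vision: VisionEstimate | null, maxVisionAgeMs = 30_000): number {
  const audioWeight = audio.confidence;
  // Linear decay: a fresh vision reading counts fully, a stale one not at all.
  const freshness = vision ? Math.max(0, 1 - vision.ageMs / maxVisionAgeMs) : 0;
  const visionWeight = vision ? vision.confidence * freshness : 0;
  const total = audioWeight + visionWeight;
  if (total === 0) return audio.value;
  return (audioWeight * audio.value + visionWeight * (vision ? vision.value : 0)) / total;
}
```

For instance, audio alone might read a long pause as rising tension, while a fresh vision reading of relaxed faces would pull the fused score back toward calm.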

We also learned that silence is never empty. Every pause has a reason; we just never had a way to hear it before. And designing for inclusion means designing for the gap, not for the person on one side of it or the other. Both sides are struggling. Both sides deserve better.

What's next for Roomtune

Real HomeKit and Philips Hue integration for actual lighting and temperature control. Roomtune already reads the silence: every pause, every hesitation, every moment someone held back. That's been data all along. We just never knew how to listen to it. Now we do.
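On the Philips Hue side, a lighting change could look like the following. This TypeScript sketch targets the Hue bridge's local v1 REST API (`PUT /api/<username>/lights/<id>/state`), in which `ct` is a color temperature in mireds (1,000,000 / Kelvin), `bri` runs from 1 to 254, and `transitiontime` is in tenths of a second. The bridge address, username, light ID, and fade duration are placeholders. The 153 to 500 clamp follows the v1 documentation, though individual bulbs may accept a narrower range. Hue covers lighting only; temperature control and HomeKit are outside this sketch.

```typescript
interface HueLightState {
  on: boolean;
  bri: number;            // brightness, 1..254
  ct: number;             // color temperature in mireds
  transitiontime: number; // fade duration in 100 ms steps
}

// Convert Kelvin to mireds and clamp to the v1 API's documented 153..500 range.
function kelvinToMired(kelvin: number): number {
  return Math.min(500, Math.max(153, Math.round(1_000_000 / kelvin)));
}

// Builds (but does not send) a request for one light; all identifiers are placeholders.
function buildHueRequest(
  bridgeIp: string,
  username: string,
  lightId: string,
  kelvin: number,
  brightness: number, // 0..1
  fadeSeconds = 4,
): { url: string; method: "PUT"; body: string } {
  const state: HueLightState = {
    on: true,
    bri: Math.min(254, Math.max(1, Math.round(brightness * 254))),
    ct: kelvinToMired(kelvin),
    transitiontime: Math.round(fadeSeconds * 10),
  };
  return {
    url: `http://${bridgeIp}/api/${username}/lights/${lightId}/state`,
    method: "PUT",
    body: JSON.stringify(state),
  };
}
```

The result could then be sent with `fetch(req.url, { method: req.method, body: req.body })` from a context that can reach the bridge on the local network.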

Built With

- anthropic-claude-api

- figma-make

- html5

- mediapipe

- post-session-ai-report-generation

- react

- supabase

- typescript

- vite

- web-audio-api
