-

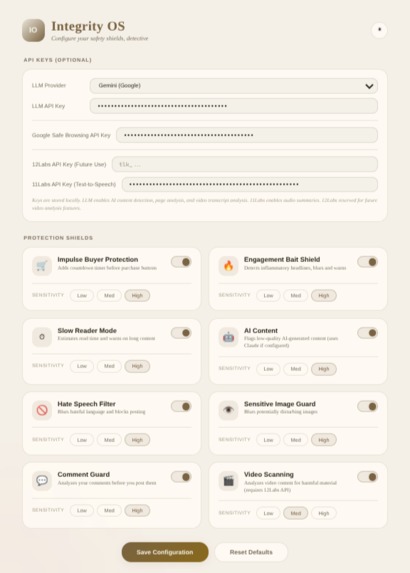

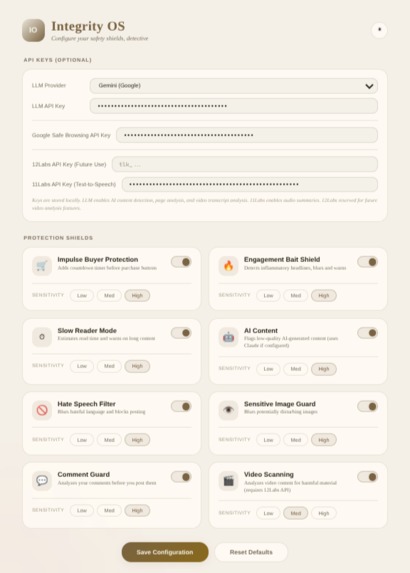

Light mode example showcasing all available user customization options.

-

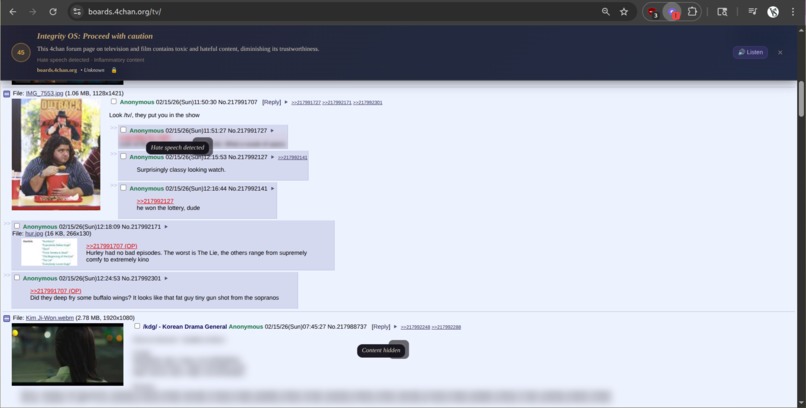

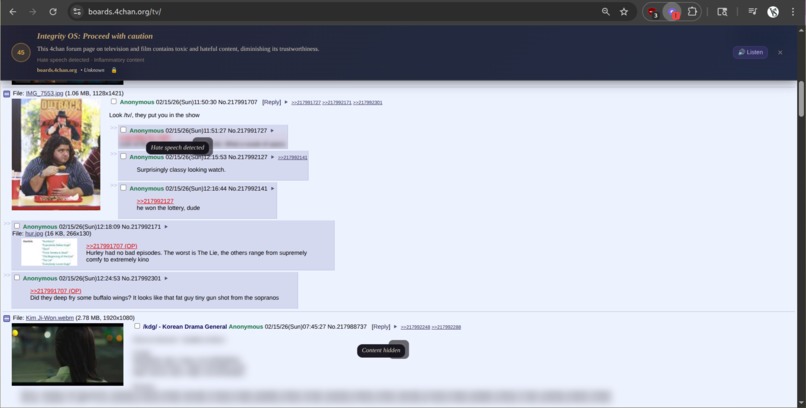

The extension analyzing and blurring user-defined hate speech and misinformation on a bad-actor website (e.g., 4chan).

-

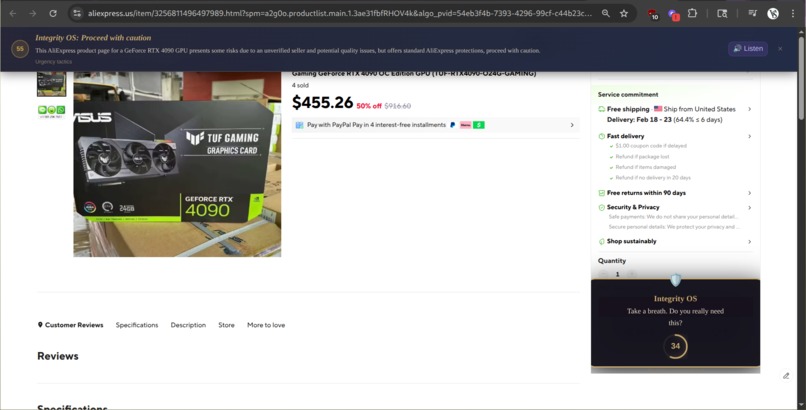

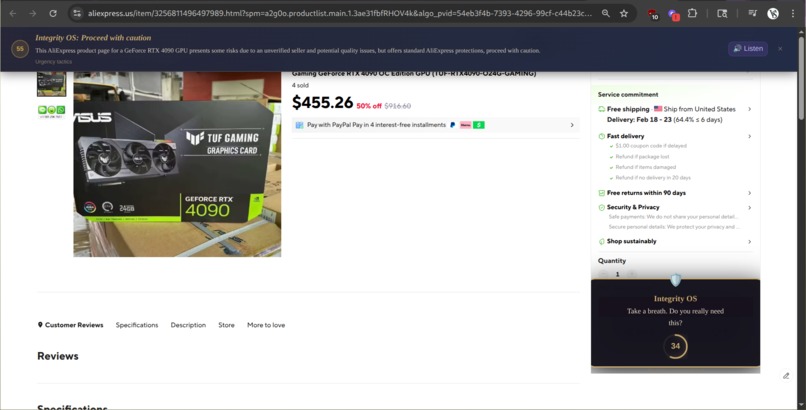

Example of a fake GPU listing flagged as a scam based on its pricing, seller details, and other signals. As a result, the extension prevents the user from checking out.

-

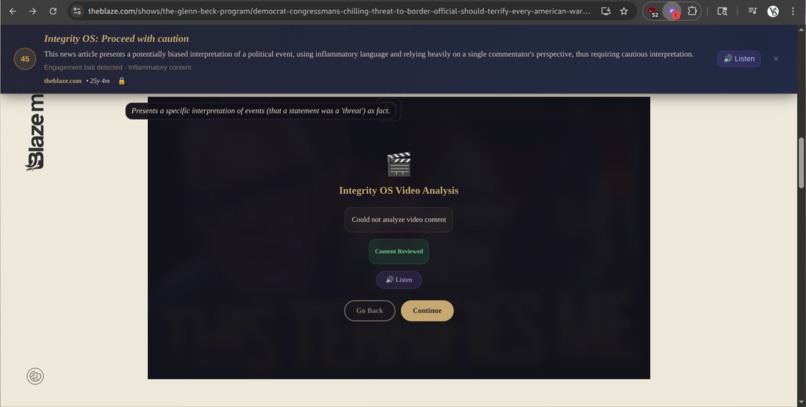

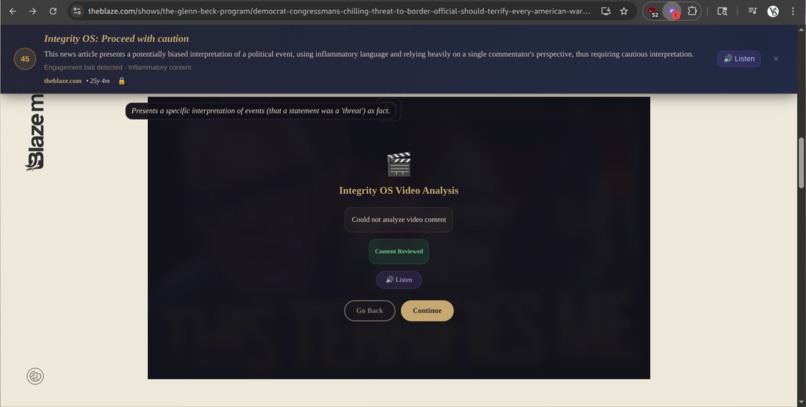

Example of a website flagged as heavily biased and politically slanted. The extension blurs the affected text and explains its reasoning.

-

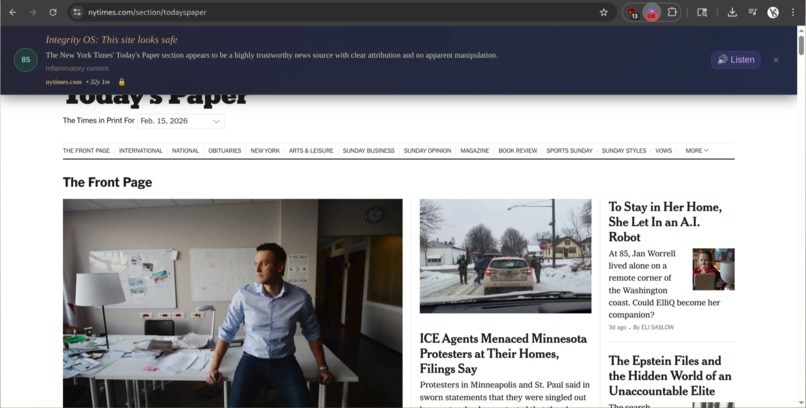

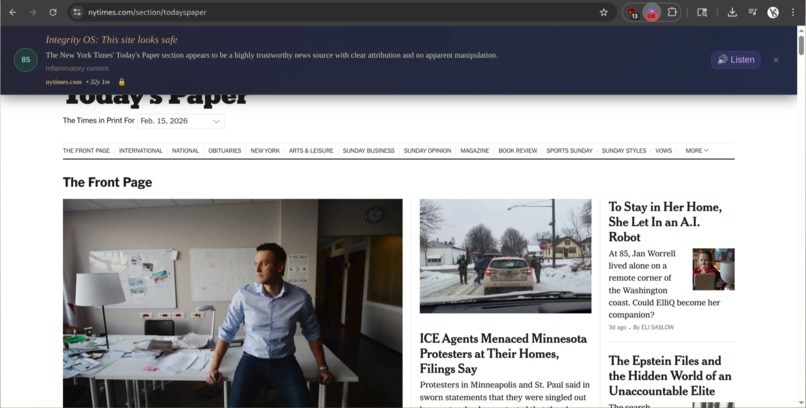

The extension verifying a reputable site and giving a proper score.

-

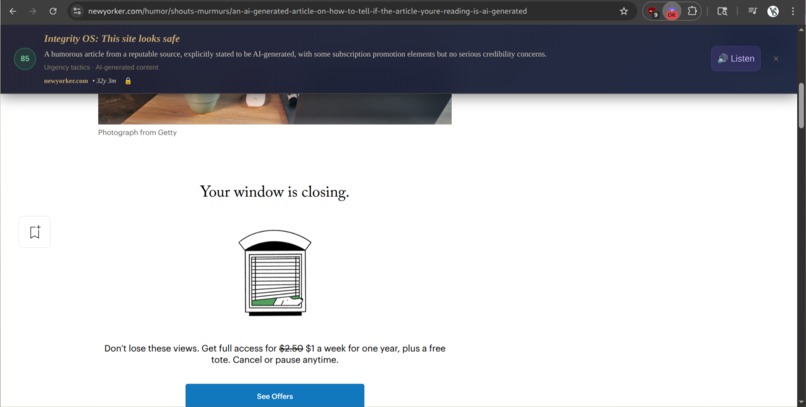

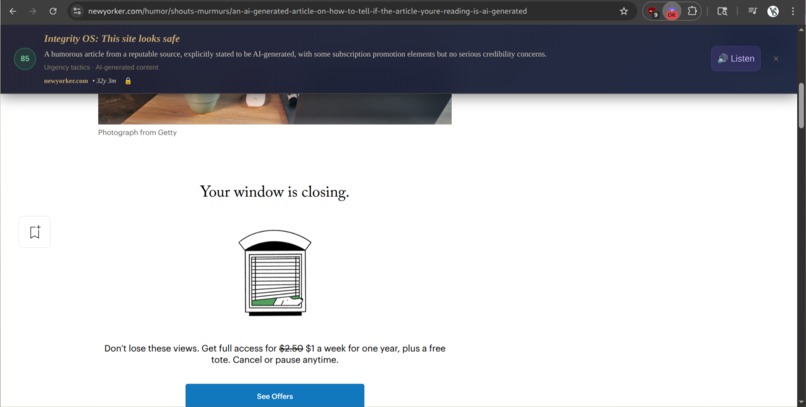

Example of the extension detecting AI-written text, images, and a timed price-wall. It then flags the article and warns the user.

Inspiration

As generative AI becomes more advanced and accessible, misinformation and online deception are no longer limited to poorly written phishing emails or suspicious links. Modern scam websites, misleading promotional content, and engagement-driven "bait" can now be produced at scale using AI-generated messages and media.

This has created an environment where even tech-savvy users are vulnerable to social engineering tactics embedded directly into a webpage's content and structure. Instead of simply stealing credentials, many modern attacks aim to influence behavior, pushing users toward impulsive purchases, unsafe payments, or emotionally driven engagement with misleading content.

We wanted to explore how users could be protected not after interacting with deceptive content, but before they interact with it.

What It Does

Integrity OS is a browser extension that assigns a real-time trust score to every webpage a user visits using a layered protection system.

Rather than relying solely on known threat databases, the extension evaluates structural and behavioral indicators of manipulation directly from the webpage itself. Suspicious pages are escalated to a deeper AI-driven scan capable of detecting AI-generated misinformation and urgency-based social engineering language.

Based on the user’s selected protection profile, Integrity OS can:

- Warn users before engaging with low-trust comment sections

- Recommend cooling-off periods during suspicious or impulsive checkout flows

- Block payment submission on high-risk pages

- Provide transparent summaries of detected risk factors, and let users listen to an AI-generated audio summary of the website's trustworthiness and safety

This enables proactive behavioral protection before misinformation or manipulation results in harmful decisions.

How We Built It

Integrity OS was designed as a layered trust evaluation pipeline to balance performance with meaningful detection.

Layer 0 – User Profile

Users select a preferred protection mode ranging from informational-only feedback to active behavioral intervention such as payment blocking or engagement warnings.

Layer 1 – Quick Trust Check

A lightweight scan is performed on every webpage in under one second. This includes:

- Domain age lookup via WHOIS

- SSL certificate validation

- Known scam database comparison

- Trusted-site allowlist comparison

Page structure analysis for:

- Payment or checkout forms

- Comment submission fields

- Excessive pop-ups

- Suspicious, urgency-based language (detected heuristically)

These indicators are used to generate an initial trust score from 0–100.
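As a rough illustration of how the Layer 1 indicators above could be folded into a 0–100 score, here is a minimal sketch. The `QuickSignals` shape, the phrase list, and every weight are assumptions made for this example; they are not the values Integrity OS actually uses.

```typescript
// Hypothetical shape for the Layer 1 signals; field names are illustrative.
interface QuickSignals {
  domainAgeDays: number; // e.g. derived from a WHOIS lookup
  hasValidCertificate: boolean;
  onScamList: boolean;
  onTrustedList: boolean;
  hasPaymentForm: boolean;
  hasCommentForm: boolean;
  popupCount: number;
  urgencyPhraseCount: number;
}

// Assumed sample phrases; a real list would be much longer.
const URGENCY_PHRASES = ["act now", "limited time", "only a few left", "offer expires"];

// Counts how many distinct urgency phrases appear in the page text.
function countUrgencyPhrases(text: string): number {
  const lower = text.toLowerCase();
  return URGENCY_PHRASES.filter((phrase) => lower.includes(phrase)).length;
}

// Starts from 100 and subtracts assumed penalties per risk indicator.
function quickTrustScore(s: QuickSignals): number {
  if (s.onScamList) return 0;
  if (s.onTrustedList) return 100;

  let score = 100;
  if (s.domainAgeDays < 30) score -= 30;
  else if (s.domainAgeDays < 365) score -= 10;
  if (!s.hasValidCertificate) score -= 30;
  if (s.popupCount > 3) score -= 10;
  score -= Math.min(s.urgencyPhraseCount * 10, 30);

  // Payment or comment forms raise the stakes only if something already looks off.
  if ((s.hasPaymentForm || s.hasCommentForm) && score < 100) score -= 10;

  return Math.max(0, Math.min(100, score));
}
```

Short-circuiting on the scam and trusted lists keeps the common cases cheap, which matters for a check that runs on every page load.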

Layer 2 – Deep AI Scan

If a page’s score falls below a defined threshold, the extension triggers a deeper AI analysis. During this process:

- All visible webpage text is extracted and analyzed for AI-generation patterns and manipulative language

- Suspicious urgency or scam-related messaging is flagged

- A summarized risk report is generated and optionally rendered as audio feedback for user transparency

The final trust score is then updated using both structural and AI-derived indicators.
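The escalation step can be sketched as follows. The threshold of 60, the 40/60 weighting, and the `DeepScanResult` shape are assumptions for illustration; the `scan` callback stands in for whatever AI service performs the deep analysis.

```typescript
// Assumed threshold: pages scoring below this are escalated to the deep scan.
const DEEP_SCAN_THRESHOLD = 60;

// Hypothetical result shape returned by the deep AI scan.
interface DeepScanResult {
  aiGeneratedLikelihood: number; // 0..1
  manipulationLikelihood: number; // 0..1
  summary: string; // risk report, optionally rendered as audio
}

type DeepScanner = (visibleText: string) => Promise<DeepScanResult>;

function shouldEscalate(quickScore: number): boolean {
  return quickScore < DEEP_SCAN_THRESHOLD;
}

// Blends the structural score with an AI-derived score (weights are assumed).
function combineScores(quickScore: number, deep: DeepScanResult): number {
  const aiRisk = Math.max(deep.aiGeneratedLikelihood, deep.manipulationLikelihood);
  const aiScore = (1 - aiRisk) * 100;
  return Math.round(0.4 * quickScore + 0.6 * aiScore);
}

// Runs the expensive scan only when the quick score warrants it.
async function evaluatePage(
  quickScore: number,
  visibleText: string,
  scan: DeepScanner,
): Promise<{ score: number; report?: string }> {
  if (!shouldEscalate(quickScore)) return { score: quickScore };
  const deep = await scan(visibleText);
  return { score: combineScores(quickScore, deep), report: deep.summary };
}
```

Passing the scanner in as a parameter keeps the escalation logic testable without calling a real model.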

Layer 3 – Protection Rules

A rule-based protection engine evaluates the final score against the user’s selected profile. Depending on detected risk factors, the extension may:

- Block payment submission on high-risk pages

- Display cooling-off prompts during checkout

- Warn users before posting in low-trust comment sections
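One way to picture the rule engine is a pure function from the user's profile and the page's state to a list of actions. The mode names and the score cutoffs (60 and 30) are hypothetical, chosen only to make the sketch concrete.

```typescript
// Hypothetical protection modes, from informational-only to active intervention.
type ProtectionMode = "inform" | "warn" | "intervene";
type Action = "block_payment" | "cooling_off_prompt" | "comment_warning";

interface PageContext {
  score: number; // final trust score, 0..100
  hasPaymentForm: boolean;
  hasCommentForm: boolean;
}

function decideActions(mode: ProtectionMode, page: PageContext): Action[] {
  const actions: Action[] = [];
  // Informational mode never intervenes; it only shows the badge and report.
  if (mode === "inform") return actions;

  if (page.hasCommentForm && page.score < 60) {
    actions.push("comment_warning");
  }
  if (page.hasPaymentForm && page.score < 60) {
    // Only the strictest mode blocks payment, and only on very low scores (assumed cutoff).
    const block = mode === "intervene" && page.score < 30;
    actions.push(block ? "block_payment" : "cooling_off_prompt");
  }
  return actions;
}
```

For example, under these assumed cutoffs `decideActions("warn", { score: 45, hasPaymentForm: true, hasCommentForm: false })` yields a cooling-off prompt rather than a hard block.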

Layer 4 – User Interface

Users receive visual feedback through a dynamic trust badge:

| Score Range | Status |
|---|---|
| 80–100 | Verified Safe |
| 60–79 | Proceed with Caution |
| 0–59 | Threat Detected |

Clicking the badge reveals a full trust report including detected red flags and recommended actions.
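The badge mapping follows directly from the score ranges listed for the trust badge. A minimal sketch, where the function name and the range check are our own additions:

```typescript
type BadgeStatus = "Verified Safe" | "Proceed with Caution" | "Threat Detected";

// Maps a 0–100 trust score to the badge status shown to the user.
function badgeStatus(score: number): BadgeStatus {
  if (!Number.isFinite(score) || score < 0 || score > 100) {
    throw new RangeError(`trust score out of range: ${score}`);
  }
  if (score >= 80) return "Verified Safe";
  if (score >= 60) return "Proceed with Caution";
  return "Threat Detected";
}
```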

Challenges We Ran Into

Maintaining efficient, real-time performance while still enabling meaningful AI analysis was a major challenge. Running deep detection on every webpage would introduce unacceptable latency, so we implemented a trigger-based escalation system to ensure that expensive scans only occur when structural risk indicators are present.

Additionally, detecting checkout flows or comment submission forms across dynamically rendered websites proved difficult due to inconsistent DOM patterns and modern frontend frameworks.
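To make the detection problem concrete, here is a minimal sketch of one possible heuristic. It works on a simplified `FieldInfo` descriptor (a hypothetical shape extracted from each input element) rather than on the DOM directly, so it can be re-run whenever the page changes, for instance from a `MutationObserver` callback. The hint patterns are assumptions, not the project's actual rules.

```typescript
// Hypothetical, simplified view of an <input> element's attributes.
interface FieldInfo {
  name: string;
  autocomplete: string;
  placeholder: string;
}

// Standard HTML autocomplete tokens for payment card fields.
const PAYMENT_AUTOCOMPLETE = new Set(["cc-number", "cc-exp", "cc-csc", "cc-name"]);

// Assumed fallback pattern for sites that do not set autocomplete attributes.
const PAYMENT_HINT = /card[\s_-]*number|cvv|cvc|expir/i;

// Returns true if any field looks like it collects payment card details.
function looksLikeCheckoutForm(fields: FieldInfo[]): boolean {
  return fields.some(
    (f) =>
      PAYMENT_AUTOCOMPLETE.has(f.autocomplete.toLowerCase()) ||
      PAYMENT_HINT.test(f.name) ||
      PAYMENT_HINT.test(f.placeholder),
  );
}
```

Checking attributes instead of a fixed DOM path is what makes this somewhat resilient to framework-specific markup, though it will still miss payment widgets embedded in cross-origin iframes.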

Balancing user autonomy with protective intervention also required careful consideration to ensure users were informed without being overwhelmed by technical detail.

What We Learned

Through this project, we explored:

- Real-time detection of AI-generated misinformation

- Multimodal trust evaluation techniques

- Behavioral intervention design for online safety

- Tradeoffs between detection depth and latency

- Practical challenges in identifying social engineering tactics at the interface level

What's Next

Future improvements to Integrity OS could include:

- Trusted contact notifications for high-risk actions

- Configurable interaction delays for suspicious checkout flows

- Crowd-sourced trust scoring

- Integration with localized scam reporting datasets

- Support for engagement warnings on social media platforms
