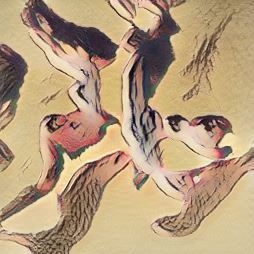

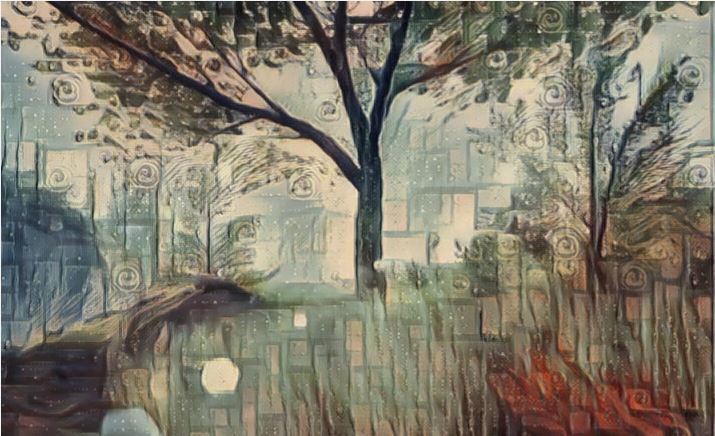

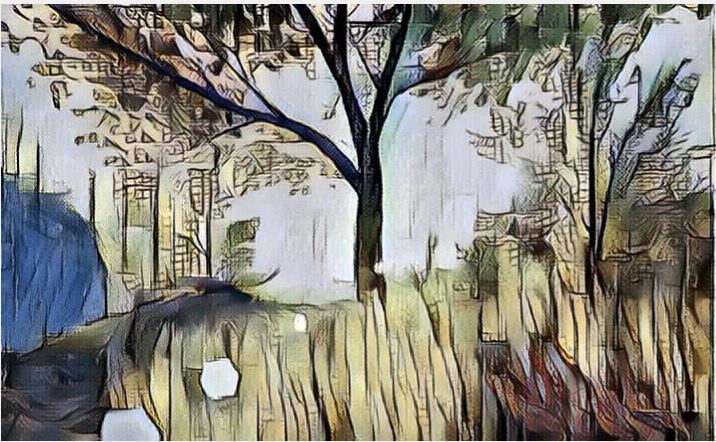

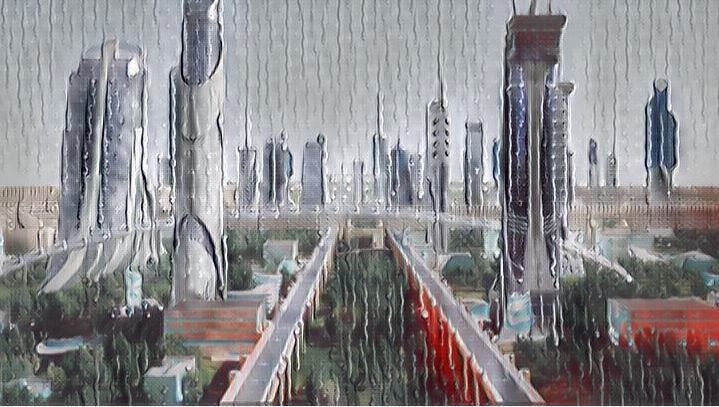

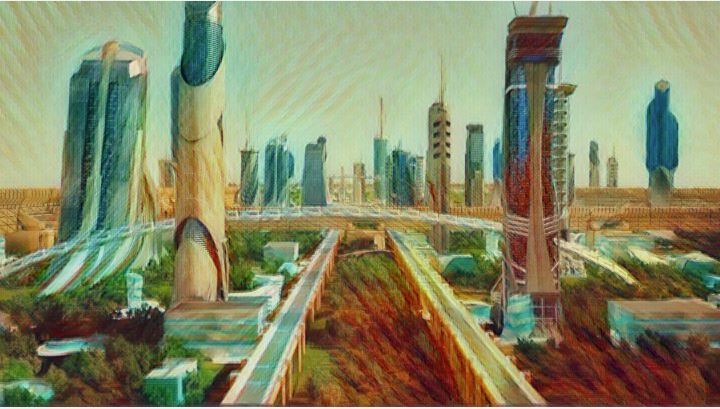

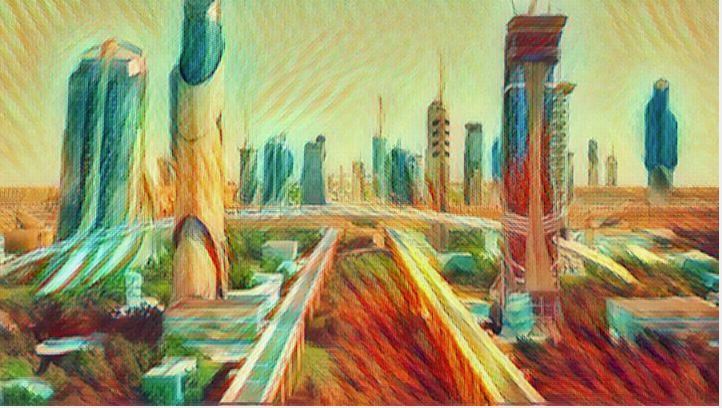

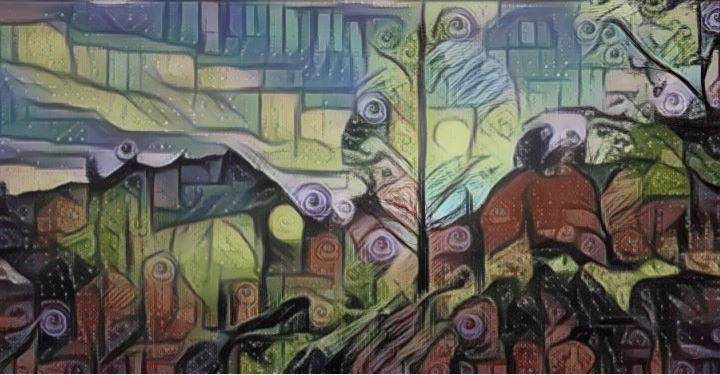

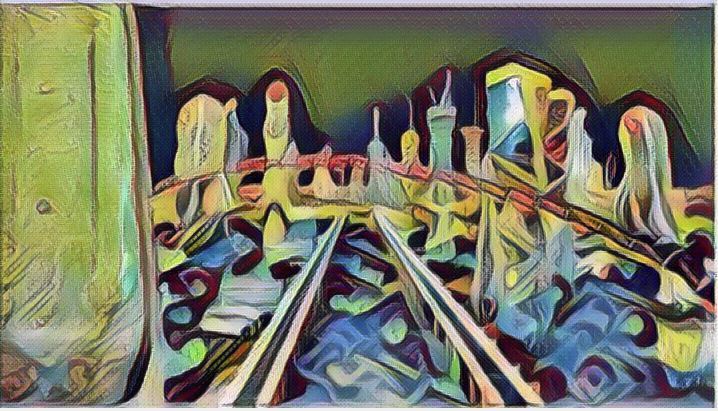

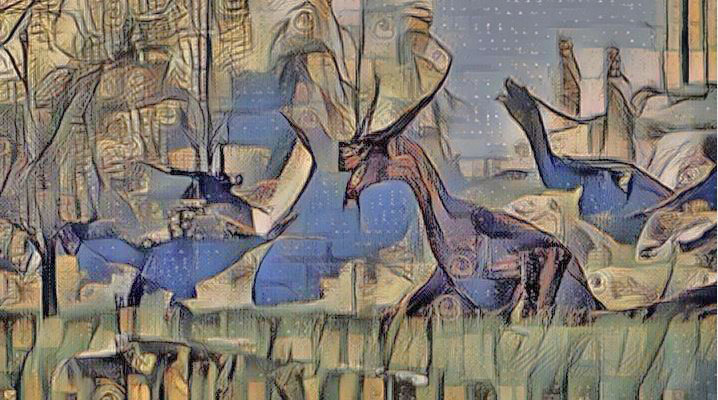

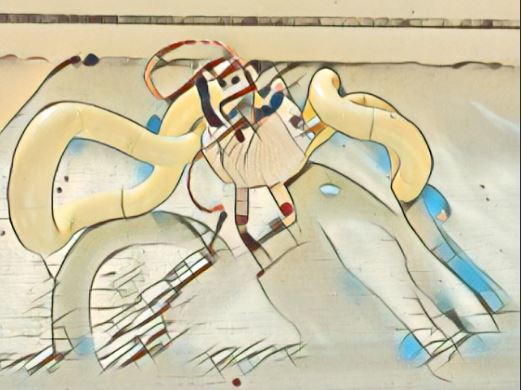

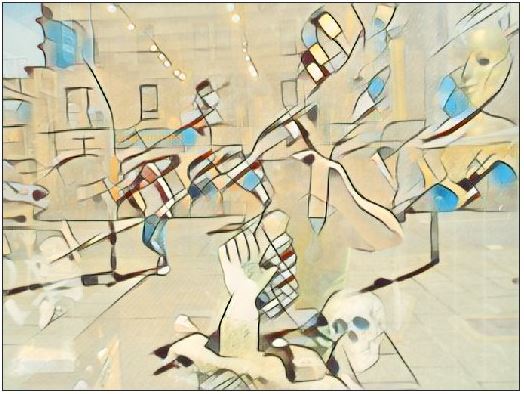

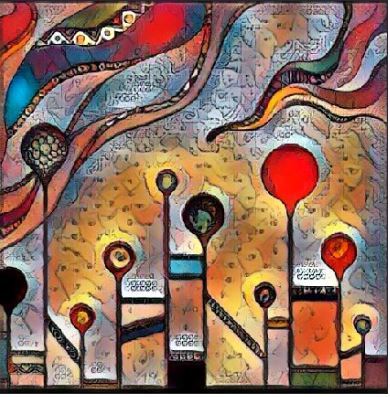

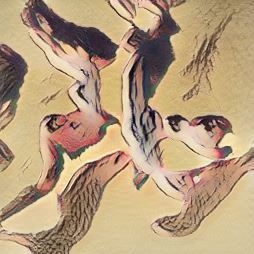

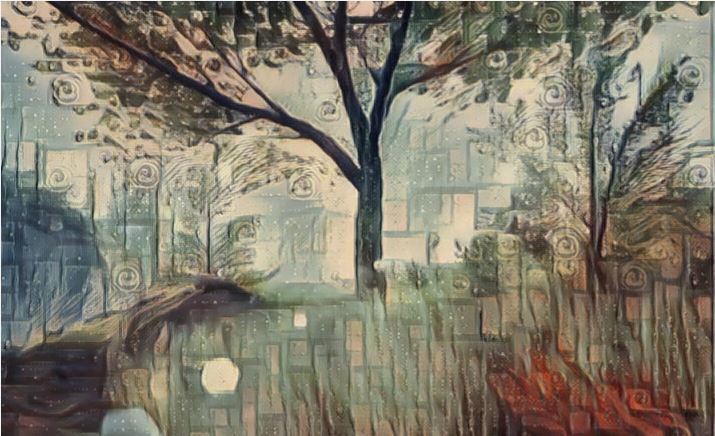

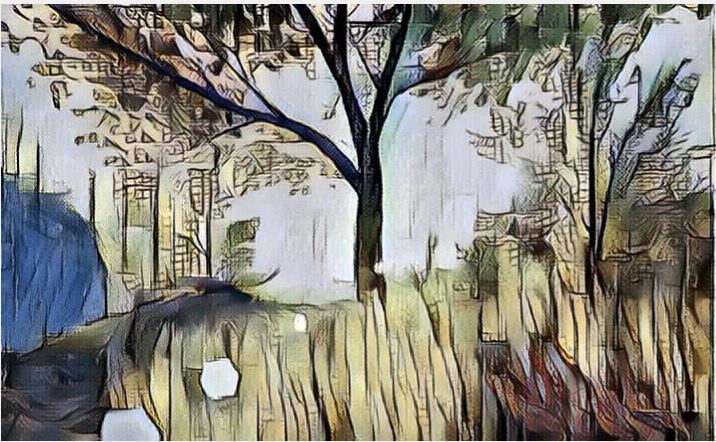

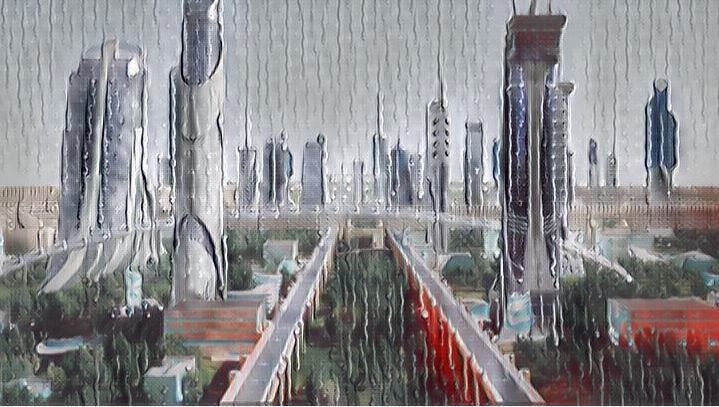

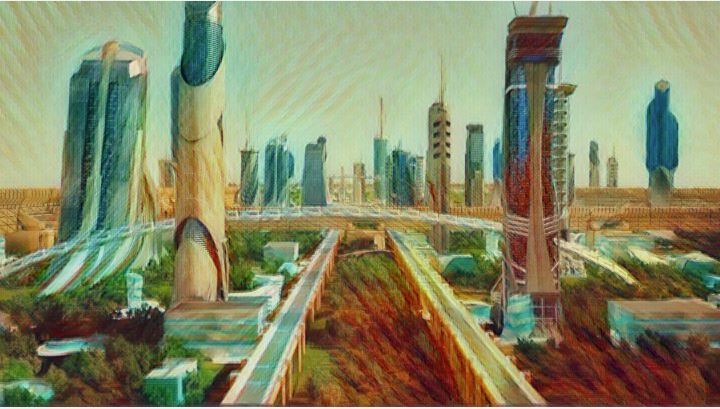

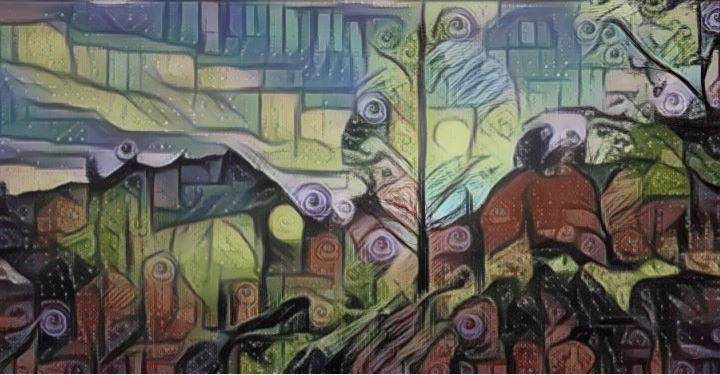

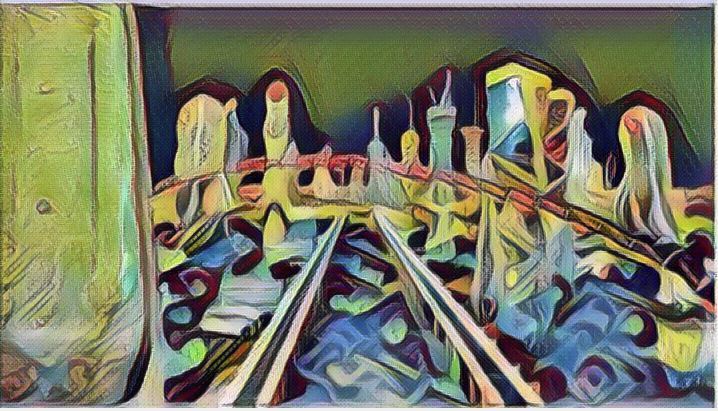

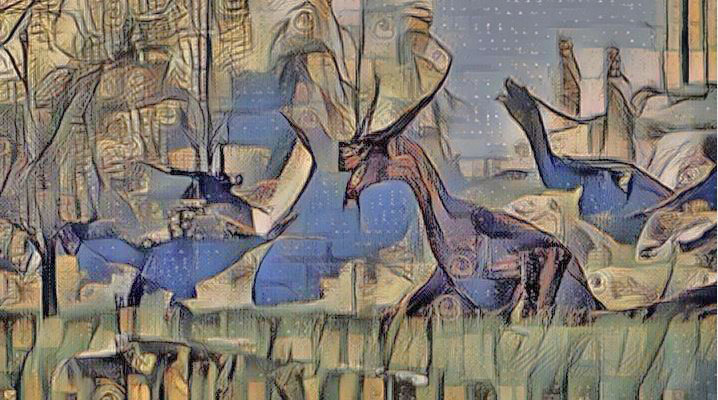

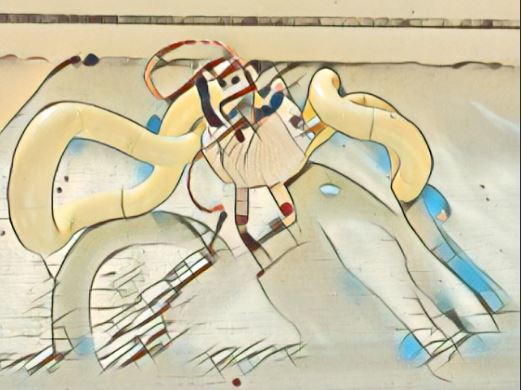

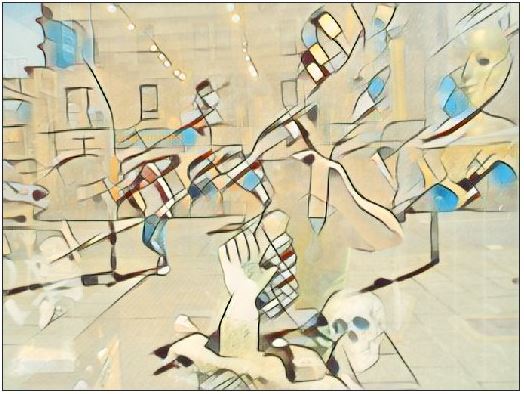

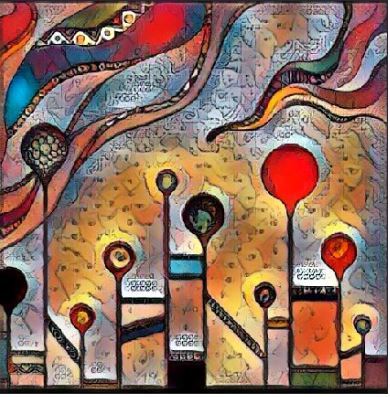

using gan

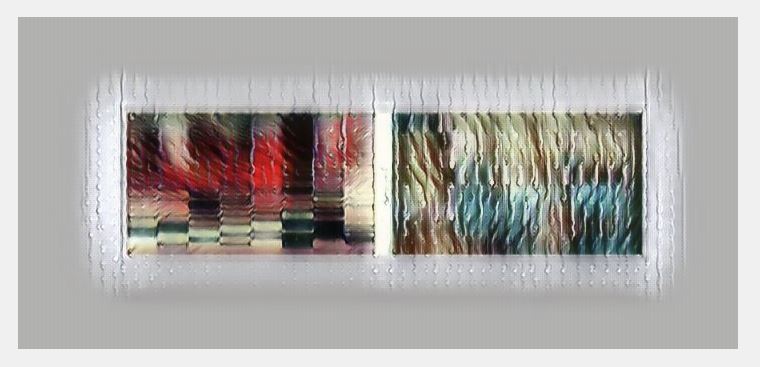

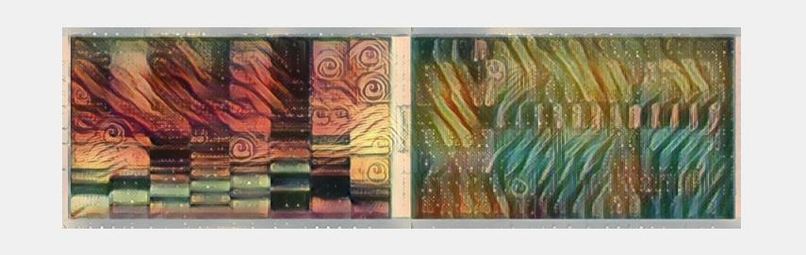

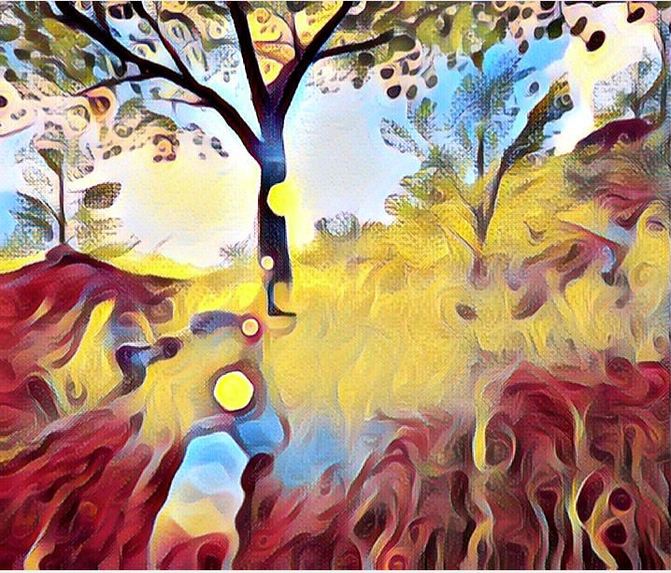

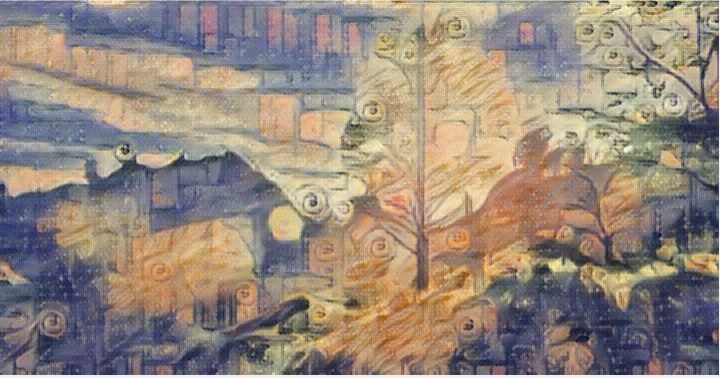

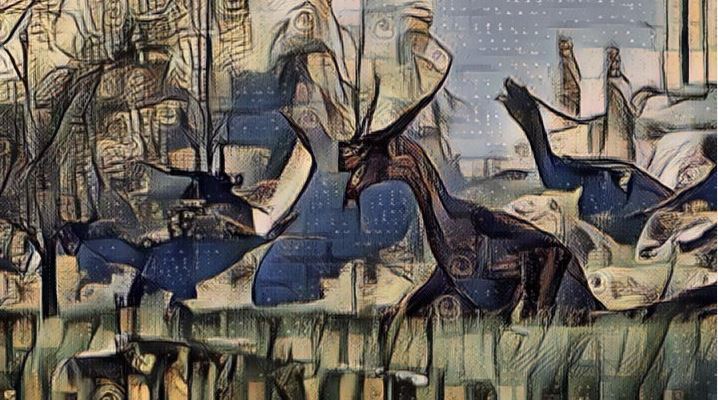

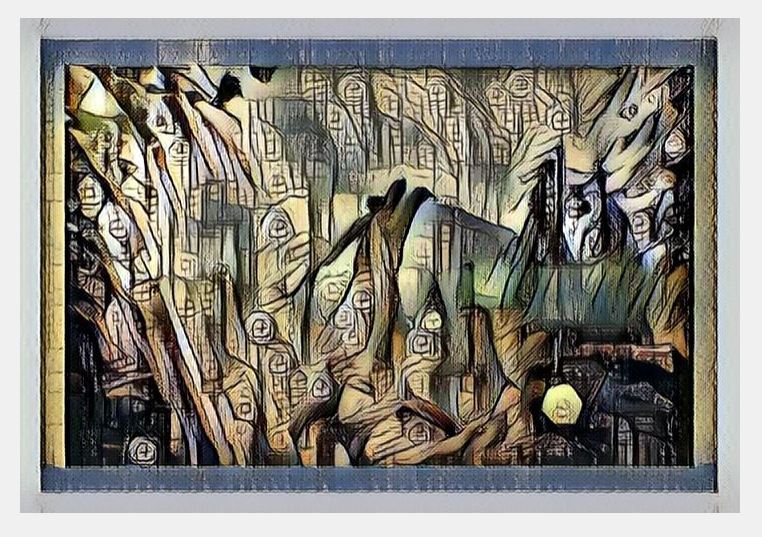

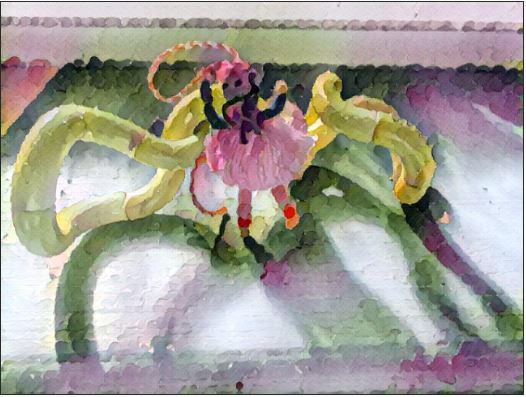

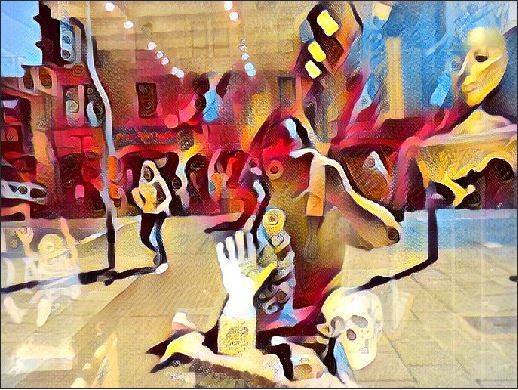

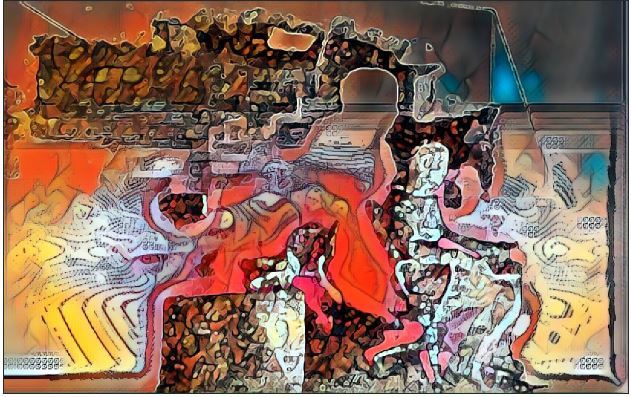

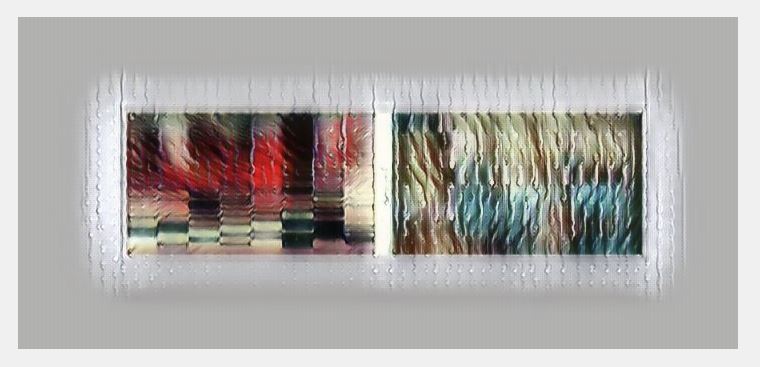

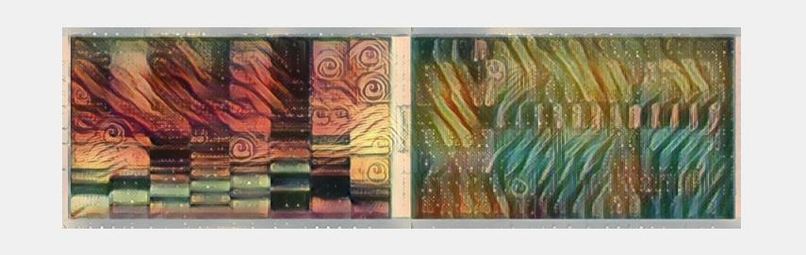

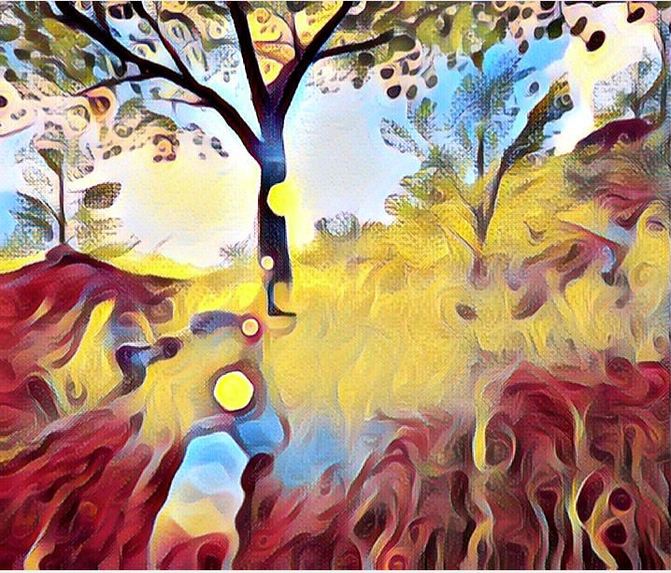

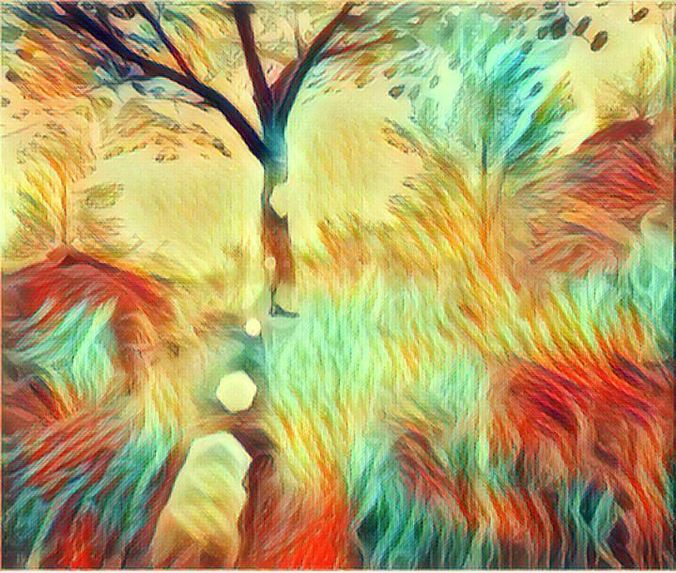

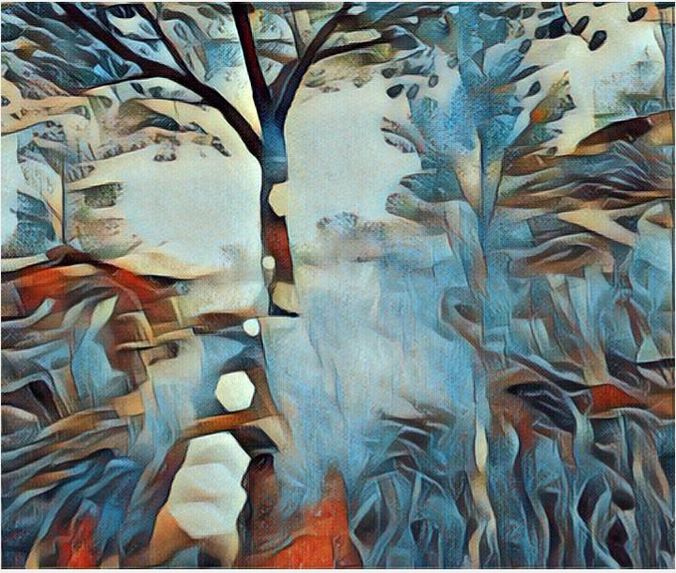

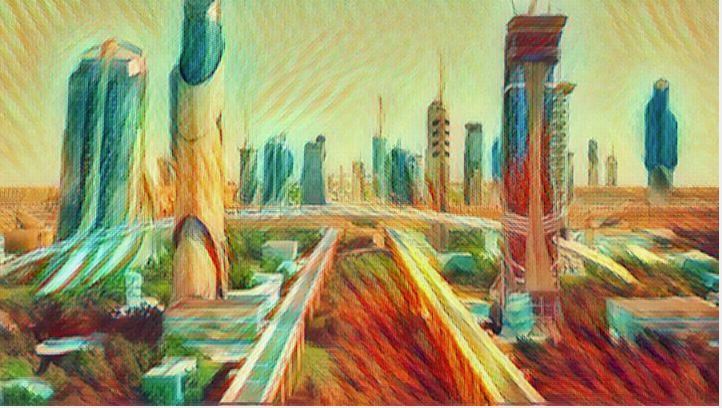

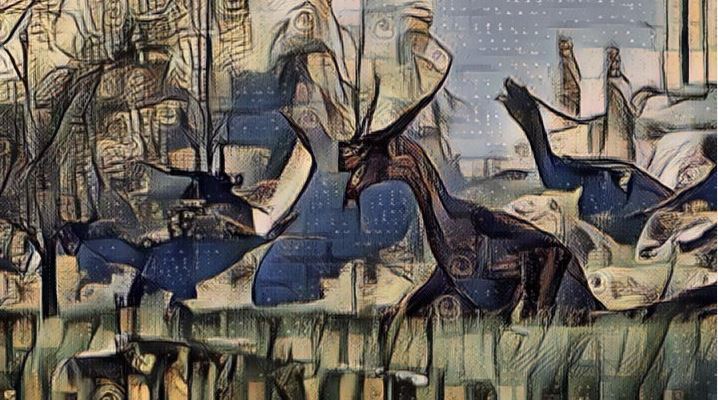

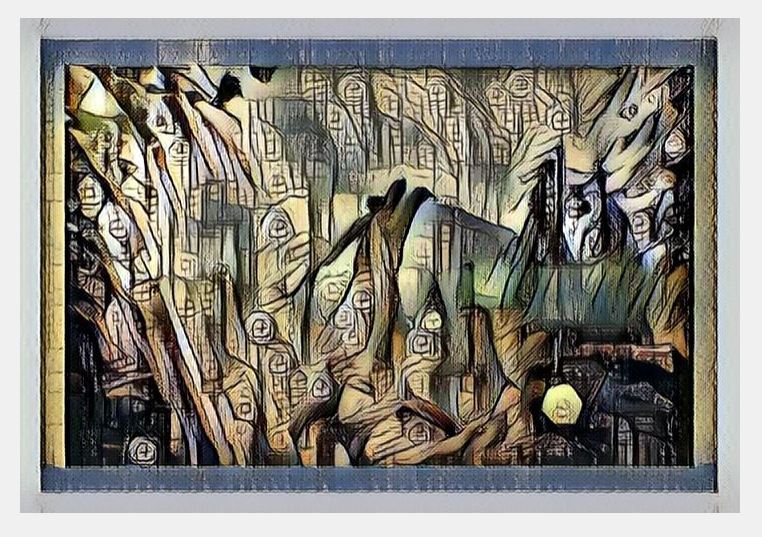

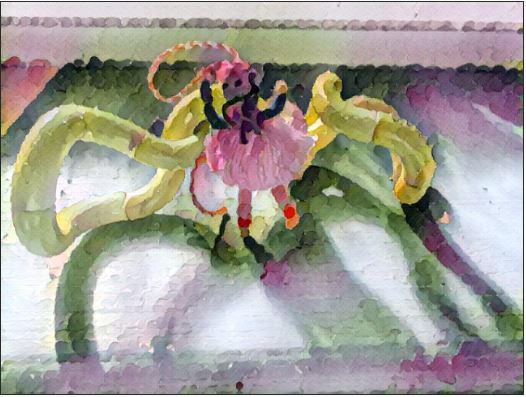

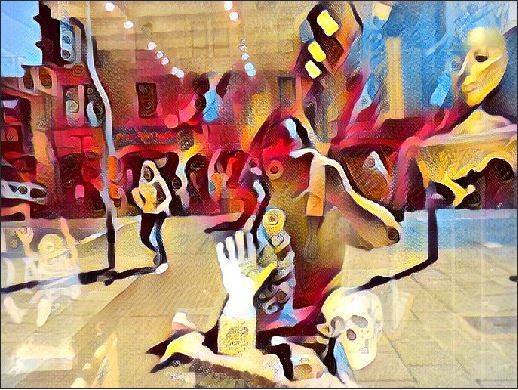

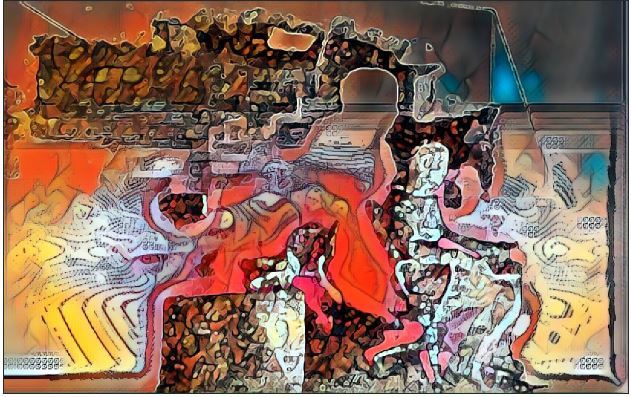

Multi-style GAN applied to a sound spectrum analysis picture of music, made using librosa

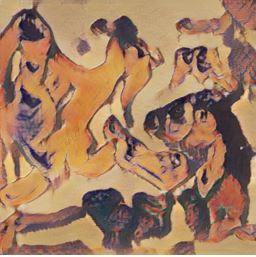

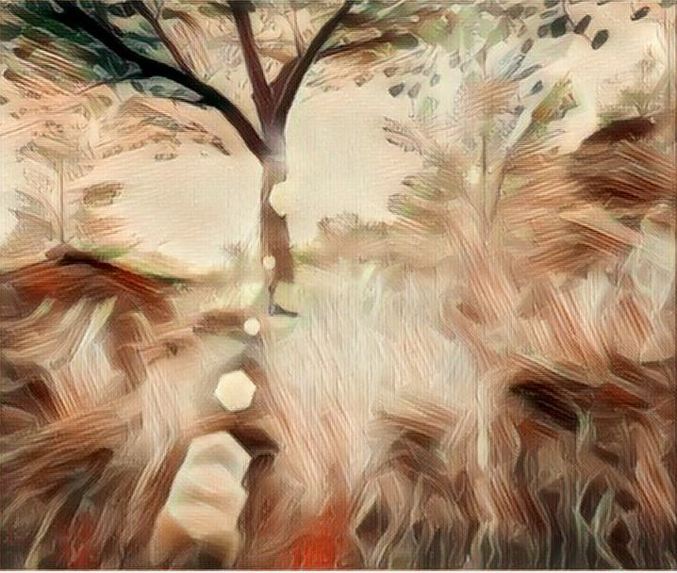

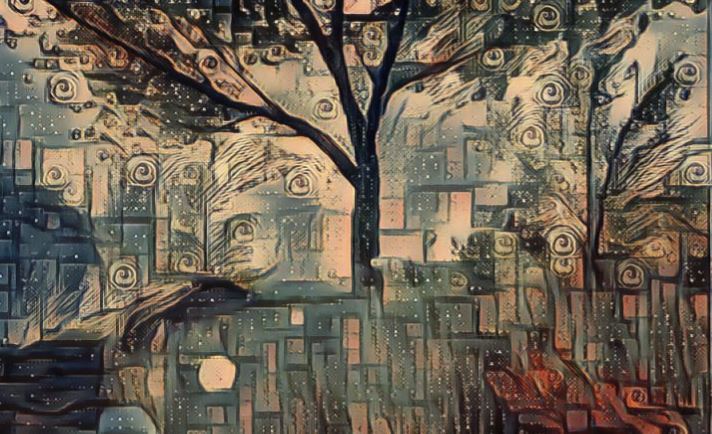

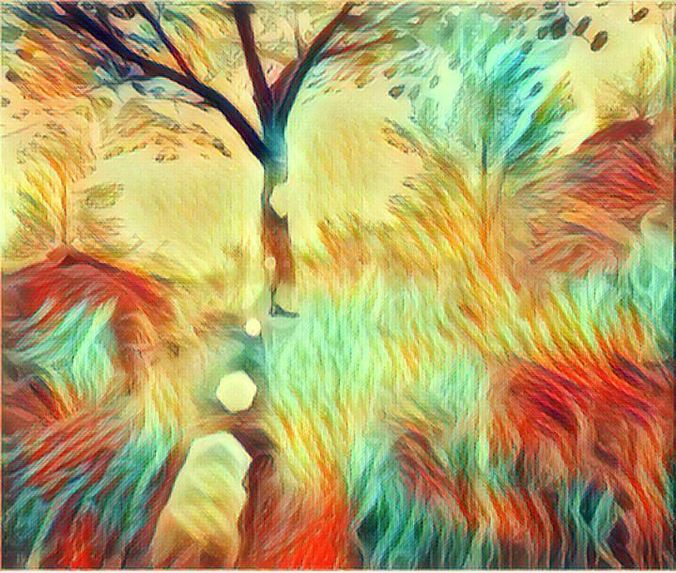

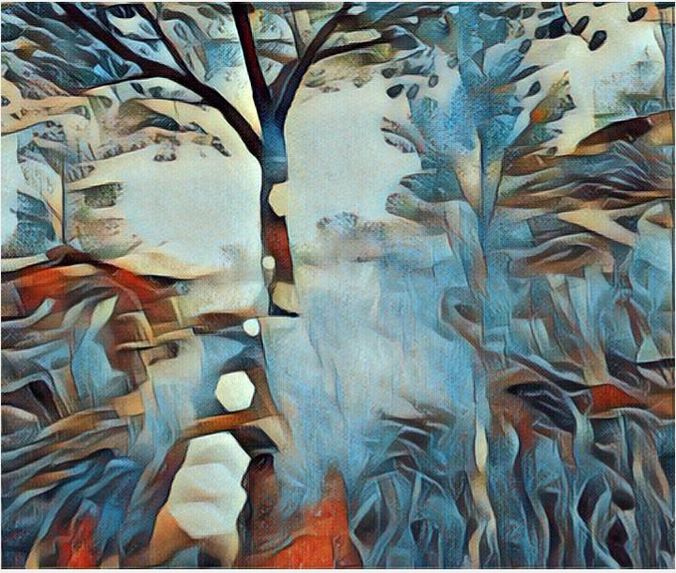

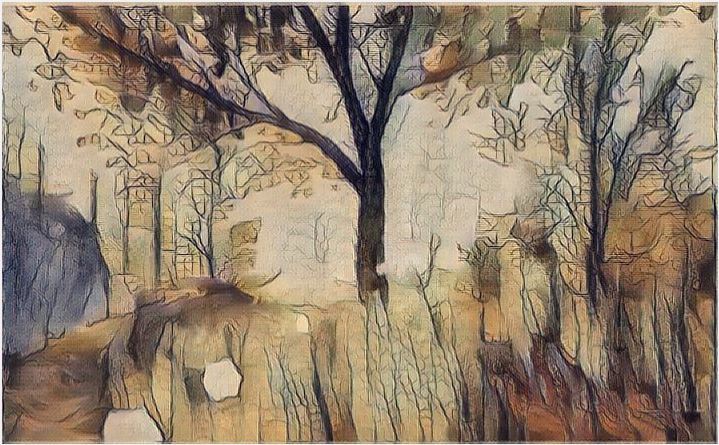

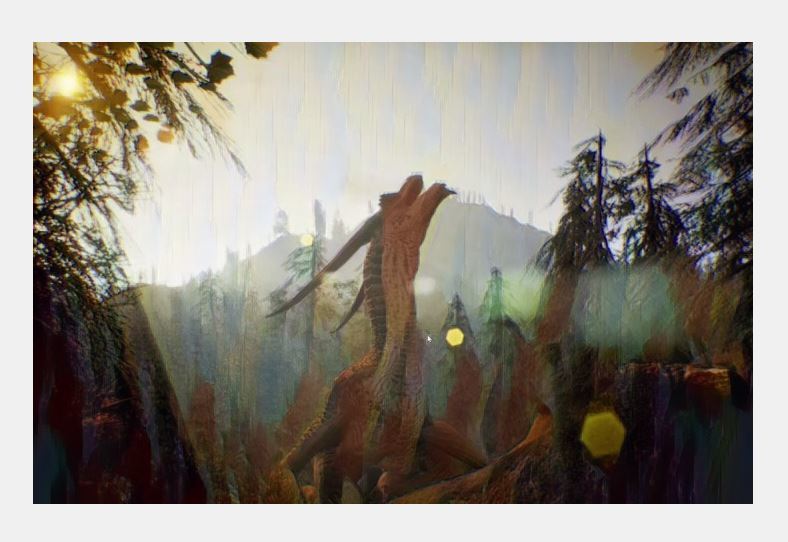

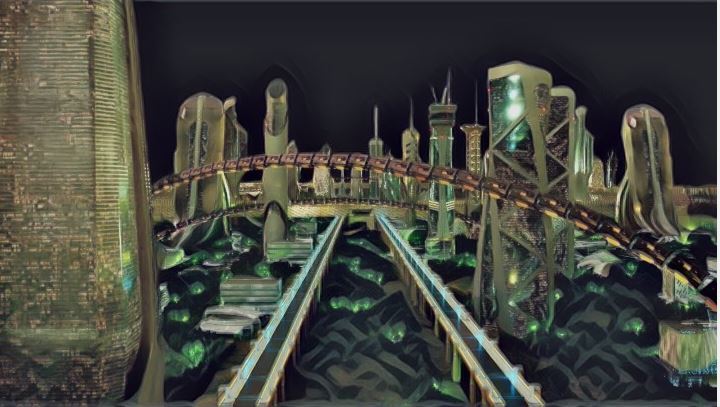

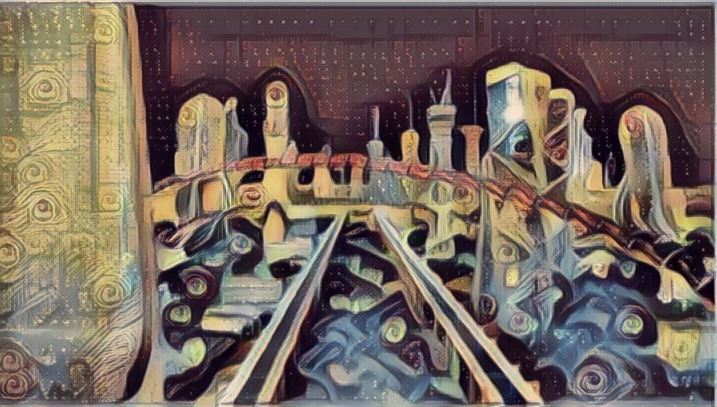

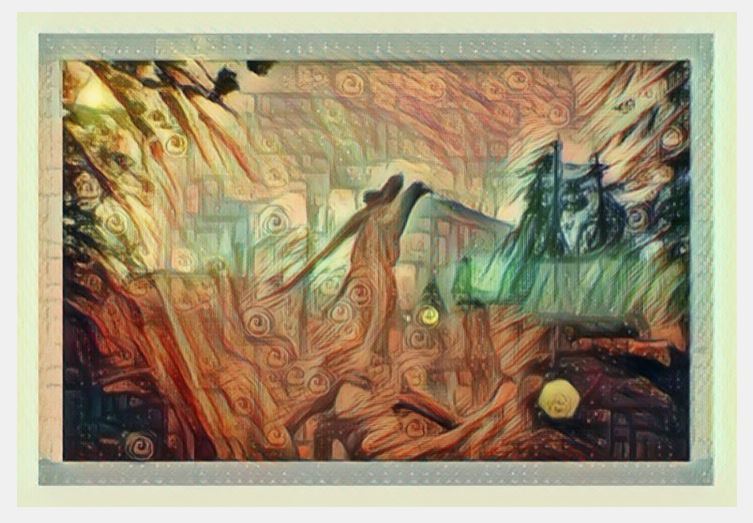

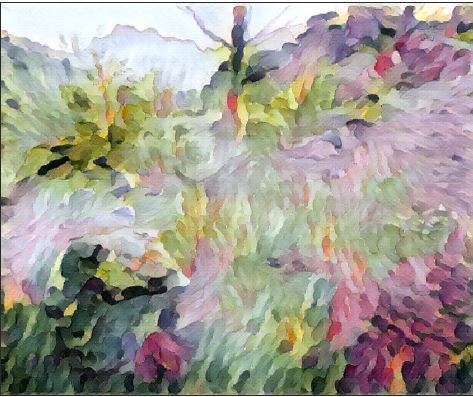

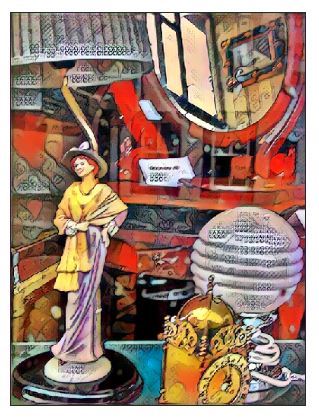

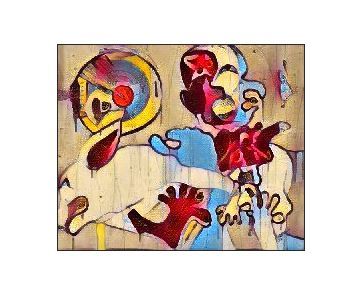

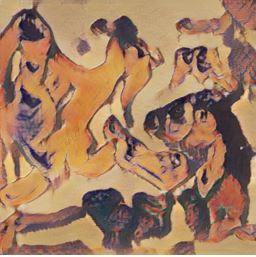

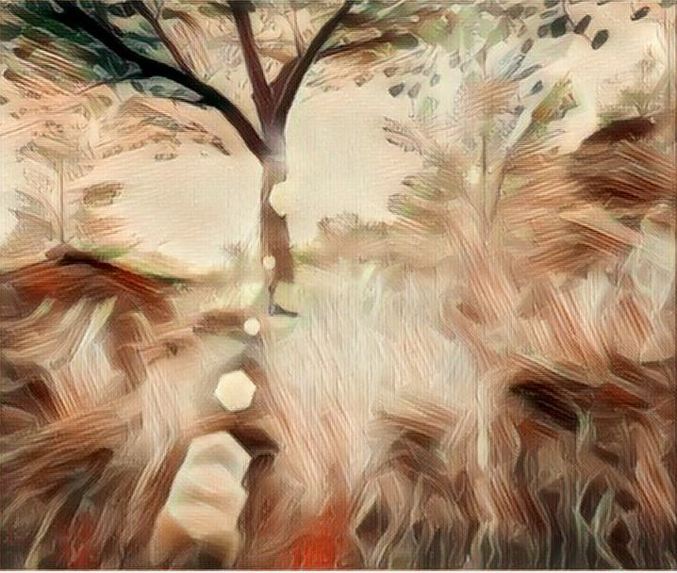

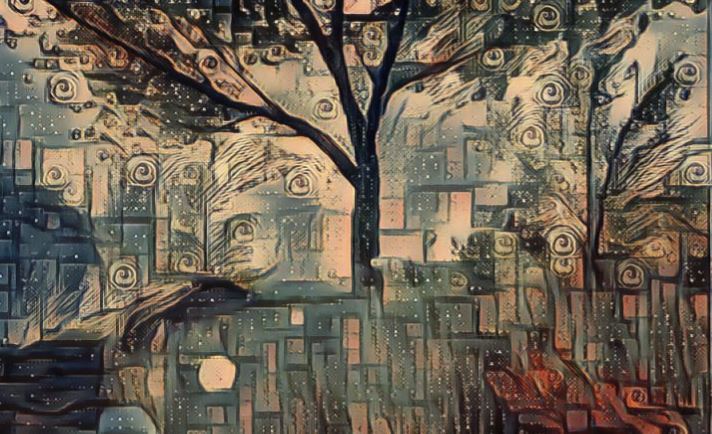

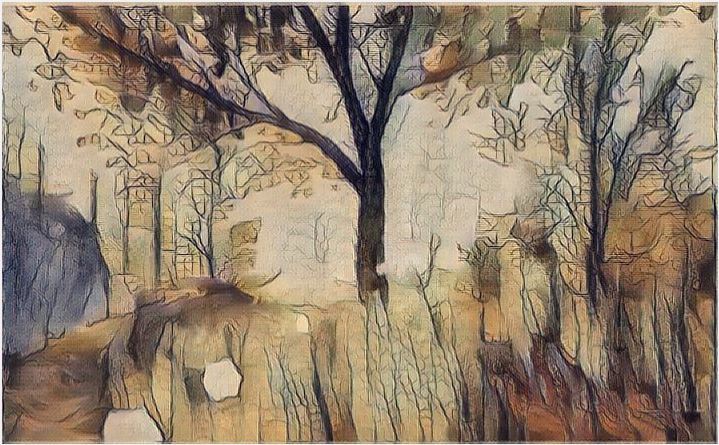

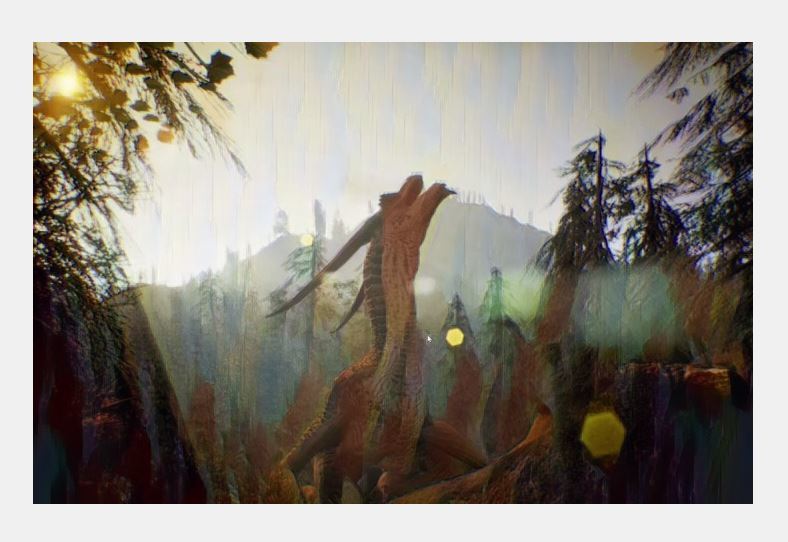

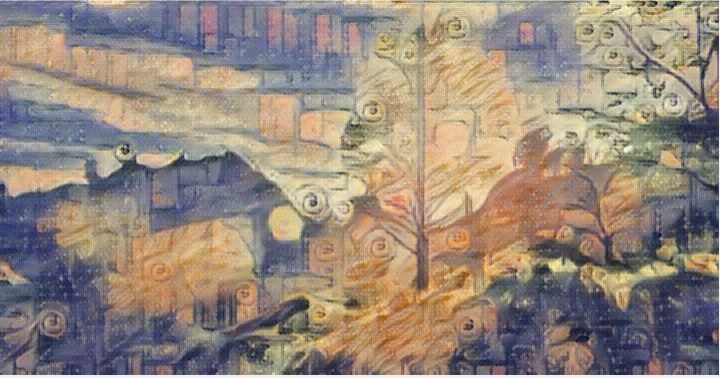

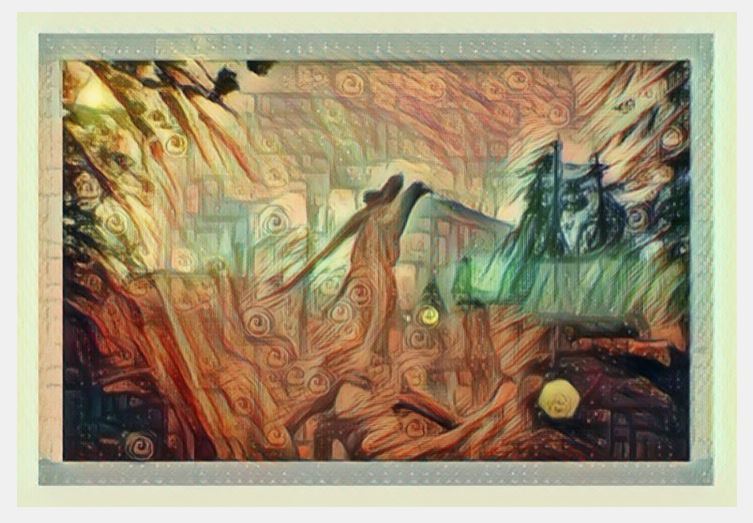

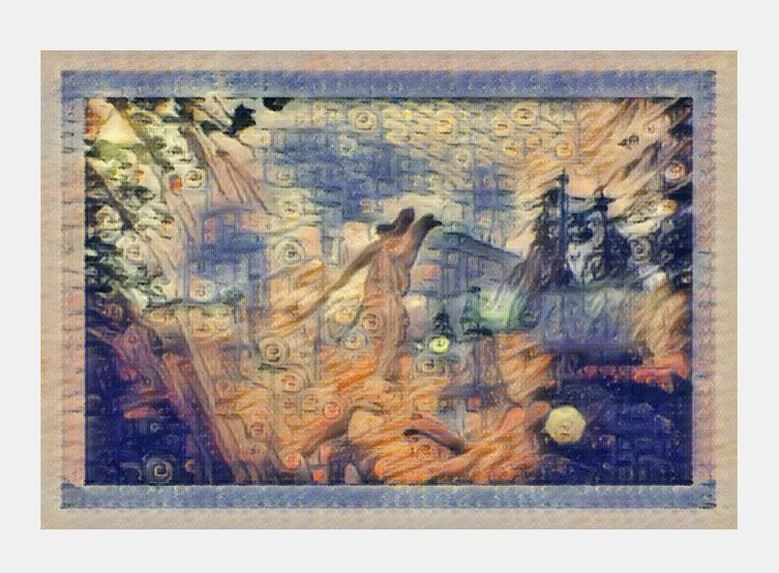

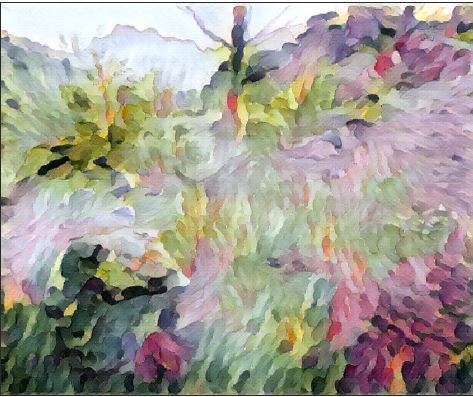

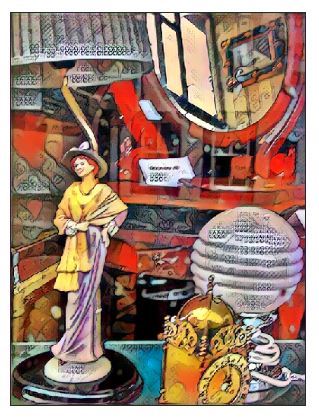

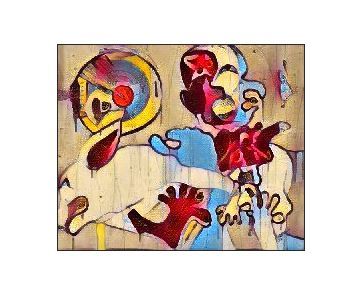

Oil painting I did and put through ML

my dog!

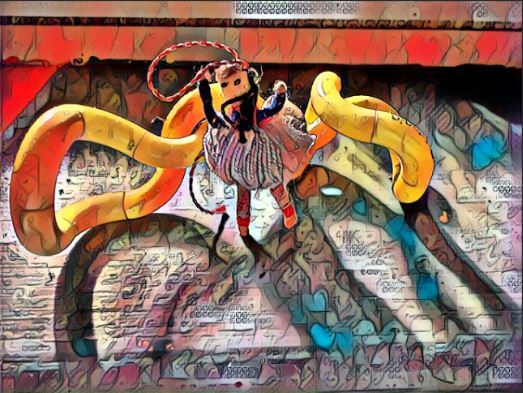

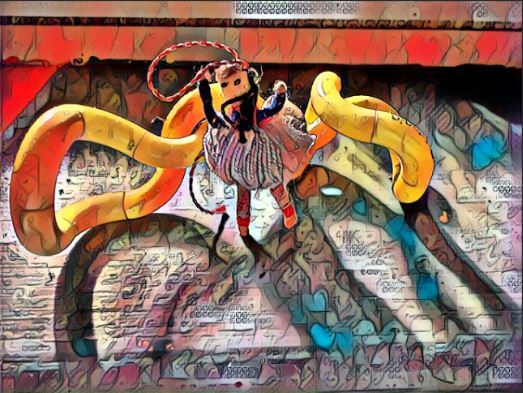

crushing his car

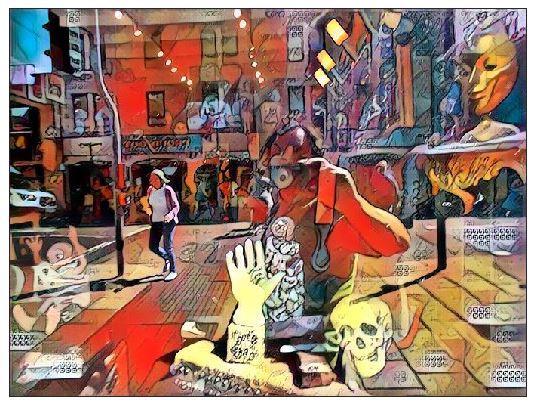

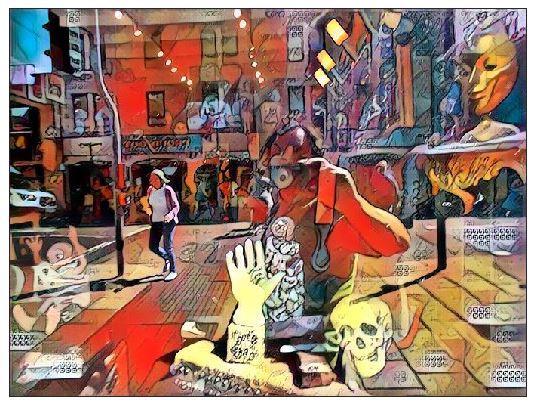

Inspiration

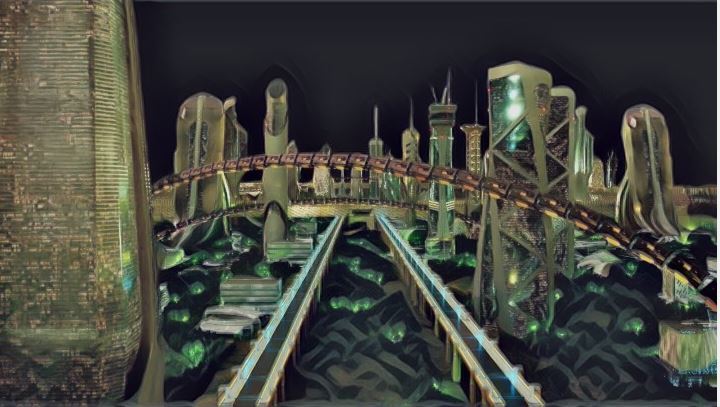

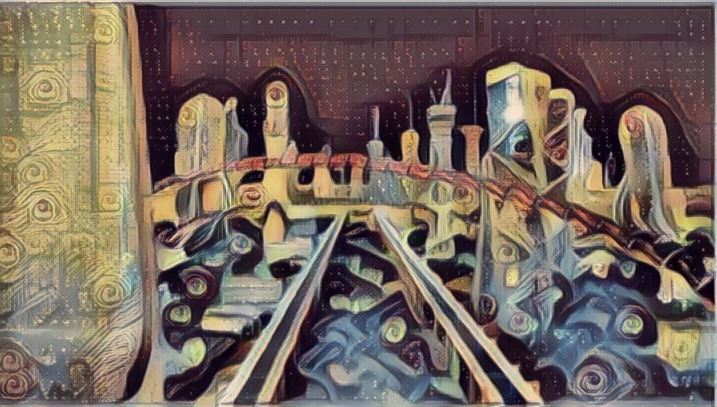

I was inspired by the original description to combine machine learning with visuals and sound. Some 20 years ago I experimented with mixing visual art and computing in old pages whose Java and JavaScript no longer run to full effect in most browsers; these explored visual art and interactive games alongside my music and art (link). They were primarily meant to play preview music and show funky interactive visuals, with some games in Java (link). Around the same time I also looked at virtual reality scenes (VRML) and at interactive speech and games (link, link). Years of making video and music followed (link, link, link, link), as well as programming AI and ML (link).

After learning about StyleGAN as part of the hackathon, I explored various new visual art possibilities and produced a new site mixing the arts with machine learning, which will be presented as part of the submission. These can be seen here; the site also contains some compositions made using Magenta machine learning to transcribe WAV into MIDI. There is more of these to come: https://www.youtube.com/channel/UCzt-1m9T9SjmrJ1seYsGBmA

About this: it is StyleGAN art (multi-filter) with music composed using Onsets and Frames in Python to transcribe WAV to MIDI and improvise on it. I made slight adjustments to quantise it but otherwise kept the machine learning output for this sound. Enjoy, and please visit the other exhibits on here and try the chatbot if you are brave enough.
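
As an illustration of the slight quantising mentioned above, here is a minimal sketch in plain Python. It is not the code used for the track: the `Note` class, the `quantise` function, the tempo, the grid size, and the sample notes are all assumptions made for the example. A `strength` below 1.0 moves onsets only part of the way to the grid, which is one way to tidy the timing while keeping some of the feel of the machine-transcribed output.

```python
from dataclasses import dataclass


@dataclass
class Note:
    pitch: int      # MIDI pitch number, 0-127
    start: float    # onset time in seconds
    end: float      # release time in seconds


def quantise(notes, bpm=120.0, steps_per_beat=4, strength=1.0):
    """Pull each note onset towards the nearest grid line.

    strength=1.0 snaps fully to the grid; smaller values keep more of
    the original (machine-transcribed) timing. Durations are preserved.
    """
    step = 60.0 / bpm / steps_per_beat  # grid spacing in seconds
    result = []
    for n in notes:
        nearest = round(n.start / step) * step
        new_start = n.start + strength * (nearest - n.start)
        result.append(Note(n.pitch, new_start, new_start + (n.end - n.start)))
    return result


# Hypothetical transcription output with slightly off-grid onsets.
raw = [Note(60, 0.02, 0.48), Note(64, 0.51, 0.97), Note(67, 1.13, 1.60)]
light = quantise(raw, bpm=120, steps_per_beat=4, strength=0.5)
for note in light:
    print(note)
```

With `strength=0.5` each onset moves halfway to its grid line; raising it towards 1.0 gives a stricter, more mechanical result.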

The project is entirely my own work for this AIML hackathon, although help would have been welcome: time commitments meant I couldn't do as much as I originally wanted, and the artistic inspiration I took from the new things I was taught meant I didn't fully complete all my initial goals.

This portal consists entirely of my own music (in various forms), accompanied by various art forms related to or created by machine learning. It explores different StyleGANs and the ways in which I can see the mind and art merging in sound and vision in a unique and unusual way. Some videos also show, pictorially and artistically, different uses of AIML, for example in other vision analysis. The work also encompasses visual art, animation, my photography, and music scenes and experiments made in different ways: entirely played, entirely programmed, or made with an online intelligence bot.

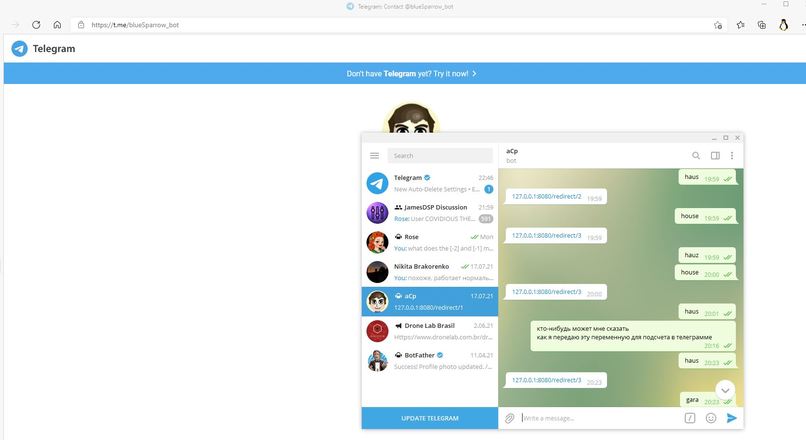

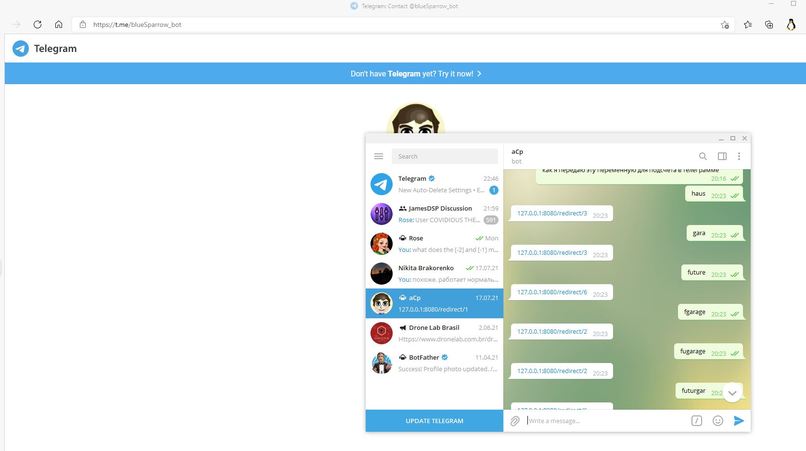

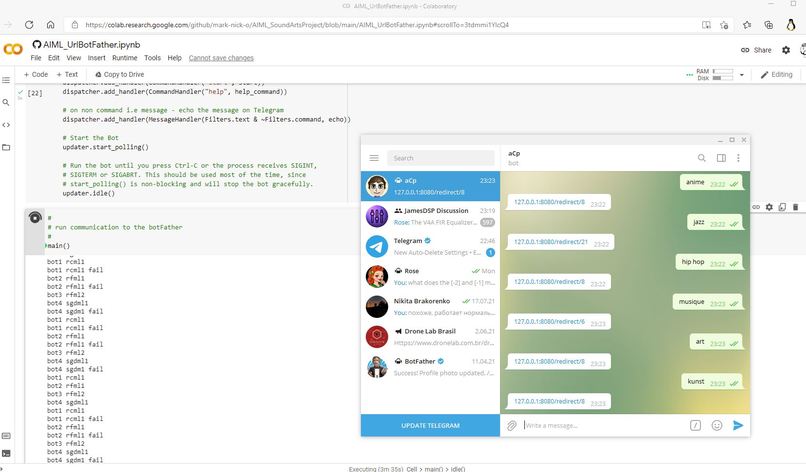

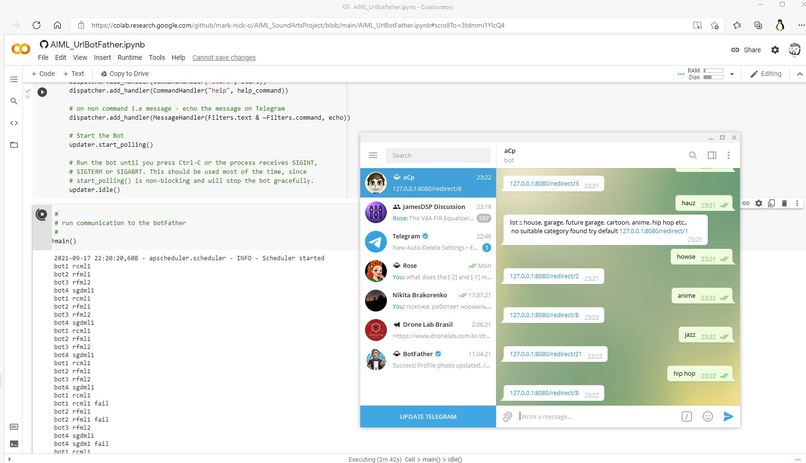

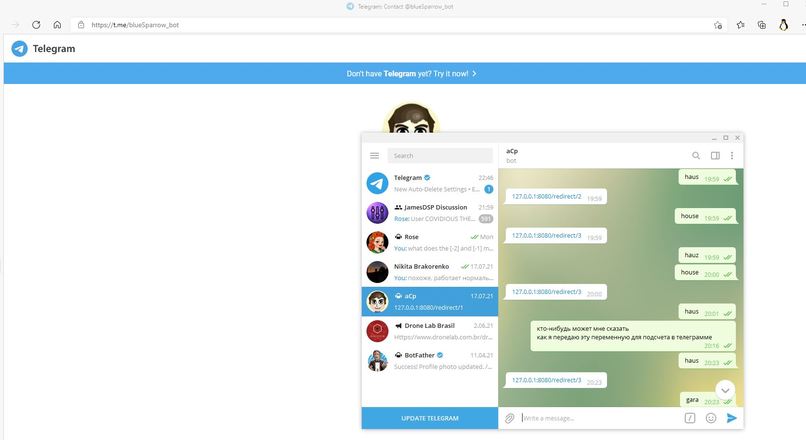

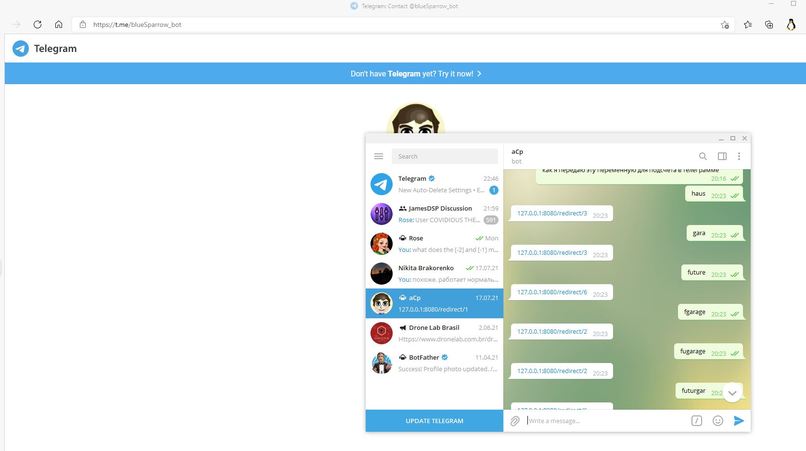

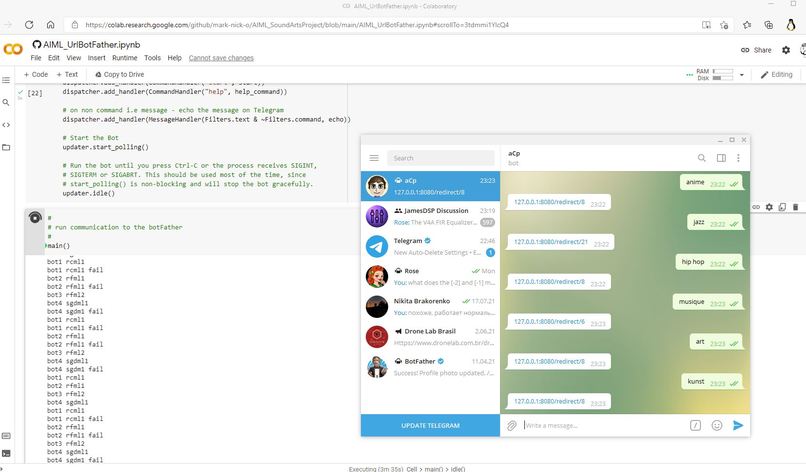

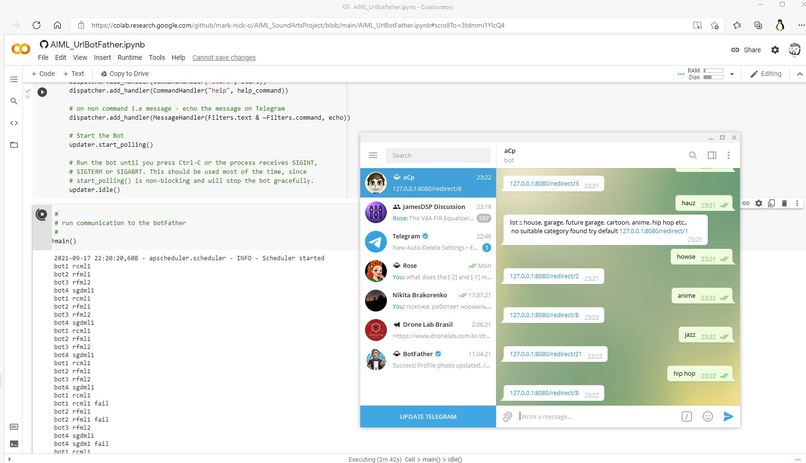

The GitHub repository accompanying it has a messenger chatbot that uses NLP to decide which video from this site, and from other sites encompassing my work, you are most likely looking for, and then sends the appropriate link. I have made two versions of the Python script: one gives you the link directly; the other goes through a redirection server, allowing you to reconfigure it. The server is not hosted at present. Some other Python scripts I used in the course of the musical and artistic work are also listed on the site (they were modified from programs made by others).
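
The idea of matching a message to a video can be sketched roughly as below. This is not the repository's script: the catalogue entries, the `example.com` URLs, and the function names are hypothetical, and simple word overlap stands in for whatever NLP the real bot uses. The optional `redirect_base` argument mimics the second variant, where the reply points at a redirection server instead of the video itself.

```python
import re

# Hypothetical catalogue: titles, keywords, and URLs are placeholders.
CATALOGUE = [
    {"title": "StyleGAN spectrogram art",
     "keywords": "stylegan gan spectrogram librosa sound spectrum art",
     "url": "https://example.com/videos/spectrogram"},
    {"title": "Oil painting through ML",
     "keywords": "oil painting filter style art",
     "url": "https://example.com/videos/oil-painting"},
    {"title": "WAV to MIDI composition",
     "keywords": "magenta onsets frames wav midi music composition piano",
     "url": "https://example.com/videos/wav-to-midi"},
]


def tokens(text):
    """Lower-case the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_match(message, catalogue=CATALOGUE):
    """Return the entry sharing the most words with the message, or None."""
    words = tokens(message)
    scored = [(len(words & tokens(e["title"] + " " + e["keywords"])), e)
              for e in catalogue]
    score, entry = max(scored, key=lambda pair: pair[0])
    return entry if score > 0 else None


def reply(message, redirect_base=None):
    """Build the reply: a direct link, or one routed via a redirect server."""
    entry = best_match(message)
    if entry is None:
        return "Sorry, I could not find a matching video."
    if redirect_base:  # second variant: go through a redirection server
        slug = entry["url"].rsplit("/", 1)[-1]
        return f"{redirect_base}/{slug}"
    return entry["url"]


print(reply("show me the oil painting one"))
print(reply("music from wav to midi", redirect_base="http://localhost:8000"))
```

Word overlap is crude (it ignores synonyms and word order), but it keeps the sketch dependency-free; a trained text classifier or sentence embeddings could replace `best_match` without changing `reply`.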

As well as the exploration of sound production and art using AIML, an exploration of language was also made: the Miimi API has been used to speak and understand Japanese via a RESTful API, and this was used as a vocal in one of the music tracks. Other speech synthesis and translation work was done to make requests to the chatbot verbal, but this has not yet been completed into a demonstration.

What it does

It demonstrates how language can be used to choose among explorations of machine learning in art and music creativity, starting from a text input to the messenger bot. Speech processing for choosing the video verbally was also worked on, but limited resource time (only myself) and deployment problems mean there is most likely no website, just a Python script you can run locally.

How we built it

It was built using Python and C++, along with various multimedia and sound applications, funky and novel use of electronic synthesisers, and manipulation of played instrument sounds, as well as photographic art, contemporary artistic design in Photoshop and Blender, and actual drone camera footage. The server can be run locally and loaded with the cloud-hosted URLs of the visual arts and music made by the author; the Jupyter notebook can then be run and will communicate with the BotFather at @blueSparrow_bot. This will be refined, and speech recognition and other features added, during the project timescale.
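
A locally run redirection server of the kind described might look like the following sketch, using only the Python standard library. It is an assumption about the shape of such a server, not the project's actual code: the `REDIRECTS` table, its `example.com` URLs, and the names `resolve`, `RedirectHandler`, and `serve` are all made up for illustration.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical slug -> URL table; edit it to repoint links without
# touching the bot script.
REDIRECTS = {
    "spectrogram": "https://example.com/videos/spectrogram",
    "oil-painting": "https://example.com/videos/oil-painting",
}


def resolve(path, table=REDIRECTS):
    """Map a request path such as '/oil-painting' to its target URL, or None."""
    return table.get(path.strip("/"))


class RedirectHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        target = resolve(self.path)
        if target is None:
            self.send_error(404, "Unknown video")
            return
        self.send_response(302)  # temporary redirect, so the table can change
        self.send_header("Location", target)
        self.end_headers()


def serve(port=8000):
    """Run the redirect server on localhost until interrupted."""
    HTTPServer(("127.0.0.1", port), RedirectHandler).serve_forever()
```

Calling `serve()` starts the server on port 8000; a request to `http://127.0.0.1:8000/oil-painting` is then answered with a 302 pointing at the configured URL. Keeping the table separate from the bot is what makes the links reconfigurable.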

Challenges we ran into

Working out how to do things. Managing to spend time on it when I have other things taking my time.

Accomplishments that we're proud of

Making new artwork in a novel way, using Python machine learning as a filter or skin on the input picture, by entering words to create pictures, and by uploading music into the AIML model. Also using the composition transcription of WAV to MIDI in Magenta, which I was not aware of for most of the time I have played or drawn the stimulus. Learning more about programs in Python and machine learning, and feeling more confident using or changing them.

What we learned

Python and Jupyter, StyleGAN, and Transformers. How far machine learning has been developed in interesting and novel ways. How to transcribe WAV to MIDI using Onsets & Frames. Python programming for NLP. StyleGAN, librosa, Magenta, Mimi, HybridNSynth, foto GoArt, selfie2Anime.

What's next for ringroseTest

To try to deploy the bot and also interface it to other input systems, such as other messenger services. Hopefully to build a working example and exhibit some of the work. To enjoy being creative.
