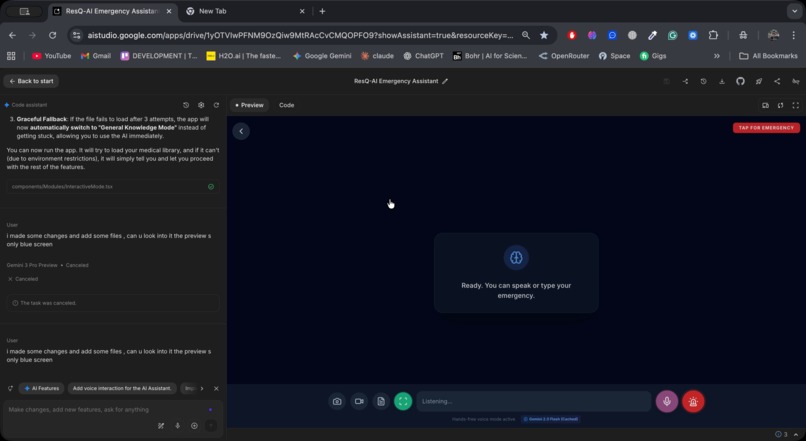

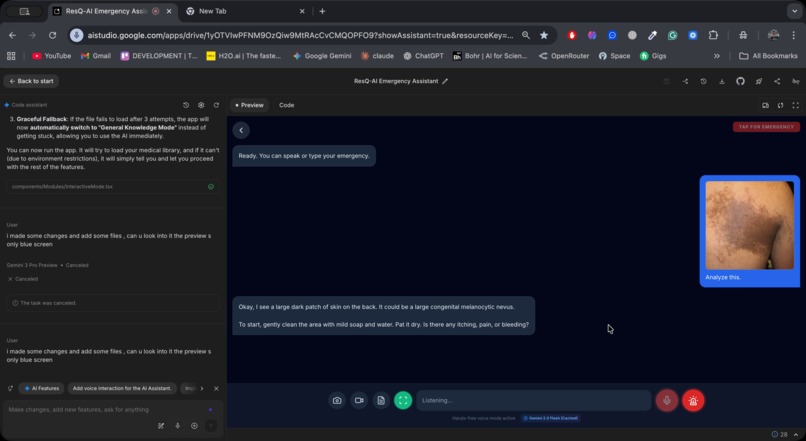

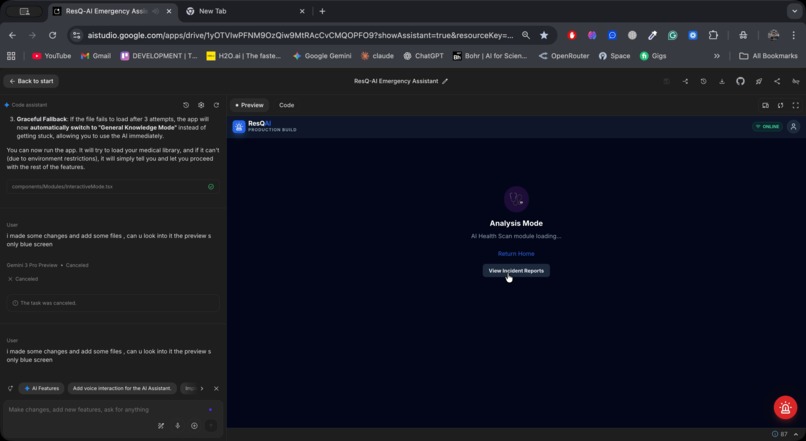

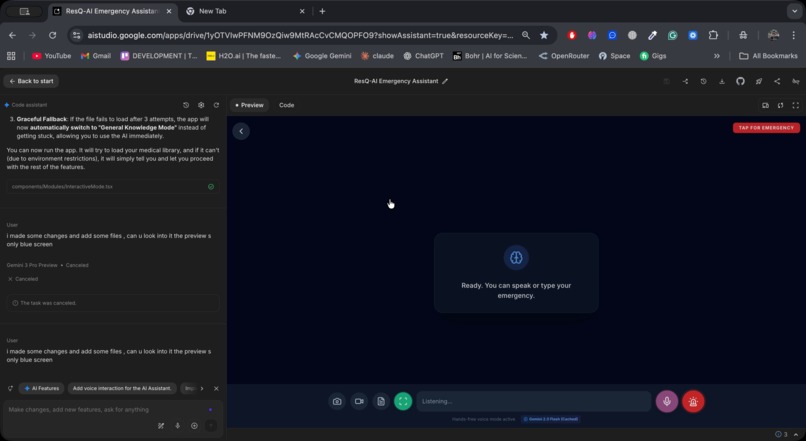

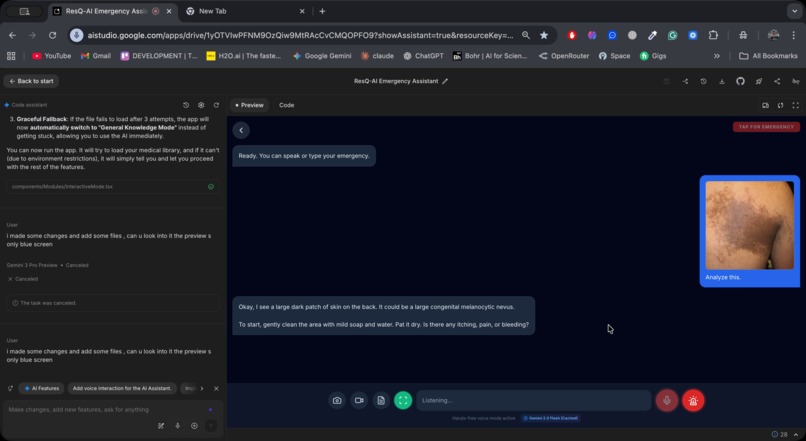

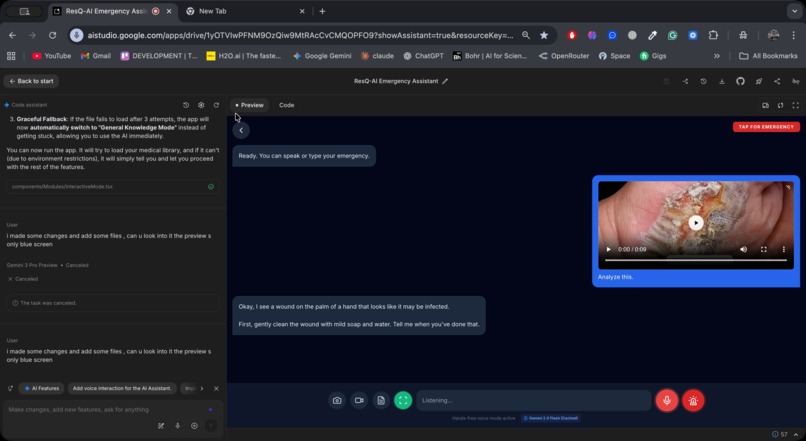

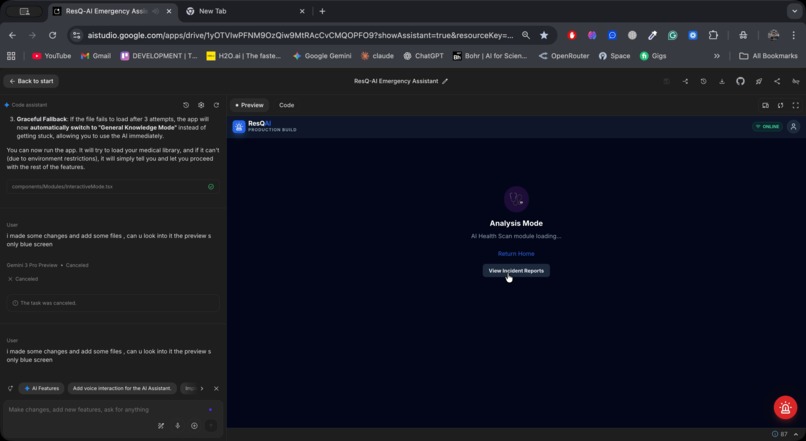

Analysis Mode

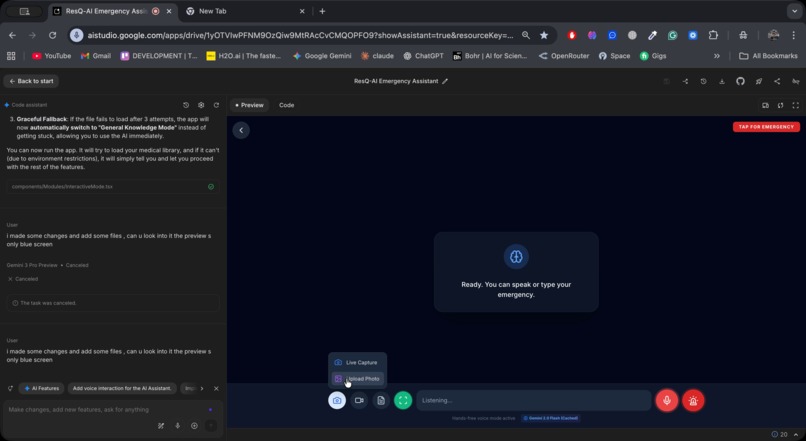

Video and Photo options available within chat

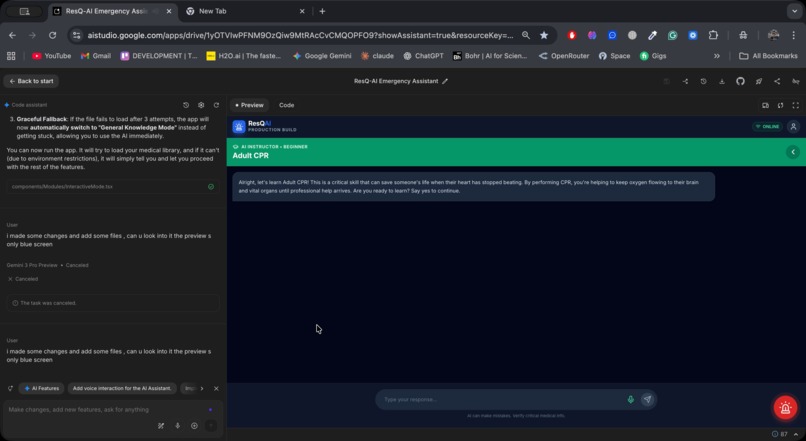

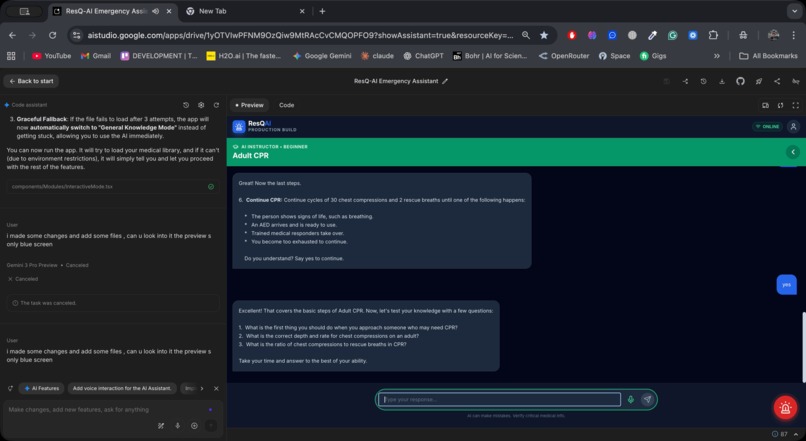

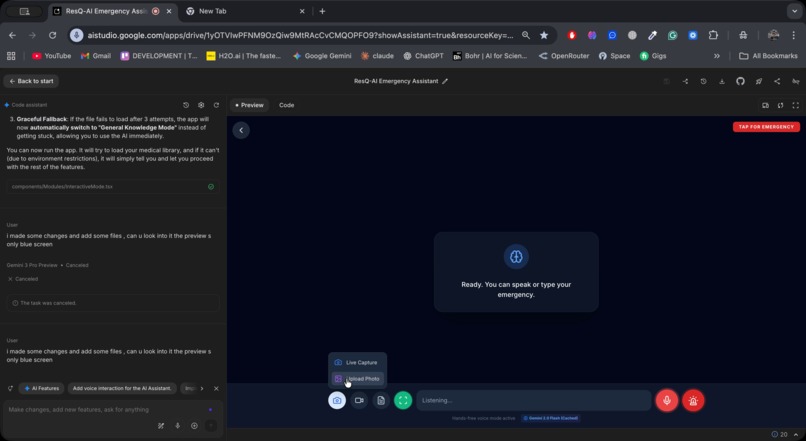

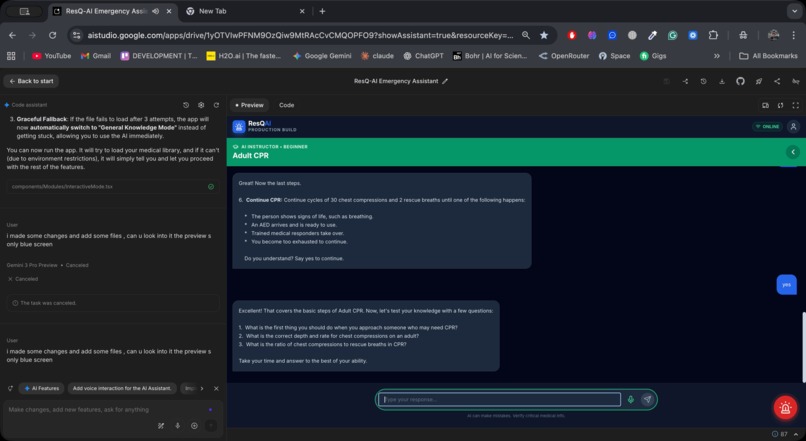

Daily Lesson

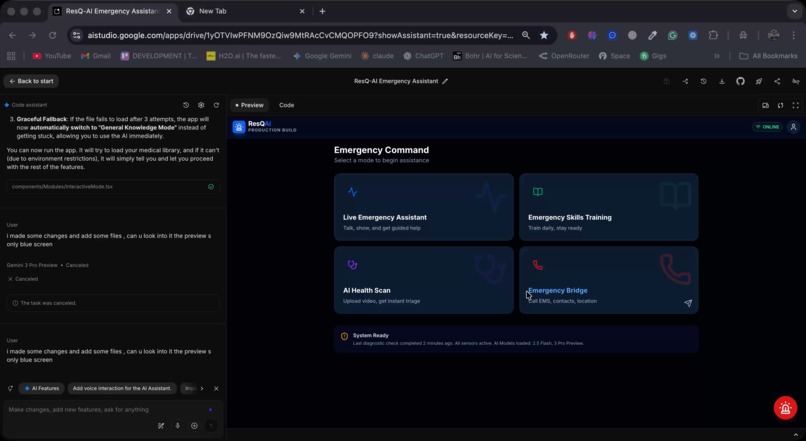

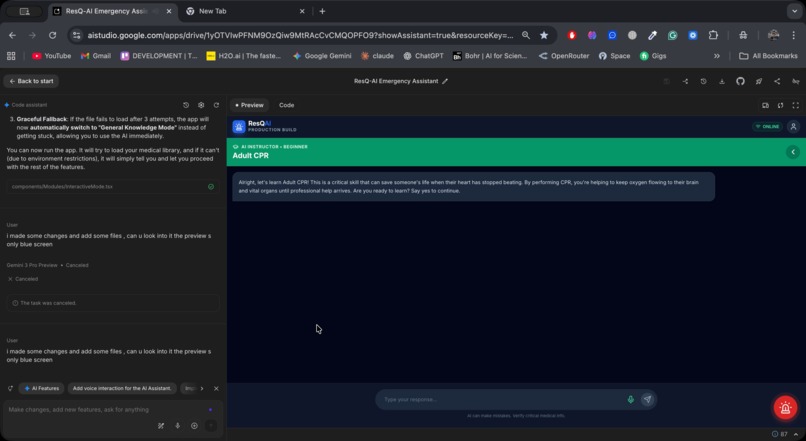

Dashboard

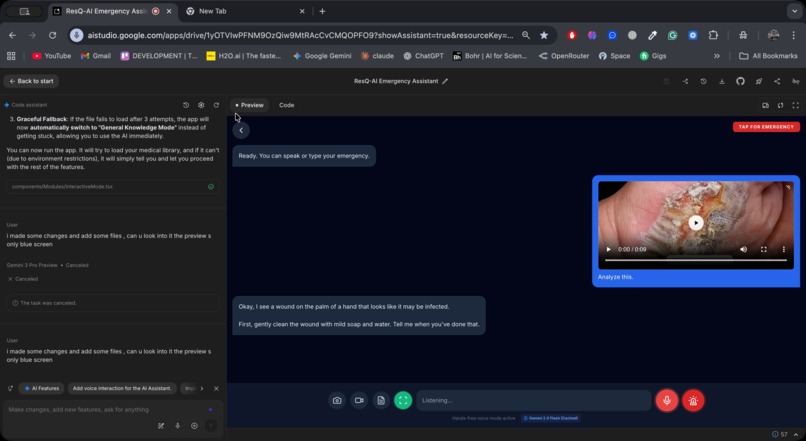

Photo Analysis

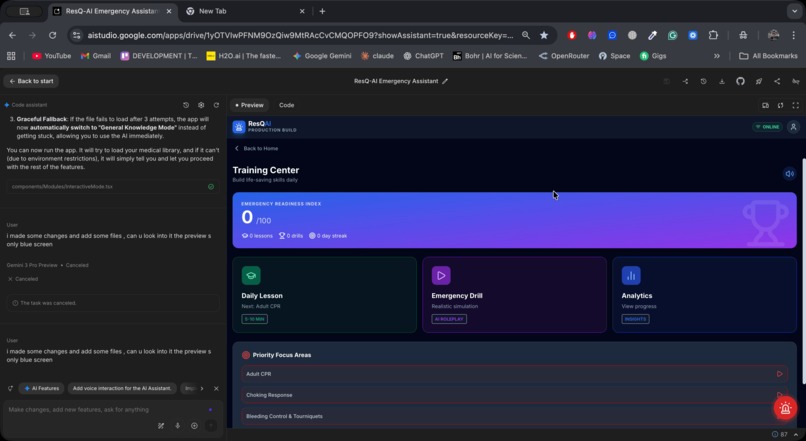

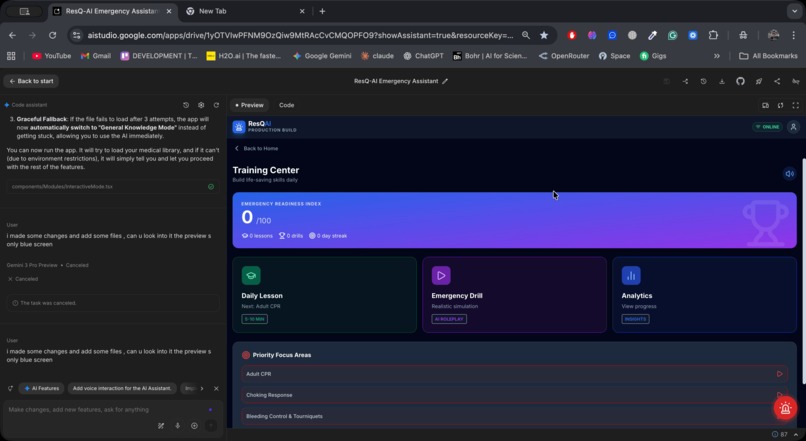

Learning Mode

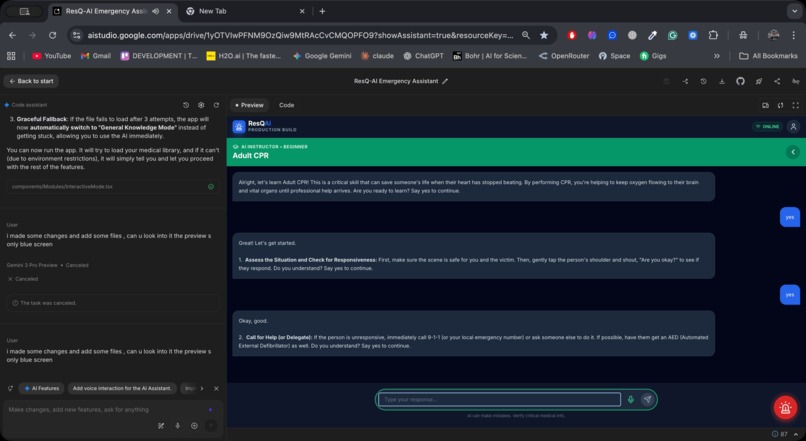

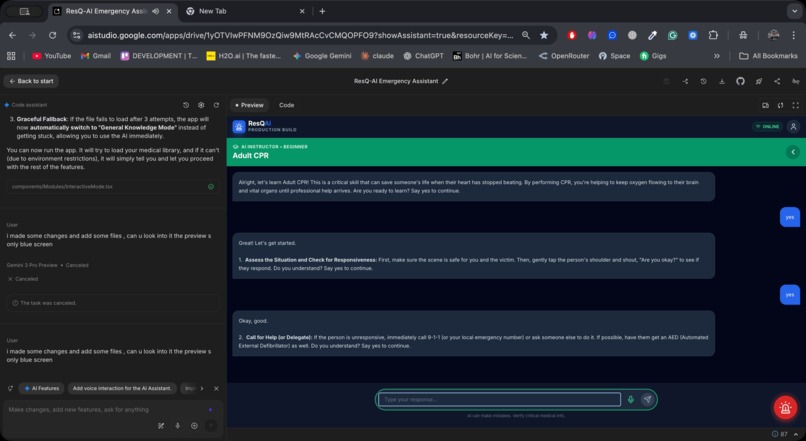

Daily Lesson (contd.)

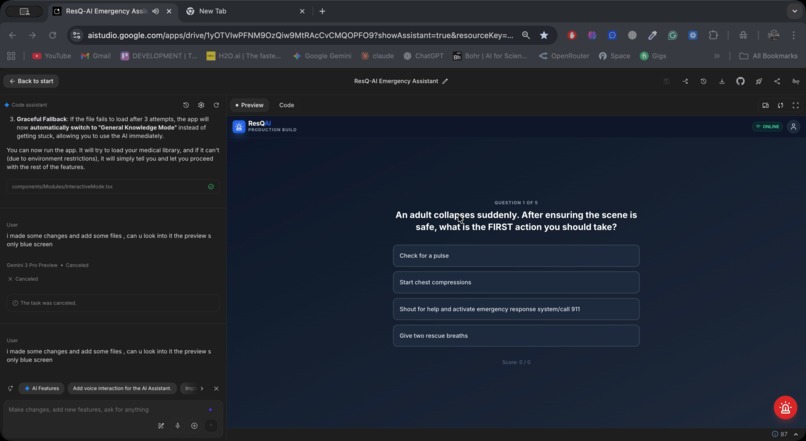

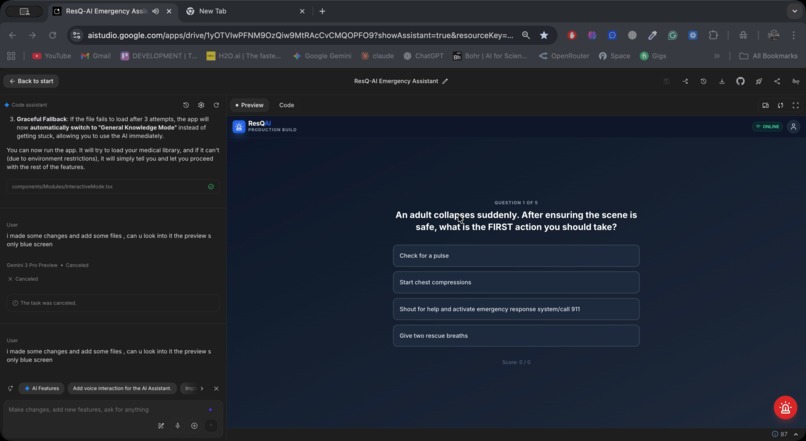

Daily Quiz

Daily Lesson (contd.)

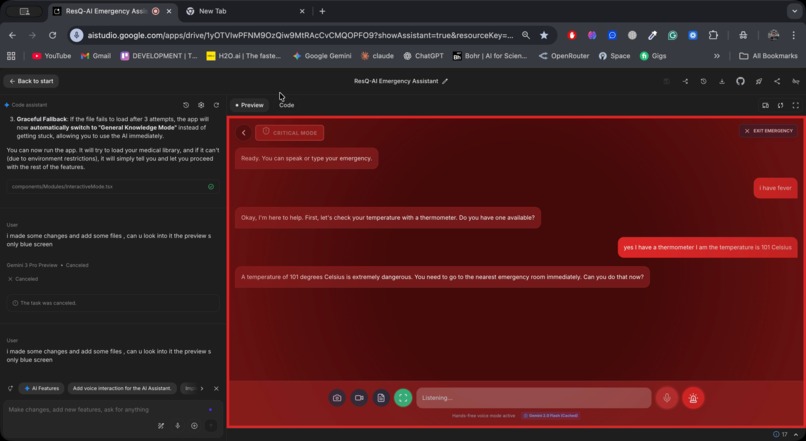

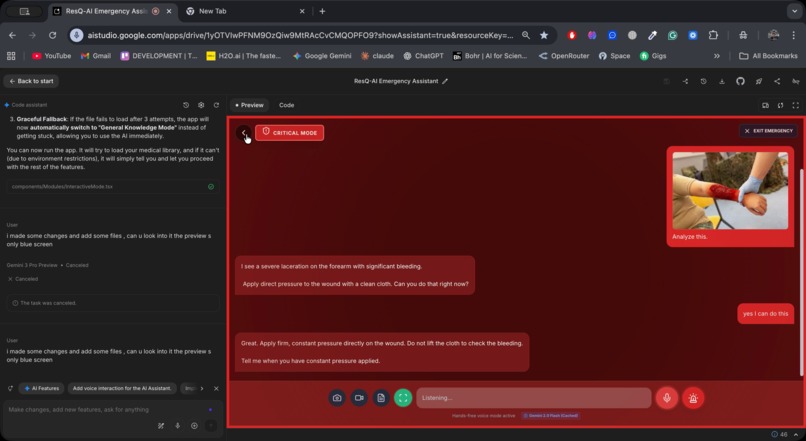

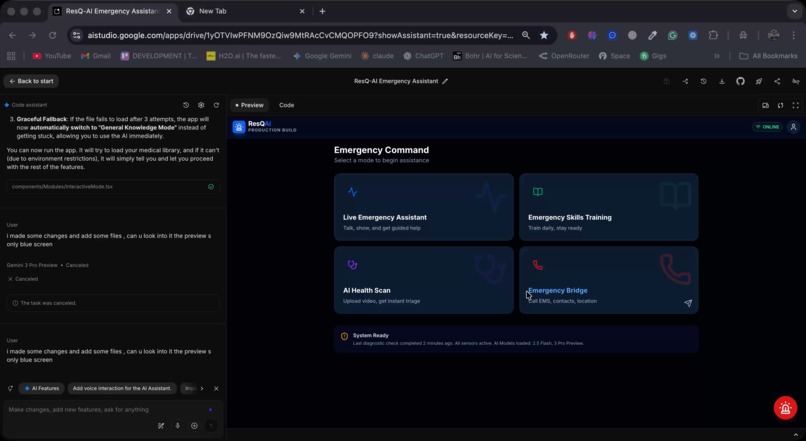

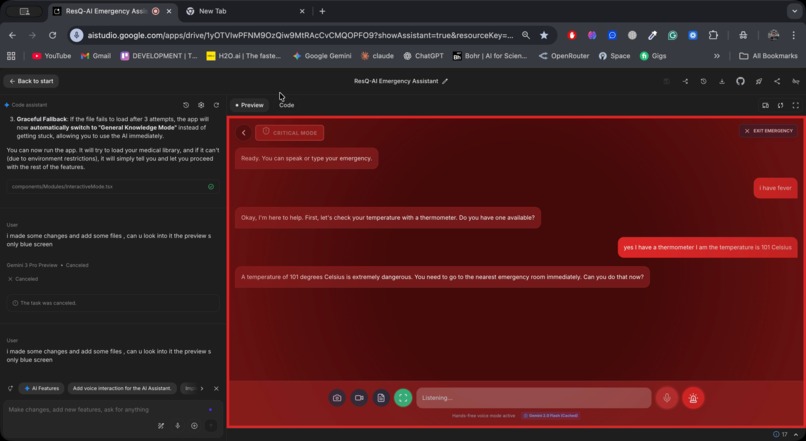

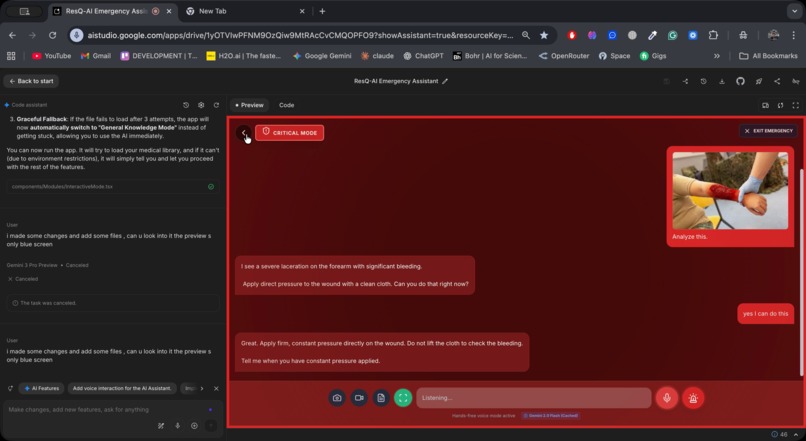

Emergency Mode Active

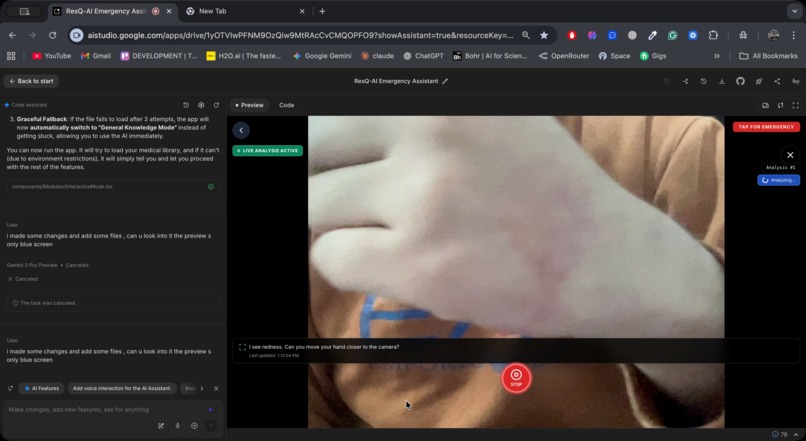

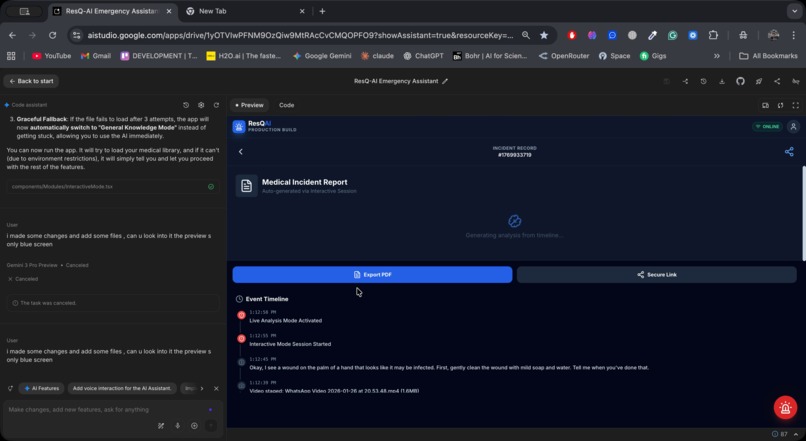

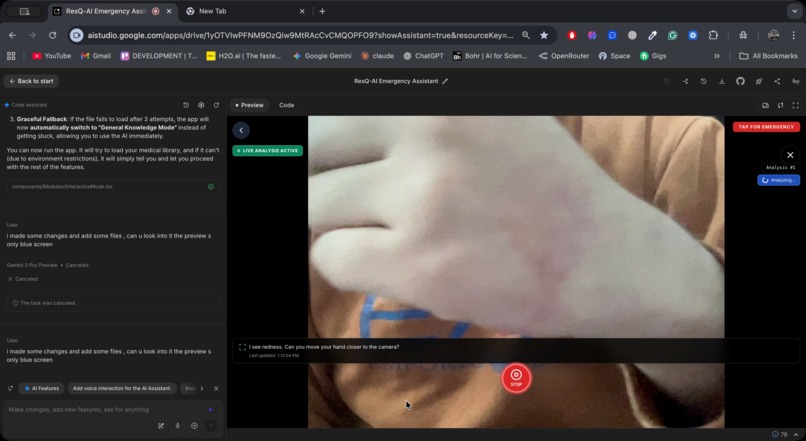

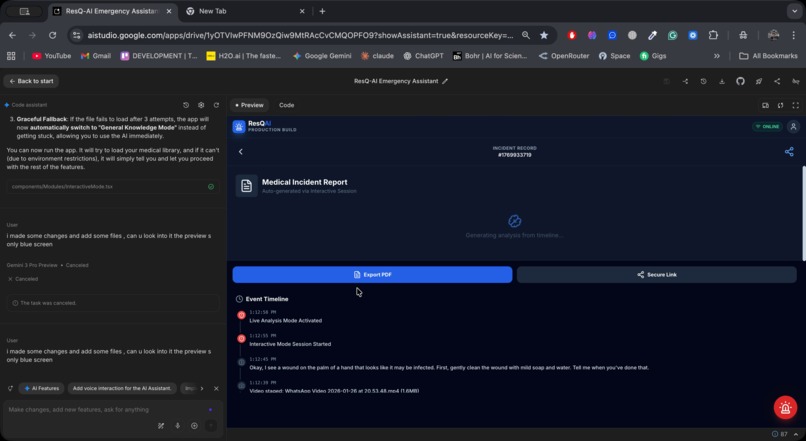

Video Analysis

Emergency Mode Active

Realtime Analysis

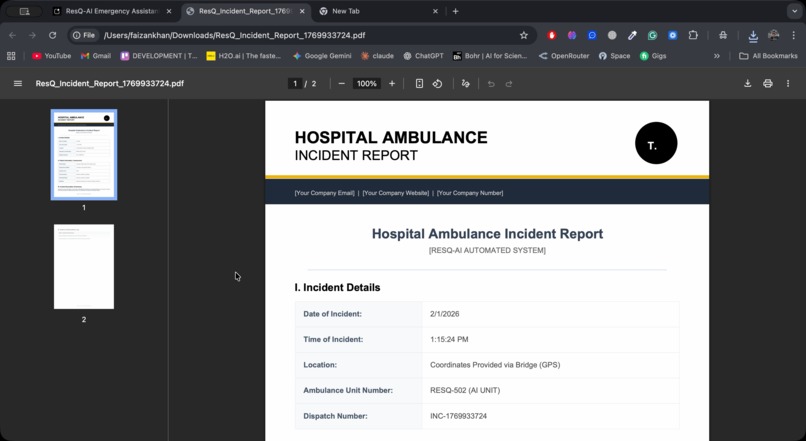

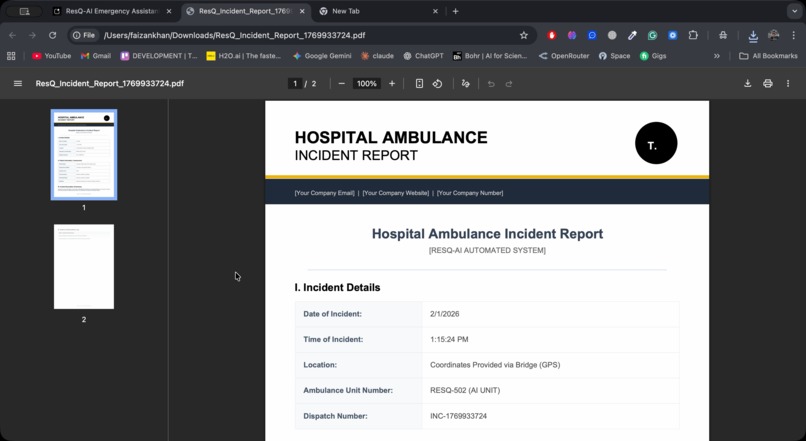

Incident Report PDF

Incident Reports Dashboard

Quiz (How to handle emergency situation)

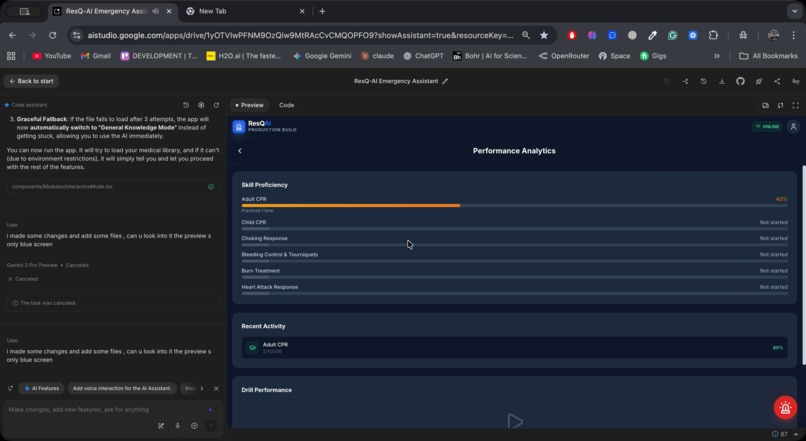

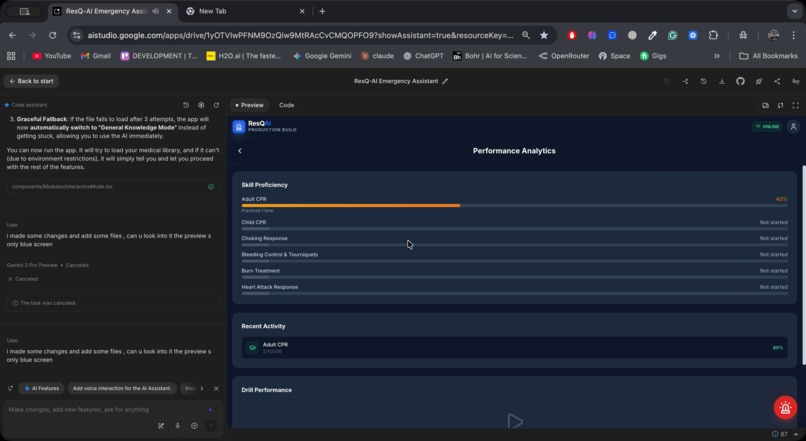

Skill Profile

Incident Reports Dashboard (contd.)

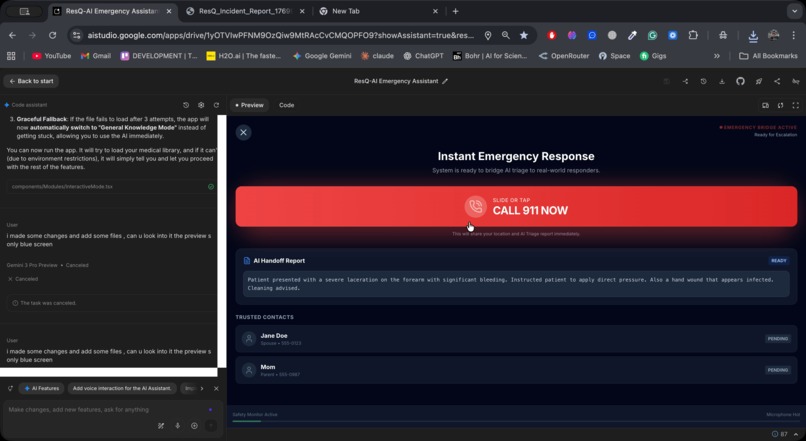

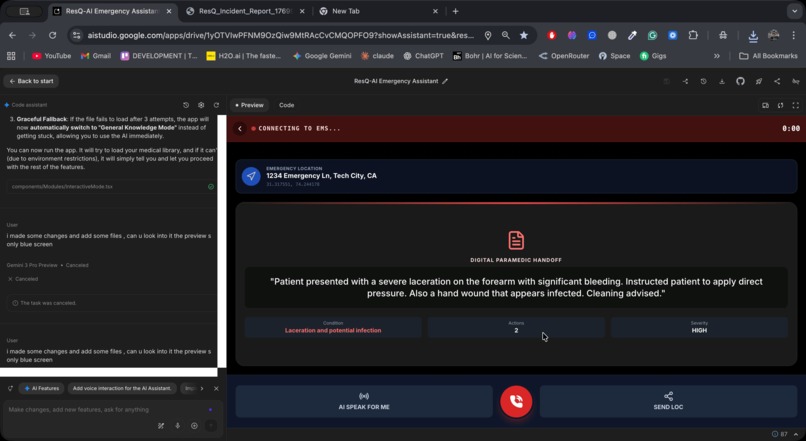

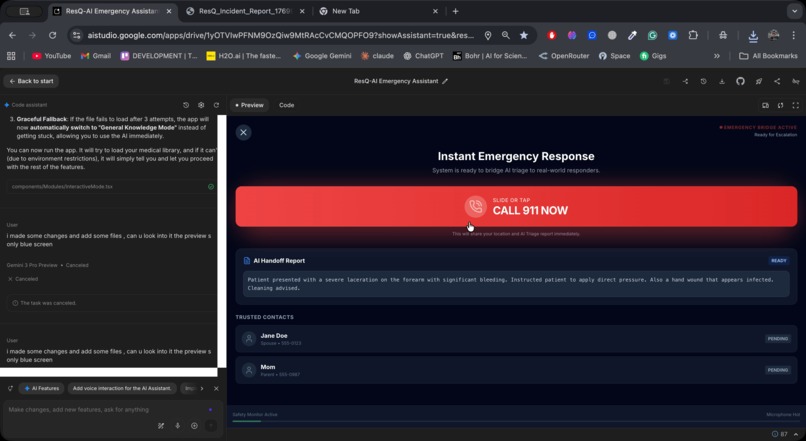

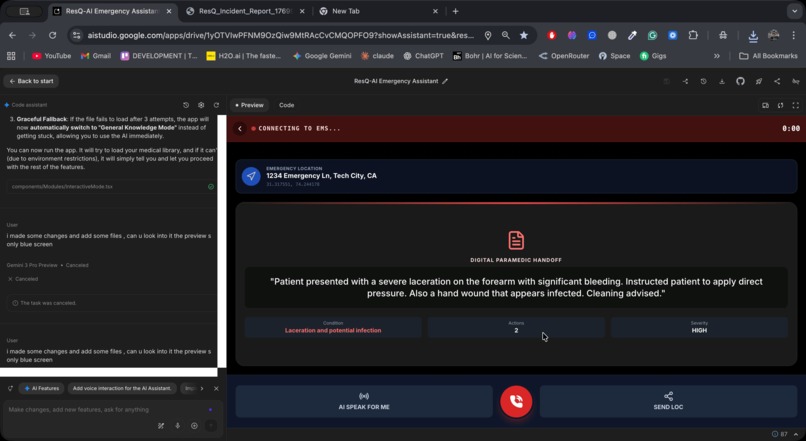

Emergency Call Dashboard

Automated Emergency Call

ResQ-AI

Inspiration

It starts with a moment we hope most people never experience.

A man collapses in a grocery store aisle. Someone yells for help. A few people rush over. Phones come out, but no one moves. A bystander quietly says, “I think we’re supposed to do CPR… but I’m not sure.” Minutes pass. By the time paramedics arrive, the window to act has already closed.

This happens every day.

In the United States alone, more than 350,000 out-of-hospital cardiac arrests occur each year. Survival often depends on what happens in the first five minutes, yet fewer than 40 percent of victims receive bystander CPR. The gap is not a lack of empathy. It is fear, uncertainty, and the absence of guidance in the moment it matters most.

We asked ourselves a simple question:

What if your phone could act as a calm, trained first responder and guide you step by step when panic takes over?

That question became ResQ-AI.

What it does

ResQ-AI is a real-time, voice-driven emergency medical assistant designed for the moments before professional help arrives.

Interactive Mode

Users speak naturally while ResQ-AI listens, analyzes live camera frames or uploaded images and videos, and delivers one clear instruction at a time. As it analyzes these inputs in real time, it automatically detects critical conditions such as cardiac arrest, heavy bleeding, or choking and activates urgency-aware emergency protocols.
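One way the app side of critical-condition detection could be wired is sketched below. It assumes a hypothetical convention in which the system instruction asks the model to append a line such as `CONDITION: cardiac_arrest` whenever it recognizes a critical condition; the tag format, the names `detectCondition` and `spokenText`, and the condition labels are illustrative and not taken from the ResQ-AI source.

```typescript
// Hypothetical convention: the model appends "CONDITION: <label>" when it
// recognizes a critical condition. ResQ-AI's real format is not documented.
type CriticalCondition = "cardiac_arrest" | "heavy_bleeding" | "choking";

const CRITICAL_CONDITIONS: CriticalCondition[] = ["cardiac_arrest", "heavy_bleeding", "choking"];

interface Detection {
  condition: CriticalCondition | null;
  urgent: boolean; // true would switch the UI into the matching emergency protocol
}

// Look for the tag line anywhere in the reply and check it against the known labels.
function detectCondition(reply: string): Detection {
  const match = /^CONDITION:\s*([a-z_]+)\s*$/m.exec(reply);
  const tag = match ? match[1] : "";
  const hit = CRITICAL_CONDITIONS.find((c) => c === tag);
  return hit ? { condition: hit, urgent: true } : { condition: null, urgent: false };
}

// The tag is metadata for the app, so strip it before the reply is spoken aloud.
function spokenText(reply: string): string {
  return reply.replace(/^CONDITION:.*$/m, "").trim();
}
```

Unknown labels are treated as non-urgent, so a malformed tag never escalates the UI on its own.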

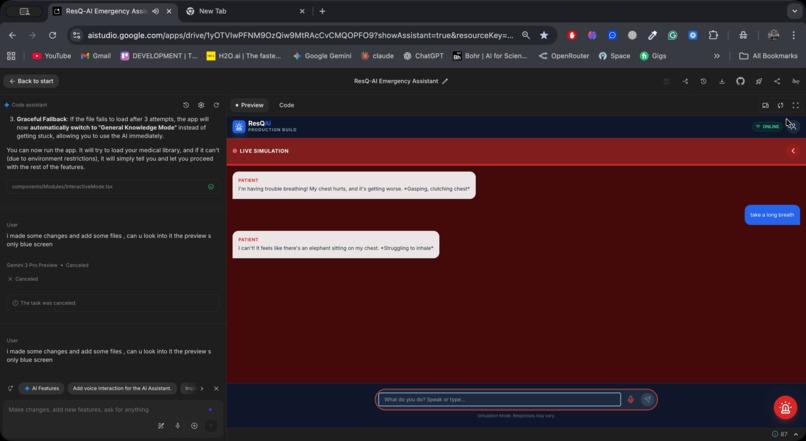

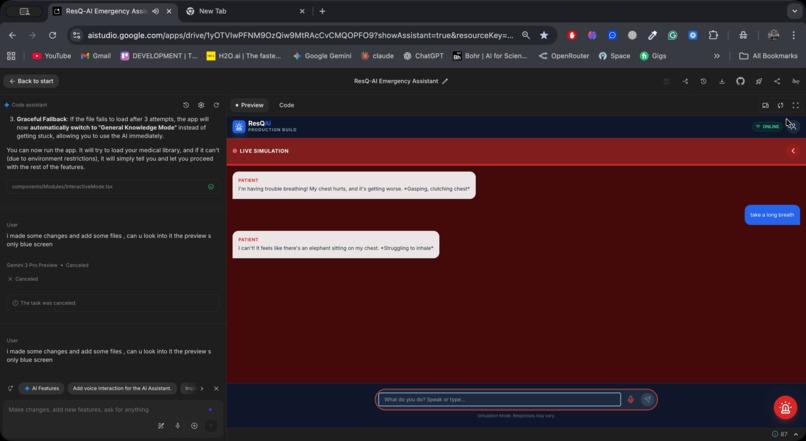

Learning Mode

ResQ-AI becomes a medical instructor. It teaches life-saving skills through step-by-step lessons, generates decision-focused quizzes using structured JSON output, and runs realistic emergency drills where the AI role-plays as a patient whose condition improves or worsens based on user actions. Preparedness is tracked using a composite Readiness Index that accounts for accuracy, recency of practice, lesson completion, drill performance, and consistency.
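The write-up names the five inputs to the Readiness Index but not how they are combined. The sketch below assumes a weighted sum with hypothetical weights and an exponential decay for recency (a hypothetical 7-day half-life); none of these constants come from ResQ-AI.

```typescript
// All inputs except daysSincePractice are expected in [0, 1].
interface ReadinessInputs {
  quizAccuracy: number;      // fraction of quiz answers correct
  daysSincePractice: number; // days since the last lesson, quiz, or drill
  lessonCompletion: number;  // fraction of lessons completed
  drillScore: number;        // average drill outcome
  consistency: number;       // e.g. fraction of recent days with practice
}

// Hypothetical weights (not ResQ-AI's); they sum to 1 so the index stays in [0, 100].
const WEIGHTS = { accuracy: 0.3, recency: 0.15, lessons: 0.2, drills: 0.25, consistency: 0.1 };

// Hypothetical half-life: the recency term halves for every 7 days without practice.
const HALF_LIFE_DAYS = 7;

const clamp01 = (x: number): number => Math.min(1, Math.max(0, x));

function readinessIndex(i: ReadinessInputs): number {
  const recency = Math.pow(0.5, Math.max(0, i.daysSincePractice) / HALF_LIFE_DAYS);
  const score =
    WEIGHTS.accuracy * clamp01(i.quizAccuracy) +
    WEIGHTS.recency * recency +
    WEIGHTS.lessons * clamp01(i.lessonCompletion) +
    WEIGHTS.drills * clamp01(i.drillScore) +
    WEIGHTS.consistency * clamp01(i.consistency);
  return Math.round(score * 100);
}
```

A decaying recency term means a user who aced every quiz months ago still scores below one who practiced this week, which matches the idea of tracking preparedness rather than past achievement.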

Emergency Bridge (911 Mode)

During emergency calls, ResQ-AI presents a live AI-generated triage summary including condition, severity, actions taken, and estimated vitals. An “AI Speak For Me” feature can read this summary aloud to the dispatcher when users are panicked, injured, or unable to communicate clearly.
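A sketch of how a triage summary might be turned into dispatcher-friendly speech for "AI Speak For Me". The `TriageSummary` field names and types are assumptions based on the fields listed above, and `dispatcherScript` is a hypothetical helper.

```typescript
// Hypothetical shape; the write-up lists these fields but not their types.
interface TriageSummary {
  condition: string;
  severity: "low" | "moderate" | "high" | "critical";
  actionsTaken: string[];
  estimatedVitals: { heartRateBpm?: number; breathing?: string };
}

// Build short, ordered sentences that are easy to follow when read aloud.
function dispatcherScript(s: TriageSummary): string {
  const parts = [`Suspected ${s.condition}, severity ${s.severity}.`];
  if (s.actionsTaken.length > 0) {
    parts.push(`Actions taken: ${s.actionsTaken.join("; ")}.`);
  }
  const v = s.estimatedVitals;
  if (v.heartRateBpm !== undefined) {
    parts.push(`Estimated heart rate ${v.heartRateBpm} beats per minute.`);
  }
  if (v.breathing) {
    parts.push(`Breathing: ${v.breathing}.`);
  }
  return parts.join(" ");
}
```

In the browser, the resulting string could be spoken with the Web Speech API via `speechSynthesis.speak(new SpeechSynthesisUtterance(script))`.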

Medical Reports

Medical Reports are a separate feature that automatically generates structured, exportable PDF incident reports from the full emergency timeline. These reports are designed for paramedic and clinician handoff, providing a clear chronological summary without relying on user memory under stress.
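The chronological part of a report can be produced before any PDF work happens. This sketch assumes a hypothetical `TimelineEvent` shape and renders each event with its elapsed time since the first event; the real ResQ-AI event model is not described.

```typescript
// Hypothetical event shape for the emergency timeline.
interface TimelineEvent {
  timestampMs: number; // epoch milliseconds
  source: "user" | "ai" | "system";
  text: string;
}

const pad2 = (n: number): string => (n < 10 ? "0" : "") + n;

// Elapsed time since the first event, formatted as mm:ss.
function formatElapsed(ms: number): string {
  const totalSeconds = Math.floor(ms / 1000);
  return `${pad2(Math.floor(totalSeconds / 60))}:${pad2(totalSeconds % 60)}`;
}

// Sort events chronologically (without mutating the input) and render one line each.
function reportLines(events: TimelineEvent[]): string[] {
  const sorted = events.slice().sort((a, b) => a.timestampMs - b.timestampMs);
  if (sorted.length === 0) return [];
  const start = sorted[0].timestampMs;
  return sorted.map((e) => `[${formatElapsed(e.timestampMs - start)}] ${e.source.toUpperCase()}: ${e.text}`);
}
```

The resulting lines could then be passed to jsPDF's `text()` method when laying out the exported report.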

How we built it

ResQ-AI is built entirely client-side using React 19, TypeScript, and Vite, with no backend server or database.

The intelligence layer is powered by Google Gemini 3 Flash Preview via the @google/genai SDK.

Context Caching

We compiled a ~5 MB Emergency Medical Guide from authoritative sources including the Oxford Handbook of Emergency Medicine and the AHA Heartsaver Manual. This guide is cached using the Gemini Caching API with a 24-hour TTL and is automatically refreshed every two days via GitHub Actions. This allows every session to be grounded in real medical knowledge without re-uploading large context each time.
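With the @google/genai SDK, a cache is created through `ai.caches.create({ model, config })` and later referenced by passing its `name` as `config.cachedContent` in a generate call. The sketch below only builds the request object and checks the returned `expireTime`, so it runs without network access; the display name and the 10-minute safety margin are hypothetical.

```typescript
// Mirrors the fields passed to ai.caches.create; the SDK is not imported
// here so the helpers can run offline.
interface CacheRequest {
  model: string;
  config: {
    displayName: string;
    ttl: string;
    contents: { role: string; parts: { text: string }[] }[];
  };
}

const TTL_SECONDS = 24 * 60 * 60; // 24-hour TTL

function buildCacheRequest(model: string, guideText: string): CacheRequest {
  return {
    model,
    config: {
      displayName: "emergency-medical-guide", // hypothetical display name
      ttl: `${TTL_SECONDS}s`, // duration string, e.g. "86400s"
      contents: [{ role: "user", parts: [{ text: guideText }] }],
    },
  };
}

// Treat a cache as unusable slightly before it actually expires, so a new
// session never starts against a cache that is about to disappear.
function cacheIsUsable(expireTimeIso: string | undefined, now: Date, marginMs: number = 10 * 60 * 1000): boolean {
  if (!expireTimeIso) return false;
  return Date.parse(expireTimeIso) - now.getTime() > marginMs;
}
```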

Multimodal Input

ResQ-AI sends live camera frames, uploaded images, videos, and PDFs alongside text in a single conversational context. Inputs follow a strict priority hierarchy so Gemini always reasons over the most relevant signal.
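The write-up does not spell out the priority hierarchy, so the sketch below assumes one: live camera frame, then video, then image, then PDF. It also assumes that only the single highest-priority visual input is sent alongside the user's text, which is one way to avoid conflicting context. The `Part` shape follows Gemini's `{ text }` / `{ inlineData: { mimeType, data } }` content format.

```typescript
type VisualKind = "liveFrame" | "video" | "image" | "pdf";

// Assumed ordering (lower number wins); not taken from ResQ-AI.
const VISUAL_PRIORITY: Record<VisualKind, number> = { liveFrame: 0, video: 1, image: 2, pdf: 3 };

interface VisualInput {
  kind: VisualKind;
  mimeType: string;
  data: string; // base64-encoded payload
}

type Part = { text: string } | { inlineData: { mimeType: string; data: string } };

// Keep only the most relevant visual signal, then append the user's words.
function buildParts(visuals: VisualInput[], userText: string): Part[] {
  const ranked = visuals.slice().sort((a, b) => VISUAL_PRIORITY[a.kind] - VISUAL_PRIORITY[b.kind]);
  const parts: Part[] = [];
  if (ranked.length > 0) {
    const top = ranked[0];
    parts.push({ inlineData: { mimeType: top.mimeType, data: top.data } });
  }
  parts.push({ text: userText });
  return parts;
}
```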

Structured Output

Using JSON schemas, we constrain the output of quiz generation, triage summaries, and medical reports to a fixed structure. This makes AI output predictable and safe to render in the UI and pass into emergency workflows.
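A sketch of schema-constrained quiz output, assuming a hypothetical `QuizQuestion` shape. The schema uses the OpenAPI-style format accepted as `responseSchema`, with type names written as the plain strings that the SDK's `Type` enum uses; `parseQuiz` is a hypothetical defensive check run before anything reaches the UI.

```typescript
// Hypothetical quiz item shape for decision-focused questions.
interface QuizQuestion {
  question: string;
  options: string[];
  correctIndex: number;
  explanation: string;
}

const quizSchema = {
  type: "ARRAY",
  items: {
    type: "OBJECT",
    properties: {
      question: { type: "STRING" },
      options: { type: "ARRAY", items: { type: "STRING" } },
      correctIndex: { type: "INTEGER" },
      explanation: { type: "STRING" },
    },
    required: ["question", "options", "correctIndex", "explanation"],
  },
};

// Reject anything that is not valid JSON or does not match the expected shape.
function parseQuiz(raw: string): QuizQuestion[] | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not valid JSON at all
  }
  if (!Array.isArray(data)) return null;
  const valid = data.every(
    (q: any) =>
      typeof q === "object" &&
      q !== null &&
      typeof q.question === "string" &&
      Array.isArray(q.options) &&
      q.options.every((o: unknown) => typeof o === "string") &&
      Number.isInteger(q.correctIndex) &&
      q.correctIndex >= 0 &&
      q.correctIndex < q.options.length &&
      typeof q.explanation === "string",
  );
  return valid ? (data as QuizQuestion[]) : null;
}
```

The schema would be supplied as `config: { responseMimeType: "application/json", responseSchema: quizSchema }`; the runtime check still guards against an out-of-range `correctIndex`, which a schema alone does not express.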

Chat Sessions and System Instructions

Multi-turn chat sessions preserve context across an entire emergency. Dynamic system instructions allow the same model to act as a calm emergency doctor, a training instructor, a distressed patient during drills, or a medical scribe for reports.
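A sketch of role-dependent session configuration. The prompt texts and per-role temperatures are placeholders invented for illustration; ResQ-AI's real system instructions are not published.

```typescript
type AssistantRole = "emergencyDoctor" | "instructor" | "drillPatient" | "scribe";

// Placeholder prompts for illustration only.
const SYSTEM_INSTRUCTIONS: Record<AssistantRole, string> = {
  emergencyDoctor:
    "You are a calm emergency physician guiding a bystander. Give exactly one short instruction at a time.",
  instructor: "You are a first-aid instructor. Teach step by step and check understanding before moving on.",
  drillPatient:
    "You are a simulated patient in a training drill. Improve or worsen realistically based on the trainee's actions.",
  scribe: "You are a medical scribe. Summarize the emergency timeline factually and in chronological order.",
};

// Hypothetical per-role temperatures: low for guidance and reports, higher for role-play.
const ROLE_TEMPERATURE: Record<AssistantRole, number> = {
  emergencyDoctor: 0.2,
  instructor: 0.5,
  drillPatient: 0.9,
  scribe: 0,
};

interface ChatConfig {
  systemInstruction: string;
  temperature: number;
}

function chatConfig(role: AssistantRole): ChatConfig {
  return { systemInstruction: SYSTEM_INSTRUCTIONS[role], temperature: ROLE_TEMPERATURE[role] };
}
```

With @google/genai, the returned object would be passed as the `config` field of `ai.chats.create({ model, config })`, so switching roles means starting a new chat session rather than changing models.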

Hands-free interaction is enabled through the Web Speech API, and reports are generated using jsPDF.

Challenges we ran into

One of our earliest challenges was context size. Incorporating multiple emergency medicine textbooks quickly exceeded Gemini’s token limits, so we compiled them into a single guide. Context caching then became essential, allowing us to ground conversations in authoritative medical knowledge without injecting massive text into every prompt.

Real-time video guidance introduced additional constraints. To maintain quick response times while analyzing live camera frames every few seconds, we had to reduce the active context window even further, trimming conversation history aggressively and prioritizing only the most relevant information.
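A sketch of aggressive history trimming, assuming a character budget as a rough stand-in for a token budget. Turns use Gemini's `{ role, parts }` content shape; the budget value and the choice to drop a leading model turn are assumptions, not ResQ-AI's documented behavior.

```typescript
interface Turn {
  role: "user" | "model";
  parts: { text: string }[];
}

const turnLength = (t: Turn): number => t.parts.reduce((n, p) => n + p.text.length, 0);

// Keep the most recent turns whose combined text fits in maxChars,
// always keeping at least the newest turn.
function trimHistory(history: Turn[], maxChars: number): Turn[] {
  const kept: Turn[] = [];
  let used = 0;
  for (let i = history.length - 1; i >= 0; i--) {
    const len = turnLength(history[i]);
    if (kept.length > 0 && used + len > maxChars) break;
    kept.unshift(history[i]);
    used += len;
  }
  // Drop leading model turns (but never the last remaining one) so the
  // trimmed history starts with the user.
  while (kept.length > 1 && kept[0].role === "model") kept.shift();
  return kept;
}
```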

We also encountered context cache expiration issues, which initially caused mid-session failures. We solved this by implementing graceful fallbacks that automatically recreate sessions without disrupting the user experience.
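A sketch of the graceful fallback, written against a hypothetical `ChatLike` interface rather than the real SDK so it can run offline. The write-up does not say how cache expiry surfaces, so `looksLikeExpiredCache` simply inspects the error message; the `recreate` callback stands in for whatever rebuilds the cache and session.

```typescript
// Hypothetical minimal interface standing in for a chat session.
interface ChatLike {
  sendMessage(message: string): Promise<string>;
}

// Hypothetical error test based on message text.
function looksLikeExpiredCache(err: unknown): boolean {
  const msg = err instanceof Error ? err.message : String(err);
  return /cache/i.test(msg) && /(expired|not found)/i.test(msg);
}

class ResilientChat {
  constructor(private session: ChatLike, private readonly recreate: () => Promise<ChatLike>) {}

  // Send a message; on a cache-expiry error, rebuild the session and retry once.
  async send(message: string): Promise<string> {
    try {
      return await this.session.sendMessage(message);
    } catch (err) {
      if (!looksLikeExpiredCache(err)) throw err; // unrelated errors still surface
      this.session = await this.recreate(); // keep the new session for later turns
      return this.session.sendMessage(message);
    }
  }
}
```

Retrying only once keeps a persistent failure from looping during an emergency, while the user sees no interruption in the common expiry case.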

Live camera analysis pushed us close to free-tier rate limits. We responded with mutex locks, request throttling, trimmed conversation history, and a STABLE marker that lets Gemini signal that nothing has changed instead of generating a full response.
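A sketch combining the three rate-limit measures for live frames: a non-blocking in-flight lock (frames arriving while a request is pending are dropped, not queued), a minimum interval between requests, and suppression of replies that consist only of the STABLE marker. It assumes the model answers with exactly `STABLE` when nothing has changed; the `analyze` callback is a hypothetical stand-in for the Gemini call.

```typescript
class FrameAnalyzer {
  private busy = false;
  private lastSentAt = -Infinity;

  constructor(
    private readonly analyze: (frameBase64: string) => Promise<string>,
    private readonly minIntervalMs: number,
    private readonly now: () => number = Date.now,
  ) {}

  // Returns guidance to show, or null if the frame was skipped or nothing changed.
  async onFrame(frameBase64: string): Promise<string | null> {
    const t = this.now();
    if (this.busy || t - this.lastSentAt < this.minIntervalMs) return null;
    this.busy = true; // set before the first await, so concurrent calls see it
    this.lastSentAt = t;
    try {
      const reply = (await this.analyze(frameBase64)).trim();
      return reply === "STABLE" ? null : reply;
    } finally {
      this.busy = false;
    }
  }
}
```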

Large video uploads caused processing timeouts, which we addressed with adaptive timeout thresholds and a live frame analysis mode during playback.
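A sketch of an adaptive timeout that scales with upload size. The base allowance, per-megabyte increment, and cap are hypothetical values chosen for illustration; `withTimeout` wraps any promise-returning processing step.

```typescript
// Hypothetical constants: base allowance plus extra time per megabyte, capped
// so a stuck upload still fails eventually.
const BASE_TIMEOUT_MS = 15000;
const PER_MB_MS = 4000;
const MAX_TIMEOUT_MS = 180000;

function adaptiveTimeoutMs(fileBytes: number): number {
  const mb = fileBytes / (1024 * 1024);
  return Math.min(MAX_TIMEOUT_MS, Math.round(BASE_TIMEOUT_MS + PER_MB_MS * mb));
}

// Reject if the wrapped promise does not settle within timeoutMs.
function withTimeout<T>(work: Promise<T>, timeoutMs: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`Timed out after ${timeoutMs} ms`)), timeoutMs);
    work.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (err) => { clearTimeout(timer); reject(err); },
    );
  });
}
```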

Handling simultaneous multimodal inputs required a strict priority hierarchy to avoid conflicting or ambiguous context.

Accomplishments that we’re proud of

- Real-time analysis of voice, live camera input, images, and user actions as emergencies unfold

- Fast and reliable response times, even during live video guidance

- A user-friendly and calming UX/UI designed to reduce cognitive load under stress

- Fully hands-free operation from detection to guidance to emergency calling

- “AI Speak For Me”, enabling clear communication with emergency dispatchers

- Structured medical reports generated automatically for clinician handoff

- An automated medical knowledge pipeline with zero manual maintenance

- Realistic emergency training through AI role-play in Drill Mode

- A zero-backend architecture that runs entirely in the browser using only a Gemini API key

What we learned

We learned that response time is directly tied to the size of the context window. In real-time emergency scenarios, smaller and more focused context consistently outperforms large, unfocused prompts.

We also learned that Gemini can identify which parts of the conversation and knowledge base matter most when context is constrained. When we structure prompts, cached content, and system instructions carefully, Gemini prioritizes clinically relevant information and ignores less critical details.

This reinforced an important insight: effective real-time AI is not about sending more information, but about sending the right information at the right moment.

We also learned that multimodal AI requires thoughtful UX design to remain calm and intuitive during high-stress situations, and that designing within rate limits often leads to cleaner, more efficient architectures.

What’s next for ResQ-AI

Closing the Loop Between Bystanders, Paramedics, and Hospitals

Our next phase focuses on closing the loop between bystanders, paramedics, and hospitals.

We plan to integrate ResQ-AI directly with EMS and hospital workflows, enabling structured triage data generated in the field to flow seamlessly into clinical systems. Paramedics and emergency departments would receive pre-arrival digital handoffs containing condition severity, estimated vitals, actions taken, and concise AI-generated summaries created during the emergency.

We envision deep integration with paramedic tablets and hospital dashboards, allowing emergency teams to prepare equipment, medications, and personnel before a patient arrives. This has the potential to reduce administrative burden, shorten waiting times, and significantly improve coordination across emergency departments.

Built for the Real World

ResQ-AI is being designed as an offline-first system so that critical emergency guidance remains available even in low-connectivity or disaster scenarios. Support for multiple languages, including sign language, together with accessibility-first interfaces, aims to make help available to everyone, regardless of language, hearing ability, or literacy level.

The platform is also being extended to support wearable devices, enabling automatic detection of emergencies such as falls, abnormal heart rhythms, or sudden loss of consciousness. When triggered, wearables can provide early alerts, share contextual health data, and activate guided response workflows—reducing time to intervention when every second counts.

By integrating trusted medical reference libraries and clinical guidelines, ResQ-AI grounds its recommendations in established medical knowledge while adapting guidance to real-world emergency contexts.

Data-Driven Impact

Over time, aggregated and fully anonymized data can support improvements in diagnostic accuracy, optimize emergency workflows, and drive better patient outcomes—including reduced morbidity and mortality.

Looking Ahead

Longer term, we aim to support:

- Wearable-triggered emergency detection and alerts

- Community responder networks connecting nearby trained volunteers

- Offline-first emergency decision support

- Multilingual and sign-language emergency guidance

- Deeper integration with medical textbooks, protocols, and clinical references

- Real-time coordination between bystanders, paramedics, wearables, and hospitals

Healthcare does not begin in the emergency room.

It begins with the person standing next to you when something goes wrong.

ResQ-AI exists to make sure that person is never alone.

Built With

- gcp

- gemini

- jspdf

- react

- typescript

- vite
