-

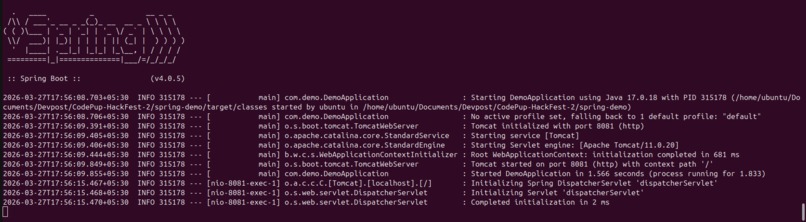

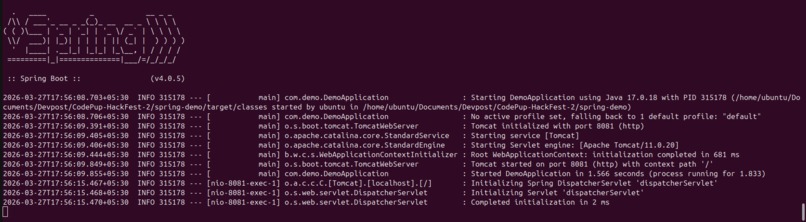

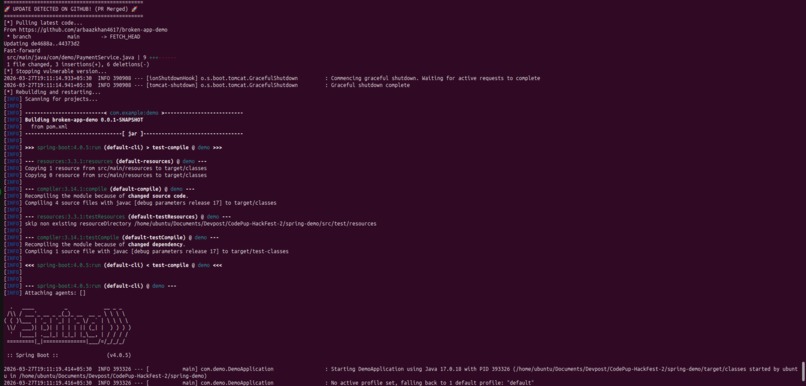

Spring Boot service booting up locally — the system is live and ready to simulate real production failures.

-

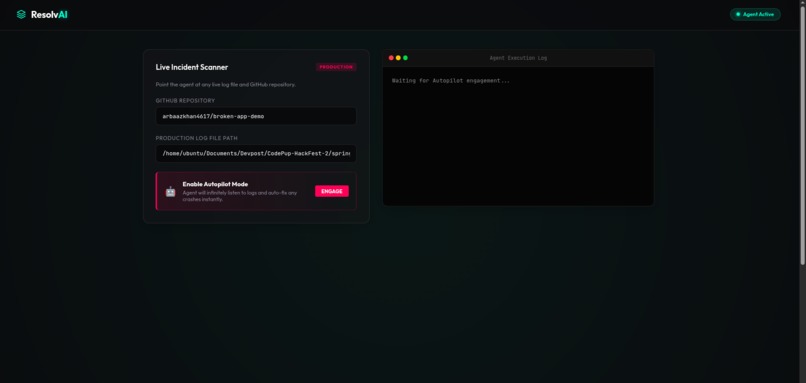

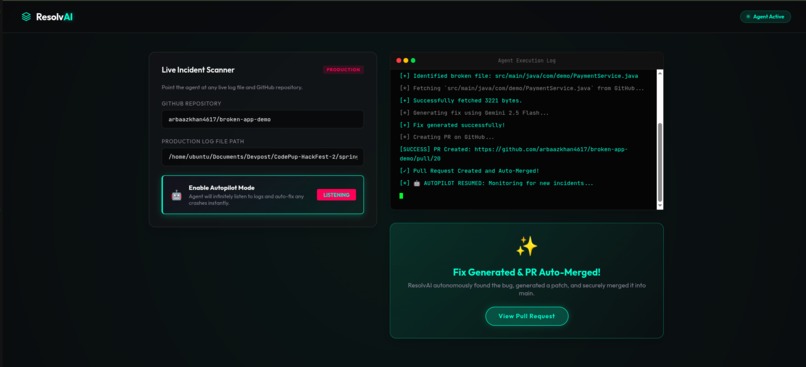

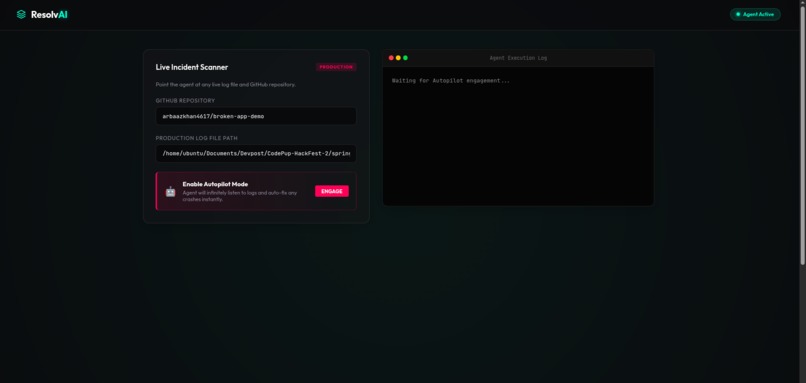

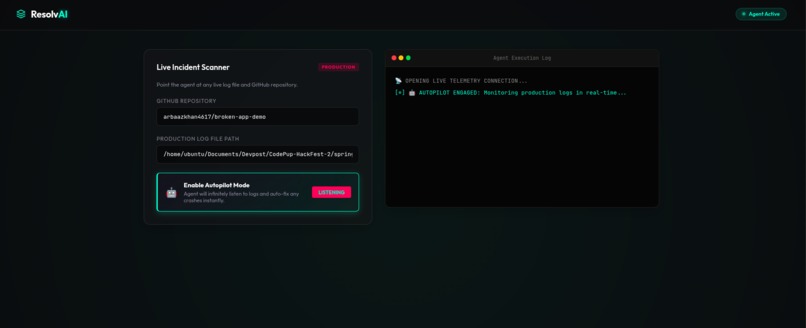

ResolvAI dashboard — unified interface to monitor logs, trigger autopilot, and visualize real-time incident resolution.

-

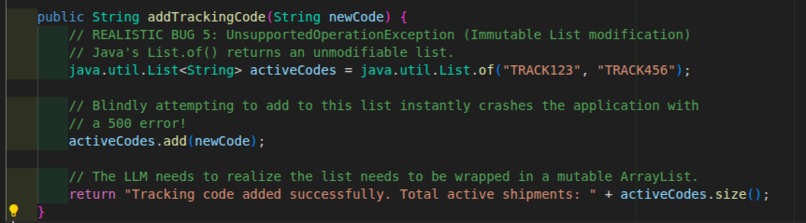

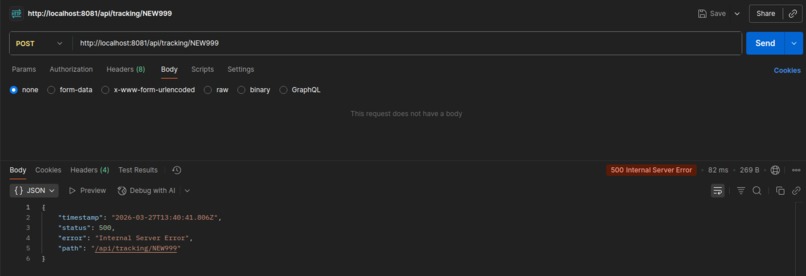

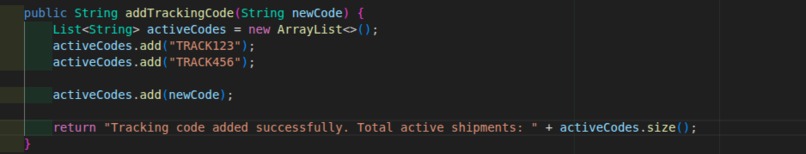

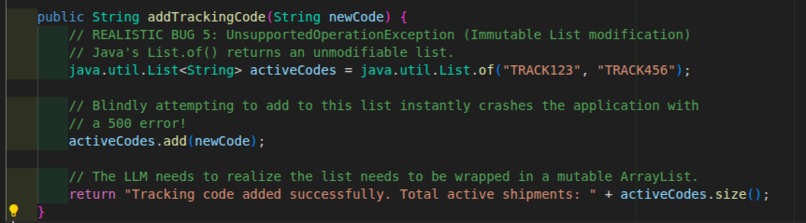

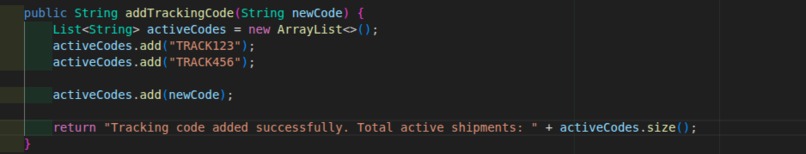

A critical bug: modifying an immutable list created with List.of() throws an UnsupportedOperationException at runtime and crashes the request with a 500 error, a realistic production failure scenario.

-

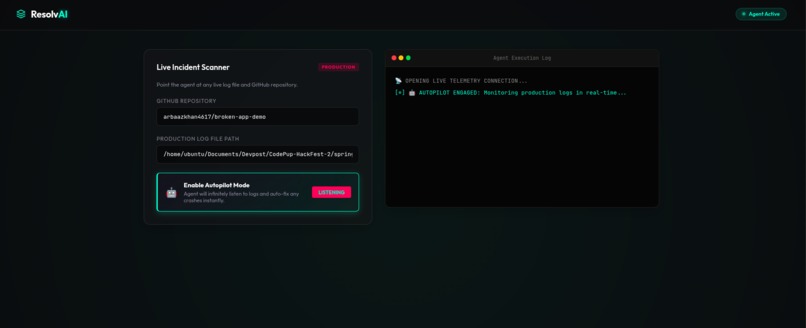

Autopilot mode activated — the agent continuously monitors live logs and proactively detects issues in real time.

-

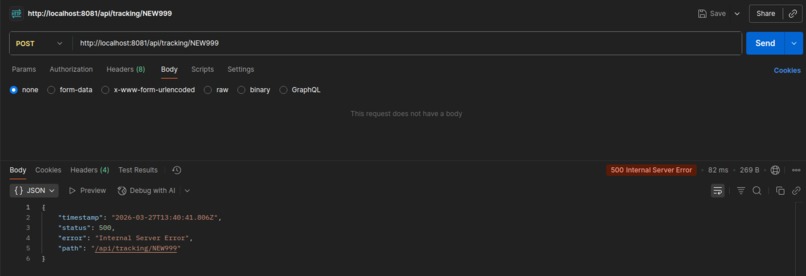

Live failure captured: API returns 500 Internal Server Error, triggering ResolvAI’s automated debugging pipeline.

-

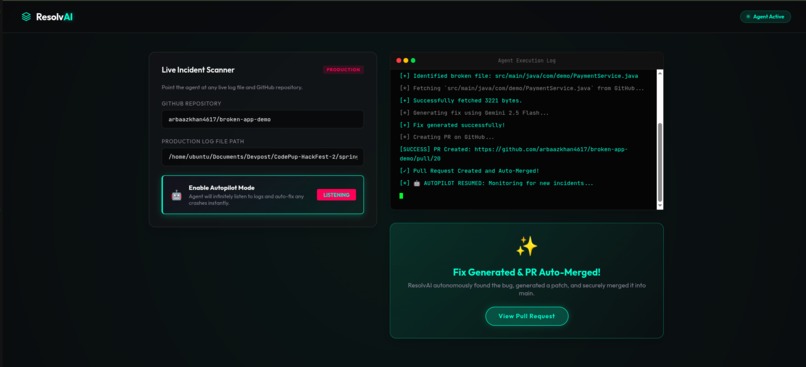

ResolvAI detects a production-breaking bug, generates a fix with AI, and automatically creates and merges a PR with zero human intervention.

-

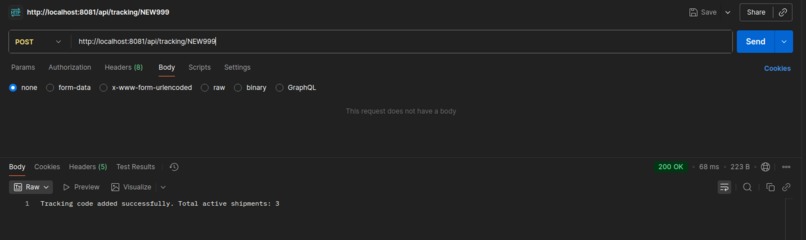

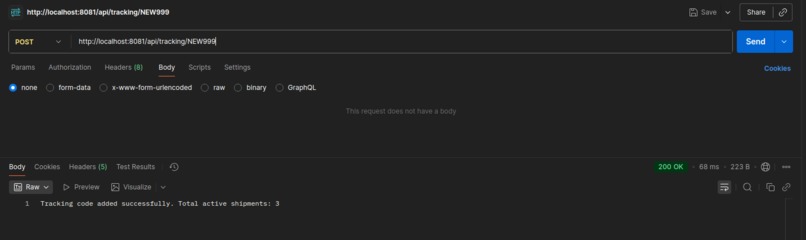

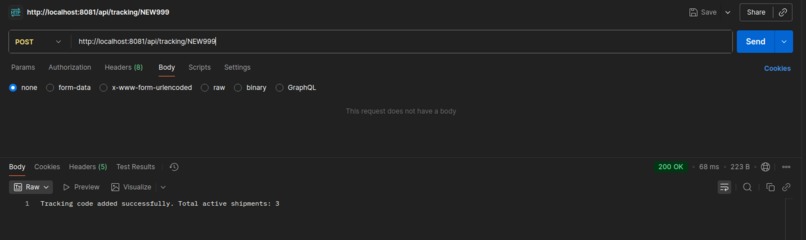

After autonomous fix deployment, the API responds successfully — issue resolved and system stabilized instantly.

-

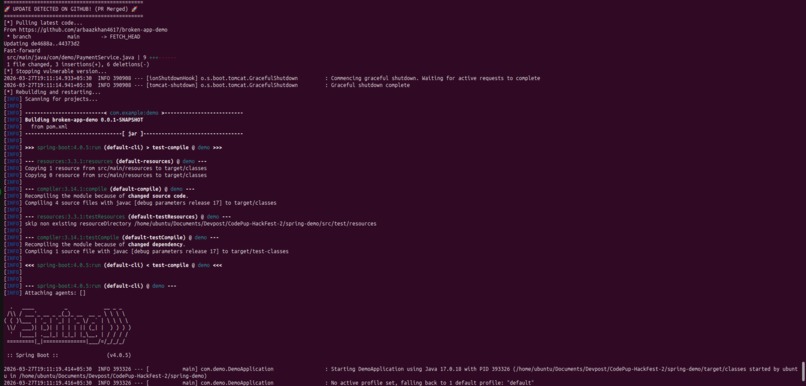

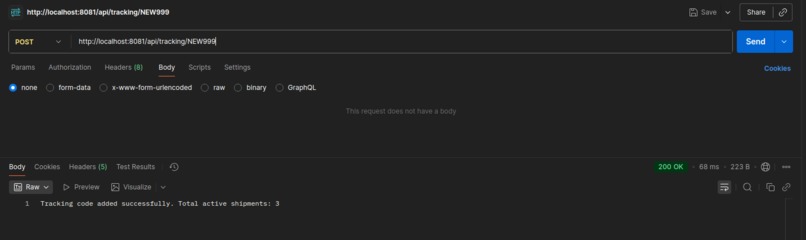

Post-merge, the system automatically pulls the latest changes, rebuilds, and restarts, completing the self-healing loop.

-

Code-Fixed

Inspiration 💡

Production incidents happen at 3 AM, and developers hate waking up to fix a NullPointerException or an IndexOutOfBoundsException. We thought: what if an AI agent could monitor the servers, isolate the exact stack trace, clone the codebase, write the patch, and orchestrate the deployment entirely on Autopilot while we sleep?

What it does 🤖

ResolvAI is a "fire-and-forget" autonomous SRE agent that runs in a continuous loop.

- Live Telemetry: You feed it a production log file, and the agent opens a never-ending Server-Sent Events (SSE) telemetry stream to our sleek, cyberpunk-styled monitoring dashboard.

- Anomaly Detection: When your live backend crashes, the agent notices the sudden growth in log file size, wakes up, and reads the newly written raw log lines.

- Root Cause Analysis & Patching: Powered by Google Gemini 3.1 Flash Lite, it extracts the stack trace, fetches the relevant Java file from your GitHub repository, and writes a defensive patch for the failing code.

- Autonomous Deployment: It creates a new branch, commits the fix, opens a Pull Request, and uses the GitHub API to squash-merge it into main.

- Auto-Healing Loop: A daemon script on your server detects the remote merge, stashes any uncommitted local changes, pulls the fix, and restarts the environment. The bug is dead before a human even opens Slack.
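The anomaly-detection and root-cause steps above can be sketched in a few lines. This is a minimal illustration, assuming the agent polls the log file's size and parses standard Java stack-trace text; `LogWatcher`, `extract_stack_trace`, `StackTrace`, and the `app_package` parameter are hypothetical names, not ResolvAI's actual code. The watcher remembers a byte offset so it only hands new output to the model, and the parser skips JDK frames (such as `ImmutableCollections.uoe`) to find the first frame in application code, which is the file worth fetching from GitHub.

```python
import os
import re
from dataclasses import dataclass
from typing import Optional

# Matches an exception header such as "java.lang.UnsupportedOperationException: null"
# (optionally prefixed with "Caused by: ").
EXCEPTION_RE = re.compile(r"^(?:Caused by: )?([\w$.]+(?:Exception|Error))(?::\s*(.*))?$")
# Matches a frame such as "at com.example.demo.OrderController.addItem(OrderController.java:42)",
# allowing an optional module prefix like "java.base/".
FRAME_RE = re.compile(r"^\s*at\s+(?:[\w.]+/)?([\w$.<>]+)\(([\w$]+\.java):(\d+)\)")


@dataclass
class StackTrace:
    exception: str
    message: str
    file_name: str
    line: int


class LogWatcher:
    """Hypothetical helper: tracks a byte offset into a log file and returns only new text."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.offset = os.path.getsize(path)  # skip whatever was logged before startup

    def poll(self) -> str:
        size = os.path.getsize(self.path)
        if size < self.offset:  # file was truncated or rotated: read it from the start
            self.offset = 0
        if size == self.offset:  # no growth means nothing new to analyze
            return ""
        with open(self.path, "rb") as f:
            f.seek(self.offset)
            data = f.read()
            self.offset = f.tell()  # exact position after the bytes we actually read
        return data.decode("utf-8", errors="replace")


def extract_stack_trace(text: str, app_package: str) -> Optional[StackTrace]:
    """Return the first exception in `text` plus its first frame inside `app_package`."""
    exception: Optional[str] = None
    message = ""
    for line in text.splitlines():
        if exception is None:
            header = EXCEPTION_RE.match(line.strip())
            if header:
                exception, message = header.group(1), header.group(2) or ""
            continue
        frame = FRAME_RE.match(line)
        # Skip JDK/framework frames; the patch target is the first frame in application code.
        if frame and frame.group(1).startswith(app_package):
            return StackTrace(exception, message, frame.group(2), int(frame.group(3)))
    return None
```

Polling size rather than using filesystem notifications keeps the sketch dependency-free; a production agent might prefer inotify-style events instead.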

How we built it 🏗️

- Backend Architecture: A high-performance Python FastAPI instance manages asynchronous processes and emits live SSE streams to the frontend.

- AI Core: Integrated with Google's Gemini 3.1 Flash Lite model, chosen for fast code understanding and a generous rate-limit quota that lets it survive heavy load.

- Frontend Design: Pure HTML/CSS/JS drives a reactive macOS-style terminal that renders live logs, giving the UI an enterprise-grade, premium feel.

- Infrastructure: PyGithub handles the heavy lifting of fetching repository files, building Git trees, and merging PRs through the GitHub API. A custom watch_and_run.sh script keeps the local Spring Boot mock environment synchronized with the latest AI-generated commits.
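The SSE telemetry stream described above needs very little code on the wire. The sketch below uses only the standard library and assumes log lines arrive as an async iterator; `sse_frame` and `telemetry_stream` are hypothetical names, and the JSON payload shape (`{"line": ...}`) is an illustrative assumption rather than the dashboard's real protocol.

```python
import json
from typing import AsyncIterator, Optional


def sse_frame(data: str, event: Optional[str] = None) -> str:
    """Encode one message in the text/event-stream (Server-Sent Events) wire format."""
    fields = []
    if event is not None:
        fields.append(f"event: {event}")
    # A payload containing newlines must be split across several "data:" fields;
    # the browser's EventSource rejoins them with newline characters.
    for part in data.split("\n"):
        fields.append(f"data: {part}")
    return "\n".join(fields) + "\n\n"  # a blank line terminates the message


async def telemetry_stream(log_lines: AsyncIterator[str]) -> AsyncIterator[str]:
    """Hypothetical stream body: wrap each incoming log line as a JSON "log" event."""
    async for line in log_lines:
        yield sse_frame(json.dumps({"line": line}), event="log")
```

In FastAPI, an endpoint could return `StreamingResponse(telemetry_stream(source), media_type="text/event-stream")`, where `source` stands for any async iterator of log lines (for example, a tail of the production log), so the dashboard receives each frame as soon as it is yielded.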

Challenges we ran into 🧗‍♂️

The Stash & Pull Race Condition: While testing the auto-healing bash loop, the live Tomcat server kept writing to spring-app.log in the same instant that git stash ran. Because the log file was tracked by Git, each new write immediately re-dirtied the working tree, so git pull --rebase refused to run and our automated deployer crashed! We fixed this by removing the log files from Git tracking (git rm --cached), which made the auto-runner reliable.
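The race is easy to diagnose before it bites. Below is a minimal sketch of a pre-pull check, assuming the watcher inspects `git status --porcelain` (v1) output; `tracked_changes`, `runtime_offenders`, and the default `*.log` pattern are hypothetical. It shows why a tracked, constantly rewritten log file blocks the rebase while an untracked one does not.

```python
from fnmatch import fnmatch
from typing import List, Sequence


def tracked_changes(porcelain: str) -> List[str]:
    """Paths of tracked files with local changes in `git status --porcelain` (v1) output.

    Any such path makes `git pull --rebase` refuse to start (unless autostash is enabled).
    """
    paths = []
    for entry in porcelain.splitlines():
        if len(entry) < 4:
            continue
        status, path = entry[:2], entry[3:]  # "XY path": two status columns, a space, the path
        if status in ("??", "!!"):  # untracked or ignored: not part of the dirty-tree check
            continue
        if " -> " in path:  # renames are reported as "old -> new"
            path = path.split(" -> ", 1)[1]
        paths.append(path)
    return paths


def runtime_offenders(porcelain: str, patterns: Sequence[str] = ("*.log",)) -> List[str]:
    """Tracked files the running app keeps rewriting; candidates for `git rm --cached`."""
    return [p for p in tracked_changes(porcelain) if any(fnmatch(p, pat) for pat in patterns)]
```

After `git rm --cached spring-app.log` (ideally paired with a `.gitignore` entry), the log shows up as untracked or not at all, so `runtime_offenders` returns an empty list and the pull proceeds.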

Accomplishments that we're proud of 🏆

- Turning a static demo into a true 24/7 Autopilot daemon. ResolvAI doesn't need to be clicked. It sits in the background, waits for errors, fixes them, and goes back to sleep.

- Creating 5 highly diverse bug scenarios in a real Spring Boot test environment (ranging from NullPointerExceptions to UnsupportedOperationExceptions on immutable lists) and watching Gemini diagnose each one and push defensive fixes, such as bounds checks, completely autonomously.

- Designing a polished, cyberpunk-aesthetic UI.

What we learned 🧠

We learned how capable modern frontier models are at analyzing raw, unstructured terminal logs. The shift from "LLM as a chatbot" to "LLM as an orchestrator/SRE" opens up major capabilities for autonomous DevOps.

What's next for ResolvAI 🚀

Adding an LLM "Confidence Threshold": if the AI isn't 99% confident in the patch, it texts a human for approval instead of auto-merging the code into production.
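The planned confidence gate boils down to a single routing decision. This is a minimal sketch, assuming the model reports a confidence score between 0 and 1 alongside its patch; `Patch`, `Action`, `route_patch`, and `CONFIDENCE_THRESHOLD` are hypothetical names, and obtaining a well-calibrated score is left as an open question.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_MERGE = "auto_merge"
    REQUEST_APPROVAL = "request_approval"  # e.g. text a human instead of merging


@dataclass
class Patch:
    file_path: str
    diff: str
    confidence: float  # hypothetical: model-reported confidence in [0, 1]


CONFIDENCE_THRESHOLD = 0.99  # "99% confident", as described above


def route_patch(patch: Patch, threshold: float = CONFIDENCE_THRESHOLD) -> Action:
    """Auto-merge only when confidence meets the threshold; otherwise ask a human."""
    if not 0.0 <= patch.confidence <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {patch.confidence}")
    return Action.AUTO_MERGE if patch.confidence >= threshold else Action.REQUEST_APPROVAL
```

Rejecting out-of-range scores keeps a malformed model response from silently slipping past the gate.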
