REFLEKTOR

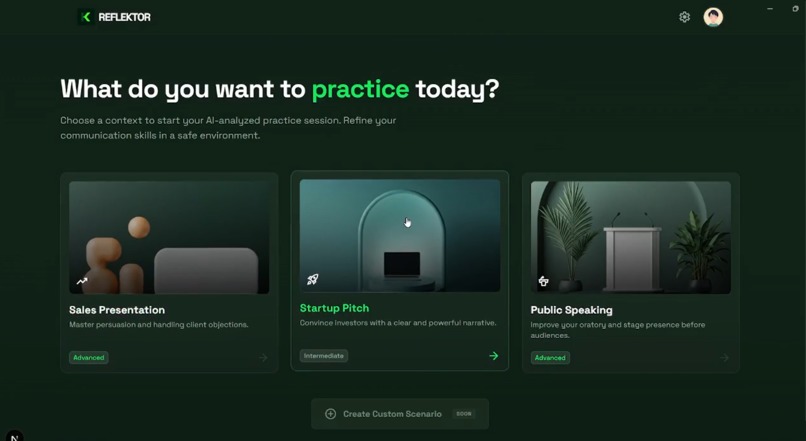

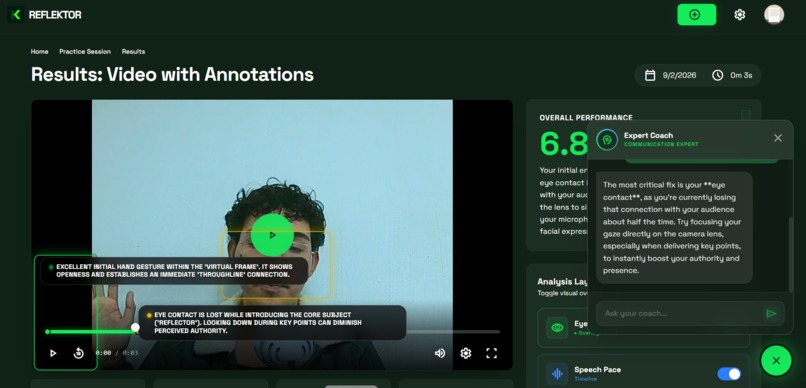

HOME - Choose your scenario. Pick your presentation type: Sales, Pitch, or Public Speaking. Each gets customized AI analysis.


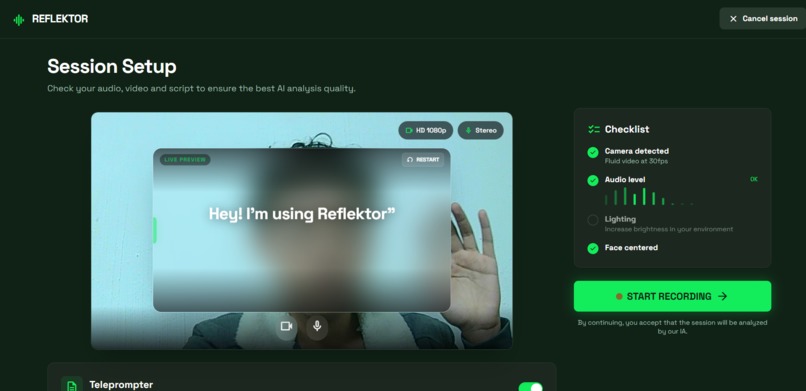

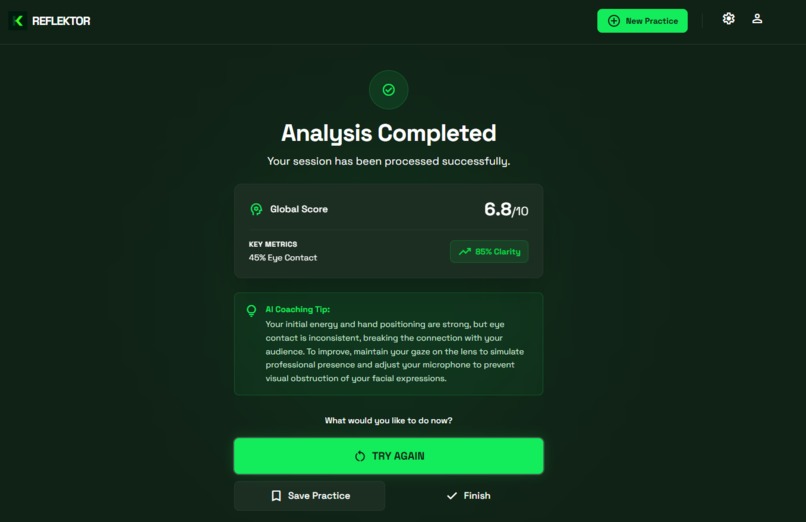

SETUP - Configure your session. Check camera, audio, and teleprompter. The system ensures everything is ready before you start.


RECORDING - Record your practice. Present with the teleprompter on screen. HD video captures every detail in real-time.


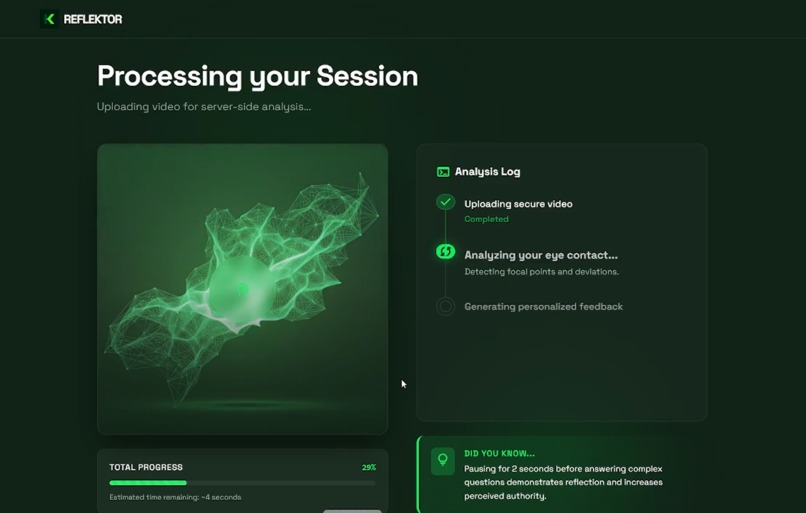

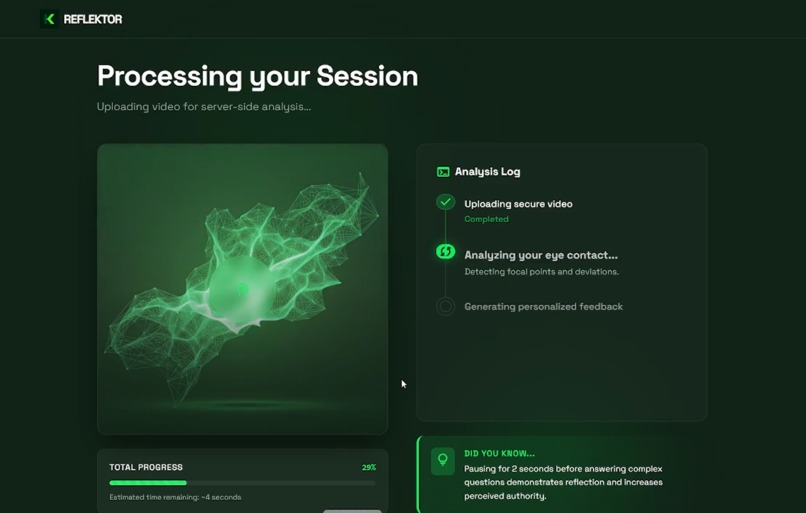

PROCESSING - Analysis in progress. Gemini analyzes eye contact, gestures, fillers, and pace. Watch it work in real-time.


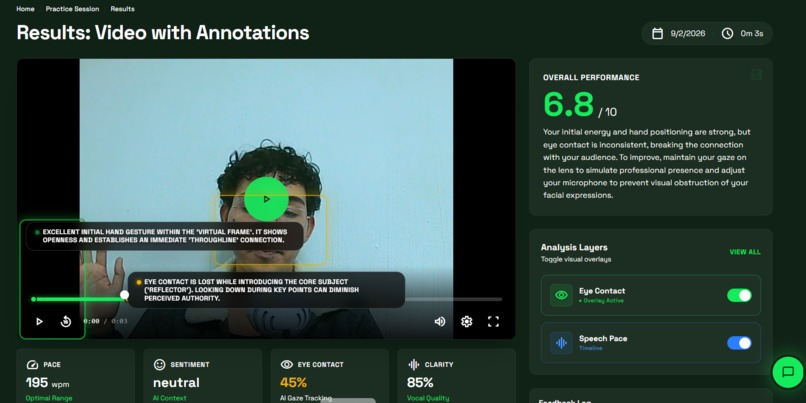

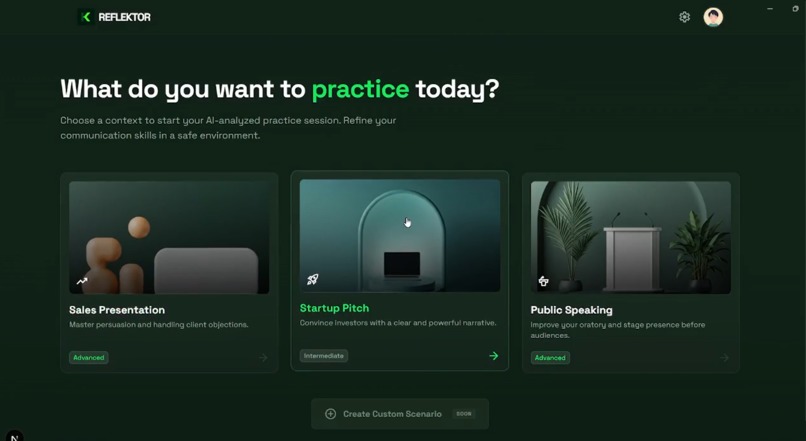

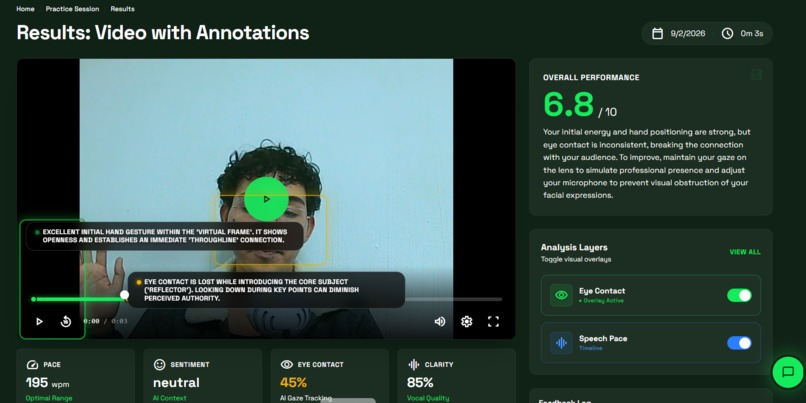

RESULTS - Video with annotations. Watch your presentation with visual overlays. Click timeline markers to jump to key moments.


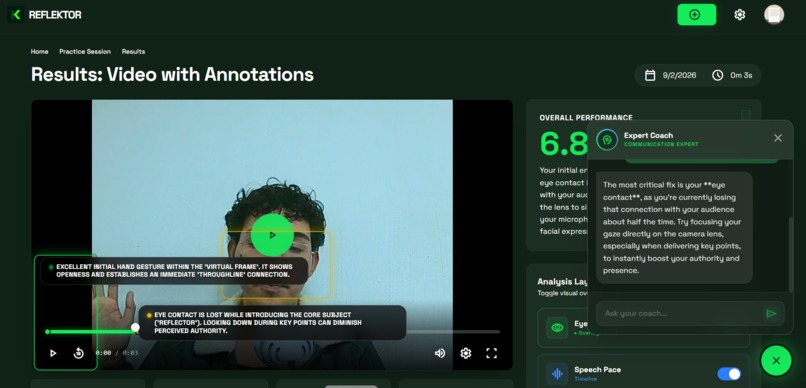

CHAT - Expert coach. Ask anything. The coach knows your video and gives advice based on what it detected.


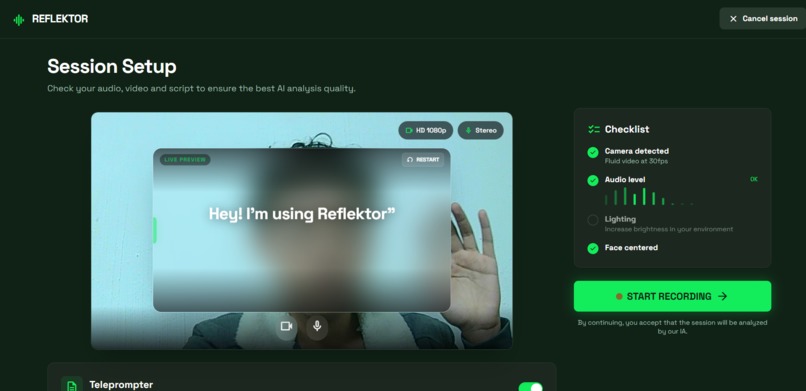

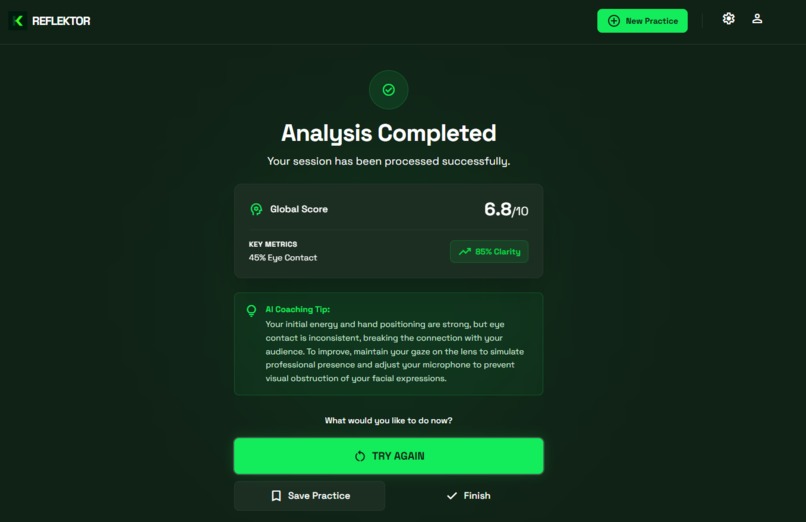

FINISH - Results ready. Your score and key metrics. AI coach gives feedback.

🎯 Reflektor - Your AI-Powered Presentation Coach

Inspiration

The inspiration for Reflektor was born from two personal experiences: the frustrations of practicing presentations without objective feedback and seeing entrepreneurs struggle to perfect their pitches before investors.

After stumbling in important presentations because I didn't notice my filler words ("um", "like") or my lack of eye contact, I understood that practicing in front of a mirror isn't enough. Some entrepreneurs spend thousands of dollars on professional coaches, while most never receive honest feedback about their communication skills.

With the launch of Gemini 3.0 Flash, I saw the perfect opportunity: a multimodal model fast and powerful enough to analyze video in real-time, detect body language, and provide specific contextual feedback.

Thus Reflektor was born: democratizing access to quality presentation coaching using multimodal AI.

What it does

Reflektor transforms the way you practice presentations. Record your pitch, sales presentation, or public speech, and receive detailed AI analysis.

🎥 Professional Recording

- HD camera with real-time preview

- Integrated teleprompter with adjustable auto-scroll and live editing

- Audio visualizer to monitor your volume

- Automatic persistence (never lose your recording)

🧠 Multimodal Analysis with Gemini 3.0

Reflektor analyzes multiple dimensions of your presentation:

- Eye Contact: Detects every time you look away from the camera

- Body Gestures: Analyzes hand movements, posture, and body language

- Fillers: Identifies filler words in English (um, like, you know) and Spanish (esteee, o sea, este)

- Pace: Calculates words per minute \(\text{WPM} = \frac{\text{Total words}}{\text{Duration (min)}}\)

- Sentiment: Evaluates perceived energy and confidence

- Vocal Clarity: Measures projection and articulation
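As a rough illustration of the pace formula above, here is a minimal TypeScript sketch. The function name and the whitespace-based word count are assumptions made for this example; in Reflektor itself the pace figure comes from the Gemini analysis.

```typescript
// Hypothetical helper illustrating WPM = total words / duration in minutes.
// Counting words by splitting on whitespace is an assumption of this sketch.
function wordsPerMinute(transcript: string, durationSeconds: number): number {
  if (durationSeconds <= 0) {
    throw new RangeError("durationSeconds must be positive");
  }
  const trimmed = transcript.trim();
  // An empty transcript contains zero words, not one empty token.
  const totalWords = trimmed === "" ? 0 : trimmed.split(/\s+/).length;
  return totalWords / (durationSeconds / 60);
}
```

For example, six words spoken over 30 seconds gives a pace of 12 WPM.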

📊 Interactive Visualization

- Real-time overlays on the video with detection boxes

- Clickable timeline with color markers for each event

- Floating pills that show contextual feedback frame by frame

💬 Expert AI Coach

Contextual chat that knows your video and specific results:

- Personalized responses based on real methodologies:

  - Sales: SPIN Selling, Sandler Training

  - Pitches: Y Combinator Demo Day, Sequoia format

  - Public Speaking: TED Talks, Toastmasters

- Understands your context: "How do I improve my close?" gives you advice based on YOUR specific video

📥 Advanced Export

- Download your video with permanently drawn overlays

- Ready to share with mentors, teams, or portfolio

🎯 Three Specialized Scenarios

- Sales Presentation: Optimized for sales presentations

- Startup Pitch: Designed for pitches to investors

- Public Speaking: Focused on oratory and public speeches

How we built it

Technology Stack

Frontend & Video Processing

- Next.js 16.1.1 with React 19 for a smooth and modern experience

- Native Canvas API for efficient overlay rendering at 60fps

- IndexedDB to store videos up to 500MB locally without servers

- MediaRecorder API for HD video capture directly in the browser

Multimodal AI

- Gemini 3.0 Flash Preview as the main analysis engine

- Automatic fallback system to Gemini 2.5 Flash for maximum reliability

- Next.js API Routes for secure serverless processing

- Specialized prompt engineering by scenario (Sales/Pitch/Speaking)

User Experience

- TailwindCSS v4 for mobile-first responsive design

- Framer Motion for smooth and professional animations

- TypeScript for type-safe and maintainable development

Data Architecture

Gemini 3.0 receives the video and returns structured JSON with:

Temporal Events - Each moment where it detects something important:

- Exact timestamp in the video

- Event type (loss of eye contact, filler, gesture)

- Normalized coordinates \((x, y, w, h)\) to draw precise overlays

- Severity (low, medium, high) to prioritize feedback

Aggregated Metrics - Session-level summaries, with scores on a 0-100 scale where applicable:

- Overall eye contact

- Total filler count

- Average pace in words per minute

- Overall presentation sentiment

Contextual Recommendations - Specific advice based on:

- The selected scenario (Sales vs Pitch vs Speaking)

- The specific errors detected in your video

- Professional coaching methodologies
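The structure described above could be modeled roughly as follows. This is a sketch under stated assumptions: every field name, the event type strings, and the helper functions are hypothetical, chosen only to mirror the lists in this section, and are not the schema Reflektor actually sends to Gemini.

```typescript
// Hypothetical shapes mirroring the three groups described above.
type EventType = "eye_contact_loss" | "filler" | "gesture";
type Severity = "low" | "medium" | "high";

interface NormalizedBox { x: number; y: number; w: number; h: number } // each in 0-1

interface TemporalEvent {
  timestamp: number; // seconds from the start of the video
  type: EventType;
  box: NormalizedBox;
  severity: Severity;
}

interface AnalysisResult {
  events: TemporalEvent[];
  metrics: { eyeContactScore: number; fillerCount: number; averageWpm: number; sentimentScore: number };
  recommendations: string[];
}

const EVENT_TYPES: readonly EventType[] = ["eye_contact_loss", "filler", "gesture"];
const SEVERITIES: readonly Severity[] = ["high", "medium", "low"]; // highest priority first

function isUnitInterval(v: unknown): v is number {
  return typeof v === "number" && v >= 0 && v <= 1;
}

function isNormalizedBox(value: unknown): value is NormalizedBox {
  if (typeof value !== "object" || value === null) return false;
  const b = value as Record<string, unknown>;
  return [b.x, b.y, b.w, b.h].every(isUnitInterval);
}

// Runtime guard for one event parsed from the model's JSON response.
function isTemporalEvent(value: unknown): value is TemporalEvent {
  if (typeof value !== "object" || value === null) return false;
  const e = value as Record<string, unknown>;
  return (
    typeof e.timestamp === "number" && e.timestamp >= 0 &&
    EVENT_TYPES.indexOf(e.type as EventType) !== -1 &&
    SEVERITIES.indexOf(e.severity as Severity) !== -1 &&
    isNormalizedBox(e.box)
  );
}

// Order events so the most severe feedback comes first, then by time.
function prioritize(events: readonly TemporalEvent[]): TemporalEvent[] {
  return events.slice().sort(
    (a, b) =>
      SEVERITIES.indexOf(a.severity) - SEVERITIES.indexOf(b.severity) ||
      a.timestamp - b.timestamp,
  );
}
```

A guard like this is still useful with structured output, since it catches out-of-range coordinates before they reach the overlay renderer.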

Application Flow

- Scenario Selection: The user chooses the presentation type

- Practice with Teleprompter: Record while reading your customizable script

- Analysis with Gemini: The video is processed with multimodal AI

- Visual Results: Video synchronized with frame-by-frame feedback

- Continuous Improvement: Chat with the AI coach to dig deeper into specific areas

Challenges we ran into

🎬 Video Export with Visual Annotations

The Challenge: Users wanted to share their analyzed videos with mentors or save them for future reference, but the overlays (boxes, labels, markers) only existed as interface elements that disappeared when downloading the original video.

The Solution: We developed a "video compositing" system that combines the original video with annotations in real-time. The process captures frame by frame, renders the corresponding overlays for each moment using Canvas API, and generates a new video file with everything permanently integrated.

The Result: Professional videos with built-in visual feedback, perfect for sharing with coaches, including in portfolios, or documenting progress over time.

📱 Responsive Overlays on Mobile Devices

The Challenge: The floating labels (pills) that show feedback would go off-screen on devices in portrait orientation or cover important parts of the video. The coordinates that Gemini provides are normalized (0-1), but converting them to pixels across different screen sizes was complex.

The Solution: We implemented an intelligent positioning system that detects screen edges in real-time, automatically calculates the best position (top/bottom/left/right), and dynamically adapts when rotating the device.

The Result: Consistent experience with overlays always visible without obstructions.
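To make the coordinate conversion and edge handling concrete, here is a simplified TypeScript sketch. All names are hypothetical, and it only chooses between placing the pill above or below the detection box with horizontal clamping, whereas the positioning described above also considers left and right placement.

```typescript
interface NormalizedBox { x: number; y: number; w: number; h: number } // each in 0-1
interface Size { width: number; height: number }
interface PixelRect { left: number; top: number; width: number; height: number }

// Scale a normalized box to the current size of the rendered video.
function toPixels(box: NormalizedBox, view: Size): PixelRect {
  return {
    left: box.x * view.width,
    top: box.y * view.height,
    width: box.w * view.width,
    height: box.h * view.height,
  };
}

function clamp(value: number, min: number, max: number): number {
  return Math.min(Math.max(value, min), max);
}

// Place the pill above the box when it fits, otherwise below it,
// and keep it horizontally inside the view. The 8px gap is an arbitrary default.
function placePill(target: PixelRect, pill: Size, view: Size, gap = 8): { left: number; top: number } {
  const centeredLeft = target.left + target.width / 2 - pill.width / 2;
  const left = clamp(centeredLeft, 0, Math.max(0, view.width - pill.width));
  const above = target.top - gap - pill.height;
  const below = target.top + target.height + gap;
  const fitsAbove = above >= 0;
  const fitsBelow = below + pill.height <= view.height;
  const top = fitsAbove
    ? above
    : fitsBelow
      ? below
      : clamp(above, 0, Math.max(0, view.height - pill.height));
  return { left, top };
}
```

Recomputing the placement whenever the view size changes (for example after a device rotation) keeps the pill on screen without storing any pixel values.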

💾 Persisting Large Videos Without Backend

The Challenge: We wanted Reflektor to work completely in the browser (no login, no servers, no costs) to maintain total privacy, but practice videos can be 50-500MB, much more than localStorage's 5MB limit.

The Solution: We used IndexedDB, a browser database designed for large binary data. We created a custom wrapper (SessionStore) that handles video Blobs, analysis results, and session configuration.

The Result: Users never lose their work, can close the browser and come back later, and have complete control of their data - everything works offline after the first load.

🎯 Precise Video-Overlay Synchronization

The Challenge: Showing annotations exactly on the correct frame required synchronizing:

- Video timestamp (can vary by framerate)

- Events detected by Gemini (timestamps in decimal seconds)

- Canvas rendering (must be 60fps for smoothness)

- User interactions (pause, skip, change speed)

The Solution: We implemented an event system that listens to timeupdate from the <video> element, filters events within a ±0.5 second range of the current timestamp, and uses requestAnimationFrame for smooth rendering synchronized with the monitor's refresh rate.

The Result: Perfectly synchronized overlays that follow the video even when the user jumps to different moments or changes playback speed.
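The time-window filter at the heart of this approach can be sketched as a small pure function. The names are hypothetical, and the 0.5 second default simply mirrors the ±0.5 second range mentioned above.

```typescript
interface TimedEvent { timestamp: number } // seconds from the start of the video

// Return the events whose timestamp lies within windowSeconds of the
// current playback position, on either side.
function activeEvents<T extends TimedEvent>(
  events: readonly T[],
  currentTime: number,
  windowSeconds = 0.5,
): T[] {
  return events.filter((e) => Math.abs(e.timestamp - currentTime) <= windowSeconds);
}
```

In the browser, a function like this would typically be called from a requestAnimationFrame callback with the video element's currentTime, so that seeking or changing playback speed needs no special handling: each frame simply redraws whatever is active at the current position.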

Accomplishments that we're proud of

🏆 Truly Multimodal AI in Action

Getting Gemini 3.0 to not just "see" or "hear", but to synchronize both to detect complex patterns:

- Identifying when a filler word coincides with a nervous gesture

- Noticing when you lower your voice just as you look away

- Detecting patterns like "every time you mention the price, you cross your arms"

This holistic understanding transforms data into actionable insights.

🎨 UX That Makes AI Tangible

Converting JSON coordinates into intuitive visual overlays was a major design challenge. We're proud of:

- Interactive timeline where you can click on any marker and jump to the exact moment

- Floating pills with intelligent positioning that never obstruct

- Export that preserves all visualization in the final video

⚡ Performance Without Compromises

Achieving 60fps rendering while processing:

- HD video in playback

- Multiple animated overlays simultaneously

- Real-time positioning calculations

- All running in the browser without servers

🌍 Real Accessibility

Reflektor works for anyone with a modern browser:

- No need for account or login

- No server costs that limit access

- Support for Spanish and English from day one

- Mobile-first design that works on any device

🎓 Intelligent Contextual Coaching

The chat doesn't just answer generic questions - it knows exactly what you did in your video:

- "How do I improve my close?" → Tells you specifically what to do based on YOUR pitch

- Understands context: Sales vs Pitch vs Speaking require different strategies

- Uses real methodologies (SPIN, Sandler, YC format) not just vague advice

What we learned

About Gemini 3.0 Flash

Surprising Precision: The model detects incredibly subtle details without additional training:

- Gaze shifts of less than 1 second

- Difference between emphatic and nervous gestures

- Facial microexpressions indicating insecurity

- Fillers without prior examples

Contextual Understanding: Gemini adapts its analysis according to the scenario:

- In a Sales Presentation, briefly looking at notes is fine; in a TED Talk, it's not

- Detects that a pause before mentioning the price can be strategic

- Understands that broad gestures are positive in Speaking, but can be excessive in Pitch

- Differentiates between genuine confidence and overacting

Structured JSON is a Game-Changer: Native JSON support eliminated hours of parsing, validation, and error handling. We receive precise coordinates, exact timestamps, and well-formatted recommendations - all ready to render.

About UX Design for AI

Visual Beats Text: Showing a box around your hands when you make nervous gestures is 10x more effective than writing "nervous gesture at 1:23". The brain processes visuals instantly and the feedback becomes memorable.

Interactivity Drives Practice: Being able to click on the timeline or specific events and jump directly increased engagement dramatically. Users who can easily review specific moments practice up to 3x more.

Contextual Chat is Critical: Initially we thought it would be secondary, but we discovered that users need to be able to ask specific things like:

- "Why did Gemini mark this as a filler?"

- "How can I sound more confident without seeming aggressive?"

- "Is this gesture okay or distracting?"

About Developing with Generative AI

Prompt Engineering is 50% of the Work: Creating the right prompt for Gemini required more iterations than the UI code. We learned to:

- Be specific about output format (detailed JSON schema)

- Provide examples of what we want to detect

- Adjust severity according to the scenario

- Request normalized coordinates (0-1) instead of absolute pixels

Fallbacks Are Essential: Models can fail or be overloaded. Having an automatic system that switches from Gemini 3.0 to 2.5 Flash ensures the app always works. Reliability is as important as capabilities.
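The fallback idea can be sketched generically in TypeScript. This is an illustration rather than Reflektor's actual code: the model call is abstracted as a caller-supplied async function so the sketch does not depend on any particular SDK, and all names are hypothetical.

```typescript
// A caller-supplied function that runs the analysis against one model.
type ModelCall<T> = (model: string) => Promise<T>;

// Try each model in order and return the first successful result,
// together with the name of the model that produced it.
async function analyzeWithFallback<T>(
  models: readonly string[],
  call: ModelCall<T>,
): Promise<{ model: string; result: T }> {
  const errors: string[] = [];
  for (const model of models) {
    try {
      return { model, result: await call(model) };
    } catch (err) {
      // Remember why this model failed, then move on to the next one.
      errors.push(`${model}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`All models failed (${errors.join("; ")})`);
}
```

Returning the model name alongside the result lets the UI or logs record which model actually served a given analysis.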

The Waiting Experience Matters: While Gemini analyzes, we show simulated progress logs ("fake logs"). Users tolerate waiting much better when they see something happening than when they face a generic loading screen.

About Modern Frontend Architecture

Canvas API is Powerful: We don't need heavy video libraries - native Canvas can do complex rendering at 60fps when used correctly.

IndexedDB Unlocks New Possibilities: Being able to store large amounts of data locally opens the door to apps that previously required a complete backend. We learned its limitations (an asynchronous API, storage quotas that vary by browser) and how to work with them.

TypeScript Prevents Subtle Bugs: Especially when working with coordinates, timestamps, and complex events, having strong types prevented dozens of bugs that would have been difficult to debug.

What's next for Reflektor

🔄 History and Progress

Implement a complete session system that lets you:

- Save multiple practices and compare them

- See your progress over time (e.g., "your fillers dropped from 20 to 5 in a month")

- View evolution graphs per metric

- Export progress reports as PDF

- Toggle layers on and off to focus on what you need to improve

👥 Collaborative Mode

Features for teams:

- Share sessions with your sales team

- Compare scores among team members

- Corporate coach that analyzes team-wide trends

- Perfect for corporate training

📊 Deeper Analysis

Expand analysis capabilities:

- Advanced emotion detection: Micro-nervousness, genuine vs forced enthusiasm

- Narrative structure analysis: Does your pitch follow a clear arc?

- Benchmark against best practices: Compare with top TED Talks or successful pitches

- Slide analysis (if you share screen): Do your slides complement or distract?

🌍 Multi-Language Expansion

Full support for more languages:

- Filler detection in French, Portuguese, Mandarin, German

- Culturally appropriate contextual coaching

- Fully localized interface

⚡ Technical Improvements

Results Streaming: Show feedback while Gemini analyzes, instead of waiting. Seeing detections appear in real-time would be a magical experience.

Complete PWA: Convert Reflektor into an installable app that works 100% offline after the first load.

Live Analysis: Option to receive feedback DURING recording, not just after. Ideal for practicing with real-time prompts.

🎯 Long-Term Vision

We want Reflektor to be the "Grammarly of presentations" - an indispensable tool that everyone uses before important presentations.

Imagine a world where:

- Entrepreneurs practice unlimited pitches without paying $500/hour for a coach

- Students improve their public speaking before important presentations with objective feedback

- Salespeople close more deals because they perfect their demos based on data

- Anyone can become an effective communicator with AI-guided practice

Effective communication is one of the most valuable skills in the professional world, but traditionally it has been accessible only to those who can afford expensive coaches or have available mentors. Reflektor democratizes this access using multimodal AI.

Built With

- css

- framermotion

- gemini

- indexdb

- konva

- lucidereact

- next.js

- node.js

- tailwind

- typescript

- vercel
