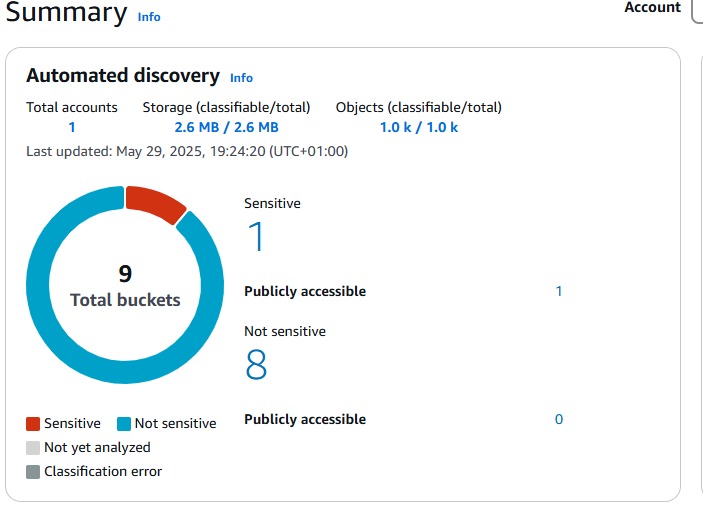

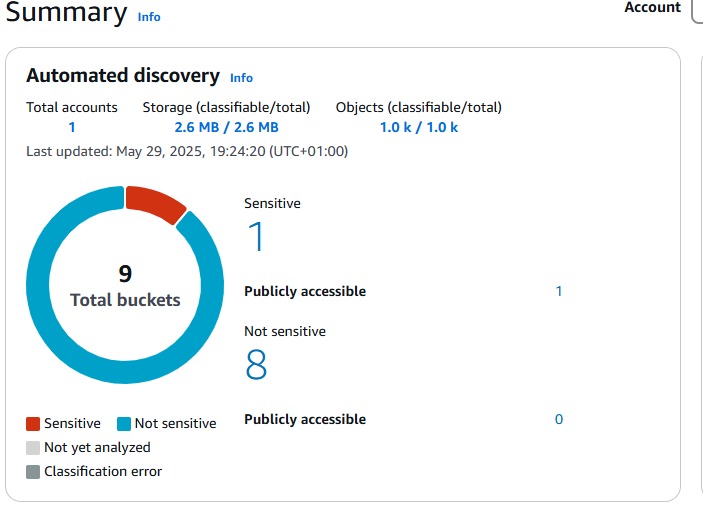

Macie findings

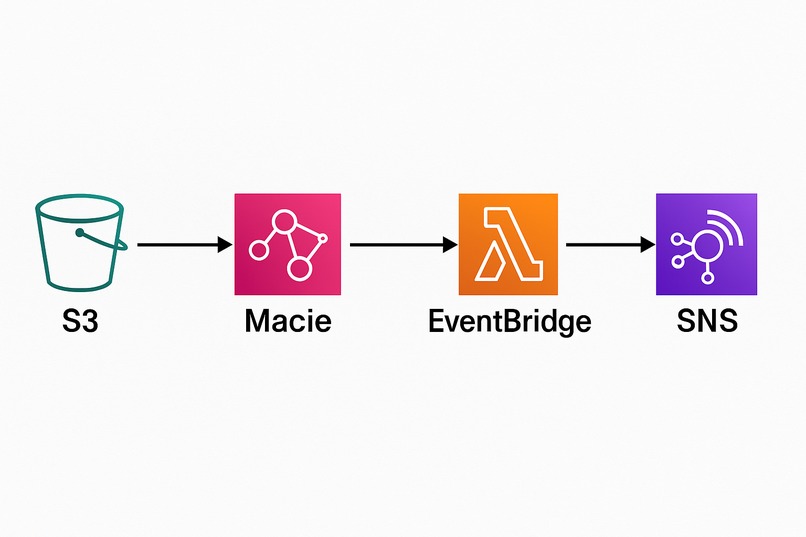

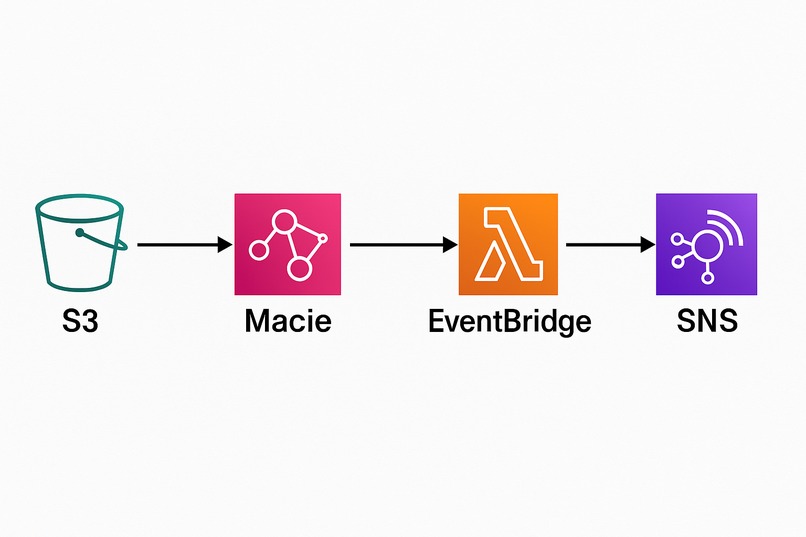

Architecture Overview

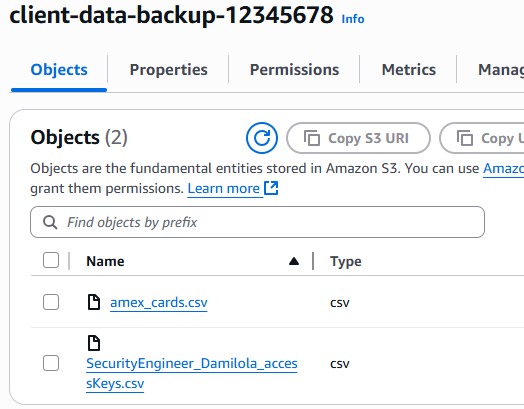

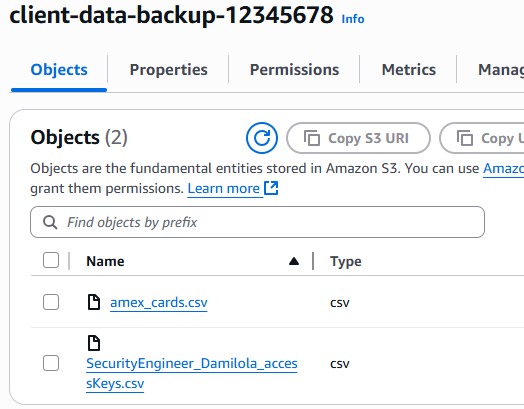

Vulnerable and secure (encrypted) S3 buckets

Vulnerable S3 bucket with a sensitive object (PII) before automation

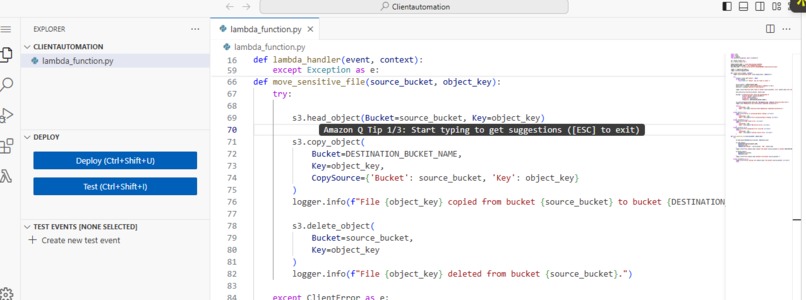

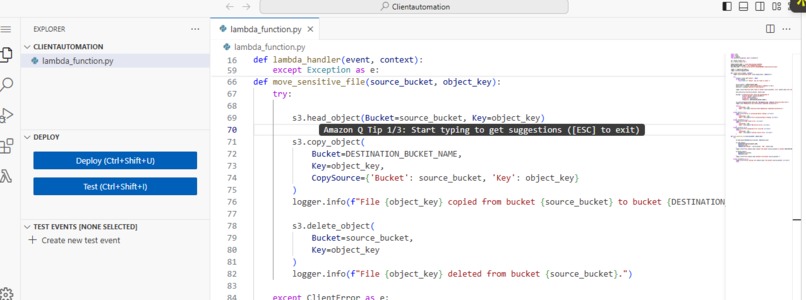

Lambda function code

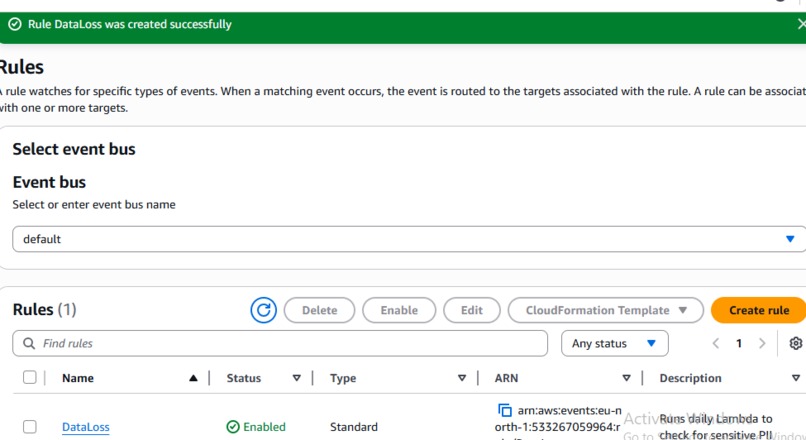

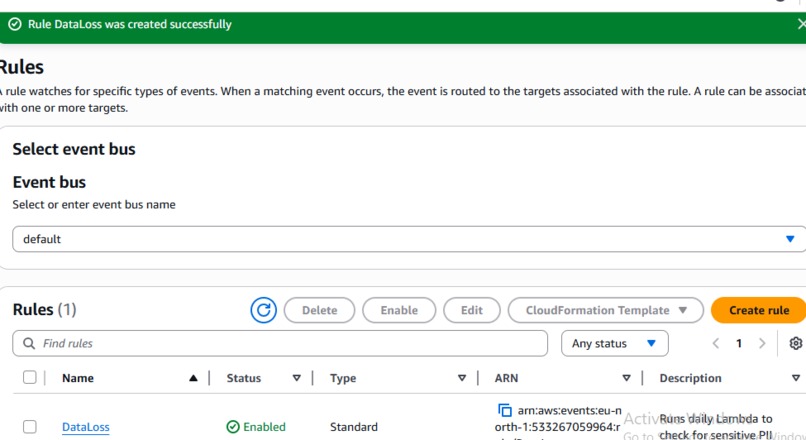

EventBridge rule created to trigger the Lambda function

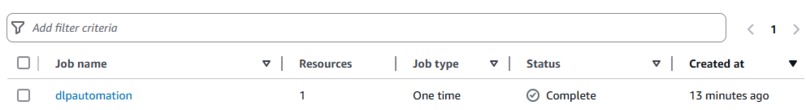

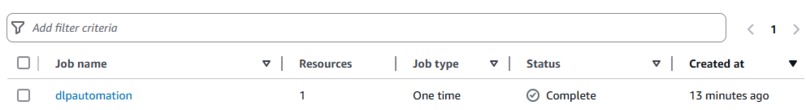

Job created for Macie to automate findings daily

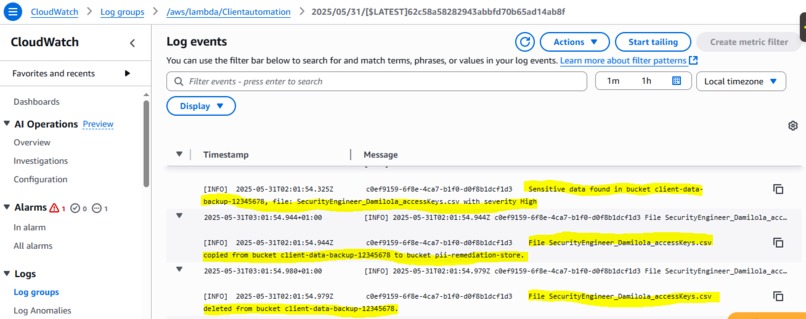

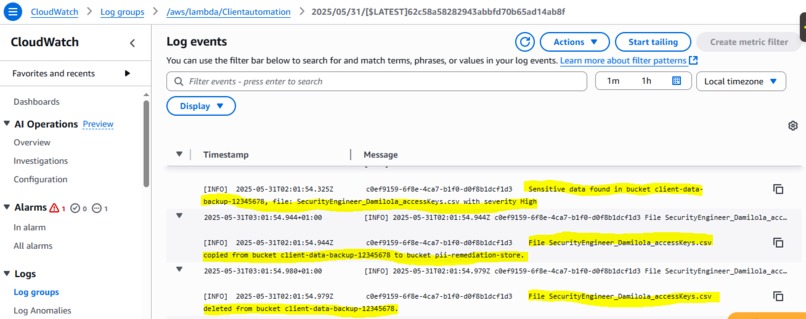

CloudWatch logs confirming the automated event

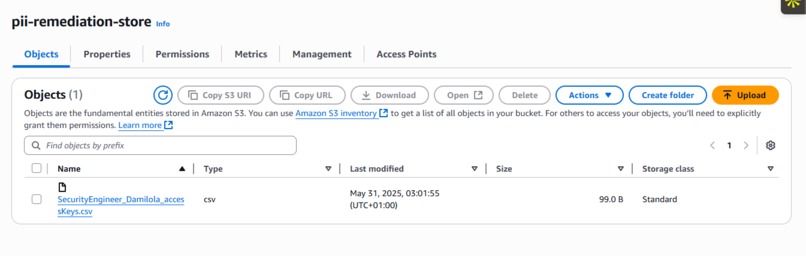

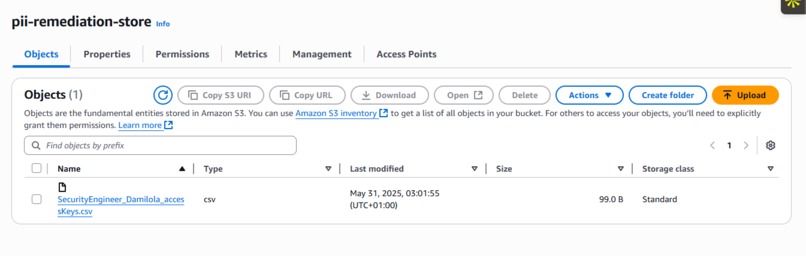

Sensitive PII moved from the vulnerable bucket to the encrypted bucket by the Lambda function triggered by EventBridge

Inspiration

Before I became a Cloud Security Engineer, I was deep in the world of Microbiology. We were trained to isolate viruses, prevent contamination, and stop outbreaks before they spread. Precision and timing could save lives.

But one day during my internship at a teaching hospital, I saw something that stuck with me. A patient’s private medical record (name, diagnosis, lab results) was mistakenly printed and left in the open. A simple oversight. But that moment taught me something: data is just as sensitive as the body. Fast forward to today, and I help AI startups in healthcare build secure systems in the cloud. That lesson has never left me.

When I learned that most early-stage teams still detect data exposure manually, it reminded me of that hospital hallway. Too late. Too exposed. So I built a real-time automation system to detect and respond to sensitive data leaks in under 2 minutes, before harm is done. Not just for compliance but for peace of mind.

What it does

This system detects Personally Identifiable Information (PII), such as emails or credit card data, in Amazon S3 using Amazon Macie. EventBridge and Lambda then automatically move flagged files to an encrypted S3 bucket, delete the exposed bucket, log the event to CloudWatch for monitoring and compliance purposes, and alert the security team via SNS, all without any manual work.

How I built it

- Amazon Macie scans S3 for sensitive content
- EventBridge listens for Macie alerts
- AWS Lambda remediates exposure by moving data to secure buckets and deleting the vulnerable ones
- SNS sends real-time email notifications to devs and compliance officers
- CloudWatch Logs monitor and log each automation step
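Here is a minimal sketch of what the Lambda remediation step could look like. It is not the exact function from the screenshots. The environment variable names `SECURE_BUCKET` and `ALERT_TOPIC_ARN` and the helper `remediate` are assumptions made for illustration, and the clients are passed in so the logic can be exercised without AWS credentials.

```python
import json
import os


def remediate(s3, sns, source_bucket, key, secure_bucket, topic_arn):
    """Move one flagged object to the encrypted bucket, then alert the team."""
    # Copy first so the data is never lost if a later step fails.
    s3.copy_object(
        Bucket=secure_bucket,
        Key=key,
        CopySource={"Bucket": source_bucket, "Key": key},
        ServerSideEncryption="aws:kms",
    )
    s3.delete_object(Bucket=source_bucket, Key=key)

    # S3 only deletes empty buckets, so check before trying.
    remaining = s3.list_objects_v2(Bucket=source_bucket, MaxKeys=1)
    bucket_deleted = remaining.get("KeyCount", 0) == 0
    if bucket_deleted:
        s3.delete_bucket(Bucket=source_bucket)

    summary = {
        "moved_from": source_bucket,
        "moved_to": secure_bucket,
        "key": key,
        "bucket_deleted": bucket_deleted,
    }
    sns.publish(
        TopicArn=topic_arn,
        Subject="PII remediated",
        Message=json.dumps(summary),
    )
    # Anything printed by a Lambda function ends up in CloudWatch Logs.
    print(json.dumps(summary))
    return summary


def lambda_handler(event, context):
    import boto3  # available in the AWS Lambda Python runtime

    affected = event["detail"]["resourcesAffected"]
    return remediate(
        boto3.client("s3"),
        boto3.client("sns"),
        affected["s3Bucket"]["name"],
        affected["s3Object"]["key"],
        os.environ["SECURE_BUCKET"],      # assumed variable name
        os.environ["ALERT_TOPIC_ARN"],    # assumed variable name
    )
```

Copying before deleting is a deliberate ordering choice: if the function fails midway, the worst case is a duplicate object rather than a lost one.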

Challenges I ran into

Building this automation pipeline wasn't a plug-and-play experience. Each layer came with its own lesson.

First, understanding how Macie reports findings was more complex than expected. The event structure wasn’t clearly documented, and at first, it felt like I was decoding cryptic JSON hieroglyphics. I had to manually trigger test scans, sift through logs, and reverse-engineer which part of the payload mattered for EventBridge.
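To make the payload less cryptic, here is a rough sketch of the parts of a Macie finding event that matter for this kind of automation. The sample event is heavily trimmed and its values are made up. `matches` is only a simplified local stand-in for EventBridge's own pattern matching, and it assumes a sensitive data finding that names a specific S3 object.

```python
# Event pattern for an EventBridge rule that forwards Macie findings.
MACIE_FINDING_PATTERN = {
    "source": ["aws.macie"],
    "detail-type": ["Macie Finding"],
}

# Trimmed, made-up example of a sensitive data finding event.
SAMPLE_EVENT = {
    "source": "aws.macie",
    "detail-type": "Macie Finding",
    "detail": {
        "type": "SensitiveData:S3Object/Personal",
        "severity": {"score": 3, "description": "High"},
        "resourcesAffected": {
            "s3Bucket": {"name": "example-vulnerable-bucket"},
            "s3Object": {"key": "uploads/patients.csv"},
        },
    },
}


def matches(event, pattern=MACIE_FINDING_PATTERN):
    """Simplified check: each pattern key must list the event's value."""
    return all(event.get(field) in allowed for field, allowed in pattern.items())


def extract_target(event):
    """Pull out only the fields the remediation step needs."""
    detail = event["detail"]
    affected = detail["resourcesAffected"]
    return {
        "finding_type": detail["type"],
        "severity": detail["severity"]["description"],
        "bucket": affected["s3Bucket"]["name"],
        "key": affected["s3Object"]["key"],
    }


if __name__ == "__main__":
    if matches(SAMPLE_EVENT):
        print(extract_target(SAMPLE_EVENT))
```

Everything outside `detail.resourcesAffected`, `detail.type`, and `detail.severity` can be ignored for routing purposes, which is most of the payload.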

Then came the test environment issue: I couldn’t use actual patient data for obvious reasons, but I still needed realistic PII samples to validate the detection. I ended up creating anonymized but structurally accurate mock data (emails, names, IDs) that mirrored real-world risk without violating privacy. It took a few iterations before Macie even flagged anything. That was a moment of both frustration and progress.
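A small generator along these lines can produce that kind of mock data. This is a sketch only: the name lists, the `patient_id` format, and the column names are invented for illustration, and whether Macie flags a given file depends on its managed and custom data identifiers, so expect to iterate.

```python
import csv
import io
import random

# Hypothetical name pools; none of these refer to real people.
FIRST_NAMES = ["Ada", "Grace", "Alan", "Linus", "Margaret"]
LAST_NAMES = ["Okafor", "Nguyen", "Silva", "Novak", "Haddad"]
FIELDS = ["full_name", "email", "patient_id"]


def make_records(count, seed=0):
    """Build synthetic, structurally realistic records with a fixed seed."""
    rng = random.Random(seed)
    rows = []
    for i in range(count):
        first, last = rng.choice(FIRST_NAMES), rng.choice(LAST_NAMES)
        rows.append({
            "full_name": f"{first} {last}",
            # example.com is reserved for documentation, so no real inbox exists.
            "email": f"{first}.{last}{i}@example.com".lower(),
            "patient_id": f"PT-{rng.randrange(10**6):06d}",
        })
    return rows


def to_csv(rows):
    """Serialize records to CSV text ready to upload to a test bucket."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


if __name__ == "__main__":
    print(to_csv(make_records(5)))
```

Seeding the generator keeps test files reproducible, which helps when comparing Macie results across iterations.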

IAM was another tricky layer. Lambda needed just enough permission to act but not too much. At one point, the function silently failed because it didn’t have s3:DeleteBucket, and I had to comb through CloudWatch logs to debug the policy.
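As a starting point, a scoped policy for the Lambda role might look like the sketch below. The bucket names and topic ARN are placeholders, the statement IDs are arbitrary, and the action list is an assumption based on the steps described here rather than the actual policy used. CloudWatch Logs permissions (typically granted through the `AWSLambdaBasicExecutionRole` managed policy) and any KMS key permissions would be added separately.

```python
import json


def build_remediation_policy(source_bucket, secure_bucket, topic_arn):
    """Return an IAM policy document limited to the named resources."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadAndRemoveFlaggedObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:DeleteObject"],
                "Resource": f"arn:aws:s3:::{source_bucket}/*",
            },
            {
                # Bucket-level actions apply to the bucket ARN, not its objects.
                "Sid": "ListAndDeleteVulnerableBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket", "s3:DeleteBucket"],
                "Resource": f"arn:aws:s3:::{source_bucket}",
            },
            {
                "Sid": "WriteToSecureBucket",
                "Effect": "Allow",
                "Action": ["s3:PutObject"],
                "Resource": f"arn:aws:s3:::{secure_bucket}/*",
            },
            {
                "Sid": "SendAlerts",
                "Effect": "Allow",
                "Action": ["sns:Publish"],
                "Resource": topic_arn,
            },
        ],
    }


if __name__ == "__main__":
    policy = build_remediation_policy(
        "example-vulnerable-bucket",                         # placeholder
        "example-secure-bucket",                             # placeholder
        "arn:aws:sns:us-east-1:123456789012:example-topic",  # placeholder
    )
    print(json.dumps(policy, indent=2))
```

Splitting object-level and bucket-level actions into separate statements makes a missing permission like `s3:DeleteBucket` easier to spot during review.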

Finally, timing was critical. Macie’s job isn’t instantaneous. My Lambda was firing before the job completed, resulting in empty actions. I had to tweak my approach, using custom filters and wait logic to ensure the system only acted after findings were finalized.
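One way such wait logic could be written is to poll the job status until the current run has settled. This is an illustrative sketch, not the exact approach used in this project: `wait_for_job`, its defaults, and the injected `sleep` are assumptions. It relies on the Macie `describe_classification_job` call, which reports a `jobStatus` such as `RUNNING`, `COMPLETE`, or `IDLE` (the state a scheduled job sits in between runs).

```python
import time

# Statuses after which no more findings are expected from the current run.
SETTLED_STATES = {"COMPLETE", "IDLE", "CANCELLED"}


def wait_for_job(macie, job_id, poll_seconds=30, max_polls=20, sleep=time.sleep):
    """Poll a Macie classification job until its current run has settled.

    `macie` is a boto3 "macie2" client, or any object with the same method.
    Returns the final status, or raises TimeoutError after max_polls checks.
    """
    for _ in range(max_polls):
        status = macie.describe_classification_job(jobId=job_id)["jobStatus"]
        if status in SETTLED_STATES:
            return status
        sleep(poll_seconds)
    raise TimeoutError(f"Macie job {job_id} did not settle after {max_polls} polls")


if __name__ == "__main__":
    import boto3  # requires AWS credentials and a real job ID

    client = boto3.client("macie2")
    print(wait_for_job(client, "replace-with-job-id"))
```

Because a Lambda invocation is capped at 15 minutes, long waits are usually better handled outside the function, for example with a scheduled re-check, than by sleeping inside it.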

Every challenge deepened my AWS security knowledge and made the final pipeline not just functional but fun and resilient.

Accomplishments that I'm proud of

After building this project, I shared it with my network, not expecting much, just proud of what I had learned. But then I got a DM from a founder of another AI startup. They saw the demo and said, “This is exactly what we need, but do you think it would work in our stack?” I wasn’t sure… but I offered to try.

After a few tweaks (adjusting the IAM roles and modifying how they handled S3 encryption), the system integrated beautifully. It detected a PII leak in their dev environment within minutes of uploading test data. That was the moment it all clicked. This wasn’t just a portfolio project or a hackathon entry. It was solving a real, painful problem in the wild.

What we learned

- Building automation around Amazon Macie and integrating it into live systems
- Designing secure-by-default architectures for sensitive industries
- Communicating technical risk and compliance in plain English to founders and product teams
- Event-driven security workflows are incredibly powerful and underutilized

What's next for Real-Time Data Leak Prevention Automation in AI Healthcare

- Add granular classification for different types of PII.
- Integrate with Security Hub for cross-service monitoring.
- Extend to multi-region compliance and GDPR-ready workflows.
- Productize it under ShipSecure. This solution will be packaged as a plug-and-play framework under **ShipSecure**, my seed-stage AI security startup. AI startups will be able to adopt it quickly, with no deep cloud security team required.
