Front view of Ralph!

Ralph's Side Profile

Body of Ralph, containing the RC car and the Raspberry Pi 5

Our prototype of Ralph, with the main motor function working and a DIY battery pack/holder (the long black tube on the right)

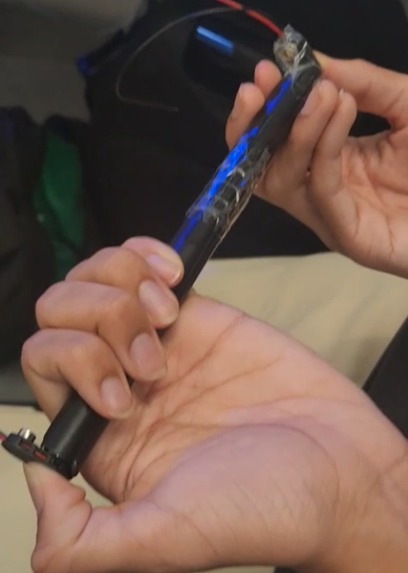

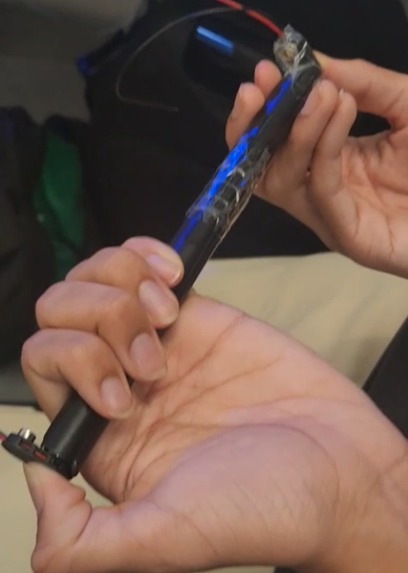

A close-up of our DIY battery pack. We connected the batteries end to end to complete the circuit and used tape to hold the connection.

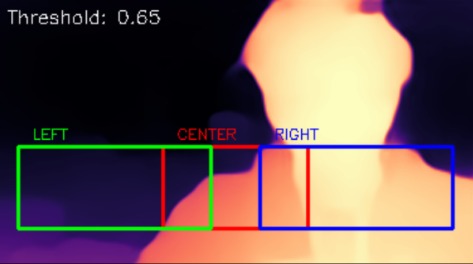

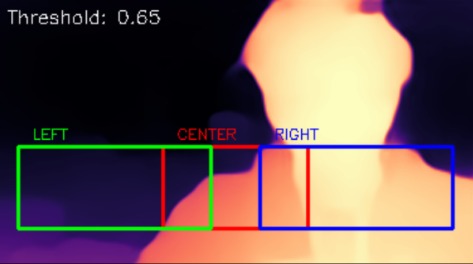

Sample image of what Ralph sees. Brighter, warmer colours indicate closer surfaces, while cooler colours indicate surfaces farther away.

Inspiration

Ralph was inspired by the challenge of making navigation safer and more accessible using low-cost hardware. We wanted to explore whether modern computer vision models could provide meaningful spatial awareness using only a single camera, without relying on expensive sensors. The project is motivated by assistive technology and the idea that intelligent guidance systems should be affordable, portable, and able to run entirely on-device.

What it does

Ralph is an AI-powered guide robot that navigates its environment in real time. Using a single USB camera, it detects people and vehicles while also estimating depth to identify walls and large obstacles before a collision. By combining object detection and depth perception, Ralph can decide when to move forward, slow down, stop, or turn to avoid obstacles safely.
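To make the decision step concrete, here is a minimal sketch of how one frame's summary could map to an action. It is not Ralph's actual control code: the `Scene` fields, the `Action` names, and the `STOP_AT` and `SLOW_AT` thresholds are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    FORWARD = "forward"
    SLOW = "slow"
    STOP = "stop"
    TURN_LEFT = "turn_left"
    TURN_RIGHT = "turn_right"


@dataclass
class Scene:
    """Hypothetical summary of one camera frame."""
    hazard_ahead: bool       # a person or vehicle was detected in the path
    closeness_left: float    # 0.0 (far) to 1.0 (very close), left of frame
    closeness_center: float  # same scale, centre of frame
    closeness_right: float   # same scale, right of frame


# Assumed thresholds; real values would come from tuning on the robot.
STOP_AT = 0.8
SLOW_AT = 0.5


def decide(scene: Scene) -> Action:
    # A person or vehicle that is already fairly close always means stop.
    if scene.hazard_ahead and scene.closeness_center >= SLOW_AT:
        return Action.STOP
    if scene.closeness_center >= STOP_AT:
        # Blocked ahead: stop if both sides are blocked too,
        # otherwise turn toward the side with more free space.
        if scene.closeness_left >= STOP_AT and scene.closeness_right >= STOP_AT:
            return Action.STOP
        if scene.closeness_left <= scene.closeness_right:
            return Action.TURN_LEFT
        return Action.TURN_RIGHT
    if scene.hazard_ahead or scene.closeness_center >= SLOW_AT:
        return Action.SLOW
    return Action.FORWARD
```

Putting the stop check for people and vehicles first is a deliberate choice in this sketch: a detected hazard overrides any attempt to steer around it.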

How we built it

We built Ralph on a Raspberry Pi 5 and used PyTorch as the core machine learning framework. YOLOv8 Nano handles real-time object detection, allowing the system to recognize relevant obstacles like people and vehicles. MiDaS handles monocular depth estimation, giving Ralph a sense of how close large surfaces such as walls are. The camera feed is processed on-device, and the outputs from YOLO and MiDaS are fused into a control system that drives the robot’s movement logic.
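One way the fusion step could look is sketched below in plain Python, with the models left out so the logic stays visible. It assumes the depth map arrives as a 2D list of relative inverse-depth values (larger means closer, which is how MiDaS reports depth) and that detections arrive as `(label, box)` tuples. The function names, the hazard label set, and the centre-third heuristic are illustrative assumptions, not the project's actual code.

```python
from statistics import median

# Labels treated as hazards; these follow the COCO class names
# that pretrained YOLOv8 models use.
HAZARD_LABELS = {"person", "bicycle", "car", "motorcycle", "bus", "truck"}


def normalize(depth):
    """Rescale a relative inverse-depth map to a 0..1 closeness map."""
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        return [[0.0 for _ in row] for row in depth]
    return [[(v - lo) / span for v in row] for row in depth]


def box_closeness(closeness, box):
    """Median closeness inside a box given as (x1, y1, x2, y2) pixel indices."""
    x1, y1, x2, y2 = box
    values = [v for row in closeness[y1:y2] for v in row[x1:x2]]
    return median(values) if values else 0.0


def fuse(depth, detections):
    """Score each hazard detection by closeness.

    Returns the hazards sorted from closest to farthest, plus the
    closeness of the centre third of the frame, which covers walls
    and other surfaces the detector has no label for.
    """
    closeness = normalize(depth)
    hazards = sorted(
        ((label, box_closeness(closeness, box))
         for label, box in detections if label in HAZARD_LABELS),
        key=lambda item: item[1],
        reverse=True,
    )
    width = len(closeness[0])
    centre = (width // 3, 0, width - width // 3, len(closeness))
    return hazards, box_closeness(closeness, centre)
```

Because the map is rescaled per frame, the closest surface in any frame scores 1.0 even when it is far away, so thresholds on these scores still need tuning against real scenes.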

Materials

- Raspberry Pi 5

- RC Car Kit

- Battery Pack

- Motor Driver

- Batteries

- Wires

- Multimeter

Challenges we ran into

One of the main challenges was running multiple AI models simultaneously on limited hardware while maintaining real-time performance. We had to carefully tune resolution, frame rate, and inference frequency to keep the system responsive. Another challenge was working with relative depth instead of true metric depth, which required calibration and tuning to ensure reliable obstacle avoidance.
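The resolution and inference-frequency trade-off can be sketched as follows: downscale each frame, run each model only every few frames, and reuse the cached result in between. The `Throttled` helper, the `run` loop, and the cadence values (detection every 2 frames, depth every 5) are assumptions for illustration, not the settings we shipped.

```python
class Throttled:
    """Run an expensive function every `every` frames and cache its result."""

    def __init__(self, fn, every):
        if every < 1:
            raise ValueError("every must be >= 1")
        self.fn = fn
        self.every = every
        self.last = None
        self.calls = 0  # how many times fn actually ran

    def __call__(self, frame_index, frame):
        # Always run on the first call so there is a result to reuse.
        if self.calls == 0 or frame_index % self.every == 0:
            self.last = self.fn(frame)
            self.calls += 1
        return self.last


def downscale(frame, factor):
    """Keep every `factor`-th pixel in both directions (nearest neighbour)."""
    return [row[::factor] for row in frame[::factor]]


def run(frames, detect, estimate_depth, detect_every=2, depth_every=5, factor=2):
    """Process frames with throttled models; returns results and call counts."""
    detector = Throttled(detect, detect_every)
    depth = Throttled(estimate_depth, depth_every)
    results = []
    for i, frame in enumerate(frames):
        small = downscale(frame, factor)
        results.append((detector(i, small), depth(i, small)))
    return results, detector.calls, depth.calls
```

Here `detect` and `estimate_depth` stand in for the two model calls. Running depth less often than detection is one plausible split, on the assumption that walls change position more slowly in the frame than people and vehicles do.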

Another major obstacle was a defective battery holder. The springs weren't conductive, so we had to disassemble our RC car and build a DIY battery holder. The battery holder was crucial to powering the car's motor and motor drivers.

Accomplishments that we're proud of

We are proud that Ralph runs entirely on device using a single standard webcam, without any specialized depth sensors. Successfully integrating YOLOv8 Nano and MiDaS together in real time on a Raspberry Pi was a major technical achievement.

We were able to DIY a battery holder using lots of tape and two straws from the bubble tea offered in the basement, dried with toilet paper. We cut a slit along both straws so the batteries could slide in and sit in a tight container. We then applied lots of tape to keep enough pressure on the batteries to maintain the circuit.

What we learned

Through this project, we learned how to optimize deep learning models for edge devices and how to balance accuracy with performance. We gained hands-on experience with real-time computer vision, sensor fusion, and robotics control logic. We also learned the importance of simplifying systems and focusing on reliability when building under tight deadlines. Working through the hardware problems, we learned to improvise components such as the battery holder from the materials available to us.

What's next for Ralph

Next, we want to improve Ralph’s navigation by adding smoother path planning and more robust obstacle avoidance. We plan to explore additional safety fallbacks, such as distance sensors around the robot, to supplement vision. We also aim to improve performance through model optimization and acceleration on stronger hardware. Long term, we hope to expand Ralph into a more fully featured assistive navigation platform that can adapt to a wider range of environments.
