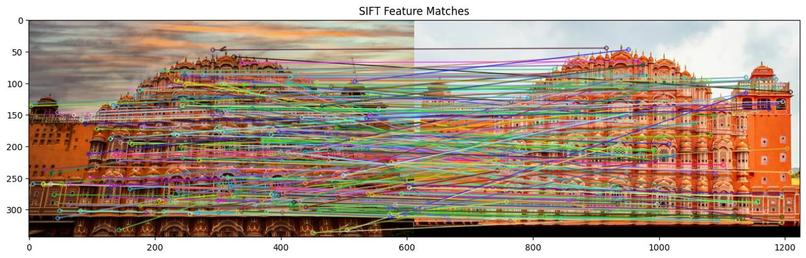

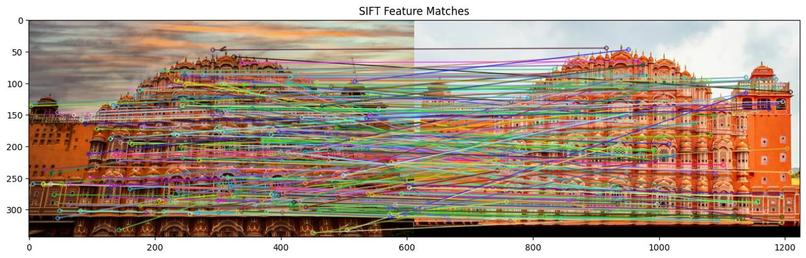

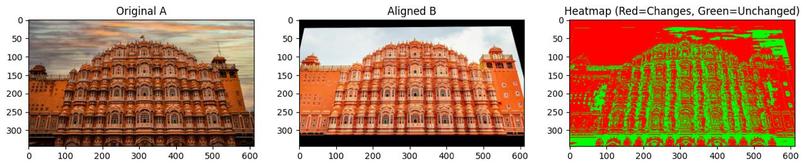

SIFT used for feature extraction; features matched using a brute-force matcher with Lowe's Ratio Test, with RANSAC filtering outlier matches

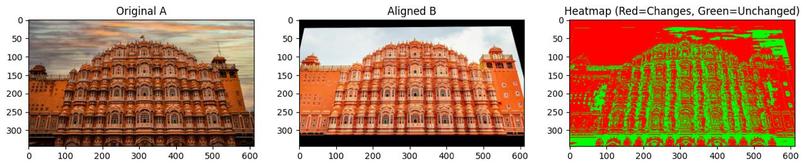

Image aligned using RANSAC-based homography estimation

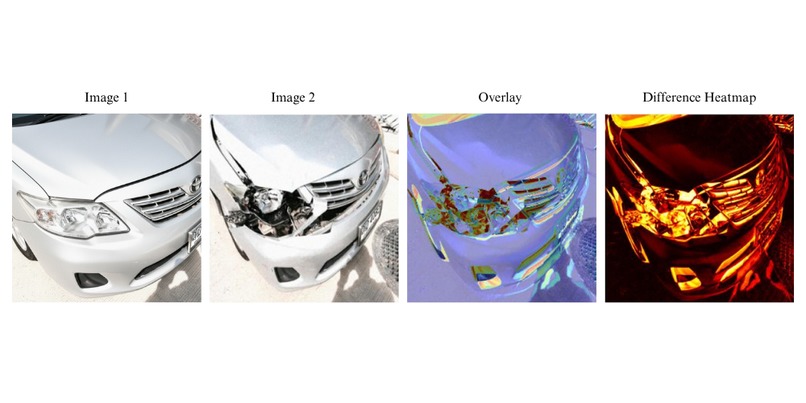

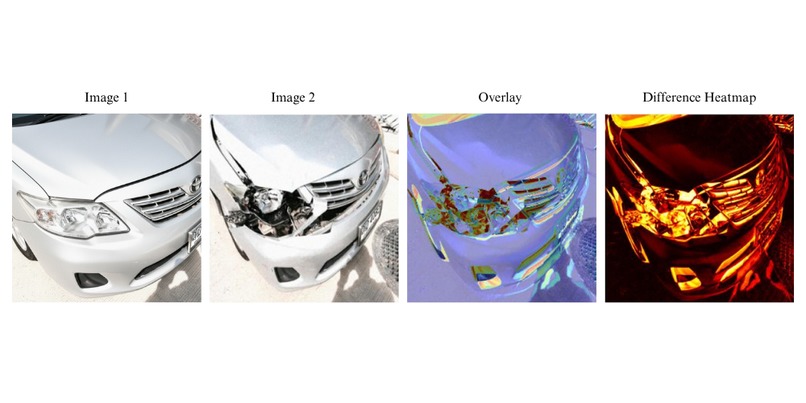

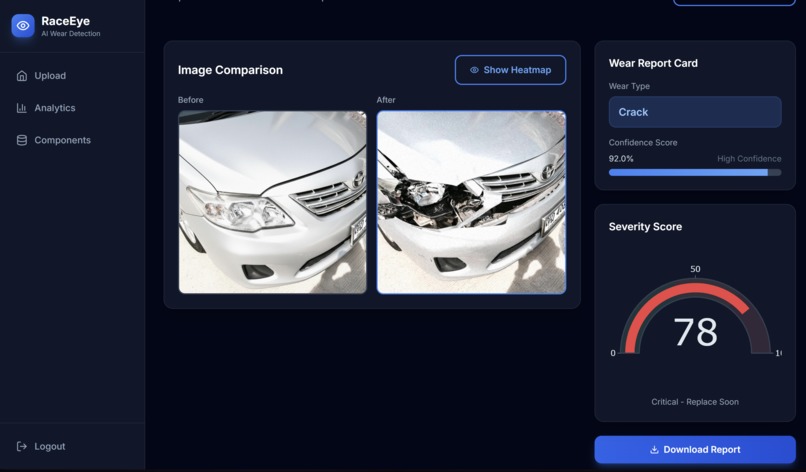

Heatmap generation using absolute pixel differences, and threshold mapping using a pre- and post-image overlay

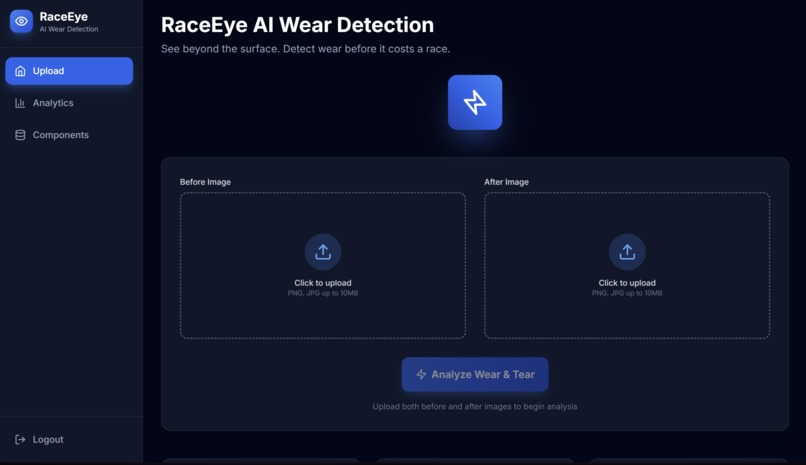

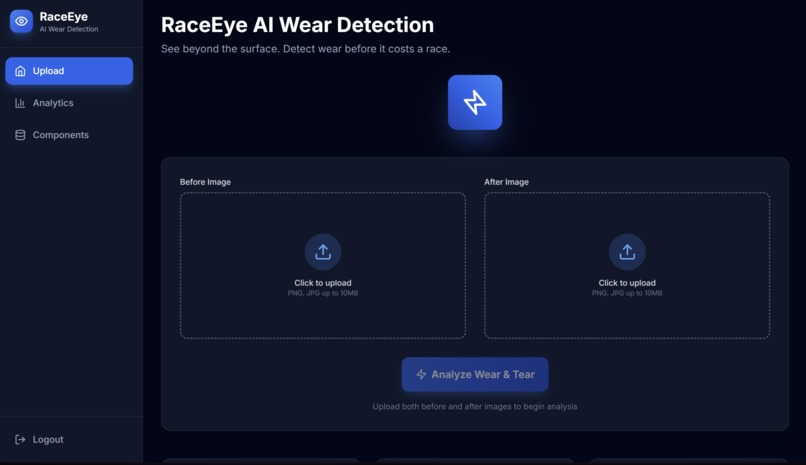

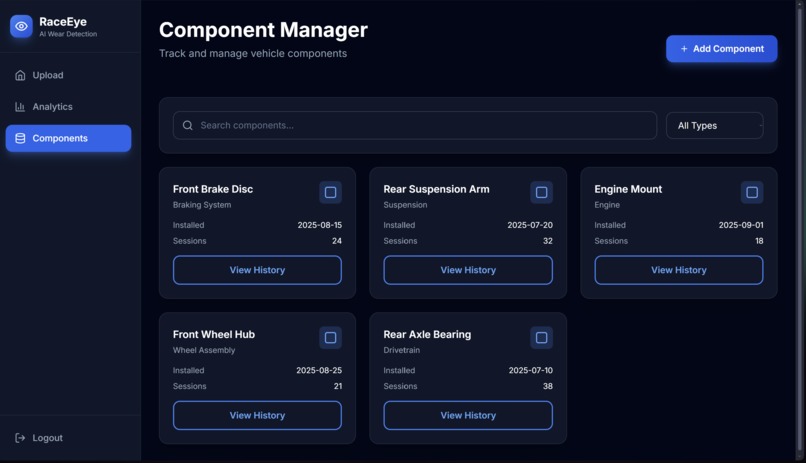

Dashboard for inputting images

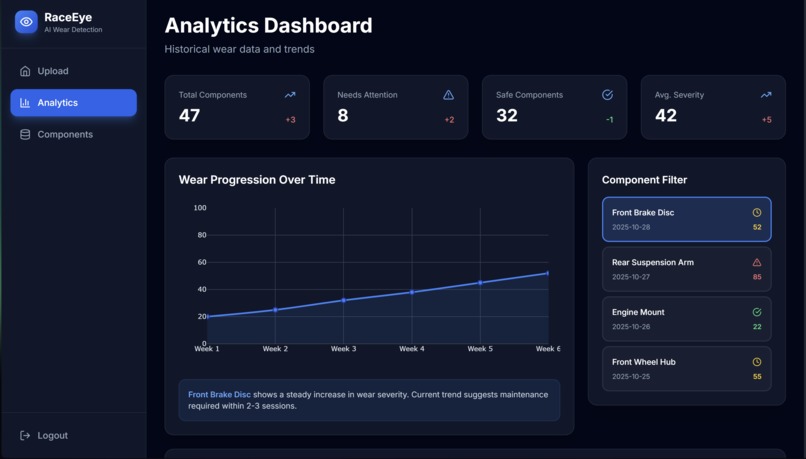

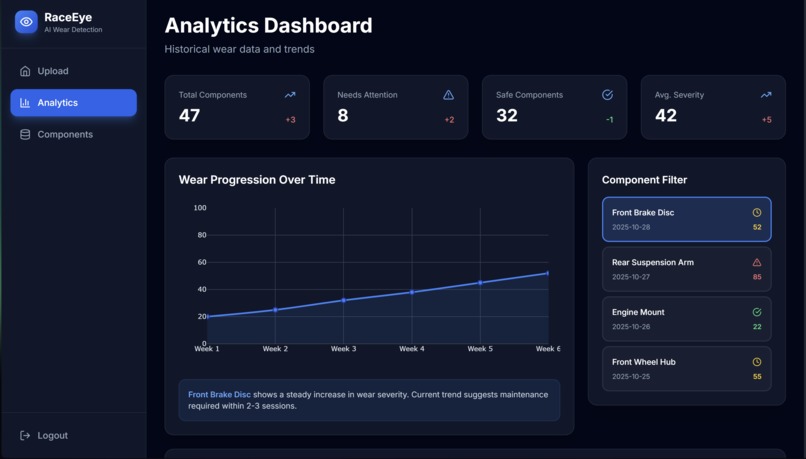

Complete analysis of past breakdowns

Severity Report of past breakdowns

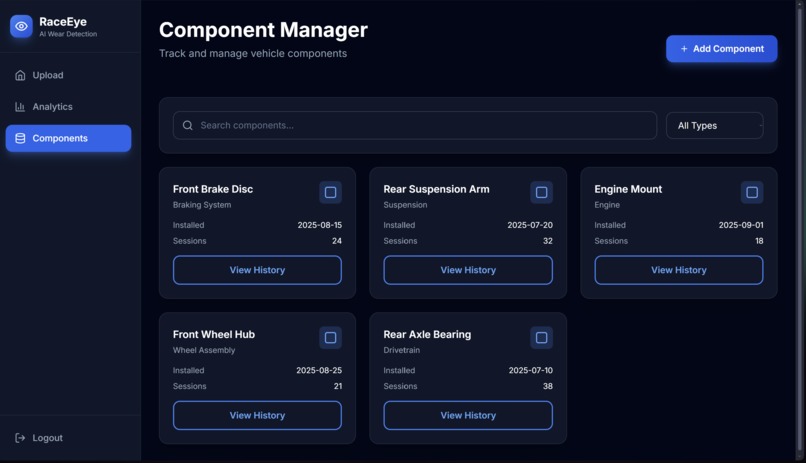

Maintained history of stored component

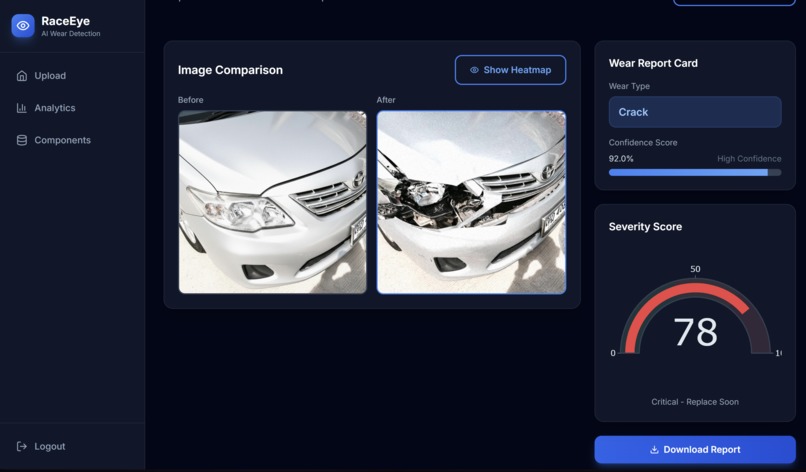

Report generation, reporting confidence score and severity

Inspiration

We wanted to create a system that could compare two images and detect subtle differences between them, which is extremely useful in areas like quality inspection, forgery detection, image registration, and computer-vision-based change tracking.

The goal was not just to compute pixel-level differences but to understand structural, geometric, and perceptual variations between two similar images.

What it does

Our solution takes in two input images and performs:

- SIFT-based keypoint detection to find unique visual features.

- RANSAC-based homography estimation to align both images, handling translation, rotation, and scale changes.

- Background removal (via rembg) to eliminate irrelevant regions before comparison.

- Feature matching visualization with confidence lines drawn between keypoints.

- Difference heatmap generation that highlights only meaningful differences after alignment.

- Quantitative metrics, including:

  - Structural Similarity Index (SSIM)

  - Mean Squared Error (MSE)

  - Feature match count and confidence

The output consists of:

- `imgA` → Original image

- `alignedB` → Aligned target image

- `heatmap_img` → Difference heatmap

- `matches_img` → Feature matching visualization

- `similarity_score`, `mse`, and `confidence_score` as numeric metrics
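The numeric metrics listed above could be computed roughly as follows. This is a minimal sketch, not the project's actual code: the helper names `mse` and `match_confidence` are hypothetical, and defining confidence as the fraction of ratio-test matches that survive RANSAC is an assumption (the write-up only says a match ratio is used as a proxy). SSIM is left out of the sketch; in scikit-image it is available as `skimage.metrics.structural_similarity`.

```python
import numpy as np


def mse(img_a: np.ndarray, img_b: np.ndarray) -> float:
    """Mean squared error between two images of identical shape."""
    if img_a.shape != img_b.shape:
        raise ValueError("images must have the same shape; align and resize first")
    # Cast to float first: subtracting uint8 arrays directly would wrap around.
    diff = img_a.astype(np.float64) - img_b.astype(np.float64)
    return float(np.mean(diff ** 2))


def match_confidence(num_inliers: int, num_good_matches: int) -> float:
    """Hypothetical confidence: share of ratio-test matches kept as RANSAC inliers."""
    if num_good_matches == 0:
        return 0.0
    return num_inliers / num_good_matches


a = np.zeros((4, 4), dtype=np.uint8)
b = a.copy()
b[0, 0] = 4  # a single pixel differs by 4 intensity levels

print(mse(a, b))                 # 4**2 spread over 16 pixels -> 1.0
print(match_confidence(45, 60))  # 0.75
```

An MSE of 0 means the aligned images are pixel-identical; unlike SSIM, it has no fixed upper bound, so it is best read relative to other comparisons made with the same image size and bit depth.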

How we built it

We used a multi-stage computer vision pipeline built in Python with OpenCV, NumPy, scikit-image, and rembg.

Steps involved:

- Preprocessing: Both images are resized and normalized.

- Feature Extraction: Used SIFT (Scale-Invariant Feature Transform) to detect local descriptors that are invariant to scale and orientation and robust to moderate illumination changes.

- Feature Matching: Used a FLANN (Fast Library for Approximate Nearest Neighbors) matcher to find the best correspondences between keypoints, then applied Lowe’s Ratio Test to remove ambiguous matches.

- Homography Estimation: Computed a 3×3 transformation matrix using RANSAC (Random Sample Consensus) to eliminate outliers and align the second image with the first.

- Background Removal: Applied rembg (U²-Net) to strip backgrounds before alignment for cleaner comparison.

- Difference Heatmap: Computed pixel-wise differences and visualized them with a colormap for interpretability.

- Metric Computation: SSIM for perceptual similarity, MSE for pixel-level deviation, and match ratio as a proxy for confidence.

Challenges we ran into

- Handling scale and rotation variations between images during alignment.

- Managing edge mismatches after background removal.

- Ensuring the heatmap wasn’t too sensitive to lighting changes or shadows.

- Debugging OpenCV’s findHomography failures when keypoints were insufficient.
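For the heatmap sensitivity issue listed above, one common mitigation is to cancel the global brightness offset between the two aligned images and then threshold the absolute difference. The sketch below illustrates that idea only; it is not necessarily what the project does. The function name `difference_mask`, the median-based brightness correction, and the threshold of 30 are all assumptions.

```python
import numpy as np


def difference_mask(img_a, img_b, threshold=30):
    """Return (abs_diff, mask) for two aligned grayscale images of equal shape."""
    a = img_a.astype(np.float64)
    b = img_b.astype(np.float64)
    # Shift B so both images share the same median brightness. The median is
    # used because a small changed region barely moves it, unlike the mean.
    b = b - (np.median(b) - np.median(a))
    diff = np.abs(a - b)
    # Only differences above the threshold count as real changes.
    mask = diff > threshold
    return diff, mask


pre = np.full((6, 6), 100, dtype=np.uint8)
post = pre + 10          # whole image is uniformly brighter by 10 levels
post[2:4, 2:4] = 250     # plus a genuine 2x2 local change

diff, mask = difference_mask(pre, post)
print(int(mask.sum()))   # 4: only the 2x2 patch is flagged
```

A global offset correction like this handles uniform exposure changes only; local shadows would still show up and would need something stronger, such as blurring before differencing or comparing local structure instead of raw intensity.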

Accomplishments that we're proud of

- Successfully combined SIFT + RANSAC + rembg in a single robust pipeline.

- Created both qualitative (visual) and quantitative (metric-based) deliverables.

- Made the program modular so that it can easily plug into a Flask or React web interface.

- Achieved near-perfect alignment and high-confidence similarity scoring even for noisy inputs.

What we learned

- The importance of feature-based registration over pixel-based comparison.

- How RANSAC dramatically improves robustness by filtering noisy matches.

- How to compute and interpret SSIM, MSE, and confidence metrics for real-world vision tasks.

- Best practices in OpenCV pipeline structuring for production-grade image comparison tools.
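To make the RANSAC point above concrete, here is a toy NumPy sketch that estimates a pure 2D translation from point matches, 20% of which are wrong. It is a deliberate simplification: the pipeline itself estimates a full homography, and the function name `ransac_translation`, the 2-pixel inlier threshold, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np


def ransac_translation(src, dst, threshold=2.0, iterations=100, seed=0):
    """Estimate a translation t with dst ~= src + t, ignoring outlier matches."""
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(src), dtype=bool)
    for _ in range(iterations):
        # A translation is fully determined by a single match.
        i = rng.integers(len(src))
        t = dst[i] - src[i]
        # Count how many other matches agree with this hypothesis.
        residuals = np.linalg.norm(src + t - dst, axis=1)
        inliers = residuals < threshold
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    # Refit using every inlier of the best hypothesis.
    t = (dst[best_inliers] - src[best_inliers]).mean(axis=0)
    return t, best_inliers


rng = np.random.default_rng(1)
src = rng.uniform(0, 100, size=(50, 2))
dst = src + np.array([5.0, -3.0])              # true shift is (5, -3)
dst[:10] = rng.uniform(0, 100, size=(10, 2))   # corrupt 10 of the 50 matches

naive_t = (dst - src).mean(axis=0)             # averages in the bad matches
robust_t, inliers = ransac_translation(src, dst)
print("naive:", naive_t)
print("ransac:", robust_t, "inliers:", int(inliers.sum()))
```

The naive average is pulled away from the true shift by the corrupted matches, while the RANSAC estimate is fitted only to matches that agree with each other.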

What's next for Untitled

We plan to:

- Integrate this pipeline into a web dashboard for drag-and-drop image comparison.

- Add deep feature extraction using VGG/ResNet embeddings for semantic-level difference detection.

- Implement auto-report generation (PDF with metrics + visuals).

- Extend to video frame comparison for surveillance and defect detection use cases.
