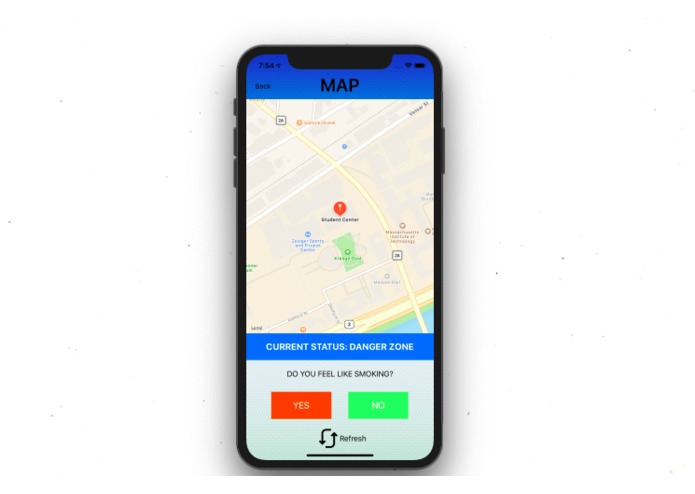

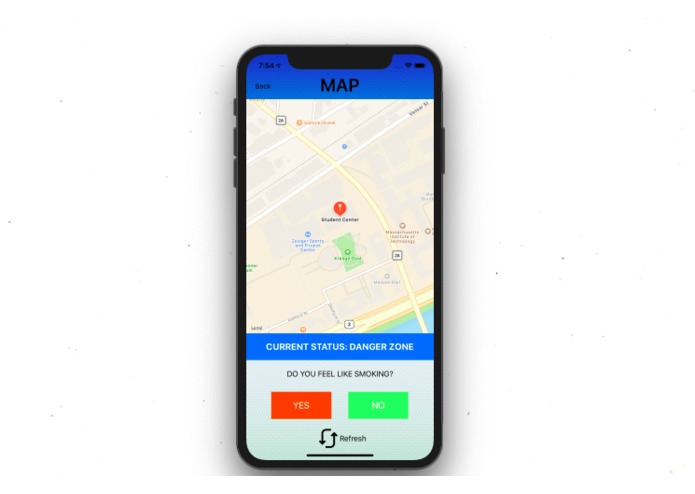

Map interface for danger zone detection. Upon detection, the user indicates whether or not they feel inclined to smoke at that moment.

-

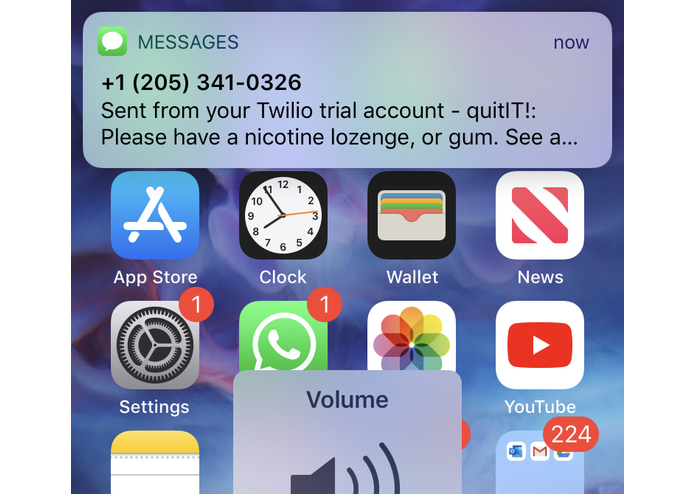

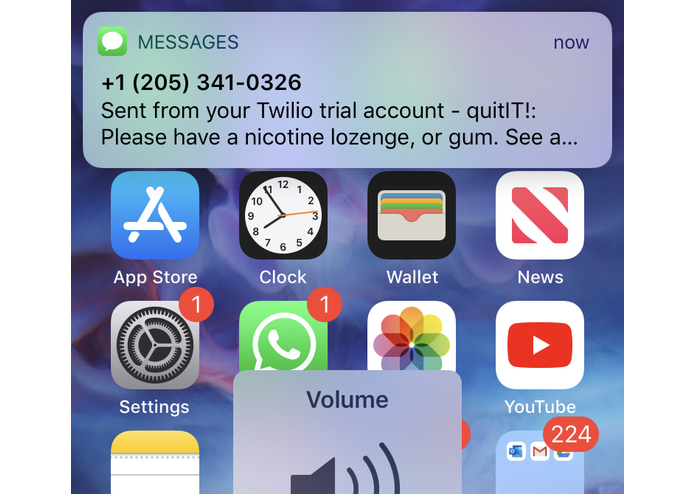

The user receives an SMS alert when they have entered a danger zone (detected area where they are likely to smoke).

-

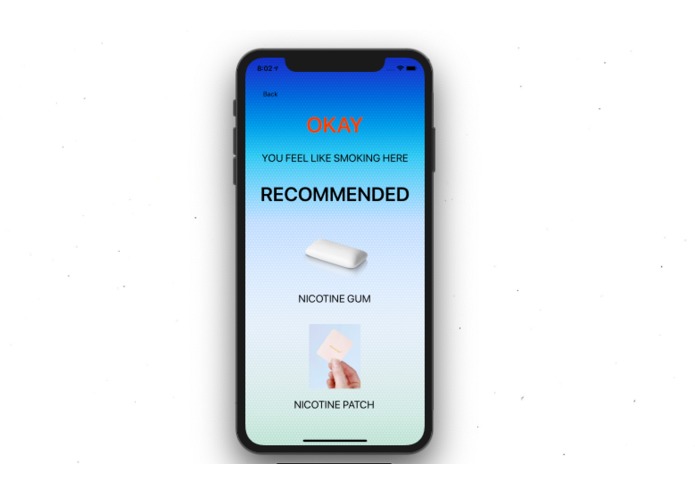

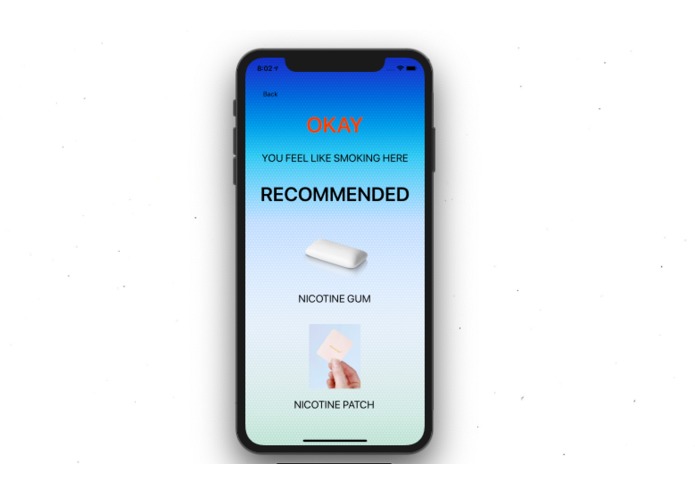

When the user selects "Yes" (they want to smoke), they are presented with recommendations (alternatives to smoking that involve nicotine).

-

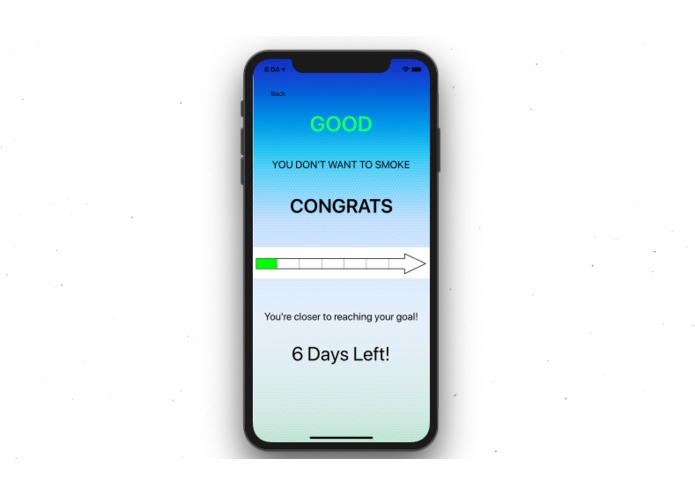

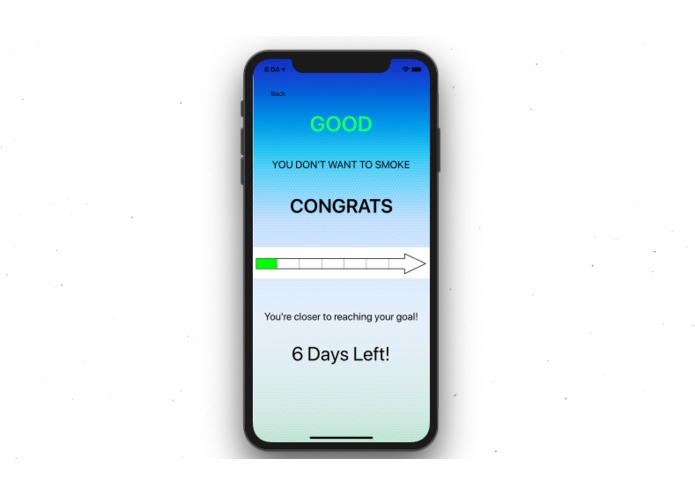

When the user selects "No" (they don't want to smoke), they are congratulated and notified of their weekly progress.

-

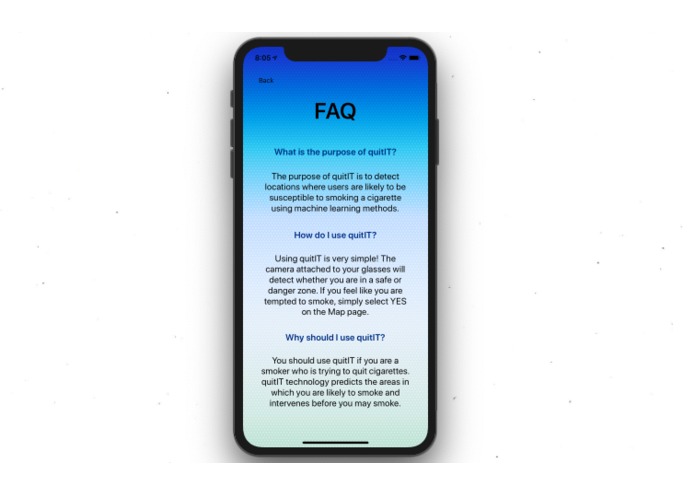

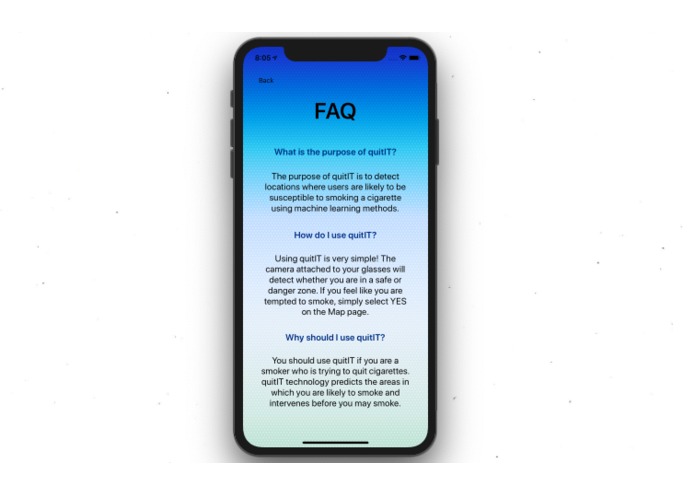

The app includes a Frequently Asked Questions section that explains its purpose to users.

-

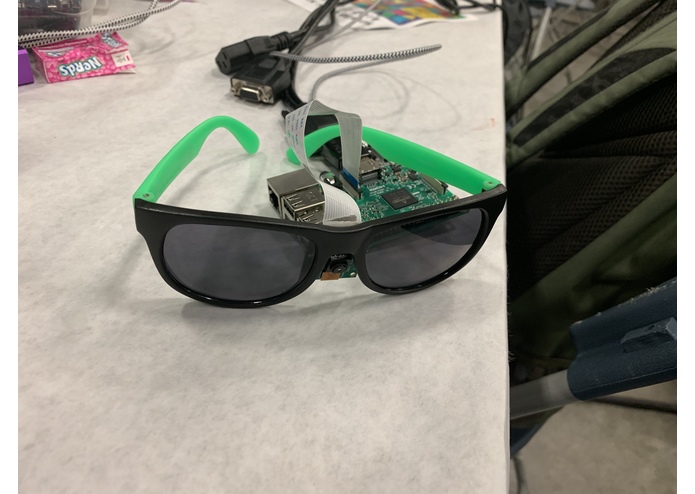

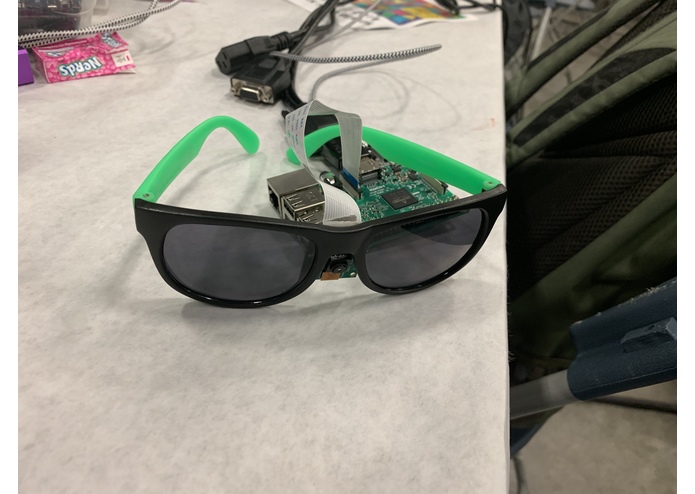

Pi Camera attached to glasses to approximate the wearer's field of vision.

-

Home page for the mobile application. Provides access to the Map and FAQ views.

Inspiration

Google Glass was, and always will be, one of the most magical advancements in wearable, intelligent technology. With recent advances in mobile processing power and computer vision techniques, the team set out to combine ideas of the past with technologies of the present to solve complex, yet critical, problems in healthcare and lifestyle.

Introduction

Our solution, named "QuitIT!", aims to help smokers quit by preventing relapse through environment-based risk prediction. Cigarette smoking results in the deaths of 500,000 people in the US each year, yet even the best smoking cessation interventions, which only a small percentage of smokers take advantage of, achieve long-term (6-month) abstinence rates below 20%. We rely heavily on the concept of just-in-time adaptive interventions (JITAIs), which aim to provide the right type and amount of support at the right time by adapting to an individual's changing internal and contextual state.

What it does

Our device attaches to the user's head, either clipped onto sunglasses or placed inside a hat, to provide an accurate stream of the user's point of view (POV). An image is taken every 10 minutes and classified as a smoking area or a non-smoking area by a deep learning framework deployed on the cloud (IBM Watson Health). Classifications are sent to a backend server, where they are stored securely and temporarily. Heuristics on the server, derived from a literature analysis, determine whether the user is notified. The user receives an SMS when they enter an area likely to elicit a smoking craving. The SMS prompts the user to employ an appropriate cessation intervention, such as chewing nicotine gum or applying a nicotine patch.
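The write-up does not spell out the server-side heuristics, so the following is only a minimal sketch of what such a notification rule could look like. It assumes the classifier exposes a confidence score and that alerts are rate-limited; the names `should_notify`, `SMOKING_SCORE_THRESHOLD`, and `ALERT_COOLDOWN`, along with their values, are hypothetical and not taken from the project.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical values: the actual heuristics are not stated in the write-up.
SMOKING_SCORE_THRESHOLD = 0.7           # minimum confidence to treat a scene as a smoking area
ALERT_COOLDOWN = timedelta(minutes=30)  # minimum gap between two SMS alerts


@dataclass
class Classification:
    taken_at: datetime    # when the image was captured
    smoking_score: float  # assumed classifier confidence in [0, 1]


def should_notify(result: Classification, last_alert_at: Optional[datetime]) -> bool:
    """Return True if this classification should trigger an SMS alert."""
    if result.smoking_score < SMOKING_SCORE_THRESHOLD:
        return False  # scene does not look like a smoking area
    if last_alert_at is not None and result.taken_at - last_alert_at < ALERT_COOLDOWN:
        return False  # the user was alerted recently; avoid repeated messages
    return True
```

A cooldown of this kind is one way to keep a just-in-time intervention from turning into a stream of repeated messages while the user stays in the same place.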

The user can then check their activity and progress in a simple mobile application. Weekly goals motivate users to reduce their reliance on nicotine and smoking. Because our backend records the geographical location of each user's encounters with smoking environments and displays them in the map view, those encounters can also serve to warn other users. Critically, the accumulation of user data across regions can help define smoking areas for non-smokers as well. Non-smoking users such as pregnant women and asthma patients could benefit from our solution by minimizing adverse outcomes due to passive smoking. Lastly, our solution provides a mechanism for physicians and psychiatrists to access data for monitoring user activity. This mechanism, however, depends entirely on the consent of the user, in order to maintain privacy compliance.
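As an illustration of how geolocated detections from many users might be accumulated into shared smoking areas, here is a minimal sketch that buckets coordinates into a fixed grid. The grid size, the detection threshold, and the function names are assumptions made for this example, not details of the actual backend.

```python
import math
from collections import Counter
from typing import Iterable, List, Tuple

# Hypothetical parameters, not taken from the project.
CELL_SIZE_DEG = 0.001  # roughly 100 m of latitude per grid cell
MIN_DETECTIONS = 3     # detections required before a cell counts as a danger zone

Cell = Tuple[int, int]


def to_cell(lat: float, lon: float) -> Cell:
    """Map a coordinate to the integer index of the grid cell containing it."""
    return (math.floor(lat / CELL_SIZE_DEG), math.floor(lon / CELL_SIZE_DEG))


def danger_zones(detections: Iterable[Tuple[float, float]]) -> List[Cell]:
    """Return the grid cells with at least MIN_DETECTIONS smoking-area detections."""
    counts = Counter(to_cell(lat, lon) for lat, lon in detections)
    return sorted(cell for cell, n in counts.items() if n >= MIN_DETECTIONS)
```

Requiring several detections per cell is a simple guard against a single misclassified image marking a location as a smoking area.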

How we built it

We used a Raspberry Pi Model B with its companion Pi Camera v2 to capture images and communicate with the cloud and backend components. Bash scripts captured images at a constant rate (about 1 image every 10 minutes) and communicated with our deep learning classification algorithm, implemented using IBM's Visual Recognition service. The algorithm outputs a binary variable and was trained on ~350 images, half of smoking areas and half of non-smoking areas. The images were scraped from Google Image Search using a Python package. Image queries were based on objects and scenes found to be associated with smoking and non-smoking in recent literature (see below).
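The capture step described above was written in Bash; the sketch below restates the same loop in Python purely for illustration. The camera, the classifier call, and the upload are passed in as plain functions because their real interfaces are not shown in this write-up, so `capture`, `classify`, and `publish` are hypothetical placeholders.

```python
import time
from typing import Callable

CAPTURE_INTERVAL_SECONDS = 10 * 60  # about one image every 10 minutes


def run_capture_loop(
    capture: Callable[[], bytes],          # placeholder: grabs one camera frame
    classify: Callable[[bytes], bool],     # placeholder: True if the scene is a smoking area
    publish: Callable[[bool], None],       # placeholder: sends the result to the backend
    iterations: int,
    sleep: Callable[[float], None] = time.sleep,
    interval: float = CAPTURE_INTERVAL_SECONDS,
) -> None:
    """Capture, classify, and publish one image per interval."""
    for i in range(iterations):
        image = capture()
        is_smoking_area = classify(image)
        publish(is_smoking_area)
        if i < iterations - 1:
            sleep(interval)  # wait before taking the next image
```

Injecting `sleep` makes the loop easy to exercise without waiting ten minutes between iterations, for example by passing a function that only records the requested delay.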

Classification results were returned to the Pi and then passed directly to a Firebase database. A request is then sent to the user's mobile device, which obtains geolocation coordinates and updates the app if the user is in a smoking area. The iOS app was written in Swift; it shows a map of smoking areas and motivates the user to meet weekly milestones and goals. The app also provides recommendations if the user is tempted to smoke at their location (chewing nicotine gum or applying nicotine patches).

Challenges we ran into

None of us really had any backend development experience. We initially chose a different backend solution and spent quite a bit of time trying to resolve issues with it before switching to Firebase. Choosing a mobile OS was also challenging because only half of our team had access to macOS. Regardless, we assigned tasks accordingly and overcame our hurdles.

Accomplishments that we're proud of

We managed to create a novel end-to-end, mobile, machine learning framework for healthcare in less than 24 hours. Our team members all came with different experiences, interests, and expertise, but together managed to address every part of our project. This is especially significant given the diverse composition of our project, which spanned deep learning, hardware, camera sensing, backend development, mobile development, and clinical data science.

What we learned

During the first three quarters of our project, our team had doubts about whether we would have a final product. Our ambitious project had so many components that it was often difficult to envision a finished product. Alongside learning about IBM's Visual Recognition platform and backend development, our team truly embraced the process of hacking by persevering through our setbacks.

What's next for QuitIT: Helping smokers quit with computer vision

Our team is very fond of our project, despite its lack of immediate practicality. The team would be open to continuing work, especially on the interesting analytics that could originate from this unique type of device. We believe that the device can only get better with more users and data, and we would be very interested in learning how to alter the existing configuration to allow for greater scalability.

Acknowledgements

The team is extremely grateful to the IBM support team present at HackMIT. They went above and beyond with their technical support and their patience with our frequent questions.

Our team leader, Abhi Jadhav, conducts research under the supervision of Drs. Matthew Engelhard and Joe McClernon, in the Department of Psychiatry & Behavioral Sciences at Duke University. We acknowledge Abhi's mentors for their invaluable guidance and mentoring during his summer research internship, which sparked Abhi's enthusiasm and passion for this specific project and field of research.

References

Engelhard MM, Oliver JA, Henao R, et al. Identifying Smoking Environments From Images of Daily Life With Deep Learning. JAMA Netw Open. 2019;2(8):e197939. Published online August 2, 2019. doi:10.1001/jamanetworkopen.2019.7939
