-

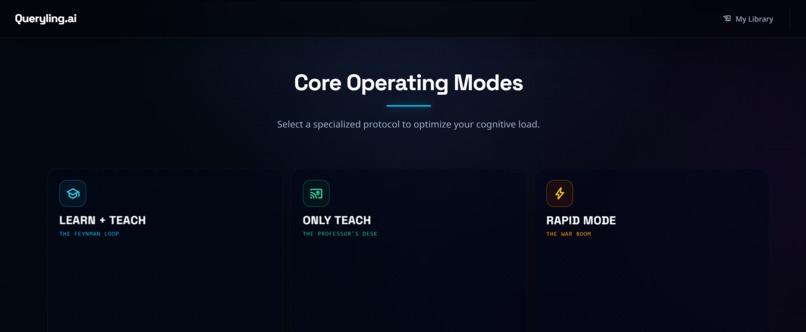

Home Page

-

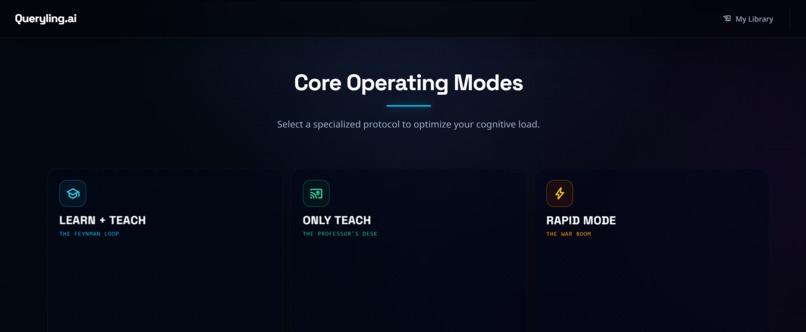

Learning Modes

-

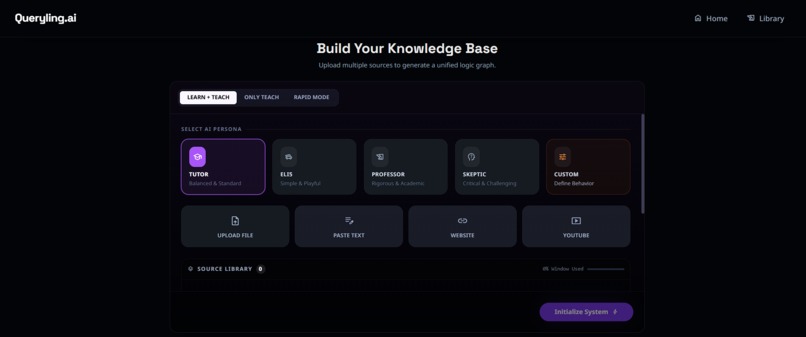

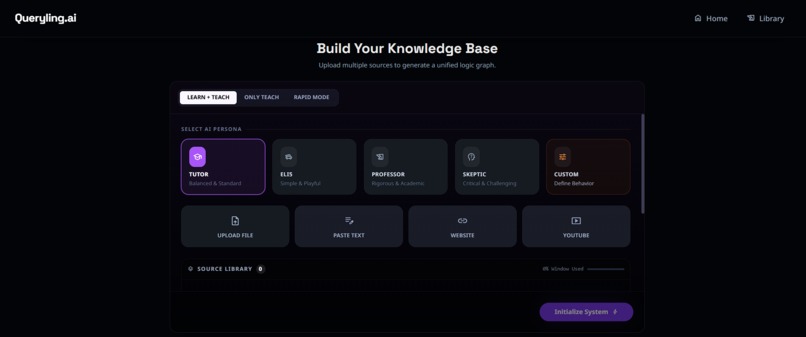

Study Material Upload

-

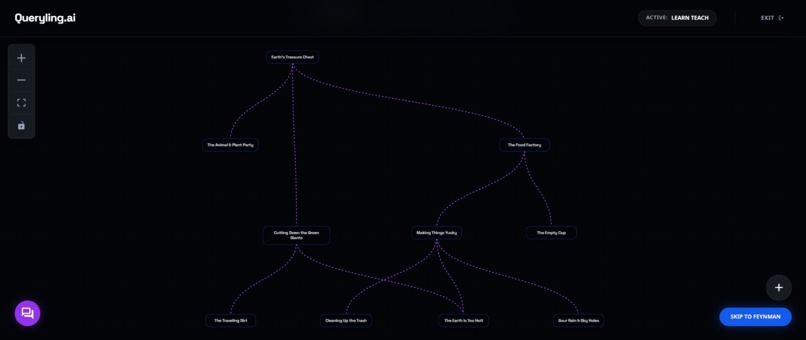

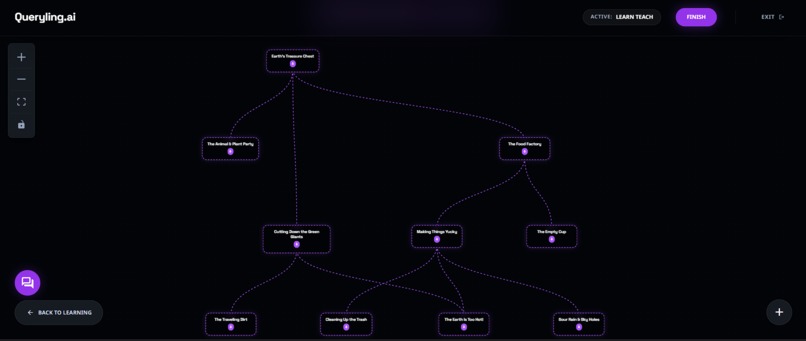

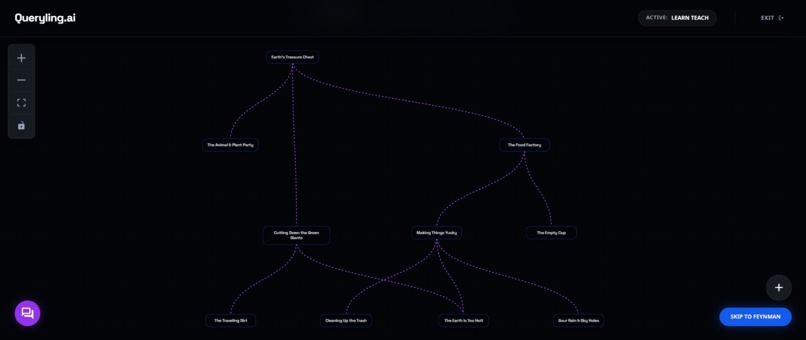

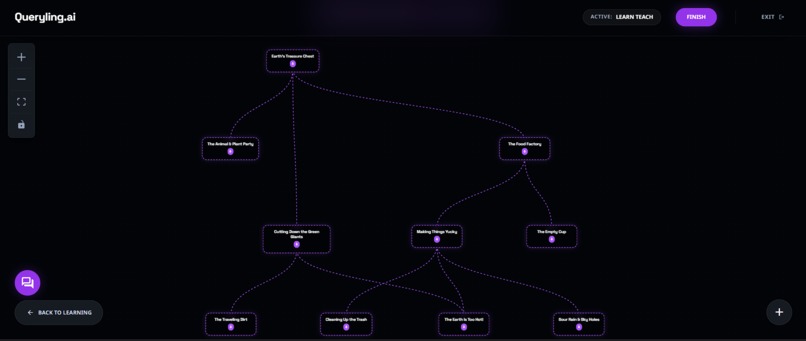

Flowchart generation using Gemini 3 Pro Preview

-

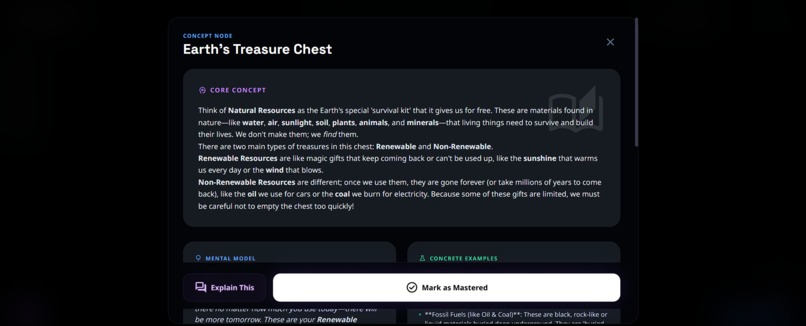

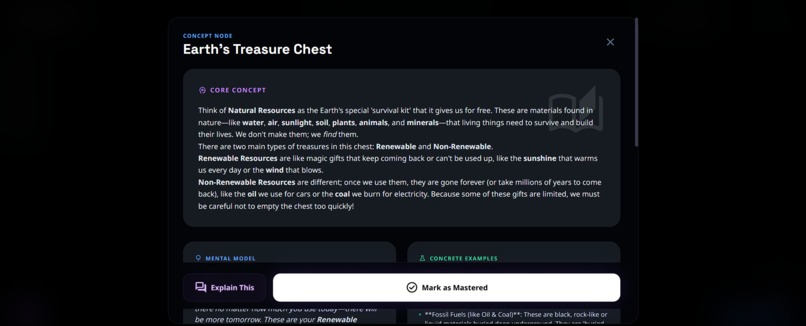

Explanation of the node using Gemini 3 Pro

-

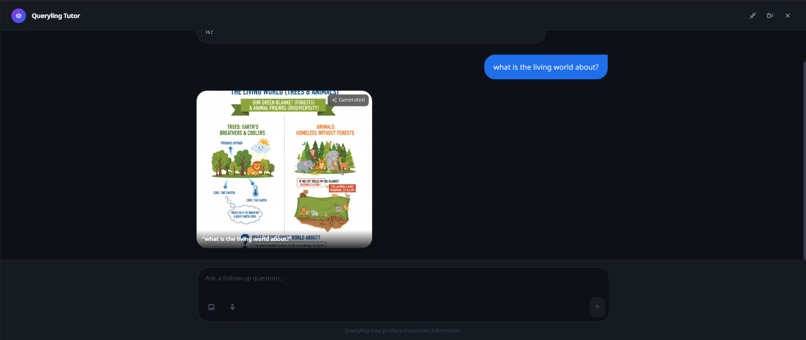

Image-based node explanation using Gemini 2.5 Flash (Nano Banana)

-

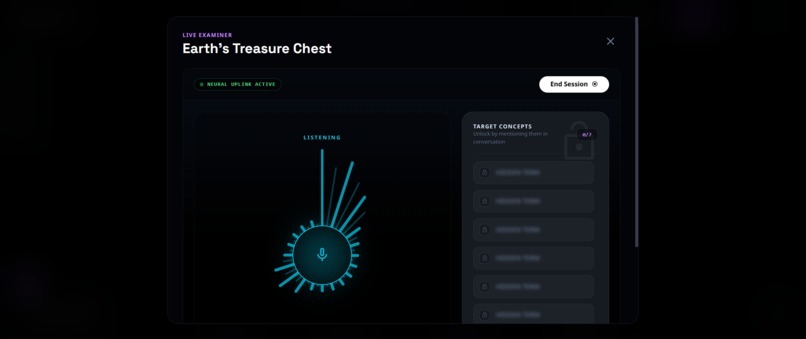

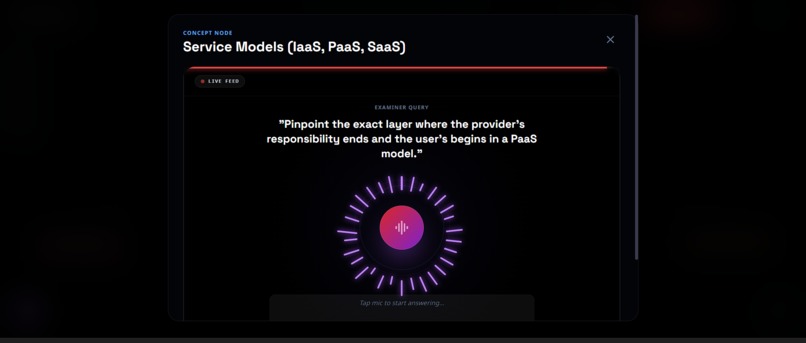

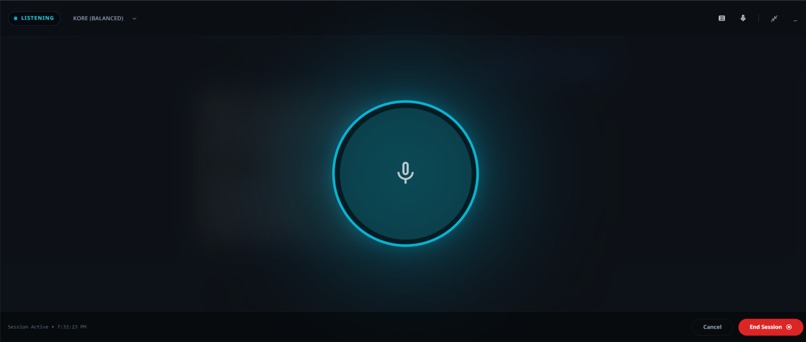

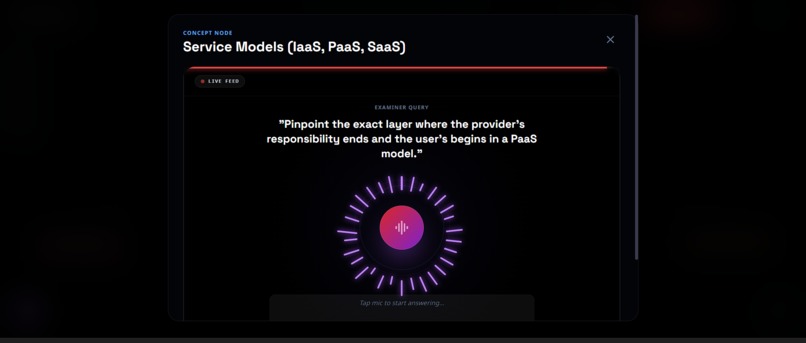

One-to-one duplex voice interaction with AI using the Gemini Multimodal Live API

-

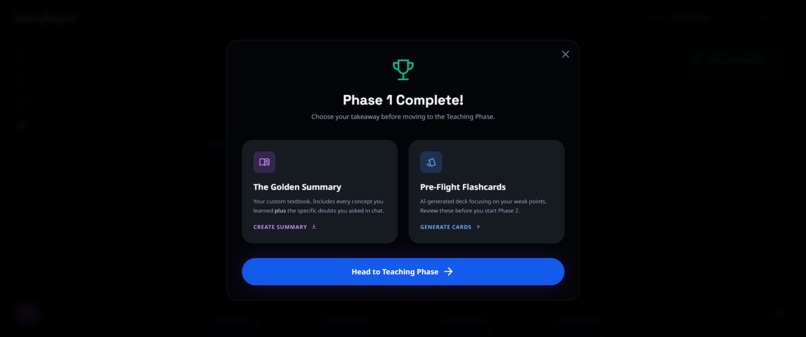

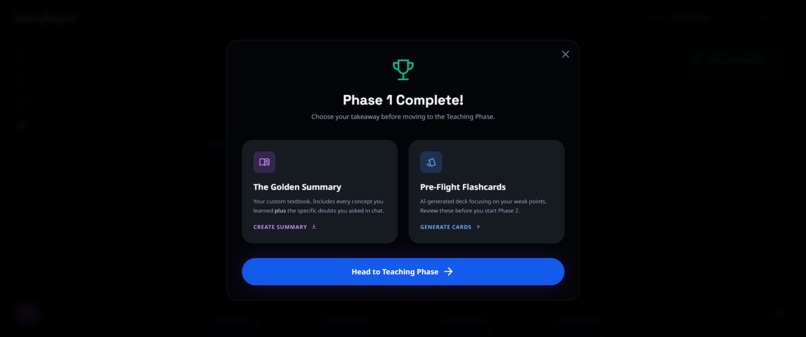

Learning Phase completed

-

Flashcard generation using Gemini 2.5 Flash

-

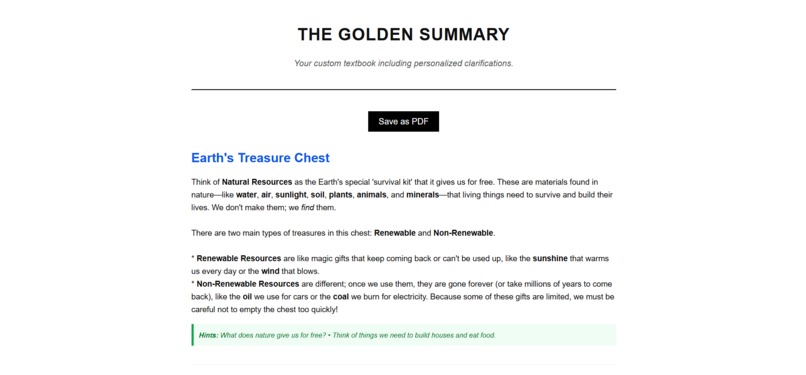

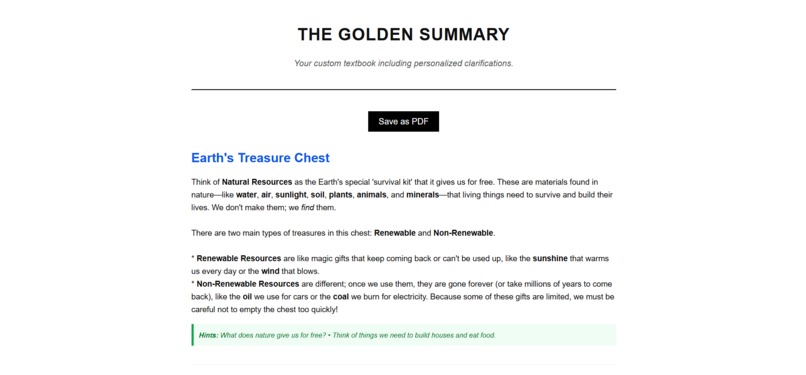

Golden summary PDF including all conversations and explanations of the topic

-

Teaching phase flowchart

-

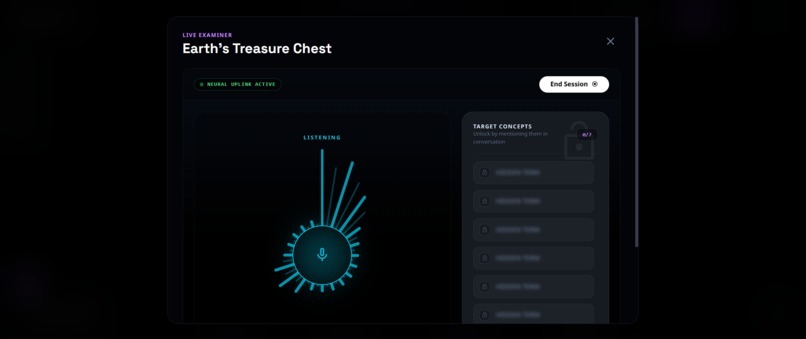

Live voice teaching window with locked keywords that unlock once they are explained, for a gamified experience

-

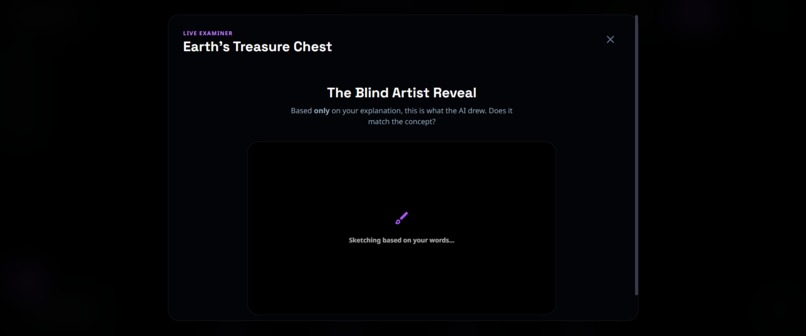

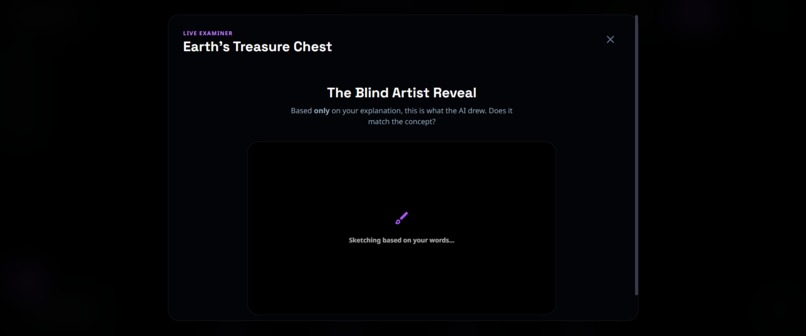

Image generation based on the user's explanation to the AI, using Gemini 2.5 Flash

-

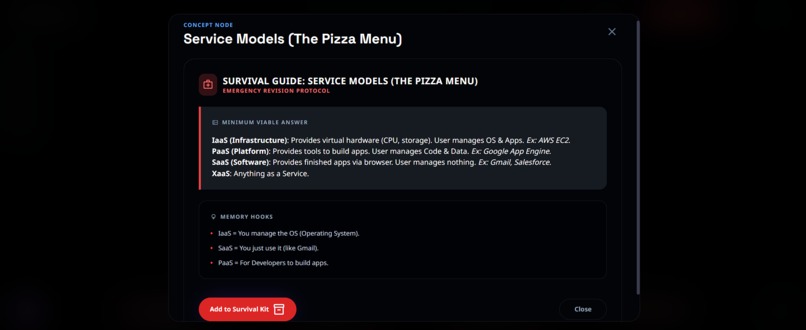

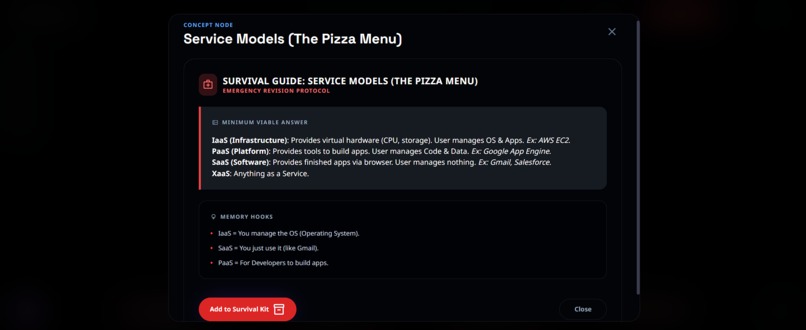

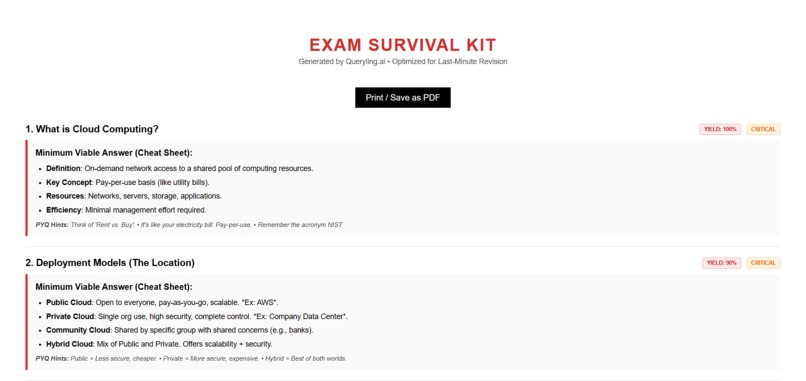

Survival mode for last-minute exam prep, featuring the most probable exam questions based on PYQs (previous-year questions), with bulleted answer points

-

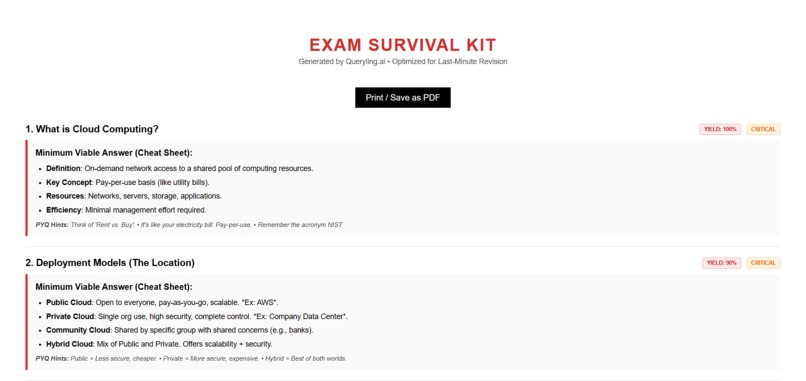

Survival kit PDF

-

Velocity mode for final exam prep using rapid-fire questions based on PYQs

-

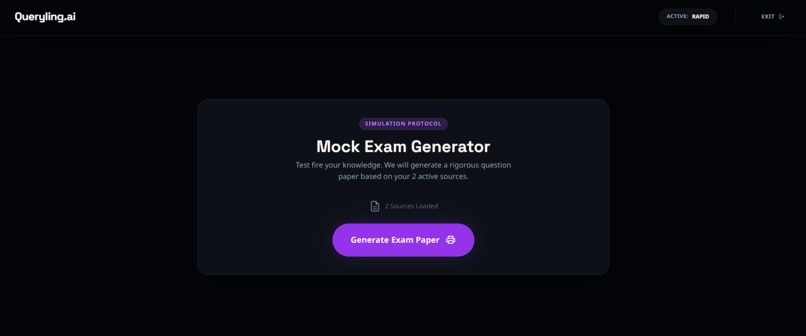

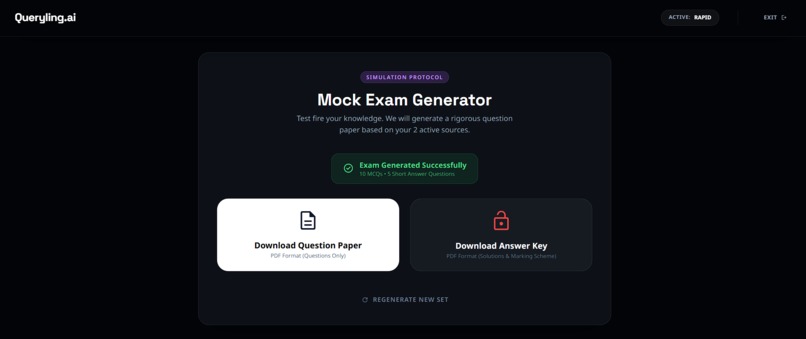

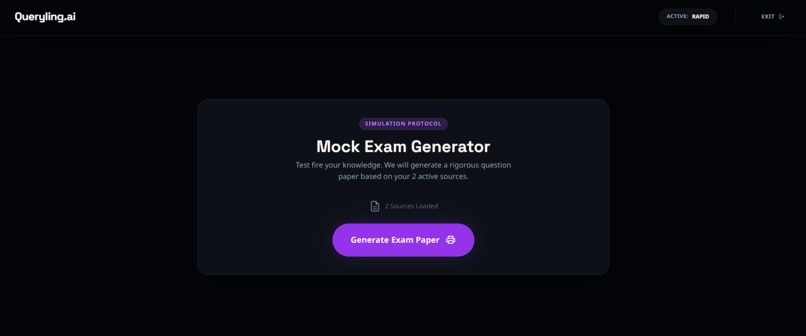

Mock exam generator based on PYQs

-

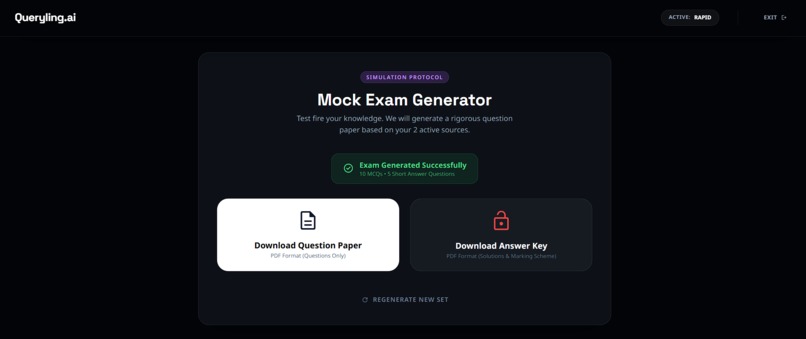

Mock exam paper with and without answer keys

-

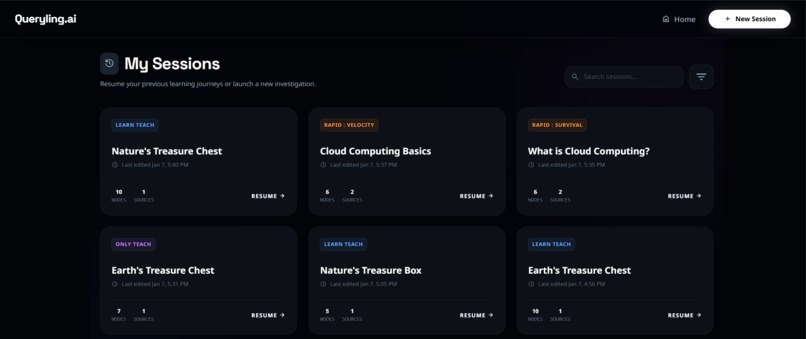

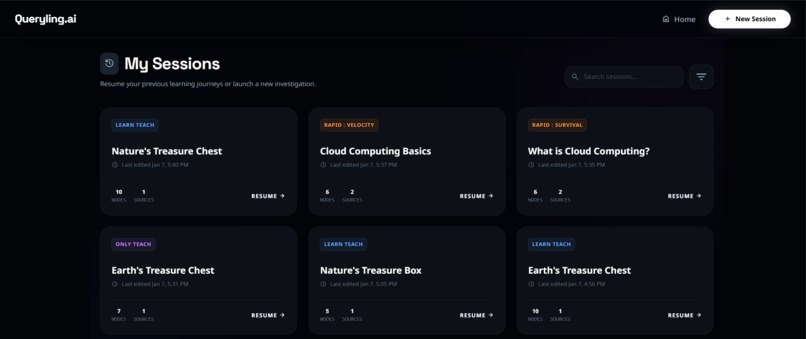

Session history

Queryling.ai — AI-Native Recursive Reasoning Engine

Queryling.ai is an AI-native learning and reasoning platform designed to move beyond traditional chat-based AI interactions. Instead of treating AI as a question–answer machine, the project enables users to structure their thinking using interactive logic graphs (Directed Acyclic Graphs) and then simulate, critique, and explain those reasoning chains using Gemini models.

The core philosophy is simple: AI should help users reason better, not just respond faster.

Inspiration

The inspiration for Queryling.ai came from two key ideas:

- Feynman-style learning: Real understanding emerges when ideas are explained step-by-step. Most AI tools optimize for answers, not understanding.

- Graph-based thinking: Many real-world problems—debugging systems, designing architectures, studying complex topics—naturally form dependency graphs rather than linear conversations.

The Gemini 3 Hackathon motivated us to explore whether modern multimodal AI models could actively participate in structured reasoning workflows instead of replacing them with opaque responses.

What It Does

Queryling.ai allows users to:

- Build logic graphs (DAGs) where each node represents a claim, question, or subproblem.

- Simulate reasoning over the graph using Gemini-powered explanations.

- Apply different personas (teacher, critic, examiner) to evaluate ideas from multiple perspectives.

- Interact using both text and real-time voice via Gemini Live preview models.

- Generate mock exams, explanations, and reasoning traces directly from the graph structure.

Rather than producing a single answer, the system exposes intermediate reasoning steps so users can understand why a conclusion was reached.
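As a rough illustration of the graph model described above, the following TypeScript sketch represents a logic graph and orders its nodes so that every dependency is visited before the node that relies on it. The type names (`LogicNode`, `LogicEdge`, `LogicGraph`) and the `topologicalOrder` helper are hypothetical and are not taken from the project's code; the ordering uses Kahn's algorithm, which also rejects graphs that contain a cycle.

```typescript
// Hypothetical node kinds, mirroring "claim, question, or subproblem" above.
type NodeKind = "claim" | "question" | "subproblem";

interface LogicNode {
  id: string;
  kind: NodeKind;
  text: string;
}

// An edge from `from` to `to` means that `to` depends on `from`.
interface LogicEdge {
  from: string;
  to: string;
}

interface LogicGraph {
  nodes: LogicNode[];
  edges: LogicEdge[];
}

// Kahn's algorithm: returns node ids in dependency order,
// or throws if the graph is not a DAG.
function topologicalOrder(graph: LogicGraph): string[] {
  const inDegree = new Map<string, number>();
  const children = new Map<string, string[]>();
  for (const node of graph.nodes) {
    inDegree.set(node.id, 0);
    children.set(node.id, []);
  }
  for (const edge of graph.edges) {
    if (!inDegree.has(edge.from) || !inDegree.has(edge.to)) {
      throw new Error(`Edge references unknown node: ${edge.from} -> ${edge.to}`);
    }
    children.get(edge.from)!.push(edge.to);
    inDegree.set(edge.to, inDegree.get(edge.to)! + 1);
  }

  // Start with nodes that have no unmet dependencies.
  const ready = graph.nodes
    .filter((n) => inDegree.get(n.id) === 0)
    .map((n) => n.id);
  const order: string[] = [];
  while (ready.length > 0) {
    const id = ready.shift()!;
    order.push(id);
    for (const child of children.get(id)!) {
      const remaining = inDegree.get(child)! - 1;
      inDegree.set(child, remaining);
      if (remaining === 0) ready.push(child);
    }
  }

  // Any node left unvisited sits on a cycle.
  if (order.length !== graph.nodes.length) {
    throw new Error("Graph contains a cycle and is not a DAG");
  }
  return order;
}
```

Walking the nodes in this order is one way a simulation step could explain each premise before the conclusions that depend on it.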

How We Built It

At a high level, Queryling.ai follows this flow:

- React frontend for graph editing, chat, and voice interaction

- Global state store to manage graph state, sessions, and UI flow

- Central AI service layer for prompt orchestration, personas, and context building

- Google Gemini models for text and voice-based reasoning

The frontend is built with React and React Flow to support interactive graph editing.

A centralized AI service layer constructs structured prompts by combining:

- System-level persona instructions

- Serialized graph context (nodes and dependencies)

- User intent (simulate, critique, explain)

This layered prompting approach makes AI behavior more predictable and controllable.
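The three layers listed above can be sketched roughly as follows. This is a minimal illustration, not the project's actual service layer: the persona wording in `PERSONA_INSTRUCTIONS`, the `GraphNode` shape, the serialization format, and the `buildPrompt` function are all assumptions made for the example, and no Gemini API call is shown.

```typescript
type Persona = "teacher" | "critic" | "examiner";
type Intent = "simulate" | "critique" | "explain";

// Hypothetical node shape: each node lists the ids it depends on.
interface GraphNode {
  id: string;
  text: string;
  dependsOn: string[];
}

interface LayeredPrompt {
  system: string; // persona layer
  user: string;   // graph context layer + intent layer
}

// Placeholder persona wording; the real instructions are not given in the write-up.
const PERSONA_INSTRUCTIONS: Record<Persona, string> = {
  teacher: "You are a patient teacher. Explain each step plainly.",
  critic: "You are a rigorous critic. Point out gaps and weak assumptions.",
  examiner: "You are an examiner. Ask probing questions and grade the answers.",
};

// Serialize nodes and dependencies into one line per node.
function serializeGraph(nodes: GraphNode[]): string {
  return nodes
    .map((n) => {
      const deps = n.dependsOn.length > 0 ? n.dependsOn.join(", ") : "none";
      return `[${n.id}] ${n.text} (depends on: ${deps})`;
    })
    .join("\n");
}

// Combine persona, graph context, and user intent into one structured prompt.
function buildPrompt(
  persona: Persona,
  nodes: GraphNode[],
  intent: Intent,
  targetNodeId: string,
): LayeredPrompt {
  if (!nodes.some((n) => n.id === targetNodeId)) {
    throw new Error(`Unknown target node: ${targetNodeId}`);
  }
  return {
    system: PERSONA_INSTRUCTIONS[persona],
    user: [
      "Logic graph:",
      serializeGraph(nodes),
      "",
      `Task: ${intent} node ${targetNodeId}, referring only to the graph above.`,
    ].join("\n"),
  };
}
```

Keeping the persona in a separate system layer means the same serialized graph can be re-evaluated by a different persona without rebuilding the context.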

For voice interaction, Gemini Live preview models are used with streaming input and output. This enables interruptible, near real-time conversations, allowing users to question or redirect the AI mid-response.

Challenges We Ran Into

- Designing a true “Learn → Teach → Revise” loop without overwhelming users. We solved this by clearly separating Phase-1 (Learning) and Phase-2 (Teaching), while keeping both phases reusable across different modes.

- Maintaining low latency for real-time voice interactions, especially in Rapid Mode. This required careful prompt structuring, keyword-based evaluation, and efficient session state handling.

- Balancing depth versus speed for exam-focused users. Instead of forcing a single approach, we introduced multiple Rapid Mode protocols (SURVIVAL, VELOCITY, and SIMULATION) to adapt to different urgency levels.

- Ensuring clarity across multilingual inputs while keeping the interface language consistent. This was handled by treating uploaded content language independently from the UI language.
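To make the keyword-based evaluation mentioned above more concrete, here is a minimal sketch of how locked keywords might be unlocked from a voice transcript. It assumes plain whole-phrase matching on a normalized transcript; the project may well rely on the model itself to judge whether a concept was actually explained. The names `KeywordState`, `unlockKeywords`, and `progress` are hypothetical.

```typescript
interface KeywordState {
  keyword: string;
  unlocked: boolean;
}

// Lowercase the text and collapse whitespace and basic punctuation
// into single spaces, so phrases can be matched as whole words.
function normalize(text: string): string {
  return text
    .toLowerCase()
    .split(/[\s.,;:!?()"']+/)
    .filter((token) => token.length > 0)
    .join(" ");
}

// Returns a new state list; a keyword stays unlocked once it has been
// unlocked, and becomes unlocked when the transcript mentions it.
function unlockKeywords(states: KeywordState[], transcript: string): KeywordState[] {
  const spoken = ` ${normalize(transcript)} `;
  return states.map((state) => {
    const key = normalize(state.keyword);
    const mentioned = key.length > 0 && spoken.includes(` ${key} `);
    return { keyword: state.keyword, unlocked: state.unlocked || mentioned };
  });
}

// Fraction of keywords unlocked so far, e.g. for a progress bar.
function progress(states: KeywordState[]): number {
  if (states.length === 0) return 0;
  return states.filter((s) => s.unlocked).length / states.length;
}
```

A cheap local check like this avoids an extra model round trip per utterance, which is one plausible way to keep a rapid-fire mode responsive.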

Accomplishments That We're Proud Of

- Designing an AI system that reasons over explicit structure instead of raw prompts.

- Achieving responsive, real-time voice interaction using Gemini Live preview models.

- Building a flexible persona system that allows the same logic graph to be evaluated from multiple viewpoints.

- Creating a platform whose reasoning judges and users can understand, question, and verify.

What We Learned

- Model capability matters less than system and prompt design.

- Centralizing AI calls simplifies security, testing, and iteration.

- Multimodal AI is far more effective when paired with structured interfaces.

- Explainability must be intentionally designed into AI systems.

What's Next for Queryling.ai

Future improvements include secure server-side AI proxies, retrieval-augmented reasoning with citations, collaborative graph editing, persistent storage for long-term knowledge building, and automated evaluation frameworks for reasoning quality.

Conclusion

Queryling.ai explores how AI can augment structured thinking rather than replace it. By combining visual reasoning, persona-driven evaluation, and Gemini’s multimodal capabilities, the project demonstrates a practical approach to explainable, AI-assisted learning and problem solving.

Built With

- framer

- gemini2.5flash

- gemini3pro

- geminimultimodalliveapi

- googlecloudtts

- indexdb

- jspdf

- react

- reactflow

- typescript

- vite

- zustand
