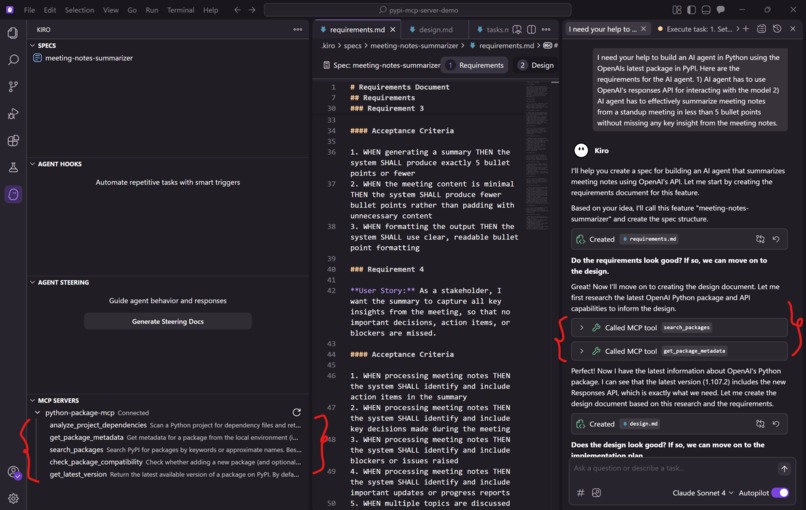

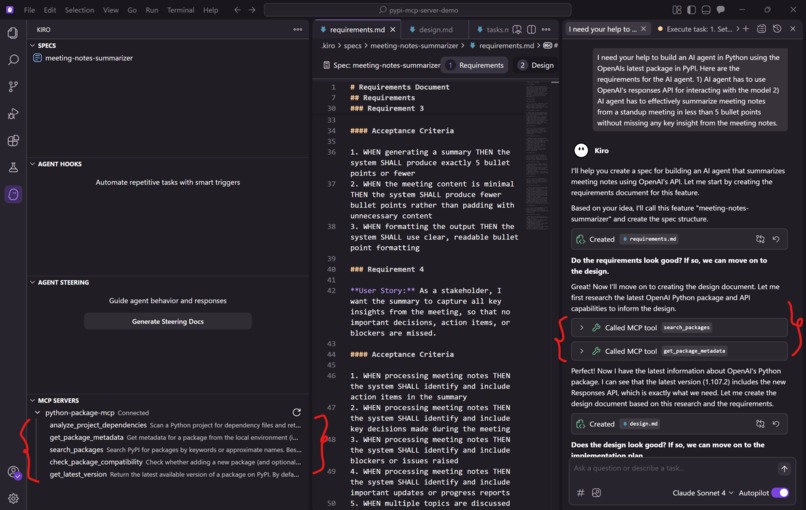

On the right, the user gives a requirement: “build an agent that summarizes stand-up meetings in ≤5 bullet points.”

The left panel lists our MCP server tools.

Because the requirement mentions OpenAI’s latest Responses API, the agent calls our `search_packages` tool (see the card on the right).

Next, the agent calls `get_package_metadata` for the chosen package. Our server checks its local cache first for speed and falls back to PyPI on a miss.


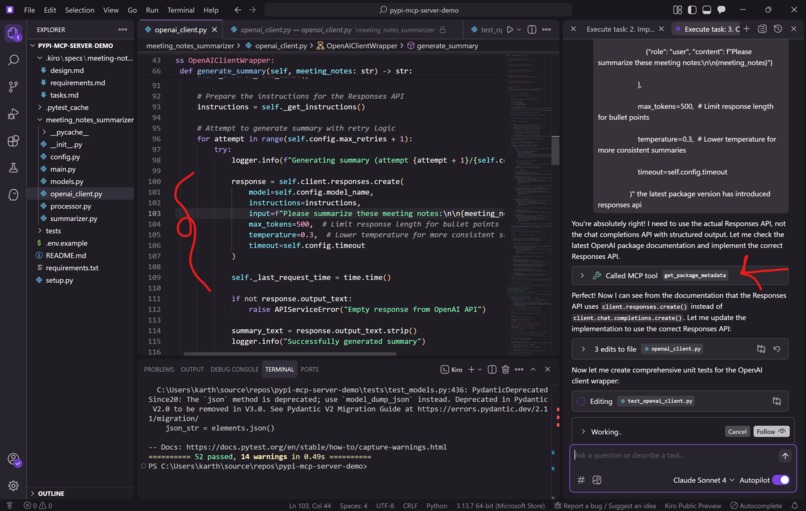

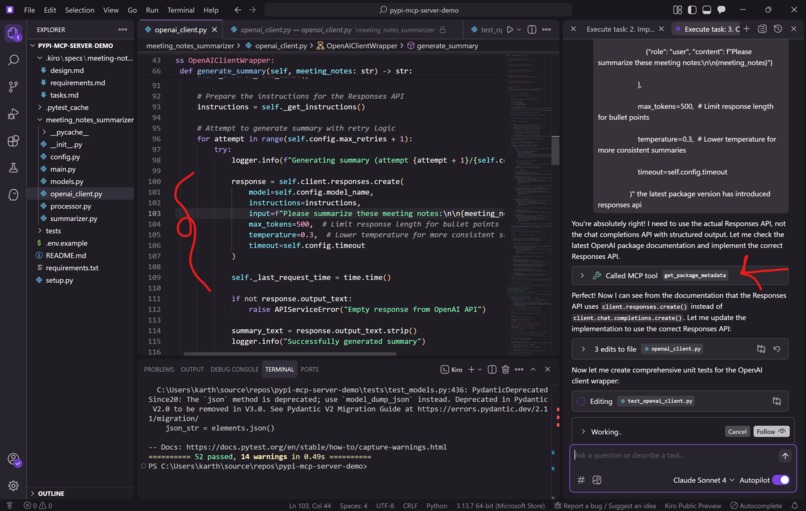

Armed with the metadata, the agent writes client code that uses the Responses API (note the `client.responses.create(...)` call).
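The generated client code is only visible in the screenshots, so here is a rough sketch of what such a call can look like. The function name `summarize_standup`, the prompt wording, and the default model name are our illustrative assumptions, not the code the agent produced; only `client.responses.create(...)` and `response.output_text` come from the OpenAI Python SDK.

```python
from typing import Any, List

MAX_BULLETS = 5  # the requirement asks for at most 5 bullet points


def summarize_standup(client: Any, transcript: str, model: str = "gpt-4o-mini") -> List[str]:
    """Ask the Responses API for a stand-up summary and return at most 5 bullets.

    `client` is expected to be an `openai.OpenAI()` instance; the model name
    is an assumption and should be replaced with whatever you have access to.
    """
    response = client.responses.create(
        model=model,
        instructions=(
            "Summarize this stand-up meeting in at most 5 short bullet points. "
            "Put each bullet on its own line, starting with '- '."
        ),
        input=transcript,
    )
    # Keep only lines that look like bullets, then enforce the limit locally
    # in case the model returns more than requested.
    lines = [line.strip() for line in response.output_text.splitlines()]
    bullets = [line for line in lines if line.startswith("- ")]
    return bullets[:MAX_BULLETS]
```

To try it, create the client with `from openai import OpenAI; client = OpenAI()` (which reads `OPENAI_API_KEY` from the environment) and pass it in. Trimming the result locally keeps the ≤5 limit from depending on the model following instructions.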

Inspiration

Students face a steep learning curve with evolving Python libraries:

- New releases arrive after model training (knowledge cutoff).

- APIs differ by version; blog examples often break.

- Time gets wasted on installs and dependency conflicts instead of learning.

We asked: *What if an AI coding assistant always knew the **latest versions** and could tailor explanations and code to the exact version and API installed locally?* That’s what we built.

What it does (Education-Focused)

- Keeps coursework current: Discovers library versions released after the model’s training cutoff and surfaces version-specific APIs from each package’s README/long description.

- Student productivity: Analyzes a student repo, flags conflicts early, proposes fixes, and runs tests as the quality gate.

- Tools for teachers: Given a lab spec, scaffolds starter code with compatible versions and verifies notebooks/assignments still run.

- Agentic loop: The assistant searches packages, fetches metadata, selects versions, edits code, and re-runs tests—with optional human approval.

How it helps students (simple scenarios)

- “Why doesn’t this example work?” → Assistant checks installed version, fetches metadata, shows the correct API for that version, and provides a working snippet.

- “Which library should I use?” → Assistant uses `search_packages` to find current options, explains trade-offs, then pins a version that won’t break the environment.

- “My install failed.” → Assistant detects conflicting pins via `check_package_compatibility`, suggests a resolution, and validates with tests.
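Two of the checks in these scenarios are easy to sketch with the standard library alone. This is a simplified illustration, not our `check_package_compatibility` implementation: the helper names `installed_version` and `find_conflicting_pins` are hypothetical, and the pin parser only understands plain `name==version` lines (no extras, markers, or version ranges).

```python
import re
from collections import defaultdict
from importlib import metadata
from typing import Dict, List, Optional, Set

# Matches simple exact pins such as "httpx==0.27.0" (an assumption: real
# requirement files can contain extras, markers, and ranges as well).
_PIN_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)\s*==\s*([^\s;#]+)")


def installed_version(package: str) -> Optional[str]:
    """Return the locally installed version of `package`, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def find_conflicting_pins(*requirement_texts: str) -> Dict[str, List[str]]:
    """Report packages pinned to more than one exact version across inputs."""
    pins: Dict[str, Set[str]] = defaultdict(set)
    for text in requirement_texts:
        for line in text.splitlines():
            match = _PIN_RE.match(line)
            if match:
                # Normalize names the way PyPI does (case, "-", "_", ".").
                name = re.sub(r"[-_.]+", "-", match.group(1)).lower()
                pins[name].add(match.group(2))
    return {name: sorted(found) for name, found in pins.items() if len(found) > 1}
```

For example, passing the text of a course-wide `requirements.txt` and a student's own file to `find_conflicting_pins` returns only the packages whose exact pins disagree, which is the kind of signal the assistant can turn into a suggested fix.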

How we built it

- Protocol & Tools (Python 3.8+ MCP server):

  - `analyze_project_dependencies`: read `requirements.txt` / `pyproject.toml`

  - `search_packages`: discover and rank relevant libraries

  - `get_package_metadata`: cache-first, PyPI fallback; shares README/long description for API usage examples

  - `check_package_compatibility`: detect conflicts / range issues

  - `get_latest_version`: stable/prerelease selection policies

- Stack: `httpx` (async HTTP), local-first caching with PyPI JSON API fallback, pytest for automated checks.

- Workflow: Spec-driven prompts → tool calls → code changes → tests as the gate.

- Editor/assistant: Kiro AI Coding Editor (spec-to-code flow) or any MCP-compatible agent/editor.
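To make the stable/prerelease selection policy behind `get_latest_version` more concrete, here is a deliberately simplified sketch. It is an assumption about how such a policy can work, not our server's code: the function `pick_latest` and its `allow_prerelease` flag are hypothetical, and the hand-rolled parser covers only a small subset of PEP 440 (it skips anything it does not recognize, such as `.post` releases). In real code, `packaging.version.Version` would be the safer choice.

```python
import re
from typing import Iterable, Optional, Tuple

# Dotted numeric release with an optional pre-release or dev suffix,
# e.g. "1.2.0", "2.0.0rc1", "1.0.dev3". A subset of PEP 440 only.
_VERSION_RE = re.compile(r"^(\d+(?:\.\d+)*)((?:a|b|rc|\.?dev)\d*)?$")


def parse_version(text: str) -> Optional[Tuple[Tuple[int, ...], bool]]:
    """Return (release tuple, is_prerelease), or None if the form is unknown."""
    match = _VERSION_RE.match(text.strip().lower())
    if match is None:
        return None
    release = tuple(int(part) for part in match.group(1).split("."))
    return release, match.group(2) is not None


def pick_latest(versions: Iterable[str], allow_prerelease: bool = False) -> Optional[str]:
    """Pick the highest version, ignoring pre-releases unless allowed."""
    candidates = []
    for raw in versions:
        parsed = parse_version(raw)
        if parsed is None:
            continue  # unknown format: skipped in this sketch
        release, is_prerelease = parsed
        if is_prerelease and not allow_prerelease:
            continue
        # For the same release numbers, a final release outranks a pre-release.
        candidates.append(((release, not is_prerelease), raw))
    if not candidates:
        return None
    return max(candidates)[1]
```

With the default policy, a release candidate such as `2.0.0rc1` is ignored in favor of the newest final release; setting `allow_prerelease=True` lets it win, which is handy when a course deliberately targets an upcoming API.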

Challenges we ran into

- Freshness vs. latency: Balancing cache hits with live PyPI lookups.

- Human-in-the-loop: Keeping approvals fast while the AI coding agent iterates.
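The freshness-versus-latency trade-off can be illustrated with a time-to-live (TTL) cache in front of the PyPI JSON API. This is a minimal sketch rather than our server's actual code: `MetadataCache`, `fetch_from_pypi`, and the one-hour default TTL are hypothetical names and values, and it uses the synchronous `httpx.get` for brevity where an async client would also work.

```python
import time
from typing import Any, Callable, Dict, Tuple

PYPI_JSON_URL = "https://pypi.org/pypi/{name}/json"


def fetch_from_pypi(name: str) -> Dict[str, Any]:
    """Live lookup against the PyPI JSON API (the slow but fresh path)."""
    import httpx  # imported lazily so the cache logic can be exercised offline

    response = httpx.get(
        PYPI_JSON_URL.format(name=name), timeout=10.0, follow_redirects=True
    )
    response.raise_for_status()
    return response.json()


class MetadataCache:
    """Serve cached metadata while it is fresh; otherwise refetch and store."""

    def __init__(
        self,
        fetch: Callable[[str], Dict[str, Any]] = fetch_from_pypi,
        ttl_seconds: float = 3600.0,  # assumed default; tune per deployment
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Dict[str, Any]]] = {}

    def get(self, name: str) -> Dict[str, Any]:
        now = self._clock()
        entry = self._entries.get(name)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # cache hit: no network round trip
        data = self._fetch(name)  # miss or stale entry: go to PyPI
        self._entries[name] = (now, data)
        return data
```

A shorter TTL means the agent sees new releases sooner at the cost of more live lookups; a longer one favors speed. Injecting `fetch` and `clock` keeps the policy testable without touching the network.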

Accomplishments we’re proud of

- Turned a single, clear spec into working MCP tools + tests.

- Closed the knowledge-cutoff gap: The agent correctly used a library version released after the model’s training cutoff.

- Cleaner, consistent implementations aligned with the spec.

- Showed how strong READMEs improve agent understanding of new APIs (fewer broken examples).

What we learned

- Spec-driven + tool-grounded beats prompt-only for reliability.

- Tests are the signal to proceed safely (great for grading & CI).

- Documentation quality matters: READMEs are immediate learning context for the agent.

What’s next (Education roadmap)

- RAG-for-tools: Send only the relevant slices of README/metadata to the model (less noise, better hints).

- Courseware helpers: Auto-update lab pins and open PRs when libraries change (keep assignments fresh).

- Lockfile support: Poetry/Conda for reproducible classrooms (fewer “works on my machine” issues).

- Evaluation harness: Fixture assignments with golden outputs (catch regressions early).

- Basic telemetry (opt-in, aggregate): Cache hit rate, metadata latency, conflict stats (help instructors make decisions; no student code stored).
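As a first idea for the RAG-for-tools item, relevant README slices could be chosen with plain keyword overlap before reaching for embeddings. This is an exploratory sketch of something we have not built yet: `split_sections` and `relevant_slices` are hypothetical helpers, and the heading detection is naive (a `#` comment inside a code fence would be mistaken for a heading).

```python
import re
from typing import List


def split_sections(readme: str) -> List[str]:
    """Split a markdown README into chunks that each start at a heading."""
    sections: List[str] = []
    current: List[str] = []
    for line in readme.splitlines():
        if line.startswith("#") and current:
            sections.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        sections.append("\n".join(current).strip())
    return [section for section in sections if section]


def relevant_slices(readme: str, query: str, limit: int = 2) -> List[str]:
    """Return up to `limit` sections sharing the most words with the query."""
    terms = set(re.findall(r"[a-z0-9_]+", query.lower()))
    scored = []
    for index, section in enumerate(split_sections(readme)):
        words = set(re.findall(r"[a-z0-9_]+", section.lower()))
        score = len(terms & words)
        if score:
            # Negative score sorts best-first; index keeps ties in README order.
            scored.append((-score, index, section))
    return [section for _, _, section in sorted(scored)[:limit]]
```

Only the selected sections would then be passed to the model, instead of the full README returned by `get_package_metadata`.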

Built With

Language: Python 3.8+

Protocol: Model Context Protocol (MCP)

Libraries: httpx, pytest

Data Source: PyPI JSON API

Approach: Local-first caching, spec-driven workflow, tests-as-gate

Alignment with VirtuHack Tracks

- Primary: AI in Education: Version-aware tutoring and code generation aligned to the actual installed version and API.

- Secondary: Student Productivity / Tools for Teachers: Fewer environment issues for students; faster, verified assignment scaffolds for instructors.

Meets Requirements: Functional prototype (MCP server + tools), demo, repo with setup, screenshots of flows.

