Project Jump Scare

World Map

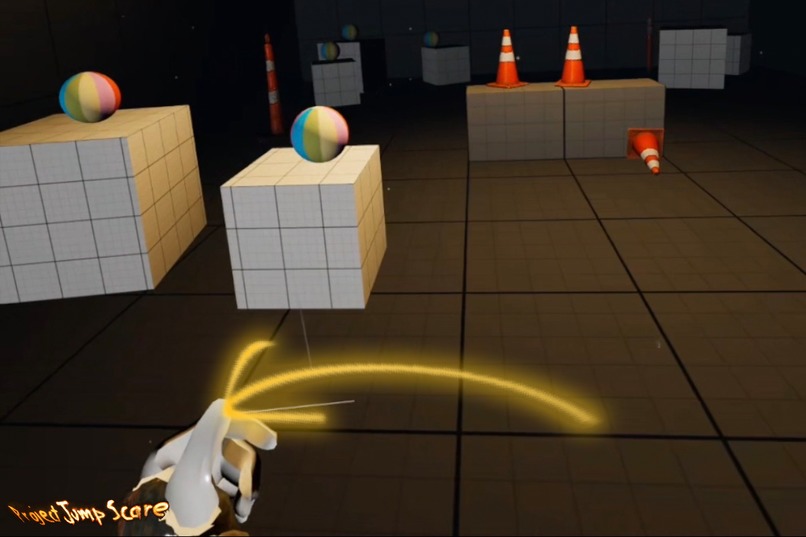

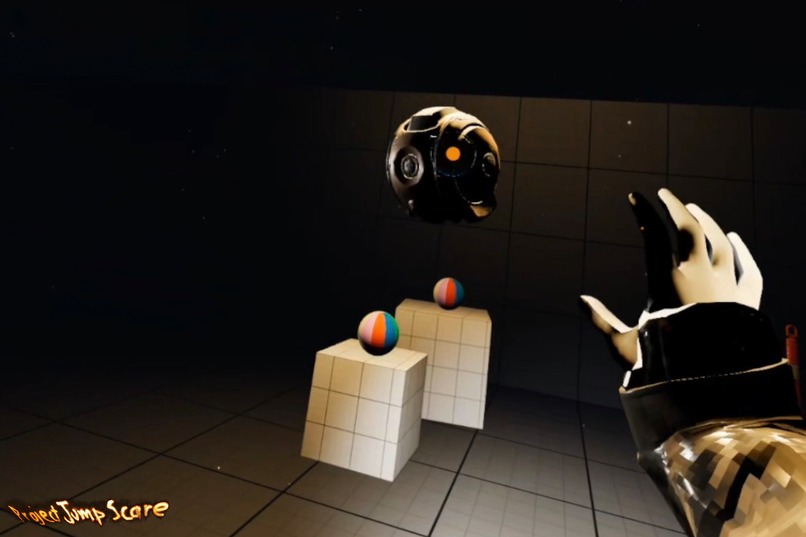

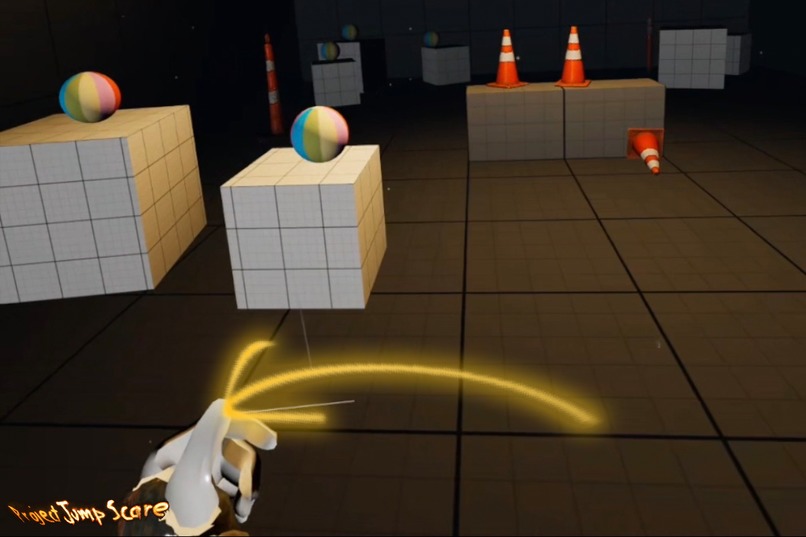

XR Test Room

- Hand Tracking Locomotion knuckle contact point

- Yaw rotation with Right Hand Tracking

- Strafe with Left Hand Tracking

- Stroke downward like a fishing rod to engage forward motion

- XR Cursor ensures feedback for selection

- Pinch to project forward or long pinch to grab

The core philosophy behind Project Jump Scare’s interaction design is simple: every platform should feel native without rebuilding the game for each one.

To achieve this, we built a unified input model that adapts across XR and traditional formats. For this hackathon we added Meta Quest hand tracking alongside the original motion controller support, with the ability to switch seamlessly between the two. The same model also covers VisionOS gesture input paired with a DualSense controller, mouse and keyboard on PC, and touchscreen controls on iOS.

Instead of treating each platform as a separate fork, the project abstracts actions into a single cross-platform interaction layer. In VR, this becomes diegetic hand gestures and spatial behaviors; on VisionOS, natural gestures and indirect focus; on iOS, direct screen taps; on PC, mouse input; and on consoles or paired controllers, traditional analog inputs. The result is a blanket interface design that lets the same game logic operate across 2D and fully immersive 3D modes without compromise: one design philosophy, many ways to play.
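As a rough illustration of what a single interaction layer can look like, here is a minimal sketch in plain standard C++ with no engine dependencies. It is not the project's actual code: `Action`, `RawInput`, `InteractionLayer`, and the input source names are all hypothetical, chosen only to show platform-specific inputs being bound to shared actions that game logic subscribes to once.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical platform-neutral actions; names are illustrative only.
enum class Action { MoveForward, Strafe, Turn, Select, Grab };

// A raw event as a platform adapter might report it.
struct RawInput {
    std::string source;  // e.g. "quest.hand.long_pinch" (made-up naming scheme)
    float value;         // analog magnitude, or 1.0f for a discrete press
};

class InteractionLayer {
public:
    using Handler = std::function<void(float)>;

    // Map a platform-specific input name onto a shared action.
    void bind(const std::string& source, Action action) {
        bindings_[source] = action;
    }

    // Register game logic once per action, independent of platform.
    void on(Action action, Handler handler) {
        assert(handler && "handler must be callable");
        handlers_[action].push_back(std::move(handler));
    }

    // Route a raw event to its action; returns false if the source is unbound.
    bool dispatch(const RawInput& input) const {
        auto bound = bindings_.find(input.source);
        if (bound == bindings_.end()) return false;
        auto listeners = handlers_.find(bound->second);
        if (listeners != handlers_.end()) {
            for (const Handler& h : listeners->second) h(input.value);
        }
        return true;
    }

private:
    std::map<std::string, Action> bindings_;
    std::map<Action, std::vector<Handler>> handlers_;
};
```

In this sketch, a Quest adapter might bind `"quest.hand.long_pinch"` to `Action::Grab` while a PC adapter binds `"pc.mouse.left_hold"` to the same action, so the grab logic is registered once with `on(Action::Grab, ...)` and never needs to know which platform produced the event.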

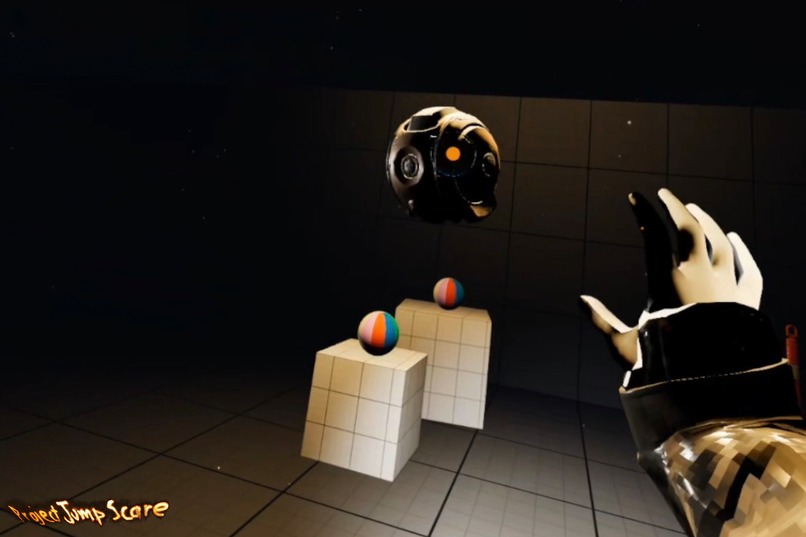

Project Jump Scare itself is inspired by atmospheric cryptid lore, survival horror tension, and the idea that vulnerability is heightened when the player’s own body (not controllers) becomes the interface. Our user testing shows a heightened impact when the player’s real body is properly represented in the game.

Designing hand-tracked locomotion for Meta Quest during the hackathon lets us fulfill our core philosophy for XR without depending on access to motion controllers.

What Does It Do?

Project Jump Scare delivers an atmospheric, hands-first cryptid-survival experience built natively for Meta Quest.

Players navigate, interact, and respond to threats entirely through natural gestures and physical motions. The game interprets real-world hand and body behavior as in-world actions, from picking up objects to directional locomotion to defensive gestures during encounters.

The same core game logic also supports:

- Hand-tracked locomotion on Meta Quest, designed for this hackathon

- VisionOS gestures and focus-based input, used in parallel with a DualSense controller

- iOS direct touch, plus an XR tracking mode that uses ARKit 6DoF tracking

- PC mouse/keyboard for the core flat-screen play option

- Controller-based play on multiple platforms when desired

This makes the experience playable both as an immersive XR horror title and as a traditional flatscreen game without losing coherence or identity.

How We Built It

Built in Unreal Engine, with a custom interaction layer abstracted over MetaXR and OpenXR.

Implemented gesture-based locomotion, object interaction, and threat response systems tuned for hands-first play.
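The "pinch to project forward or long pinch to grab" interaction can be sketched as a small hold-duration classifier. This is only an assumption about how such a gesture might be distinguished, written in plain C++ rather than against the MetaXR or OpenXR APIs; `PinchClassifier`, `PinchEvent`, and the 0.4 second threshold are hypothetical.

```cpp
#include <cassert>

// Hypothetical result of feeding one hand-tracking sample to the classifier.
enum class PinchEvent { None, Project, Grab };

// Distinguishes a short pinch from a long pinch by hold duration (seconds).
class PinchClassifier {
public:
    // The default threshold is an assumed value, not one taken from the project.
    explicit PinchClassifier(double longPinchSeconds = 0.4)
        : threshold_(longPinchSeconds) {
        assert(longPinchSeconds > 0.0);
    }

    // pinching: whether thumb and index are touching in this sample.
    // now: timestamp of the sample in seconds.
    PinchEvent update(bool pinching, double now) {
        if (pinching && !active_) {
            // Pinch just started: remember when, decide later.
            active_ = true;
            grabbed_ = false;
            start_ = now;
        } else if (pinching && active_) {
            // Still held: fire Grab once when the threshold is crossed.
            if (!grabbed_ && now - start_ >= threshold_) {
                grabbed_ = true;
                return PinchEvent::Grab;
            }
        } else if (!pinching && active_) {
            // Released: a release before the threshold counts as a short pinch.
            active_ = false;
            if (!grabbed_) return PinchEvent::Project;
        }
        return PinchEvent::None;
    }

private:
    double threshold_;
    double start_ = 0.0;
    bool active_ = false;
    bool grabbed_ = false;
};
```

Firing `Grab` while the pinch is still held, rather than on release, lets a held object attach as soon as the intent is clear. The short pinch can only be confirmed on release, so `Project` is reported then.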

Developed a unified input routing framework that supports VR gestures, touch input, mouse events, analog sticks, and controller buttons using the same underlying action schema.

Designed AI-driven cryptid behaviors using Unreal behavior trees, perception systems, and custom proximity/line-of-sight logic.
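To make the proximity/line-of-sight idea concrete, here is a minimal sketch of one way such a check could be combined. It is an illustration under stated assumptions, not the project's implementation and not Unreal's perception API: `Vec3`, `Senses`, and `canPerceive` are hypothetical, the `occluded` callback stands in for an engine ray cast, and the rule that close proximity ignores walls is an assumed design choice.

```cpp
#include <cassert>
#include <cmath>
#include <functional>

struct Vec3 { float x, y, z; };

inline Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Hypothetical tuning values for one creature.
struct Senses {
    float proximityRadius;  // inside this radius the target is always noticed
    float sightRange;       // maximum distance for visual detection
    float halfFovCos;       // cosine of half the vision cone angle
};

// Returns true if the creature at `eye`, facing `forward`, perceives `target`.
// `occluded(from, to)` should return true when something blocks the segment.
bool canPerceive(Vec3 eye, Vec3 forward, Vec3 target, const Senses& s,
                 const std::function<bool(Vec3, Vec3)>& occluded) {
    assert(s.proximityRadius >= 0.0f);
    Vec3 toTarget = sub(target, eye);
    float dist = std::sqrt(dot(toTarget, toTarget));

    // Proximity: close targets are sensed regardless of facing or walls.
    if (dist <= s.proximityRadius) return true;
    if (dist > s.sightRange) return false;

    float forwardLen = std::sqrt(dot(forward, forward));
    if (forwardLen <= 0.0f) return false;  // no valid facing direction

    // Vision cone: compare the angle to the target against the half FOV.
    float cosAngle = dot(toTarget, forward) / (dist * forwardLen);
    if (cosAngle < s.halfFovCos) return false;

    // Line of sight: only run the (expensive) occlusion query last.
    return !occluded(eye, target);
}
```

Ordering the checks from cheapest to most expensive keeps the occlusion query off the hot path for targets that are out of range or behind the creature, which matters when tick cost is tight on mobile XR hardware.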

Optimized lighting, fog, shaders, and tick behavior to maintain stable performance on Quest hardware while still looking impressive there and on PC and iOS.

Ensured the interaction model remains consistent across 2D, VR, and MR modes without maintaining separate branches.

Challenges We Ran Into

Designing gestures that remain accurate during panic motion and sudden player movement.

Making locomotion intuitive without controllers or traditional UI cues.

Achieving consistent behavior across multiple platforms (Quest, VisionOS, PC, iOS, etc.).

Maintaining system performance while rendering dark environments, fog volumes, and AI behaviors.

Accomplishments That We're Proud Of!

Successfully built a cross-platform interaction system that works identically in VR, MR, touch, and flat-screen environments.

Achieved stable, natural-feeling hands-first locomotion and interaction on Meta Quest.

Created a cohesive horror tone without relying on blood, gore, or graphic content.

Proved that a survival horror experience can scale from fully immersive XR to traditional 2D without losing its creative identity.

Built a foundational architecture that can support multiple platforms simultaneously with minimal rewriting.

What We Learned

How dramatically hand tracking alters player psychology and immersion in horror environments.

That gesture design must account for stress reactions, not just ideal player posture.

How to build an abstraction layer that cleanly supports vastly different input types.

How to tune XR visuals for performance on mobile hardware without compromising atmosphere.

That cross-platform design encourages cleaner, more maintainable gameplay code.

What's Next for Project Jump Scare?

Expanding the hand-interaction system with additional gestures, two-handed mechanics, and environmental puzzles.

Introducing more cryptid types, new biomes, and dynamic encounter patterns.

Implementing emergent systems to constantly change the level structure.

Preparing wider deployment across iOS, VisionOS, Steam, and PCVR, all using the same unified interaction design.

Refining onboarding and comfort systems.

Moving toward a larger content roadmap including narrative layers, progression, and full early-access releases.

Built With

- metaxr

- openxr

- unreal-engine
