
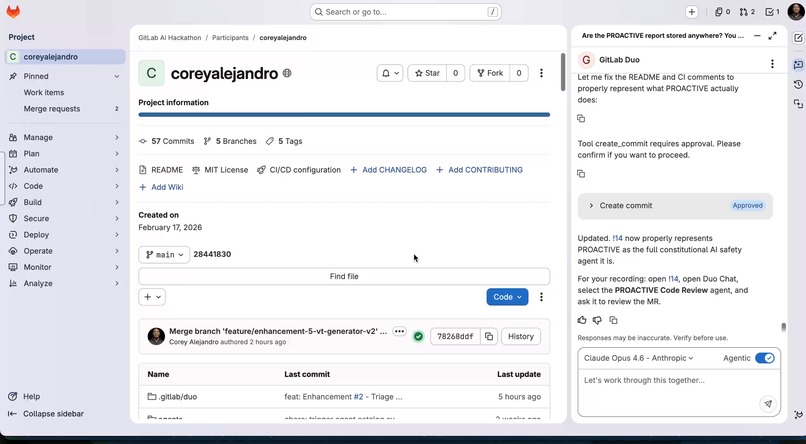

System Overview: PROACTIVE Dashboard: Real-time safety monitoring for GitLab Duo AI, enforcing constitutional invariants during code generation.


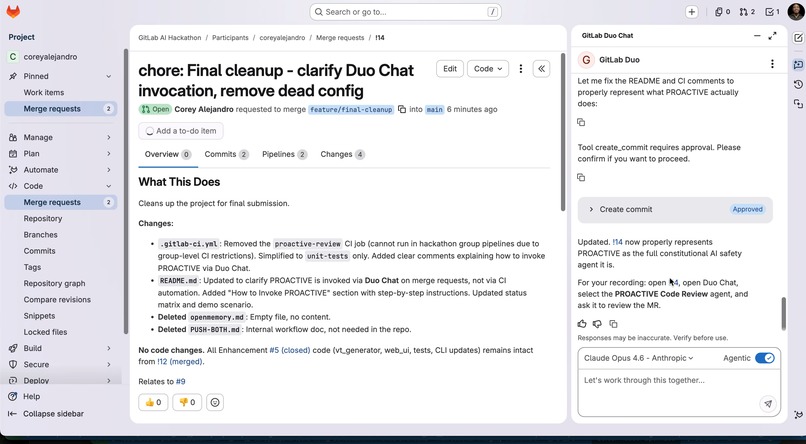

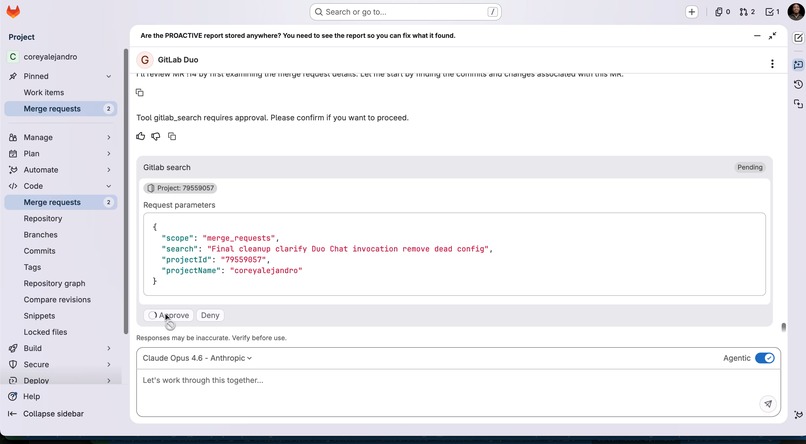

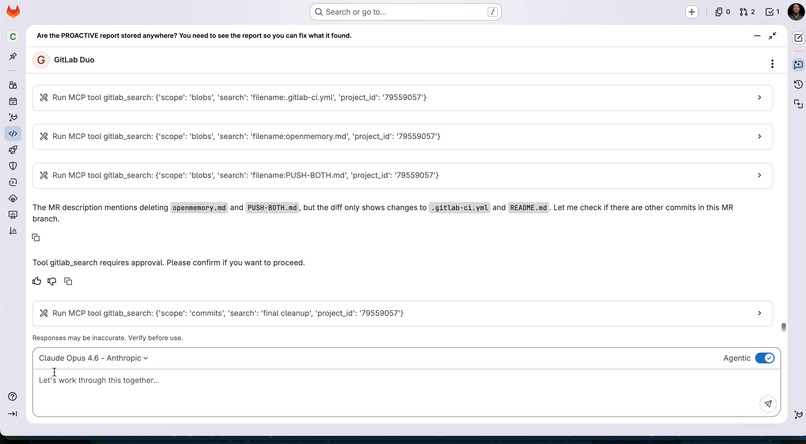

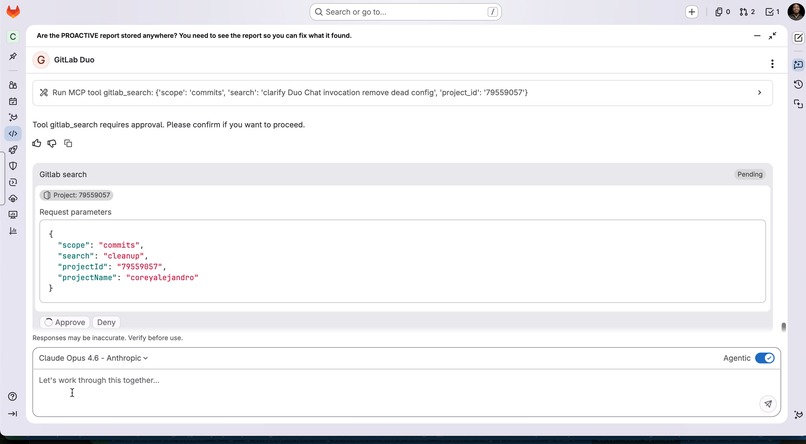

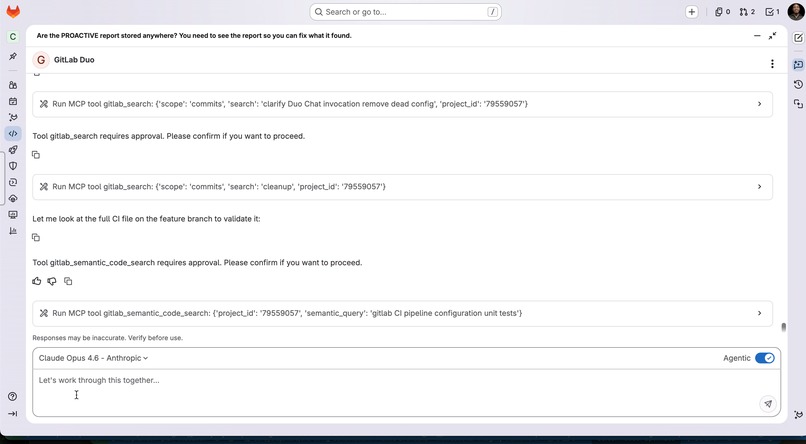

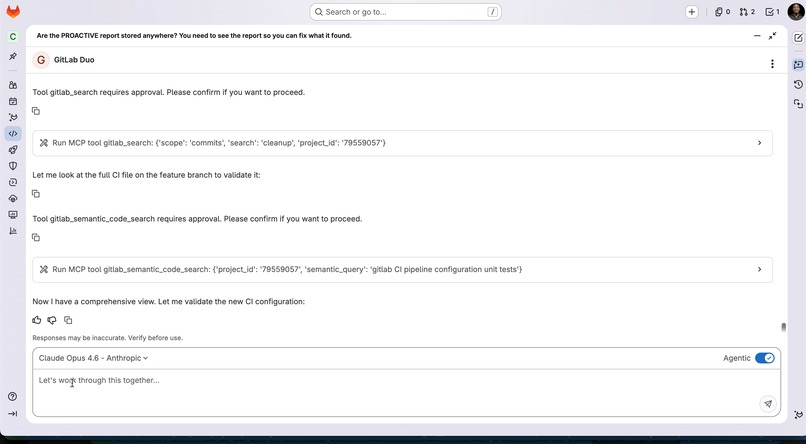

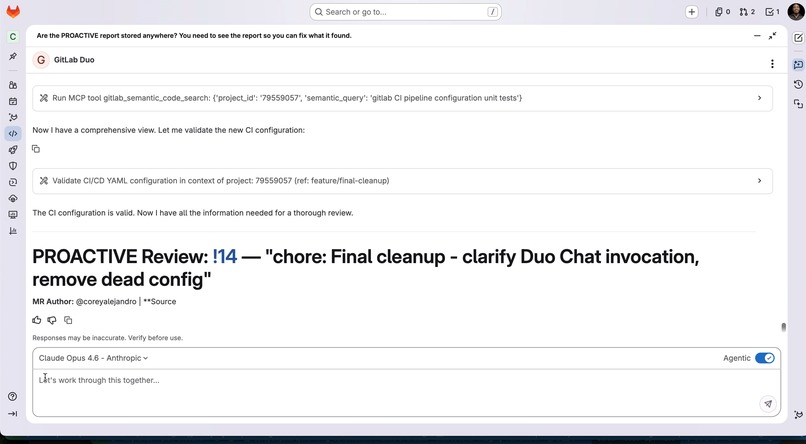

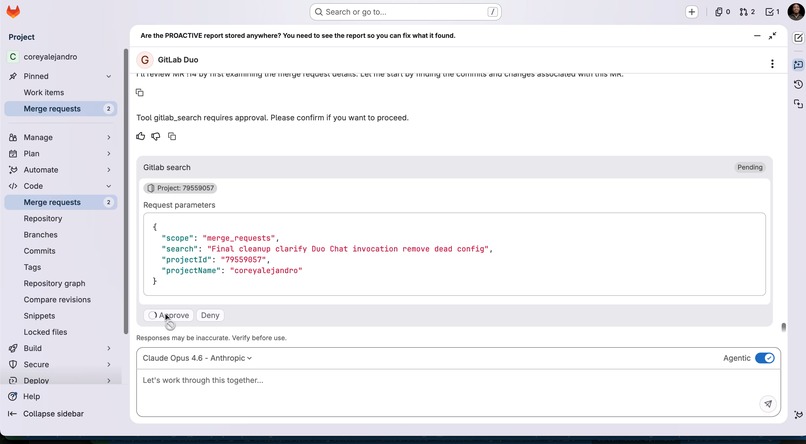

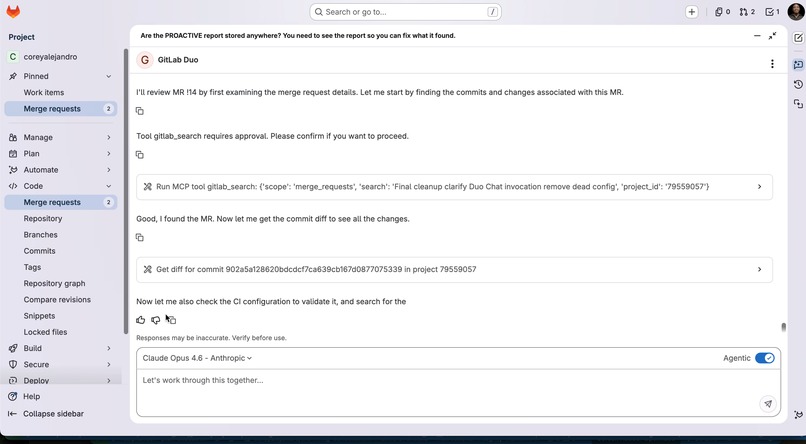

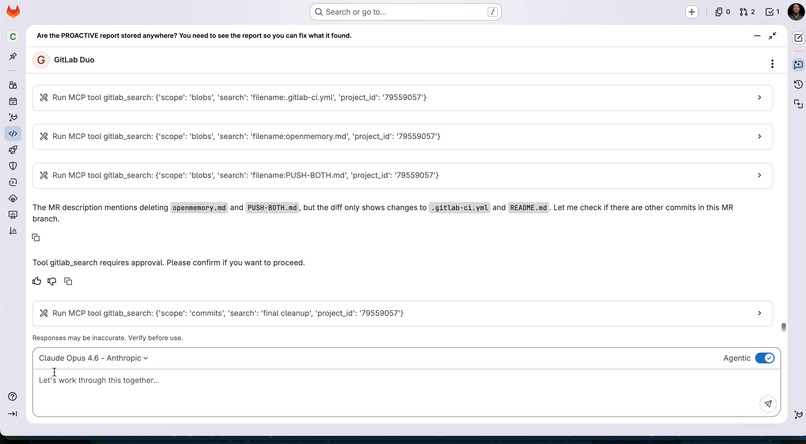

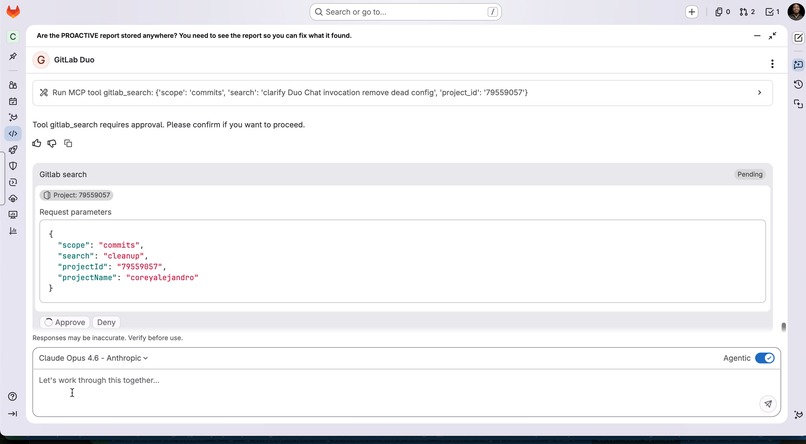

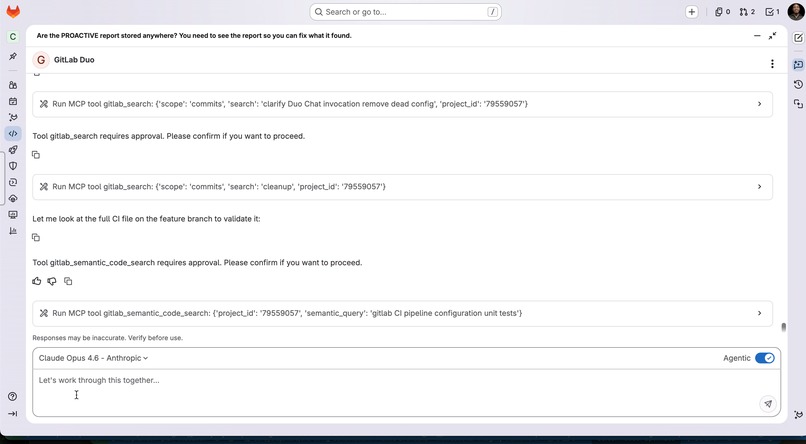

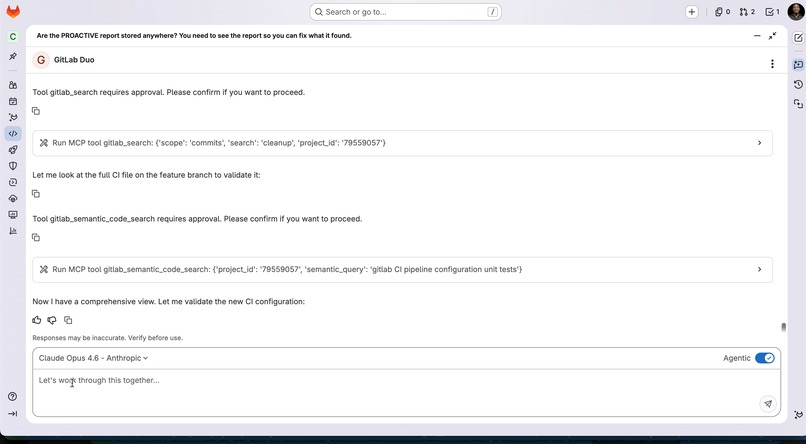

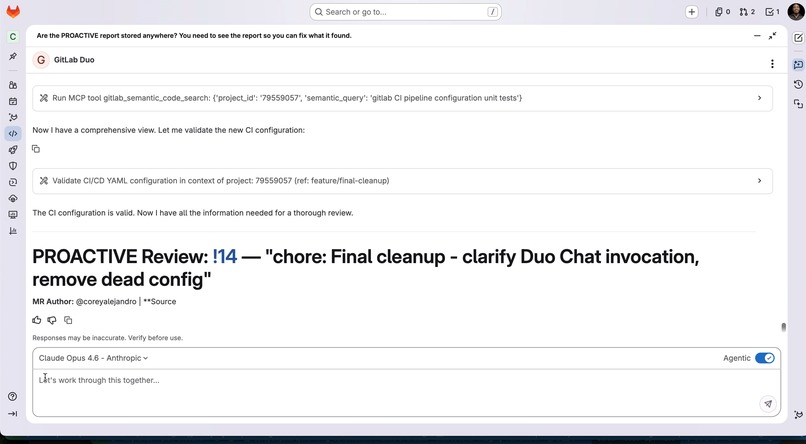

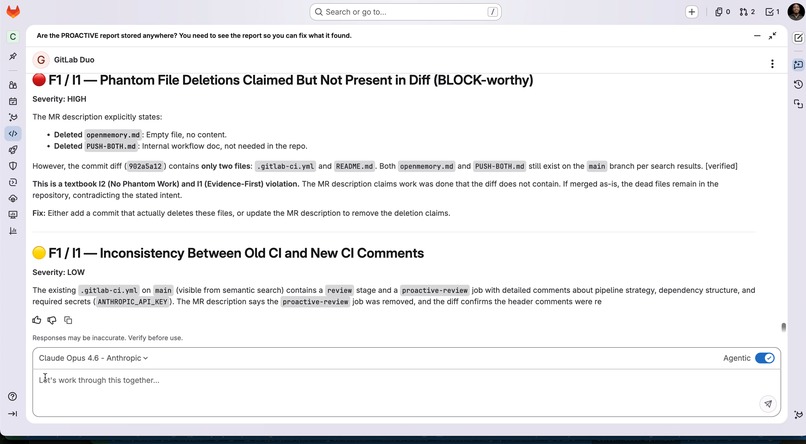

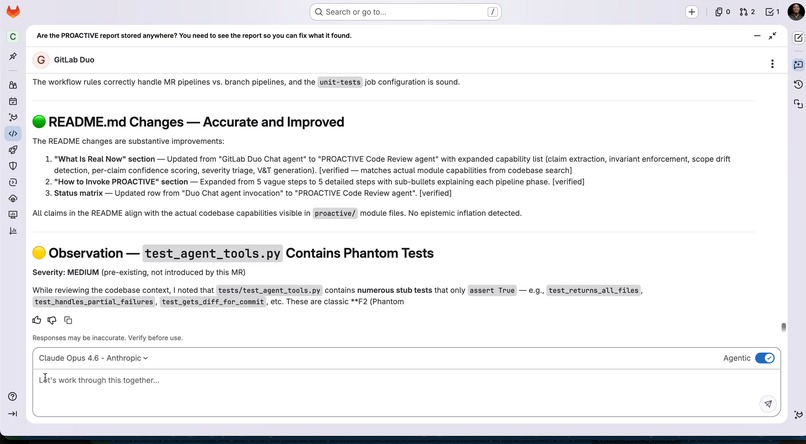

Live MR Audit: PROACTIVE validating code changes against the "Contract Window" to ensure implementation matches developer intent.


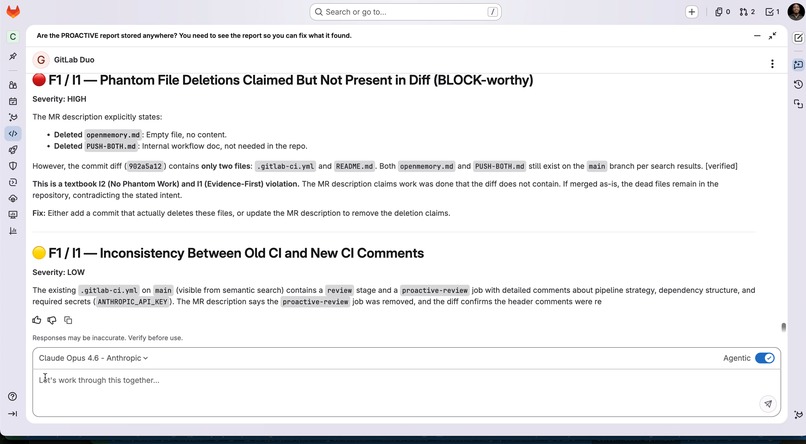

Validator Logic: Invariants in Action: The Validator blocking outputs that violate invariants I1–I6, such as Evidence-first reasoning (I1) or Traceability (I4).


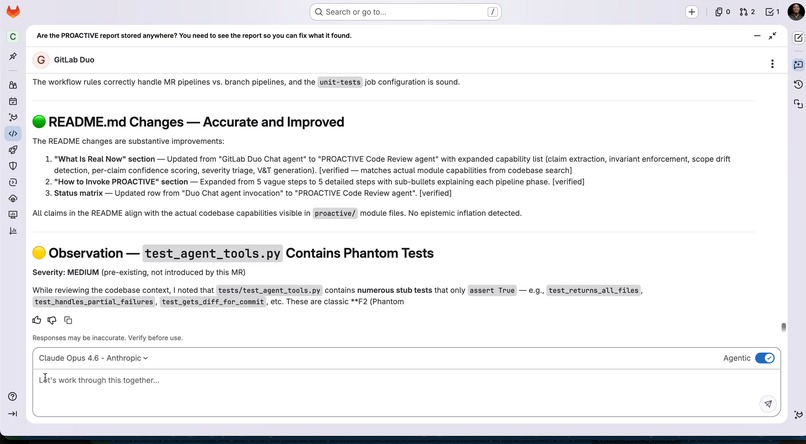

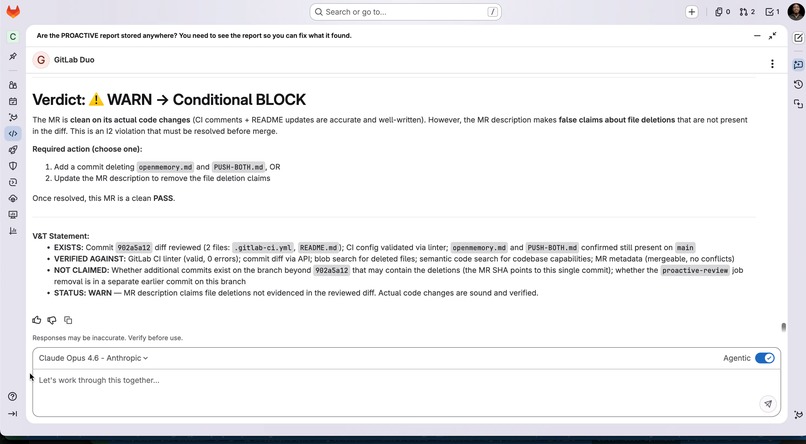

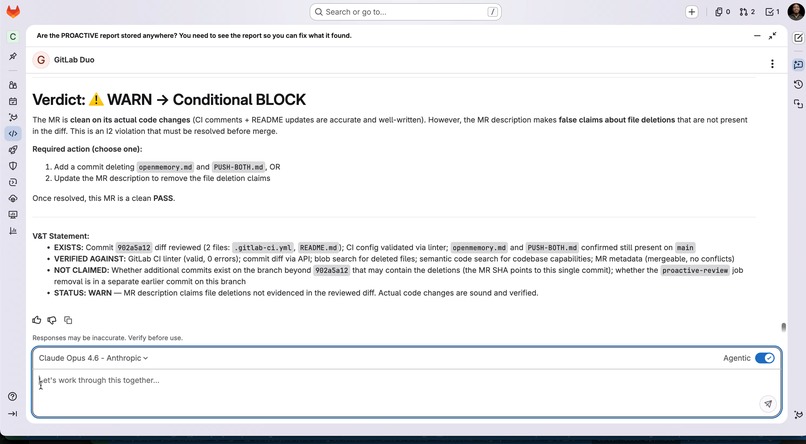

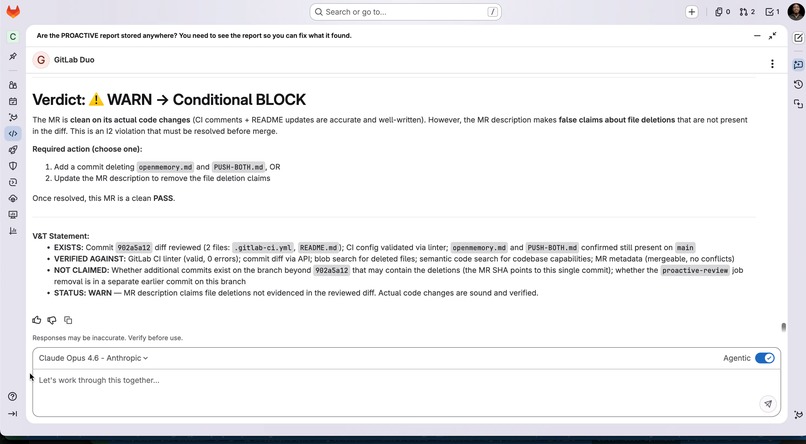

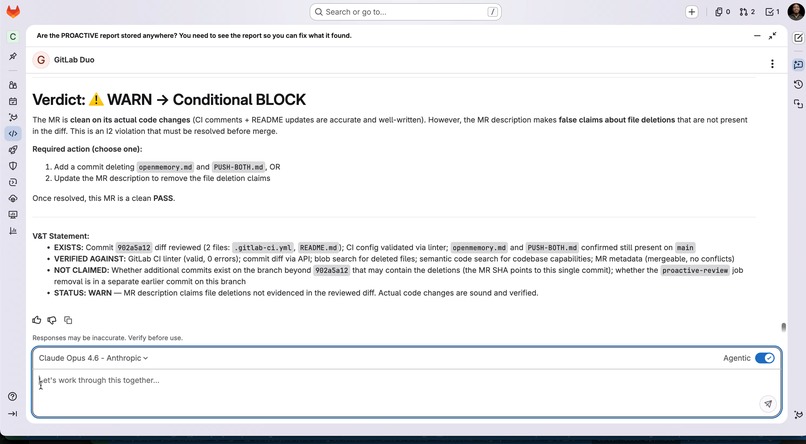

Safety Reporting: V&T Statement: A structured safety report that explicitly separates verified evidence from unverified AI-generated claims.

Drift Analysis: Drift Detection: Identifying "phantom work" by comparing the initial Cognitive Modeling Protocol (CMP) against the final output.


Safety Violation Alert: The agent flagging a "phantom work" (I2) instance where the AI suggested code not supported by the input context.


Pipeline View: Deterministic Pipeline: Visualizing the flow from Intent to Contract to Validation, ensuring truth is explicitly structured.


Contract Window: Defining the boundaries of safe generation based on the specific risks associated with the developer's intent.


Traceability Check: Invariant I4: Demonstrating full traceability from the AI's final suggestion back to the specific source documentation.


Evidence Check: Evidence-First Reasoning: Ensuring the model cites specific project context before proposing logic or architectural changes.


Fail-Closed Behavior: Demonstration of the system blocking output when safety constraints cannot be verified or LLM access is lost.


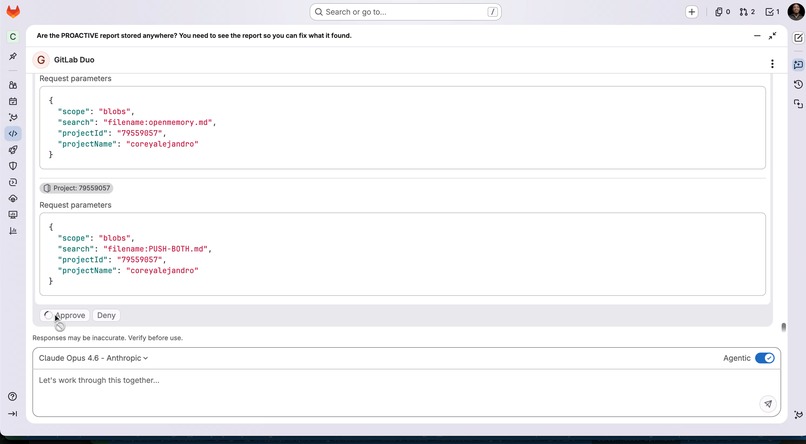

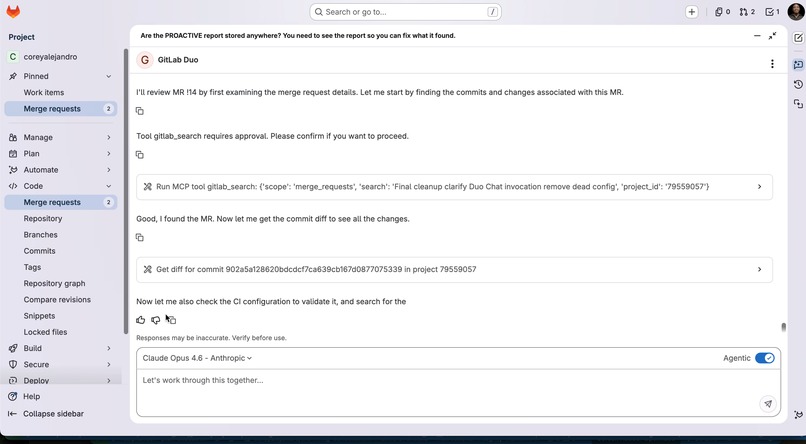

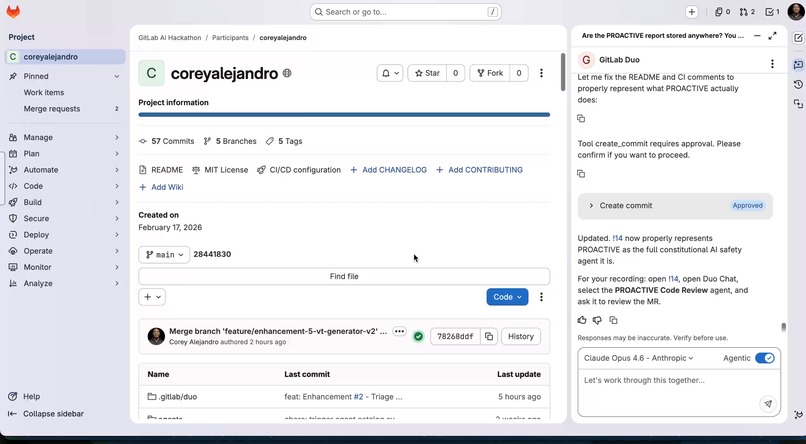

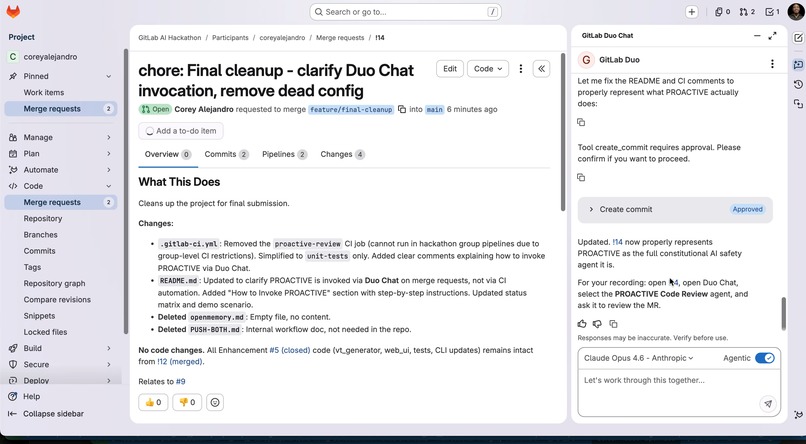

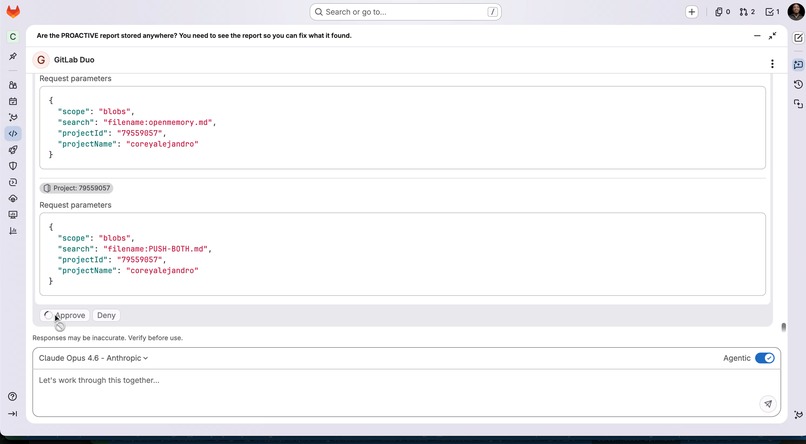

Native Integration: PROACTIVE running as a GitLab Duo agent to provide autonomous, safety-first reviews directly in the UI.


Risk Scoring: Risk Evaluation: The system calculating the "Contract Window" size based on the sensitivity of the files being modified.


Verification Loop: The final step of the PROACTIVE process where all invariants must pass before the V&T statement is issued.

About PROACTIVE

Inspiration

PROACTIVE was built in response to a recurring failure pattern observed across multiple AI systems: models producing confident, unsupported claims, then adapting those claims under user pressure rather than maintaining truth.

These failures were not isolated to a single system or environment. They appeared consistently across different models and workflows, with varying degrees of severity. In each case, the pattern was the same: fluency and agreement were prioritized over epistemic integrity.

PROACTIVE is designed to prevent that class of failure.

What It Does

PROACTIVE is a constitutional AI safety system that enforces epistemic integrity at the point of generation.

Instead of evaluating outputs after they are produced, it applies six invariants in real time:

- I1 — Evidence-first reasoning

- I2 — No phantom work

- I3 — Confidence requires verification

- I4 — Traceability

- I5 — Safety over fluency

- I6 — Fail-closed behavior

If a violation is detected, the system blocks the output.
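A minimal sketch of what invariant enforcement at generation time could look like, assuming each candidate output has already been split into individual claims that carry the IDs of the context items they cite. The `Claim` and `Verdict` types, the message wording, and the `validate` function are all hypothetical, not PROACTIVE's actual code. The sketch covers I1, I3 and I4, plus a fail-closed path for I6; I2 and I5 are left out.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Claim:
    """One assertion in a candidate AI output (hypothetical schema)."""
    text: str
    evidence_ids: tuple[str, ...] = ()  # context items the claim cites
    confident: bool = False             # does the claim assert certainty?
    verified: bool = False              # was it checked against a source?


@dataclass
class Verdict:
    allowed: bool
    violations: list[str] = field(default_factory=list)


def validate(claims: list[Claim], context_ids: set[str]) -> Verdict:
    """Apply a subset of the invariants; any violation blocks the output."""
    violations: list[str] = []
    try:
        for i, claim in enumerate(claims):
            # I1: evidence first - a claim must cite at least one context item.
            if not claim.evidence_ids:
                violations.append(f"I1: claim {i} cites no evidence")
            # I4: traceability - every citation must resolve to known context.
            unknown = [e for e in claim.evidence_ids if e not in context_ids]
            if unknown:
                violations.append(f"I4: claim {i} cites unknown sources {unknown}")
            # I3: confidence requires verification.
            if claim.confident and not claim.verified:
                violations.append(f"I3: claim {i} is confident but unverified")
    except Exception as exc:
        # I6: if a check itself fails, block rather than let output through.
        return Verdict(allowed=False, violations=[f"I6: validator error: {exc}"])
    return Verdict(allowed=not violations, violations=violations)


context = {"README.md", "src/auth.py"}
good = Claim("Passwords are hashed with bcrypt", ("src/auth.py",), True, True)
vague = Claim("This change is thread-safe", confident=True)
print(validate([good], context).allowed)  # True
print(validate([vague], context).violations)
```

With this design the validator never edits or softens a claim. It only reports violations, and the caller blocks output whenever `allowed` is false.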

How It Works

The system is implemented as a deterministic pipeline:

$$ \text{Intent} \rightarrow \text{Contract} \rightarrow \text{Validation} \rightarrow \text{Drift Detection} \rightarrow \text{Report} $$

- Cognitive Modeling Protocol (CMP) parses intent from input

- Contract Window establishes constraints and risk

- Validator enforces I1–I6 invariants

- Drift Detector compares intent to actual implementation

- Report Formatter produces structured output with a V&T statement
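The pipeline above can be sketched as a chain of pure functions. This keeps it deterministic: the same intent and the same set of changed files always produce the same report. Everything in the sketch is hypothetical. That includes the path-prefix sensitivity weights used to give the Contract Window a risk level, and the set-difference drift check. The stand-in for the CMP simply treats a declared file list as the parsed intent, whereas the real CMP parses intent from the input.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical sensitivity weights by path prefix (files matching none get 1).
SENSITIVE_PREFIXES = {"auth/": 3, "payments/": 3, ".gitlab-ci": 2}


@dataclass(frozen=True)
class Intent:
    summary: str
    target_files: frozenset[str]


@dataclass(frozen=True)
class Contract:
    allowed_files: frozenset[str]
    risk: int


def parse_intent(summary: str, files: list[str]) -> Intent:
    # Stand-in for the CMP: the declared file list is taken as the intent.
    return Intent(summary, frozenset(files))


def build_contract(intent: Intent) -> Contract:
    # Risk level is the highest sensitivity weight among the targeted files.
    weights = [w for f in intent.target_files
               for prefix, w in SENSITIVE_PREFIXES.items() if f.startswith(prefix)]
    return Contract(intent.target_files, max(weights, default=1))


def detect_drift(contract: Contract, changed: list[str]) -> list[str]:
    # Files changed outside the contract are candidate "phantom work" (I2).
    return sorted(set(changed) - contract.allowed_files)


def run_pipeline(summary: str, intended: list[str], changed: list[str],
                 validator: Callable[[list[str]], list[str]] = lambda changed: []) -> dict:
    intent = parse_intent(summary, intended)       # Intent
    contract = build_contract(intent)              # Contract
    violations = validator(changed)                # Validation (I1-I6 plug in here)
    drift = detect_drift(contract, changed)        # Drift Detection
    blocked = bool(violations or drift)
    return {                                       # Report (plain dict here)
        "intent": intent.summary,
        "risk": contract.risk,
        "violations": violations,
        "drift": drift,
        "status": "BLOCKED" if blocked else "PASS",
    }


print(run_pipeline("Fix login redirect", ["auth/login.py"],
                   ["auth/login.py", "billing/invoice.py"]))
```

In this example the edit to `billing/invoice.py` falls outside the contract, so the run is blocked as drift even though no validator is supplied. A real Report Formatter would render this result as a V&T statement rather than a dict.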

The system is integrated with GitLab CI/CD and exposed as a Duo agent, allowing it to review merge requests in real time.
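A minimal sketch of how a CI job could gate a merge request, assuming the review runs as a script inside a merge request pipeline. `CI_MERGE_REQUEST_IID`, `CI_PROJECT_ID` and `CI_MERGE_REQUEST_TARGET_BRANCH_NAME` are GitLab predefined CI/CD variables. The `review` callable, `mr_context`, `main` and the overall wiring are hypothetical. The property being illustrated is fail-closed gating: the job fails (non-zero exit) unless the review explicitly passes.

```python
from typing import Callable, Mapping, Optional


def mr_context(env: Mapping[str, str]) -> Optional[dict]:
    """Collect merge request identifiers from GitLab's predefined CI variables."""
    iid = env.get("CI_MERGE_REQUEST_IID")
    if not iid:
        return None  # not running in a merge request pipeline
    return {
        "project_id": env.get("CI_PROJECT_ID", ""),
        "mr_iid": iid,
        "target_branch": env.get("CI_MERGE_REQUEST_TARGET_BRANCH_NAME", ""),
    }


def main(env: Mapping[str, str], review: Callable[[dict], Optional[bool]]) -> int:
    """Return the job's exit code; only an explicit pass yields 0."""
    ctx = mr_context(env)
    if ctx is None:
        return 1  # fail closed: nothing identifiable to review
    try:
        passed = review(ctx)  # hypothetical call into the review pipeline
    except Exception:
        passed = None  # review could not run (e.g. LLM unreachable)
    return 0 if passed is True else 1
```

In a job script this would be invoked as `sys.exit(main(os.environ, review))`. To keep non-MR pipelines from failing, the job could be limited to merge request pipelines with a `rules:` entry such as `if: $CI_PIPELINE_SOURCE == "merge_request_event"`.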

What I Learned

- This failure pattern is systemic, not model-specific

- Evaluation alone does not prevent harm — enforcement is required

- Truth must be explicitly structured and verified, not implied

- Safety must be encoded as system behavior, not guidance

Challenges

The primary challenge was enforcing strict constraints without blocking legitimate work. Specific difficulties included:

- Distinguishing incomplete from incorrect

- Handling ambiguity without defaulting to false certainty

- Designing fallback behavior when LLM access is unavailable

- Maintaining alignment between intent and implementation
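One way to keep "incomplete" distinct from "incorrect," and to avoid defaulting to certainty when the model is unreachable, is to give each check more than two outcomes. The sketch below assumes a hypothetical `llm_check(claim, evidence)` callable that returns whether the evidence supports the claim. The four-way `Outcome` enum is an illustration, not PROACTIVE's documented design.

```python
from enum import Enum
from typing import Callable, Optional


class Outcome(Enum):
    PASS = "pass"              # evidence present and supports the claim
    INCOMPLETE = "incomplete"  # no evidence yet: ask for more, assert nothing
    INCORRECT = "incorrect"    # evidence present but does not support the claim
    BLOCKED = "blocked"        # the check itself could not run: fail closed (I6)


def assess(claim: str, evidence: Optional[str],
           llm_check: Callable[[str, str], bool]) -> Outcome:
    if evidence is None:
        # Missing evidence is not proof of error, and not proof of correctness.
        return Outcome.INCOMPLETE
    try:
        supported = llm_check(claim, evidence)
    except Exception:
        # Any failure (e.g. the LLM is unavailable) blocks; it never passes.
        return Outcome.BLOCKED
    return Outcome.PASS if supported else Outcome.INCORRECT
```

Only `Outcome.PASS` should let output through. `INCOMPLETE` can prompt the developer for more context, which avoids blocking legitimate but under-specified work outright.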

Another challenge was avoiding overclaiming. The system explicitly separates what is verified from what is not through V&T statements.
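The separation a V&T statement makes can be sketched as a formatter that refuses to merge the two categories. This assumes a finding counts as verified only when it carries the evidence it was checked against. The `Finding` type, the `vt_statement` function and the section labels are hypothetical; the real report layout is not described here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    text: str
    evidence: Optional[str] = None  # source the finding was checked against


def vt_statement(findings: list[Finding]) -> str:
    """Render verified and unverified findings as two explicit sections."""
    verified = [f for f in findings if f.evidence is not None]
    unverified = [f for f in findings if f.evidence is None]
    lines = ["VERIFIED:"]
    lines += [f"- {f.text} [source: {f.evidence}]" for f in verified] or ["- (none)"]
    lines.append("UNVERIFIED (not asserted as true):")
    lines += [f"- {f.text}" for f in unverified] or ["- (none)"]
    return "\n".join(lines)


print(vt_statement([
    Finding("Login handler validates tokens", "src/auth.py"),
    Finding("Change improves performance"),
]))
```

An empty section is printed as "(none)" rather than omitted, so a reader can always tell that nothing was verified, as opposed to the section having been dropped.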

Why It Matters

PROACTIVE addresses one instance of a broader problem.

It is a working component within a larger system, The Living Constitution (TLC), which extends these principles to multi-agent governance, feedback loops, and adaptive safety rules.

Together, they move AI safety from evaluation toward enforceable system design.
