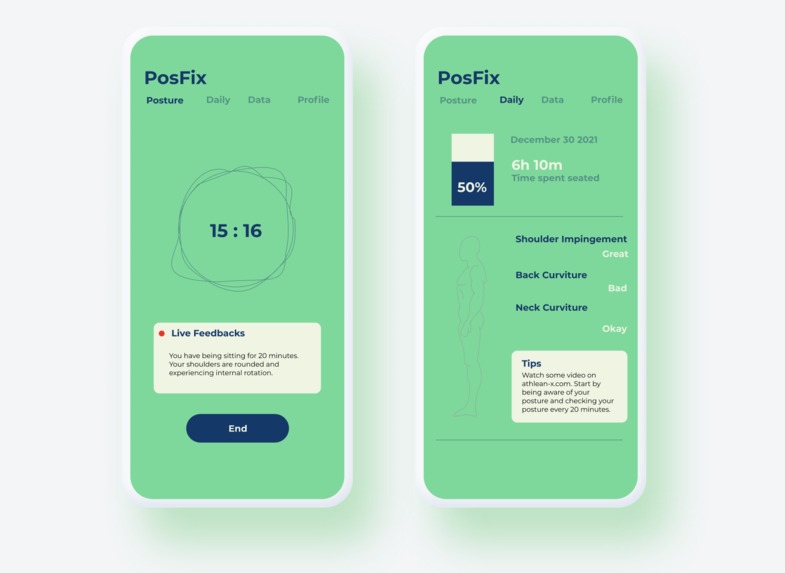

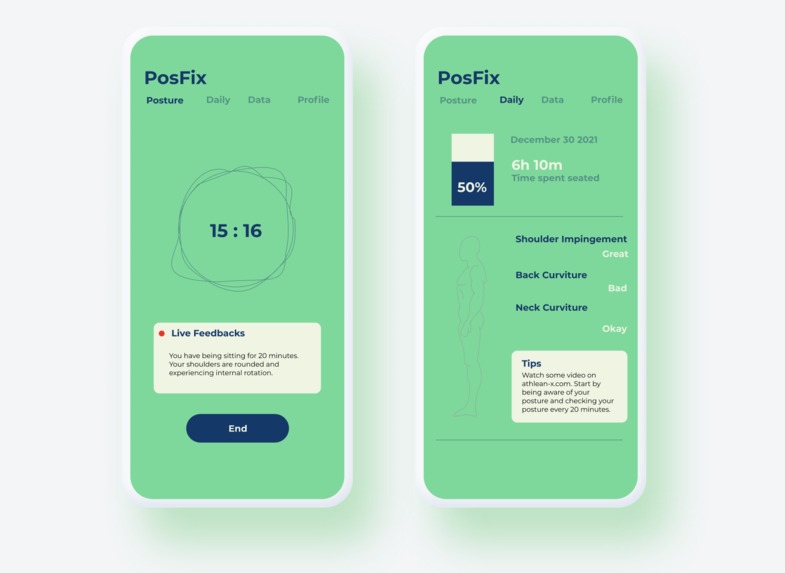

Frontend UI/UX design created using Figma

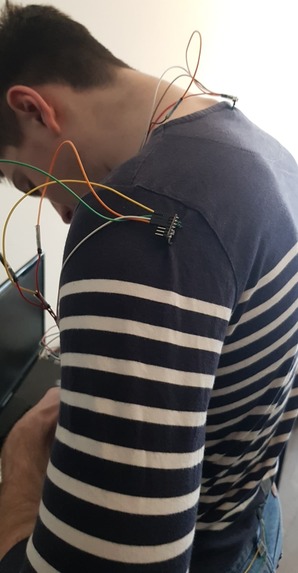

The hardware component for this project (a tight shirt with sensors attached)

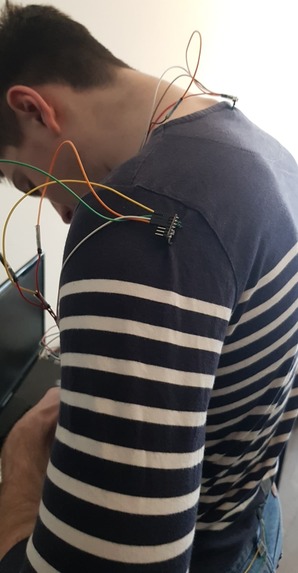

The hardware component for this project (a tight shirt with sensors attached)

Screenshot of our application on the posture page with live notifications on your posture

Daily page with an overview of your posture throughout the day

Inspiration

Due to COVID-19 and online school, the number of hours we spend seated has increased dramatically. From a human physiology perspective, sitting is not a natural position for our body, so prolonged sitting can lead to significant long-term health consequences. Bad posture can amplify these effects on our back and neck, and many of us don’t realize that we have suboptimal posture. This is why we created PosFix. We wanted to create a device that allows users to monitor their posture, become more aware of it, and take the necessary measures to correct it. Our device would allow users to limit the damage associated with bad posture, such as back pain, headaches, and stress/anxiety.

What it does

We provide a shirt that has been enhanced with sensors and a computer system, along with an app; the two work in tandem to model and record the position of vital points on your body. To get a good model of posture, we focused on four main areas: the right and left shoulders, the neck, and the lower back. PosFix monitors the orientation of these four points relative to one another in order to detect suboptimal positioning. In addition, we monitor the curvature of the back to determine whether the user is sitting up straight and to ensure a natural curvature of the lower back, which research indicates is important to healthy posture.

The user starts a session on our app, which signals the Raspberry Pi to start recording sensor values to Firebase's Cloud Firestore database. At the end of a session, this information is used to calculate a heuristic, which is recorded in our app.
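As a rough illustration of what an end-of-session heuristic could look like, the sketch below scores a session as the percentage of samples in which every monitored point stays close to a calibrated baseline and the flex reading stays in a target range. This is not the formula PosFix uses: the `Sample` fields, the tolerance values, and the function names are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One hypothetical sensor reading; field names are illustrative only."""
    pitch_deg: dict  # monitored point name -> forward tilt in degrees
    flex: float      # flex sensor reading, assumed normalized to 0..1


# Hypothetical tolerances, not values taken from the project.
MAX_TILT_DEG = 15.0
FLEX_RANGE = (0.3, 0.7)


def is_good(sample: Sample, baseline: dict) -> bool:
    """True if every point is within tolerance of the calibrated baseline."""
    tilt_ok = all(
        abs(sample.pitch_deg[name] - baseline[name]) <= MAX_TILT_DEG
        for name in baseline
    )
    flex_ok = FLEX_RANGE[0] <= sample.flex <= FLEX_RANGE[1]
    return tilt_ok and flex_ok


def session_score(samples: list, baseline: dict) -> float:
    """Percentage (0..100) of samples classified as good posture."""
    if not samples:
        return 0.0
    good = sum(is_good(s, baseline) for s in samples)
    return 100.0 * good / len(samples)
```

A session with one upright sample and one slouched sample would score 50 under these assumed thresholds.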

How we built it

There were two main components to our project: the hardware side and the software side. On the hardware side, the orientation of the shoulders, neck, and lower back was measured using four IMU (inertial measurement unit) sensors, each of which contains an accelerometer, a gyroscope, and a magnetometer. The curvature of the back was measured using a flex sensor. Processing orientation in space was probably one of the bigger challenges. It involved an algorithm that takes the accelerometer, gyroscope, and magnetometer data and passes it through a Kalman filter to give an accurate estimate of orientation in space relative to the earth. The filter works to eliminate drift caused by noise in the sensors. The data from all four IMUs is processed in real time on the Raspberry Pi and sent to the database along with the flex sensor data.
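To give a feel for how a Kalman filter suppresses drift, here is a minimal single-axis sketch that fuses a gyroscope rate with a tilt angle derived from the accelerometer. It is a simplification, not the project's filter: it tracks one angle rather than full 3D orientation, it ignores the magnetometer, and the class name, noise parameters `q` and `r`, and axis convention in `accel_pitch` are assumptions.

```python
import math


class AngleKalman:
    """Scalar Kalman filter whose state is a single tilt angle in degrees."""

    def __init__(self, q: float = 0.01, r: float = 4.0):
        self.angle = 0.0  # current angle estimate (degrees)
        self.p = 1.0      # variance of the estimate
        self.q = q        # assumed process noise variance per second (gyro)
        self.r = r        # assumed measurement noise variance (accelerometer)

    def update(self, gyro_rate: float, accel_angle: float, dt: float) -> float:
        # Predict: integrate the gyro rate; uncertainty grows over time.
        self.angle += gyro_rate * dt
        self.p += self.q * dt
        # Correct: blend in the accelerometer angle, weighted by the gain.
        k = self.p / (self.p + self.r)
        self.angle += k * (accel_angle - self.angle)
        self.p *= 1.0 - k
        return self.angle


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Pitch in degrees from the gravity vector (one common axis convention)."""
    return math.degrees(math.atan2(-ax, math.sqrt(ay * ay + az * az)))
```

The gyroscope alone would drift because any bias is integrated over time, while the accelerometer alone is noisy; the correction step keeps pulling the integrated angle back toward the gravity reference. A full orientation filter would do the same in 3D, with the magnetometer providing the heading reference.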

On the software side, we built our mobile app using React Native because of its similarity to React and its ability to create cross-platform mobile applications. In the app, we process information from the cloud in real time and display it to the user in a simple but useful way.

Challenges we ran into

Because the hackathon was online this year, we ordered our own hardware parts. However, shipping delays meant the parts took longer than expected to arrive, which left us only a few days to build and test the hardware component.

We also originally wanted to measure position in addition to orientation, but this proved infeasible for a number of reasons and was eventually scrapped. First, there is too much noise inherent in working with IMUs, so position estimated over a long period of time is never accurate and needs to be periodically corrected using other sensors or information. Second, we had performance issues caused by the relatively low-power ARM chip on the Raspberry Pi and by the fact that Python can’t inherently take advantage of multiple cores to distribute the load of processing multiple sensors. There are ways to remediate the performance concerns, but they weren’t a problem when we were only considering orientation.
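The position problem can be seen with a small sketch: position comes from integrating acceleration twice, so even a tiny constant error in the accelerometer grows quadratically with time. The function name and the numbers below are assumptions chosen for illustration, not measurements from our sensors.

```python
def integrate_position(accels: list, dt: float) -> float:
    """Double-integrate 1D acceleration samples (m/s^2) into a position (m)."""
    velocity = 0.0
    position = 0.0
    for a in accels:
        velocity += a * dt         # first integration: acceleration -> velocity
        position += velocity * dt  # second integration: velocity -> position
    return position


# A stationary sensor with an assumed bias of 0.05 m/s^2, sampled at 100 Hz.
bias = 0.05
dt = 0.01
after_10_s = integrate_position([bias] * 1000, dt)
after_60_s = integrate_position([bias] * 6000, dt)
```

Here the error follows roughly `0.5 * bias * t**2`, so it is about 2.5 m after 10 seconds and about 90 m after one minute even though the sensor never moved. Orientation avoids this because gravity and the magnetic field give an absolute reference to correct against.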

Since this was our first project working with React Native, we ran into a few issues connecting the application to the Raspberry Pi through Cloud Firestore to allow bidirectional data transmission.

Accomplishments that we're proud of

From the five sensors to the mobile app, we are proud that we were able to integrate all the components together. Beyond the work on the various hardware and software components, we also faced challenges such as delays in hardware shipment and the limited processing speed of the Raspberry Pi. These obstacles required effective planning and communication to overcome. In the end, we were proud to see our sensors retrieve accurate information and display it on our mobile app in real time. There was a lot that went into both the hardware and software sides, so we’re also proud that we managed to get so much done in basically two and a half days.

What's next for PosFix

In the future, we would like to explore new methods of getting even more accurate orientation data to model posture. Due to the processing limitations of the Raspberry Pi, we were not able to get accurate enough position data from the IMUs. We have also explored the idea of using machine learning to help us interpret the sensor data and classify good posture from bad posture.

The hardware also needs to be refined. This includes using something more appropriate than a Raspberry Pi for processing the data, such as a platform running a real-time operating system. The orientation data can be improved this way, and further processing can be done on the device. Also, right now the Pi needs to be plugged into the wall, which is inconvenient. These fixes would help improve the usability and usefulness of PosFix.

Built With

- figma

- firebase

- flex-sensors

- imu

- javascript

- raspberry-pi

- react-native
