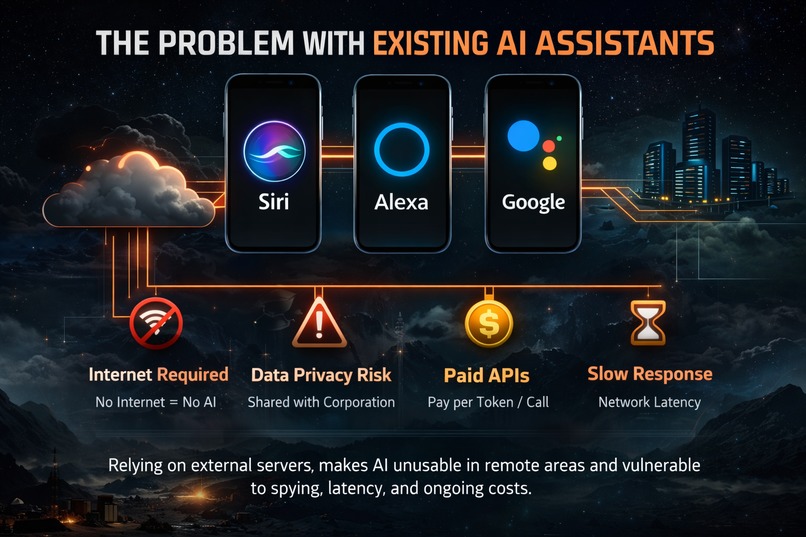

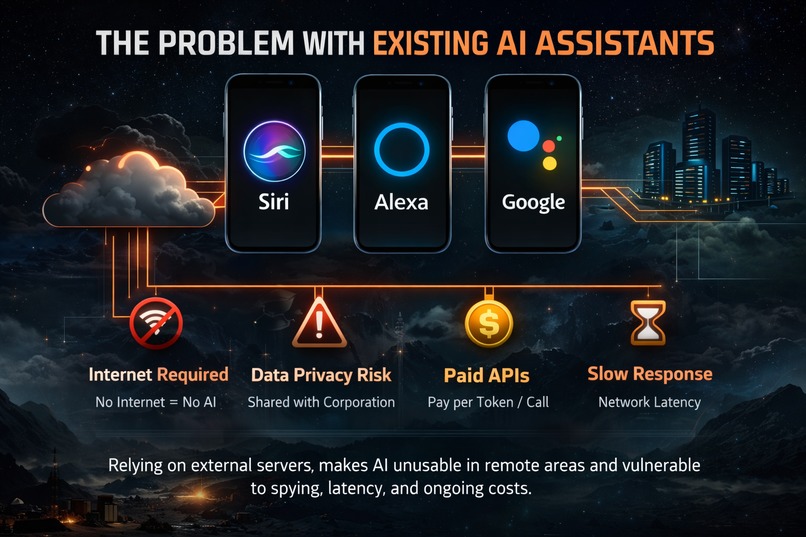

Cloud AI fails without internet and risks user privacy.

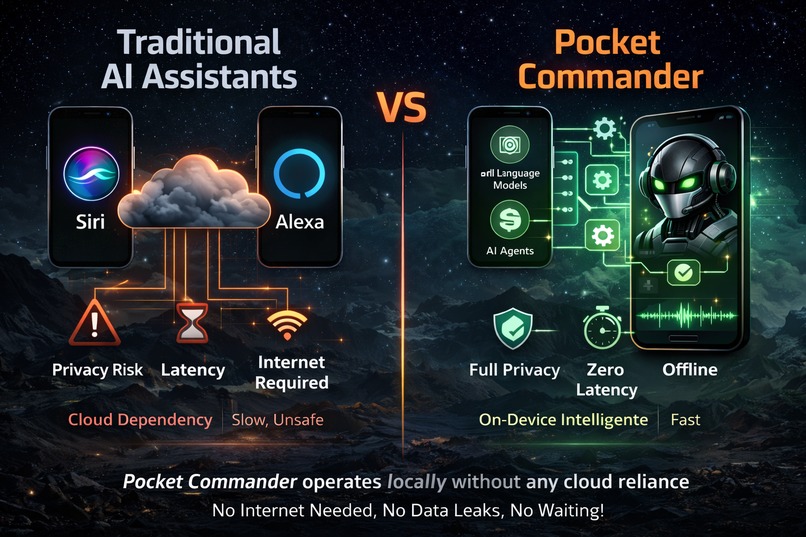

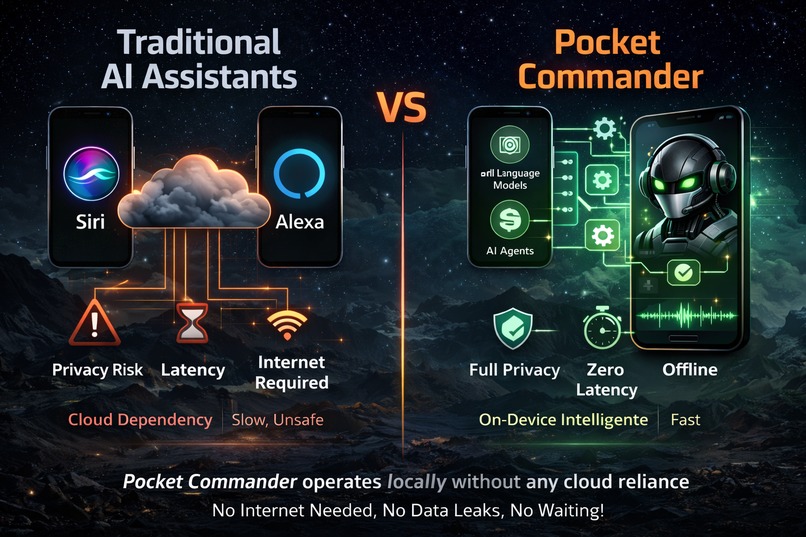

Traditional assistants vs Pocket Commander: cloud dependence vs on-device intelligence.

Pocket Commander: an offline AI assistant with zero cloud and full privacy.

Emergency help powered by on-device AI when the network is unavailable.

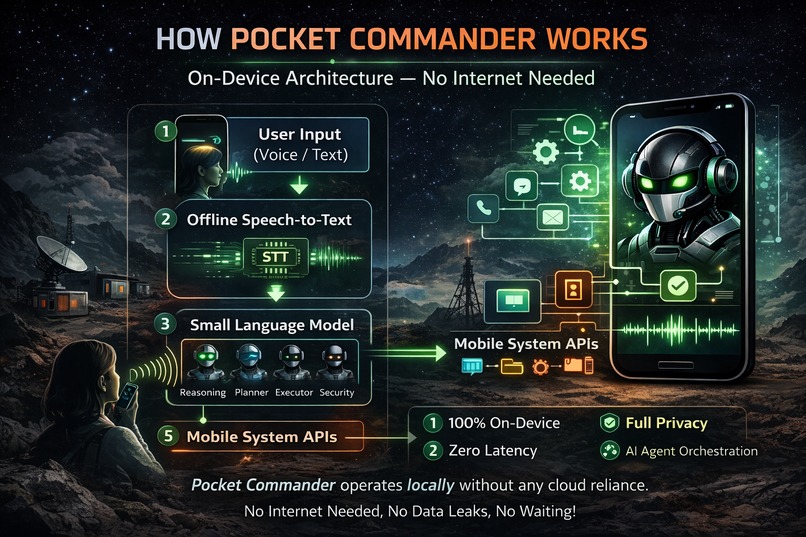

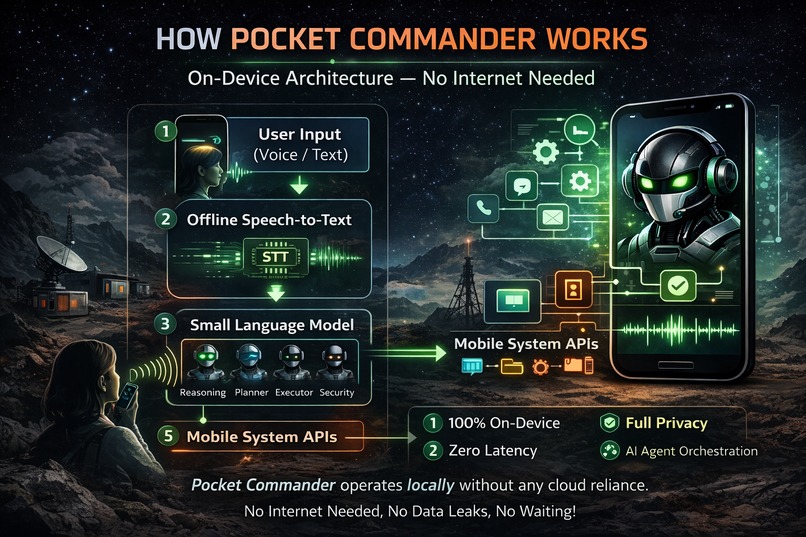

How Pocket Commander works using on-device models and AI agents.

Offline task execution: AI compiles projects without internet access.

Inspiration

Most AI assistants today depend on the cloud. They need constant internet access, send user data to remote servers, and suffer from latency and privacy risks. In many real-world situations such as subways, rural areas, flights, or disaster zones, the internet is unreliable or unavailable.

We were inspired by the idea of building an AI assistant that does not “rent intelligence” from the cloud, but instead carries intelligence inside the device itself. The “Kill The Cloud” challenge motivated us to rethink how AI agents could work when there is no network, no API calls, and no external servers.

Our goal was to design a mobile AI system that could understand user commands and perform actions locally, while keeping user data completely private.

What it does

Pocket Commander is an offline AI mobile assistant that lets users control their phone with voice or text commands. It can open apps, send messages, set alarms, organize files, and create notes, all without internet access.

Unlike traditional assistants, Pocket Commander runs fully on-device using small language models and an AI agent system. No data is sent to any cloud service, and no external API is required. This makes it fast, private, and reliable even in disconnected environments.

How we built it

We designed Pocket Commander using an on-device AI pipeline:

1. User input is taken through voice or text.

2. Voice input is converted into text using an offline speech-to-text model.

3. The request is processed by a small language model running locally.

4. A multi-agent system (Reasoning Agent, Planner Agent, Executor Agent, and Security Agent) collaborates to understand the intent and decide what action should be taken.

5. The selected action is executed through mobile system APIs such as application launch, file handling, alarms, and notifications.

6. The result is returned to the user with no network round trip.

The RunAnywhere SDK is used as the core runtime to manage local model execution, memory, and agent orchestration.
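The flow described above can be sketched in a few lines of Python. This is only an illustration of how the four agent roles hand work to each other, not our actual implementation and not the RunAnywhere SDK API. The keyword matcher stands in for the local language model, and every name here (`Intent`, `Step`, `handle`, `FAKE_APIS`, the action names) is a hypothetical chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Intent:
    action: str                      # e.g. "set_alarm" (hypothetical name)
    args: Dict[str, str] = field(default_factory=dict)


@dataclass
class Step:
    api: str                         # name of a hypothetical device API wrapper
    args: Dict[str, str]


def reasoning_agent(text: str) -> Intent:
    """Stand-in for the local language model: plain keyword matching."""
    lowered = text.lower().strip()
    if "alarm" in lowered:
        # Assume the time is the last word, e.g. "set an alarm for 07:30".
        return Intent("set_alarm", {"time": lowered.split()[-1]})
    if lowered.startswith("open "):
        return Intent("open_app", {"app": lowered[len("open "):].strip()})
    return Intent("unknown")


def planner_agent(intent: Intent) -> List[Step]:
    """Turn an intent into an ordered list of device actions."""
    if intent.action == "unknown":
        return []
    return [Step(api=intent.action, args=intent.args)]


def security_agent(step: Step, allowed: Set[str]) -> bool:
    """Approve only steps whose API is on the allowlist."""
    return step.api in allowed


def executor_agent(step: Step, apis: Dict[str, Callable[..., str]]) -> str:
    """Call the device API wrapper registered for this step."""
    return apis[step.api](**step.args)


def handle(text: str, apis: Dict[str, Callable[..., str]], allowed: Set[str]) -> List[str]:
    """Run one command through reasoning, planning, security, and execution."""
    results = []
    for step in planner_agent(reasoning_agent(text)):
        if not security_agent(step, allowed):
            results.append(f"blocked: {step.api}")
            continue
        results.append(executor_agent(step, apis))
    return results or ["sorry, I did not understand that"]


# Fake device APIs so the sketch runs anywhere, with no phone attached.
FAKE_APIS = {
    "set_alarm": lambda time: f"alarm set for {time}",
    "open_app": lambda app: f"opened {app}",
}

print(handle("Set an alarm for 07:30", FAKE_APIS, {"set_alarm", "open_app"}))
print(handle("open camera", FAKE_APIS, {"set_alarm"}))
```

Keeping each role as a separate function means the security check sits between planning and execution, so no planned step reaches a device API without being reviewed first.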

Challenges we ran into

Designing an AI system that works entirely offline required careful consideration of mobile hardware limits. Large models are not suitable for phones, so we had to focus on small language models and quantization strategies to keep memory usage low while preserving reasoning quality.
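To make the memory argument concrete, here is a rough back-of-the-envelope calculation. The 8-billion-parameter figure is purely illustrative and is not a statement about the model size we shipped. The estimate covers weights only and ignores the KV cache, activations, and runtime overhead, and real quantization formats store extra scale data, so actual usage is somewhat higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


# Illustrative model size (an assumption for this example only).
PARAMS = 8e9

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: about {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Going from 16-bit to 4-bit weights cuts the weight footprint to a quarter, which is the difference between a model that cannot fit in a phone's RAM and one that plausibly can.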

Another challenge was ensuring safety. Since the assistant can execute system-level actions, we designed a Security Agent that prevents unsafe or restricted operations and requires user permissions for sensitive tasks.
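One way to picture such a Security Agent is as a three-way policy: allow, ask the user first, or refuse. The sketch below is a simplified illustration under our own assumptions. The action names and which tier each belongs to are hypothetical, and a real policy would also consider arguments and Android permission state.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    ALLOW = "allow"
    CONFIRM = "confirm"   # ask the user before running
    DENY = "deny"


# Hypothetical action names and tiers, for illustration only.
SAFE_ACTIONS = {"open_app", "set_alarm", "create_note"}
SENSITIVE_ACTIONS = {"send_message", "delete_file"}


def review(action: str) -> Decision:
    """Classify an action; anything unrecognised is denied by default."""
    if action in SAFE_ACTIONS:
        return Decision.ALLOW
    if action in SENSITIVE_ACTIONS:
        return Decision.CONFIRM
    return Decision.DENY


def run_if_permitted(
    action: str,
    execute: Callable[[str], str],
    ask_user: Callable[[str], bool],
) -> str:
    """Execute the action only if the policy, and the user if needed, agree."""
    decision = review(action)
    if decision is Decision.DENY:
        return f"refused: {action}"
    if decision is Decision.CONFIRM and not ask_user(action):
        return f"cancelled: {action}"
    return execute(action)
```

Denying by default matters here: if the language model invents an action the policy has never seen, it is refused instead of being passed through to the system.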

Balancing performance, privacy, and real-world usability without relying on cloud services was the main challenge, and also the most valuable learning experience.

What we learned

This project taught us how powerful on-device AI can be when combined with efficient models and agent-based design. We learned how to think in terms of edge computing instead of cloud computing, and how to architect systems that are resilient, private, and fast.

We also learned that AI agents can make complex tasks more reliable by separating reasoning, planning, execution, and safety into different roles instead of using a single monolithic model.

Why this fits the “Kill The Cloud” vision

Pocket Commander directly aligns with the core goals of the challenge:

• True Privacy – All processing happens on the device, and no user data leaves the phone.

• Offline Edge – It works in environments with no internet connectivity.

• Zero Latency – There is no network delay because all intelligence runs locally.

This makes Pocket Commander a practical example of the Local AI Revolution: an assistant that lives on the chip, not on the server.

Built With

- ai-agent-orchestration

- android-accessibility-api

- android-system-apis

- deepseek-r1-distilled-model

- llama-3-(small-language-model, quantized)

- on-device-inference-runtime

- runanywhere-sdk

- whisper-(offline-speech-to-text)
