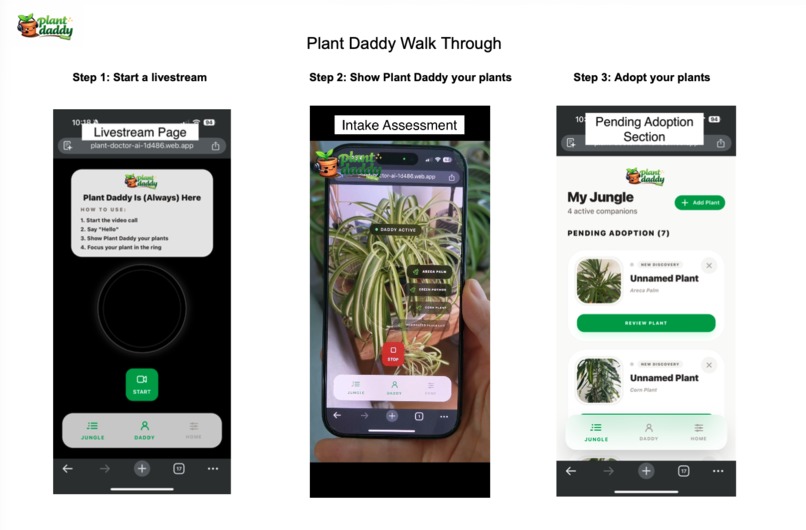

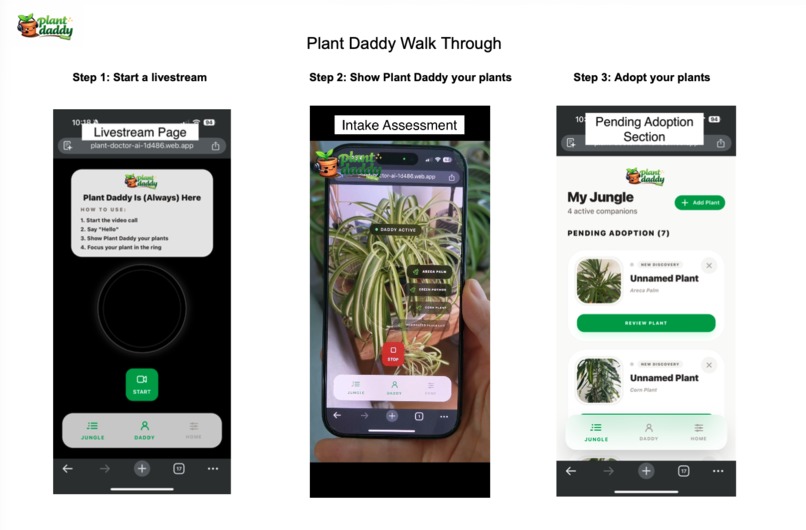

Start a livestream and show Plant Daddy your plants. Review and adopt them.

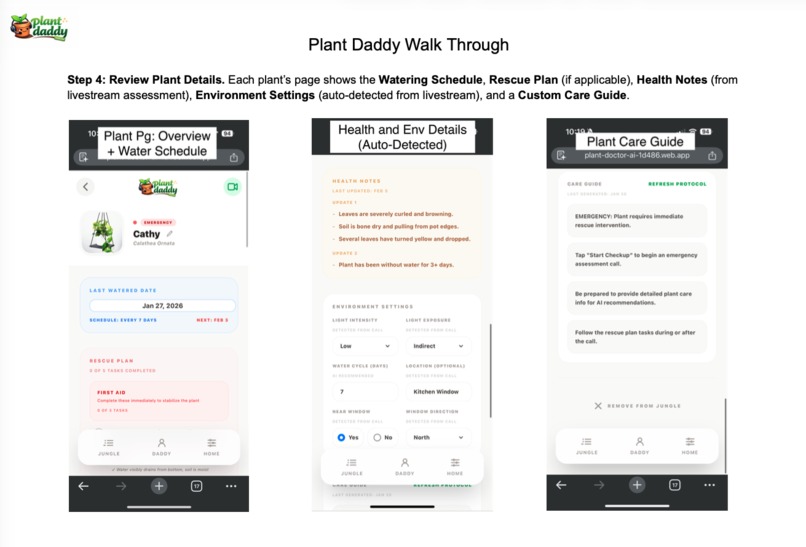

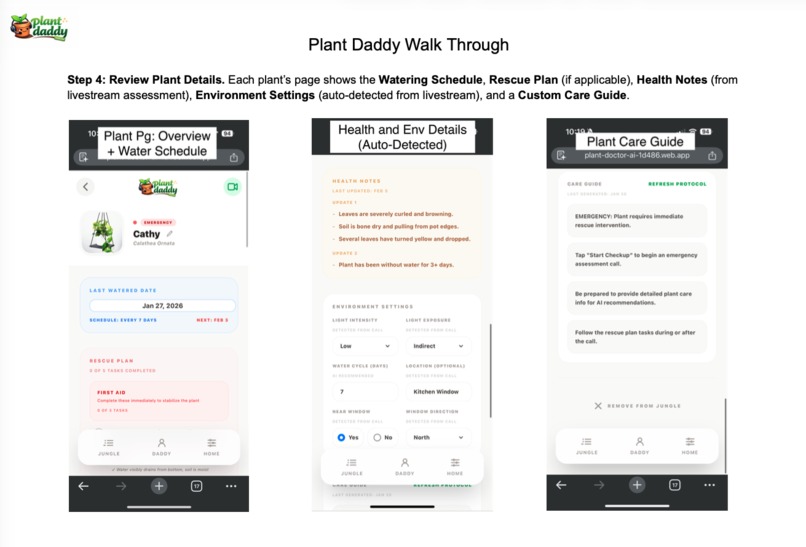

Plant Daddy creates each plant's watering schedule, health notes, environment settings, and care plan.

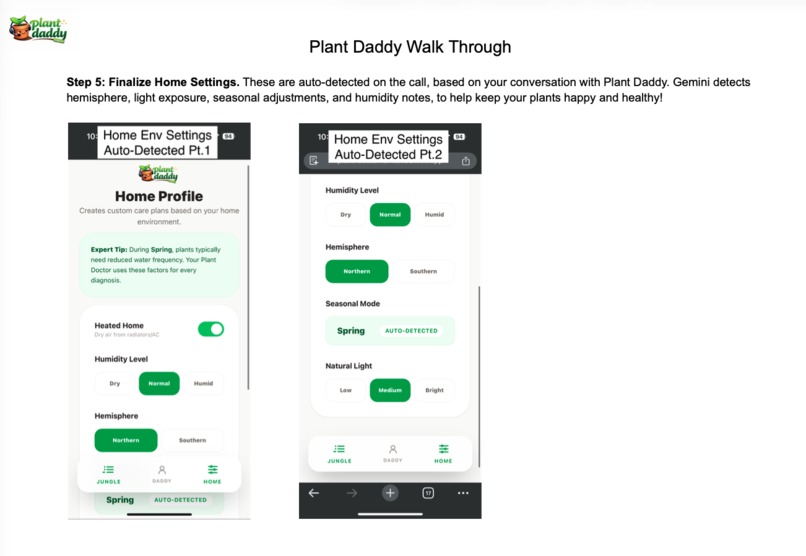

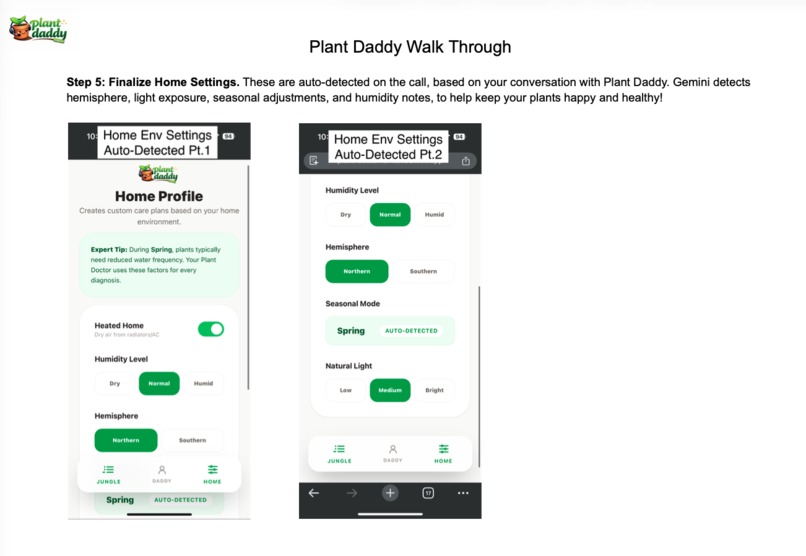

Plant Daddy also detects your home settings.

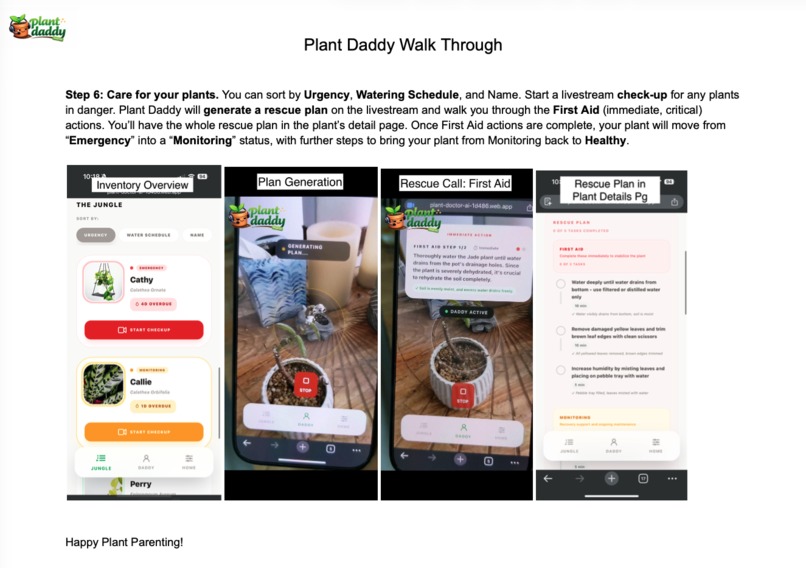

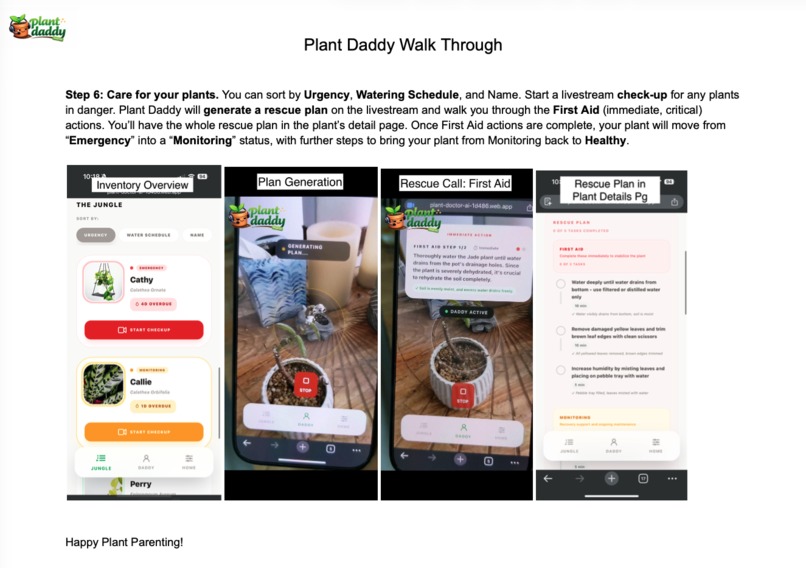

For plants in danger, start a check-up and Plant Daddy will generate a rescue plan and walk you through the First-Aid actions.

Inspiration

A rap sheet of my plant parent failures. Until now, the best we had was uploading images to Reddit or the Planta app. Photo scans can ID plants, but they can’t fully assess health, understand my specific context, or generate a truly personalized plan.

What it does

Gemini 3 unlocked livestream video and voice. Start a livestream and show Plant Daddy your plants (soil, leaves, surroundings, etc.). Multi-modal reasoning gives him the ability to:

- Generate health assessments, care plans, and rescue plans in real time

- Detect environment-specific contexts (light exposure, window proximity, season, humidity cues)

- Call out subtle issues like soil condition, mineral build-up from local water quality, even dusty leaves (ouch)
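To make the outputs above concrete, here is a minimal sketch of what a per-plant record and an "in danger" filter might look like. Every type, field, and function name here (`PlantProfile`, `HealthStatus`, `plantsNeedingCheckup`, and so on) is a hypothetical illustration, not Plant Daddy's actual schema.

```typescript
// Hypothetical health scale; the real app may use different labels.
type HealthStatus = "thriving" | "stable" | "stressed" | "in_danger";

// Environment cues mentioned in the write-up (light, window proximity, season).
interface EnvironmentSettings {
  lightExposure: "low" | "medium" | "bright_indirect" | "direct";
  nearWindow: boolean;
  season: "spring" | "summer" | "fall" | "winter";
}

// One record per adopted plant. Field names are assumptions.
interface PlantProfile {
  name: string;
  health: HealthStatus;
  healthNotes: string[];
  wateringIntervalDays: number;
  environment: EnvironmentSettings;
  carePlan: string[];
}

// Returns the plants that should be offered a check-up and rescue plan.
function plantsNeedingCheckup(plants: PlantProfile[]): PlantProfile[] {
  return plants.filter((plant) => plant.health === "in_danger");
}
```

A home screen could call `plantsNeedingCheckup` on the stored profiles to decide which plants get a check-up prompt.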

How I built it

Started in Google AI Studio to test the livestream, then migrated to Antigravity, with help from Gemini CLI, to create the web app. Firebase handles the database and hosting. Gemini 3 powers:

- Live video + audio streaming to continuously assess plant condition and surroundings

- Vision-based reasoning to infer light levels, placement, and environmental factors

Challenges I ran into

- Video + Audio livestream time limits: the current ~2-minute cutoff without context compression forces tradeoffs between long-form observation and memory continuity

- Agent Initiation: the WebSocket + backend proxy setup adds latency, so having the agent speak first was unreliable. Opted for the user to initiate the conversation to ensure a reliable call/response flow.

- For now, only gemini-live-2.5-flash-preview-native-audio supports the bi-directional streaming I need, so the livestream runs on that model while the rest of the calls use Gemini 3
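One way to work around the ~2-minute cutoff is to track elapsed session time, wrap up shortly before the limit, and carry a short text summary into the next session. The sketch below only illustrates that idea. The 15-second margin, the `SessionNotes` shape, and the function names are assumptions, and this is not necessarily how Plant Daddy handles it.

```typescript
// The ~2-minute limit comes from the write-up; the margin is an assumed value.
const SESSION_LIMIT_MS = 2 * 60 * 1000;
const WRAP_UP_MARGIN_MS = 15 * 1000;

type SessionAction = "continue" | "wrap_up" | "restart";

// Decide what the client should do given how long the live session has run.
function nextAction(
  elapsedMs: number,
  limitMs: number = SESSION_LIMIT_MS,
  marginMs: number = WRAP_UP_MARGIN_MS,
): SessionAction {
  if (elapsedMs >= limitMs) return "restart";
  if (elapsedMs >= limitMs - marginMs) return "wrap_up";
  return "continue";
}

// Hypothetical notes collected during a session.
interface SessionNotes {
  plantName: string;
  observations: string[];
}

// Build a short text prompt that seeds the next session with the most
// recent observations, as a crude stand-in for context compression.
function buildHandoffPrompt(notes: SessionNotes, maxObservations: number = 5): string {
  const recent = maxObservations > 0 ? notes.observations.slice(-maxObservations) : [];
  const bullets = recent.map((observation) => `- ${observation}`).join("\n");
  return `Continuing a check-up of ${notes.plantName}. Earlier observations:\n${bullets}`;
}
```

Keeping only the last few observations trades completeness for a small prompt. That is the same tradeoff between long-form observation and memory continuity described above.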

Accomplishments that I'm proud of

- Plant Daddy has a dash of personality, from calling a peace lily “a little dramatic” to explaining why my jade plant’s mineral buildup is caused by LA water quality.

- Already in demand among testers, family, and friends who need this now

What I learned

- Agent Initiation Speed: latency is still ~5-8 seconds for the first response. Had to set up a backend proxy to avoid exposing the API key in the client bundle, which adds latency.

- Audio Sensitivity: noisy environments pollute the input. Users must be in a quiet environment
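The proxy idea can be sketched as a server-side function that attaches the key to each forwarded request, so the browser never sees it. All names below are hypothetical. In particular, the `x-api-key` header and the `upstreamUrl` value are placeholders rather than the Gemini API's actual authentication scheme, and a real proxy for live streaming would forward WebSocket frames instead of building one-off requests.

```typescript
// What the browser sends to the proxy. Note there is no API key field.
interface ClientMessage {
  sessionId: string;
  mimeType: string;
  dataBase64: string;
}

// Server-only configuration. In a deployment this would come from
// environment variables or a secret store, never from the client bundle.
interface ServerConfig {
  apiKey: string;
  upstreamUrl: string;
}

interface UpstreamRequest {
  url: string;
  headers: Record<string, string>;
  body: string;
}

// Build the request the proxy forwards upstream, adding the key server-side.
function buildUpstreamRequest(msg: ClientMessage, cfg: ServerConfig): UpstreamRequest {
  if (cfg.apiKey.length === 0) {
    throw new Error("API key is not configured on the server");
  }
  return {
    url: cfg.upstreamUrl,
    headers: {
      "content-type": "application/json",
      "x-api-key": cfg.apiKey, // placeholder header name (assumption)
    },
    body: JSON.stringify({
      sessionId: msg.sessionId,
      mimeType: msg.mimeType,
      data: msg.dataBase64,
    }),
  };
}
```

The extra hop is where the added latency comes from: every frame travels from the browser to the proxy and then to the model, instead of going directly.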

What's next for Plant Daddy

Shipping Plant Daddy as a mobile app:

- Longer livestream calls as context windows improve

- Integration with other APIs such as Nest, Google Home, and weather services to provide environmental cues.

Built With

- antigravity

- claude-code

- firebase

- firebase-functions

- firestore

- gemini

- gemini-cli

- google-ai-studio

- google-cloud-run

- javascript

- next.js

- node.js

- react

- typescript

- vite
