-

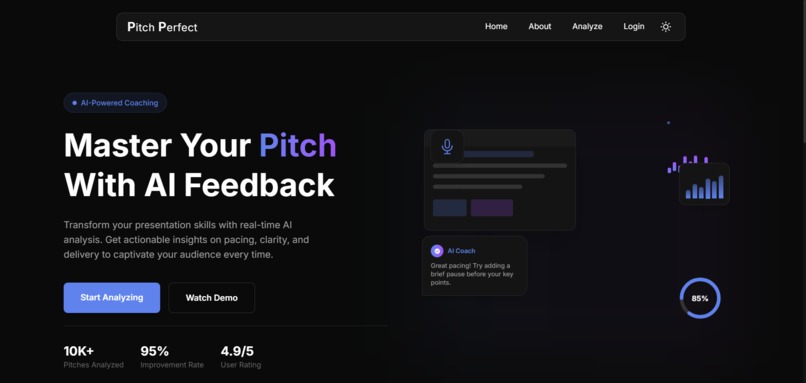

The home/landing page with a basic description of the project

-

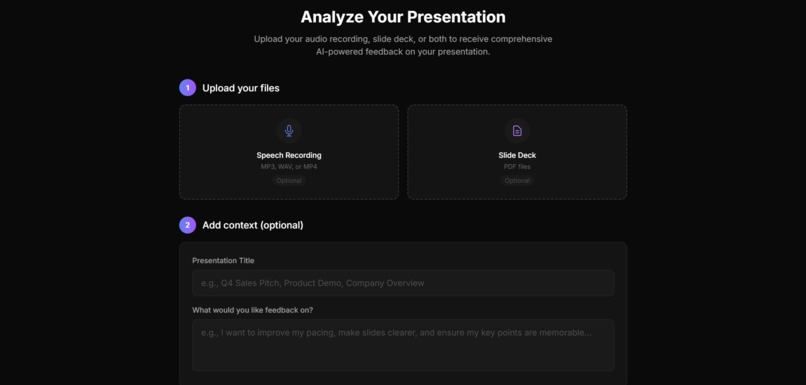

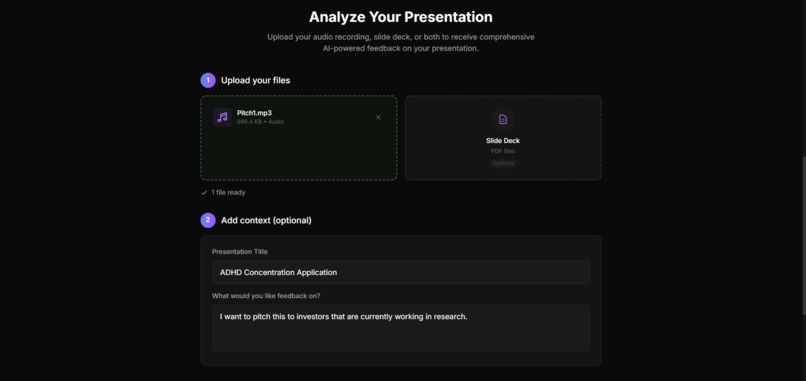

User input area for audio, slide deck and target audience specifications

-

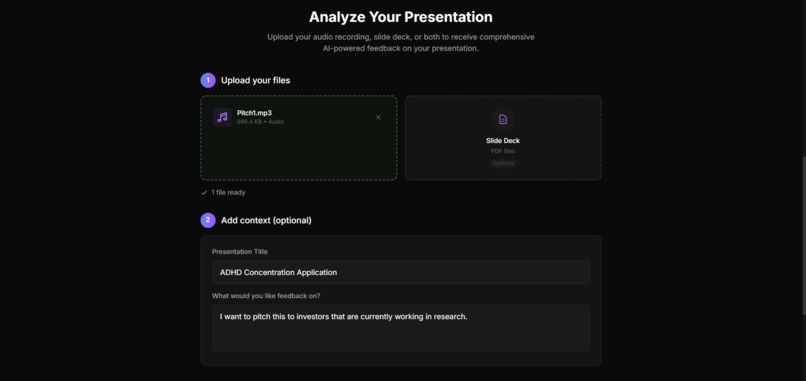

Completed user input

-

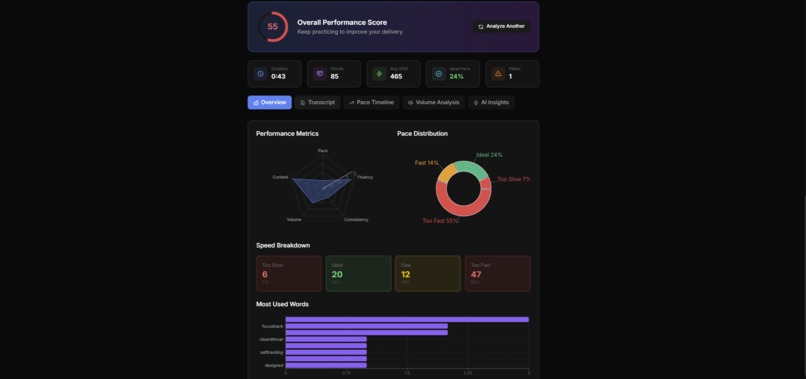

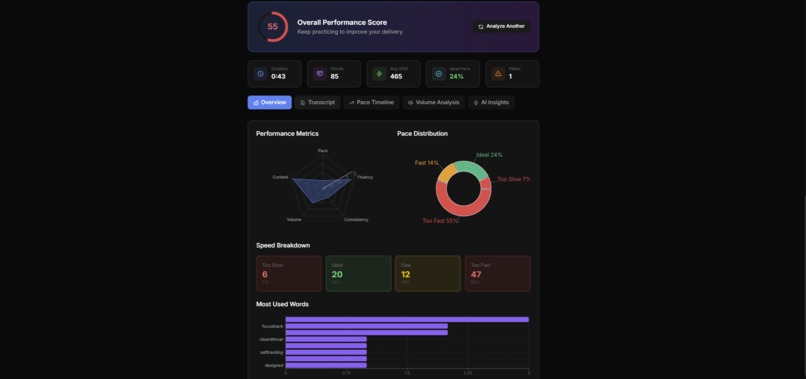

Basic overview of presentation and metrics

-

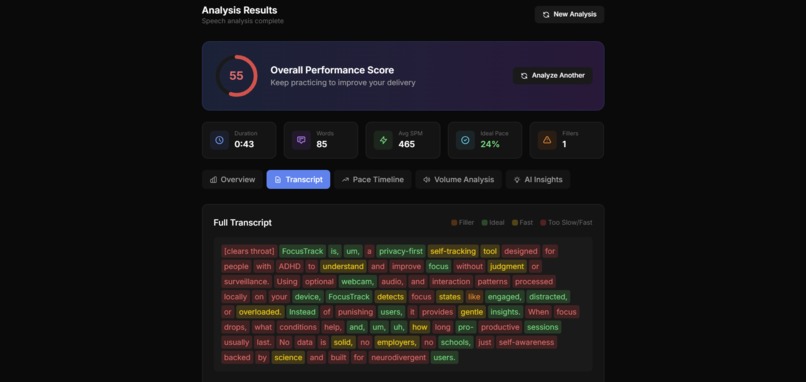

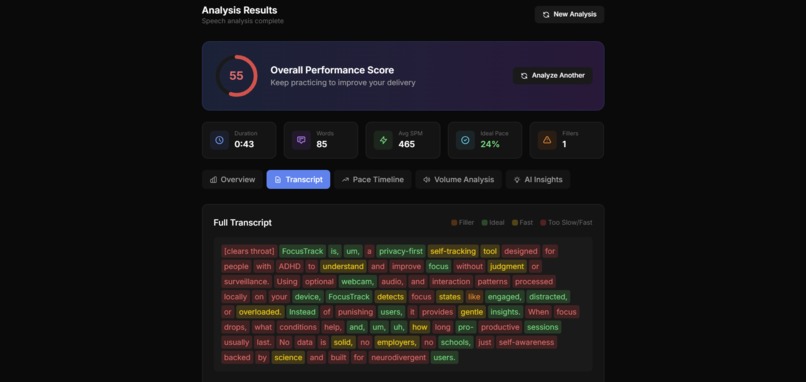

Transcript with a visual representation of where the user is speaking too quickly, at an ideal pace, or too slowly

-

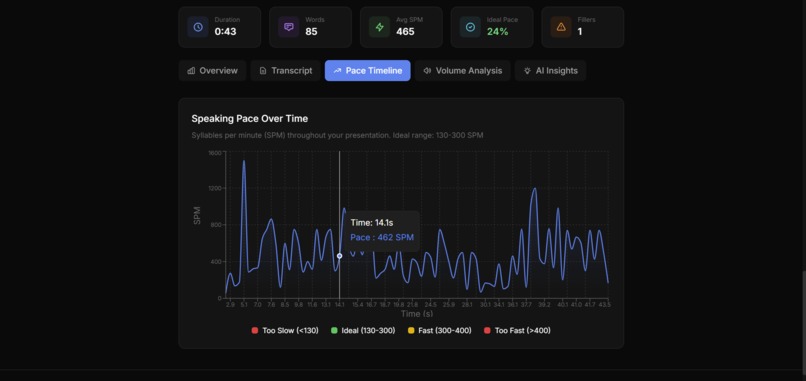

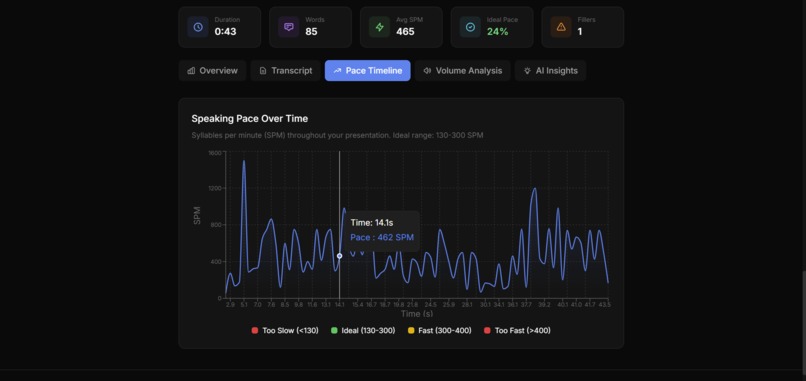

Interactive graph of syllables/time(min)

-

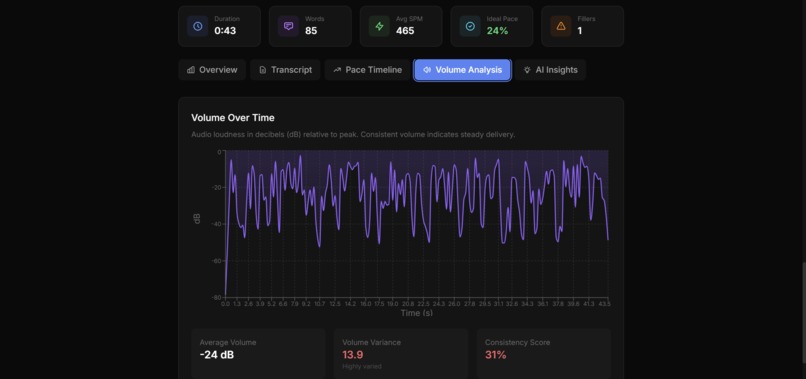

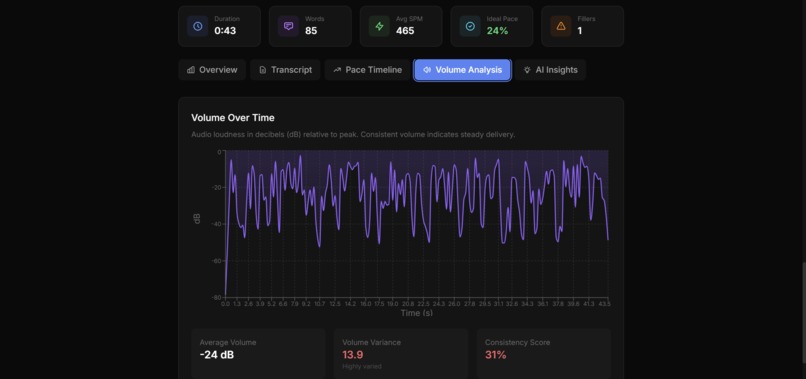

Interactive graph of dB/time

Inspiration

A great pitch can be the difference between getting funded, winning a grant, closing a sale, or flopping a hackathon demo. As hackers (and aspiring founders), we kept noticing the same problem: we spend lots of time building (30+ hours in this case) but much less time focusing on how to communicate impact. We have access to so many tools for shipping reliable code fast, but almost nothing for shipping the story.

What it does

Pitch Perfect helps hackers, founders, and builders level up their pitch delivery and pitch content with fast, structured feedback that focuses on both:

- How you sound (pacing, clarity signals, filler words, delivery consistency), and

- What you're saying / showing (high-level structure and feedback on your slide deck).

Users can upload MP3 files and pitch decks directly to our platform, or use the built-in recording feature if they prefer. From there, our AI-enabled pipeline generates instant, data-driven feedback on what is working, what is unclear, and what to fix next so you can record and iterate quickly.

As a stretch feature, we also explored a voice improvement mode, where our system aims to generate an "optimized" version of the pitch audio so users can hear what implementing the feedback could sound like.

How we built it

Pitch Perfect was built with an impressive lack of sleep and a concerning amount of caffeine but, most importantly, with grit, collaboration, and a robust tech stack.

We used Next.js for the web app experience and deployed on Vercel (we even threw a .tech domain into the mix). For the AI analysis, we built a separate FastAPI backend with endpoints for audio and slide deck analysis.

On the audio side, we used ElevenLabs for speech-to-text transcription with word-level timestamps. We then extracted delivery signals like pacing and filler word frequency, and passed those signals into Gemini to generate coach-style feedback that is actually actionable. We also used ElevenLabs to build our premium text-to-speech generation feature, which clones the voice in the uploaded audio.
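As a rough illustration of the delivery-signal step, here is a minimal sketch of how pacing and filler word frequency could be derived from word-level timestamps. It is not our production code: the `Word` structure, the filler list, the 10-second window, and the words-per-minute thresholds are all assumptions made for the example, and it counts words rather than syllables to keep things short.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """One transcribed word with timestamps in seconds (hypothetical shape)."""
    text: str
    start: float
    end: float


# Assumed filler list for illustration; a real list would be longer.
FILLERS = {"um", "uh", "like", "basically", "actually"}


def normalize(token: str) -> str:
    """Lowercase a token and strip surrounding punctuation."""
    return token.lower().strip(".,!?;:\"'")


def filler_counts(words):
    """Count how often each filler word appears in the transcript."""
    counts = {}
    for w in words:
        token = normalize(w.text)
        if token in FILLERS:
            counts[token] = counts.get(token, 0) + 1
    return counts


def pace_by_window(words, window_s=10.0, slow_wpm=110.0, fast_wpm=170.0):
    """Return (window_start_s, words_per_minute, label) per fixed window.

    Thresholds are illustrative assumptions. The final window is usually
    partial, so its rate tends to be underestimated.
    """
    if not words:
        return []
    buckets = {}
    for w in words:
        idx = int(w.start // window_s)  # window the word starts in
        buckets[idx] = buckets.get(idx, 0) + 1
    results = []
    for idx in range(max(buckets) + 1):
        wpm = buckets.get(idx, 0) * 60.0 / window_s
        if wpm < slow_wpm:
            label = "too slow"
        elif wpm > fast_wpm:
            label = "too fast"
        else:
            label = "ideal"
        results.append((idx * window_s, wpm, label))
    return results
```

The per-window labels map naturally onto a color-coded transcript, and the counts and rates are the kind of compact signals that can be passed to an LLM alongside the transcript.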

On the deck side, we parse uploaded pitch decks (PDF) to extract text and structure, then use Gemini to critique clarity, flow, and messaging so founders can tighten the story, not just the delivery.
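To make the deck step a little more concrete, the sketch below shows one way the extracted slide text could be assembled into a critique prompt. It assumes the PDF has already been turned into one string per slide and leaves out the actual Gemini call; the function name `build_deck_prompt` and the prompt wording are hypothetical, not what we shipped.

```python
def build_deck_prompt(slides: list[str], audience: str) -> str:
    """Assemble a critique prompt from per-slide text (illustrative only)."""
    lines = [
        "You are a pitch coach. Critique this deck for clarity, flow, and messaging.",
        f"Target audience: {audience}",
        "",
    ]
    for number, text in enumerate(slides, start=1):
        # Collapse whitespace left over from PDF extraction.
        body = " ".join(text.split()) or "(no extractable text)"
        lines.append(f"Slide {number}: {body}")
    return "\n".join(lines)
```

Numbering the slides in the prompt lets the feedback refer back to specific slides, and flagging empty slides avoids silently dropping image-only pages.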

Postman was also used during integration, mostly for testing endpoints while wiring the frontend to the backend.

Challenges we ran into

Given the length of the toolchain and stack described above, this project did not come without challenges. Integrating multiple services, managing file uploads, and keeping deployments stable under time pressure were troublesome, to say the least. We ran into some last-minute middleware and CORS issues, tested the limits of our credits and API keys, and pivoted scope and product direction more than once. We also had to deal with a somewhat complex architecture and move away from our initial single repo to accommodate separate backend routes and keep the AI pipeline moving without blocking the app. Some features also had to be cut in favor of reliable production code; these are mentioned under "What's next for Pitch Perfect".

Accomplishments that we're proud of

Challenge is part of why we love hackathons; overcoming the issues we faced this weekend gave us a real sense of pride in what we shipped. Early on and throughout, we made strong executive decisions to narrow scope and ship a functional prototype that still demonstrates real value. We integrated transcription, delivery analysis, slide deck critique, and LLM coaching into one loop that helps founders iterate faster. We are also proud of how far we pushed sponsor tech and prize tracks. Shoutout to Google Gemini and ElevenLabs.

What we learned

Given the variety of features built into Pitch Perfect over the weekend, our team learned a great deal both collaboratively and individually.

Some of us learned how to deploy an API service or picked up new frameworks like Next.js, while others learned how to integrate multiple AI providers into a web app.

What's next for Pitch Perfect

Our first order of business would be to fully integrate all of the features we set out to deploy this weekend, such as the voice cloning option and the agentic framework we originally constructed (both currently work locally).

If we continued building this beyond the weekend, we would focus on turning feedback into a repeatable training loop, which would entail the following:

- Progress tracking across attempts, so users can see improvement over time

- A real-time coaching mode during recording that would also take in video input

- Team accounts for accelerators and startup cohorts

As we don't plan to maintain this project after this weekend, if you would like to monetize it, take it to YC, make a spin-off, use the idea for another hackathon, or whatever else - feel free!

Built With

- elevenlabs

- fastapi

- gemini

- javascript

- python
