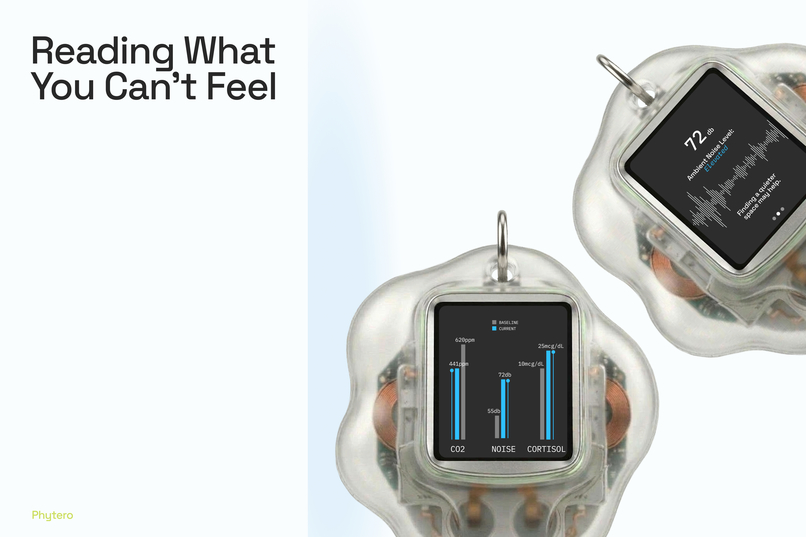

Phytero worn on the body, paired with the app on your phone.

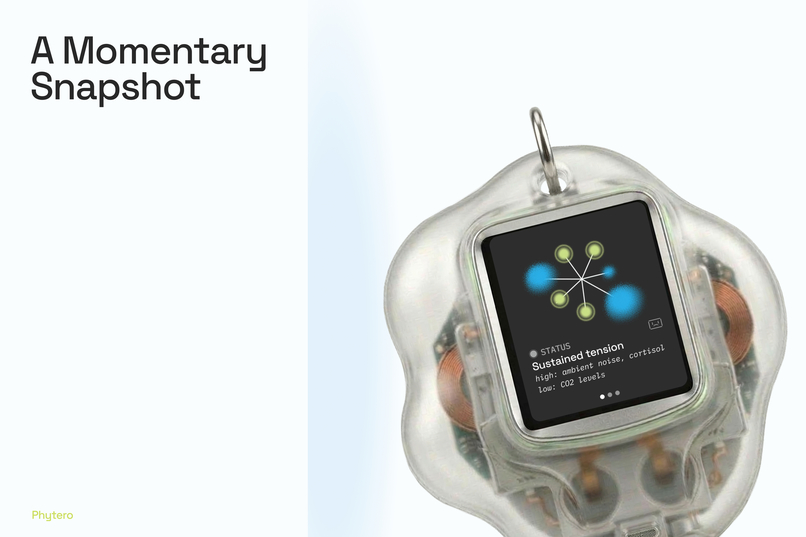

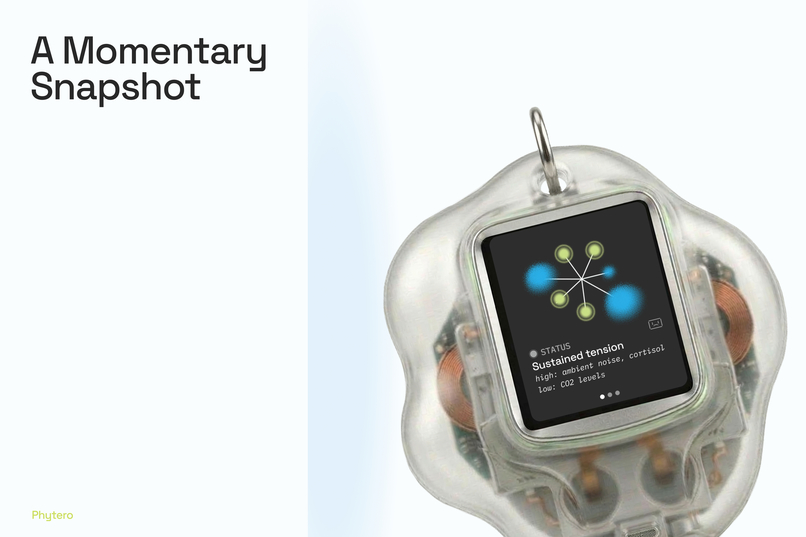

The wearable's display shows your current interoceptive state at a glance.

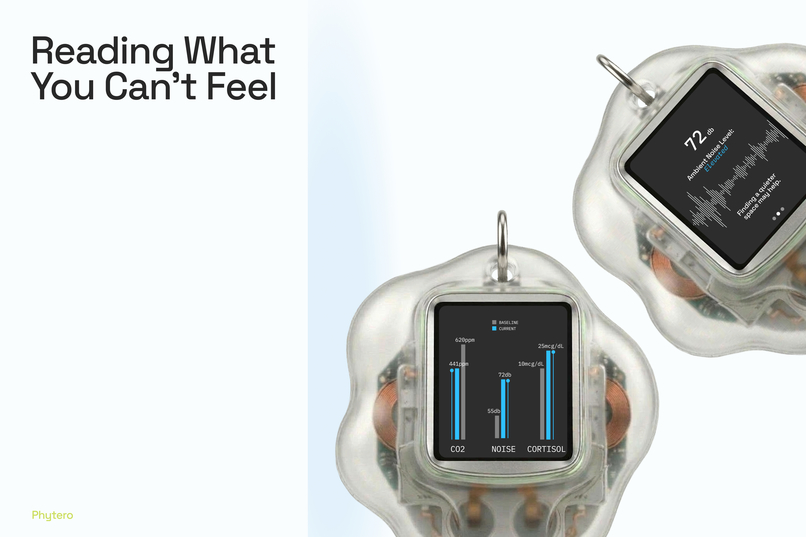

CO₂, noise, and cortisol levels: signals your body registers before your mind does.

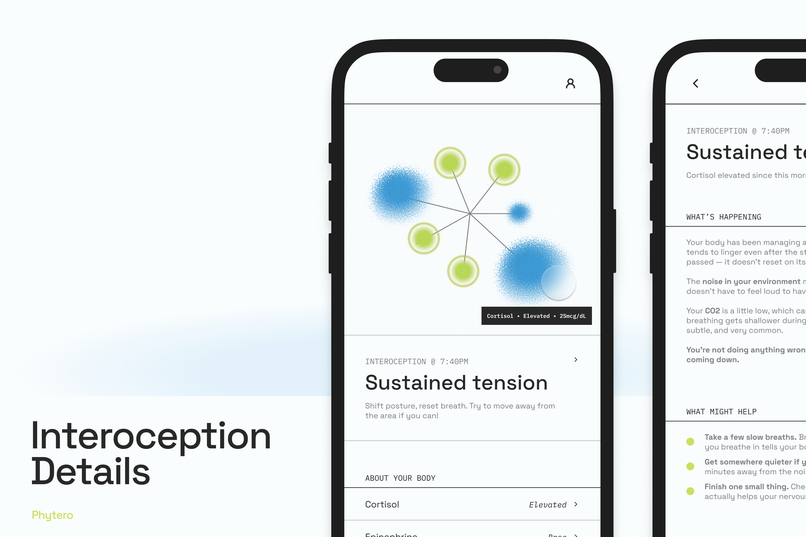

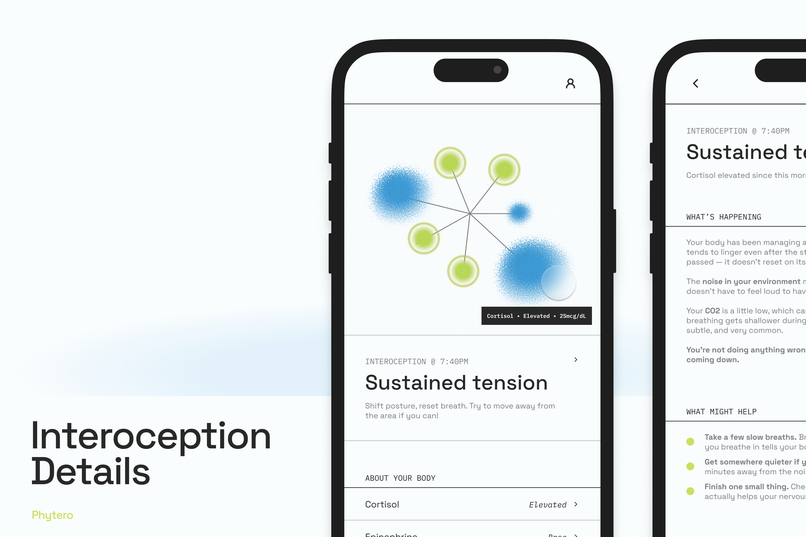

A full breakdown of what your body is experiencing and why it matters right now.

Your interoceptive patterns over time — by day, week, month, and beyond.

Inspiration

One of our team members has ADHD, and honestly, the project started with her. Interoception is the body's sense of its own internal state, and it's frequently impaired in neurodivergent individuals. She'd lived it: stress responses that came out of nowhere, signals she couldn't read until they'd already peaked, years of blaming herself for things that turned out to be her environment. We kept her at the center of every design decision, using her experience as a kind of autoethnographic anchor throughout the whole process.

Plants "scream" when they're stressed (Khait et al., 2023): they emit ultrasonic sounds that researchers can now detect, and we kept coming back to the idea that humans do something similar. Our bodies are constantly responding to our environments, and we have no interface for it. We just feel the aftermath: depleted after a dinner we half-enjoyed, unable to focus in a room we didn't know had elevated CO₂, wondering if we're "too sensitive" after a friendship that keeps leaving us drained.

What it does

Phytero turns your body's stress signals into something you can actually see. It's an abstract, living visualization that shifts with your internal state: green and calm when your environment is working for you, blue and restless when stress hormones are climbing.

There are two surfaces. The main device is a standalone wearable, a translucent keychain pendant with a small screen that works without your phone. The companion app is for when you want to go deeper: interoception history over time, hormone breakdowns, pattern observations, and settings. Core functionality lives on the device; the app is supplemental.

Under the hood, Phytero is designed to read three stress hormones in sweat (cortisol, epinephrine, and norepinephrine) alongside environmental data such as CO₂, noise, and temperature. It's grounded in real published science (Stressomic, Science Advances, 2025). It never reads a single value in isolation: combination, duration, and context are all cross-referenced against your personal baseline. Patterns surface automatically over time, with no manual logging required. And if you don't want continuous input, check-in mode lets you control when you engage.
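
Our prototype runs on scripted scenarios, so there is no real sensing pipeline to show. As a rough illustration of what "cross-referenced against your personal baseline" could mean, here is a minimal Python sketch under our own assumptions: each hormone reading is compared to the user's history with a z-score, and the state only shifts to "restless" when at least two hormones stay elevated across several consecutive readings. The names (`Baseline`, `elevated`, `classify`), the 2.0 z-score threshold, and the window sizes are hypothetical; they are not part of Phytero's design spec or the Stressomic work, and environmental context is left out for brevity.

```python
from dataclasses import dataclass
from statistics import mean, stdev

# The three sweat hormones named in the write-up.
HORMONES = ("cortisol", "epinephrine", "norepinephrine")


@dataclass
class Baseline:
    """Hypothetical per-user baseline: mean and sample standard deviation per hormone."""
    means: dict[str, float]
    stds: dict[str, float]

    @classmethod
    def from_history(cls, history: list[dict[str, float]]) -> "Baseline":
        # history: past readings, each a {hormone: value} dict (units assumed).
        # stdev needs at least two readings.
        return cls(
            means={h: mean([r[h] for r in history]) for h in HORMONES},
            stds={h: stdev([r[h] for r in history]) for h in HORMONES},
        )


def elevated(reading: dict[str, float], baseline: Baseline,
             z_threshold: float = 2.0) -> set[str]:
    """Hormones whose z-score against the personal baseline exceeds z_threshold."""
    return {
        h for h in HORMONES
        if baseline.stds[h] > 0
        and (reading[h] - baseline.means[h]) / baseline.stds[h] > z_threshold
    }


def classify(readings: list[dict[str, float]], baseline: Baseline,
             min_hormones: int = 2, min_samples: int = 3) -> str:
    """'restless' only if enough hormones (combination) stay elevated long enough (duration)."""
    recent = readings[-min_samples:]
    if len(recent) < min_samples:
        return "calm"  # not enough data yet to call a sustained shift
    sustained = all(len(elevated(r, baseline)) >= min_hormones for r in recent)
    return "restless" if sustained else "calm"
```

Requiring both a combination (`min_hormones`) and a duration (`min_samples`) is one way to keep a single noisy spike from flipping the display.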

How we built it

We used the Figma toolkit: FigJam for ideation, Figma Design for the interface and prototyping, and Figma Slides for the presentation. The prototype uses scripted scenarios rather than live sensor data, which was intentional. We're designing for hardware that doesn't exist yet as a consumer product.

We also leaned on AI tools throughout (Claude, Figma Make, Variant, Flora, and Nanobanana), mainly for organizing notes, finding visual inspiration, and building prototype mockups. Our ideation, product proposal, and design decisions were our own. AI helped us move faster and stay visually consistent while working remotely across time zones.

Challenges we ran into

The hardest challenge was language. Phytero surfaces what your body registers, but it must never label a person or place as the cause. Every UI string had to describe a body state or environmental condition, never an attribution. Enforcing that across every screen and recovery message meant a full copy audit, which took longer than we expected.
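
A copy audit like this still needs human judgment, but a first pass over the strings could be automated. Here is a minimal Python sketch, assuming UI copy is kept as a key-to-string dictionary and that a short, hand-picked list of regular expressions is enough to flag obvious attributional phrasing. The patterns and the `audit` function are hypothetical, not the rules we actually used.

```python
import re

# Hypothetical phrases that attribute a body state to a cause (person, place, event).
ATTRIBUTION_PATTERNS = [
    r"\bbecause of\b",
    r"\bcaused by\b",
    r"\bdue to\b",
    r"\b(?:he|she|they|someone|this person)\s+(?:made|makes|is making)\s+you\b",
]


def audit(strings: dict[str, str]) -> dict[str, list[str]]:
    """Map each UI string key to the attributional patterns it matches (clean keys are omitted)."""
    compiled = [re.compile(p, re.IGNORECASE) for p in ATTRIBUTION_PATTERNS]
    findings: dict[str, list[str]] = {}
    for key, text in strings.items():
        hits = [rx.pattern for rx in compiled if rx.search(text)]
        if hits:
            findings[key] = hits
    return findings
```

A regex pass like this only catches explicit phrasing; implied blame still has to be caught by reading every screen.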

We also had to figure out the visual language from scratch. The abstract forms needed to be readable on a tiny pendant screen, expressive enough to communicate state without labels, and calm enough not to feel alarming. Finding that balance took real iteration.

Remote collaboration across time zones meant we had to be unusually deliberate: clear documentation, explicit handoffs, hard scoping decisions. We cut a fourth use case to protect the depth of the three we kept.

Accomplishments that we're proud of

Getting the visual language to work at such a small scale is something we're genuinely proud of. So is the two-surface architecture: a standalone wearable for the moments when discretion matters, and a companion app for the pattern recognition that builds over time.

We're also proud of how conceptually tight the final product is. The biomimicry isn't a skin on top of a feature set: it drives the architecture and the inner workings of the device, and it shows up in the copywriting and visual metaphors throughout. The 7-spoke radial shape echoes a flower. The states are "wilting," "trembling," and "thriving." The physical device is organic and amorphous. It holds together because the idea grew out of plants from the start; the plant metaphor was never decoration.

It also matters to us that the safeguards are design decisions rather than disclaimers. The no-audio rule is structural, not just a policy. The ban on attributional language is enforced at the copy level. Transitions are gradual so Phytero never feels like an alarm, and the check-in model (rather than the continuous wear a ring or bracelet would suggest) lets the user engage with the device on their own terms. For our teammate with ADHD, this was the bare minimum.
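
One plausible way to keep transitions gradual, sketched here under our own assumptions, is to cap how far the displayed state can move per display tick and blend between the calm green and restless blue ends. The 0-to-1 stress scale, the step size, the RGB values, and the function names below are all hypothetical.

```python
# Hypothetical endpoint colours for the visualization (RGB).
CALM_GREEN = (46, 160, 67)
RESTLESS_BLUE = (40, 90, 200)


def ease_toward(current: float, target: float, max_step: float = 0.05) -> float:
    """Move the displayed level (0 = calm, 1 = restless) toward target by at most max_step."""
    if max_step <= 0:
        raise ValueError("max_step must be positive")
    delta = target - current
    if abs(delta) <= max_step:
        return target  # close enough: land exactly on the target
    return current + max_step if delta > 0 else current - max_step


def transition(current: float, target: float, max_step: float = 0.05) -> list[float]:
    """Every intermediate display level, one per tick, ending exactly at target."""
    frames = []
    while current != target:
        current = ease_toward(current, target, max_step)
        frames.append(current)
    return frames


def blend(level: float) -> tuple[int, int, int]:
    """Linearly interpolate between the calm and restless colours."""
    level = min(max(level, 0.0), 1.0)
    return tuple(
        round(c + (r - c) * level) for c, r in zip(CALM_GREEN, RESTLESS_BLUE)
    )
```

Because the step is capped, even a sudden jump in the underlying reading plays out over many ticks instead of a single flash.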

We're also really proud of our collaboration. We were strangers going into this with no mutual connections, and left as friends who would do it all again. Hackathons are funny like that!

What we learned

Speculative design demands restraint. The real discipline is asking: what is the one thing this product needs to do, and what's the most legible interface for it? That question shaped everything from the visual system to the copy rules.

Having a team member serve as both designer and user-proxy also changed how we approached research. Autoethnography isn't a shortcut. It demands honesty, especially when the person doing the research is the one whose experience is under scrutiny. Her willingness to examine her own patterns gave the project a specificity that secondary sources alone couldn't have provided.

We learned things we didn't expect to. Many neurodivergent individuals have heightened sensitivity to touch and physical sensation, which reframed how we thought about the device entirely. It's part of why the pendant is something you choose to hold rather than something strapped to your wrist: the interaction model had to respect that the user might not want to be "on" at all times, in the way an Apple Watch assumes you will be.

Designing for emotional resonance without prescribing emotion was also harder than we anticipated. It's surprisingly easy to slide into telling users what to feel, or to design something that tips from "useful" into unsettling.

And practically: we got a lot more resourceful with AI tools, using them not just for ideation but to generate 3D mockups that matched a very specific visual register. Getting AI to output something that fits your vision rather than something generic turns out to be its own skill.

What's next for Phytero

The next design work is deepening the physical device: form, materials, and haptic language. We want to validate the visual system with a broader set of neurodivergent users. And there's more to explore in the visual language itself: we constrained ourselves to what was legible and buildable in 72 hours, but a truly speculative concept could carry a bolder, more distinctive graphic identity. And definitely more animation!

The history view has room to grow too: moving from pattern recognition to something more anticipatory, flagging recurring stressful contexts before you walk into them rather than after.

Phytero's real future depends on Stressomic-class sensing reaching consumers. We're not pretending the hardware is ready. We're designing the experience layer that should exist when it does.

Built With

- claude

- figma

- figma-make

- figma-slides

- flora

- nanobanana

- variant
