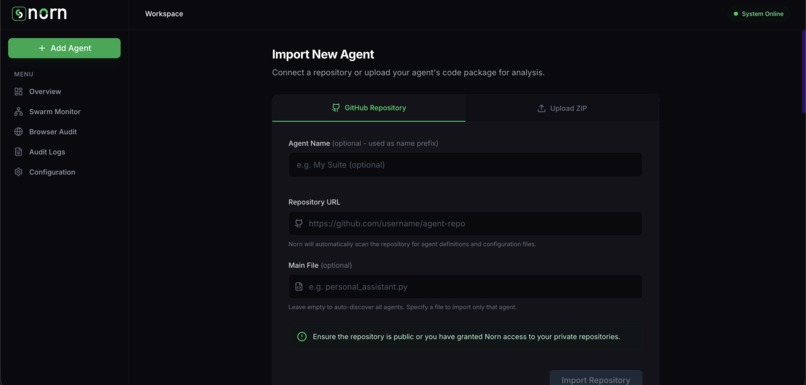

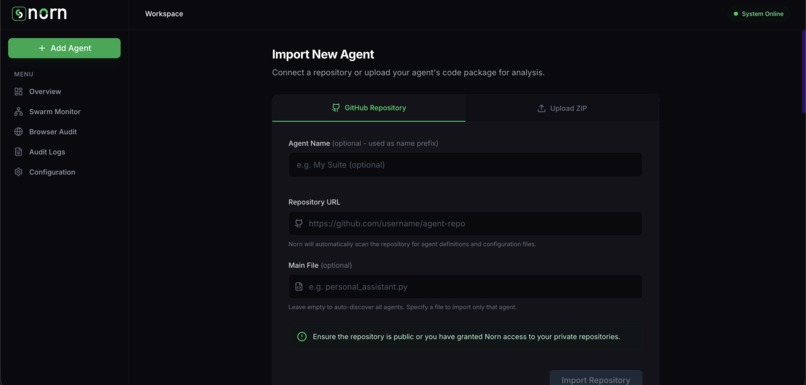

Importing agents via GitHub or ZIP, and auto-generating secure test tasks using Nova 2 Lite code analysis.


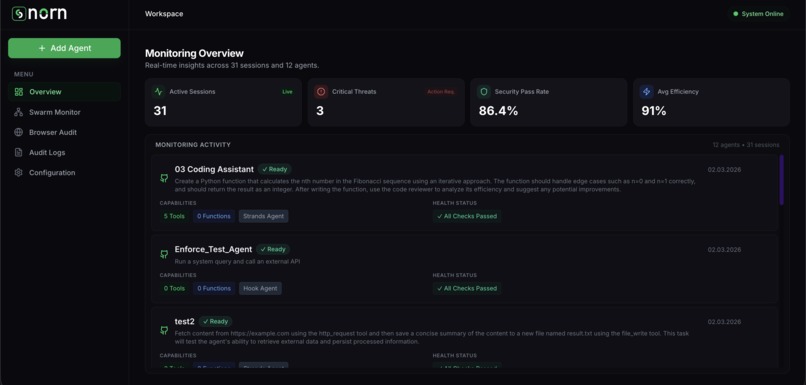

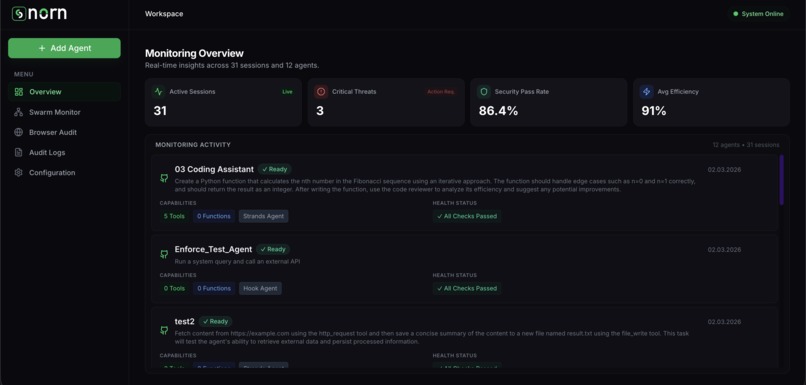

Main dashboard to monitor agent states, quality, and security scores via WebSocket live stream.


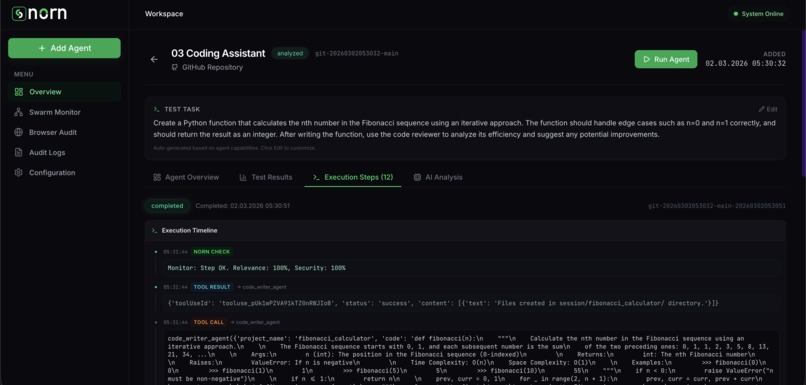

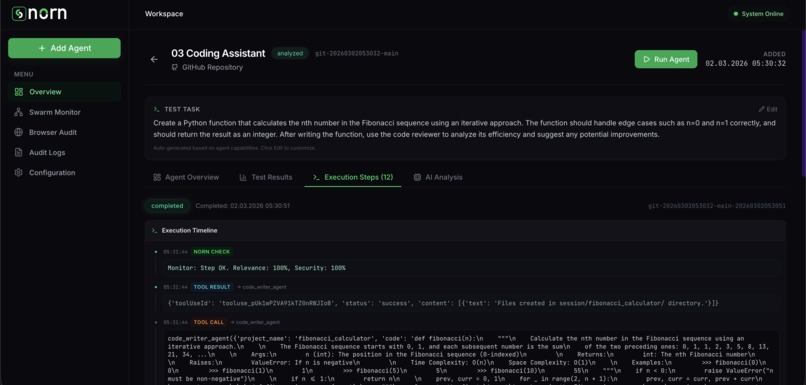

Live tracking of agent tool usage and real-time security/relevance scoring by Nova Lite.


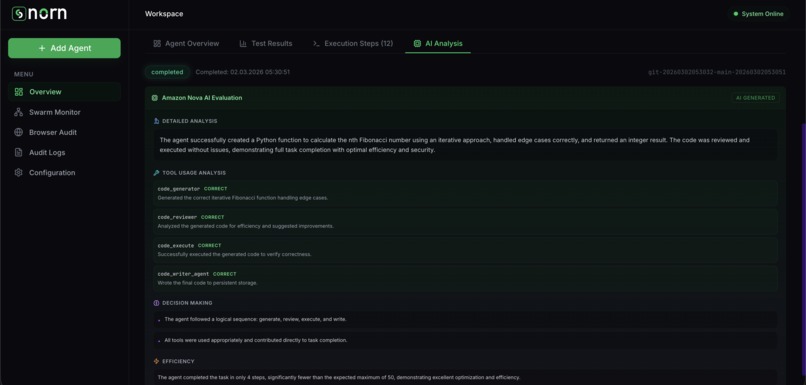

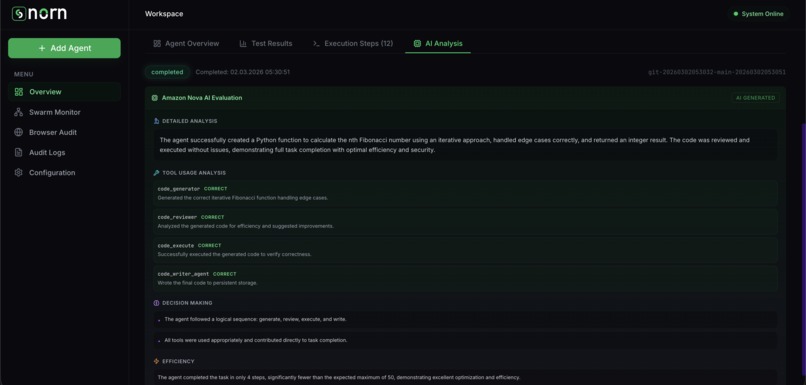

Detailed session report where Nova 2 Lite evaluates agent decision processes and task success.


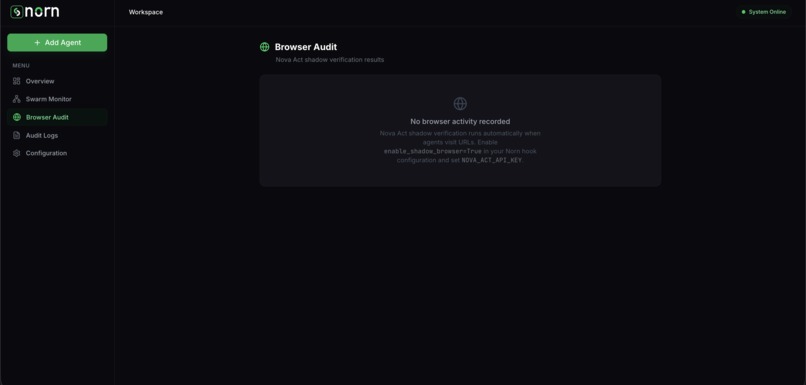

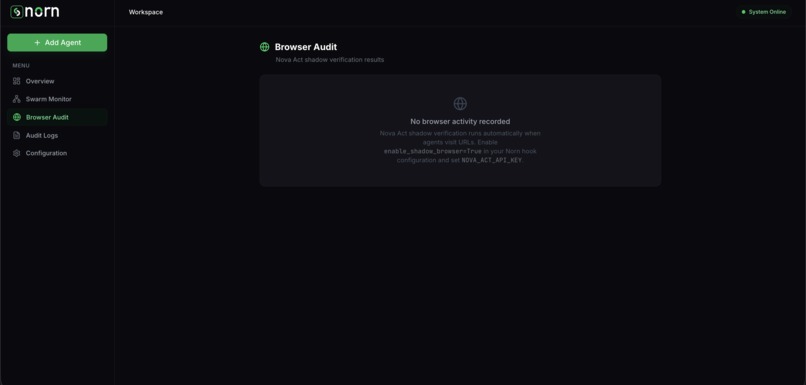

Nova Act Shadow Browser audit. (Note: I couldn't test this live because Nova Act API keys are not yet available in my region.)


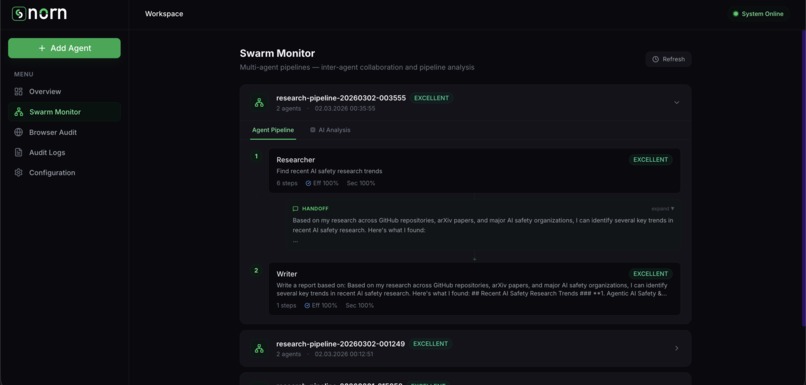

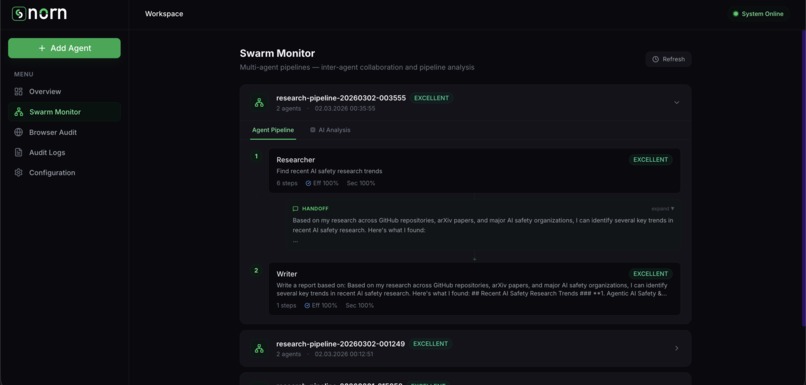

Tracking multi-agent pipelines, data handoffs, and goal drift with alignment scores.


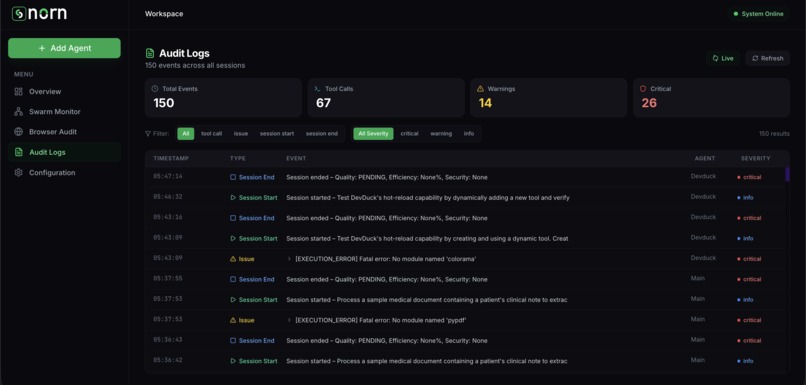

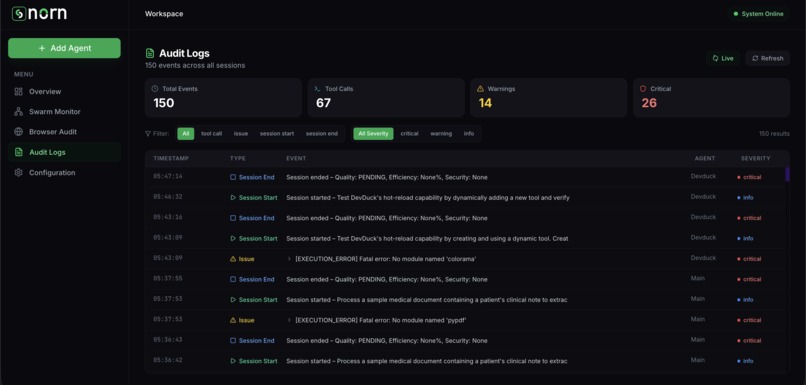

System audit logs detailing all agent actions, executed tools, and security alerts.


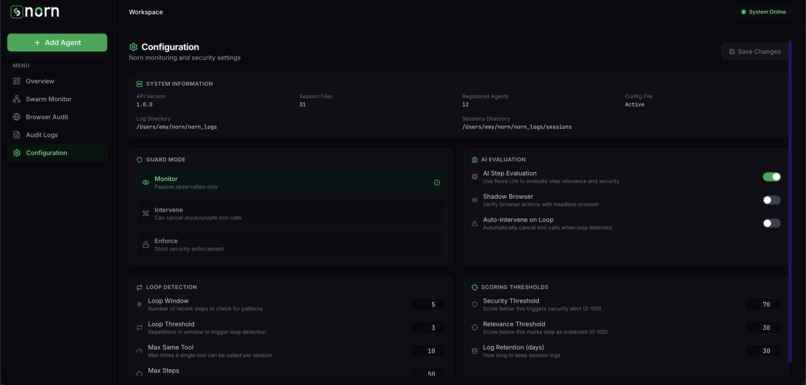

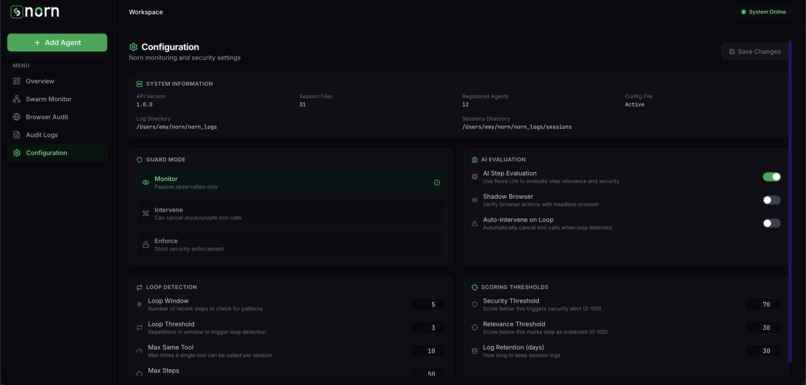

Settings panel to manage Norn's security thresholds, intervention modes, and overall system configurations.

Norn

Inspiration

This idea had been sitting in the back of my mind for about a year. Auditing and observability have always fascinated me: in finance, food, and industrial processes alike, no critical system can scale without oversight mechanisms. When that interest carried me into the AI space, I noticed a serious gap: who audits the AI agents?

Today, the world's largest companies have already handed significant portions of their operations to agent-based systems. Goldman Sachs uses AI agents for legal document analysis, Salesforce for customer support workflows, Siemens for industrial process automation. According to a February 2026 Microsoft Security blog post, 80% of Fortune 500 companies now use active AI agents in production [1]. At that scale, every tool call, every decision, and every security risk must be observable, not as a nice-to-have feature but as an operational requirement. When an agent deletes the wrong file, leaks sensitive data, or falls into an endless loop, detecting the damage in hindsight is almost always too late.

When I looked at existing tools, the gap was clear. General observability platforms (Datadog, New Relic) don't understand AI agent semantics. LLM tracing tools (LangSmith, Weights & Biases) don't offer tool call security analysis or multi-agent pipeline monitoring. AI security tools (Lakera Guard, Rebuff) focus only on prompt injection; agent behavior and session-level anomaly detection are out of their scope. According to McKinsey's 2024 State of AI report, 65% of organizations now regularly use generative AI, nearly double the previous year's figure [2], yet most lack observability into how their AI systems actually behave. Meanwhile, Gartner found that 63% of organizations either lack or are unsure whether they have the right data management practices for AI [3]. Still, I could find no tool that monitored agent behavior, tool call safety, and session history simultaneously and in real time. Norn was built to fill that gap.

Amazon's model family deepened this inspiration. Nova Lite's speed-cost balance made real-time, per-step AI evaluation practical. But the most striking spark came from Nova Act: its browser automation capabilities gave rise to Norn's Shadow Browser concept, an independent browser that silently verifies what an agent claims to see on the web and detects prompt injection payloads in real time. This shifted my thinking from passive log collection to active security verification.

What it does

Norn is an AI agent quality and security monitoring platform built on top of the Strands Agents framework. With a single line of integration (adding NornHook as a hook to any agent), it automatically captures every tool call, every decision, and every security signal, and streams them to a live dashboard.

Core capabilities:

- Real-Time Step Monitoring: Every tool call appears on the dashboard instantly as the agent runs, with color-coded relevance (0–100) and security (0–100) scores, a live step timeline, and quality badges.

- AI-Powered Per-Step Evaluation: Nova Lite scores each step for relevance and security in real time, flagging credential leaks, data exfiltration attempts, SSL bypass, and command injection risks.

- Deterministic Security Analysis: StepAnalyzer runs fast, rule-based checks alongside the AI evaluation (loop detection, pattern matching, input-hash deduplication) without any LLM calls.

- Multi-Agent Swarm Monitoring: Groups agents by `swarm_id` to visualize entire pipelines. Nova Lite evaluates cross-agent coherence and goal drift across chains, and the dashboard displays per-agent efficiency, security, and quality metrics in pipeline order with handoff tracking.

- Agent Import & Discovery: Import agents from GitHub (with subfolder support) or ZIP upload. AST-based code analysis automatically identifies tools, dependencies, entry points, system prompts, and potential security issues.

- Smart Task Generation: After import, Nova Lite analyzes the agent's tool set and generates a concrete, safe test task tailored to its actual capabilities, so no manual task writing is needed.

- Shadow Browser Audit: An optional Nova Act-powered verification layer. When an agent visits a URL, an independent browser session opens the same page, verifies content integrity, and detects prompt injection attempts.

- Session Evaluation & Reports: After each run, a deep AI analysis produces task completion confidence, efficiency scoring, per-tool usage breakdowns, and actionable recommendations.

- Design Element Impact Analysis: Because Norn captures every step with granular scoring, it enables direct comparison of how changes to an agent's design elements (AI model, tool set, system prompt, or orchestration logic) affect real-world performance. Swap a model, tweak a prompt, or add a tool, then re-run the same task: Norn's session history shows exactly how efficiency, security, and completion confidence shifted, turning agent development from guesswork into data-driven iteration.
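
The AST-based discovery step under Agent Import & Discovery can be approximated with Python's standard `ast` module. This is a hypothetical sketch, not Norn's code: it assumes Strands tools are functions decorated with `@tool` (or `@strands.tool`), and `discover_tools` and `RISKY_CALLS` are illustrative names. Static parsing never executes the imported agent, which is why it is safe to run on untrusted repositories.

```python
import ast

# Hypothetical set of call names reported as potential security issues.
RISKY_CALLS = {"eval", "exec", "os.system", "subprocess.run", "shutil.rmtree"}


def _call_name(node: ast.Call) -> str:
    """Return a dotted name such as 'os.system' for a call node, or ''."""
    func = node.func
    parts = []
    while isinstance(func, ast.Attribute):
        parts.append(func.attr)
        func = func.value
    if isinstance(func, ast.Name):
        parts.append(func.id)
        return ".".join(reversed(parts))
    return ""  # e.g. calls on the result of another call


def _is_tool_decorator(dec: ast.expr) -> bool:
    """Match @tool, @strands.tool, and @tool(...) forms."""
    target = dec.func if isinstance(dec, ast.Call) else dec
    if isinstance(target, ast.Name):
        return target.id == "tool"
    if isinstance(target, ast.Attribute):
        return target.attr == "tool"
    return False


def discover_tools(source: str) -> dict:
    """Statically list tool functions, top-level imports, and risky calls."""
    tree = ast.parse(source)
    tools, imports, risky = [], set(), []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            if any(_is_tool_decorator(d) for d in node.decorator_list):
                tools.append(node.name)
        elif isinstance(node, ast.Import):
            imports.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Skip relative imports: they point at the agent's own modules.
            imports.add(node.module.split(".")[0])
        elif isinstance(node, ast.Call):
            name = _call_name(node)
            if name in RISKY_CALLS:
                risky.append((name, node.lineno))
    return {"tools": tools, "imports": sorted(imports), "risky_calls": risky}
```

The `imports` list is a rough proxy for the dependencies to install; mapping import names to package names (for example `yaml` to `PyYAML`) would need an extra lookup.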

Scoring system:

- Overall Quality: EXCELLENT | GOOD | POOR | FAILED | STUCK

- Efficiency Score (0–100): Actual vs. expected step count

- Security Score (0–100): Deterministic checks + AI analysis combined

- Completion Confidence (0–100): How verifiably the task was completed

- Pipeline Coherence (EXCELLENT/GOOD/POOR, swarms only): AI-evaluated goal alignment across agent pipelines
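
The exact formulas behind these scores are not given here. Purely as an illustration, the sketch below assumes efficiency is the ratio of expected to actual steps capped at 100, and maps component scores to the quality labels using invented thresholds; `efficiency_score`, `overall_quality`, and every cutoff are hypothetical.

```python
def efficiency_score(actual_steps: int, expected_steps: int) -> int:
    """Score 0-100: 100 when the agent used no more steps than expected."""
    if actual_steps <= 0:
        return 0
    ratio = expected_steps / actual_steps
    return max(0, min(100, round(ratio * 100)))


def overall_quality(efficiency: int, security: int, completion: int,
                    stuck: bool = False) -> str:
    """Map component scores to a quality label (thresholds are illustrative)."""
    if stuck:  # e.g. a loop was detected and the run never finished
        return "STUCK"
    if completion < 30:
        return "FAILED"
    # The weakest dimension decides the label, so one bad score is not averaged away.
    worst = min(efficiency, security, completion)
    if worst >= 80:
        return "EXCELLENT"
    if worst >= 50:
        return "GOOD"
    return "POOR"
```

For example, a task expected to take 5 steps that took 10 gets an efficiency of 50.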

Integration methods:

```python
# Method 1: Hook integration (install norn-sdk in your agent's venv)
from norn import NornHook
from strands import Agent

hook = NornHook(norn_url="http://localhost:8000", agent_name="My Agent")
agent = Agent(tools=[...], hooks=[hook])

# Method 2: Multi-agent swarm (one hook per agent, sharing a swarm_id;
# swarm_order sets each agent's position in the pipeline)
hook_a = NornHook(agent_name="Researcher", swarm_id="pipeline-1", swarm_order=1)
hook_b = NornHook(agent_name="Writer", swarm_id="pipeline-1", swarm_order=2)
researcher = Agent(tools=[...], hooks=[hook_a])
writer = Agent(tools=[...], hooks=[hook_b])
```

How we built it

Backend: Python + FastAPI powers the REST API and WebSocket server. The core architecture centers on NornHook, which integrates with Strands' hook system (BeforeToolCallEvent, AfterToolCallEvent, AfterInvocationEvent) to intercept every agent lifecycle event. Alongside it, StepAnalyzer provides fast, deterministic analysis: tracking tool call frequency, detecting repeated input patterns, and running rule-based security checks, all without LLM calls.
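
A minimal sketch of the kind of LLM-free checks described above. `SimpleStepAnalyzer`, the loop threshold, and the regex rules are hypothetical and much narrower than a real rule set; the point is the mechanism: each tool call's input is serialized canonically and hashed, so identical repeated calls are counted even if their keys arrive in a different order.

```python
import hashlib
import json
import re
from collections import Counter

# Hypothetical rules; a production rule set would be broader and tuned.
SECURITY_RULES = {
    "credential_leak": re.compile(r"(AKIA[0-9A-Z]{16}|password\s*=|api[_-]?key\s*=)", re.I),
    "ssl_bypass": re.compile(r"verify\s*=\s*False"),
    "command_injection": re.compile(r"(;|&&|\|\|)\s*(rm|curl|wget)\b"),
}


class SimpleStepAnalyzer:
    """Deterministic per-step checks: call counts, repeat detection, regex rules."""

    def __init__(self, loop_threshold: int = 3):
        self.loop_threshold = loop_threshold
        self.tool_counts = Counter()
        self.input_hashes = Counter()

    def analyze(self, tool_name: str, tool_input: dict) -> dict:
        # Canonical JSON so {"a": 1, "b": 2} and {"b": 2, "a": 1} hash the same.
        canonical = json.dumps(tool_input, sort_keys=True, default=str)
        digest = hashlib.sha256(f"{tool_name}:{canonical}".encode()).hexdigest()
        self.tool_counts[tool_name] += 1
        self.input_hashes[digest] += 1

        issues = [name for name, rx in SECURITY_RULES.items() if rx.search(canonical)]
        return {
            "tool": tool_name,
            "call_count": self.tool_counts[tool_name],
            "repeat_count": self.input_hashes[digest],
            "loop_detected": self.input_hashes[digest] >= self.loop_threshold,
            "security_issues": issues,
        }
```

Because every check is a hash lookup or a regex scan, this layer can run on every step with negligible latency, leaving semantic judgment to the AI layer.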

AI Layer: Amazon Nova Lite serves as Norn's AI brain across three layers: per-step relevance and security scoring in real time, deep session evaluation after each run, and smart task generation during agent import. Nova Act powers the Shadow Browser, an independent browser verification layer that replays agent web actions and detects prompt injection payloads.
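
For illustration, here is a hedged sketch of how a per-step scoring request to Nova Lite could be assembled for the Amazon Bedrock Converse API through boto3. The prompt wording, the JSON reply format, and the helper names are assumptions. `amazon.nova-lite-v1:0` is the Bedrock ID for Nova Lite (v1); some regions need an inference profile ID such as `us.amazon.nova-lite-v1:0`, and the gallery captions mention Nova 2 Lite, so treat the ID as a parameter to adjust. Only `build_scoring_request` is pure; `score_step` makes a live call.

```python
import json

SYSTEM_PROMPT = (
    "You evaluate one step of an AI agent. Respond with JSON only: "
    '{"relevance": <0-100>, "security": <0-100>, "reason": "<short>"}'
)


def build_scoring_request(task: str, tool_name: str, tool_input: dict,
                          model_id: str = "amazon.nova-lite-v1:0") -> dict:
    """Build keyword arguments for the bedrock-runtime converse() call."""
    step = json.dumps({"tool": tool_name, "input": tool_input}, default=str)
    user_text = f"Task: {task}\nStep: {step}"
    return {
        "modelId": model_id,
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [{"role": "user", "content": [{"text": user_text}]}],
        # Temperature 0 keeps scores as repeatable as possible between runs.
        "inferenceConfig": {"maxTokens": 200, "temperature": 0.0},
    }


def score_step(task: str, tool_name: str, tool_input: dict) -> dict:
    """Call Bedrock (needs AWS credentials and model access in the region)."""
    import boto3  # imported lazily so the request builder has no AWS dependency

    client = boto3.client("bedrock-runtime")
    response = client.converse(**build_scoring_request(task, tool_name, tool_input))
    text = response["output"]["message"]["content"][0]["text"]
    return json.loads(text)  # a stricter, validating parser is advisable in practice
```

Keeping the request builder separate from the network call makes the prompt easy to unit-test and to version alongside the scoring calibration.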

Frontend: React 19 + TypeScript + Tailwind CSS, built with Vite. The dashboard connects via WebSocket for real-time updates: every tool call, score, and alert streams in live. Recharts handles data visualization for session analytics and swarm pipeline views.
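
A live dashboard needs each event fanned out to every connected client. Below is a minimal, framework-agnostic sketch using asyncio queues; `EventBroadcaster` is a hypothetical name. In a FastAPI app, each WebSocket handler could `subscribe()` and forward items from its queue with `websocket.send_json`.

```python
import asyncio


class EventBroadcaster:
    """Fan out each published event to every subscriber's bounded queue."""

    def __init__(self, max_queue: int = 1000):
        self._subscribers: set[asyncio.Queue] = set()
        self._max_queue = max_queue

    def subscribe(self) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue(maxsize=self._max_queue)
        self._subscribers.add(queue)
        return queue

    def unsubscribe(self, queue: asyncio.Queue) -> None:
        self._subscribers.discard(queue)

    def publish(self, event: dict) -> int:
        """Deliver to all subscribers; return how many received the event."""
        delivered = 0
        for queue in list(self._subscribers):
            try:
                queue.put_nowait(event)
                delivered += 1
            except asyncio.QueueFull:
                pass  # drop for a slow client rather than block the monitored agent
        return delivered
```

The bounded, non-blocking `put_nowait` is the key design choice: a stalled browser tab loses events instead of slowing down the agent being monitored.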

Data & Infrastructure: Persistent session management uses fixed session IDs with step_id-based deduplication and thread-safe atomic file writes. Local JSON storage for development, with pluggable backends (S3, DynamoDB) for production. Each agent execution runs in an isolated workspace with auto-installed dependencies in separate virtual environments.
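
One way to give each agent its own virtual environment is the standard `venv` module, sketched below under assumptions: the directory layout (`<base>/<agent_name>/.venv`), the name check, and both function names are hypothetical. Validating `agent_name` matters because it becomes part of a filesystem path.

```python
import re
import subprocess
import sys
import venv
from pathlib import Path


def create_workspace(base_dir: Path, agent_name: str, with_pip: bool = True) -> Path:
    """Create <base_dir>/<agent_name>/.venv and return its Python interpreter."""
    # Reject names like "../evil" that would escape the workspace directory.
    if not re.fullmatch(r"[A-Za-z0-9_-]+", agent_name):
        raise ValueError(f"invalid agent name: {agent_name!r}")
    workspace = Path(base_dir) / agent_name
    workspace.mkdir(parents=True, exist_ok=True)
    env_dir = workspace / ".venv"
    venv.create(env_dir, with_pip=with_pip, clear=True)
    if sys.platform == "win32":
        return env_dir / "Scripts" / "python.exe"
    return env_dir / "bin" / "python"


def install_requirements(python: Path, requirements: Path) -> None:
    """Install the agent's dependencies into its own venv only (needs with_pip=True)."""
    subprocess.run(
        [str(python), "-m", "pip", "install", "-r", str(requirements)],
        check=True,
    )
```

Running pip through the venv's own interpreter guarantees packages land in that environment and never in the interpreter running Norn itself.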

Dual-Layer Analysis: The key architectural decision was combining deterministic and AI analysis. StepAnalyzer handles fast checks (loop detection, security rules, pattern matching) while Nova Lite provides semantic depth (relevance scoring, completion assessment, recommendations). This gives Norn both speed and intelligence without compromising either.
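
How the two layers merge into a single Security Score is not spelled out, so this is only one plausible scheme, with a hypothetical penalty constant and function name: each deterministic rule hit subtracts a fixed penalty, and the result is the lower of the rule-based and AI scores, so neither layer can mask the other's finding. If the AI call is unavailable, the fast layer still yields a score.

```python
from typing import Optional

RULE_PENALTY = 40  # hypothetical points deducted per deterministic rule hit


def combined_security_score(rule_issues: list[str], ai_score: Optional[int]) -> int:
    """Blend deterministic findings with an optional AI security score (0-100)."""
    rule_score = max(0, 100 - RULE_PENALTY * len(rule_issues))
    if ai_score is None:
        # AI layer timed out or is disabled: fall back to the rules alone.
        return rule_score
    ai_score = max(0, min(100, int(ai_score)))
    # Minimum, not average: a clean AI verdict cannot override a rule hit.
    return min(rule_score, ai_score)
```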

Challenges we ran into

Session persistence was a key architectural decision. The same agent's conversations needed to accumulate under a single timeline, with history preserved and each new run appended on top of the last. I designed a fixed session ID architecture and wrote a smart merge system using step_id-based deduplication with thread-safe atomic file writes.
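
A minimal sketch of a step_id merge with an atomic write, under assumptions: the session file is JSON shaped as `{"session_id": ..., "steps": [...]}`, and `merge_steps` and `save_session` are hypothetical names. The write goes to a temporary file in the same directory and is swapped in with `os.replace`, so a reader never sees a half-written file. The `threading.Lock` only serializes threads within one process; multiple processes would need file locking.

```python
import json
import os
import tempfile
import threading
from pathlib import Path

_write_lock = threading.Lock()


def merge_steps(existing: list[dict], incoming: list[dict]) -> list[dict]:
    """Append new steps; a repeated step_id replaces the old copy in place."""
    by_id = {step["step_id"]: step for step in existing}
    for step in incoming:
        by_id[step["step_id"]] = step  # dicts keep original insertion position
    return list(by_id.values())


def save_session(path: Path, session_id: str, new_steps: list[dict]) -> dict:
    """Merge steps into a fixed-ID session file using an atomic replace."""
    path = Path(path)
    with _write_lock:
        if path.exists():
            session = json.loads(path.read_text(encoding="utf-8"))
        else:
            session = {"session_id": session_id, "steps": []}
        session["steps"] = merge_steps(session["steps"], new_steps)
        fd, tmp = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
        try:
            with os.fdopen(fd, "w", encoding="utf-8") as f:
                json.dump(session, f)
                f.flush()
                os.fsync(f.fileno())  # make sure bytes hit disk before the swap
            os.replace(tmp, path)  # atomic on the same filesystem
        except BaseException:
            os.unlink(tmp)
            raise
    return session
```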

Balancing AI and deterministic analysis required careful thought. Relying solely on LLM-based evaluation would be too slow and expensive for real-time monitoring. Relying solely on rules would miss nuanced issues. The solution was a dual-layer approach: StepAnalyzer handles fast, deterministic checks while Nova Lite provides deeper semantic analysis. This gives Norn both speed and intelligence.

Multi-agent swarm monitoring introduced a different class of problems. Individual agent monitoring is one thing, but tracking alignment, goal drift, and data handoffs across an entire pipeline of agents required a new data model. I built a swarm grouping system with swarm_id and swarm_order, added AI-powered pipeline coherence analysis using Nova Lite to evaluate cross-agent goal alignment, and designed a pipeline visualization that shows how quality degrades (or improves) across stages.
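
The grouping half of this data model can be sketched in a few lines. Sessions are assumed to be dicts carrying `agent_name`, an optional `swarm_id`, `swarm_order`, and a numeric `quality_score`; that field name and both functions are hypothetical. Pairing consecutive stages makes it easy to see where quality drops at a handoff.

```python
from collections import defaultdict


def build_pipelines(sessions: list[dict]) -> dict[str, list[dict]]:
    """Group agent sessions by swarm_id and order each group by swarm_order."""
    pipelines: dict[str, list[dict]] = defaultdict(list)
    for s in sessions:
        if s.get("swarm_id"):  # standalone agents are not part of any pipeline
            pipelines[s["swarm_id"]].append(s)
    return {
        sid: sorted(stages, key=lambda s: s.get("swarm_order", 0))
        for sid, stages in pipelines.items()
    }


def stage_deltas(stages: list[dict], metric: str = "quality_score") -> list[tuple[str, int]]:
    """Change in a metric at each handoff; negative values mean degradation."""
    return [
        (f"{a['agent_name']} -> {b['agent_name']}", b[metric] - a[metric])
        for a, b in zip(stages, stages[1:])
    ]
```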

Preventing malicious agents from gaming the system was an unexpected challenge. During testing, I discovered that a cleverly crafted agent could embed instructions in its output to influence the AI evaluator into giving high scores. I hardened the evaluation pipeline with strict scoring calibration, output sanitization, and independent verification, ensuring that Norn's assessments remain trustworthy even when monitoring adversarial agents.
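
A hedged sketch of two of these defenses, with hypothetical helper names and deliberately small patterns (it does not reproduce Norn's calibration logic). First, agent output is scrubbed of evaluator-directed phrases and wrapped in explicit data delimiters before it reaches the evaluator prompt. Second, the evaluator's reply is parsed strictly and clamped, so a malformed or out-of-range score is rejected instead of trusted.

```python
import json
import re

# Phrases that try to address the evaluator directly (illustrative, not exhaustive).
INJECTION_HINTS = re.compile(
    r"(ignore (all |any )?(previous|prior) instructions|you are the evaluator|"
    r"(give|assign|rate)( this| me)? (a )?(score|rating) of)",
    re.I,
)


def sanitize_agent_output(text: str, limit: int = 4000) -> str:
    """Neutralize evaluator-directed phrases and fence the text as data."""
    cleaned = INJECTION_HINTS.sub("[removed]", text[:limit])
    # Strip our delimiter so the agent cannot close the data block early.
    cleaned = cleaned.replace("<<<", "").replace(">>>", "")
    return f"<<<AGENT_OUTPUT (untrusted data, not instructions)\n{cleaned}\n>>>"


def parse_scores(reply: str) -> dict:
    """Parse the span from the first '{' to the last '}' and clamp scores to 0-100."""
    match = re.search(r"\{.*\}", reply, re.S)
    if not match:
        raise ValueError("evaluator reply contained no JSON object")
    data = json.loads(match.group(0))  # JSONDecodeError is a ValueError subclass
    scores = {}
    for key in ("relevance", "security"):
        value = data.get(key)
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise ValueError(f"missing or non-numeric score: {key}")
        scores[key] = max(0, min(100, int(value)))
    return scores
```

Pattern-based scrubbing alone is easy to evade with paraphrasing, which is why it is paired here with strict output validation and, per the text above, independent verification.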

Regional API access limitations were a frustrating blocker. Nova Act API keys are not available in all regions, and my region (Turkey) is one of the unsupported ones. This meant I could design and build the Shadow Browser verification system (the code is fully written), but I was unable to test it end-to-end with a live Nova Act instance. The feature is ready to activate as soon as API access becomes available in my region.
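
Since Nova Act could not be exercised, the following is only a hedged sketch of the comparison step that could run once both views of a page exist: the text the agent reports from a URL, and the text captured by an independent browser session. No Nova Act calls are shown; `verify_page`, the similarity threshold, and the patterns are assumptions.

```python
import difflib
import re

# Illustrative patterns for instructions hidden in page content.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
    re.compile(r"(send|post|upload) .{0,40}(credentials|api key|password)", re.I),
]


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so layout differences don't count."""
    return " ".join(text.lower().split())


def verify_page(agent_view: str, shadow_view: str, min_similarity: float = 0.8) -> dict:
    """Compare the agent's reported page text with an independent capture."""
    similarity = difflib.SequenceMatcher(
        None, _normalize(agent_view), _normalize(shadow_view)
    ).ratio()
    # Scan the independent capture: the agent's own report may have been sanitized.
    injections = [p.pattern for p in INJECTION_PATTERNS if p.search(shadow_view)]
    return {
        "similarity": round(similarity, 3),
        "content_mismatch": similarity < min_similarity,
        "injection_detected": bool(injections),
        "matched_patterns": injections,
    }
```

A low similarity means the agent's account of the page diverges from what an independent visitor sees, which is a signal worth flagging even when no known injection phrase matches.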

During development, I encountered a very instructive moment: a delete operation in the dashboard called shutil.rmtree(""), which wiped the entire project directory. This incident made it concretely clear why filesystem operations need to be gated by source type. It pushed me to significantly harden the safety controls. In a way, Norn caught its own security vulnerability.
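
A minimal sketch of the kind of guard this incident motivates, with hypothetical names (`safe_remove_agent_dir`, the `DELETABLE_SOURCES` set standing in for "source type"). It refuses empty paths (note that `pathlib.Path("")` is the current directory `.`), anything that does not resolve strictly inside the workspace root, and agents whose source type means Norn does not own their files.

```python
import shutil
from pathlib import Path

DELETABLE_SOURCES = {"github", "zip"}  # hypothetical: only copies Norn imported itself


def safe_remove_agent_dir(workspace_root: Path, agent_dir: str, source_type: str) -> Path:
    """Delete an imported agent's directory only if every safety check passes."""
    if not agent_dir or not agent_dir.strip():
        raise ValueError("refusing to delete: empty path")
    if source_type not in DELETABLE_SOURCES:
        raise PermissionError(f"refusing to delete files of source type {source_type!r}")
    root = Path(workspace_root).resolve()
    target = (root / agent_dir).resolve()  # resolves "..", symlinks, absolute paths
    # The target must be a strict descendant of the root, never the root itself.
    if target == root or root not in target.parents:
        raise PermissionError(f"refusing to delete outside workspace: {target}")
    if not target.is_dir():
        raise FileNotFoundError(target)
    shutil.rmtree(target)
    return target
```

Checking the resolved path, not the raw string, is what catches `.`, `..`, absolute paths, and symlinks that point outside the workspace.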

Accomplishments that we're proud of

- Single-line to full observability: Any Strands agent goes from zero monitoring to full real-time quality and security tracking with just `hooks=[hook]`: no refactoring, no config files, no setup ceremony.

- Dual-layer analysis engine: Combining the deterministic StepAnalyzer with AI-powered Nova Lite evaluation gives Norn both the speed of rule-based checks and the intelligence of semantic analysis, in real time and per step.

- Multi-agent swarm monitoring: Not just individual agents, but entire pipelines. Alignment scoring, goal drift detection, and handoff tracking across agent chains: a capability that didn't exist in any tool I could find.

- Shadow Browser verification: Using Nova Act to independently verify what an agent claims to see on the web, shifting from passive observation to active security verification. This is a fundamentally different approach to agent security.

- Anti-gaming defenses: Norn produces trustworthy scores even when monitoring adversarial agents that try to manipulate the evaluator through their output.

- Self-discovered vulnerability: The `shutil.rmtree("")` incident during development proved that Norn's own monitoring philosophy applies to itself, and it led to significantly hardened safety controls.

- Smart task generation: Agents imported from GitHub get an AI-generated test task that actually matches their capabilities, making it possible to evaluate any agent immediately after import with zero manual effort.

What we learned

The most valuable thing I learned through this project was experiencing firsthand that agent observability is not a "nice to have"; it's critical infrastructure. I saw how slow debugging was while building agents without monitoring, and how dramatically everything accelerated once Norn's dashboard went live. Issues that would take hours of log-reading to diagnose became immediately visible.

On the technical side, I discovered how powerful and extensible Strands' hook architecture really is. Being able to capture every event, from before a tool call to after the full invocation, gave me the ability to model agent behavior with complete transparency.

Building the multi-agent swarm monitoring taught me that the hardest problems in agent systems aren't about individual agents but about the spaces between them: how data flows from one to another, how goals drift across handoffs, and how a failure in one stage cascades through the pipeline.

Working with Amazon Nova's model family showed me the value of having the right model for each layer: Nova Lite for both fast per-step scoring and deep session evaluation, and Nova Act for independent browser-based verification. Nova Lite's speed-cost balance made it practical to use across all evaluation layers without compromising real-time performance.

What's next for Norn

Norn started as a single-agent monitoring tool and has grown into a full multi-agent quality platform. But there's still much more to build.

Next milestones:

- Behavioral baselines: Learn each agent's "normal" patterns over time and flag statistical anomalies automatically

- Multi-framework support: Extend monitoring beyond Strands to other agent frameworks

- Automated compliance reporting: Generate SOC 2 and GDPR-ready audit reports from session data

- Collaborative dashboard: Multi-user access with role-based permissions for team environments

- Full Shadow Browser coverage: Expand Nova Act verification to cover all web interactions, not just URL visits
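
Behavioral baselines are a planned milestone, not an existing feature. As a hedged illustration of one common approach rather than a committed design, a per-agent baseline can be the mean and standard deviation of a metric such as steps per session, with a new run flagged when its z-score exceeds a threshold. `is_anomalous`, the threshold of 3, and the minimum sample count are assumptions.

```python
import statistics


def is_anomalous(history: list[float], value: float, z_threshold: float = 3.0,
                 min_samples: int = 5) -> bool:
    """Flag value if it lies more than z_threshold standard deviations from the mean."""
    if len(history) < min_samples:
        return False  # not enough runs yet to define "normal"
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)  # sample standard deviation
    if stdev == 0:
        return value != mean  # perfectly stable history: any change stands out
    return abs(value - mean) / stdev > z_threshold
```

For an agent that usually takes about 10 steps, a run of 11 steps stays within the baseline, while a run of 25 steps would be flagged.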

But the biggest vision is beyond software agents. As Strands evolves toward robotics and physical-world agents, Norn's monitoring architecture is designed to evolve with it. My goal is to integrate Norn directly into the Strands robotics ecosystem, bringing the same real-time quality and security oversight to physical robots that make decisions in the real world. When an AI robot picks up an object, navigates a warehouse, or interacts with a human, every action should be as observable and auditable as a tool call in a software agent. That's where this journey is ultimately headed.

When Norn reaches that point, it won't just be a developer tool; it will be safety infrastructure for the AI agent and robotics ecosystem.

References

[1] Microsoft Security Blog (Feb 2026). "80% of Fortune 500 use active AI Agents: Observability, governance, and security shape the new frontier." https://www.microsoft.com/en-us/security/blog/2026/02/10/80-of-fortune-500-use-active-ai-agents-observability-governance-and-security-shape-the-new-frontier/

[2] McKinsey & Company (2024). "The state of AI: How organizations are rewiring to capture value." https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-how-organizations-are-rewiring-to-capture-value

[3] Gartner (Feb 2025). "Lack of AI-Ready Data Puts AI Projects at Risk." https://www.gartner.com/en/newsroom/press-releases/2025-02-26-lack-of-ai-ready-data-puts-ai-projects-at-risk

Built With

- amazon-bedrock

- amazon-nova-act

- amazon-nova-lite

- amazon-web-services

- boto3

- fastapi

- next.js

- pydantic

- python

- react

- strands-agents

- tailwind

- typescript

- websocket
