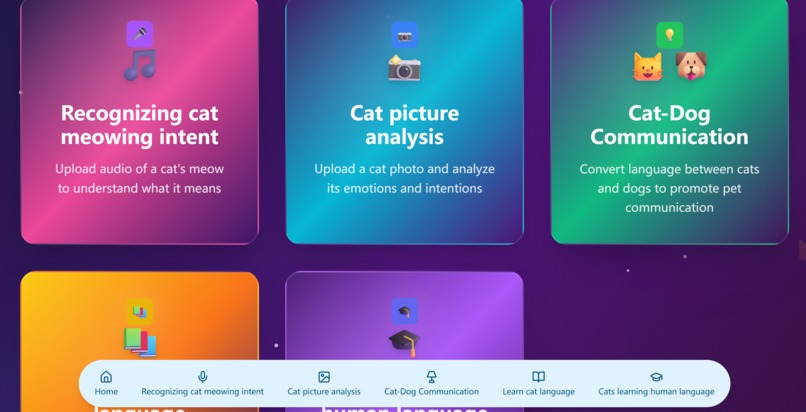

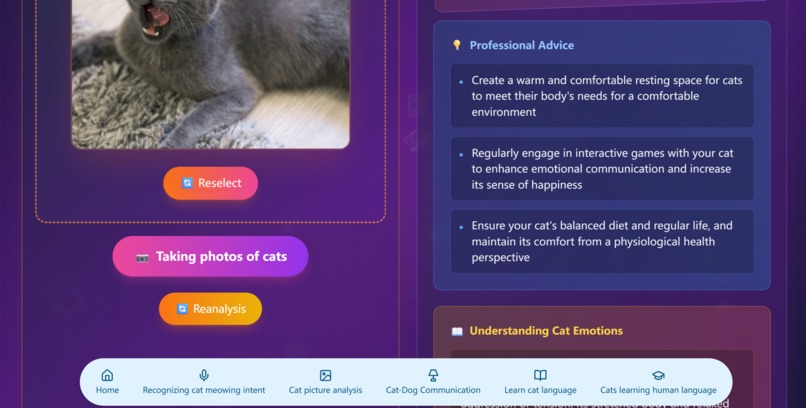

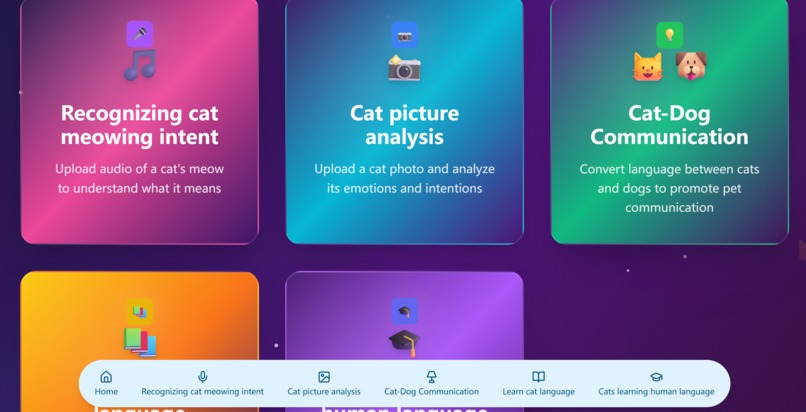

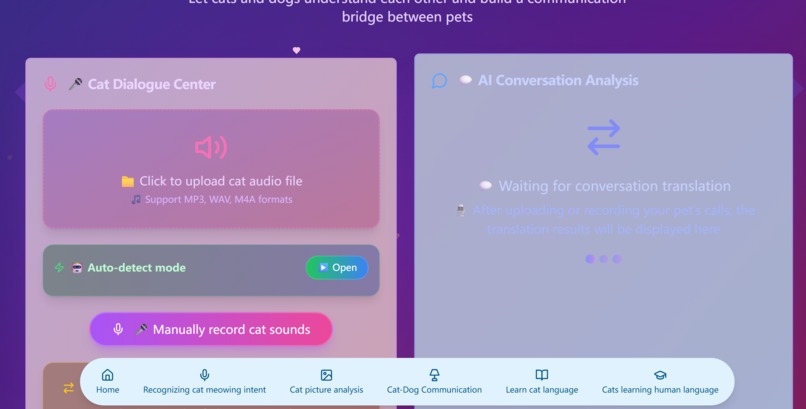

Home page

Function Navigation

Homepage Footer

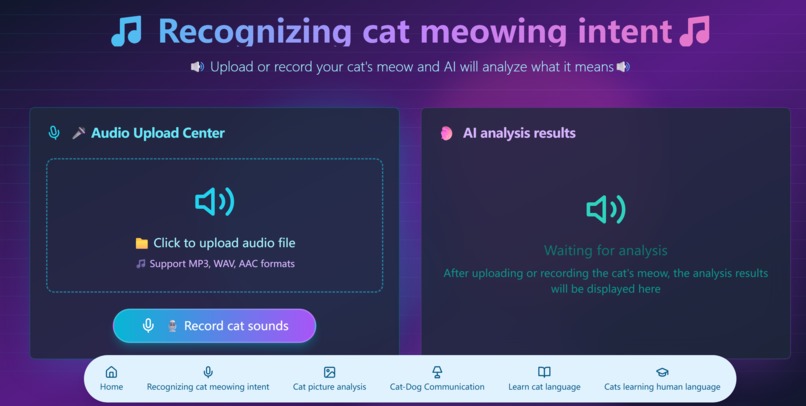

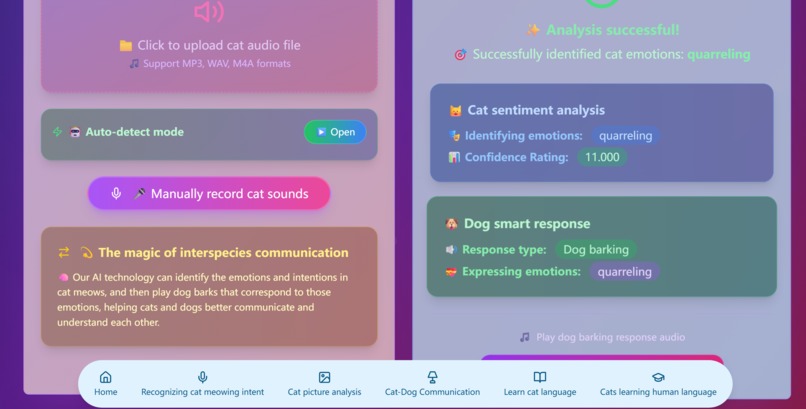

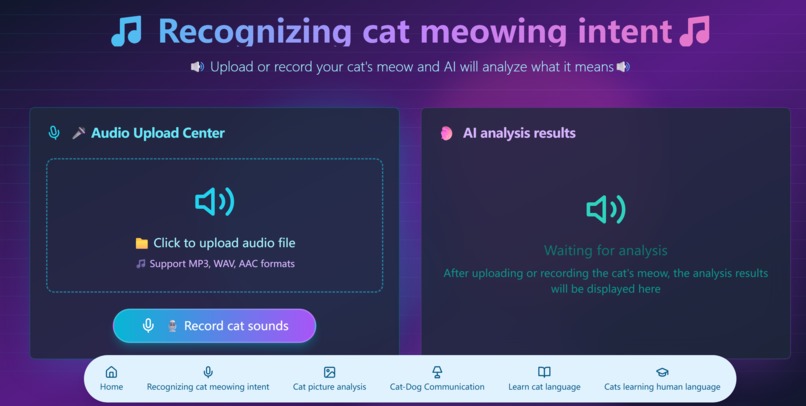

Animal language translation (for people)

Result of translation

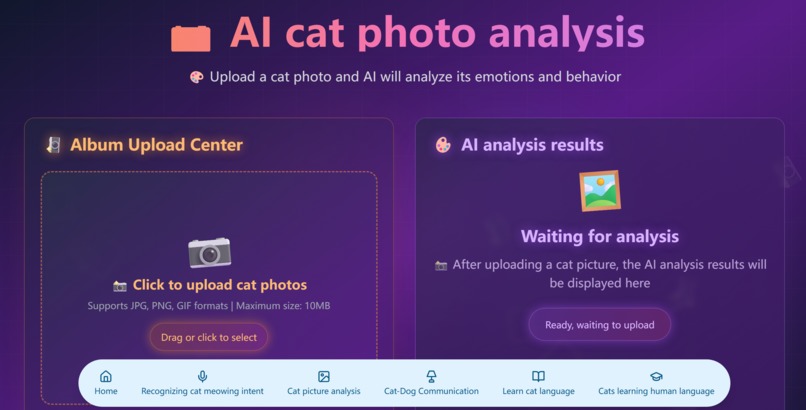

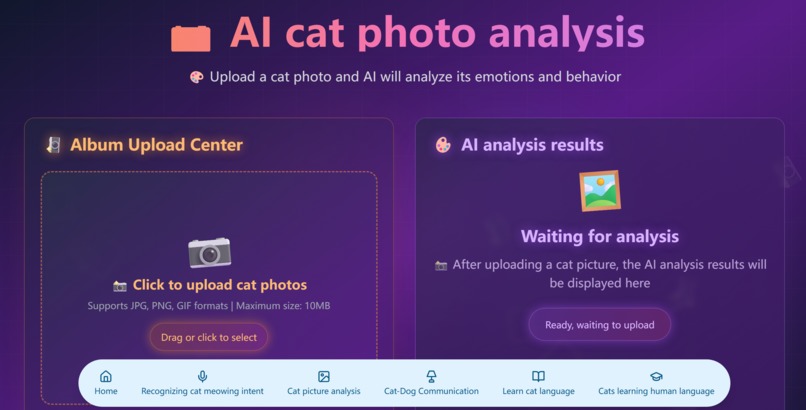

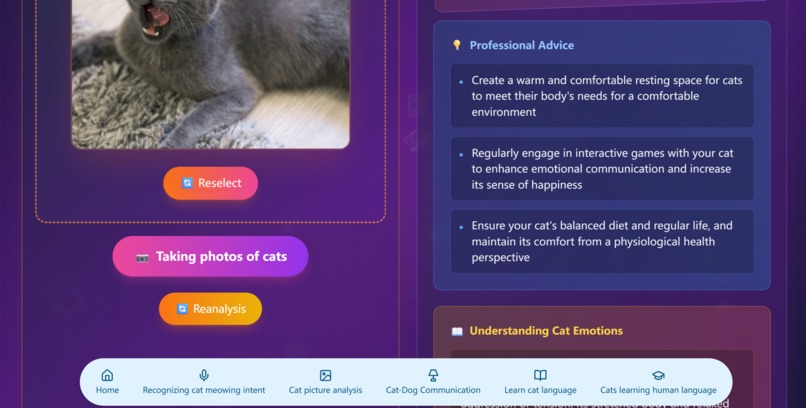

Image Analysis

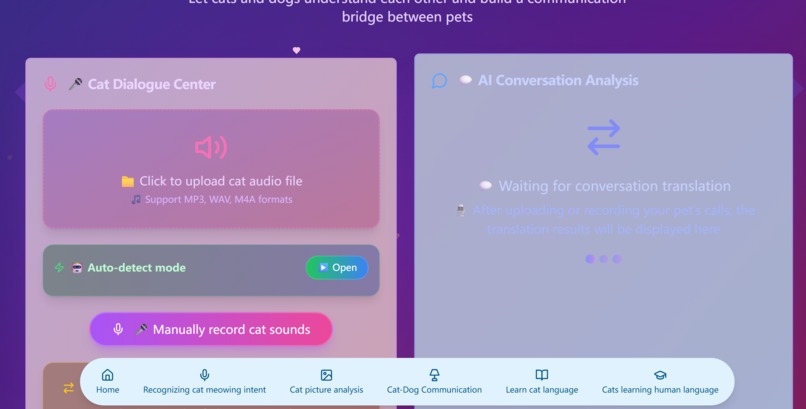

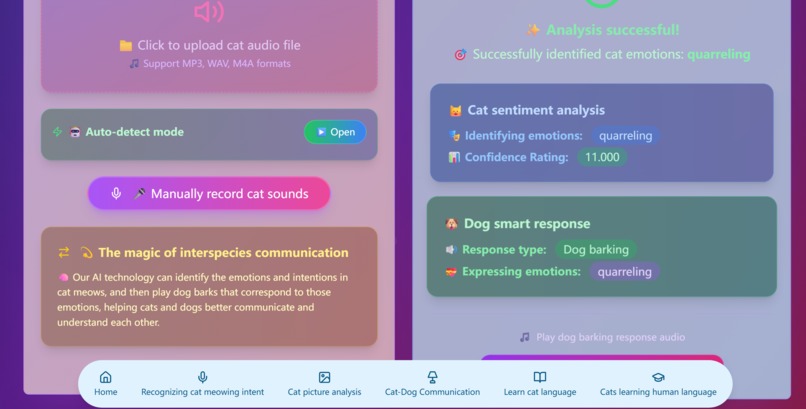

Animal language translation (for animals)

Result of Image Analysis

Result of animal language translation (for animals)

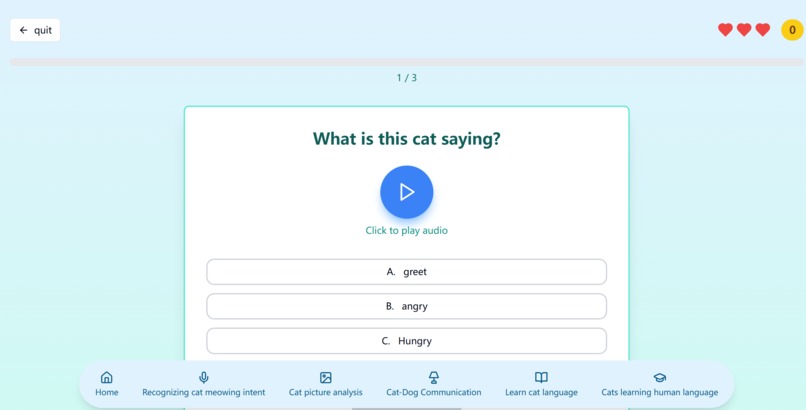

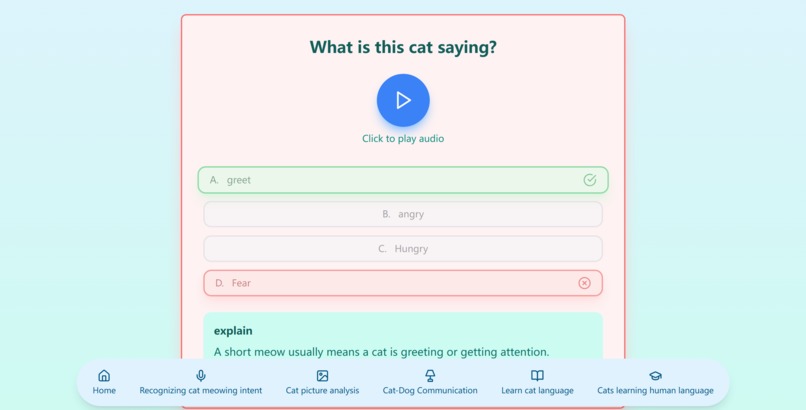

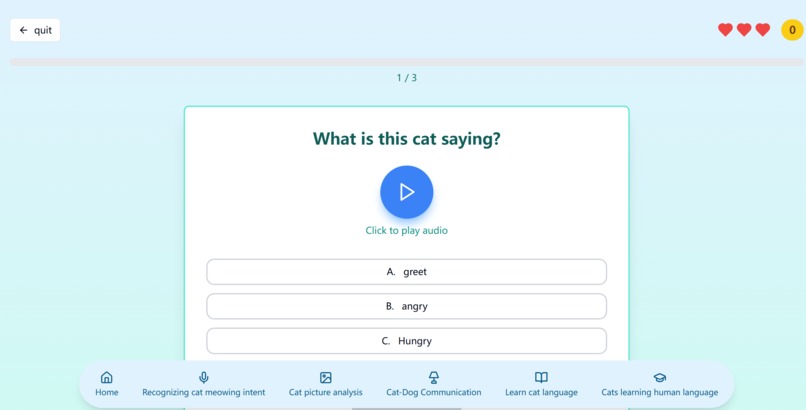

Duolingo for humans: learning cat language

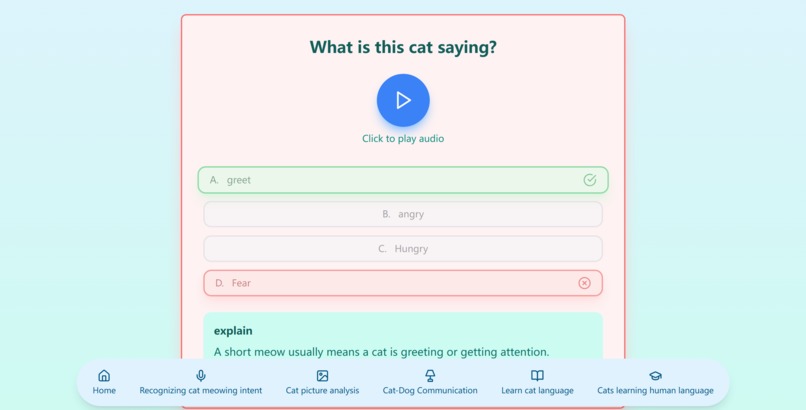

Sample questions (1)

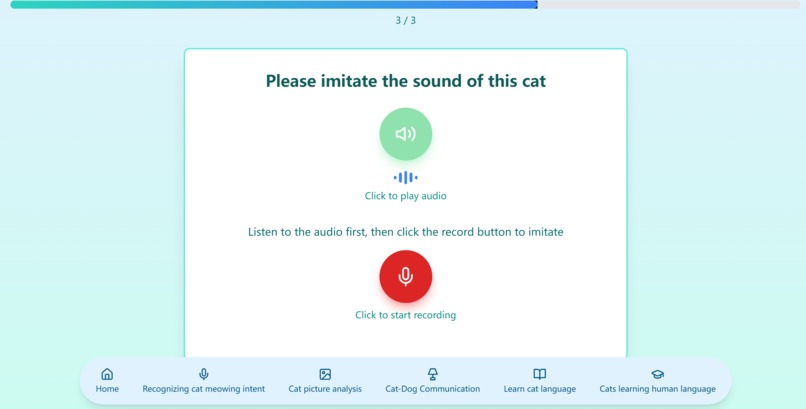

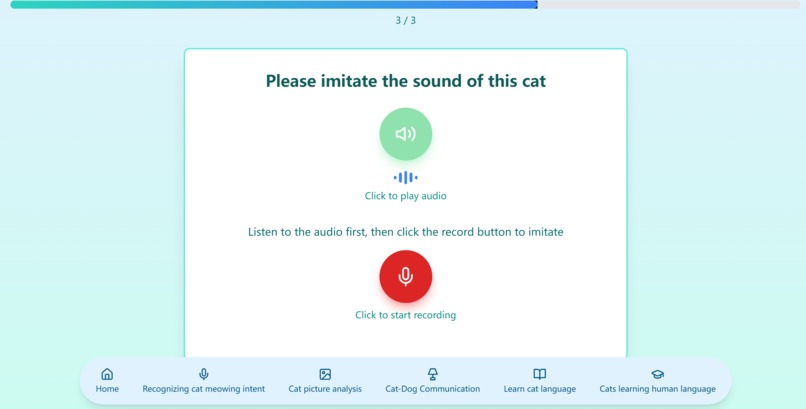

Sample questions (3)

Sample questions (2)

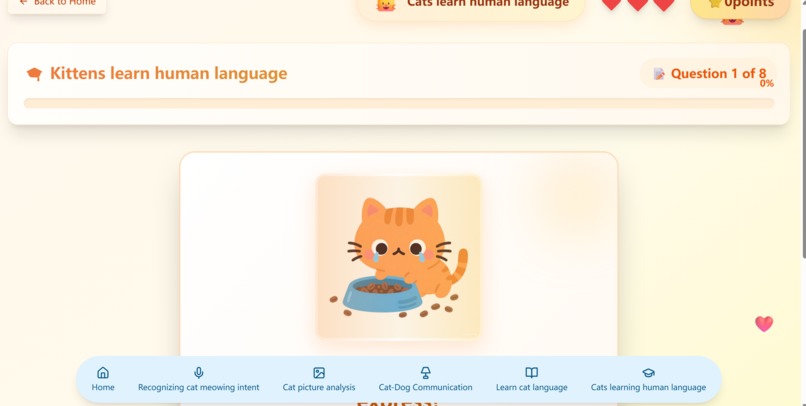

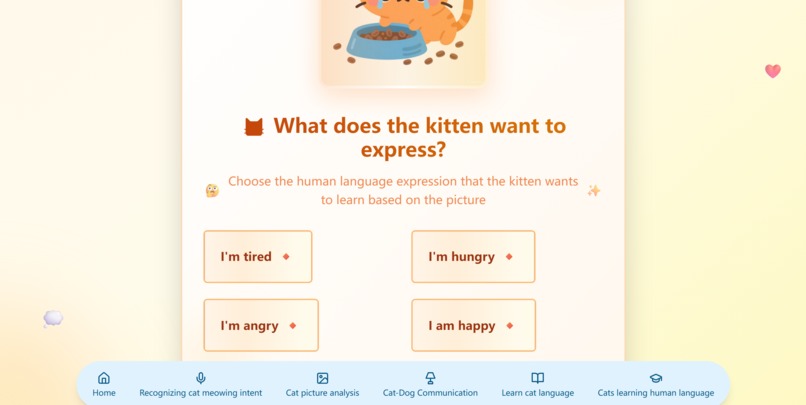

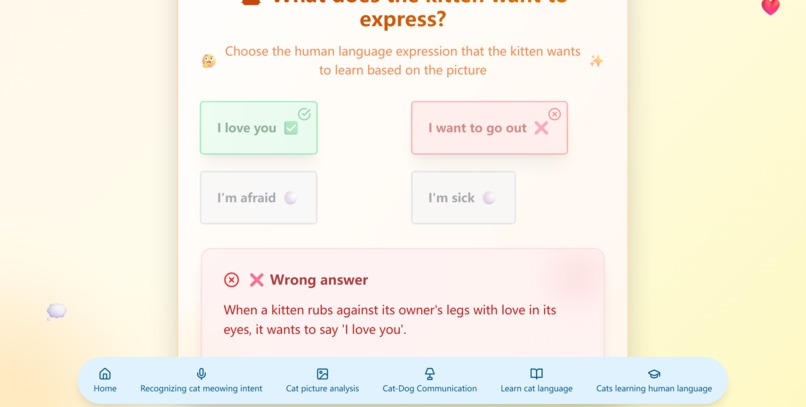

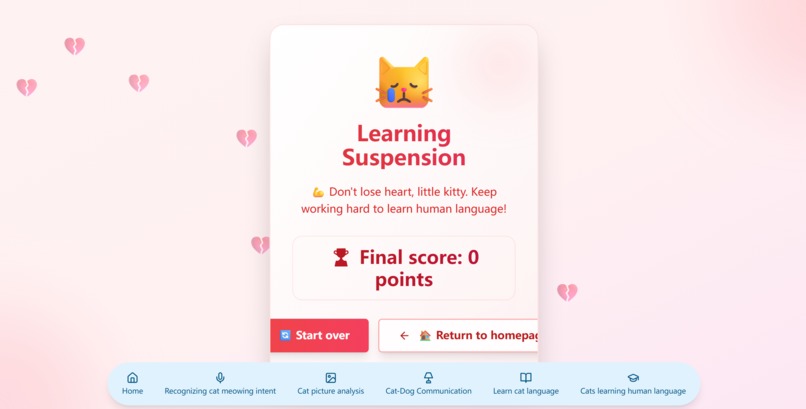

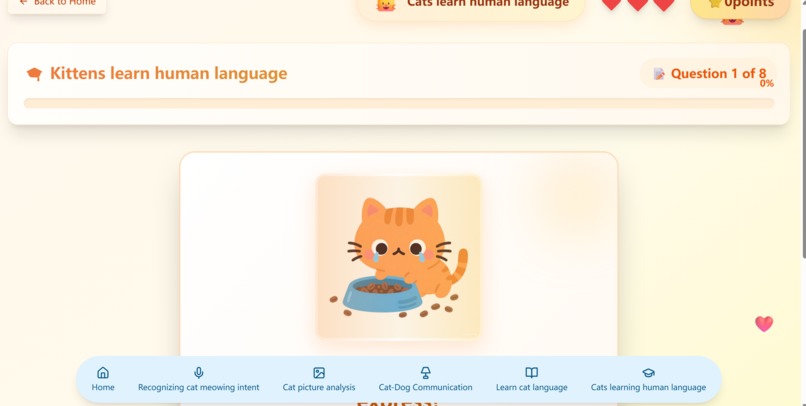

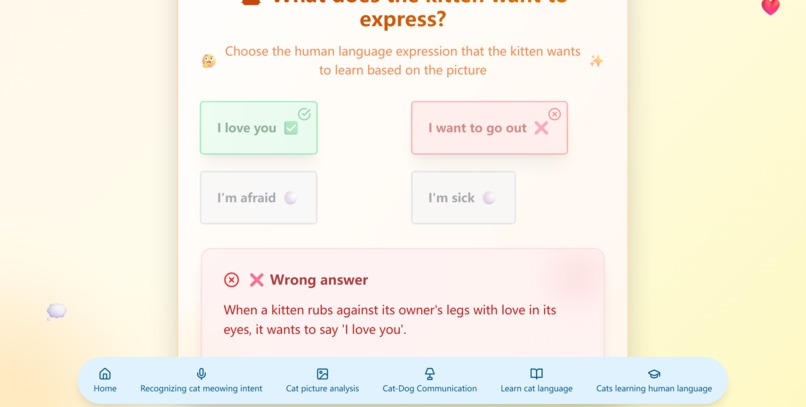

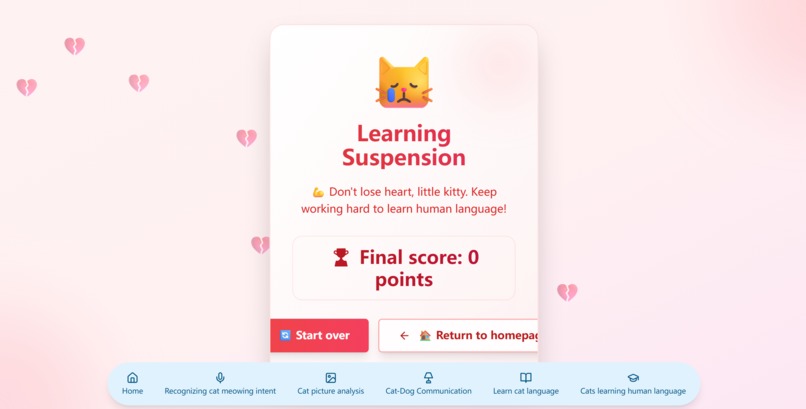

Cats learning human language, Duolingo-style (1)

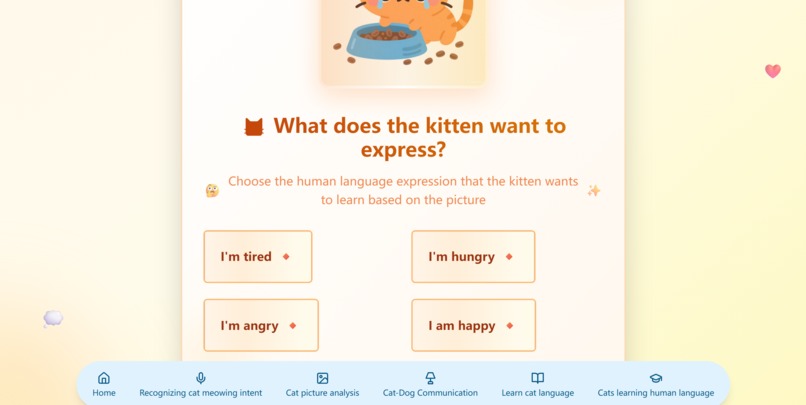

Cats learning human language, Duolingo-style (2)

Cats learning human language, Duolingo-style (3)

🌟 Inspiration

The story begins with my little orange tabby and my grandpa’s golden retriever. Every time I brought the cat to Grandpa’s house, chaos broke out, with “chickens flying and dogs jumping,” as the saying goes: the kitty arched its back in panic, the dog wagged its tail in excitement, and we humans stood there helpless, with no idea what either of them was “saying.” I kept wondering: why can parrots learn human words while our closest companions, cats and dogs, can’t talk to us directly? Then one day on a video site I saw someone imitate animal sounds and get huge reactions from pets. Even more amazing, I learned that speech-language pathologist Christina Hunger had taught her dog Stella to communicate with buttons, opening a new chapter in animal-communication research. These discoveries made me realize that animals have always been “talking”; we just haven’t learned to “listen.” With AI advancing so quickly, could we build a bridge so that emotional communication between humans and pets is no longer blocked by species?

🎯 What it does

PetLingo is an ML-powered pet-language translation system. Its main features:

- 🎵 Pet-emotion recognition: audio analysis identifies emotional states in cat and dog vocalizations (18 for cats, 7 for dogs)
- 🔄 Cross-species translation: converts cat vocalizations into the equivalent dog vocalizations, and vice versa
- 🎧 Real-time audio: live recording and file upload
- 📊 Emotion visualization: intuitive charts of the pet’s mood and the model’s confidence
- 🎮 Interactive learning: playful lessons that teach humans to “speak animal”
- 📱 Modern UI: responsive design that works on any device

🛠️ How we built it

We used a modern full-stack setup:

- Frontend: React 18 + Vite, Tailwind CSS, Framer Motion, Radix UI, React Router
- Backend: FastAPI, Scikit-learn, Librosa, NumPy and Pandas, Uvicorn

Workflow:

- Data: audio samples covering 18 cat moods and 7 dog moods
- Features: MFCC, spectral centroid, and others, extracted with Librosa
- Model: Random Forest classifier
- API: RESTful endpoints for upload and prediction
- UI: interactive React front end
- Integration: an end-to-end user experience
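The workflow above pairs Librosa audio features with a scikit-learn Random Forest. What follows is a minimal sketch of that idea, not the project’s actual code, and it rests on several assumptions. To stay self-contained it computes spectral centroid and RMS energy directly with NumPy instead of calling Librosa (a real pipeline would more likely use `librosa.feature.mfcc` and `librosa.feature.spectral_centroid`). It trains on synthetic tones rather than pet recordings, and the sample rate, frame sizes, and the two mood labels `calm` and `alert` are all hypothetical. The `predict_proba` output is the kind of per-mood confidence an emotion chart could display.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

SR = 16_000    # assumed sample rate in Hz (hypothetical; not stated in the write-up)
N_FFT = 1024   # assumed frame length in samples
HOP = 512      # assumed hop between frames in samples


def frame_signal(signal: np.ndarray) -> np.ndarray:
    """Split a 1-D signal into overlapping frames of N_FFT samples (signal must be >= N_FFT long)."""
    n_frames = 1 + (len(signal) - N_FFT) // HOP
    return np.stack([signal[i * HOP : i * HOP + N_FFT] for i in range(n_frames)])


def extract_features(signal: np.ndarray, sr: int = SR) -> np.ndarray:
    """Fixed-length summary of a clip: mean/std of spectral centroid and RMS energy.

    NumPy stand-in for Librosa feature extraction; a fuller version would add MFCCs.
    """
    frames = frame_signal(signal)
    mags = np.abs(np.fft.rfft(frames * np.hanning(N_FFT), axis=1))  # (n_frames, N_FFT // 2 + 1)
    freqs = np.fft.rfftfreq(N_FFT, d=1.0 / sr)                       # bin frequencies in Hz
    centroid = (mags @ freqs) / (mags.sum(axis=1) + 1e-10)          # magnitude-weighted mean frequency
    rms = np.sqrt(np.mean(frames ** 2, axis=1))                     # per-frame loudness
    return np.array([centroid.mean(), centroid.std(), rms.mean(), rms.std()])


rng = np.random.default_rng(0)


def synthetic_clip(freq_hz: float, seconds: float = 0.5, sr: int = SR) -> np.ndarray:
    """Hypothetical training data: a sine tone plus a little noise, standing in for a recording."""
    t = np.arange(int(seconds * sr)) / sr
    return np.sin(2 * np.pi * freq_hz * t) + 0.01 * rng.standard_normal(t.size)


# Hypothetical moods: low tones labelled "calm", high tones labelled "alert".
X, y = [], []
for label, base_hz in (("calm", 300.0), ("alert", 2000.0)):
    for _ in range(20):
        X.append(extract_features(synthetic_clip(base_hz * rng.uniform(0.9, 1.1))))
        y.append(label)

clf = RandomForestClassifier(n_estimators=100, random_state=0)
clf.fit(np.array(X), np.array(y))


def predict_mood(signal: np.ndarray) -> tuple[str, float]:
    """Return the most likely mood and its probability (the confidence a chart could show)."""
    probs = clf.predict_proba(extract_features(signal).reshape(1, -1))[0]
    best = int(np.argmax(probs))
    return str(clf.classes_[best]), float(probs[best])


mood, confidence = predict_mood(synthetic_clip(2000.0))
print(mood, round(confidence, 2))
```

Adding richer features, such as per-coefficient MFCC means, would only grow `extract_features`; the `fit` and `predict_proba` flow would stay the same.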

🚧 Challenges we ran into

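Technical:

- Inconsistent audio formats across devices
- Choosing the features that carry the most emotional information
- Achieving good accuracy with limited data
- Keeping processing latency under 2 seconds

Learning:

- Learning audio ML from scratch
- Integrating the front end, back end, and ML model
- Debugging “invisible” audio bugs

🏆 Accomplishments we’re proud of

- 🎯 85%+ emotion-recognition accuracy
- ⚡ Under 2 s processing time
- 🎨 A delightful UI that received great feedback
- 🔧 Combined classic ML with a modern web stack
- 📚 The whole team leveled up in AI, audio processing, and full-stack development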

💡 What we learned

We experienced firsthand what “technology serving emotion” means. The best moment was the first time we decoded my tabby’s “I’m hungry” meow, played the matching dog “hungry” bark, and watched Grandpa’s retriever run to its bowl! We realized we were strengthening the bond between people and pets, not just shipping code.

Technical takeaways:

- Audio signal processing
- ML model design and training
- Full-stack teamwork
- UX matters

Life takeaways:

- Empathy beats algorithms
- Interdisciplinary thinking
- Keep learning, fast and forever
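The tabby-to-retriever moment relies on cross-species translation: classify the cat’s emotion, then play a dog vocalization that expresses the same emotion. Below is a minimal sketch of that lookup, assuming both species’ labels are mapped onto a shared emotion vocabulary. The write-up does not list the 18 cat or 7 dog moods, so every label and the sample-file layout below are hypothetical. Because the two species have different numbers of moods, some labels may have no counterpart, and the function returns `None` in that case.

```python
from typing import Dict, Optional

# Hypothetical labels: each species-specific mood maps onto a shared emotion.
TO_SHARED: Dict[str, Dict[str, str]] = {
    "cat": {
        "hungry_meow": "hungry",
        "food_begging": "hungry",   # several cat moods can share one emotion
        "greeting_trill": "happy",
        "hiss": "defensive",
        "chatter": "hunting",       # no dog equivalent in this toy table
    },
    "dog": {
        "hungry_bark": "hungry",
        "play_bark": "happy",
        "growl": "defensive",
    },
}


def translate(label: str, source: str, target: str) -> Optional[str]:
    """Return the target-species label expressing the same shared emotion, or None."""
    shared = TO_SHARED[source].get(label)
    if shared is None:
        return None  # unknown source label
    for target_label, target_shared in TO_SHARED[target].items():
        if target_shared == shared:
            return target_label  # first match wins
    return None  # the target species has no equivalent emotion


def playback_path(label: str, species: str) -> str:
    """Hypothetical storage layout for the recorded samples that get played back."""
    return f"samples/{species}/{label}.wav"


dog_label = translate("hungry_meow", "cat", "dog")
if dog_label is not None:
    print(playback_path(dog_label, "dog"))
```

Routing both directions through one shared vocabulary keeps “vice versa” translation free: the same `translate` call works for dog-to-cat by swapping `source` and `target`.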

🚀 What’s next for PetLingo

Short term (3–6 months):

- More species (rabbits, birds)
- More data for higher accuracy
- Native iOS and Android apps
- A user community

Mid term (6–12 months):

- Smart collars and cameras
- Per-pet personalization
- Veterinary health insights
- UI in multiple human languages

Long term (1–3 years):

- A full AI pet assistant
- A sustainable business model
- Research partnerships
- Global rollout

Ultimate vision: make PetLingo the bridge between the human and animal worlds, so every family can understand and care for its companions, building a planet where people and animals live together in harmony.

Built With

- ai

- css

- fastapi

- python

- radix

- react

- scikit-learn

- tailwind

- uvicorn

- vite
