OpenNeuralingo - Logo

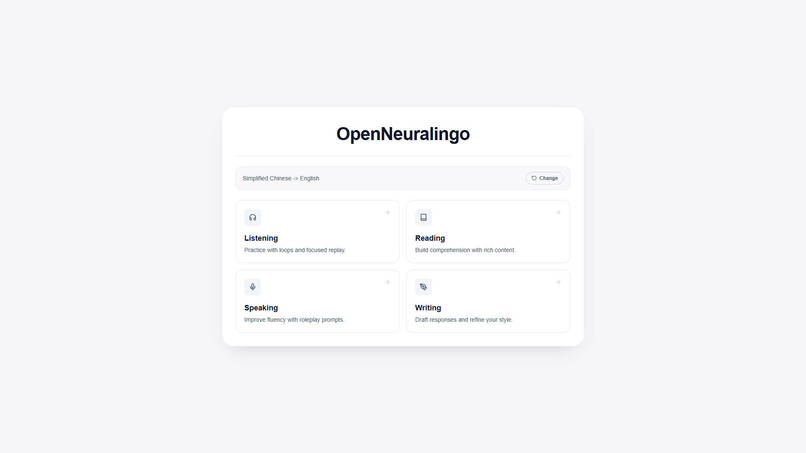

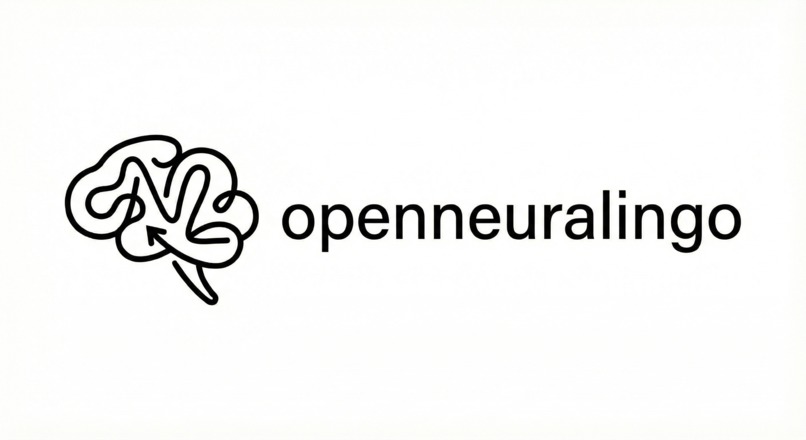

OpenNeuralingo - Landing Page

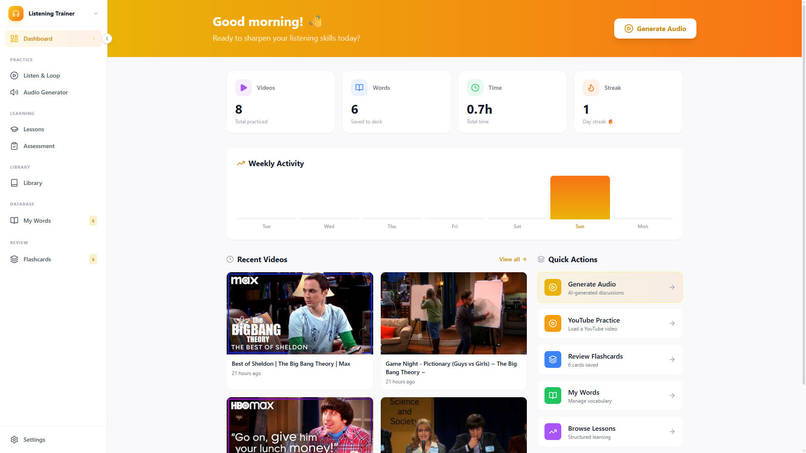

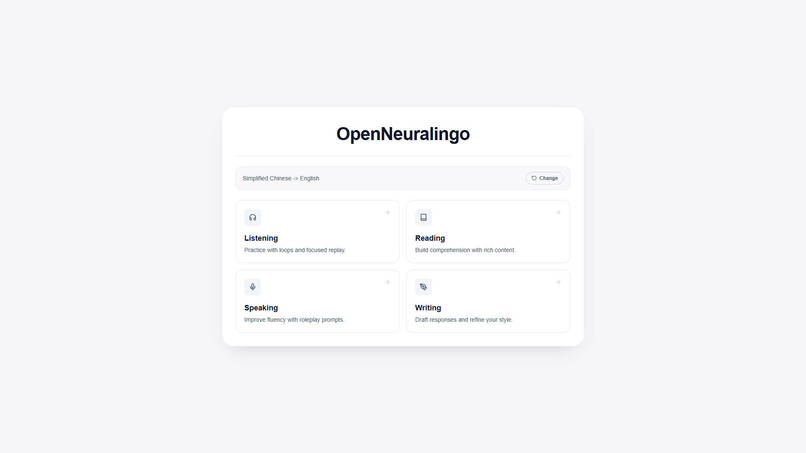

Listening Module

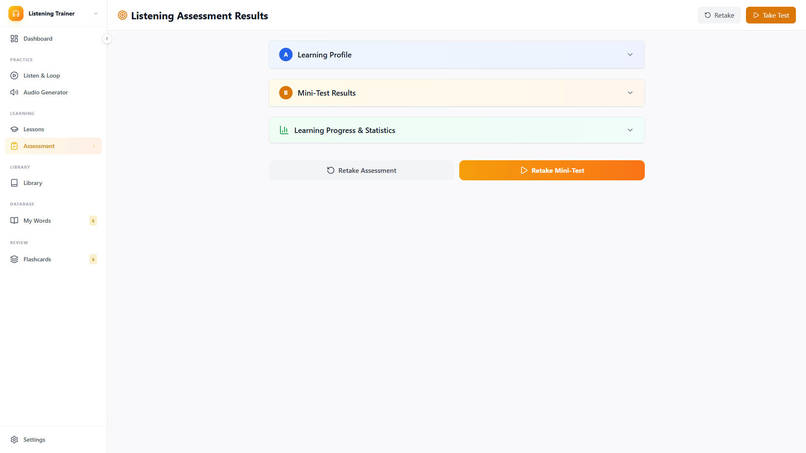

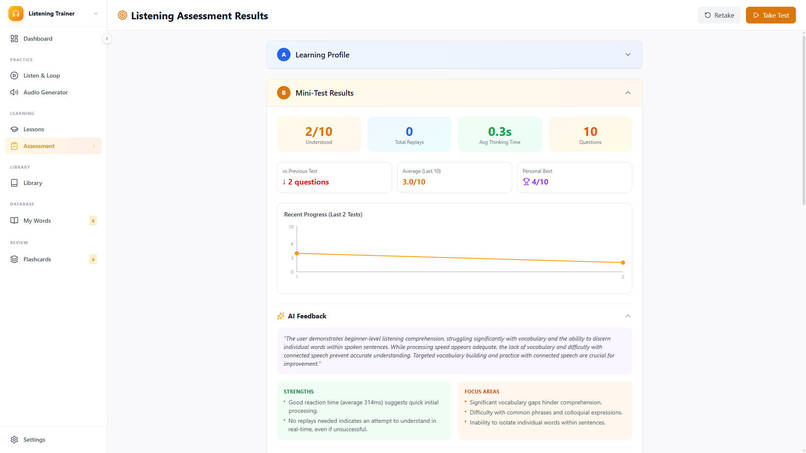

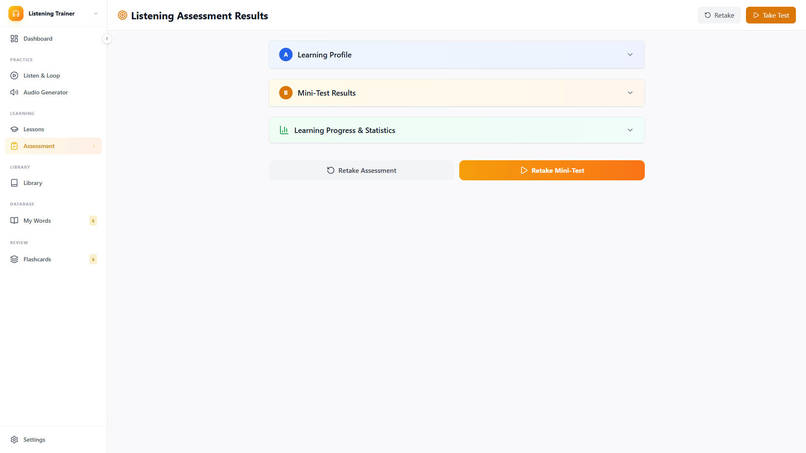

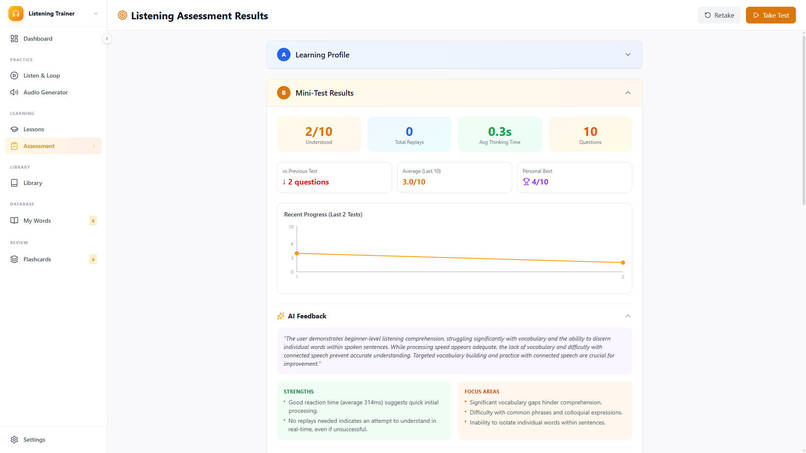

Listening Assessment Results

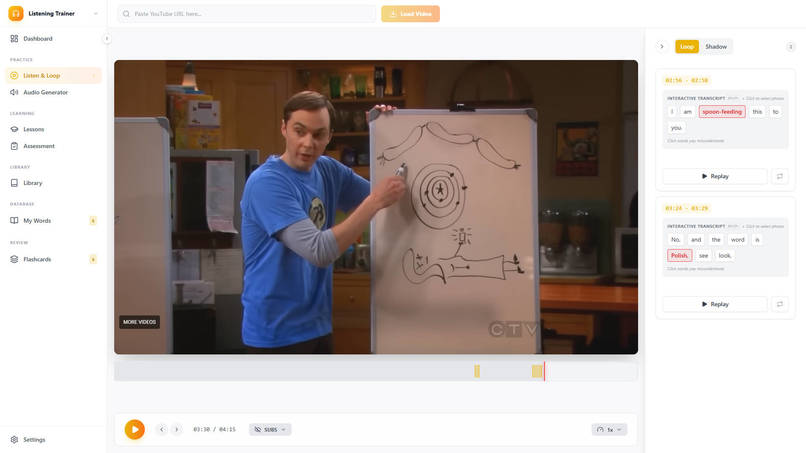

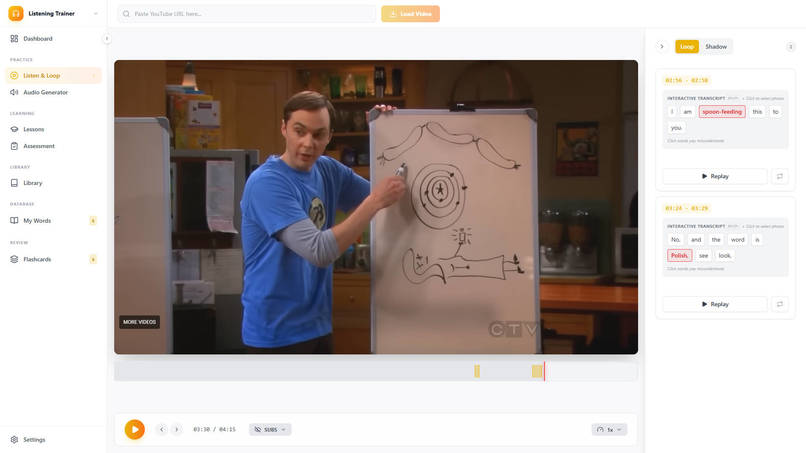

Listening - Listen and Loop

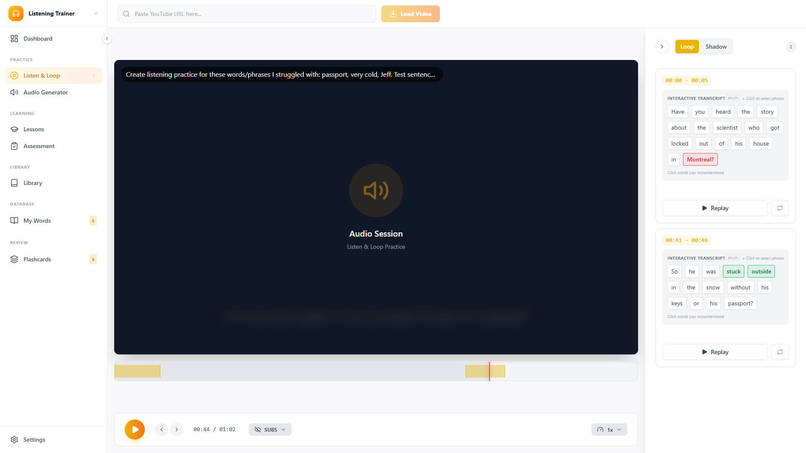

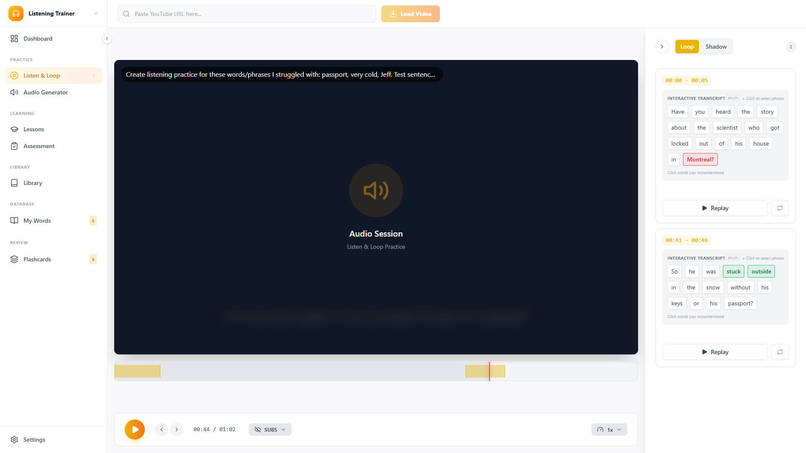

Listening - AI generated audio practice

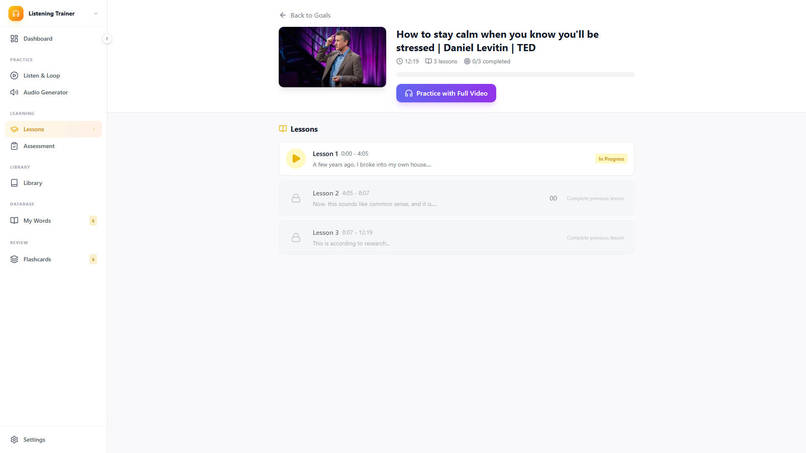

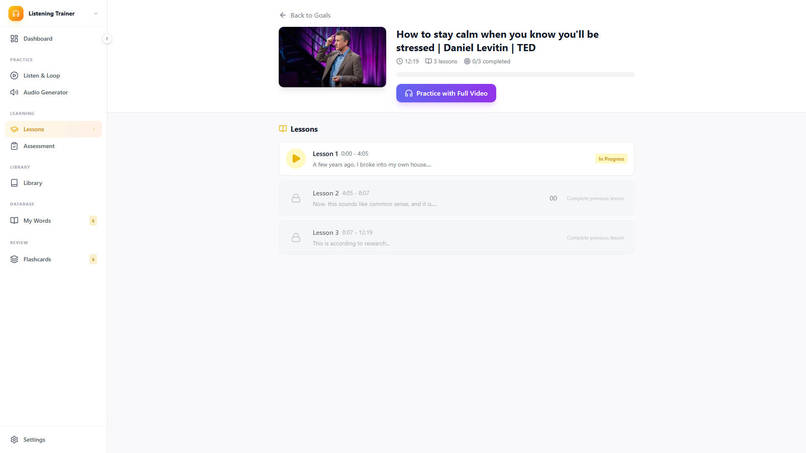

Listening - AI generated lessons

Listening - Assessment-feedback

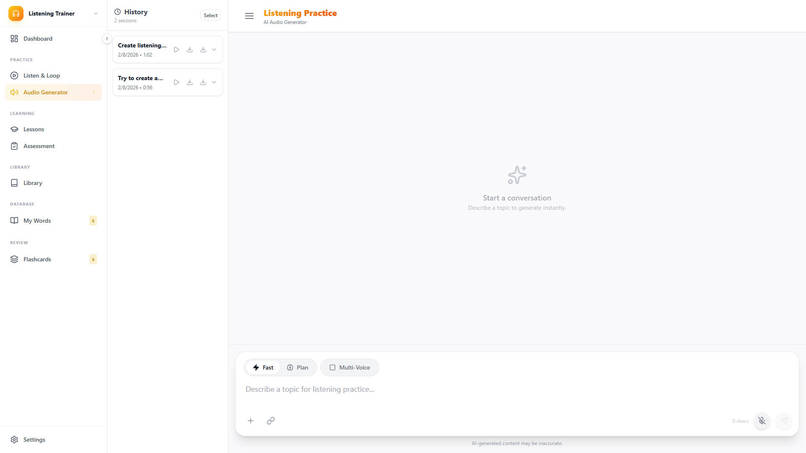

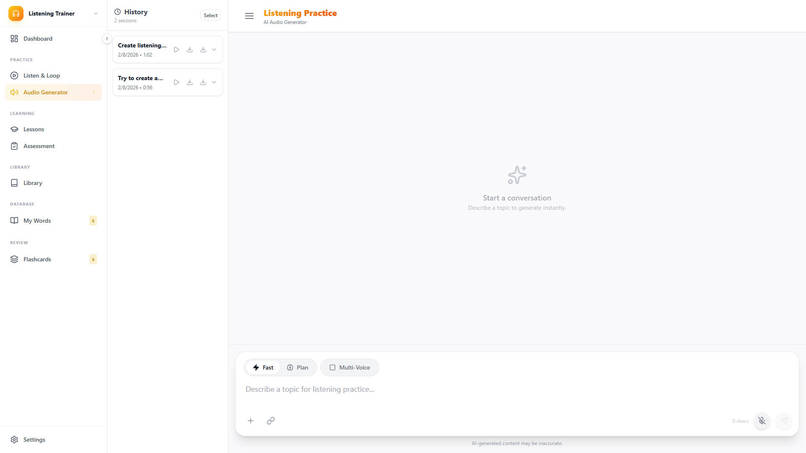

Listening - Audio generator

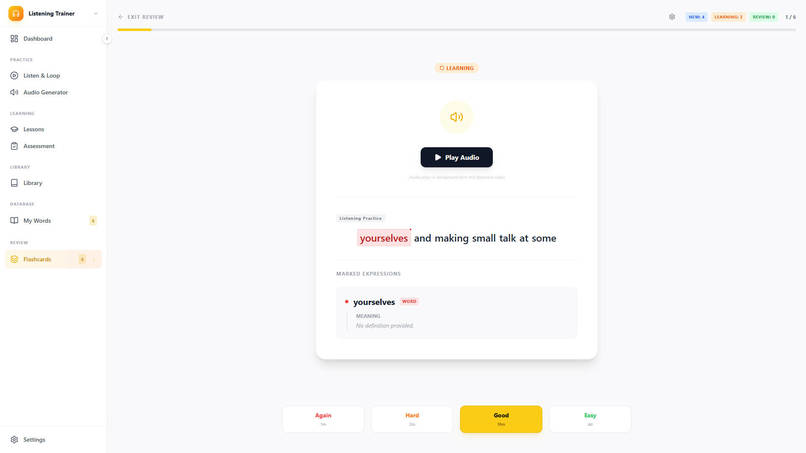

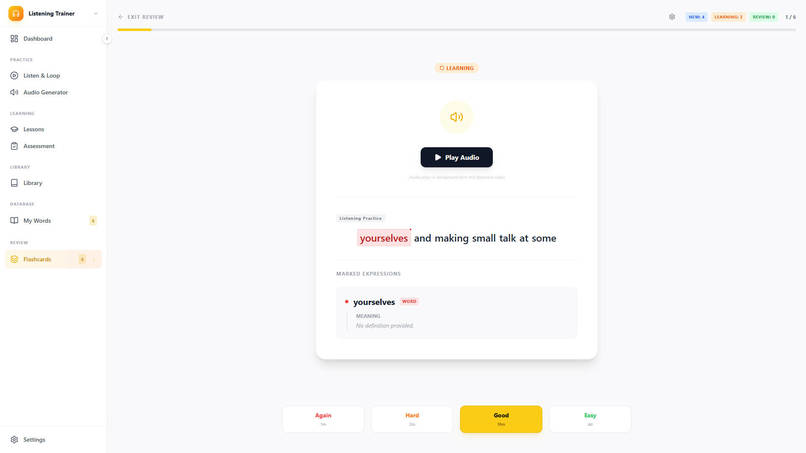

Listening - Flashcards

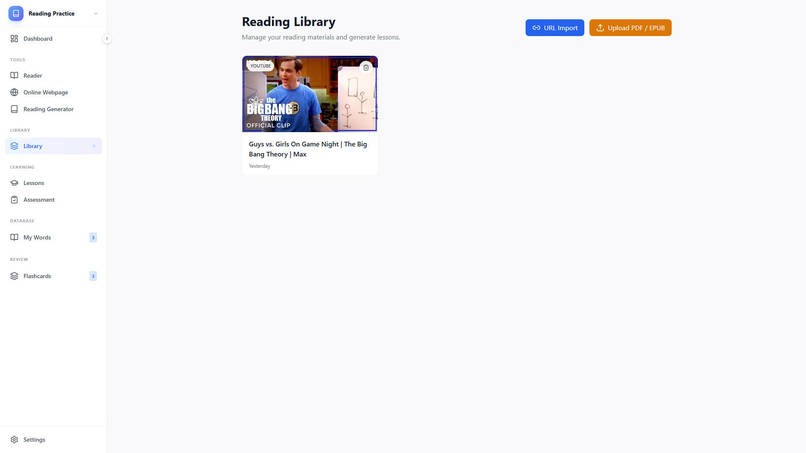

Listening - Library

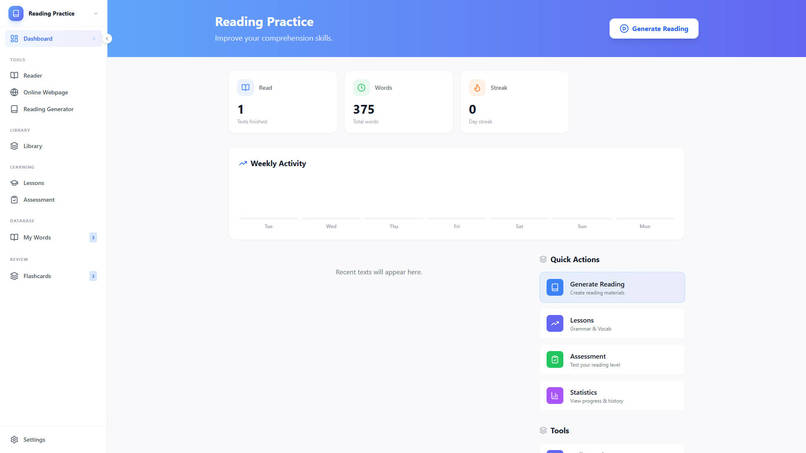

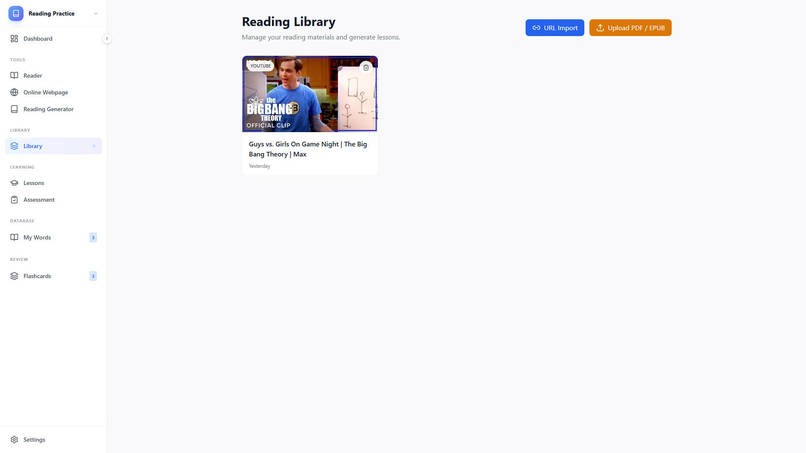

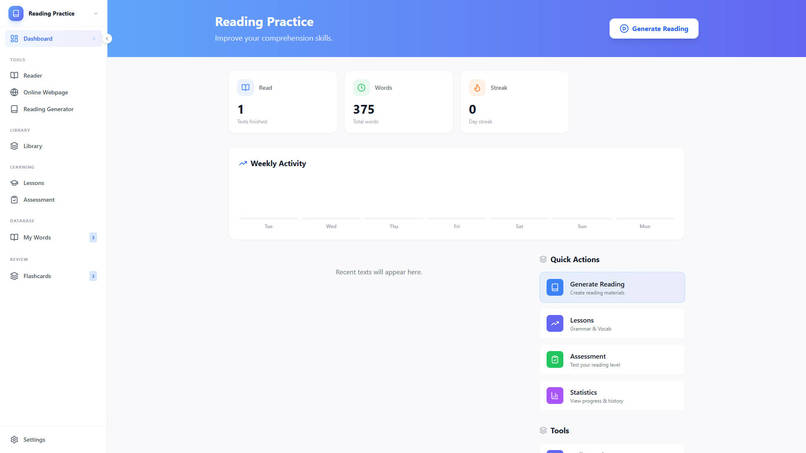

Reading Module

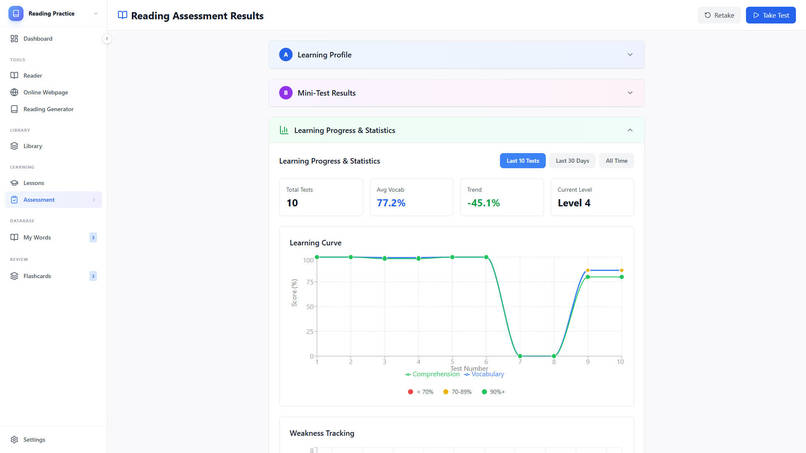

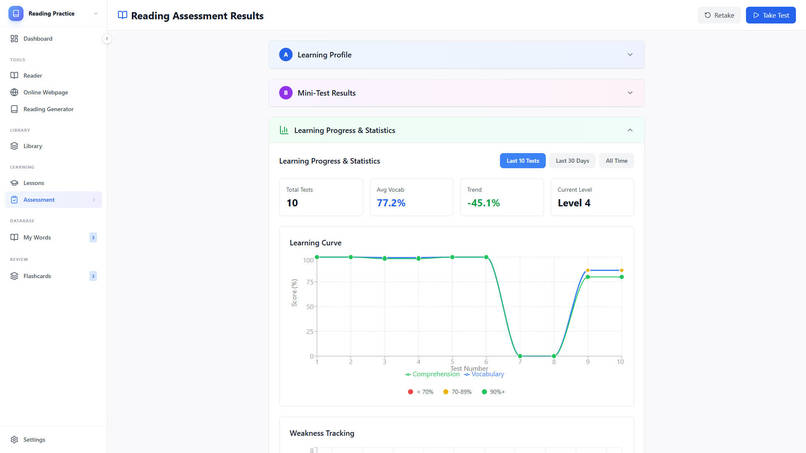

Reading - Assessment

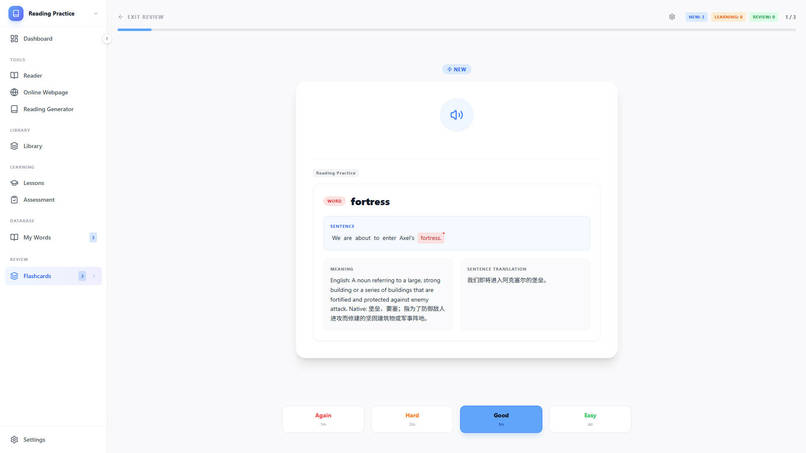

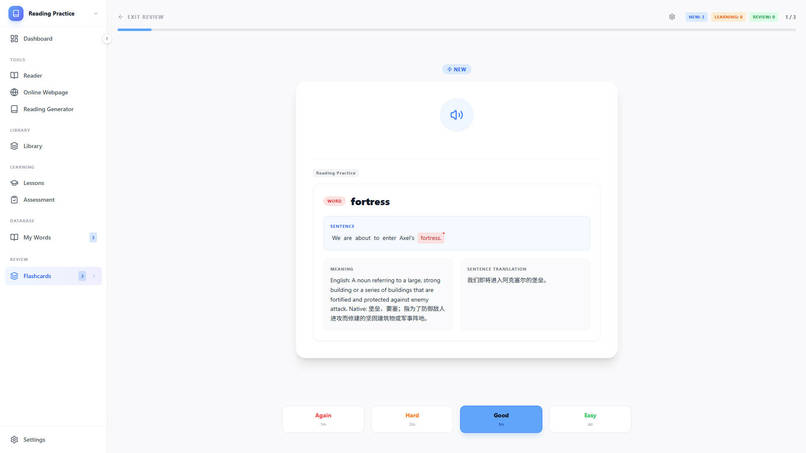

Reading - Flashcard

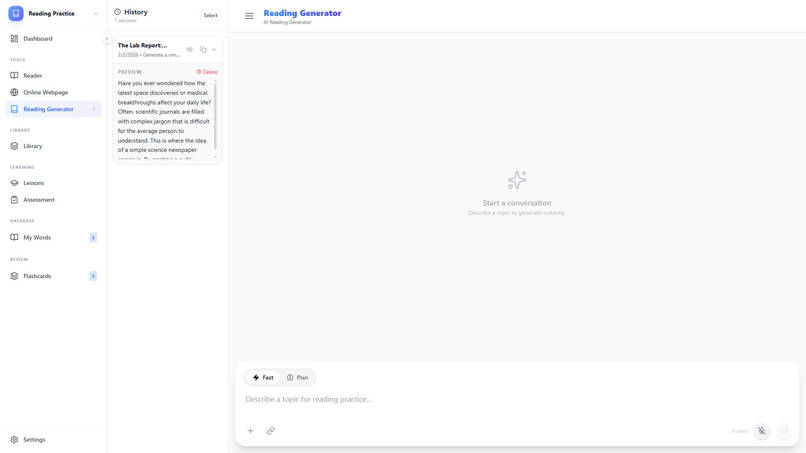

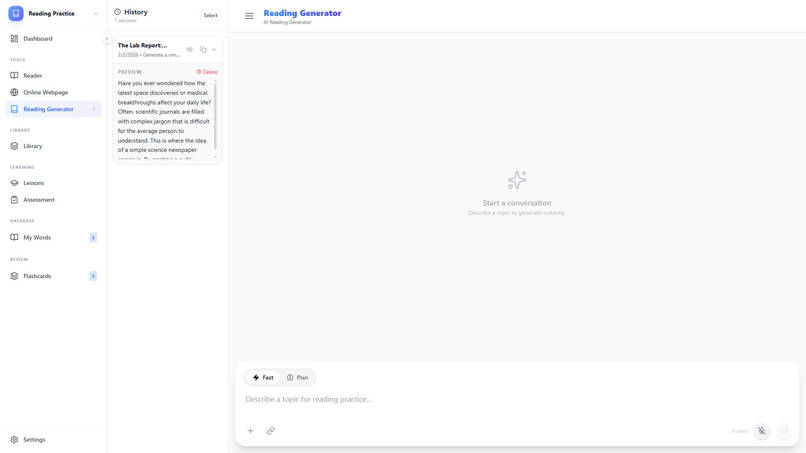

Reading - Generator

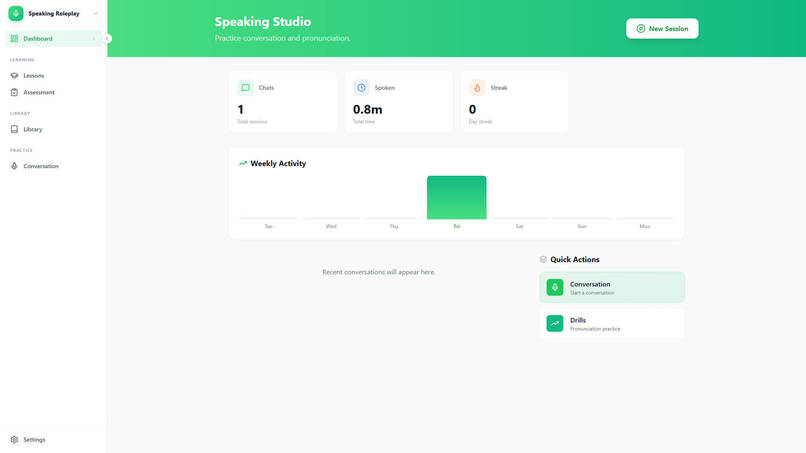

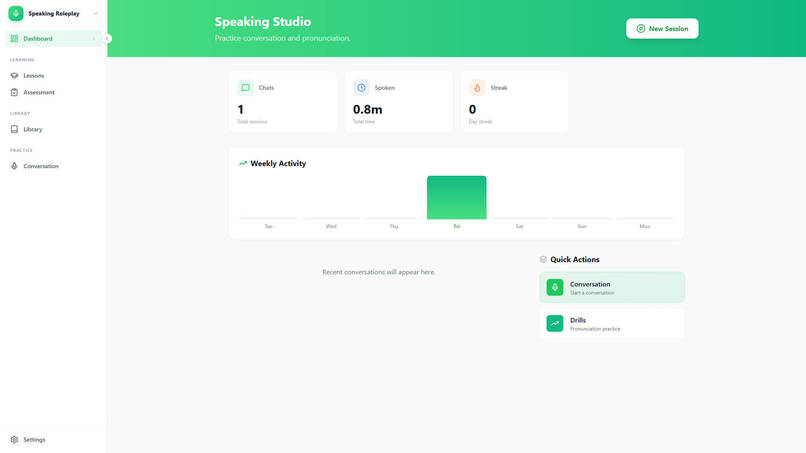

Speaking Module

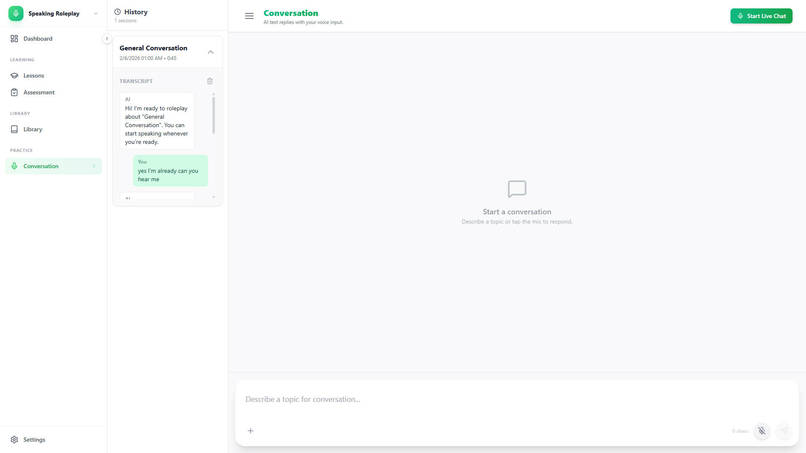

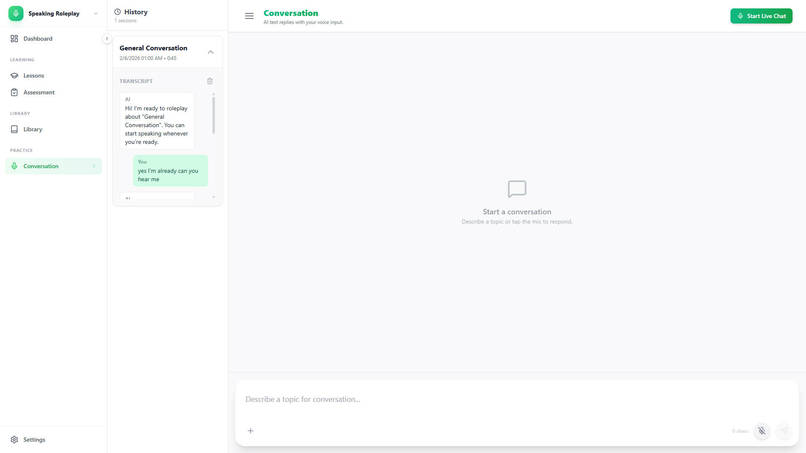

Speaking - AI conversation

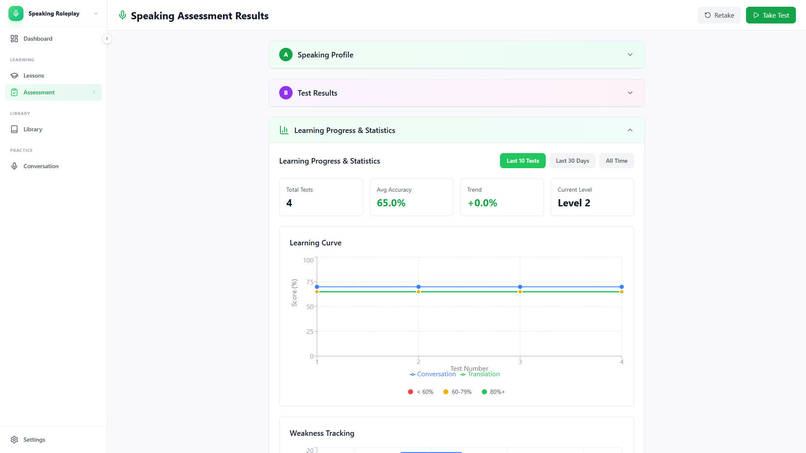

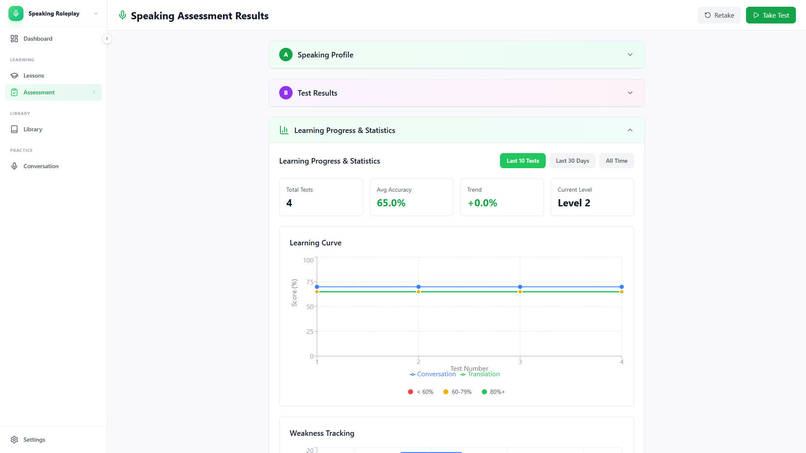

Speaking - self-assessment

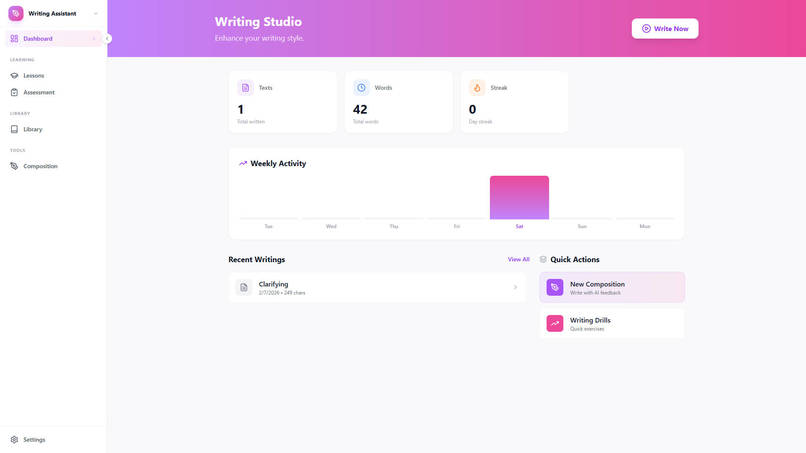

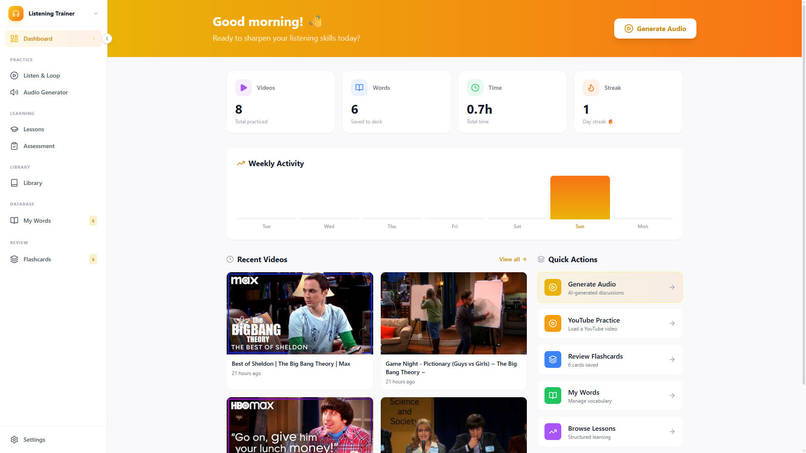

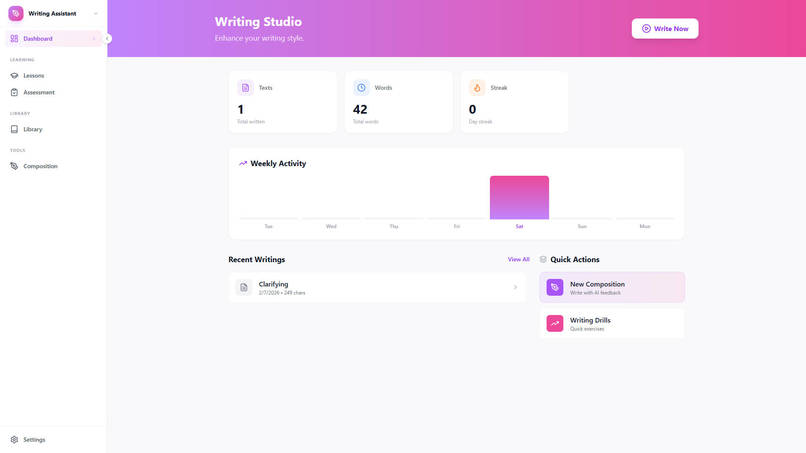

Writing Module

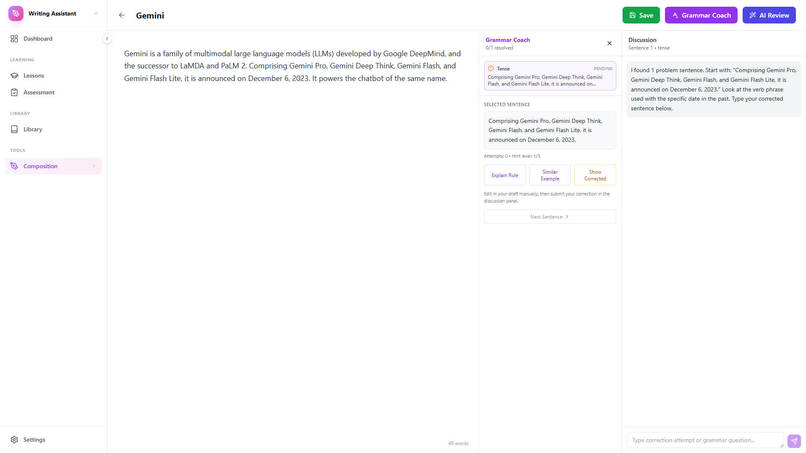

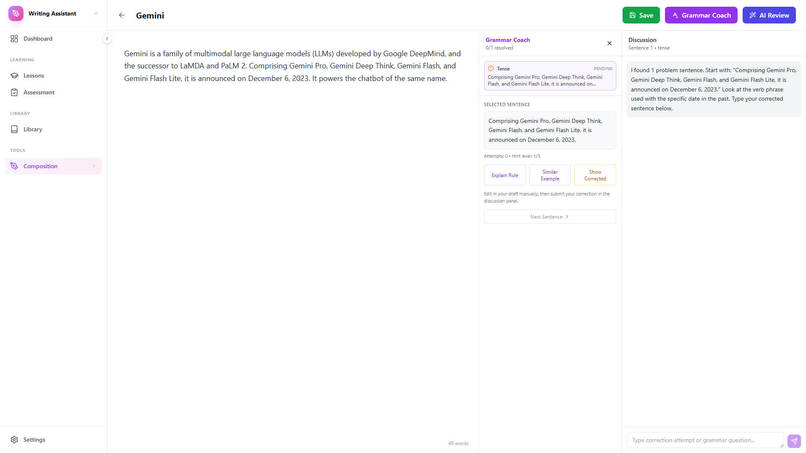

Writing - Grammar coach

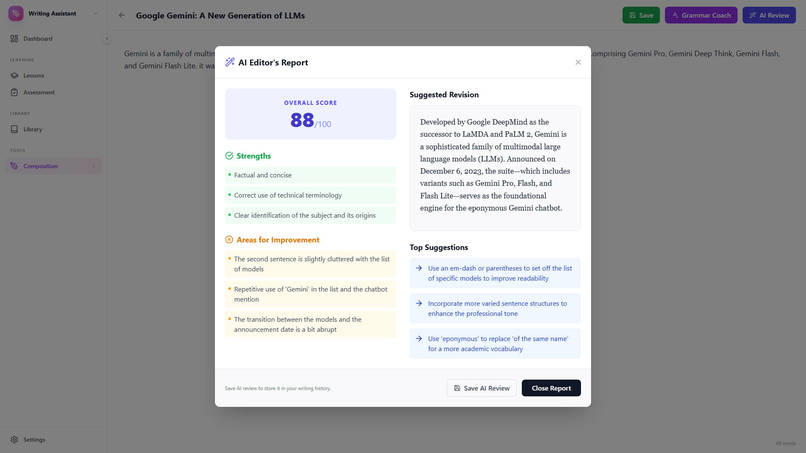

Writing - AI review

Inspiration

Language apps often optimize for time-on-app rather than for how humans actually learn. From a neuroscience perspective, learning is driven by attention, reward, spaced repetition, and the specific errors a learner makes over time. I wanted to build a system where the AI doesn’t just generate content but uses human behavior as a feedback signal, so that learning materials continuously improve through interaction.

OpenNeuralingo started from a simple question:

What if a language app treated each learner as an experiment-of-one, adapting in real time based on behavior?

What it does

OpenNeuralingo is an AI language-learning app, grounded in neuroscience and human behavior, that turns real-world content into adaptive practice.

The current version is designed for learners who already have some background in the language. It is not yet optimized for complete beginners.

Core idea: the system tracks learner behavior (e.g., self-assessment, errors, response patterns, revisit frequency) and uses that data to generate better next-step content.

Over time, the app can:

- Personalize practice based on skill level and vocabulary gaps

- Convert video subtitles, web content, or Markdown-based materials into exercises (listening, reading, targeted review)

- Adjust difficulty and review timing from behavioral signals such as self-evaluations, using spaced repetition and adaptive feedback

- Create a closed learning loop:

learner behavior → AI inference → improved content → better learning outcomes
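The closed loop above can be made concrete with a deliberately simple rule: raise the difficulty when recent accuracy is high and lower it when accuracy drops, similar in spirit to a staircase procedure. This is a minimal sketch, not OpenNeuralingo's implementation. `DifficultyController`, the 1 to 10 difficulty scale, the 80% target, and the five-answer window are all assumptions chosen for illustration.

```python
from collections import deque
from dataclasses import dataclass, field


# Hypothetical controller: names, scale, and thresholds are illustrative
# assumptions, not taken from OpenNeuralingo.
@dataclass
class DifficultyController:
    level: int = 3          # current difficulty on an assumed 1..10 scale
    target: float = 0.8     # desired share of correct answers
    window: int = 5         # how many recent answers to judge by
    history: deque = field(default_factory=deque)

    def record(self, correct: bool) -> int:
        """Log one answer (the behavioral signal) and return the next level."""
        self.history.append(correct)
        if len(self.history) > self.window:
            self.history.popleft()  # keep only the most recent answers
        if len(self.history) < self.window:
            return self.level  # not enough evidence yet

        accuracy = sum(self.history) / self.window
        if accuracy > self.target:
            self.level = min(10, self.level + 1)   # too easy: step up
            self.history.clear()
        elif accuracy < self.target - 0.2:
            self.level = max(1, self.level - 1)    # too hard: step down
            self.history.clear()
        # otherwise the learner is in the target band: hold the level
        return self.level
```

For example, five correct answers in a row at level 3 move the learner to level 4, and five misses after that move them back to 3. Clearing the window after each change keeps a single streak from triggering several jumps.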

How we built it

Frontend: React 19, TypeScript, Vite 6, Tailwind CSS

Backend: Python (Flask)

Database: SQLite

AI: Google Gemini API

Video: YouTube IFrame API (react-youtube)

Architecture highlights

- Clean separation between user interface, backend logic, and AI generation

- Lightweight learning-event database tracking performance and interaction history

- Prompt pipelines conditioned on behavioral summaries (mistakes, weak areas, review patterns), grounding AI output in real learner data
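The learning-event log and the behavior-conditioned prompts described above could fit together roughly as follows, using Python's built-in `sqlite3` module. This is a hypothetical sketch: the `learning_events` table, its columns, the helper names, and the prompt wording are assumptions, not the project's actual schema or prompts.

```python
import sqlite3

# Hypothetical event-log schema (illustrative only).
SCHEMA = """
CREATE TABLE IF NOT EXISTS learning_events (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    user_id TEXT NOT NULL,
    item TEXT NOT NULL,          -- e.g. a word or grammar point
    skill TEXT NOT NULL,         -- e.g. 'listening', 'reading'
    correct INTEGER NOT NULL,    -- 1 = correct, 0 = mistake
    created_at TEXT DEFAULT CURRENT_TIMESTAMP
)
"""


def record_event(conn, user_id, item, skill, correct):
    """Append one interaction to the event log."""
    conn.execute(
        "INSERT INTO learning_events (user_id, item, skill, correct) VALUES (?, ?, ?, ?)",
        (user_id, item, skill, int(correct)),
    )
    conn.commit()


def weak_items(conn, user_id, limit=5):
    """Return (item, mistakes, attempts) rows for this user, most mistakes first."""
    return conn.execute(
        """
        SELECT item, SUM(1 - correct) AS mistakes, COUNT(*) AS attempts
        FROM learning_events
        WHERE user_id = ?
        GROUP BY item
        HAVING SUM(1 - correct) > 0
        ORDER BY mistakes DESC, item
        LIMIT ?
        """,
        (user_id, limit),
    ).fetchall()


def build_prompt(conn, user_id, target_language):
    """Condition a generation prompt on the learner's behavioral summary."""
    lines = [f"- {item}: {mistakes} mistakes in {attempts} attempts"
             for item, mistakes, attempts in weak_items(conn, user_id)]
    summary = "\n".join(lines) or "- no recorded mistakes yet"
    return (
        f"Write a short {target_language} exercise for an intermediate learner.\n"
        f"Target these weak items from the learner's history:\n{summary}\n"
        "Return JSON with keys 'text' and 'questions'."
    )
```

With two missed `subjunctive` answers and one correct `ser vs estar` answer recorded for user `u1`, `build_prompt(conn, "u1", "Spanish")` lists only `subjunctive` as a weak item, so the next generated exercise is steered toward it. Capping the summary at a handful of rows also keeps prompts short.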

Challenges we ran into

- Transforming behavior into learning signals: User interactions are noisy and unstructured. We designed simple but robust metrics for adaptation.

- Controlling AI output quality: Generative models can drift in difficulty and structure, requiring careful prompt engineering and constraints.

- Latency and user experience: AI calls were optimized through caching and scoped generation flows.

- Building a real adaptive loop: The hardest part wasn’t content generation—it was making each new exercise measurably better than the last.
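One way to implement the caching mentioned under latency is to memoize generations keyed on the full request, so that repeating an identical prompt with identical parameters skips the model call. This is a minimal sketch that assumes identical requests can safely reuse an earlier output. `GenerationCache` is hypothetical, and the injected `generate` callable stands in for the real Gemini client, which is not shown.

```python
import hashlib
import json
from collections import OrderedDict
from typing import Callable


class GenerationCache:
    """Hypothetical LRU cache in front of an AI text-generation call."""

    def __init__(self, generate: Callable[..., str], max_entries: int = 1000):
        self._generate = generate      # stand-in for the real model client
        self._store = OrderedDict()    # request key -> generated text
        self._max = max_entries
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(prompt: str, params: dict) -> str:
        # sort_keys makes the key independent of keyword-argument order;
        # params must be JSON-serializable.
        payload = json.dumps({"prompt": prompt, "params": params}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get(self, prompt: str, **params) -> str:
        k = self.key(prompt, params)
        if k in self._store:
            self.hits += 1
            self._store.move_to_end(k)  # mark as most recently used
            return self._store[k]
        self.misses += 1
        result = self._generate(prompt, **params)
        self._store[k] = result
        if len(self._store) > self._max:
            self._store.popitem(last=False)  # evict the least recently used entry
        return result
```

Because the key includes generation parameters such as `temperature`, the same prompt at a different temperature counts as a new request. The least-recently-used bound keeps memory in check; a persistent variant could store the same keys in SQLite.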

Accomplishments that we're proud of

- Built a full adaptive learning pipeline: content → behavior → AI personalization

- Integrated real-world media into structured learning workflows

- Implemented continuous personalization instead of static lesson paths

- Delivered a modern, deployable web architecture

What we learned

- AI alone doesn’t teach — measurement and feedback drive learning

- Behavior data is the core signal for personalization

- Learning experience design matters as much as model capability

- Structured prompts and schemas outperform raw generation
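The point about structured prompts and schemas can be illustrated by validating model output before it reaches the learner. The sketch below assumes the model has been asked to return a JSON array of cloze items. `ClozeItem`, `parse_cloze_items`, and the field names are hypothetical and do not reflect OpenNeuralingo's real schema.

```python
import json
from dataclasses import dataclass
from typing import List


# Hypothetical schema for a generated cloze (fill-in-the-blank) exercise.
@dataclass
class ClozeItem:
    sentence: str    # must contain exactly one "___" blank
    answer: str      # the word that fills the blank
    difficulty: int  # assumed 1..10 scale


class SchemaError(ValueError):
    """Raised when model output does not match the expected structure."""


def parse_cloze_items(raw: str) -> List[ClozeItem]:
    """Parse model output and reject anything that drifts from the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise SchemaError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, list):
        raise SchemaError("expected a JSON array of items")

    items = []
    for i, obj in enumerate(data):
        if not isinstance(obj, dict):
            raise SchemaError(f"item {i}: expected an object")
        sentence = obj.get("sentence")
        answer = obj.get("answer")
        level = obj.get("difficulty")
        if not isinstance(sentence, str) or sentence.count("___") != 1:
            raise SchemaError(f"item {i}: sentence needs exactly one ___ blank")
        if not isinstance(answer, str) or not answer.strip():
            raise SchemaError(f"item {i}: answer must be a non-empty string")
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(level, bool) or not isinstance(level, int) or not 1 <= level <= 10:
            raise SchemaError(f"item {i}: difficulty must be an integer from 1 to 10")
        items.append(ClozeItem(sentence, answer.strip(), level))
    return items
```

A caller could catch `SchemaError` and either re-prompt or fall back to previously generated content, so structural drift in the model's output never reaches the exercise screen.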

What's next for OpenNeuralingo

- Advanced learner modeling: finer skill diagnosis across vocabulary, grammar, listening, and comprehension

- Personalized spaced repetition: moving beyond SM-2 to behavior-based scheduling

- Beginner support: building structured learning paths for users with zero language background

- More modalities: speaking/pronunciation feedback and richer listening tasks

- Desktop version: packaging with Tauri/Electron for smoother media workflows
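The roadmap's mention of moving beyond SM-2 suggests that the current scheduler is based on SM-2. For reference, the sketch below shows the classic SM-2 update in its commonly published form: reviews are graded on a 0 to 5 quality scale, and the easiness factor is floored at 1.3. `CardState` and `sm2` are hypothetical names, and the code is not taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CardState:
    repetitions: int = 0    # n: consecutive successful reviews
    easiness: float = 2.5   # EF: easiness factor (SM-2's usual starting value)
    interval: int = 0       # I: days until the next review


def sm2(state: CardState, quality: int) -> CardState:
    """Apply one SM-2 review with a grade from 0 (blackout) to 5 (perfect)."""
    if not 0 <= quality <= 5:
        raise ValueError("quality must be between 0 and 5")
    if quality >= 3:  # successful recall
        if state.repetitions == 0:
            interval = 1
        elif state.repetitions == 1:
            interval = 6
        else:
            interval = round(state.interval * state.easiness)
        repetitions = state.repetitions + 1
    else:  # failed recall: restart the repetition sequence
        repetitions, interval = 0, 1
    # EF is updated after every review, as in the commonly published formulation.
    easiness = state.easiness + (0.1 - (5 - quality) * (0.08 + (5 - quality) * 0.02))
    return CardState(repetitions, max(1.3, easiness), interval)
```

Three perfect reviews give intervals of 1, 6, and 16 days. Behavior-based scheduling could replace SM-2's fixed constants (the 1-day and 6-day opening intervals and the hand-tuned easiness update) with values estimated from each learner's own review history.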
